Navigating the Path to CyberPeace: Insights and Strategies

Featured #factCheck Blogs

Executive Summary

A video featuring India’s External Affairs Minister S. Jaishankar is being widely circulated on social media with the claim that he urged US Secretary of State Marco Rubio and US President Donald Trump to hand over the “handlers” of the so-called “Cockroach Janata Party” to India. The viral post further alleges that Jaishankar described the organisation as a “Pakistani and Iranian proxy group.” Research by the CyberPeace Research Wing found the viral claim to be fake. Jaishankar did not make any statement regarding the “Cockroach Party” or its alleged handlers during the press conference. The viral video has been edited and is being shared with a misleading claim.

Claim

A verified X (formerly Twitter) user shared the viral clip and claimed that during a joint press conference, Jaishankar said: “I request Marco Rubio and Trump to hand over the handlers of the Cockroach Party because they are Pakistani and Iranian proxy groups.”

Fact Check

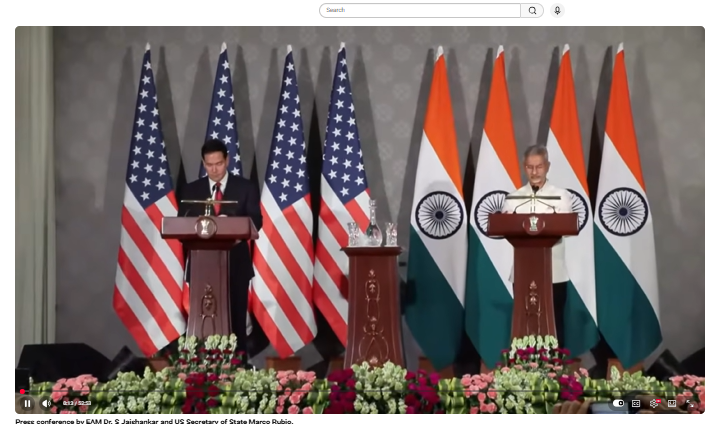

To verify the claim, we converted the viral clip into key frames and conducted a reverse image search. During the research, we found the original video uploaded on May 24, 2026, on the official YouTube channel of the Ministry of External Affairs.

The video was captioned: “Press conference of EAM Dr S Jaishankar and US Secretary of State Marco Rubio.”

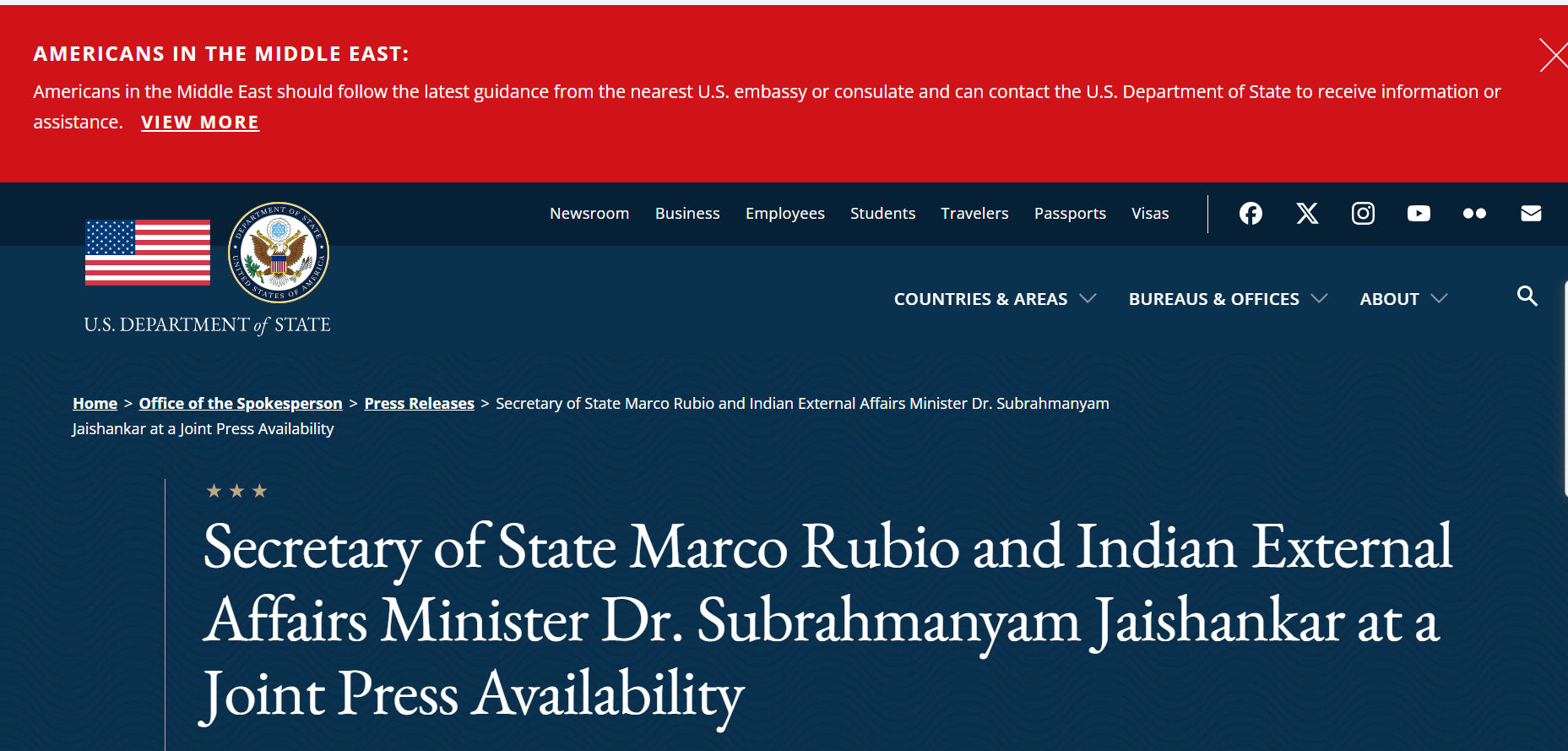

A review of the full press conference confirmed that Jaishankar made no mention of any “Cockroach Party,” its alleged handlers, or any Pakistani or Iranian proxy network. Further verification of the official transcripts published by both the Indian Ministry of External Affairs and the United States Department of State also found no references to the terms “Cockroach Party,” “handlers,” “Pakistani proxy,” or any statements matching the viral claim.

https://www.state.gov/releases/office-of-the-spokesperson/2026/05/secretary-of-state-marco-rubio-and-indian-external-affairs-minister-dr-subrahmanyam-jaishankar-at-a-joint-press-availability
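The transcript check described above can be sketched as a simple case-insensitive term search. This is only an illustrative sketch: the function name `find_terms` and the sample text are hypothetical, and the placeholder string stands in for the real transcripts, which are not reproduced here.

```python
import re

def find_terms(transcript: str, terms: list[str]) -> dict[str, int]:
    """Count case-insensitive occurrences of each term in the transcript."""
    counts = {}
    for term in terms:
        # re.escape keeps terms literal, so punctuation is not treated as regex syntax.
        pattern = re.compile(re.escape(term), re.IGNORECASE)
        counts[term] = len(pattern.findall(transcript))
    return counts

# Placeholder text (an assumption for illustration), not the actual transcript.
sample_transcript = "We discussed trade, energy, and regional security."
terms = ["Cockroach Party", "handlers", "Pakistani proxy"]

hits = find_terms(sample_transcript, terms)
print(hits)
```

A count of zero for every term across both official transcripts would be consistent with the finding above; a search like this supplements, and does not replace, watching the full press conference.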

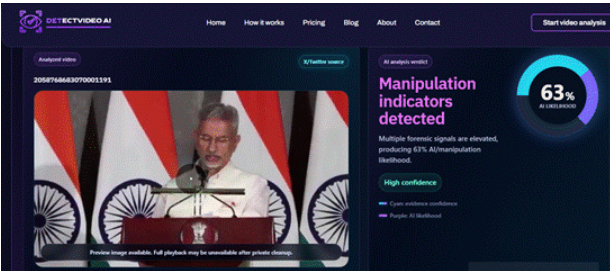

In the final stage of verification, the viral clip was analysed using an AI detection tool. The analysis suggested that the audio had been manipulated and that the video appeared to be edited. The tool indicated a 63 percent probability that the clip had been altered using AI-based editing techniques.

Conclusion

The research confirms that the viral claim is fake. S. Jaishankar did not make any statement regarding the “Cockroach Party” or its alleged handlers during the press conference. The viral clip has been edited and is being shared with misleading claims.

Executive Summary

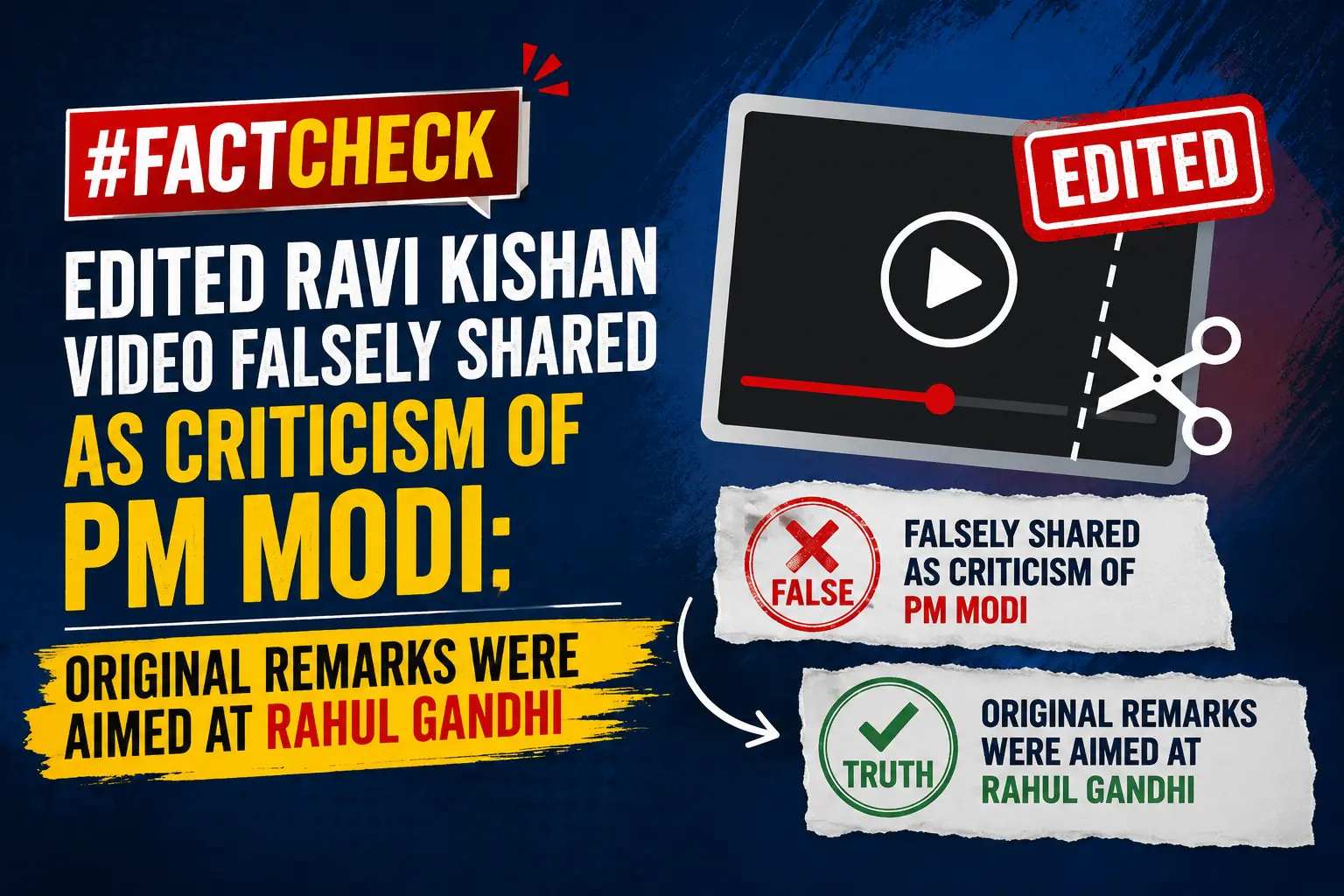

A video of BJP MP Ravi Kishan is being widely circulated on social media with the claim that the Gorakhpur MP was mocking Prime Minister Narendra Modi and criticizing his working style and frequent foreign visits. In the viral clip, Ravi Kishan can be heard saying that “he likes to travel,” “comes to Parliament only for a few minutes,” and does not like pressure or responsibility. The clip also features him using phrases such as “azad panchhi” (free bird) and “azad parinda.” However, research by the CyberPeace Research Wing found the claim to be misleading. The research revealed that in the original video, Ravi Kishan was actually criticizing Congress leader Rahul Gandhi. A cropped portion of his statement is being shared out of context with a false claim.

Claim

An X (formerly Twitter) user shared the viral clip and wrote that Ravi Kishan was referring to Prime Minister Narendra Modi, alleging that Modi enjoys travelling abroad, spends little time in Parliament, and feels uncomfortable under pressure.

- https://x.com/Aarti202/status/2058523226305900586

- https://archive.ph/j5MaV

Fact Check

To verify the viral claim, we performed a reverse search using key frames from the video. During the research, we found the original video uploaded on the Facebook page of ANI on May 13, 2026.

The caption of the post read: “War does not seem to be ending…” Ravi Kishan warns the country about the ongoing conflict in the Middle East.

At around the 4-minute-40-second timestamp, an ANI reporter asks Ravi Kishan about Rahul Gandhi distancing himself from the CBI Director selection process. Responding to that question, Ravi Kishan makes the remarks that later went viral.

This clearly establishes that Ravi Kishan was not referring to Prime Minister Narendra Modi, but was commenting on Rahul Gandhi. In his response, he says that “it is good that he has freed himself” and refers to Rahul Gandhi as an “azad parinda” (free bird).

During the research, we also found the same video posted on Ravi Kishan’s official X account on May 23. The caption of the post stated: “Congress should now free its prince Rahul Gandhi.” This further confirms that the viral clip has been misleadingly edited and shared out of context.

Conclusion

The research found that Ravi Kishan’s remarks in the original video were directed at Rahul Gandhi, not Prime Minister Narendra Modi. An edited portion of the video has been falsely shared with a misleading claim.

Executive Summary

A video of Prime Minister Narendra Modi is being widely shared on social media, in which he appears to announce that all ration card holders will receive free mobile phones, provided no member of their family is a government employee. However, research by CyberPeace has found this claim to be false. Our research reveals that the viral video is AI-generated and does not reflect any real announcement.

Claim:

An Instagram user shared the viral video with the caption, “If you have a ration card, you will get a free mobile phone.”

- Post link: https://www.instagram.com/reels/DWqDKWxy6lJ/

- Archived link: https://archive.ph/wip/dmpIf

Fact Check

To verify the claim, we first conducted a keyword-based search on Google. However, we did not find any credible media reports supporting such an announcement, raising doubts about the authenticity of the video. We then checked the official government welfare schemes portal, myscheme.gov.in, which provides verified information about central government schemes. No such scheme offering free mobile phones to ration card holders was found on the platform.

Conclusion

Our research confirms that the viral video is fake and AI-generated. There is no official announcement or credible report suggesting that ration card holders will receive free mobile phones under any government scheme. The video has been digitally manipulated using artificial intelligence and is being circulated with a misleading claim. This serves as another example of how AI-generated content can be used to spread misinformation.

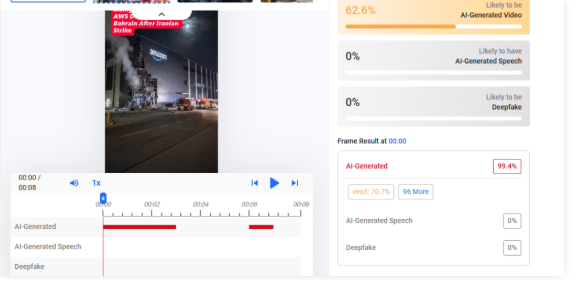

Executive Summary

A video showing a damaged building allegedly belonging to Amazon is going viral on social media. The clip is being shared with the claim that it depicts the aftermath of an Iranian missile strike on an Amazon data center in Bahrain on April 1, 2026. However, research by CyberPeace has found the claim to be misleading. While reports confirm that Iran targeted U.S.-linked cloud infrastructure in Bahrain, the viral video itself is not real footage and has been created using artificial intelligence.

Claim

A Facebook user, “Tripti Speaks,” shared the viral video on April 2, 2026, with the caption: “Iranian attack on Amazon’s cloud computing data center in Bahrain. IRGC fired missiles at Batelco in Bahrain where AWS infrastructure is located, damaging servers and disrupting services.”

- Archived link: https://perma.cc/XH7S-QTX6

Fact Check

To verify the claim, we extracted multiple keyframes from the viral video and conducted a reverse image search using Google. However, we did not find any credible sources or reports featuring this specific footage. This raised suspicion about the authenticity of the video. We then analyzed it using the AI detection tool Hive Moderation, which indicated a 63% probability that the video is AI-generated.

According to a report published by Reuters on April 1, 2026, Iran launched a missile attack targeting Amazon’s cloud computing operations in Bahrain. The Islamic Revolutionary Guard Corps (IRGC) had earlier warned that U.S.-linked companies in the Middle East—including Microsoft, Google, and Apple—could be targeted.

Conclusion

Our research found that while there are credible reports confirming an Iranian attack on cloud infrastructure linked to Amazon in Bahrain, the viral video circulating on social media does not depict the real incident. The footage does not appear in any verified news coverage and has been flagged by an AI detection tool as likely artificial. Therefore, the video is AI-generated and misleadingly linked to the incident.

Executive Summary

An image of Prime Minister Narendra Modi is being widely circulated on social media. The picture is being shared with the claim that during an election campaign in Assam, a full-fledged shooting set was arranged in a tea garden where Modi interacted with women workers, complete with cameras, microphones, lights, and a director-led production team. However, research by CyberPeace has found the claim to be false. Our research reveals that the viral image is AI-generated and is being shared with a misleading narrative.

Claim

An Instagram user shared the viral image with the caption suggesting that such a large-scale “shoot” setup had been arranged and questioned the cost involved.

Post link:

Fact Check

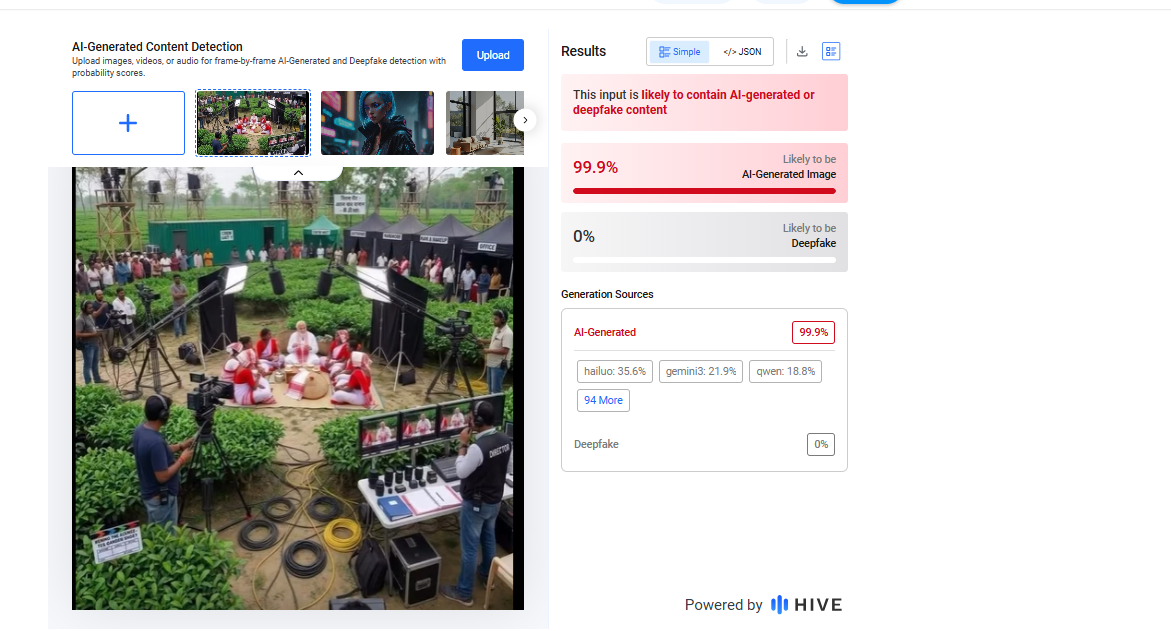

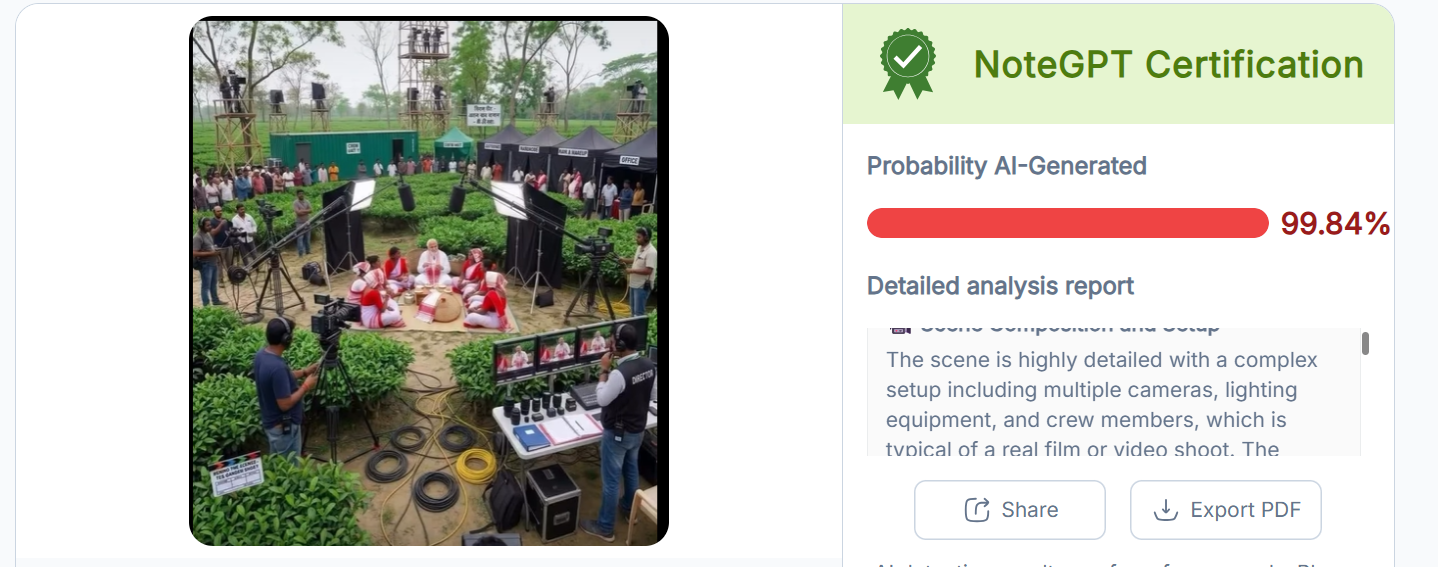

To verify the claim, we conducted a keyword-based search on Google. However, we did not find any credible media reports supporting the claim that such a shooting setup was arranged during Prime Minister Modi’s visit to Assam. Upon closely examining the viral image, we noticed several visual inconsistencies that raised suspicion about it being artificially generated. To confirm this, we analyzed the image using the AI detection tool Hive Moderation, which indicated that the image is approximately 99% AI-generated.

To further validate the findings, we also tested the image using another AI detection tool, NoteGPT, which similarly classified the image as 99% AI-generated.

For context, ahead of the 2026 Assam Assembly elections, Prime Minister Narendra Modi has been on a campaign visit to the state. According to a report by DD News, he visited a tea garden in Dibrugarh, where he interacted with women workers and even plucked tea leaves himself.

Conclusion

Our research clearly establishes that the viral image of Prime Minister Narendra Modi is not authentic and has been digitally created using AI tools. There is no evidence to support the claim that a staged shooting setup involving cameras, lights, and a production crew was arranged during his visit. The image is being circulated with a misleading narrative to create a false impression. This case highlights how AI-generated visuals can be used to distort real events and spread misinformation.

Executive Summary

Following the tragic cruise accident at Bargi Dam in Jabalpur, a heartbreaking image of a woman lying unconscious in a river with a child resting on top of her has gone viral on social media. Users are claiming that the picture shows victims of the recent Bargi Dam accident. Research by CyberPeace Research Wing found that the viral claim is false. The circulating image was created using AI (Artificial Intelligence) and is now being misleadingly linked to the real tragedy. However, reports indicate that a similar real-life image of a mother and child did emerge after the accident.

Claim

An X user shared the viral image on May 1, 2026, claiming that despite wearing a life jacket, the mother lost her life while trying to save her child. The emotional post praised mothers’ sacrifice and linked the image directly to the Bargi Dam cruise mishap.

Fact Check

To verify the claim, we searched relevant keywords on Google but found no credible news reports connecting the viral image to the Bargi Dam accident. A closer examination of the image revealed multiple visual inconsistencies. The hands of the woman and child appear unnaturally merged at one point, while the woman’s eyebrows seem split into two sections. Such distortions are common indicators of AI-generated imagery.

We then analyzed the picture using the AI detection tool Hive Moderation, which estimated nearly a 90% probability that the image was AI-generated.

During the research, we also found a clarification post from the official Facebook account of the Jabalpur District Collector, who stated that the viral image was AI-generated or sourced elsewhere and had no connection with the Bargi cruise accident.

According to a report published by NDTV on May 2, 2026, the accident occurred on April 30 near Khamaria Island when an overloaded tourist cruise capsized amid strong winds, heavy rain, and rising waves. At least nine people died, while 28 others were rescued.

Conclusion

Our research confirms that the viral mother-child image being linked to the Bargi Dam tragedy is fake. The picture was created using AI and falsely circulated in connection with the real cruise accident.

Executive Summary

As West Bengal heads for vote counting on May 4, 2026, following the second phase of Assembly polling held on April 29, a video is being widely shared on social media. The clip shows security personnel baton-charging civilians, with users claiming it depicts force being used during the West Bengal Assembly Elections 2026. Research by CyberPeace Research Wing found that the viral claim is misleading. The video is actually from Bangladesh and is being falsely linked to the West Bengal elections to spread confusion.

Claim

A Facebook user named “Adv Mohd Salman” shared the clip on April 29, 2026, using Bengal-related hashtags and claiming that voters standing in line were beaten to influence the election outcome. The post alleged that free and fair voting rights were being suppressed.

Fact Check

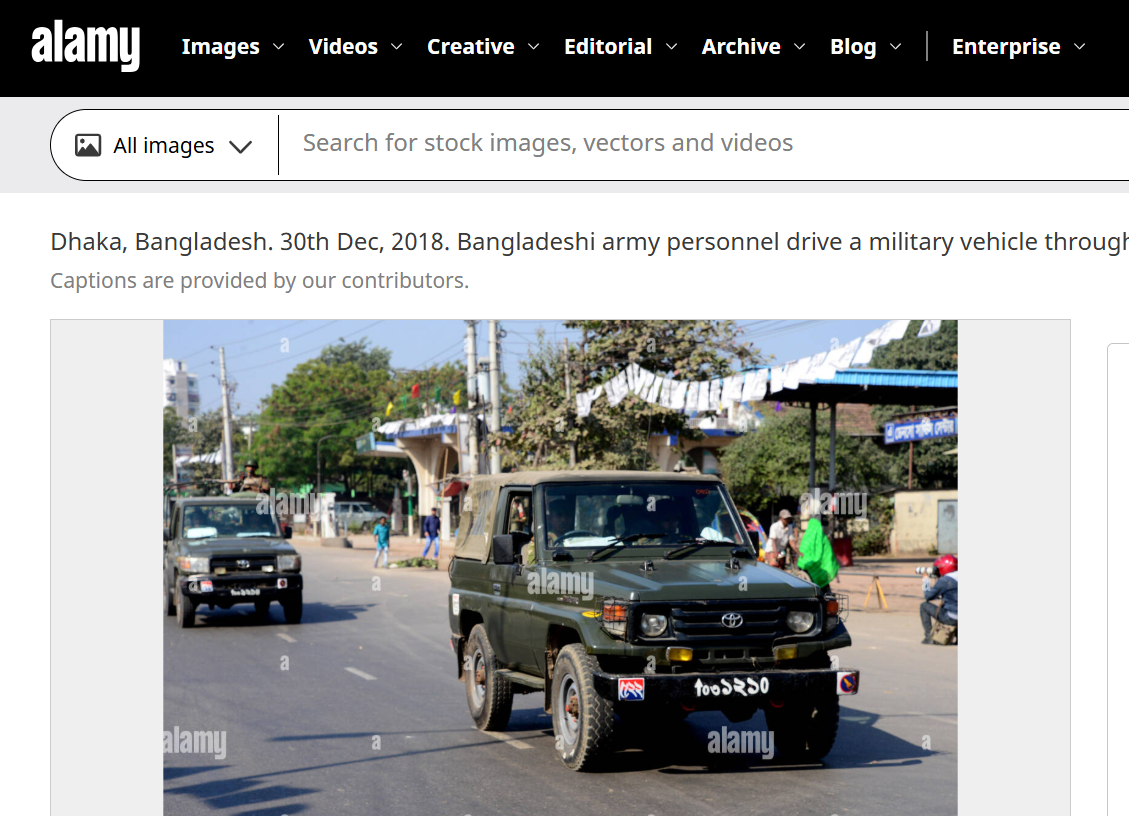

To verify the claim, we closely examined the viral video. A vehicle visible in the footage had a registration number written in a non-Hindi script. Using Google Lens reverse image search, we found a matching image uploaded on Alamy on December 30, 2018. The image showed a military vehicle with the same script and registration style seen in the viral clip.

According to the description on the platform, the image was taken in Dhaka during Bangladesh’s national elections and showed Bangladeshi army personnel moving through a street near a polling station. This confirms that the viral footage is not related to the 2026 West Bengal Assembly elections.

Conclusion

Our research confirms that the video showing security personnel baton-charging civilians is from Bangladesh, not West Bengal. It is being falsely shared as footage from the 2026 West Bengal Assembly elections to mislead users.

Executive Summary

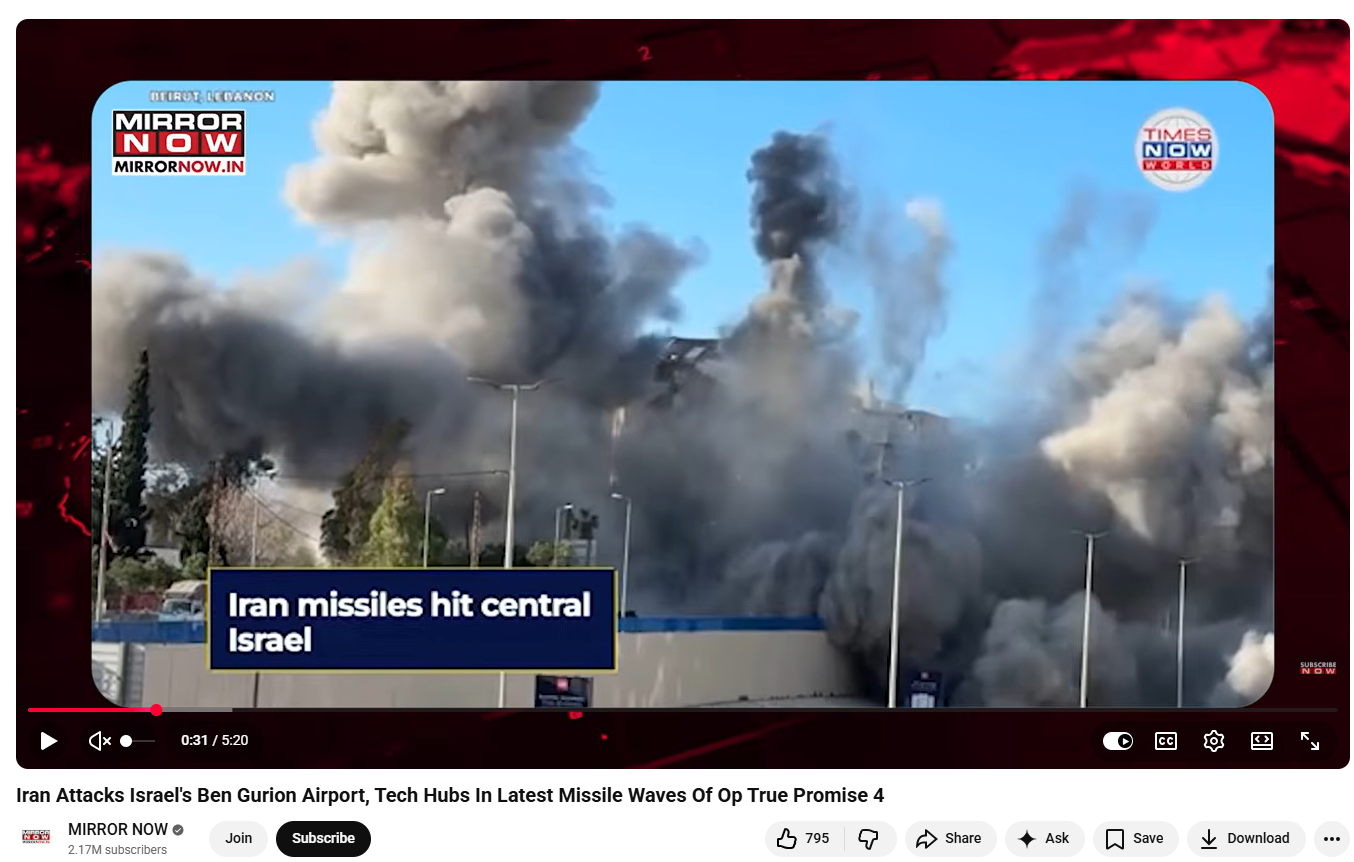

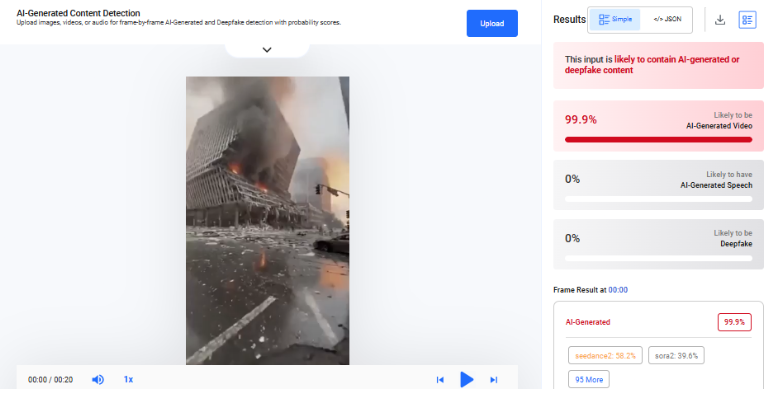

A video is going viral on social media showing a massive building engulfed in flames and collapsing into debris. It is being widely claimed that Iran launched a powerful attack that destroyed Israel’s army headquarters. However, research by CyberPeace reveals that this claim is misleading. The viral video is AI-generated and has no connection to any real-world event.

Claim

An X (formerly Twitter) user shared the viral video with the caption: “Iran has targeted Israel’s army headquarters. It seems Israel’s dream of becoming ‘Greater Israel’ will remain unfulfilled.”

Post link:

- https://x.com/KAMESHKUMAR96/status/2039009484069368083

Archived version:

- https://archive.ph/HKXkK


Similar videos have also been shared by other users on social media:

Fact Check

To verify the claim, we extracted keyframes from the viral video and conducted a reverse image search. During this process, we found several credible media reports confirming that Iran has carried out drone and missile attacks on Israel and the Gulf regions in recent times. However, none of these reports featured the viral video, indicating that it is not authentic footage.

- https://www.youtube.com/watch?v=fxDBX90bYng

A closer examination of the video revealed multiple visual inconsistencies commonly associated with AI-generated content. For instance, a building on the left side appears to bend and collapse in a rubber-like manner—something that is physically unrealistic for structures made of concrete and steel. Additionally, the smoke and flames appear unnatural and lack realistic dynamics.

To further verify, we analyzed the video using the AI detection tool Hive Moderation, which classified it as 99.9% AI-generated.

We also tested the video using the Deepfake-o-Meter platform. The AVSRDD (2025) model detected it as 99.5% AI-generated.

Conclusion

Our research clearly establishes that the viral video claiming Iran destroyed Israel’s army headquarters is false and misleading. The footage does not appear in any credible news coverage of recent attacks, which strongly indicates that it is not real. Moreover, multiple AI detection tools consistently classify the video as artificially generated, with extremely high probability scores. Visual anomalies in the clip further support this finding.

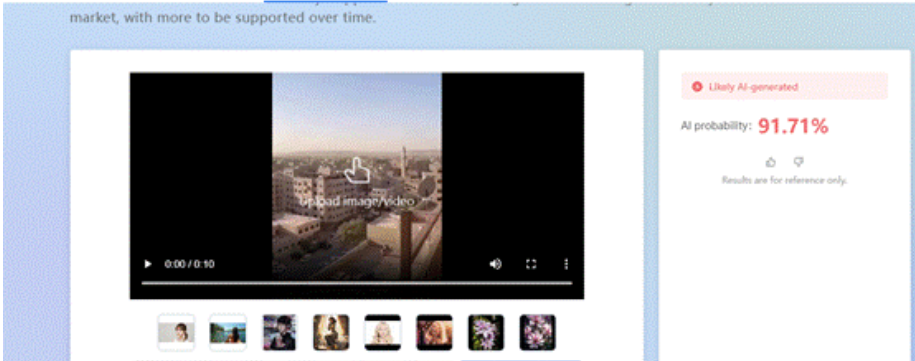

Executive Summary:

A video is rapidly circulating on social media following claims that Iran’s national security chief Ali Larijani was killed in an Israeli airstrike. The viral clip is being shared with the assertion that it shows the moment Israel launched a powerful attack on Iran to eliminate Larijani, allegedly shaking the ground due to the intensity of the strike. However, research by CyberPeace has found the claim to be misleading. The viral video is AI-generated and has no connection to real-world events.

Claim:

Social media users have shared the video with alarming captions. One such post by Deepak Sharma reads:

“WAR UPDATE… Iran is in its final phase… Israel is striking selectively… This attack will leave you shocked… Iran’s national security chief Ali Larijani has been killed in this attack… The intensity of the strike shook the Iranian ground.”

Post links:

Similar videos were also shared by other users:


- https://x.com/ibmindia20/status/2038938020154597447

- https://x.com/Saurabh_raj3026/status/2038834832869032026

Fact Check

To verify the claim, we extracted keyframes from the viral video and conducted a reverse image search. During this process, we found the same video on Instagram, uploaded on March 9, 2026, by the account “_iranwar_2026” without any descriptive caption.

According to a BBC report, Ali Larijani died on March 17 in an Israeli strike. This establishes that the viral video predates the reported incident, making the claim factually inconsistent. Further examination of the Instagram account revealed that it frequently shares pro-Iran content, including gaming visuals and AI-generated clips, raising doubts about the authenticity of the video.
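The timeline argument above reduces to a date comparison, sketched below. The two dates come from the text; the function name `predates` is a hypothetical helper used only for illustration.

```python
from datetime import date

def predates(upload: date, event: date) -> bool:
    """Return True if the upload happened strictly before the event."""
    return upload < event

# Dates as reported in the fact check above.
upload_date = date(2026, 3, 9)   # Instagram upload of the clip
event_date = date(2026, 3, 17)   # reported date of Larijani's death

gap_days = (event_date - upload_date).days
print(predates(upload_date, event_date), gap_days)
```

If the upload date is earlier than the event date, the clip cannot show that event, regardless of what any AI detection tool reports.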

To strengthen the verification, we analyzed the viral clip using the AI detection tool “Zhuque AI Detection Assistant.” The results indicated a 91.71% probability that the video is AI-generated, confirming that it is not real footage.

Conclusion

The viral claim linking the video to an Israeli airstrike that allegedly killed Ali Larijani is misleading and factually incorrect. Multiple layers of verification show that the video existed online before the reported incident, ruling out any direct connection. Additionally, AI detection analysis strongly suggests that the video is artificially generated. The source account’s pattern of sharing AI and gaming-related content further weakens the credibility of the claim. There is no verified evidence to support that the viral clip depicts a real attack or any event related to Larijani’s death. Instead, the video appears to be a digitally created visual circulated without context to amplify misinformation.

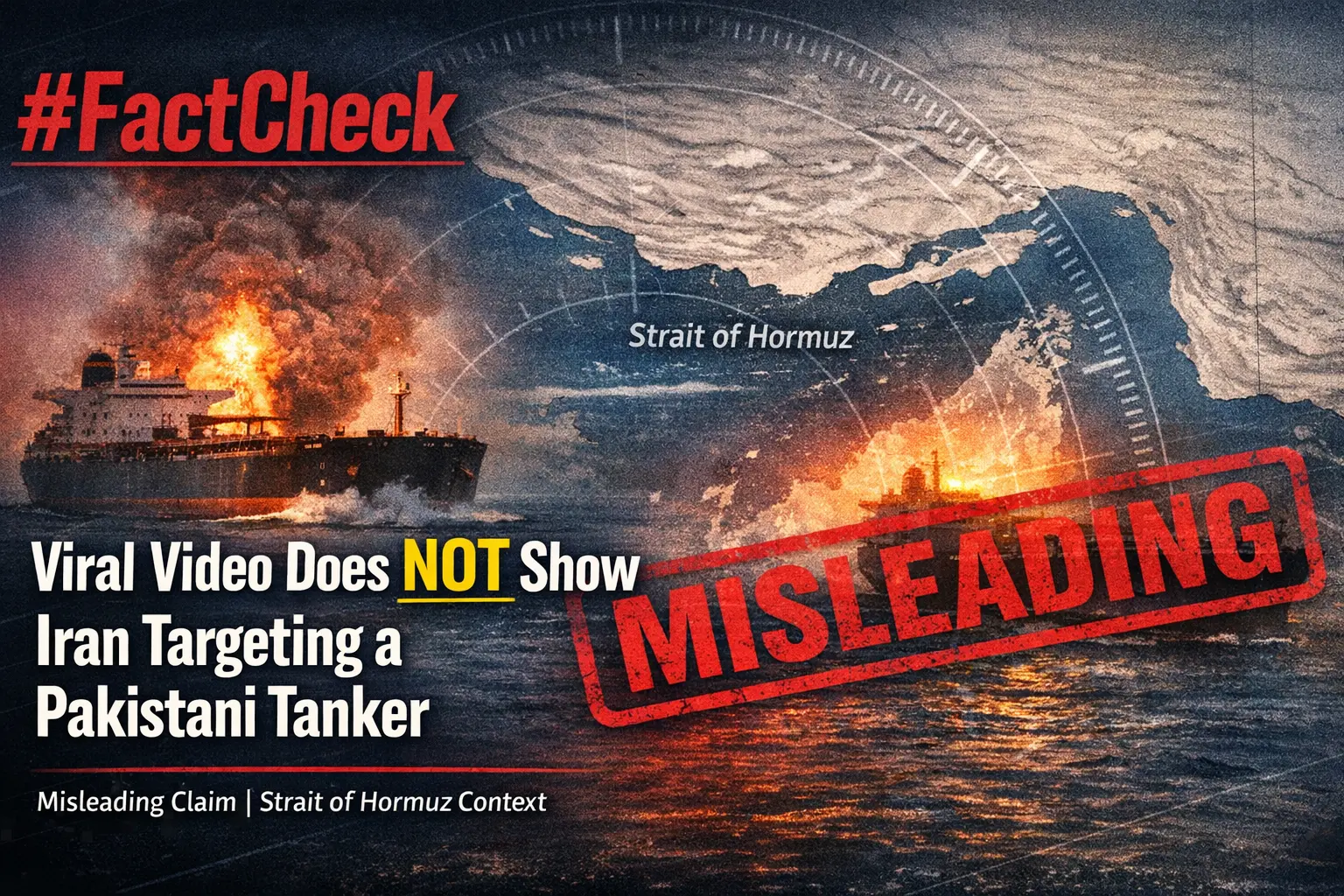

Executive Summary

Amid the ongoing tensions involving the United States, Israel, and Iran, a video of a cargo ship engulfed in flames is being widely shared across social media platforms. The clip shows a vessel burning intensely at sea, with users claiming that Iran targeted the ship with a drone for attempting to cross the Strait of Hormuz without permission. Some users have also claimed that the destroyed vessel was a Pakistani-flagged oil tanker hit by Iranian missiles. However, research by CyberPeace found the claim to be false. Our verification also reveals that the viral video is being misrepresented.

Claim

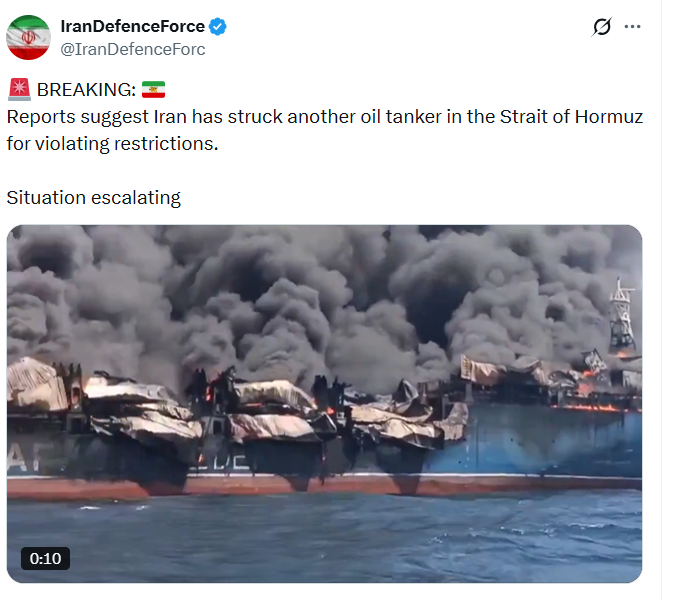

Social media users, including an X (formerly Twitter) account named “IranDefenceForce,” shared the video claiming that Iran targeted an oil tanker in the Strait of Hormuz for allegedly violating restrictions.

Fact Check

A keyword-based news search led us to multiple credible reports mentioning a statement by Iran’s Foreign Minister Abbas Araghchi. According to reports, Iran had allowed ships from “friendly countries” including India, China, Russia, Iraq, and Pakistan to pass through the Strait of Hormuz.

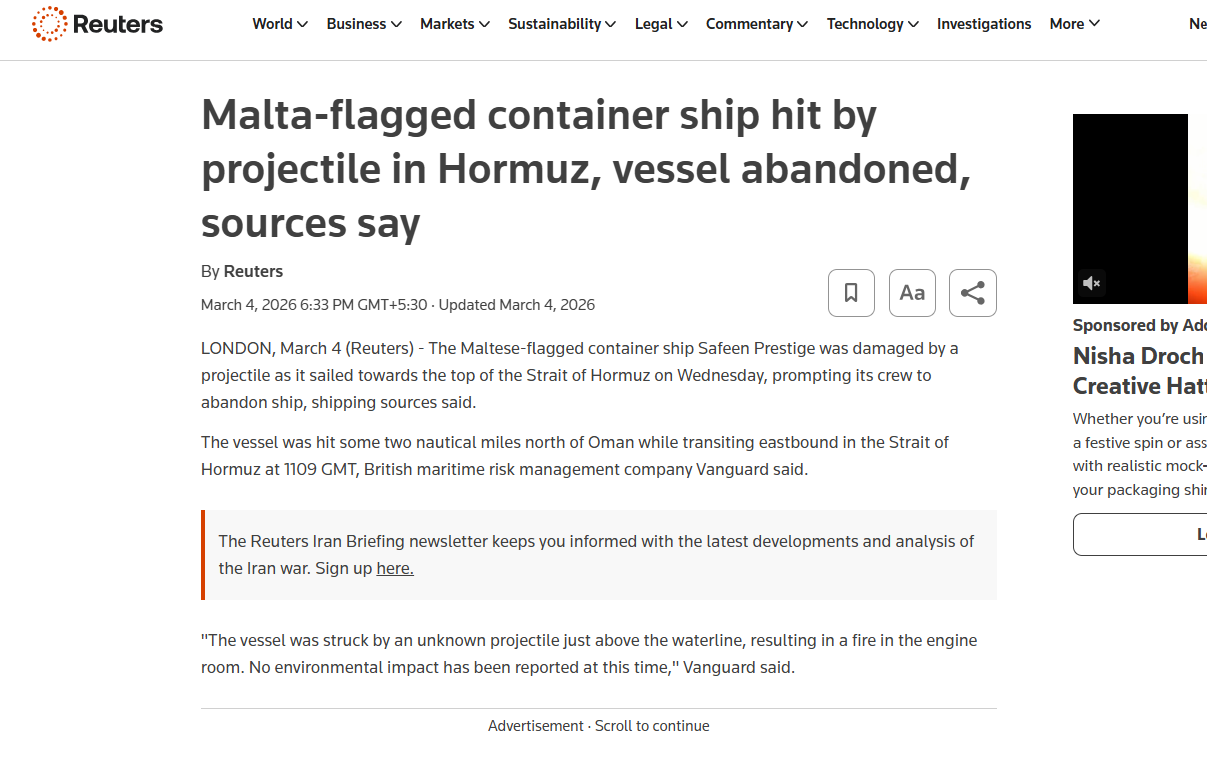

A March 26, 2026 report by The Hindu stated that Araghchi also emphasized Iran’s assertion of sovereignty over the strategic waterway connecting the Persian Gulf and the Gulf of Oman. The same statement was also shared via the official X handle of the Iranian Consulate in Mumbai.

During a frame-by-frame analysis of the viral video, we noticed the word “SAFEEN” written on a part of the ship. Using this clue, we conducted a targeted news search and found a report by Reuters dated March 4, 2026.

According to the report, a Malta-flagged container ship named Safeen Prestige was damaged in an attack while heading toward the Strait of Hormuz. Shipping sources cited in the report stated that the vessel was struck around 1109 GMT while sailing eastward, approximately two nautical miles north of Oman. The ship had reportedly departed from Sharjah Port in the United Arab Emirates but was damaged before reaching its destination. Its last known location was in the Persian Gulf. Additionally, earlier this month, another cargo vessel named Mayuri Naree was also attacked near Iran’s Qeshm Island. As per Reuters, an explosion caused a fire in the engine room, after which 20 crew members were rescued by the Omani navy, while three remained missing.

Conclusion

The viral video does not show Iran targeting a Pakistani oil tanker for violating restrictions in the Strait of Hormuz. In reality, the clip features the Malta-flagged container ship Safeen Prestige, which was damaged in an unidentified attack in the Persian Gulf. The claim being circulated on social media is misleading.

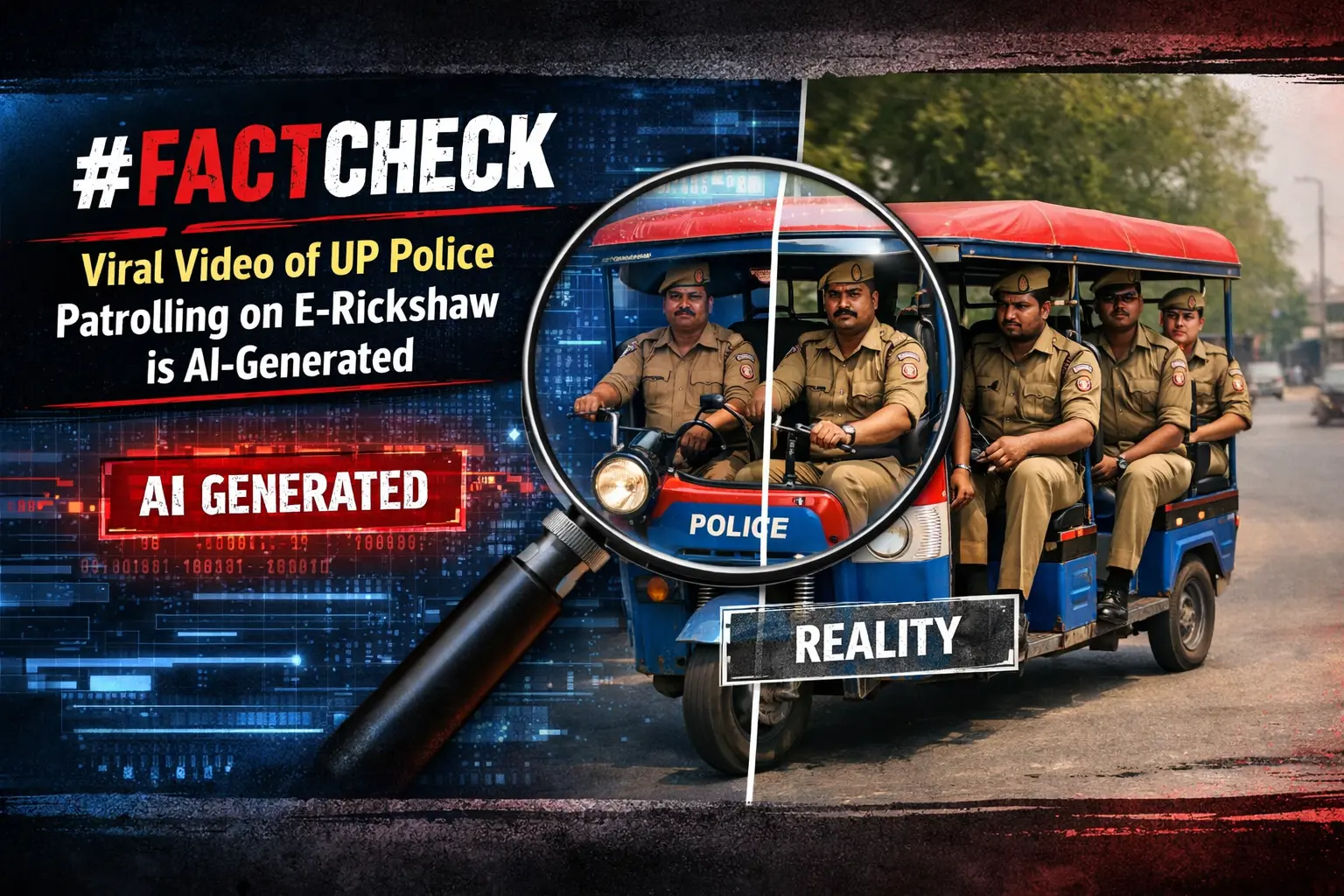

Executive Summary

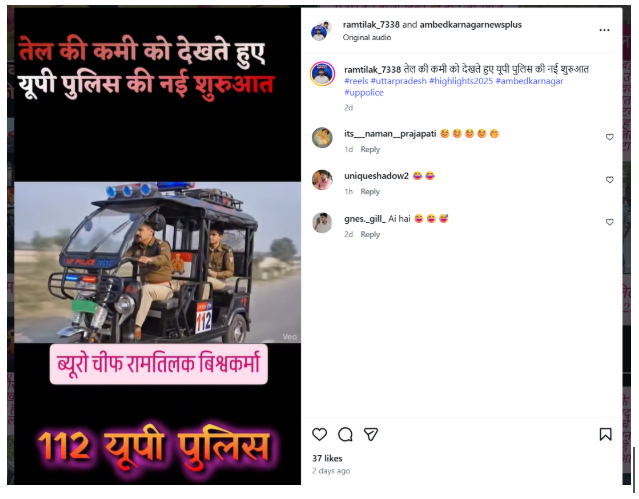

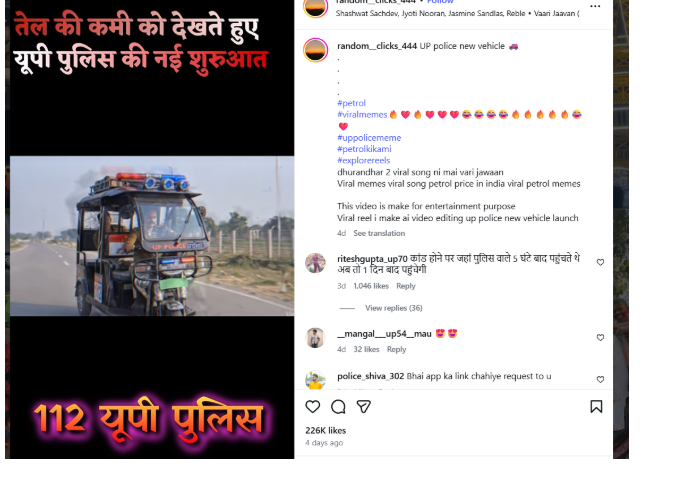

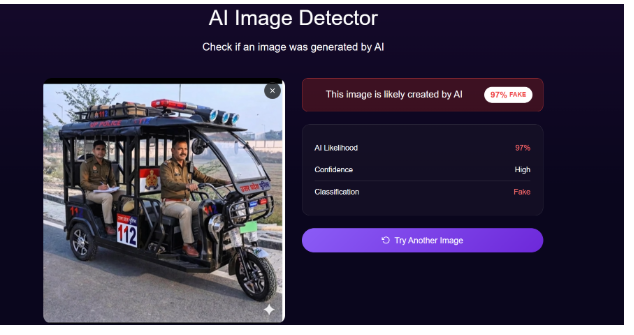

A video is being widely shared on social media showing a police officer driving an e-rickshaw, while two other policemen are seen in the back seat. Users sharing the clip claim that, due to a shortage of petrol, this is a new initiative by the Uttar Pradesh Police. However, research by CyberPeace found the viral claim to be false. Our research also confirms that the video is not real but AI-generated.

Claim

An Instagram user shared the viral video claiming that due to fuel shortages, Uttar Pradesh Police has started patrolling using e-rickshaws.

- Post link: https://www.instagram.com/reel/DWepKWXAeiE/

- Archive: https://archive.ph/QBNXs

Fact Check

To verify the claim, we first conducted a keyword search on Google but found no credible media reports supporting this claim.

Next, we extracted keyframes from the viral video and performed a reverse image search using Google Lens. During this process, we found the same video uploaded on an Instagram channel on March 28, 2026. The uploader clearly mentioned that the video was created purely for entertainment purposes.

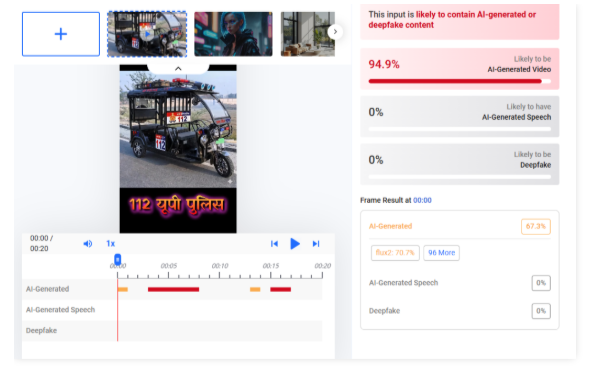

We further analyzed the video using AI detection tools. When scanned with Hive Moderation, the results indicated that the video is approximately 94% AI-generated.

In the next step, we also tested the clip using DeepAI. According to its analysis, the video is about 97% AI-generated.
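Cross-checking two detectors, as done above, can be summarized with a small helper. This is a hedged sketch: the function `summarize_scores` and the 0.9 agreement threshold are assumptions chosen for illustration, not settings used by Hive Moderation or DeepAI, and the scores are simply the approximate percentages reported in the text.

```python
def summarize_scores(scores: dict[str, float], threshold: float = 0.9) -> dict:
    """Summarize detector scores and report whether all meet the threshold."""
    flagged = [name for name, score in scores.items() if score >= threshold]
    return {
        "min": min(scores.values()),
        "max": max(scores.values()),
        "flagged": flagged,
        "all_agree": len(flagged) == len(scores),
    }

# Approximate scores reported above, expressed as fractions.
scores = {"Hive Moderation": 0.94, "DeepAI": 0.97}

summary = summarize_scores(scores)
print(summary)
```

Agreement between independent tools strengthens a finding but does not prove it, which is why the uploader's own note that the clip was made for entertainment remains the stronger evidence here.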

Conclusion

Our research clearly shows that the viral video is not authentic. It is an AI-generated clip created for entertainment purposes, and the claim that Uttar Pradesh Police has started e-rickshaw patrolling due to petrol shortage is false.

Executive Summary

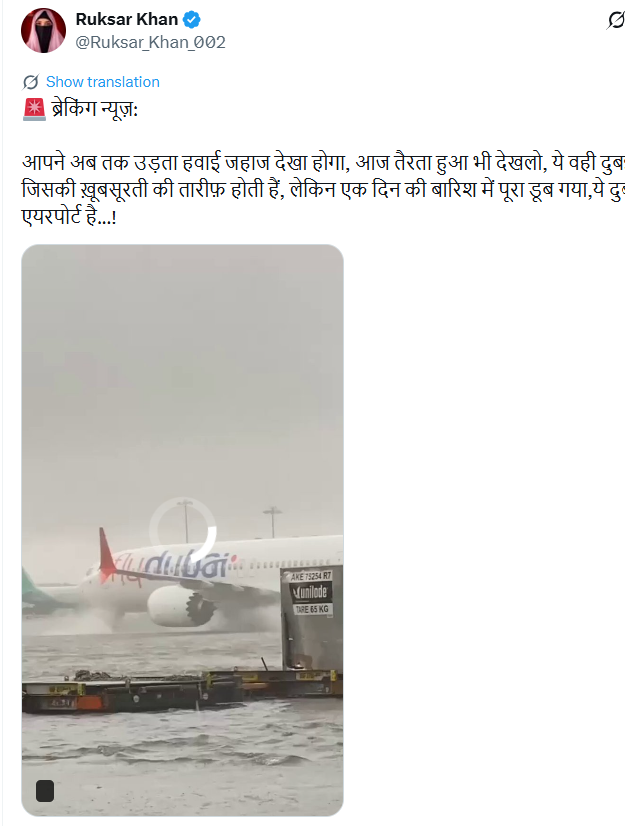

Amid reports of heavy rainfall and flooding in several cities of the United Arab Emirates, a video is being widely circulated on social media claiming to show recent scenes from Dubai. The clip allegedly depicts severe waterlogging at Dubai Airport and inside shopping malls, with users linking it to a “recent storm.” According to research by CyberPeace, the viral footage is not recent. The video is actually a compilation of three different clips stitched together and dates back to 2024, when Dubai experienced unprecedented flooding following heavy rains.

Claim

The misleading post was shared by an X (formerly Twitter) user named ‘Ruksar Khan’ on March 28, 2026, with a caption suggesting that Dubai had been submerged after just one day of rain. The post attempted to sensationalize the situation by portraying the visuals as current.

Fact Check:

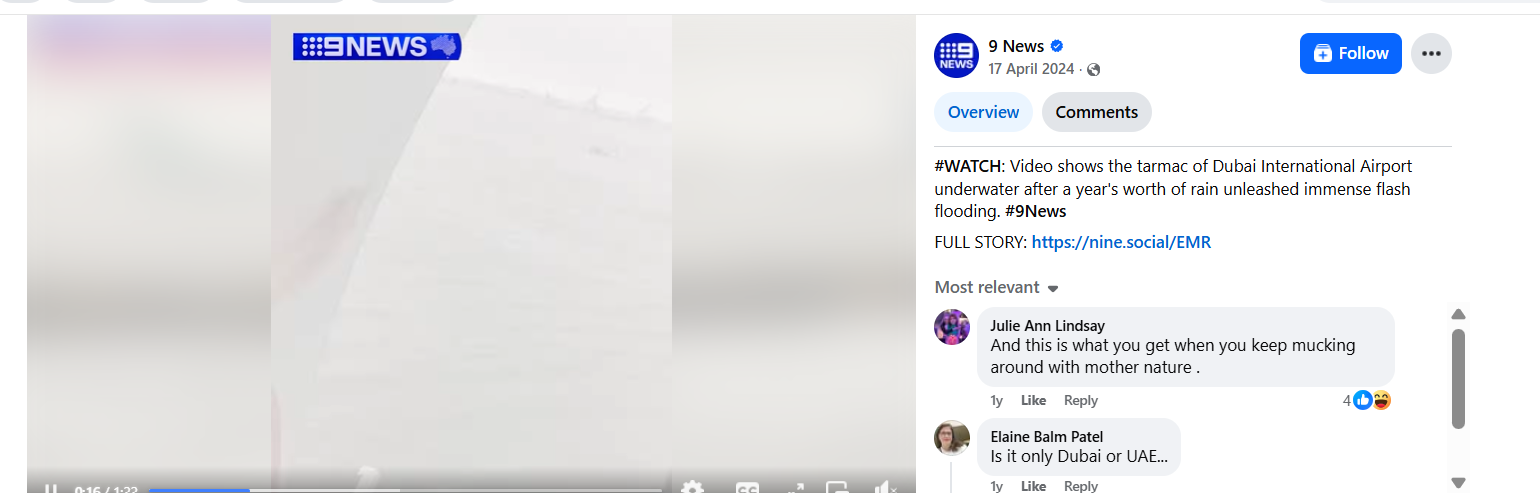

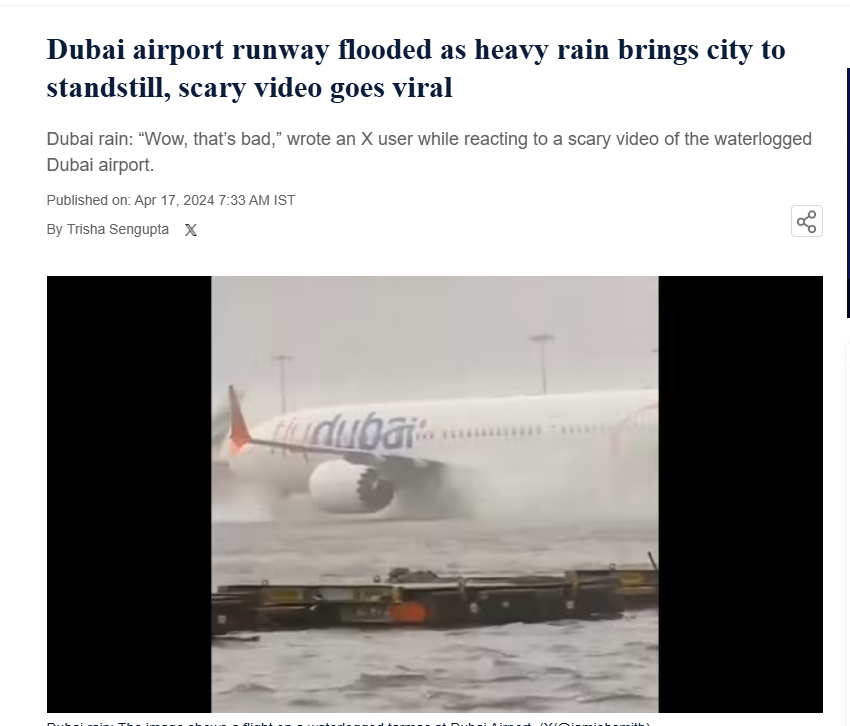

To verify the claim, keyframes from the viral video were extracted using the InVid tool and analyzed through reverse image search. One of the clips was traced to a Facebook post by “9 News,” uploaded on April 17, 2024. The video showed waterlogged runways at Dubai International Airport following intense rainfall and flooding.

Further verification led to a report published by Hindustan Times on April 17, 2024, which featured similar visuals and confirmed that the footage was from the floods that hit Dubai in 2024.

Conclusion:

The viral claim suggesting that the video shows recent flooding in Dubai is false. The footage is nearly two years old and originates from the 2024 floods in Dubai. It is now being reshared with misleading claims to create confusion around current weather events.

Executive Summary

A video is rapidly circulating on social media showing a man enthusiastically dancing to the Hindi song Sun Sahiba Sun. The clip is being shared with a sensational claim that it is a private video leaked from the hacked email account of FBI Director Kash Patel. In the video, a man can be seen dancing in a casual setting while people in the background cheer him on. Several users have linked the clip to an alleged cyberattack by Iran-linked hackers, attempting to connect it with ongoing international developments.

However, research by CyberPeace found that the video has been available online since at least 2020. It also resurfaced in 2022, long before the current claims emerged. There is no connection between the video and Kash Patel or any hacking incident. Further research confirmed that the clip is not recent and has no link to any cybersecurity breach. In 2022, the same video had gone viral as a humorous post, with claims that the man was celebrating because his wife had temporarily gone to her maternal home.

Claim

On March 29, 2026, an Instagram user named ‘greyinsightsbharat’ shared the video claiming it was leaked from Patel’s hacked Gmail account. The caption read: “FBI Director Kash Patel's Gmail Hacked by Iranian Hackers; His Alleged Dancing Video Leaked.”

Fact Check

The research involved extracting keyframes from the video and conducting reverse image searches, which revealed that the same clip had been shared multiple times in the past with different, unrelated claims.

A reverse search also led to a December 2022 media report featuring the same visuals. According to that report, the video showed a man joyfully dancing to celebrate his wife’s temporary visit to her parental home.

Additionally, findings confirm that the footage has existed online since at least 2020 and has previously gone viral. The song featured in the clip is from the 1985 Bollywood film Ram Teri Ganga Maili, originally sung by legendary artist Lata Mangeshkar.

Conclusion:

The claim that the viral dance video is a leaked private clip of FBI Director Kash Patel is false and misleading. Verified findings show that the video has been available on the internet since at least 2020 and had already gone viral in 2022 in a completely different and humorous context. There is no evidence linking the clip to any recent cyberattack, email hack, or data breach involving Patel. The resurfacing of this old video with a fabricated narrative highlights how unrelated content is often repurposed to create sensational misinformation, especially during sensitive geopolitical situations. Users are advised to verify such claims through credible sources before sharing, as misleading posts like these can distort public understanding and spread confusion.

Executive Summary

Following the reported box office success of ‘Dhurandhar 2: The Revenge’, released on March 19, 2026, a video of Ranveer Singh visiting a temple is being widely shared on social media. Users claim that the actor visited the Kashi Vishwanath Temple to offer prayers after the film’s success. Research by CyberPeace found that the viral claim is misleading. The video of Ranveer Singh visiting the Kashi Vishwanath Temple is not recent. It dates back to 2024, when he visited the temple with Kriti Sanon, and is unrelated to the release or success of ‘Dhurandhar 2: The Revenge’.

Claim

An Instagram user “newsbharatplus” shared the video on March 26, 2026, with a caption stating that after the massive success of Dhurandhar 2, Ranveer Singh visited the temple and performed rituals.

Fact Check

To verify the claim, we extracted keyframes from the viral video and conducted a reverse image search. This led us to a report published by Dainik Jagran on April 14, 2024. According to the report, Ranveer Singh had visited the Kashi Vishwanath Temple along with Kriti Sanon and noted fashion designer Manish Malhotra. During the visit, the trio was seen offering prayers, wearing traditional attire, and applying sandalwood tilak.

https://www.jagran.com/entertainment/bollywood-ranveer-singh-and-kriti-sanon-visits-kashi-vishwanath-temple-with-manish-malhotra-see-photos-here-23696781.html

We also found a video report on the official YouTube channel of Times Now Navbharat, uploaded on April 15, 2024, showing Ranveer Singh and Kriti Sanon at the temple. The report also featured visuals from a fashion event held in Varanasi.

- https://www.youtube.com/watch?v=OMuW_SVbfb4

Conclusion

The viral claim is misleading. The video of Ranveer Singh visiting the Kashi Vishwanath Temple is not recent. It dates back to 2024, when he visited the temple with Kriti Sanon, and is unrelated to the release or success of ‘Dhurandhar 2: The Revenge’.