#FactCheck - "AI-Generated Image of UK Police Officers Bowing to Muslims Goes Viral"

Executive Summary:

A viral picture on social media showing UK police officers bowing to a group of Muslims has led to debates and discussions. An investigation by the CyberPeace Research Team found that the image is AI-generated. The viral claim is false and misleading.

Claims:

A viral image on social media depicts UK police officers bowing to a group of Muslim people on the street.

Fact Check:

We conducted a reverse image search on the viral image. It did not lead to any credible news source or original post that confirmed the authenticity of the image. In our image analysis, we found a number of anomalies that are typical of AI-generated images, for example in the uniforms and facial expressions of the police officers. In addition, the shadows and reflections on the officers' uniforms did not match the lighting of the scene, and the facial features of the individuals in the image appeared unnaturally smooth and lacked the detail expected in real photographs.

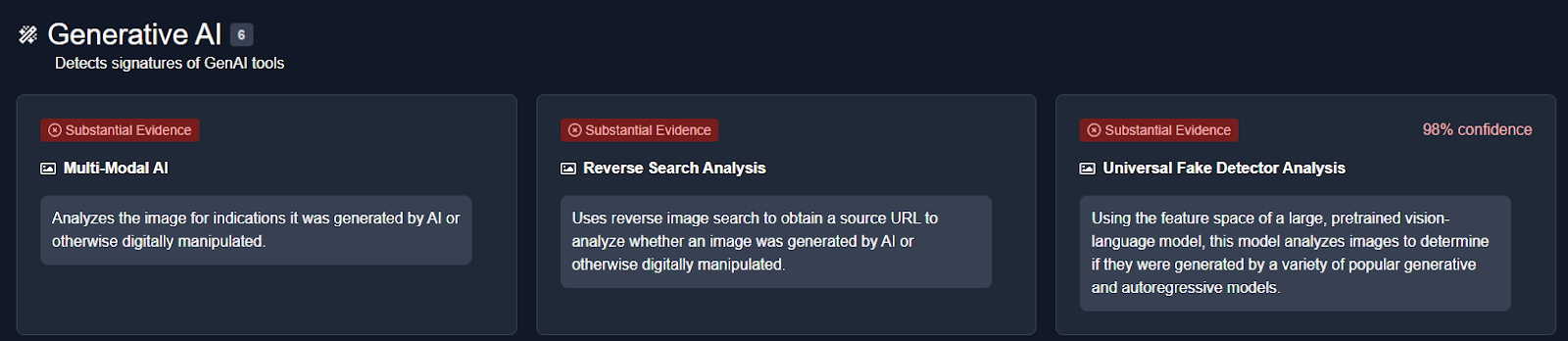

We then analysed the image using an AI detection tool named True Media. The tool indicated that the image was highly likely to have been generated by AI.

We also checked official UK police channels and news outlets for any records or reports of such an event. No credible sources reported or documented any instance of UK police officers bowing to a group of Muslims, further confirming that the image is not based on a real event.

Conclusion:

The viral image of UK police officers bowing to a group of Muslims is AI-generated. CyberPeace Research Team confirms that the picture was artificially created, and the viral claim is misleading and false.

- Claim: UK police officers were photographed bowing to a group of Muslims.

- Claimed on: X, Website

- Fact Check: Fake & Misleading

Related Blogs

Introduction

Since the inception of the Internet and of social media platforms such as Facebook, X (Twitter), and Instagram, governments and other stakeholders, both in India and in foreign jurisdictions, have looked to intermediaries to assume responsibility for the content circulated on these platforms, and various legal provisions reflect that responsibility. For the first time in many years, these intermediaries have come together to moderate content by setting a standard for its creators and propagators. The influencer marketing industry in India is at a crucial juncture, with its market value projected to exceed Rs. 3,375 crore by 2026. But every industry has its complications: here, a section of content creators fails to maintain standards of integrity and propagates content that raises concerns about authenticity and transparency, often violating intellectual property rights (IPR) and privacy.

As influencer marketing continues to shape digital consumption, the need for ethical and transparent content grows stronger. To address this, the India Influencer Governing Council (IIGC) has released its Code of Standards, aiming to bring accountability and structure to the fast-evolving online space.

Bringing Accountability to the Digital Fame Game

The India Influencer Governing Council (IIGC), established on 15th February, 2025, was founded with the objective of empowering creators, advocating for fair policies, and promoting responsible content creation. The IIGC's Code of Standards arrives just in time, a necessary safeguard before social media devolves into a chaotic marketplace where anything and everything is up for grabs. Without effective regulation, digital platforms become a marketplace for misinformation and exploitation.

The IIGC leads the movement with clarity: the Code spans 20 sections governing key areas such as paid partnership disclosures, AI-generated personas, content safety, and financial compliance.

Highlights from the Code of Standards

- The Code exhibits a technical understanding of the content creation and influencer marketing industry. The preliminary sections advocate for accuracy, transparency, and maintaining credibility with the audience that engages with the content. The most fundamental development is the “Paid Partnership Disclosure” requirement in Section 2 of the Code, which mandates disclosure of any material connection, such as a financial agreement or collaboration with the brand.

- Another timely development is the disclosure requirement for “AI Influencers”: the nature of the influencer has to be disclosed, and such influencers, whether fully virtual or partially AI-enhanced, must maintain the same standards as any human influencer.

- The code ranges across various other aspects of influencer marketing, such as expressing unpaid “Admiration” for the brand and public criticism of the brand, being free from personal bias, honouring financial agreements, non-discrimination, and various other standards that set the stage for a safe and fair digital sphere.

- The Code also requires platform users and influencers to handle sexual and sensitive content with careful deliberation: such content should be used only in educational and health-related contexts and must not violate community standards. The Code includes various other standards that work towards making digital platforms safer for younger generations and impressionable minds.

A Code Without Claws? Challenges in Enforcement

The biggest obstacle to the effective implementation of the code is distinguishing between an honest promotion and a paid brand collaboration without any explicit mention of such an agreement. This makes influencer marketing susceptible to manipulation, and the manipulation cannot be tackled with a straitjacket formula, as it might be found in the form of exaggerated claims or omission of critical information.

Another hurdle is that compliance with advertising standards is voluntary for influencers. Influencer marketing takes place in a borderless cyberspace, where influencers often disregard these standards to maximise their earnings.

The debate between self-regulation and government oversight is ongoing. Experience shows that overreliance on self-regulation has proven inadequate, and precise regulatory oversight is imperative given that social media platforms operate as a transnational commercial marketplace.

CyberPeace Recommendations

- Introduction of a licensing framework for influencers that fall into the “highly followed” category with high engagement, who are more likely to shape the audience’s views.

- Usage of technology to align ethical standards with influencer marketing practices, ensuring that misleading advertisements do not find a platform to deceive innocent individuals.

- Educating the audience or consumers on the internet about the ramifications of negligence and their rights in the digital marketplace. Ensuring a well-established grievance redressal mechanism via digital regulatory bodies.

- Continuous and consistent collaboration and cooperation between influencers, brands, regulators, and consumers to establish an understanding and foster transparency and a unified objective to curb deceptive advertising practices.

References

- https://iigc.org/code-of-standards/influencers/code-of-standards-v1-april.pdf

- https://legalonus.com/the-impact-of-influencer-marketing-on-consumer-rights-and-false-advertising/

- https://exhibit.social/news/india-influencer-governing-council-iigc-launched-to-shape-the-future-of-influencer-marketing/

Introduction

Deepfakes are produced by artificial intelligence (AI) technology that employs deep learning to generate realistic-looking but fake videos or images. The algorithms analyse large volumes of data to discover patterns and produce convincing, realistic results. Deepfakes use this technology to modify videos or photos so that they appear to show events that never happened or people who never existed. The procedure begins with gathering large volumes of visual and audio data about the target individual, usually obtained from publicly accessible sources such as social media or public appearances. This data is then used to train a deep-learning model to resemble the target of the deepfake.

Recent Cases of Deepfakes

In an unusual turn of events, a man from northern China became the victim of a sophisticated deepfake scam. This incident has heightened concerns about the use of artificial intelligence (AI) tools to aid financial crimes, putting authorities and the general public on high alert.

During a video conversation, a scammer successfully impersonated the victim’s close friend using AI-powered face-swapping technology. The scammer duped the unwary victim into transferring 4.3 million yuan (nearly Rs 5 crore). The fraud occurred in Baotou, China.

AI ‘deep fakes’ of innocent images fuel spike in sextortion scams

Artificial intelligence-generated “deepfakes” are fuelling sextortion frauds like dry brush feeding a raging wildfire. According to the FBI, the number of nationally reported sextortion cases rose by 322% between February 2022 and February 2023, with a notable spike since April due to AI-doctored photographs. The FBI also warns that innocent photographs or videos posted on social media or sent in messages can be distorted into sexually explicit, AI-generated visuals that are “true-to-life” and very hard to distinguish from real ones. According to the FBI, predators, often located in other countries, use doctored AI photographs against juveniles to extort money from them or their families, or to obtain actual sexually graphic images.

Deepfake Applications

- Lensa AI.

- Deepfakes Web.

- Reface.

- MyHeritage.

- DeepFaceLab.

- Deep Art.

- Face Swap Live.

- FaceApp.

Deepfake examples

There are numerous high-profile Deepfake examples. One is a video released by actor Jordan Peele, who combined actual footage of Barack Obama with his own imitation of Obama to convey a warning about Deepfake videos.

Another video, which circulated most notably on Instagram, shows Facebook CEO Mark Zuckerberg discussing how Facebook ‘controls the future’ with stolen user data. The original video is from a speech he delivered on Russian election meddling; only 21 seconds of that address were used to create the new version. However, the vocal impersonation fell short of Jordan Peele’s Obama and gave the fake away.

The dark side of AI-Generated Misinformation

- Misinformation generated by AI distorts the truth, making it difficult to distinguish fact from fiction.

- People can unmask AI content by looking for discrepancies and for the lack of a human touch.

- AI content detection technologies can detect and neutralise disinformation, preventing it from spreading.

Safeguards against Deepfakes

Technology is not the only way to guard against Deepfake videos. Good fundamental security practices are incredibly effective for combating Deepfakes. For example, incorporating automatic checks into any mechanism for disbursing payments might have prevented numerous Deepfake and related frauds. You might also:

- Make regular backups, which safeguard your data from ransomware and allow you to restore damaged data.

- Use different, strong passwords for different accounts, so that a compromise of one network or service does not mean that others are compromised as well. You do not want someone who gets into your Facebook account to be able to access your other accounts.

- Use a good security package, such as Kaspersky Total Security, to secure your home network, laptop, and smartphone against cyber threats. This bundle includes anti-virus software, a VPN to protect against compromised Wi-Fi connections, and webcam security.
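The automatic payment checks mentioned above can be pictured with a minimal sketch. Everything in it is an assumption made for illustration: the threshold, the list of known payees, and the names `PaymentRequest` and `hold_reasons` are hypothetical and do not describe any real payment system. The idea is only that a transfer which looks unusual is held until it is confirmed through a separate, trusted channel.

```python
from dataclasses import dataclass

# Hypothetical policy values, chosen only for illustration.
REVIEW_THRESHOLD = 100_000  # amounts above this are held for review
KNOWN_PAYEES = {"ACME-SUPPLIES", "CITY-UTILITIES"}


@dataclass
class PaymentRequest:
    payee: str
    amount: float
    requested_over_video_call: bool = False
    # True once the request is confirmed via a separate channel,
    # e.g. a call-back to a phone number already on file.
    confirmed_out_of_band: bool = False


def hold_reasons(req: PaymentRequest) -> list[str]:
    """Return the reasons a payment should be held; an empty list means release."""
    reasons = []
    if req.amount > REVIEW_THRESHOLD:
        reasons.append("amount above review threshold")
    if req.payee not in KNOWN_PAYEES:
        reasons.append("payee not seen before")
    if req.requested_over_video_call:
        reasons.append("request arrived over a video call")
    # Confirmation through a separate, trusted channel clears the hold.
    if reasons and req.confirmed_out_of_band:
        return []
    return reasons


request = PaymentRequest(
    payee="NEW-FRIEND-ACCOUNT",
    amount=4_300_000,
    requested_over_video_call=True,
)
print(hold_reasons(request))
```

In this sketch a large transfer to an unfamiliar account, requested during a video call, is held on all three grounds until someone confirms it outside the call. Real systems would tune these rules to their own risk profile.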

What is the future of Deepfakes?

Deepfake technology is constantly advancing. Two years ago, Deepfake videos were easy to spot because of the clumsy movement and the fact that the simulated figure never seemed to blink. However, the most recent generation of fake videos has evolved and adapted.

There are currently approximately 15,000 Deepfake videos available online. Some are just for fun, while others attempt to sway your opinion. But now that it only takes a day or two to make a new Deepfake, that number could rise rapidly.

Conclusion

The distinction between authentic and fake content will undoubtedly become more challenging to identify as technology advances. As a result, experts feel it should not be up to individuals to discover deep fakes in the wild. “The responsibility should be on the developers, toolmakers, and tech companies to create invisible watermarks and signal what the source of that image is,” they stated. Several startups are also working on approaches for detecting deep fakes.

Introduction:

The National Security Council Secretariat, in strategic partnership with the Rashtriya Raksha University, Gujarat, conducted the 12-day Bharat National Cyber Security Exercise 2024 from 18th November to 29th November. The exercise included landmark events such as a CISO (Chief Information Security Officer) Conclave and a Cyber Security Start-up Exhibition, which were inaugurated on 27 November 2024. Other key features of the exercise included cyber defense training, live-fire simulations, and strategic decision-making simulations. The aim of the exercise was to equip senior government officials and personnel in critical sector organisations with skills to deal with cybersecurity issues. The event also featured speeches, panel discussions, and initiatives such as the release of the National Cyber Reference Framework (NCRF), which provides a structured approach to cyber governance, and the launch of the National Cyber Range (NCR) 1.0, a cutting-edge facility for cyber security research training.

The Deputy National Security Advisor, Shri T.V. Ravichandran (IPS), reiterated in his speech the importance of bringing technology to bear on cyber security challenges and of shaping India’s cyber strategy in a proactive manner. The CISOs of both government and private entities were encouraged to take up multidimensional efforts that include not only technological upkeep but also soft skills for awareness.

CyberPeace Outlook

The Bharat National Cybersecurity Exercise (Bharat NCX) 2024 underscores India’s commitment to a comprehensive and inclusive approach to strengthening its cybersecurity ecosystem. By fostering collaboration between startups, government bodies, and private organizations, the initiative facilitates dialogue among CISOs and promotes a unified strategy toward cyber resilience. Platforms like Bharat NCX encourage exploration in the Indian entrepreneurial space, enabling startups to innovate and contribute to critical domains like cybersecurity. Developments such as IIT Indore’s intelligent receivers (useful for both telecommunications and military operations) and the Bangalore Metro Rail Corporation Limited’s plans to establish a dedicated Security Operations Centre (SOC) to counter cyber threats are prime examples of technological strides fostering national cyber resilience.

Cybersecurity cannot be understood in isolation: it is an integral aspect of national security, impacting the broader digital infrastructure supporting Digital India initiatives. The exercise emphasises skills training, creating a workforce adept in cyber hygiene, incident response, and resilience-building techniques. Such efforts bolster proficiency across sectors, aligning with the government’s Atmanirbhar Bharat vision. By integrating cybersecurity into workplace technologies and fostering a culture of awareness, Bharat NCX 2024 is a platform that encourages innovation and is a testament to the government’s resolve to fortify India’s digital landscape against evolving threats.

References

- https://ciso.economictimes.indiatimes.com/news/cybercrime-fraud/bharat-cisos-conclave-cybersecurity-startup-exhibition-inaugurated-under-bharat-ncx-2024/115755679

- https://pib.gov.in/PressReleasePage.aspx?PRID=2078093

- https://timesofindia.indiatimes.com/city/indore/iit-indore-unveils-groundbreaking-intelligent-receivers-for-enhanced-6g-and-military-communication-security/articleshow/115265902.cms

- https://www.thehindu.com/news/cities/bangalore/defence-system-to-be-set-up-to-protect-metro-rail-from-cyber-threats/article68841318.ece

- https://rru.ac.in/wp-content/uploads/2021/04/Brochure12-min.pdf