Introduction

Today, we can access almost any information in seconds, from the comfort of our homes or offices. The internet and its applications have made information far easier to reach, but the biggest question remains unanswered: which information is legitimate and which is fake? As netizens, we must be critical of what information we access and how we access it.

Influence of Bad Actors

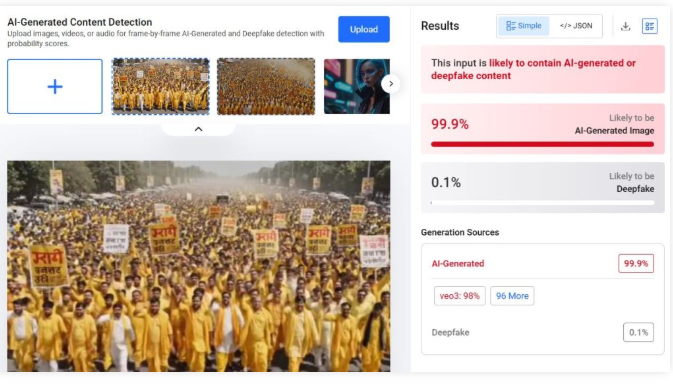

Bad actors are one of the biggest threats to our cyberspace. They fill the online world with fear and with activities that directly harm users financially or emotionally, exploiting their vulnerabilities and attacking them through social engineering. One such threat is website spoofing, in which bad actors create a website that closely imitates the original website of a reputed brand. The similarity is so uncanny that first-time or occasional visitors find it very difficult to tell the two websites apart. The aim is to obtain sensitive information, such as personal and financial details, and in some cases to plant malware on the user's system to facilitate other forms of cybercrime. Such websites often carry very lucrative offers or deals, making it easier for people to fall prey to them. In turn, the bad actors can obtain sensitive information directly from users without even calling or messaging them.

The Incident

A Noida-based senior citizen couple was having trouble with their dishwasher, and to get it fixed, they searched for the customer care number on their web browser. The couple came across a customer care number, 1800258821, listed for IFB, an electronics company. They dialed the number and got in touch with a fake customer care representative who, upon hearing the couple's issue, directed them to a supposedly senior official of the company. The senior official spoke to the lady and, despite the call dropping a few times, was adamant about staying in touch with her. Once he had established trust, he asked her to download an app, which he portrayed as an app for registering complaints and carrying out quick actions. The fake senior official asked the lady to share her location and to grant the application a few access permissions, along with a four-digit OTP that looked harmless. He further asked her to make a transaction of Rs 10 as a complaint processing fee. Until this moment, the couple was under the impression that their complaint had been registered and that the issue with their dishwasher would be rectified soon.

Later that night, the couple received a message from their bank informing them that Rs 2.25 lakh had been debited from their joint bank account. The following morning, they saw yet another text message informing them of a further debit of Rs 5.99 lakh from the same account. The couple immediately understood that they had become victims of cyber fraud. They immediately lodged a complaint with the cyber fraud helpline 1930 and with their bank. An FIR has been registered with the Noida Cyber Cell.

How can senior citizens prevent such frauds?

Senior citizens can be particularly vulnerable to cyber frauds due to their lack of familiarity with technology and potential cognitive decline. Here are some safeguards that can help protect them from cyber frauds:

- Educate seniors on common cyber frauds: It’s important to educate seniors about the most common types of cyber frauds, such as phishing, smishing, vishing, and scams targeting seniors.

- Use strong passwords: Encourage seniors to use strong and unique passwords for their online accounts and to change them regularly.

- Beware of suspicious emails and messages: Teach seniors to be wary of suspicious emails and messages that ask for personal or financial information, even if they appear to be from legitimate sources.

- Verify before clicking: Encourage seniors to verify the legitimacy of links before clicking on them, especially in emails or messages.

- Keep software updated: Ensure seniors keep their software, including antivirus and operating system, up to date.

- Avoid public Wi-Fi: Discourage seniors from using public Wi-Fi for sensitive transactions, such as online banking or shopping.

- Check financial statements: Encourage seniors to regularly check their bank and credit card statements for any suspicious transactions.

- Secure devices: Help seniors secure their devices with antivirus and anti-malware software and ensure that their devices are password protected.

- Use trusted sources: Encourage seniors to use trusted sources when making online purchases or providing personal information online.

- Seek help: Advise seniors to seek help if they suspect they have fallen victim to a cyber fraud. They should contact their bank or credit card company and report the fraud to the relevant authorities. Calling 1930 should be the first and primary step.
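
For family members or volunteers who are comfortable with a little code, the "verify before clicking" safeguard can be partly illustrated in Python. The snippet below is only a minimal sketch under stated assumptions: the domains in `TRUSTED_DOMAINS` are placeholders invented for illustration, and the function name `is_trusted_link` is hypothetical. It checks that a link uses HTTPS and that its host is exactly a trusted domain or a subdomain of one, which rejects common spoofing tricks such as placing the brand name at the start of an unrelated domain. It cannot judge whether a site is genuine, so it supplements rather than replaces the checks listed above.

```python
from urllib.parse import urlsplit

# Assumption: a hand-maintained allowlist. These domains are placeholders,
# not real brand or bank addresses.
TRUSTED_DOMAINS = {"example-bank.com", "example-brand.in"}


def is_trusted_link(url: str) -> bool:
    """Return True only for HTTPS links whose host is a trusted domain
    or a subdomain of a trusted domain."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if parts.scheme != "https" or not host:
        return False
    # Match the whole domain, not just a prefix or substring of the host.
    return any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)


if __name__ == "__main__":
    print(is_trusted_link("https://www.example-bank.com/login"))           # True
    print(is_trusted_link("https://example-bank.com.support-desk.net/"))   # False
    print(is_trusted_link("http://example-bank.com/login"))                # False
```

Comparing the full host name, rather than searching for the brand name anywhere in the link, is the important design choice here: spoofed addresses usually contain the brand name but end in a domain the brand does not own.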

Conclusion

Cyberspace is a new space for people of all generations. The older population is a little more vulnerable in it, as they have not used gadgets or the internet for most of their lives. They now depend on devices and applications for their convenience, but they still do not fully understand the technology and its dark side. As netizens, we are responsible for safeguarding both the youth and the older population to create a wholesome, safe, secure and sustainable cyber ecosystem. It's time to bring the youth's understanding of technology and the life experience of the older population together to create SOPs and best practices for eradicating such cyber frauds from our cyberspace. CyberPeace Foundation has created a CyberPeace Helpline for victims, where they will be given timely assistance in resolving their issues. Victims can reach the helpline at +91 95700 00066 or email their issues to helpline@cyberpeace.net.