#Fact Check – Analysis of Viral Claims Regarding India's UNSC Permanent Membership

Executive Summary:

Recently, a large volume of fake news has circulated about India’s standing in the United Nations Security Council (UNSC), including claims that it now holds a veto. This report, compiled by the CyberPeace Research Wing, examines the provenance and credibility of that information and debunks it. Neither the UN nor any relevant body has released any information regarding permanent UNSC membership for India, although India has made notable progress toward this strategic goal.

Claims:

Viral posts claim that India has become the first-ever unanimously voted permanent and veto-holding member of the United Nations Security Council (UNSC). Those posts also claim that this was achieved through overwhelming international support, granting India the same standing as the current permanent members.

Factcheck:

The CyberPeace Research Team conducted a thorough keyword search of the official UNSC website and its associated social media profiles; there are presently no official announcements declaring India a permanent member of the UNSC. India is not a permanent member, and the five permanent members (China, France, Russia, the United Kingdom, and the USA) still hold veto power. Furthermore, India, along with Brazil, Germany, and Japan (the G4 nations), advocates reform of the UNSC; yet no formal resolution has been adopted to alter the status quo of permanent membership. We then used tools such as Google Fact Check Explorer to examine these viral claims and found several articles by other fact-checking organizations debunking them.

The viral claims also lack credible sources or authenticated references from international institutions, further discrediting the claims. Hence, the claims made by several users on social media about India becoming the first-ever unanimously voted permanent and veto-holding member of the UNSC are misleading and fake.

Conclusion:

The viral claim that India has become a permanent member of the UNSC with veto power is entirely false. India, along with several other member states, continues to press for a restructuring of the UN Security Council. However, to date there have been no official or formal declarations or commitments to alter the composition of the permanent members or their powers. Social media users are advised to rely on verified sources for information and to refrain from spreading unsubstantiated claims that contribute to misinformation.

- Claim: India’s Permanent Membership in UNSC.

- Claimed On: YouTube, LinkedIn, Facebook, X (Formerly Known As Twitter)

- Fact Check: Fake & Misleading.

Related Blogs

In the vast, uncharted territories of the digital world, a sinister phenomenon is proliferating at an alarming rate. It's a world where artificial intelligence (AI) and human vulnerability intertwine in a disturbing combination, creating a shadowy realm of non-consensual pornography. This is the world of deepfake pornography, a burgeoning industry that is as lucrative as it is unsettling.

According to a recent assessment, at least 100,000 deepfake porn videos are readily available on the internet, with hundreds, if not thousands, being uploaded daily. This staggering statistic prompts a chilling question: what is driving the creation of such a vast number of fakes? Is it merely for amusement, or is there a more sinister motive at play?

Recent Trends and Developments

An investigation by India Today’s Open-Source Intelligence (OSINT) team reveals that deepfake pornography is rapidly morphing into a thriving business. AI enthusiasts, creators, and experts are extending their expertise, investors are injecting money, and services ranging from small financial companies to giants like Google, VISA, Mastercard, and PayPal are being misused in this dark trade. Synthetic porn has existed for years, but advances in AI and the increasing availability of the technology have made it easier, and more profitable, to create and distribute non-consensual sexually explicit material. The 2023 State of Deepfake report by Home Security Heroes reveals a staggering 550% increase in the number of deepfakes compared to 2019.
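To make the 550% figure concrete, the short sketch below converts a percentage increase into a final value. The baseline of 1,000 is a hypothetical number chosen purely for illustration; it is not a count taken from the report.

```python
def apply_percent_increase(baseline: float, percent_increase: float) -> float:
    """Return the value reached after growing `baseline` by `percent_increase` percent."""
    return baseline * (1 + percent_increase / 100)


# Hypothetical baseline, for illustration only (not a figure from the report).
hypothetical_2019_count = 1_000
print(apply_percent_increase(hypothetical_2019_count, 550))
```

In other words, a 550% increase means the later figure is 6.5 times the earlier one, not 5.5 times.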

What’s the Matter with Fakes?

But why should we be concerned about these fakes? The answer lies in the real-world harm they cause. India has already seen cases of extortion carried out by exploiting deepfake technology. An elderly man in UP’s Ghaziabad, for instance, was tricked into paying Rs 74,000 after receiving a deepfake video of a police officer. The situation could have been even more serious if the perpetrators had decided to create deepfake porn of the victim.

The danger is particularly severe for women. The 2023 State of Deepfake Report estimates that at least 98 percent of all deepfakes are pornographic and that 99 percent of the victims are women. A study by Harvard University refrained from using the term “pornography” for creating, sharing, or threatening to create or share sexually explicit images and videos of a person without their consent. “It is abuse and should be understood as such,” it states.

Based on interviews of victims of deepfake porn last year, the study said 63 percent of participants talked about experiences of “sexual deepfake abuse” and reported that their sexual deepfakes had been monetised online. It also found “sexual deepfake abuse to be particularly harmful because of the fluidity and co-occurrence of online offline experiences of abuse, resulting in endless reverberations of abuse in which every aspect of the victim’s life is permanently disrupted”.

Creating deepfake porn is disturbingly easy. There are broadly two types of deepfakes: one featuring the faces of real people and another featuring computer-generated, hyper-realistic faces of people who do not exist. The first category is particularly concerning and is created by superimposing the faces of real people on existing pornographic images and videos, a task that AI tools have made simple.

During the investigation, platforms were found hosting deepfake porn of stars such as Jennifer Lawrence, Emma Stone, Jennifer Aniston, Aishwarya Rai, and Rashmika Mandanna, as well as TV actors and influencers such as Aanchal Khurana, Ahsaas Channa, Sonam Bajwa, and Anveshi Jain. It takes a few minutes and as little as Rs 40 for a user to create a high-quality, 15-second fake porn video on platforms like FakeApp and FaceSwap.

The Modus Operandi

These platforms brazenly flaunt their business associations and hide behind frivolous declarations such as: the content is “meant solely for entertainment” and “not intended to harm or humiliate anyone”. However, the irony of these disclaimers is not lost on anyone, especially when the platforms host thousands of non-consensual deepfake pornographic videos.

As fake porn content and its consumers surge, deepfake porn sites are rushing to forge collaborations with generative AI service providers and have integrated their interfaces for enhanced interoperability. The promise and potential of making quick bucks have given birth to step-by-step guides, video tutorials, and websites that offer tools and programs, recommendations, and ratings.

Nearly 90 per cent of all deepfake porn is hosted by dedicated platforms that charge for long-duration premium fake content and for creating porn of whoever a user wants, and that take requests for celebrities. To encourage creators further, these platforms enable them to monetize their content.

One such website, Civitai, has a system in place that pays “rewards” to creators of AI models that generate “images of real people”, including ordinary people. It also enables users to post AI images, prompts, model data, and LoRA (low-rank adaptation of large language models) files used in generating the images. Model data designed for adult content is gaining great popularity on the platform, and it does not target only celebrities; ordinary people are equally susceptible.

Access to premium fake porn, like any other content, requires payment. But how can a gateway process payment for sexual content that lacks consent? It seems financial institutions and banks are not paying much attention to this legal question. During the investigation, many such websites were found accepting payments through services like VISA, Mastercard, and Stripe.

Those who have failed to register/partner with these fintech giants have found a way out. While some direct users to third-party sites, others use personal PayPal accounts to manually collect money in the personal accounts of their employees/stakeholders, which potentially violates the platform's terms of use that ban the sale of “sexually oriented digital goods or content delivered through a digital medium.”

Among others, the MakeNude.ai web app – which lets users “view any girl without clothing” in “just a single click” – has an interesting method of circumventing restrictions around the sale of non-consensual pornography. The platform has partnered with Ukraine-based Monobank and Dublin’s BetaTransfer Kassa which operates in “high-risk markets”.

BetaTransfer Kassa admits to serving “clients who have already contacted payment aggregators and received a refusal to accept payments, or aggregators stopped payments altogether after the resource was approved or completely freeze your funds”. To make payment processing easy, MakeNude.ai seems to be exploiting the donation ‘jar’ facility of Monobank, which is often used by people to donate money to Ukraine to support it in the war against Russia.

The Indian Scenario

India is currently working toward dedicated legislation to address issues arising from deepfakes, though existing general laws that require platforms to remove offensive content also apply to deepfake porn. However, prosecuting and convicting offenders is extremely difficult for law enforcement agencies, as this is a borderless crime that sometimes spans several countries.

A victim can register a police complaint under Section 66E and Section 66D of the IT Act, 2000. The recently enacted Digital Personal Data Protection Act, 2023 aims to protect the digital personal data of users. The Union Government also recently issued an advisory to social media intermediaries to identify misinformation and deepfakes. The comprehensive law promised by Union IT Minister Ashwini Vaishnaw is expected to address these challenges.

Conclusion

In the end, the unsettling dance of AI and human vulnerability continues in the dark web of deepfake pornography. It's a dance that is as disturbing as it is fascinating, a dance that raises questions about the ethical use of technology, the protection of individual rights, and the responsibility of financial institutions. It's a dance that we must all be aware of, for it is a dance that affects us all.

References

- https://www.indiatoday.in/india/story/deepfake-porn-artificial-intelligence-women-fake-photos-2471855-2023-12-04

- https://www.hindustantimes.com/opinion/the-legal-net-to-trap-peddlers-of-deepfakes-101701520933515.html

- https://indianexpress.com/article/opinion/columns/with-deepfakes-getting-better-and-more-alarming-seeing-is-no-longer-believing/


Introduction

In an era where digital connectivity drives employment, investment, and communication, the most potent weapon of cybercriminals is ‘gaining trust’ with their sophisticated tactics. Prayagraj has been a recent battleground in India's cybercrime landscape. Within a one-year crackdown, over 10,400 SIM cards, 612 mobile device IMEIs, and 59 bank accounts were blocked, exposing a sprawling international fraud network. These activities primarily targeted unsuspecting individuals through Telegram job postings, fake investment tips, and mobile app scams, highlighting the darker side of convenience in cyberspace. With India now experiencing a wave of scams enabled by technology, this crackdown establishes a precedent for concerted cyber policing and awareness among citizens.

Digital Deceit: How the Scams Operated

SIM cards issued using fake or stolen identities are increasingly being used by cybercriminals in Prayagraj and elsewhere. These SIMs were the initial tool in a highly organised fraud system, allowing criminals to operate anonymously while abusing messaging services like WhatsApp and Telegram. The gangs involved in these scams, some of which reports have linked to locations such as Nepal, Pakistan, China, Dubai, and Myanmar, enticed their victims with high-yield stock market advice, remote employment offers, and promises of weekend work. Once engaged, victims were slowly manipulated into sending money in the name of application fees, verification fees, or investment contributions.

API Abuse and OTP Interception

What's more alarming about these scams is their technical sophistication. According to Prayagraj's cybercrime squad, several syndicates employed API-based mobile applications to intercept OTPs (One-Time Passwords) sent to Indian numbers. Such apps, cleverly disguised as genuine services or work-from-home software, collected personal details like bank account credentials and payment card data, allowing wrongdoers to carry out unauthorised transactions in a matter of minutes. The stolen funds were then quickly transferred through several mule accounts, rendering the money trail almost untraceable.

The Human Impact: How Citizens Were Trapped

Victims tended to be job seekers, students, or housewives seeking to earn additional income. Often, the scammers persuaded users to join Telegram channels offering free investment advice or job-referral schemes, creating an illusion of authenticity. Once on board, victims were sometimes even paid small commissions initially, creating a false sense of success. This tactic, known as “advance-fee confidence building,” made victims more likely to invest larger sums later, ultimately leading to complete financial loss.

Digital Arrest Threats and Bitcoin Ransom Scams

Aside from investment and job scam complaints, the cybercrime cell also saw several "digital arrest" scams, in which victims were coerced into sending money under threat of arrest for alleged involvement in criminal activities. Bitcoin extortion schemes were also used in some cases, with perpetrators threatening to expose victims' personal information or internet browsing history unless they were paid in cryptocurrency.

Law Enforcement’s Cyber Shield: Local Action, Global Impact

Recognising the extent of the threat, Prayagraj authorities implemented strategic measures to strengthen local policing. Cyber Units have been formed in each of the 43 police stations in the district, each made up of a sub-inspector, head constable, constable, lady constable, and computer operator. This decentralised model enables real-time response, improved victim support, and quicker forensic analysis of hacked devices. The nodal officer for cyber operations said that this multi-level action is not punitive but preventive, meant to break up syndicates before more harm is caused.

CyberPeace Recommendations: Prevention is Power

As cybercrime grows more advanced, citizens will have to keep pace with it. Prayagraj's experience highlights the importance of public awareness, digital literacy, and rapid response processes. To help prevent people from falling victim to such scams, CyberPeace advises the following:

- Don't click on dubious APK links sent on WhatsApp or Telegram.

- Do not share OTPs or confidential details, even if the source appears to be familiar.

- Never download unfamiliar apps that demand access to SMS or financial information.

- Block your SIM card, payment cards, and bank accounts at once if your phone is stolen.

- Report all cyber frauds to cybercrime.gov.in or your local Cyber Cell.

- Never join investment or job groups on social sites without verification.

- Refuse video calls from unknown numbers; some scammers use such calls to record and blackmail victims.
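The first two recommendations above can be sketched as a simple message check. This is only an illustration: the patterns and the `warning_flags` function are hypothetical, not a CyberPeace tool, and a real filter would need far broader coverage than two regular expressions.

```python
import re

# Illustrative patterns only (assumptions, not an exhaustive or official rule set).
APK_LINK = re.compile(r"https?://\S+\.apk\b", re.IGNORECASE)
OTP_REQUEST = re.compile(
    r"\b(share|send|tell|forward)\b.*\b(otp|one[- ]time password)\b",
    re.IGNORECASE,
)


def warning_flags(message: str) -> list[str]:
    """Return the names of the warning signs found in a chat message."""
    flags = []
    if APK_LINK.search(message):
        flags.append("apk_link")  # link that points directly at an Android package
    if OTP_REQUEST.search(message):
        flags.append("otp_request")  # someone asking the reader to hand over an OTP
    return flags


print(warning_flags("Install now: http://example.com/job-app.apk"))
print(warning_flags("Please share the OTP you just received"))
```

A check like this can only catch the most obvious cases; it does not replace the habit of verifying the sender and reporting suspicious messages.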

Conclusion

The Prayagraj crackdown reveals both the magnitude and the versatility of present-day cybercrime. From trans-border cartels to Telegram job scams, the cyber front is as intricate as ever. But this incident also illustrates what can be achieved when technology, law enforcement, and public awareness come together. To stay safe from cyber threats, India needs a cyber-conscious citizenry as much as an effective cyber cell. At CyberPeace, we know that defending cyberspace begins with cyber resilience, and the story of Prayagraj should encourage communities everywhere to take active digital precautions.

References

- https://www.hindustantimes.com/cities/lucknow-news/over-10k-sims-blocked-as-job-investment-frauds-rise-in-prayagraj-101753715061234.html

- https://consumer.ftc.gov/articles/how-recognize-and-avoid-phishing-scams

- https://faq.whatsapp.com/2286952358121083

- https://education.vikaspedia.in/viewcontent/education/digital-litercy/information-security/preventing-online-scams-cert-in-advisory?lgn=en

- https://cybercrime.gov.in/Accept.aspx

- https://www.linkedin.com/pulse/perils-advance-fee-fraud-protecting-yourself-from-scammers-sharma/

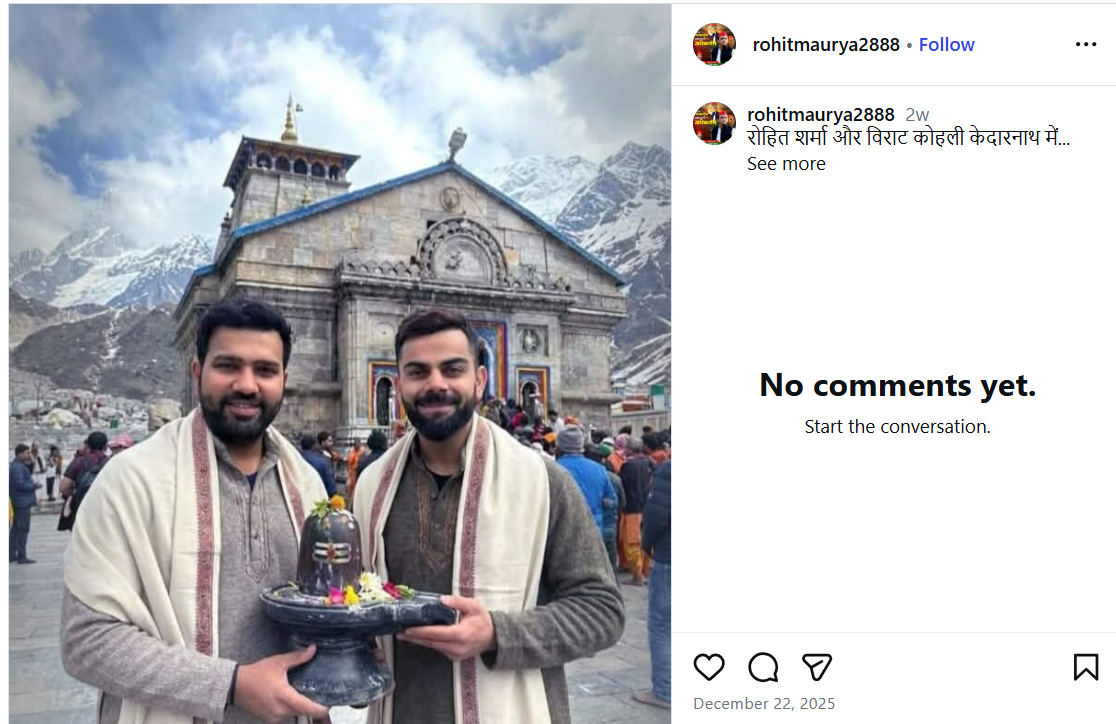

A photo featuring Indian cricketers Virat Kohli and Rohit Sharma is being widely shared on social media. In the image, both players are seen holding a Shivling, with the Kedarnath temple visible in the background. Users sharing the image claim that Virat Kohli and Rohit Sharma recently visited Kedarnath.

However, CyberPeace Foundation’s investigation found the claim to be false. Our verification established that the viral image is not real but has been created using Artificial Intelligence (AI) and is being circulated with a misleading narrative.

The Claim

An Instagram user shared the viral image on December 22, 2025, with the caption stating that Rohit Sharma and Virat Kohli are in Kedarnath. The post has since been widely reshared by other users, who assumed the image to be authentic.

Fact Check

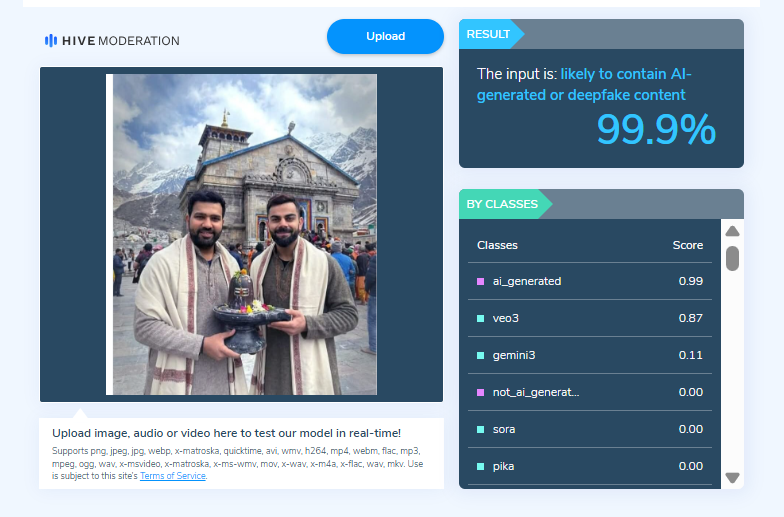

On closely examining the viral image, the Desk noticed visual inconsistencies suggesting that it may be AI-generated. To verify this, the image was scanned using the AI detection tool HIVE Moderation. According to the results, the image was found to be 99 per cent AI-generated.

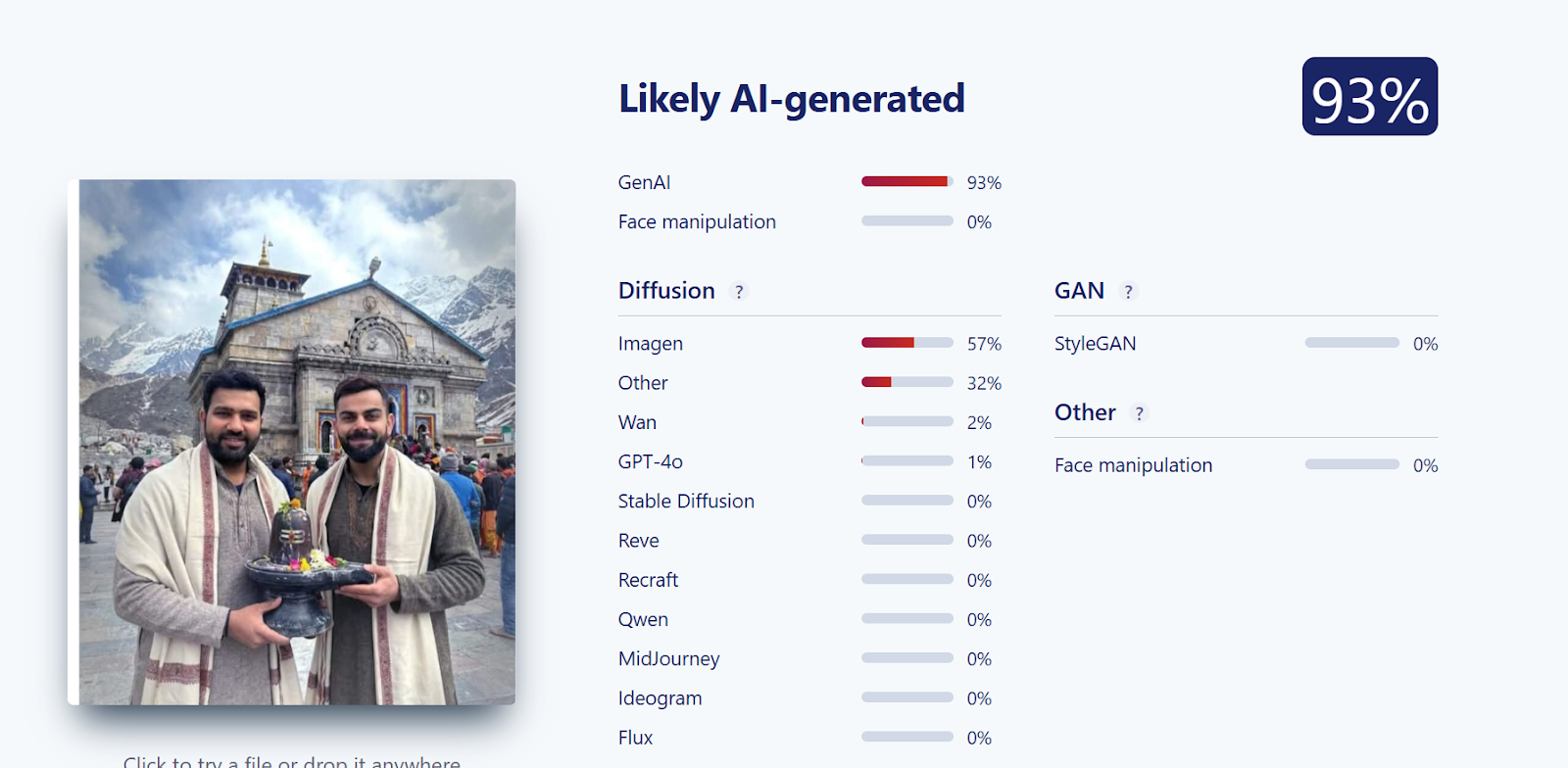

Further verification was conducted using another AI detection tool, Sightengine. The analysis revealed that the image was 93 per cent likely to be AI-generated, reinforcing the findings from the previous tool.
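One way to read the two scores together is sketched below. The scores are entered by hand from the results reported above; no detection tool is actually called, and the `DetectorResult` type, the `likely_ai_generated` function, and the 0.90 threshold are all assumptions made for illustration rather than the method used in this investigation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectorResult:
    tool: str
    ai_probability: float  # 0.0 to 1.0, as reported by the tool


def likely_ai_generated(results: list[DetectorResult], threshold: float = 0.90) -> bool:
    """True only if there is at least one result and every tool meets the threshold."""
    return bool(results) and all(r.ai_probability >= threshold for r in results)


# Scores copied manually from the two tool results described above.
results = [
    DetectorResult("HIVE Moderation", 0.99),
    DetectorResult("Sightengine", 0.93),
]
print(likely_ai_generated(results))
```

Requiring every tool to agree is a deliberately conservative choice: a single high score is treated as a lead to verify, not as a conclusion.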

Conclusion

CyberPeace Foundation’s research confirms that the viral image claiming Virat Kohli and Rohit Sharma visited Kedarnath is fabricated. The image has been generated using AI technology and is being falsely shared on social media as a real photograph.