Introduction

The passageways of the modern digital age are intricate and winding, a place where the reverberations of truth blend with, and hauntingly contrast against, the echoes of falsehood. The latest thread in this fabric of misinformation is a claim that has scurried through social media platforms, gaining the kind of traction that is both revealing and alarming about our times. It is a narrative that speaks to the heart of India's cultural and religious fabric: the construction of the Ram Temple in Ayodhya, a project enshrined in the collective consciousness of a nation and steeped in historical significance.

The claim in question suggests that the Ram Temple's construction has been covertly shifted 3 kilometres from its original, hallowed ground, the birthplace of Lord Ram. This assertion, which spread through the echo chambers of social media, has been bolstered by a screenshot of Google Maps, a digital cartographer that has inadvertently become a pawn in this game of truth and deception. The image purports to show the Ram Mandir at a location distinct and distant from the site where the Babri Masjid once stood. The claim went viral on social media and drew considerable public attention.

The Viral Tempest

In the face of such a viral tempest, IndiaTV's fact-checking arm, IndiaTVFactCheck, has stepped into the fray, wielding the sword of veracity against falsehood. Their investigation into this viral claim was meticulous, a deep dive into the digital representations that have fuelled this controversy. Upon examining the viral Google Maps screenshot, they noticed markings at two locations: one labelled as Shri Ram Janmabhoomi Temple and the other as 'Babar Masjid'. The latter, upon closer inspection and with the aid of Google's satellite imagery, was revealed to be the Shri Sita-Ram Birla Temple, a place of worship that stands in quiet dignity, far removed from the contentious whispers of social media.

The truth, as it often does, lay buried beneath layers of user-generated content on Google Maps, where the ability to tag any location with a name has sometimes led to the dissemination of incorrect information. Such labels can be corrected, of course, but not before they have woven themselves into the fabric of public discourse. The fact-check by IndiaTV revealed that the location mentioned in the viral screenshot is, indeed, the Shri Sita-Ram Birla Temple, and that the Ram Temple is being constructed at its original, intended site.

This revelation is not merely a victory for truth over falsehood but also a testament to the resilience of facts in the face of a relentless onslaught of misinformation. It is a reminder that the digital realm, for all its wonders, is also a shadowy theatre where narratives are constructed and deconstructed with alarming ease. The fake narratives that spread around significant events, such as the consecration ceremony of the Ram Temple, all rest on the same basis: the manipulation of truth and the distortion of reality to serve nefarious ends.

Fake Narratives; Misinformation

Consider the elaborate fake narratives spun around the ceremony, where hours have been spent on the internet building a web of deceit. Claims such as 'Mandir wahan nahin banaya gaya' (The temple is not being built at the site of the demolition) and the supposed issuance of new Rs 500 notes for the Ram Mandir were among the pieces of misinformation that went viral on social media amid the preparations for the consecration ceremony. These repetitive claims, albeit differently worded, were spread to further a single narrative on the internet. A study published in Nature attributed this phenomenon to people taking peripheral cues as signals of truth, a tendency that can increase with repetition.

The misinformation incidents surrounding the Ram Temple in Ayodhya are a microcosm of the larger battle between truth and misinformation. The false claims circulating online assert that the ongoing construction is not taking place at the original Babri Masjid site but rather 3 kilometres away. This misinformation, shared widely on social media, has been debunked upon closer examination. The claim is based on a screenshot of Google Maps showing two locations: the construction site of the Shri Ram Janmabhoomi Temple and another spot labelled 'Babar Masjid permanently closed', situated 3 kilometres away. The assertion questions the legitimacy of demolishing the Babri Masjid if the temple is being built elsewhere. However, a thorough fact-check reveals the claim to be entirely unfounded.

Deep Scrutiny

Upon scrutiny, the second location in the screenshot, marked as 'Babar Masjid', turns out to be the Sita-Ram Birla Temple in Ayodhya. This is verified by comparing the Google Maps satellite image with the actual structure of the Birla Temple. Notably, the viral screenshot misspells 'Babri Masjid' as 'Babar Masjid', casting doubt on its credibility. Satellite images from Google Earth Pro clearly depict the construction of a temple-like structure at the precise coordinates of the original Babri Masjid demolition site (26°47'43.74"N 82°11'38.77"E). Comparing old and new satellite images further confirms that major construction activities began in 2011, aligning with the initiation of the Ram Temple construction.
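Readers who wish to check the cited coordinates themselves may find it easier to paste decimal degrees into a mapping tool. The following is a minimal sketch of that conversion, not part of the original fact-check: the function name `dms_to_decimal` is hypothetical, and the parser assumes the degrees-minutes-seconds layout used in the paragraph above (it accepts either a single or a double quote as the seconds mark).

```python
import re

# Hypothetical helper: converts a degrees-minutes-seconds string such as
# 26°47'43.74"N into signed decimal degrees (south and west are negative).
DMS_PATTERN = re.compile(r"(\d+)°(\d+)'([\d.]+)[\"']\s*([NSEW])")


def dms_to_decimal(dms: str) -> float:
    match = DMS_PATTERN.fullmatch(dms.strip())
    if match is None:
        raise ValueError(f"Unrecognised coordinate format: {dms!r}")
    degrees, minutes, seconds, hemisphere = match.groups()
    value = int(degrees) + int(minutes) / 60 + float(seconds) / 3600
    return -value if hemisphere in "SW" else value


# The coordinates quoted in the article for the demolition site.
latitude = dms_to_decimal("26°47'43.74\"N")
longitude = dms_to_decimal("82°11'38.77\"E")
print(f"{latitude:.5f}, {longitude:.5f}")
```

The printed pair can be entered into the search box of a satellite-imagery tool to view the site discussed in the fact-check.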

Moreover, existing photographs of the Babri Masjid, though challenging to match precisely, share essential structural elements with the current construction site, reinforcing the location as the original site of the mosque. Hence the viral claim that the Ram Temple is being constructed 3 kilometres away from the Babri Masjid site is indubitably false. Evidence from historical photographs, satellite images and Google imagery conclusively refutes this misinformation, attesting that the temple construction is indeed taking place at the same location as the original Babri Masjid.

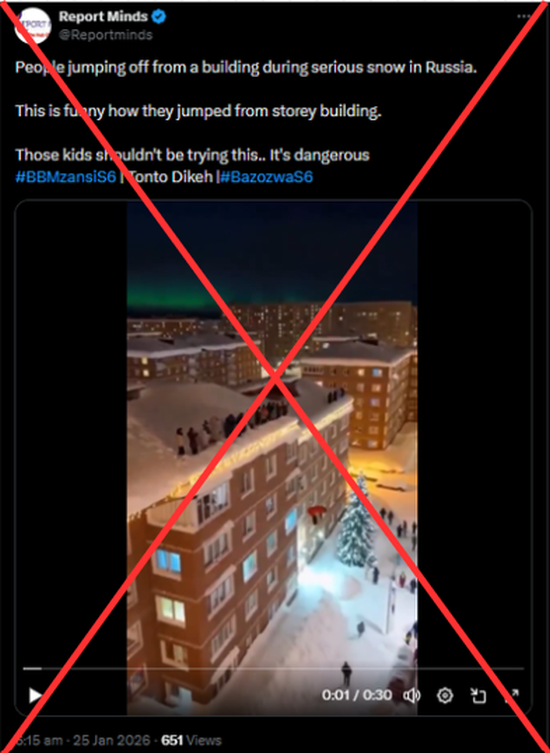

Viral Misinformation: A false claim based on a misleading Google Maps screenshot suggests the Ram Temple construction in Ayodhya has been covertly shifted 3 kilometres away from its original Babri Masjid site.

Fact Check Revealed: IndiaTVFactCheck debunked the misinformation, confirming that the viral screenshot actually showed the Shri Sita-Ram Birla Temple, not the Babri Masjid site. The Ram Temple is indeed being constructed at its original, intended location, exposing the falsehood of the claim.

Conclusion

The case of the Ram Temple is a poignant reminder of the power of misinformation and of the significance of fact-checking in preserving the integrity of truth. It is a clarion call to question, and to uphold the integrity of facts in a world increasingly mired in the murky waters of falsehood. The episode underscores the ongoing battle between truth and misinformation in the digital age, and the critical role that fact-checking plays in dispelling false narratives for a more informed society.
