#FactCheck: Old Jerusalem Clash Video Falsely Shared as Chaos at Tel Aviv Airport

Executive Summary

A video is being widely shared on social media showing a group of people clashing near a counter. The clip is claimed to be from Ben Gurion Airport in Tel Aviv, Israel. Users allege that panic caused by Iranian missile threats has led people to try to flee the country, resulting in chaos and fights over flight tickets. However, research by CyberPeace found the claim to be false. Our findings reveal that the video is unrelated to the recent tensions and actually dates from July 2025.

Claim:

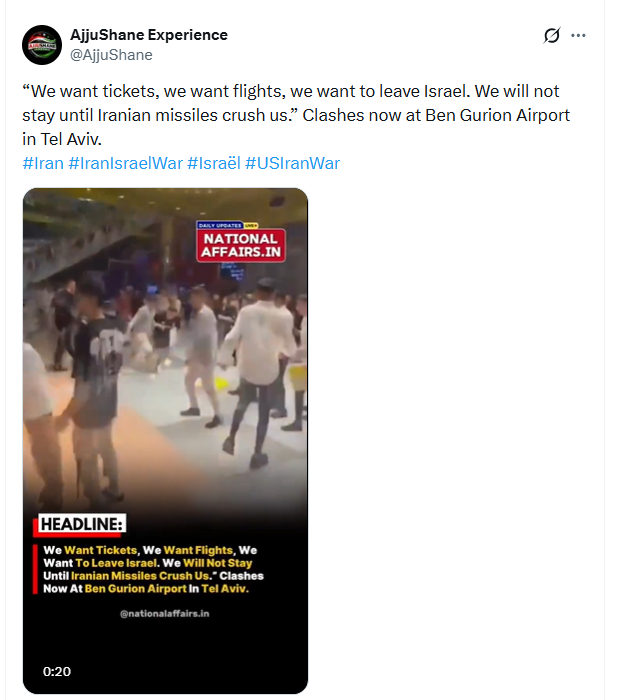

The viral video is being shared with the claim that chaos has erupted at Tel Aviv’s airport, with people trying to leave Israel due to Iranian attacks. An X user named “AjjuShane Experience (@AjjuShane)” shared the video with the caption: “We need tickets, we need flights, we want to leave Israel. We will not stay here until Iranian missiles crush us. Clashes are now happening at Tel Aviv’s Ben Gurion Airport.”

Post link:

- https://x.com/AjjuShane/status/2032584953112965238

Fact Check:

To verify the claim, we extracted keyframes from the video and ran a reverse image search on Google. This led us to the same video on a Facebook page named Ynet, where it was shared on July 20, 2025.

- https://www.facebook.com/share/p/1NgTmpaZCs/

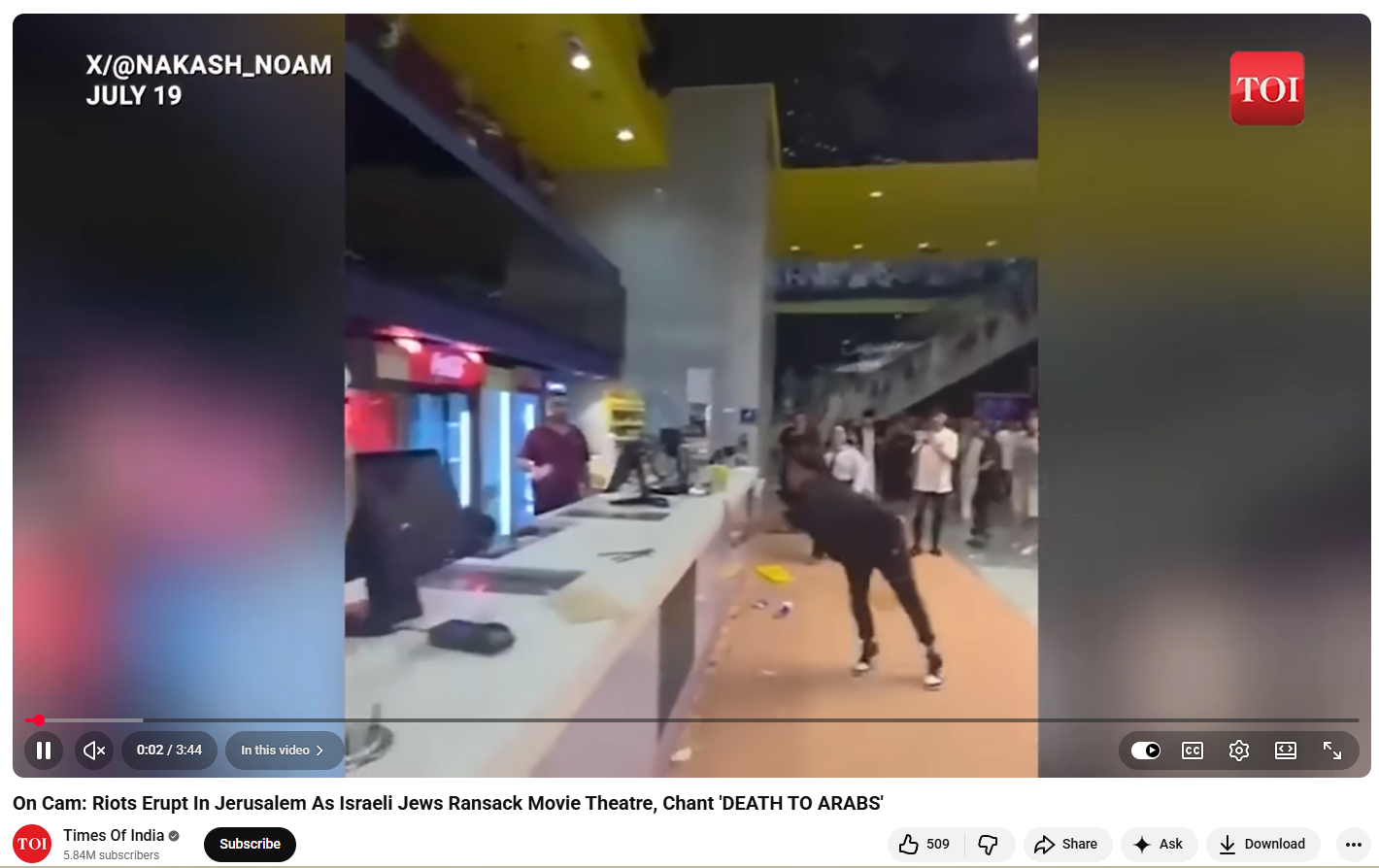

The video carried a caption in Hebrew. Upon translation, it stated that the incident took place at “Cinema City” in Jerusalem, where dozens of Jewish youths clashed with Arab cafeteria workers. The visuals showed youths vandalizing property and throwing objects at staff members, while staff retaliated. Some individuals sustained minor injuries, but no serious harm was reported. We also found the same video on the YouTube channel of The Times of India, published on July 20, 2025. The caption mentioned that anti-Arab riots broke out inside a Cinema City theatre in Jerusalem on July 19, showing youths vandalizing the premises and clashing with Arab employees.

Conclusion:

Our research shows that the viral video dates from July 2025 and captures a clash at a Cinema City theatre in Jerusalem, not chaos at Tel Aviv’s Ben Gurion Airport. It is unrelated to the current Iran-Israel tensions and is being misleadingly shared as a recent incident.

Related Blogs

The race for global leadership in AI is in full force. As China and the US emerge as the world’s ‘AI Superpowers’, the international community is grappling with questions of AI governance, ethics, regulation, and safety. Some are calling this an ‘AI Arms Race.’ Much of this competition centres on large language models for commercial use and on military applications. Countries such as Germany, Japan, France, Singapore, and India are now active participants in this race rather than mere spectators.

The Government of India’s Ministry of Electronics and Information Technology (MeitY) has launched the IndiaAI Mission, an umbrella program for the use and development of AI technology. This MeitY initiative lays the groundwork for supporting an array of AI goals for the country. The government has allocated INR 10,300 crore for this endeavour. This mission includes pivotal initiatives like the IndiaAI Compute Capacity, IndiaAI Innovation Centre (IAIC), IndiaAI Datasets Platform, IndiaAI Application Development Initiative, IndiaAI FutureSkills, IndiaAI Startup Financing, and Safe & Trusted AI.

India will have to navigate several challenges and capitalize on several opportunities to become a significant player in the global AI race. The various components of India’s ‘AI Stack’ will have to work in tandem to create a robust ecosystem that yields globally competitive results. The IndiaAI Mission focuses on building large language models in vernacular languages and developing compute infrastructure. Greater attention must also go to building high-quality datasets and research capacity.

Resource Allocation and Infrastructure Development

The government is focusing on building the basic foundation for AI competitiveness. This includes procuring AI chips and compute capacity, about 10,000 graphics processing units (GPUs), to support India’s start-ups, researchers, and academics. These GPUs have been distributed deliberately, with 70% being newer high-end models and the remaining 30% older-generation, lower-end models. The mix is intended to support everything from cutting-edge research to more routine applications. A major player in this initiative is Yotta Data Services, which holds the largest share, 9,216 GPUs, including 8,192 Nvidia H100s. Other significant contributors include Amazon AWS's managed service providers, Jio Platforms, and CtrlS Datacenters.

Policy Implications: Charting a Course for Tech Sovereignty and Self-reliance

With this government initiative, there is a concerted effort to develop indigenous AI models and reduce tech dependence on foreign players. There is a push to develop local large language models and domain-specific foundational models, creating AI solutions that are truly Indian in nature and application. Much of the world’s advanced chip manufacturing takes place in Taiwan, which faces a looming threat from China. India’s focus on chip procurement and GPUs therefore speaks to a larger agenda of self-reliance and sovereignty shaped by geopolitical calculus. This is an important priority; however, it must not come at the cost of developing technological ‘know-how’ and research.

Developing AI capabilities at home also has national security implications. When it comes to defence systems, control over AI infrastructure and data becomes extremely important. The IndiaAI Mission will focus on safe and trusted AI, including developing frameworks that fit the Indian context. It has to be ensured that AI applications align with India's security interests and can be confidently deployed in sensitive defence applications.

The central problem to solve here is the ‘data problem.’ India needs strategies to mitigate the data gaps that disadvantage its AI ecosystem. Some of these problems are unique to India, such as generating data in local languages. Others appear in every AI ecosystem’s development lifecycle, namely generating publicly available and licensed data. India must strengthen its ‘Digital Public Infrastructure’ and data commons across sectors and domains.

India has proposed setting up the India Data Management Office to serve as India’s data regulator as part of its draft National Data Governance Framework Policy. The MeitY IndiaAI expert working group report also talked about operationalizing the India Datasets Platform and suggested the establishment of data management units within each ministry.

Economic Impact: Growth and Innovation

The government’s focus on technology and industry has far-reaching economic implications. There is a push to develop the AI startup ecosystem in the country, and the IndiaAI Mission focuses heavily on inviting ideas and projects under its ambit. The investments will strengthen IndiaAI Startup Financing, making it easier for nascent AI businesses to obtain capital and move more quickly from product to market. The plan also includes funding provisions for industry-led AI initiatives that promote social impact and stimulate innovation and entrepreneurship. The government press release states, “The overarching aim of this financial outlay is to ensure a structured implementation of the IndiaAI Mission through a public-private partnership model aimed at nurturing India’s AI innovation ecosystem.”

The government also wants to establish India as a hub for sustainable AI innovation and attract top AI talent from across the globe. A crucial area that needs work here is talent and skill development. India has a distinct advantage in its top-tier STEM talent, yet it faces a severe AI talent gap that must be addressed as a priority. Even though India is making strides in nurturing AI talent, the out-migration of tech talent remains a reality. Once the global AI economy shifts from the hardware-manufacturing “goods side” toward service delivery, India will need to be ready to deploy its talent. Several structural and policy interfaces, such as the National Education Policy and industry-academia partnership frameworks, position India to capitalize on this opportunity.

India’s talent strategy must be robust and long-term, focusing heavily on multi-stakeholder engagement. The government has a pivotal role here by creating industry-academia interfaces and enabling tech hubs and innovation parks.

India's Position in the Global AI Race

India’s foreign policy and geopolitical stance have emphasised global cooperation, and this must not change when it comes to AI. Even though the competition has been dubbed an “AI Arms Race,” India should promote international collaboration on AI R&D to strengthen its own capabilities. India must prioritise open-source AI development, work with the US, Europe, Australia, Japan, and other friendly countries to prevent the unethical use of AI, and contribute to building a global consensus on the boundaries of AI development.

The IndiaAI Mission will have far-reaching implications for India’s diplomatic and economic relations. India’s unique proposition is its commitment to inclusivity, ethics, regulation, and safety from the outset, and it should keep building a strong voice for the Global South in AI. The IndiaAI Mission marks a pivotal moment in India's technological journey. Its success could not only elevate India's status as a tech leader but also serve as a model for other nations seeking to harness AI for national development and global competitiveness.

In conclusion, the IndiaAI Mission seeks to strengthen India's position as a global leader in AI, promote technological independence, ensure the ethical and responsible application of AI, and democratise the benefits of AI at all levels of society.

References

- Ashwini Vaishnaw to launch IndiaAI portal, 10 firms to provide 14,000 GPUs. (2025, February 17). Business Standard. Retrieved February 25, 2025, from https://www.business-standard.com/industry/news/indiaai-compute-portal-ashwini-vaishnaw-gpu-artificial-intelligence-jio-125021700245_1.html

- Global IndiaAI Summit 2024 being organized with a commitment to advance responsible development, deployment and adoption of AI in the country. (n.d.). Press Information Bureau. https://pib.gov.in/PressReleaseIframePage.aspx?PRID=2029841

- India to Launch AI Compute Portal, 10 Firms to Supply 14,000 GPUs. (2025, February 17). APAC News Network. https://apacnewsnetwork.com/2025/02/india-to-launch-ai-compute-portal-10-firms-to-supply-14000-gpus/

- INDIAai | Pillars. (n.d.). IndiaAI. https://indiaai.gov.in/

- IndiaAI Innovation Challenge 2024 | Software Technology Park of India | Ministry of Electronics & Information Technology Government of India. (n.d.). http://stpi.in/en/events/indiaai-innovation-challenge-2024

- IndiaAI Mission To Deploy 14,000 GPUs For Compute Capacity, Starts Subsidy Plan. (2025, February 17). BusinessWorld. Retrieved February 25, 2025, from https://www.businessworld.in/article/indiaai-mission-to-deploy-14000-gpus-for-compute-capacity-starts-subsidy-plan-548253

- India’s interesting AI initiatives in 2024: AI landscape in India. (n.d.). IndiaAI. https://indiaai.gov.in/article/india-s-interesting-ai-initiatives-in-2024-ai-landscape-in-india

- Mehra, P. (2025, February 17). Yotta joins India AI Mission to provide advanced GPU, AI cloud services. Techcircle. https://www.techcircle.in/2025/02/17/yotta-joins-india-ai-mission-to-provide-advanced-gpu-ai-cloud-services/

- IndiaAI 2023: Expert Group Report – First Edition. (n.d.). IndiaAI. https://indiaai.gov.in/news/indiaai-2023-expert-group-report-first-edition

- Satish, R., Mahindru, T., World Economic Forum, Microsoft, Butterfield, K. F., Sarkar, A., Roy, A., Kumar, R., Sethi, A., Ravindran, B., Marchant, G., Google, Havens, J., Srichandra (IEEE), Vatsa, M., Goenka, S., Anandan, P., Panicker, R., Srivatsa, R., . . . Kumar, R. (2021). Approach Document for India. In World Economic Forum Centre for the Fourth Industrial Revolution, Approach Document for India [Report]. https://www.niti.gov.in/sites/default/files/2021-02/Responsible-AI-22022021.pdf

- Stratton, J. (2023, August 10). Those who solve the data dilemma will win the A.I. revolution. Fortune. https://fortune.com/2023/08/10/workday-data-ai-revolution/

- Suri, A. (n.d.). The missing pieces in India’s AI puzzle: talent, data, and R&D. Carnegie Endowment for International Peace. https://carnegieendowment.org/research/2025/02/the-missing-pieces-in-indias-ai-puzzle-talent-data-and-randd?lang=en

- The AI arms race. (2024, February 13). Financial Times. https://www.ft.com/content/21eb5996-89a3-11e8-bf9e-8771d5404543

Executive Summary

A video is rapidly circulating on social media showing a man enthusiastically dancing to the Hindi song Sun Sahiba Sun. The clip is being shared with a sensational claim that it is a private video leaked from the hacked email account of FBI Director Kash Patel. In the video, a man can be seen dancing in a casual setting while people in the background cheer him on. Several users have linked the clip to an alleged cyberattack by Iran-linked hackers, attempting to connect it with ongoing international developments.

However, research by CyberPeace found that the video has been available online since at least 2020 and resurfaced in 2022, long before the current claims emerged. It has no connection to Kash Patel, any hacking incident, or any cybersecurity breach. In 2022, the same video went viral as a humorous post claiming that the man was celebrating because his wife had temporarily gone to her maternal home.

Claim

On March 29, 2026, an Instagram user named ‘greyinsightsbharat’ shared the video claiming it was leaked from Patel’s hacked Gmail account. The caption read: “FBI Director Kash Patel's Gmail Hacked by Iranian Hackers; His Alleged Dancing Video Leaked.”

The research involved extracting keyframes from the video and conducting reverse image searches, which revealed that the same clip had been shared multiple times in the past with different, unrelated claims.

Fact Check

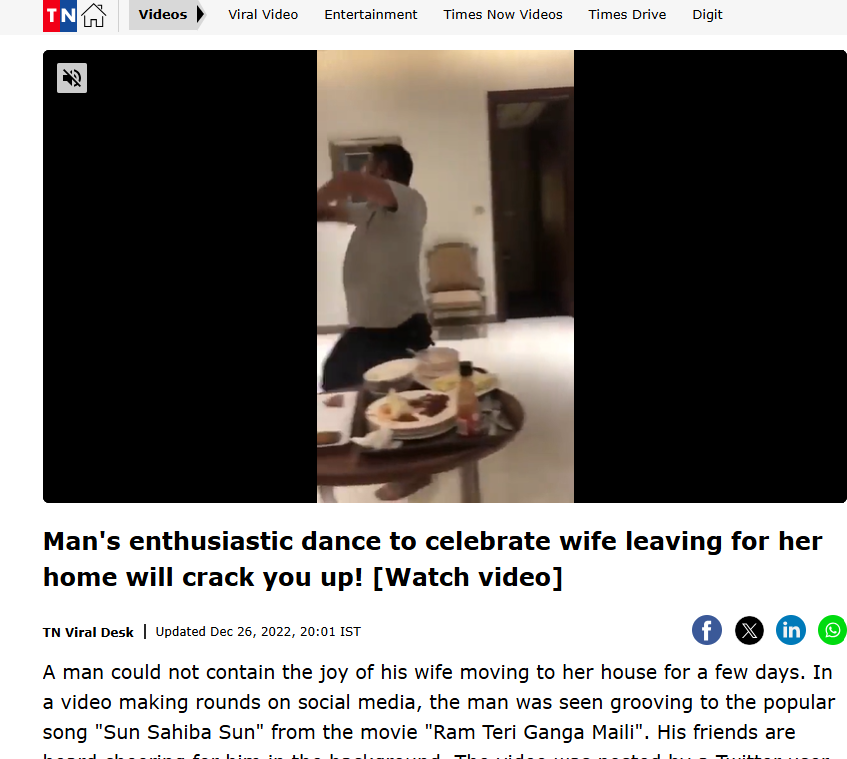

A reverse search also led to a December 2022 media report featuring the same visuals. According to that report, the video showed a man joyfully dancing to celebrate his wife’s temporary visit to her parental home.

Additionally, findings confirm that the footage has existed online since at least 2020 and has previously gone viral. The song featured in the clip is from the 1985 Bollywood film Ram Teri Ganga Maili, originally sung by legendary artist Lata Mangeshkar.

Conclusion:

The claim that the viral dance video is a leaked private clip of FBI Director Kash Patel is false and misleading. Verified findings show that the video has been available on the internet since at least 2020 and had already gone viral in 2022 in a completely different and humorous context. There is no evidence linking the clip to any recent cyberattack, email hack, or data breach involving Patel. The resurfacing of this old video with a fabricated narrative highlights how unrelated content is often repurposed to create sensational misinformation, especially during sensitive geopolitical situations. Users are advised to verify such claims through credible sources before sharing, as misleading posts like these can distort public understanding and spread confusion.

A video is being widely shared on social media showing a monkey, with users claiming that the animal is immersed in devotion to Lord Hanuman. The clip is being circulated with assertions that the monkey was seen participating in Hanuman Aarti. Cyber Peace Foundation’s research found that the viral claim is fake. Our investigation revealed that the video is not real and has been generated using artificial intelligence tools.

Claim

On January 6, 2026, Facebook users shared the viral video claiming, “A monkey was seen immersed in devotion during Hanuman Aarti.”

- Post link: https://www.facebook.com/reel/1261813845766976

- Archived link: https://archive.ph/anid5

Screenshots of the post can be seen below.

Fact Check:

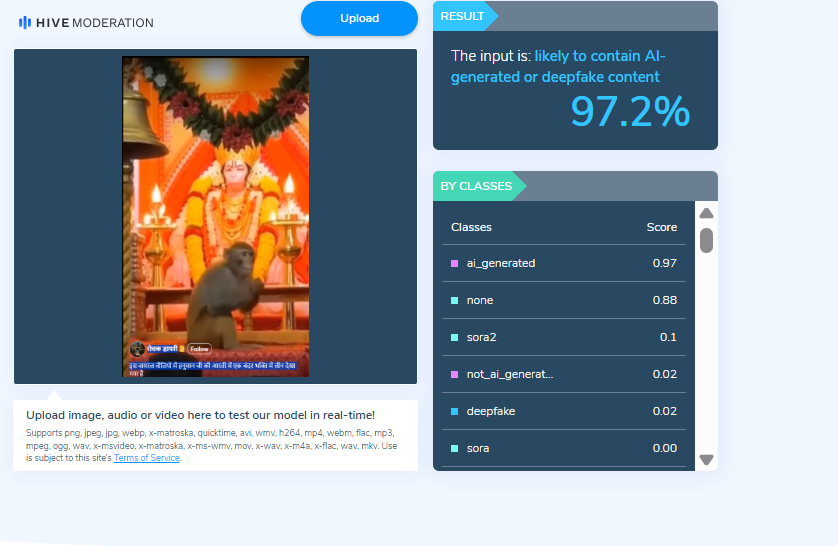

When we closely examined the viral video, we noticed several visual inconsistencies that raised suspicion it might be AI-generated. To verify this, we scanned the video with the AI detection tool Hive Moderation, which rated it 97 percent likely to be AI-generated.

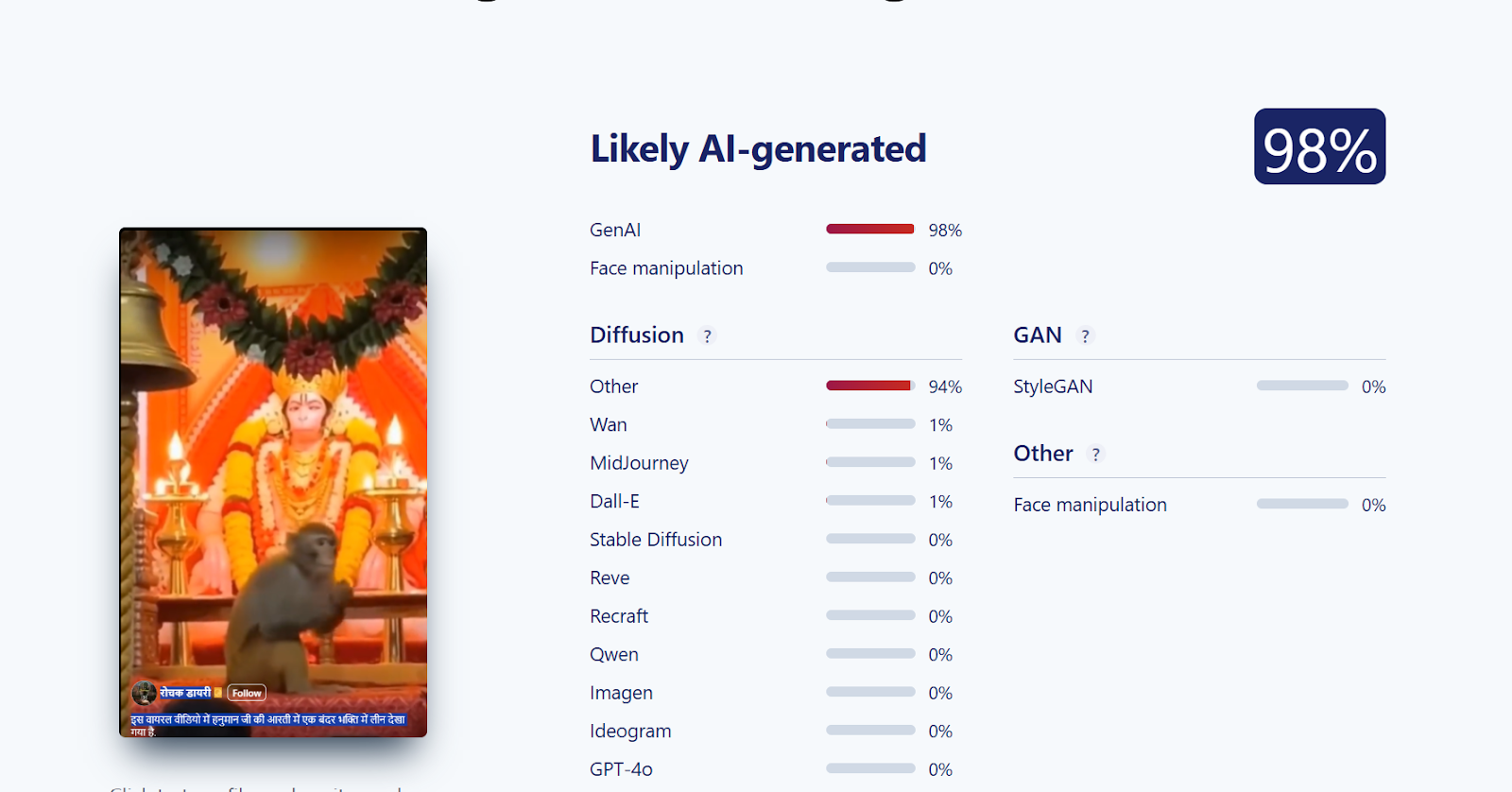

We then analysed the video with a second AI detection tool, Sightengine, which rated it 98 percent likely to be AI-generated.

Conclusion

Our investigation confirms that the viral video claiming to show a monkey immersed in devotion to Lord Hanuman is AI-generated and not real. The claim circulating on social media is false and misleading.