#FactCheck: Misleading Claim Amid West Asia Conflict: Old Yemen Video Shared as Iran’s Attack on Tel Aviv

Executive Summary

Amid the ongoing tensions in West Asia between the United States–Israel alliance and Iran since February 28, 2026, a video is rapidly going viral on social media. The clip shows buildings engulfed in flames and thick plumes of smoke following an attack. Several users are sharing it with the claim that it depicts Iran’s recent strike on Tel Aviv, Israel. However, research by CyberPeace found the claim to be misleading. The viral video is actually from August 2025, when Israel carried out airstrikes in Sanaa, the capital of Yemen. It has no connection to the current conflict.

Claim:

An Instagram user ‘iran_.news24’ posted the video on March 27, 2026, with the caption: “Iran has turned Israel’s largest city Tel Aviv into hell—fears that 200,000 people have died in the war so far.”

Fact Check

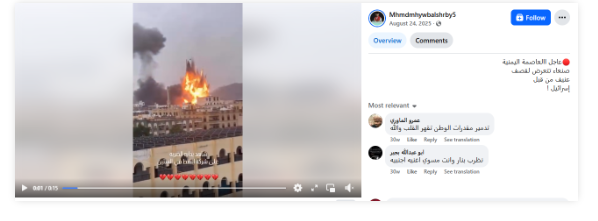

To verify the viral claim, keyframes of the video were extracted and searched using Google Lens. The same video was found posted on August 24, 2025, by a Facebook user ‘Mhmdmhywbalshrby5’. The accompanying text, when translated, stated that it showed Israeli bombardment of Sanaa, Yemen.
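The keyframe-extraction step can be illustrated with a minimal sketch. This is not CyberPeace's actual tooling: the function names, the flattened-grayscale frame representation, and the threshold of 30 are all assumptions made for illustration. A frame is kept whenever it differs enough from the last kept frame, which yields a small set of distinct stills suitable for a reverse image search.

```python
from typing import List, Sequence

# Hypothetical representation: one grayscale frame flattened to pixel values 0-255.
Frame = Sequence[int]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel intensity difference between two equally sized frames."""
    if not a or len(a) != len(b):
        raise ValueError("frames must be non-empty and the same size")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def select_keyframes(frames: List[Frame], threshold: float = 30.0) -> List[int]:
    """Return indices of frames that differ enough from the last kept frame."""
    if not frames:
        return []
    kept = [0]  # always keep the first frame as a starting reference
    for i in range(1, len(frames)):
        # Compare against the last kept frame, not the previous frame,
        # so slow gradual changes still produce a new keyframe eventually.
        if mean_abs_diff(frames[kept[-1]], frames[i]) > threshold:
            kept.append(i)
    return kept
```

In practice the frames would be decoded from the video file by a video library; the selected stills are then uploaded to a reverse image search tool such as Google Lens.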

Similarly, another Instagram user ‘ae5ce’ had also shared the same video on August 24, 2025, identifying it as footage from Sanaa.

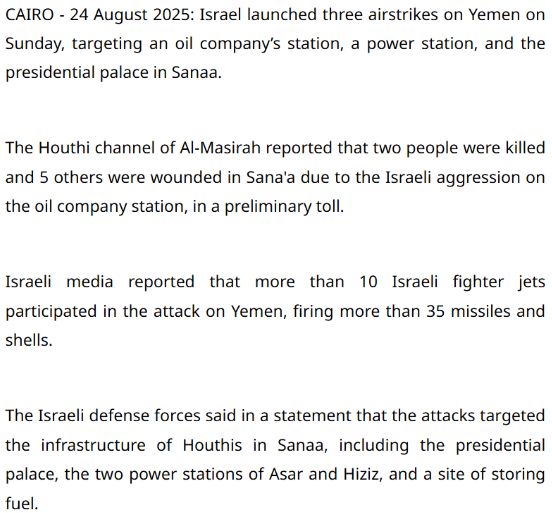

Media reports further support this finding. According to a report published by Egypt Today on August 24, 2025, Israel carried out multiple airstrikes in Sanaa targeting key locations, including an oil station, a power facility, and the presidential palace. Casualties were also reported. The strikes were said to be in response to attacks by Houthi forces.

Additionally, the New York Post shared another video of the same incident from a different angle on its X (formerly Twitter) handle on August 25, 2025.

Conclusion

The video being circulated with the claim of Iran attacking Tel Aviv is actually old footage from Israeli airstrikes in Yemen in August 2025. It is unrelated to the ongoing conflict.

Related Blogs

In the digital era, a nation’s strength is no longer measured only by the number of missiles or aircraft in its inventory. It also depends on how well the nation defends its digital borders. In the global security environment that modern militaries operate in, major infrastructure such as power grids and dams is increasingly targeted by cyberattacks. When communication channels are vulnerable to an information breach, cybersecurity becomes a crucial component of national defence.

Why is cybersecurity a crucial national security concern in the modern era?

Cybersecurity refers to the technologies and procedures that shield digital devices, networks, and systems from unauthorised access or attacks. In the context of national security, cyberattacks are silent, in contrast to conventional warfare. They are swift and can cause massive disruption without a single physical infiltration. A cybersecurity breach in a military network may allow hostile states, terrorist organisations, or criminal networks to steal classified information or disrupt military infrastructure.

To fully comprehend the significance of cybersecurity, consider the main areas it protects:

- Protecting critical infrastructures- Today's nations rely heavily on digital networks to run vital services like banking, transportation, electricity, water supply, and healthcare. A cyberattack on these systems could cause problems across the country and interfere with daily activities. For this reason, a nation's military forces work closely with other government agencies and private organisations to create a strong security ecosystem in this sector.

- Safeguarding military operations in the present age- The armed forces rely heavily on digital tools for communication, mission planning, surveillance, and coordination. If cyber intruders gain access to those systems, major operational problems can follow: mission details can be leaked, communication channels disrupted, and the confidentiality of military operations compromised. These risks make cybersecurity an important part of protecting physical bases and security architectures.

- Preventing cyber warfare- With the evolution of the geopolitical landscape, state and non-state actors are now resorting to cyberattacks to gather intelligence, disrupt security networks, and influence political outcomes. Strong cybersecurity helps nations detect, defend against, and respond to these threats effectively.

- Securing government databases- Government databases store sensitive information about citizens, military assets, diplomatic data, and major national infrastructure. If they are compromised, the nation's strategic position can be weakened and its national security put at grave risk. Protecting government data is therefore a priority.

How can countries improve their cybersecurity defences?

Countries all over the world are developing their cyber capabilities using a variety of tactics to protect against the increasing number of cyber threats. A few of these are:

- Creating cyber defence units- Most contemporary armed forces have created specialised cyber units devoted to threat identification. Their responsibilities centre on monitoring threats, stopping intrusions, and reacting quickly to cyberattacks.

- Public-Private Partnerships- To safeguard vital industries like energy grids, financial networks, and communication systems, governments collaborate with private businesses and technology suppliers. These collaborations also foster innovation that improves the overall defence against cyberattacks.

- Establishing international collaborations- Cyber threats do not respect borders. As a result, countries are increasingly sharing intelligence, best practices, and defensive strategies with their allies. Groups like NATO have conducted joint cyber defence exercises to prepare for a digital future.

These collaborations can help to develop a united front against cybercrime.

Core Pillars of the modern military cyber defence

Modern defence strategies are built upon several key pillars designed to prevent, detect, and respond to cyber threats:

- Cyberspace as an operational domain- Militaries now treat cyberspace, like land, air, sea, and space, as a domain where wars can both begin and end. They are developing dedicated cyber units to conduct digital operations, defend networks, and engage in a range of counter-cyber activities when required.

- Active and proactive defence- Instead of passively waiting for attacks to happen, active defence uses real-time monitoring tools to block threats as they arise. Proactive defence goes a step further by hunting for potential threats before they can reach the networks.

- Protection of vital infrastructures- The armed forces collaborate closely with civilian organisations and agencies to secure vital infrastructures that are important to the country. Critical infrastructure is protected from cyberattacks by layered defence, which includes encryption, stringent access control, and ongoing monitoring.

- Strengthening alliances- Countries can develop a strong and well-coordinated defence system by exchanging intelligence to carry out cooperative cyber operations.

- Fostering innovation for the development of a workforce- Cyber threats evolve at a rapid pace, which calls for the military to invest in advanced technologies like AI-driven systems and secure cloud technologies, and to ensure continuous cybersecurity training.

Conclusion

Modern militaries now defend their digital networks alongside their land and seas. Cybersecurity has become the new line of defence that protects government data and vital defence infrastructure from serious and unseen threats. Countries are building a secure, robust, and resilient digital future with the aid of solid alliances, cutting-edge technologies, a knowledgeable workforce, and a proactive defence strategy.

Introduction

Ransomware is a serious cyber threat because it causes financial losses, data loss, and reputational damage. In 2023, a new ransomware strain called Akira emerged. It has targeted enterprises across industries such as BFSI, construction, education, healthcare, manufacturing, real estate, and consulting, primarily based in the United States. Akira uses a double-extortion technique: it exfiltrates and encrypts sensitive data, then threatens to leak or sell the data on the dark web if the ransom is not paid. The Akira ransomware gang has extorted ransoms ranging from $200,000 to millions of dollars.

Uncovering the Akira Ransomware operations and their targets

The Akira ransomware gang gains unauthorised access to computer systems and then uses sophisticated encryption algorithms to encrypt the data. Once the encryption process is complete, users of the affected device or network can no longer access its files or use its data.

Files affected by Akira ransomware carry the extension “.akira”, and their icons appear as blank white pages. The gang has set up a data leak site to extort victims, and it leaves a ransom note named “akira_readme.txt”.
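The two indicators named above, the “.akira” extension and the “akira_readme.txt” note, can be searched for with a short script. The following is a minimal sketch rather than a detection tool: the function name and the result layout are assumptions made for illustration, and a clean result does not prove a system is unaffected.

```python
from pathlib import Path
from typing import Dict, List

# Indicators taken from the description above.
ENCRYPTED_SUFFIX = ".akira"
RANSOM_NOTE_NAME = "akira_readme.txt"


def find_akira_indicators(root: str) -> Dict[str, List[str]]:
    """Walk a directory tree and list files matching the two Akira indicators."""
    hits: Dict[str, List[str]] = {"encrypted_files": [], "ransom_notes": []}
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        name = path.name.lower()  # compare case-insensitively
        if name == RANSOM_NOTE_NAME:
            hits["ransom_notes"].append(str(path))
        elif name.endswith(ENCRYPTED_SUFFIX):
            hits["encrypted_files"].append(str(path))
    # Sort so the report is stable between runs.
    hits["encrypted_files"].sort()
    hits["ransom_notes"].sort()
    return hits
```

A call such as `find_akira_indicators("/srv/share")` (the path is only an example) returns the matching file paths, which can then be handed to the incident response team.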

The Akira ransomware gang stole corporate data from various organisations and used it as leverage when making high ransom demands. The gang threatens to leak victims' sensitive or corporate data in the public domain if the demanded ransom is not paid. It has leaked the data of four organisations, with the leaks ranging in size from 5.9 GB to 259 GB.

Akira Ransomware gang communicating with Victims

The Akira ransomware gang gives each victim a unique negotiation password to initiate communication, and it has deployed a chat system for negotiating with the affected organisations and demanding ransom. The ransom note, akira_readme.txt, explains how the victim’s files or data have been affected and provides links to the Akira data leak site and negotiation site.

How Akira Ransomware is different from Pegasus Spyware

Pegasus, developed in 2011, belongs to one of the most powerful families of spyware. Once it has infected a phone, it can spy on the device, including text messages and emails. It can turn the phone into a surveillance device, copying messages, harvesting photos, and recording calls. It can also record the user through the phone camera, capture conversations through the microphone, and track the phone's precise location. In contrast, the newer Akira ransomware encrypts files, prevents access to data, and then demands a ransom in exchange for decryption or for not leaking the data.

How to recover from malware attacks

If you are affected by this type of malware attack, you can use anti-malware tools such as SpyHunter 5 or Malwarebytes to scan your system. This security software can scan your system and remove suspicious malware files and entries. If malware prevents you from running the scan in normal mode, run it in Safe Mode. You can also try to find a relevant decryptor to help recover your files. Do not fall into a ransomware gang’s trap: there is no guarantee that, after you pay the ransom, they will help you recover your data or refrain from leaking it.

Best practices to be safe from such ransomware attacks

Conclusion

The Akira ransomware operation poses serious threats to organisations worldwide. Robust cybersecurity measures are needed to safeguard networks and sensitive data. Organisations must keep their software updated and back up their data to a secure network on a regular basis. Paying the ransom is not advisable; instead, report the incident to law enforcement agencies and consult cybersecurity professionals about recovery.

Over The Top (OTT)

OTT messaging platforms have taken the world by storm; people across the globe rely on them, and they have changed the dynamics of accessibility and information speed forever. WhatsApp, one of the leading OTT messaging platforms, has been owned by the tech giant Meta (then Facebook) since 2014. Many tasks, whether personal or professional, can be performed over WhatsApp, and as of today, WhatsApp has 2.44 billion users worldwide, with 487.5 million users in India alone[1]. With such a vast user base, it is pertinent to have proper safety and security measures and mechanisms on these platforms, along with active reporting options for users. The growth of OTT platforms has been exponential in the previous decade. As internet penetration increased during the Covid-19 pandemic, the following factors contributed to the growth of OTT platforms:

- Urbanisation and Westernisation

- Access to Digital Services

- Media Democratization

- Convenience

- Increased Internet Penetration

These factors have helped deliver exceptional content and services to consumers, and extensive internet connectivity has allowed people in the remotest parts of the country to use OTT messaging platforms. Platforms must nevertheless maintain user safety and security, and abide by policies and regulations, to ensure accountability and transparency.

New Safety Features

Keeping in mind the safety requirements and threats that come with emerging technologies, WhatsApp has been rolling out new technology and policy-based security measures. A number of new security features have been added to WhatsApp to make it more difficult to take control of other people’s accounts. The app’s privacy and security-focused features build on its assertion that online chats and discussions should be as private and secure as in-person interactions. Numerous technological advancements towards that goal have focussed on message security, such as adding end-to-end encryption to conversations. The new features are intended to increase user security on the app.

WhatsApp announced that three new security features are now available to all users on Android and iOS devices. The new security features are called Account Protect, Device Verification, and Automatic Security Codes.

- “Account Protect” comes into play when users migrate an account from an old device to a new one. Users may see an alert on their previous handset asking them to confirm that they really are moving away from it. An unexpected alert may be a sign that someone is trying to access the account without the user's knowledge.

- “Device Verification” operates in the background to make sure that malware cannot be used to access other people’s messages. It authenticates devices without requiring any action from the user. In particular, WhatsApp says it is concerned about unofficial WhatsApp applications that contain spyware built explicitly for this purpose. The company’s new checks help authenticate user accounts to prevent this.

- The final feature, “Automatic Security Codes”, builds on an existing feature that lets users verify that they are speaking with the person they believe they are. That check can still be done manually, but an automated version will now run by default, with a tool to determine whether the connection is secure.

Users can already view the code by visiting a contact’s profile, and WhatsApp is developing a concept called “Key Transparency” to make it easier for users to verify the validity of the code. If you use WhatsApp on Android, update to the most recent build, because these features have already been released. If you use iOS, the security features have not yet been released, although an update is anticipated soon.
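The idea behind a security code can be shown with a minimal sketch: both parties derive the same short code from the two public keys in a conversation, so a mismatch signals that a key has been swapped. This is not WhatsApp's actual algorithm; the function name, the use of SHA-256, and the 12-digit length are assumptions chosen purely for illustration.

```python
import hashlib


def security_code(key_a: bytes, key_b: bytes, digits: int = 12) -> str:
    """Derive a short numeric code that both key holders can compute and compare."""
    # Sort the keys so both sides get the same code regardless of who computes it.
    first, second = sorted((key_a, key_b))
    # Length-prefix the first key so different key pairs cannot share one byte string.
    material = len(first).to_bytes(4, "big") + first + second
    digest = hashlib.sha256(material).digest()
    # Reduce the hash to a fixed number of decimal digits for easy comparison.
    number = int.from_bytes(digest, "big") % (10 ** digits)
    return str(number).zfill(digits)
```

If an attacker substitutes a different key for one party, the two sides compute different codes, which is the mismatch a manual or automatic check is designed to catch.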

Conclusion

Digital safety is a crucial matter for netizens across the world. Platforms like WhatsApp, which enjoy a massive user base, should lead the way in OTT cyber security by building emerging technologies, user reporting, and transparency into their principles, and should encourage other platforms to replicate their security mechanisms to keep bad actors at bay. Account Protect, Device Verification, and Automatic Security Codes will go a long way in protecting users' interests while maintaining convenience, suggesting that the future with such platforms is bright and secure.

[1] https://verloop.io/blog/whatsapp-statistics-2023/#:~:text=1.,over%202.44%20billion%20users%20worldwide.