Background

Cyber slavery and online trafficking have become alarming challenges in Southeast Asia. Against this backdrop, India successfully rescued 197 of its citizens from Mae Sot in Thailand on November 10, 2025, using two Indian Air Force flights. The evacuees had fled Myanmar’s Myawaddy region in October after intense military operations forced them to escape. This was India’s second rescue effort within a week, following the November 6 mission that brought back 270 nationals from similar conditions. The operations were coordinated by the Indian Embassy in Bangkok and the Consulate in Chiang Mai, with crucial assistance from the Royal Thai Government.

The Operation and Bilateral Cooperation

The operation was carried out under the supervision of Prime Minister Anutin Charnvirakul of Thailand and Indian Ambassador Nagesh Singh, both of whom were present at the ceremony in Mae Sot. The two countries thereby reaffirmed their shared resolve to fight cyber slavery and online trafficking, and promised to facilitate communication between their authorities. Prime Minister Charnvirakul thanked India for the quick intervention and added that Thailand would provide the support needed to repatriate the remaining victims.

“Both parties reaffirmed their strong commitment to the fight against cross-border crimes, including cyber scams and human trafficking, in the region and to improving cooperation among the relevant agencies in both countries,” said the Embassy of India, Bangkok.

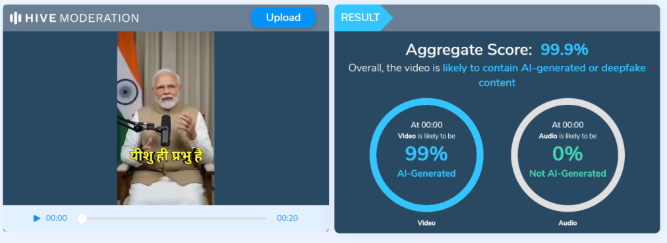

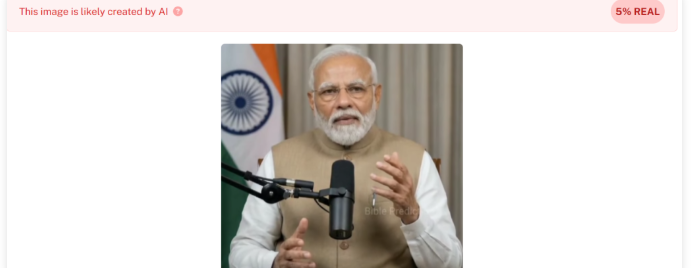

The Cyber Scam Network

The Myawaddy area in Myanmar has quickly become a global hotspot for cybercrime, run largely by organised criminal groups that exploit foreign nationals. After the Myanmar military launched operations in late October, during which the KK Park cyber hub and other centres were raided, over 1,500 people from 28 nations crossed into Thailand.

A UN report (2025) indicated that this fraud activity is part of a larger network spanning several countries, in which criminals relying on fairly low-tech methods target the most naïve, who are the very ones who end up being tortured. The trafficked persons often belong to the local population or come from neighbouring countries. They are recruited with the promise of high salaries as IT or customer service agents, only to be imprisoned in compounds where they are forced to carry out phishing, investment fraud, and cryptocurrency scams aimed at victims all over the globe. These centres operate in border territories with poor governance, porous borders, and little police presence, which makes human trafficking a major contributor to cybercrime.

India’s Response and Preventive Measures

The Indian Embassy in Thailand worked hand in hand with the Thai government to repatriate the Indian citizens who had entered Thailand illegally while escaping Myanmar.

The embassy also took preventive steps. In its advisory, it urged citizens to:

- Verify the authenticity of job offers and recruiting agents before accepting employment in other countries.

- Avoid taking up employment on tourist or visa-free entry permits, as such entries allow only short visits or tourism.

- Be wary of ads claiming high pay for online or remote work in Southeast Asia.

The embassy reiterated the Government of India’s commitment to ensuring easy access to assistance for citizens overseas and to addressing the growing intersection between cyber fraud and human trafficking.

CyberPeace Analysis and Advisory

The case of Myawaddy demonstrates that cybercrime and human trafficking have converged into a complex global threat. The scam centres increasingly depend on trafficked people who are coerced into committing digital fraud. This underlines the need for cybersecurity measures that address human rights and victim protection, not only technical defence.

- Cybercrime–Human Trafficking Convergence:

Cybercrime has escalated into a human trafficking operation. The unwilling participants in these fraud schemes fear for their lives and have no reliable way out. As a result, one cannot tell where cyber exploitation ends and forced labour begins.

- Cross-Border Enforcement Challenges:

Criminals exploit legal and jurisdictional loopholes across borders to carry out their unlawful acts. Dismantling such networks requires regional cooperation among India, Thailand, and other ASEAN countries.

- Socioeconomic Vulnerability:

Persistent unemployment and a lack of public awareness make people, especially the youth, highly vulnerable to overseas job scams. Preventing this requires sustained education in online literacy and in the importance of verifying job offers.

- Public–Private Coordination:

Scammers typically recruit online, finding and contacting their victims through social media and encrypted platforms. Cooperation among government institutions, tech platforms, and civil society is therefore imperative to shut down these digital trafficking channels.

CyberPeace Expert Advisory

To lessen the possibility of such incidents, CyberPeace suggests the following preventive and policy measures:

Individuals:

- Trust but verify: Before accepting any job offer, verify it through official embassy websites or MEA-approved recruiting agencies.

- Watch out for red flags: If a recruiter offers a very high salary for almost no work, asks you to travel on a tourist visa, or gives no written contract, pull out immediately.

- Protect your documents: Leave digital and physical copies of your passport and visa with a trusted person, and register your travel with the MADAD portal.

- Report if in doubt: If an agent looks suspicious, contact the nearest Indian Embassy or Consulate, or report it at cybercrime.gov.in or through the 1930 Helpline.

Policymakers and Agencies:

- Strengthen Bilateral Task Forces: Set up joint task forces of cyber and human trafficking enforcement units across South and Southeast Asian countries.

- Support Regional Awareness Campaigns: Issue targeted advisories in local languages so that the most vulnerable job seekers in Tier-2 and Tier-3 cities receive this awareness in their own languages.

- Regulate Overseas Employment Advertising: Require all digital job postings to meet transparency standards, and punish fraudulent recruitment with heavy fines.

- Invest in Digital Forensics and Intelligence Sharing: Create common databases for monitoring international cybercriminal groups.

Conclusion

The return of Indian citizens from Thailand represents a significant humanitarian and diplomatic milestone and highlights that cybercrime, though carried out through digital channels, remains deeply human in nature. International cooperation, well-informed citizens, and a rights-based cybersecurity approach are the minimum requirements for a global campaign against a new breed of cybercrime in which fraud and trafficking work hand in hand. CyberPeace reminds everyone that digital vigilance, verification, and collaboration across borders are the most effective ways to prevent online abuse and related crimes.

Reference