#FactCheck - Scripted Video of Pre-Wedding Roka at Metro Station Misleads Users

Executive Summary

A video is going viral on social media showing a woman performing a pre-wedding ritual called “Roka” for a couple at a metro station. Many users are sharing the clip believing it to be a real incident. CyberPeace found in its research that the viral claim is false. The video is actually scripted.

Claim:

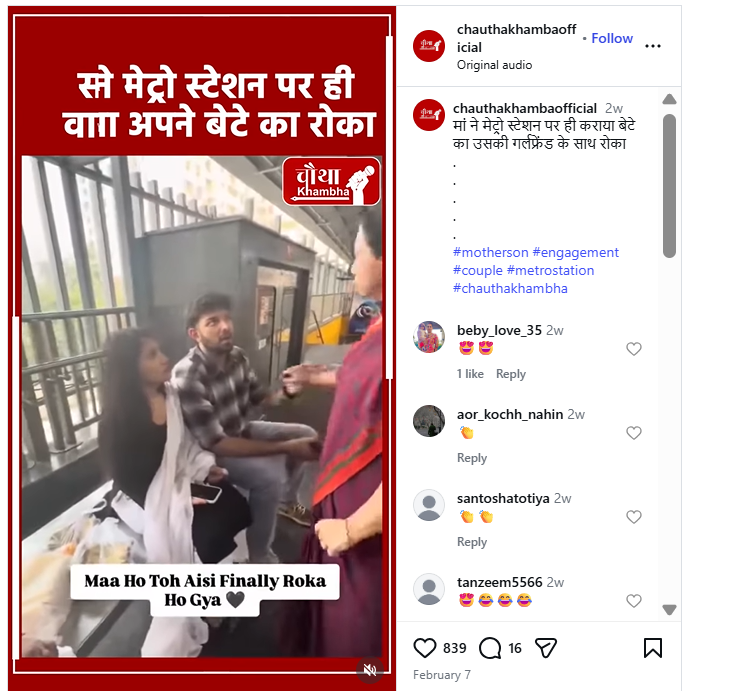

An Instagram user posted the video on February 7, 2026, with the caption, “A mother performed her son’s Roka with his girlfriend at a metro station.”

Fact Check:

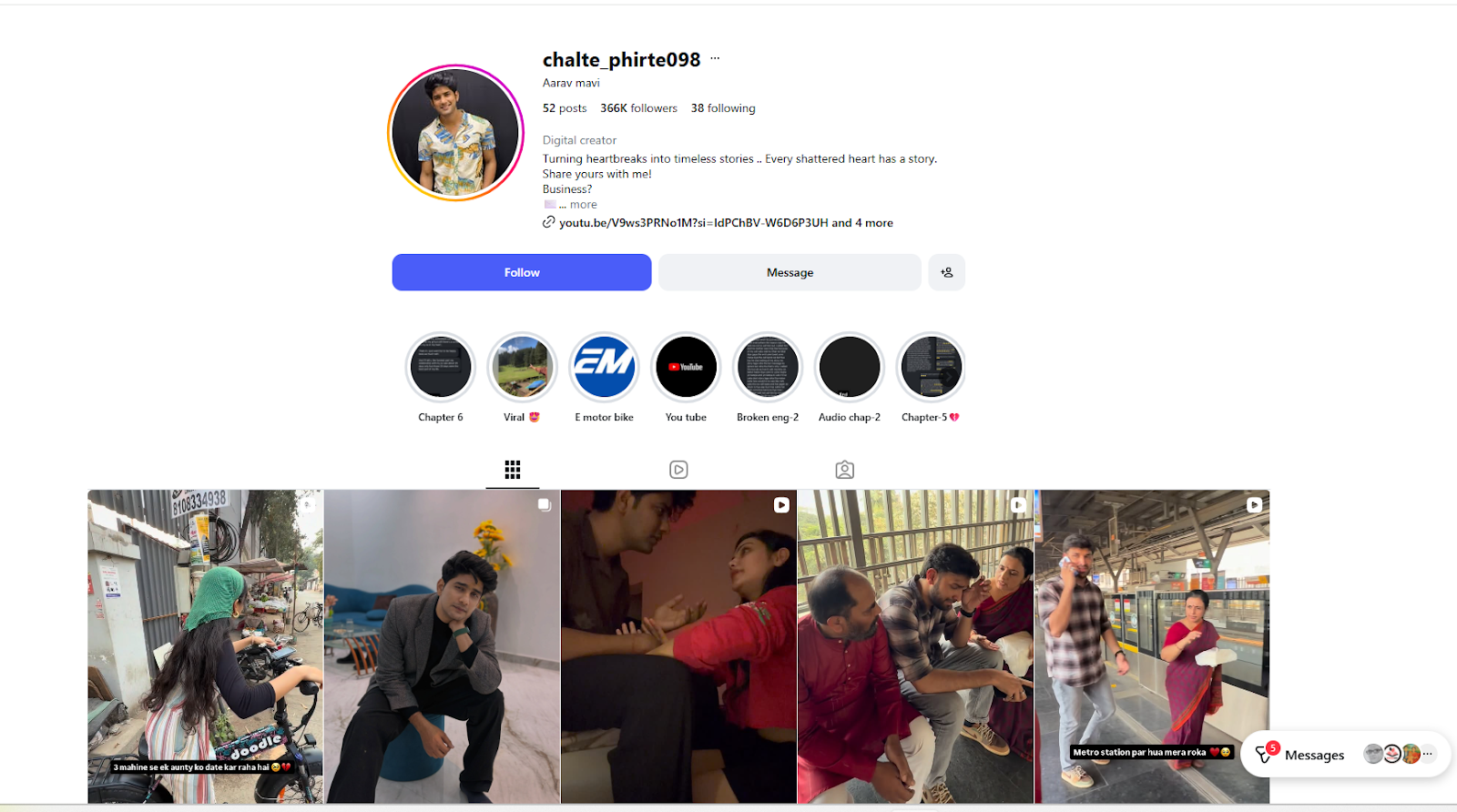

To verify the claim, we conducted a reverse image search using Google Lens on screenshots from the viral video. We found that the same video had first been uploaded on February 5, 2026, by an Instagram account named “chalte_phirte098.” The profile belongs to digital content creator Aarav Mavi, who regularly posts relationship- and breakup-related videos.

Although the viral clip does not carry any disclaimer stating that it is scripted, an older video posted by the creator on December 16, 2025, clarifies that his content is based on real-life stories shared by people but is filmed using professional actors. Several similar staged videos are also available on his Instagram profile.

Conclusion:

Our research clearly shows that the viral video claiming to show a pre-wedding Roka ceremony at a metro station is not real. It was created by a content creator for entertainment purposes. Therefore, the claim circulating on social media is misleading.

Related Blogs

Executive Summary:

We conducted a comprehensive fact check of a viral social media video using reverse image search. The video circulated with the claim that it shows illegal Bangladeshi immigrants in Assam's Goalpara district carrying homemade spears and attacking police or government officials. Our findings show that this claim is false. The video was filmed in Kishoreganj district, Bangladesh, on July 1, 2025, during a clash between two rival factions of the Bangladesh Nationalist Party (BNP). The footage has been deliberately misrepresented as an incident in Assam in order to spread false information.

Claim:

The viral video shows illegal Bangladeshi immigrants armed with spears marching in Goalpara, Assam, with the intention of attacking police or officials.

Fact Check:

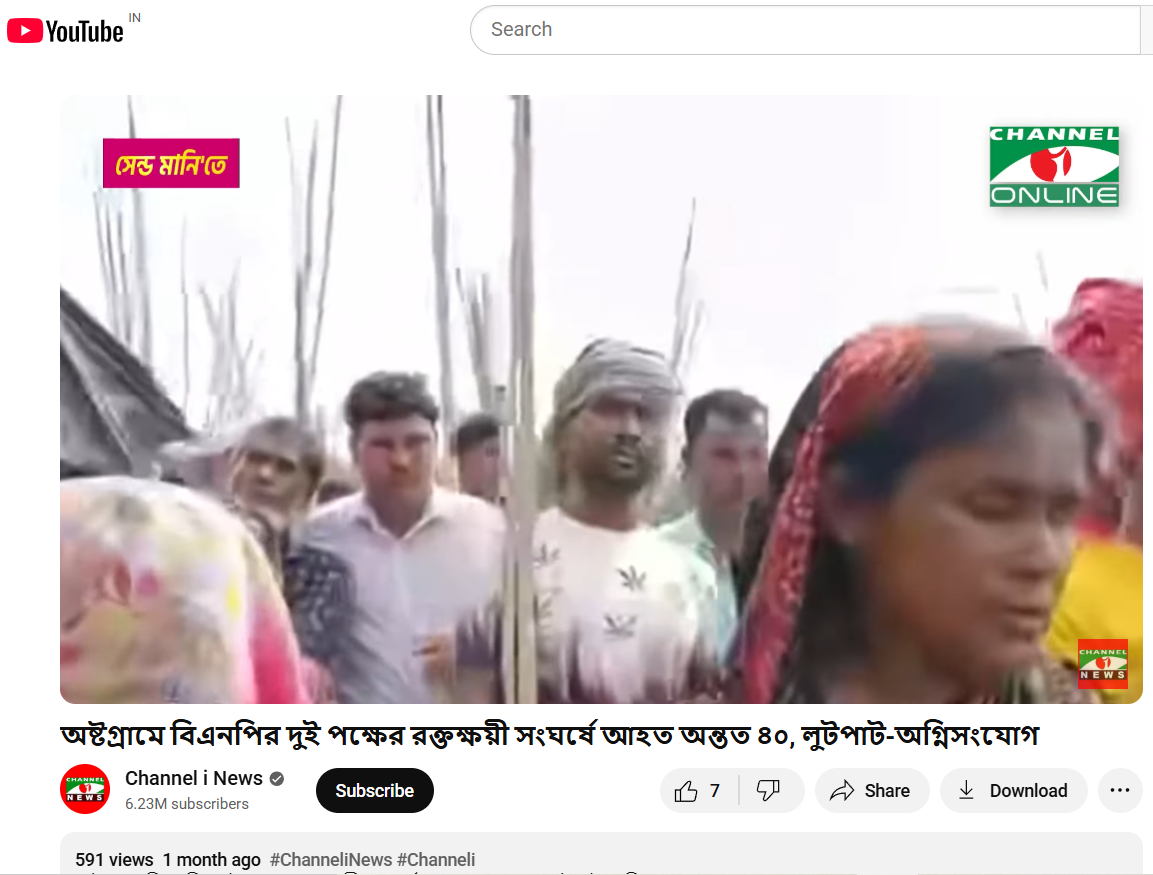

To verify the claim, we performed a reverse image search on key frames from the video. This led us to a number of news articles and social media posts from Bangladeshi sources. These reports confirmed that the events took place in Ashtagram, Kishoreganj district, Bangladesh, during a violent confrontation between factions of the Bangladesh Nationalist Party (BNP) on July 1, 2025, which left about 40 people injured.

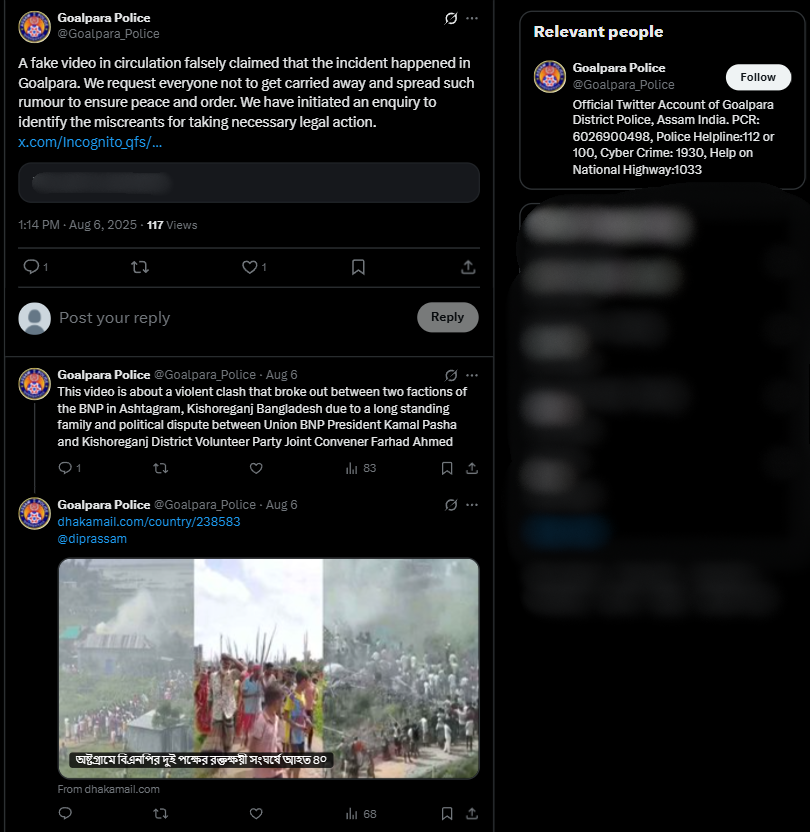

We also found coverage in local media. In particular, Channel i News reported on the incident in detail and showed images matching the viral video. The individuals seen in the video were wielding makeshift spears during a political clash, not taking part in a cross-border attack. The Assam Police also issued an official response on X (formerly Twitter) denying the claim and stating that no such incident had occurred in Goalpara or in any other district of Assam.

Conclusion:

Based on our research, we conclude that the viral video does not show illegal Bangladeshi immigrants in Assam. It depicts a political clash in Kishoreganj, Bangladesh, on July 1, 2025. The claim attached to the video is completely untrue and is intended to mislead the public about where the incident took place and what it shows.

Claim: Video shows illegal migrants with spears moving in groups to assault police!

Claimed On: Social Media

Fact Check: False and Misleading

In a recent ruling, a U.S. federal judge sided with Meta in a copyright lawsuit brought by a group of prominent authors who alleged that their works were illegally used to train Meta’s LLaMA language model. While this appears to be a significant legal victory for the tech giant, its significance may be limited. Rather, the case offers a useful lesson for creators in the USA seeking to refine their legal strategies, and a prompt for policymakers worldwide to act quickly to shape the rules of engagement between AI and intellectual property.

The Case: Meta vs. Authors

In Kadrey v. Meta, the plaintiffs alleged that Meta trained its LLaMA models on pirated copies of their books, violating copyright law. However, U.S. District Judge Vince Chhabria ruled that the authors failed to prove two critical things: that their copyrighted works had been used in a way that harmed their market, and that such use was not “transformative.” In fact, the judge ruled that converting text into numerical representations to train an AI was sufficiently transformative under the U.S. fair use doctrine. He also noted that the authors’ failure to demonstrate economic harm undermined their claims. Importantly, he clarified that the ruling does not mean all use of copyrighted material to train AI is lawful, only that these plaintiffs had not made a strong enough case.

Meta even admitted that some data was sourced from pirate sites like LibGen, but the judge still found that fair use could apply because the usage was transformative and non-exploitative.

A Tenuous Win

Chhabria’s decision emphasised that this is not a blanket endorsement of using copyrighted content in AI training. The judgment rested largely on weaknesses in the plaintiffs’ arguments and evidence, and not necessarily on the inherent legality of Meta’s practices.

Policy experts warn that while U.S. courts have so far treated AI training as fair use in narrow cases, these rulings may not set a strong judicial precedent. The outcome could differ in cases with clearer evidence of commercial harm or more direct use of copyrighted content.

Moreover, the ruling does not address whether authors or publishers should have the right to opt out of AI model training, a concern that is gaining momentum globally.

Implications for India

The case highlights a glaring gap in India’s copyright regime: it is outdated. Since most major AI companies are based in the U.S., American courts have already had the opportunity to examine copyright in the context of AI. Indian courts have only just begun. Recently, news agency ANI filed a case in the Delhi High Court alleging that OpenAI infringed its copyright by training on its material. However, the case is still at an interim stage. Its final outcome will have a significant bearing on whether language models can lawfully be trained on copyrighted material in India.

Considering that India aims to develop “state-of-the-art foundational AI models trained on Indian datasets” under the IndiaAI Mission, the lack of clear legal guidance on what constitutes fair dealing when using copyrighted material for AI training is a significant gap.

Thus, key points of consideration for policymakers include:

- Need for Fair Dealing Clarity: India’s fair-dealing provisions under the Copyright Act, 1957, are narrower than U.S. fair use, since they cover only the purposes listed in Section 52 of the Act. These provisions may need to be reviewed to balance copyright protection against the need for diverse datasets to develop foundational models rooted in Indian contexts. Assembling such datasets also raises a parallel concern regarding data privacy.

- Push for Opt-Out or Licensing Mechanisms: India should consider whether to introduce a framework that requires companies to license training data or provide an opt-out system for creators, especially given the volume of Indian content being scraped by global AI systems.

- Digital Public Infrastructure for AI: India’s policymakers could take this opportunity to invest in public datasets, especially in regional languages, that are both high quality and legally safe for AI training.

- Protecting Local Creators: India needs to ensure that its authors, filmmakers, educators and journalists are protected from having their work repurposed without compensation, since power asymmetries between Big Tech and local creators can lead to exploitation of the latter.

Conclusion

The ruling in Meta’s favour is only a narrow win for the developer. The real questions about consent, compensation and creative control remain unanswered. Meanwhile, the lesson for India is urgent: as it accelerates its AI ecosystem, it needs AI policies that balance innovation with creator rights and provide legal certainty and ethical safeguards. Further, as global tech firms race ahead, India must not remain a passive data source; it must set the terms of its digital future. Doing so will bring the country a step closer to its goal of building sovereign AI capacity and becoming a hub for digital innovation.

References

- https://www.theguardian.com/technology/2025/jun/26/meta-wins-ai-copyright-lawsuit-as-us-judge-rules-against-authors

- https://www.wired.com/story/meta-scores-victory-ai-copyright-case/

- https://www.cnbc.com/2025/06/25/meta-llama-ai-copyright-ruling.html

- https://www.mondaq.com/india/copyright/1348352/what-is-fair-use-of-copyright-doctrine

- https://www.pib.gov.in/PressReleasePage.aspx?PRID=2113095#:~:text=One%20of%20the%20key%20pillars,models%20trained%20on%20Indian%20datasets.

- https://www.ndtvprofit.com/law-and-policy/ani-vs-openai-delhi-high-court-seeks-responses-on-copyright-infringement-charges-against-chatgpt

Introduction

In an online lottery scam, a scammer contacts you by email, phone or SMS to say that you have won a large sum of money in a lottery. The message instructs you to contact an agent at a specific phone number or email address that actually belongs to the fraudster. Once contacted, the agent tells the recipient that they must pay processing charges upfront to claim the prize. Genuine prizes, however, do not require any payment to receive. Such fraudulent ‘offers’ also often carry phishing attacks that trick users into clicking on malicious links.

Modus Operandi

A typical lottery fraud starts with a message stating that the recipient has won a large lottery prize. These messages are frequently crafted to imitate official correspondence from reputable institutions, sweepstakes or foreign governments. The scammers ask the recipient for personal information such as their name, address and banking details, or ask them to pay taxes, processing fees or legal charges. After the victim sends the money or discloses their personal details, the scammers may vanish or keep demanding further payments under different pretexts.

Tactics and Psychological Manipulation

These schemes rely mostly on psychological manipulation. Fraudsters create a false sense of urgency, persuading victims that they must act quickly to claim the prize. They also prey on people's hopes for a better life by convincing them that this unexpected windfall could change their fortunes. Many people fall for the scam because their desire to get rich makes them overlook the warning signs. Fraudsters also frequently use persuasive language and fake documentation that appears authentic, so users need to be extra cautious and learn to recognise the early signs of such online fraud.

Festive Season and Uptick in Deceptive Online Scams

The start of the festive season brings a surge in deceptive online scams targeting unsuspecting internet users. Examples include offers of free Navratri garba passes, quiz participation opportunities, coupons for freebies, fake offers of cheap jewellery, counterfeit product sales, festival lotteries, fake lucky draws and charity appeals. Most of these scams are designed to lure victims for financial gain.

In 2023, CyberPeace released a research report on Navratri festival scams, highlighting the ‘Tanishq iPhone 15 Gift’ scam. In this scam, fraudsters posed as Tanishq, a well-known jewellery brand, and offered fake iPhone 15 giveaways as Navratri gifts to lure victims into clicking on malicious links. CyberPeace issued a detailed advisory within the report, urging the public to exercise vigilance, scrutinise the legitimacy of such offers and take precautionary measures to avoid falling prey to such deceptive cyber schemes.

Preventive Measures for Lottery Scams

To avoid lottery scams, users should take several precautions:
- Do not respond to messages or calls about supposed lottery wins.
- Verify the source of any lottery.
- Keep sensitive personal details confidential.
- Treat unexpected windfalls with scepticism.
- Refuse upfront payment requests.
- Learn to recognise the manipulative tactics scammers use.

Verifying the source and asking probing questions are especially important. Users are also advised not to click on unsolicited links about lottery prizes received in emails or messages, as such links can be phishing attempts. These practices help protect people from scammers who pressure victims into acting quickly and falling prey to such scams.

Must-Know Tips to Prevent Lottery Scams

● It is advised to steer clear of any communication offering lotteries or giveaways, as such offers are often too good to be true.

● It is advised to refrain from transferring money to unknown individuals or entities without verifying their identity and credibility.

● If you have already given the fraudsters your bank account details, it is crucial to alert your bank immediately.

● Report any such incidents on the National Cyber Crime Reporting Portal at cybercrime.gov.in or Cyber Crime Helpline Number 1930.