#FactCheck - AI-Generated Clip Misleads Users as Monkey ‘Saves’ Child Under Falling Tree Branch

Executive Summary

A video showing a monkey allegedly saving the life of a sleeping child is rapidly going viral on social media. In the clip, a monkey can be seen picking up a child sleeping on a mat under a tree and moving the child away moments before a heavy tree branch falls at the same spot. Social media users are sharing the video as a “miracle of nature” and praising the emotional sensitivity and instincts of animals. However, research conducted by CyberPeace Research Wing found that the viral video is not real and was created using artificial intelligence tools.

Claim

The caption accompanying the viral post states: “In a shocking incident, a monkey was seen stepping in to save an innocent child sleeping under a tree from imminent danger. People nearby were stunned by the scene. It is being claimed that the monkey sensed the danger around the child and tried to protect him. The unusual incident has now gone viral on social media, with many saying that emotions and compassion are not limited to humans, animals can also understand feelings.”

The video has been widely shared across social media platforms:

- https://www.instagram.com/reels/DYMvhRPTcCA/

- https://archive.ph/https://www.instagram.com/reels/DYMvhRPTcCA/

Fact Check

To verify the authenticity of the video, we extracted keyframes from the clip and conducted a reverse image search. During the research, we found the same video uploaded on May 8, 2026, by the Instagram user “mojilo_vandro.” The caption of the original upload did not provide any factual context and presented the video in a dramatic, miracle-like manner.

We further examined the Instagram account and found that it regularly posts AI-generated videos featuring monkeys performing heroic or emotional acts. Importantly, the account owner also identifies themselves as an “AI video creator” in the bio section.

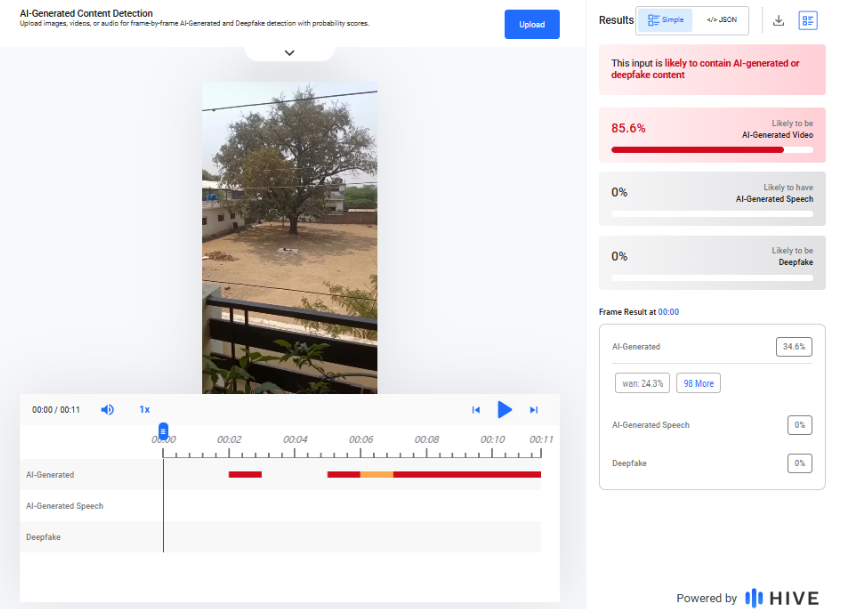

To further analyze the clip, we tested it using the AI detection tool Hive Moderation. The tool’s analysis classified the viral video as 85.6% likely to be AI-generated. We also checked the clip using another AI detection platform, Deepfake-o-meter. Its AVSRDD (2025) detection model flagged the video as potentially AI-generated with a 100% confidence score.

Conclusion

The evidence gathered during our research clearly shows that the viral video claiming to show a monkey saving a sleeping child from a falling tree branch is not authentic. The clip was created using AI tools and does not depict a real incident.
