#FactCheck - Viral video of Defence Minister Rajnath Singh supporting Israeli attacks on Iran is a deepfake

Executive Summary:

A video of India’s Defence Minister Rajnath Singh is going viral on social media. The post claims that Rajnath Singh is openly supporting Israeli-American attacks against Iran. In the video, he is allegedly heard saying that Prime Minister Narendra Modi visited Israel before the war began and warned Tehran that disturbing the peace would have serious consequences.

Research by CyberPeace found that the viral video is a deepfake created using Artificial Intelligence (AI). Rajnath Singh has not made any such statement about Iran or about the conflict between Israel, the US and Iran.

Claim:

A Facebook user, “Sheikh Sadeque Ali”, shared the video on March 2, 2026. The caption of the post reads, “Indian Defence Minister Rajnath Singh is supporting Israel’s attack on Iran. This clearly shows that India supports the killing of Muslims.”

In the viral video, Rajnath Singh appears to say in English: “Prime Minister Modi’s visit to Israel before the attack on Iran reflects India’s solidarity with its strategic partner… He warned Tehran that hostile actions would have serious consequences for regional peace.”

Fact Check:

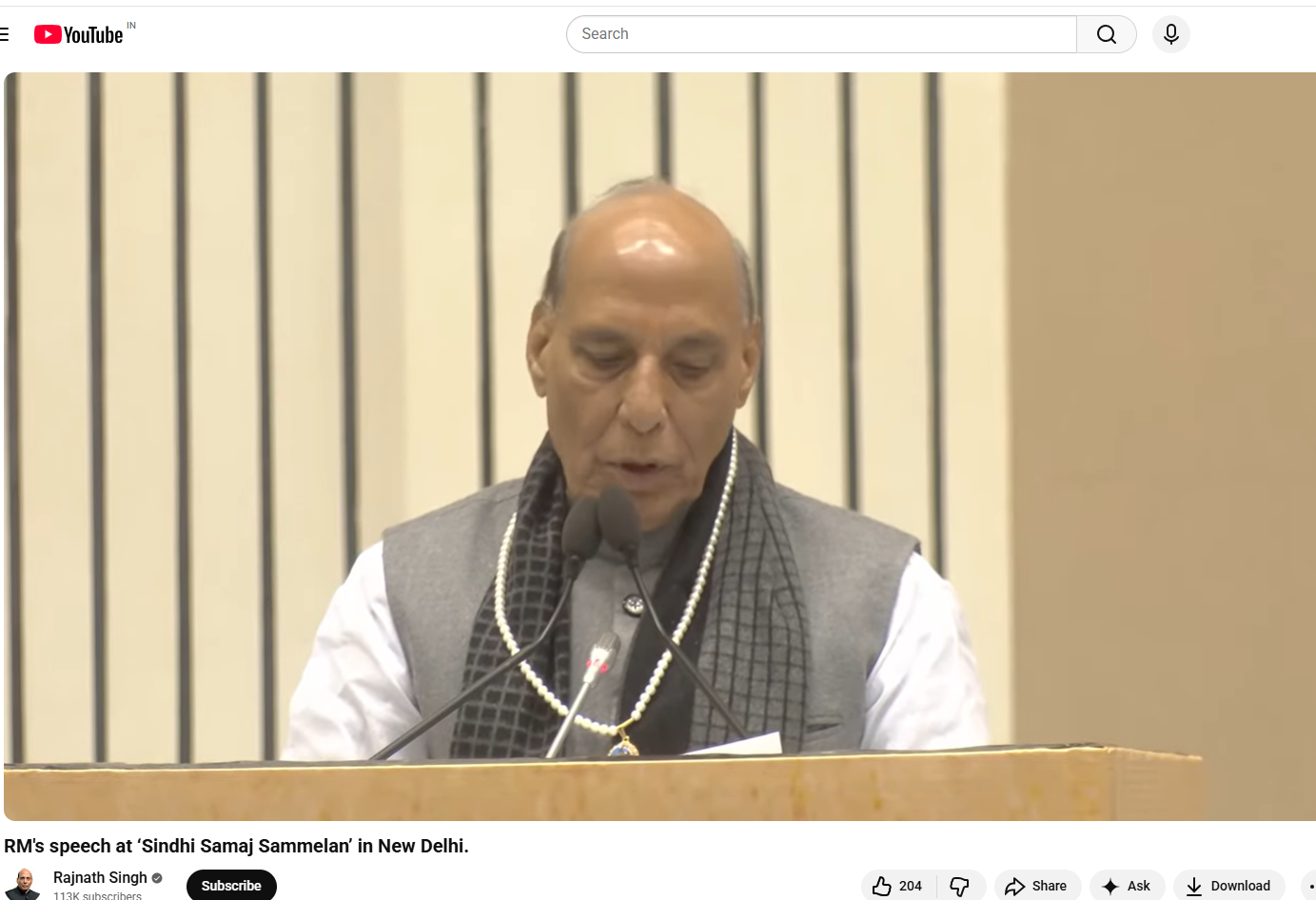

To verify the claim, we extracted keyframes from the viral video and ran a reverse image search on them. This led us to the original video on Rajnath Singh’s official YouTube channel, uploaded on November 23, 2025.

In the original video, Rajnath Singh was addressing a Sindhi community conference in Delhi. His speech focused on Sindhi culture and the history of Partition. He did not mention Israel, Iran or any Middle East conflict at any point during the programme.

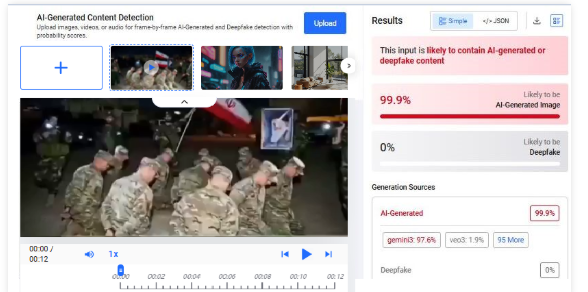

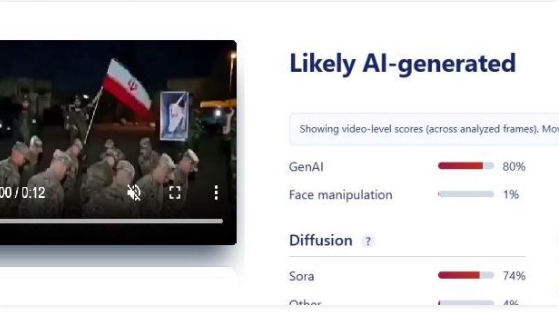

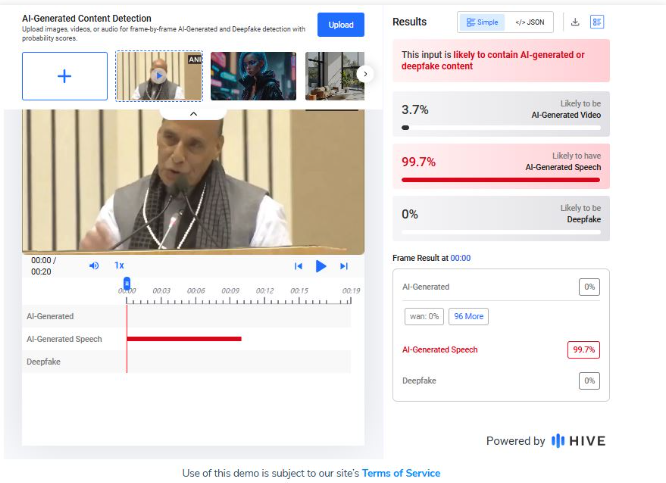

A close examination of the viral video reveals mismatches between the lip movements and the audio (lip-sync discrepancies), a common sign of AI-generated content. To verify this, we analysed the clip using several AI-detection tools. One of them, Hive Moderation, rated the video as 99% likely to be AI-generated.

Conclusion:

Our research found that the viral video of Rajnath Singh is a deepfake. He has not made any statement supporting Israel or opposing Iran. The original footage, recorded at a Sindhi community event in Delhi, was digitally altered using AI to spread a misleading claim.