#FactCheck - Viral Claim About Nitish Kumar’s Resignation Over UGC Protests Is Misleading

Executive Summary

A news video is being widely circulated on social media with the claim that Bihar Chief Minister Nitish Kumar has resigned from his post in protest against the ongoing UGC-related controversy. Several users are sharing the clip while alleging that Kumar stepped down after opposing the issue. However, CyberPeace research has found the claim to be false. The research revealed that the video being shared is from 2022 and has no connection whatsoever with the UGC or any recent protests related to it. An old video has been misleadingly linked to a current issue to spread misinformation on social media.

Claim:

An Instagram user shared a video on January 26 claiming that Bihar Chief Minister Nitish Kumar had resigned. The post further alleged that the news was first aired on Republic channel and that Kumar had submitted his resignation to then-Governor Phagu Chauhan. The link to the post, its archived version, and screenshots can be seen below. (Links as provided)

Fact Check:

To verify the claim, CyberPeace first conducted a keyword-based search on Google. No credible or established media organisation reported any such resignation, clearly indicating that the viral claim lacked authenticity.

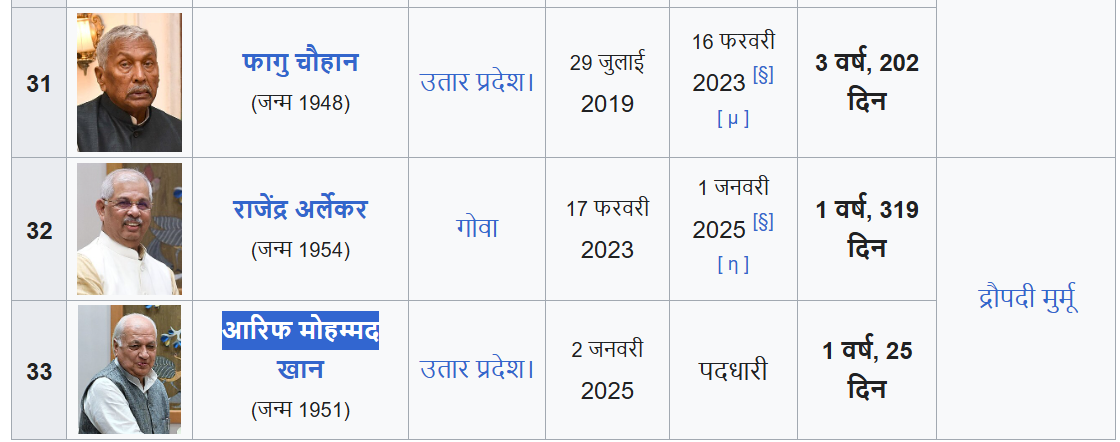

Further, the voiceover in the viral video states that Nitish Kumar handed over his resignation to Governor Phagu Chauhan. However, Phagu Chauhan ceased to be the Governor of Bihar in February 2023. The current Governor of Bihar is Arif Mohammad Khan, making the claim in the video factually incorrect and misleading.

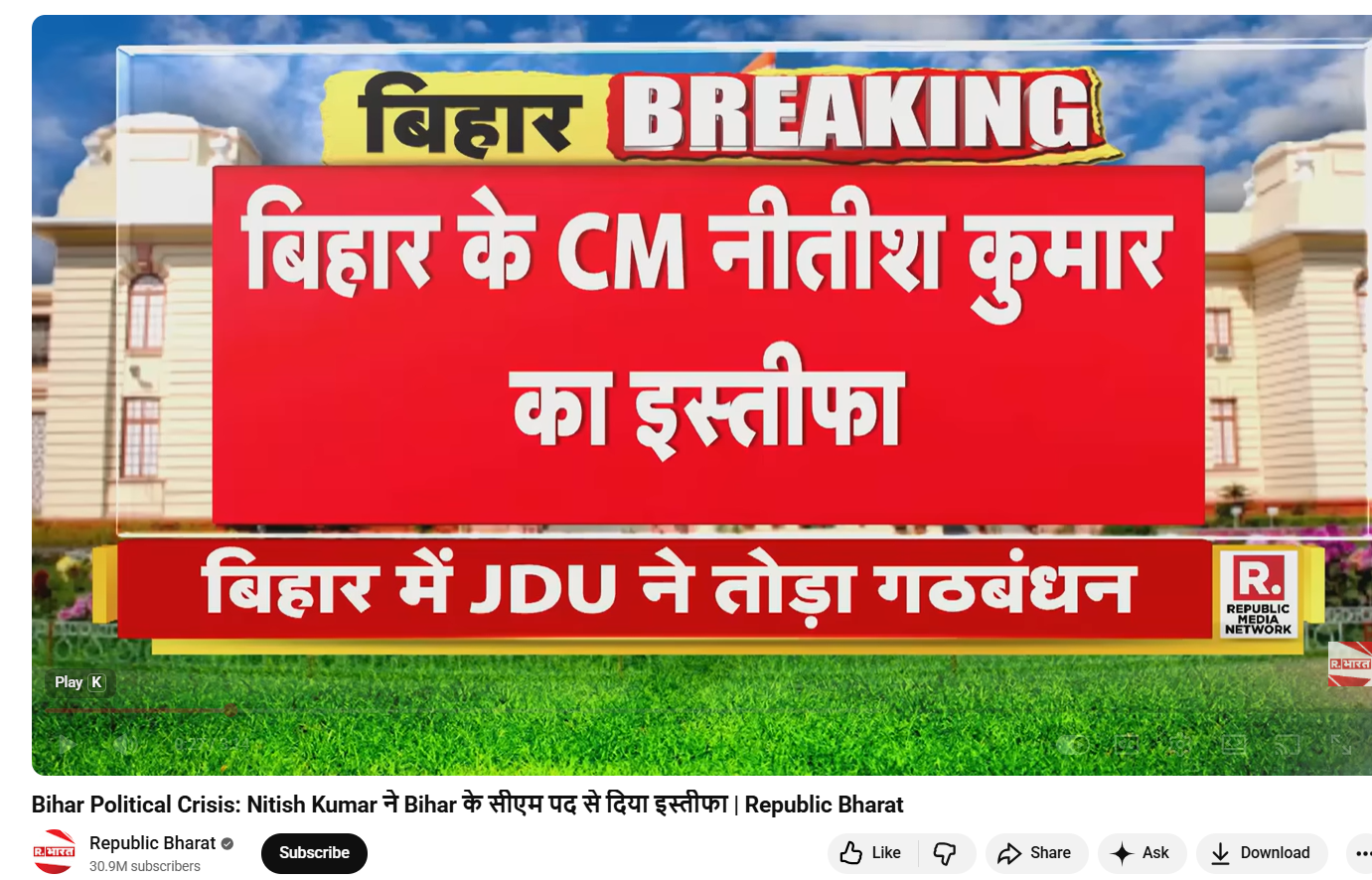

In the next step, keyframes from the viral video were extracted and reverse-searched using Google Lens. This led to the official YouTube channel of Republic Bharat, where the full version of the same video was found. The video was uploaded on August 9, 2022. This clearly establishes that the clip circulating on social media is not recent and is being shared out of context.
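The date reasoning in the steps above can be written as a small consistency check. This is only an illustrative sketch: the function name is hypothetical, and because the fact check gives only the month in which Phagu Chauhan's tenure ended, the last day of February 2023 is an assumption.

```python
from datetime import date

# Dates taken from the fact check above.
VIDEO_UPLOAD_DATE = date(2022, 8, 9)  # upload date on the Republic Bharat channel
# Only "February 2023" is stated; the exact day used here is an assumption.
GOVERNOR_TENURE_END = date(2023, 2, 28)


def governor_reference_is_plausible(event_date: date,
                                    tenure_end: date = GOVERNOR_TENURE_END) -> bool:
    """Return True if an event on event_date could involve the named Governor."""
    return event_date <= tenure_end


if __name__ == "__main__":
    # The 2022 upload falls within the tenure; a later date does not.
    print(governor_reference_is_plausible(VIDEO_UPLOAD_DATE))
    print(governor_reference_is_plausible(date(2024, 1, 1)))
```

A resignation handed to Phagu Chauhan is consistent with the 2022 upload date but not with any event after his tenure ended, which is the contradiction the fact check relies on.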

Conclusion

CyberPeace’s research confirms that the viral video claiming Nitish Kumar resigned over the UGC issue is false. The video dates back to 2022 and has no link to the current UGC controversy. An old political video has been deliberately circulated with a misleading narrative to create confusion on social media.

Related Blogs

Introduction

India reaches a turning point in its technological development as the AI Impact Summit 2026 is held in New Delhi. Artificial Intelligence (AI) is transforming economies, labour markets, governance structures and even the grammar of public discourse. It is no longer a frontier of speculation. The challenge facing the Summit is not whether AI will change our societies (it has already done so) but whether inclusiveness and human dignity will serve as the foundation for this change.

India’s AI journey is defined by scale. The nation has one of the biggest user bases for cutting-edge AI systems worldwide. According to projections, AI may create millions of new technology-driven occupations by 2030 and change the nature of millions more. This is a structural reconfiguration rather than an incremental alteration. The stakes are high for a country with a large youth population and wide socioeconomic diversity.

India’s Tryst with Artificial Intelligence

India’s tryst with AI is a developmental imperative occurring at a civilisational scale, not a show put on to win Western favour. AI is still portrayed in many international storylines as a competition between China’s state-backed speed, Europe’s sophisticated regulations and Silicon Valley’s capital. India is far too frequently cast as a huge consumer market rather than a significant force behind the AI era. Such evaluations undervalue a nation that has already proven its capacity to implement technology at a democratic scale through its digital public infrastructure. AI in India is about more than just improving algorithms; it is about giving millions more people access to social safety, healthcare, agriculture and education.

The scepticism overlooks a deeper truth: India innovates not from abundance but from urgency. India remains certain that technical advancement must be in line with social justice and inclusive growth. History suggests that India’s greatest technological strides have often followed underestimation.

A Conclave of Contagious Ideas

India has long been the favourite underestimation of certain western observers, a nation of 1.4 billion people, the world’s fifth largest economy, a noisy democracy with inconvenient geopolitical realities, often assessed by counterparts governing populations smaller than many of its states. Advice follows in spades, sometimes from cities that mastered the art of strategic improvisation long before they preached restraint and sometimes with lectures on innovation, governance and order.

However, there are times when hierarchies need to be rearranged. It was hard to overlook the symbolism when Ranvir Sachdeva, the youngest keynote speaker at the AI Impact Summit, 2026, took the stage. “I’m here as the youngest keynote speaker at the Indian AI Impact Summit,” he said, discussing how he is connecting ancient Indian beliefs to contemporary technology and the various strategies that other countries are pursuing to develop AI. In that simple articulation lay a quiet rebuttal: a civilization that once debated metaphysics under banyan trees is now debating ethics in plenary halls. History constantly demonstrates that India’s permanent address has never been underestimation.

From New Delhi to Geneva: The Global Arc of AI Governance

Now that the AI Impact Summit, 2026 is coming to an end, what’s left is not just the recollection of its size but also the form of new international dialogue. The New Delhi Declaration, a remarkable highlight of the Summit, was signed by eighty-eight nations and international organisations to support the democratic spread of AI.

The increasing complexity of the AI order was also made clear by the Summit. Pledges for investments totalled hundreds of billions. India joined the U.S.-led Pax Silica effort. Sovereign LLMs were introduced in the country. At the same time, logistical challenges, protest disruptions and business rivalries reminded spectators that the politics of AI are inextricably linked to its promise. Although nations are not bound by the New Delhi Declaration, it does represent a growing consensus that acceleration must be accompanied by governance.

The announcement that the 2027 AI Impact Summit will be in Geneva represents a significant shift in this regard. Guy Parmelin, the president of Switzerland, described the upcoming chapter as one that is primarily concerned with international law and good governance in an attempt to guarantee that the future of AI is not entirely in the hands of powerful nations. From scale and ambition in New Delhi to normative consolidation in Europe, Geneva, a longtime hub of multilateral diplomacy, provides symbolic continuity.

Concluding Confluence

It is tempting to view the Global CyberPeace Summit (GCS), a Pre-Summit Event of the AI Impact Summit held at Bharat Mandapam on 10th February, 2026, and the Summit itself, held in close succession, as separate events. Together, they formed a strong intellectual arc. At GCS, inclusion was not ornamental. A deeper message was conveyed by India Signing Hands’ involvement and the purposeful emphasis on accessibility: digital systems must be created with, not just for, those on the margins. Resilience must start at the economic level, according to the AI-enabled cybersecurity engagement for MSMEs. Participants were reminded during the talks on Technology Facilitated Gender-Based Violence (TFGBV), CSAM prevention and child safety that technological arguments only gain significance when they are connected to real-world outcomes.

When Geneva takes over in 2027, the issue will not just be how AI should be regulated, but also what ethical foundation that governance is built upon. New Delhi’s belief that wisdom and power must coexist may be its contribution to this developing narrative. That persistence carries more content than spectacle, and possibly the faint outline of a technical conscience.

WhatsApp messages masquerading as an offer from Maruti Suzuki, with links luring unsuspecting users with the promise of Maruti Suzuki 40th Anniversary Celebration presents, have been making the rounds on the app. If you receive such messages, stay away from them, as they may be a scam.

The Research Wing of CyberPeace Foundation, along with Autobot Infosec Private Limited, has conducted a study based on a WhatsApp message containing a link that pretends to be a free gift offer from Maruti Suzuki and asks users to participate in a survey for a chance to win a Maruti Baleno Sigma MT car.

Warning Signs

The campaign pretends to be an offer from Maruti Suzuki but is hosted on a third party domain instead of the official Maruti Suzuki website which makes it more suspicious.

The domain names associated with the campaign have been registered in very recent times.

Multiple redirections have been noticed between the links.

No reputed site would ask its users to share the campaign on WhatsApp.

The prize is kept really attractive to lure the laymen.

Grammatical mistakes have been noticed.
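Several of the warning signs above can be checked mechanically once the facts about a link have been gathered. The sketch below is a hedged illustration, not the research team's tooling: the function names, the thresholds and the example URL are hypothetical, and the official domain listed is an assumption that should be verified independently.

```python
from datetime import date
from urllib.parse import urlparse

# Assumption: the brand's official domain; verify independently before relying on it.
OFFICIAL_DOMAINS = {"marutisuzuki.com"}


def is_official_host(url: str, official_domains=OFFICIAL_DOMAINS) -> bool:
    """True if the URL's host is an official domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in official_domains)


def warning_signs(url: str, registered_on: date, redirect_count: int,
                  today: date, recent_days: int = 90, max_redirects: int = 1) -> list:
    """Collect the warning signs that apply to an observed link.

    registered_on and redirect_count are facts the analyst has already gathered
    (for example from a WHOIS record and a sandboxed browser session).
    """
    signs = []
    if not is_official_host(url):
        signs.append("hosted on a third-party domain")
    if (today - registered_on).days <= recent_days:
        signs.append("domain registered recently")
    if redirect_count > max_redirects:
        signs.append("multiple redirections")
    return signs


if __name__ == "__main__":
    # Hypothetical observation, for illustration only.
    for sign in warning_signs("http://example.test/gift", date(2021, 8, 1), 3,
                              today=date(2021, 8, 28)):
        print(sign)
```

Matching on the host suffix rather than on a substring matters here: a look-alike such as `marutisuzuki.com.example.test` contains the brand name but is not a subdomain of the official site.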

A congratulations message appears on the landing page with an attractive photo of Maruti Suzuki cars, asking users to participate in a quick survey in order to get a “Maruti Suzuki BALENO Sigma MT”. Also, the bottom of the page appears to be a comment section, with public comments vouching for the truthfulness of the offer.

The survey starts with some basic questions like Do you know Maruti Suzuki?, How old are you?, How do you think of Maruti Suzuki?, Are you male or female? Etc. Once the user answers the questions a “congratulatory message” is displayed.

On clicking the OK button users are given three attempts to win the prize. After completing all the attempts a message pops up that the user has won “Maruti Suzuki BALENO Sigma MT”. It then prompts the user to share the message on WhatsApp.

Strangely enough the user has to keep clicking the WhatsApp button until the progress bar completes. After clicking on the green ‘WhatsApp’ button multiple times it shows a section where an instruction has been given to complete registration in order to get the prize.

After clicking on the green ‘Complete registration’ button, the user is redirected to multiple advertisement web pages, which vary each time the user clicks on the button.

During the analysis, the research team found that a JavaScript file called hm.js was being executed in the background from the host hm[.]baidu[.]com, which is a subdomain of Baidu and is used for Baidu Analytics, also known as Baidu Tongji. Notably, Baidu is a Chinese multinational technology company specializing in Internet-related services, products and artificial intelligence, headquartered in Beijing’s Haidian district, China.

To read the full report, please click here: https://www.cyberpeace.org/CyberPeace/Repository/20210828Research-report-on-Maruti-Suzuki-40th-Anniversary-Celebration-free-gift-scam.pdf

Conclusive Summary

1. The whole research activity was performed in a secured sandbox environment where the WhatsApp application was not installed. If a user opens the link from a device such as a smartphone where the WhatsApp application is installed, the sharing features on the site will open the WhatsApp application on the device to share the link.

2. The campaign collects browser and system information from the users.

3. Most of the domain names associated with the campaign have the registrant country as China.

4. Cybercriminals used Cloudflare technologies to mask the real IP addresses of the front-end domain names used in this Maruti Suzuki 40th Anniversary Celebration free gift campaign. But during the phases of investigation, the research team has identified a domain name that was requested in the background and has been traced as belonging to China.
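Background requests of the kind described in this summary, such as hm.js loaded from hm[.]baidu[.]com, can be partly surfaced by listing the external script hosts referenced in a saved copy of a page. The following is a minimal sketch using only the Python standard library; the class and function names and the sample markup are hypothetical and are not taken from the report.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class ScriptHostCollector(HTMLParser):
    """Collects the hosts of <script src="..."> tags that point to another host."""

    def __init__(self):
        super().__init__()
        self.hosts = []

    def handle_starttag(self, tag, attrs):
        if tag != "script":
            return
        src = dict(attrs).get("src")
        if src:
            host = urlparse(src).hostname  # None for relative paths
            if host:
                self.hosts.append(host.lower())


def external_script_hosts(html: str) -> list:
    """Return hosts of externally loaded scripts, in document order."""
    collector = ScriptHostCollector()
    collector.feed(html)
    return collector.hosts


def defang(host: str) -> str:
    """Write a host in the non-clickable style used in this report."""
    return host.replace(".", "[.]")


if __name__ == "__main__":
    # Hypothetical page markup, for illustration only.
    sample = ('<html><head><script src="https://hm.baidu.com/hm.js"></script>'
              '<script src="/static/app.js"></script></head></html>')
    for host in external_script_hosts(sample):
        print(defang(host))
```

Static parsing only sees script tags present in the saved HTML. Scripts injected at runtime would need to be captured from network traffic inside the sandbox instead.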

CyberPeace Advisory

1. CyberPeace Foundation and Autobot Infosec recommend that people should avoid opening such messages sent via social platforms.

2. If a user does fall into this trap, it could lead to compromise of the whole system, including access to the microphone, camera, text messages, contacts, pictures, videos and banking applications, as well as financial losses.

3. Do not share confidential details such as login credentials or banking information in response to this type of scam.

4. Do not share or forward fake messages containing links without proper verification.

5. There is a need for International Cyber Cooperation between countries to bust the cybercriminal gangs running the fraud campaigns affecting individuals and organizations, to make Cyberspace resilient and peaceful.

Introduction

In today’s digital world, where everything is related to data, the more data you own, the more control you have over the market, which is why companies are looking for ways to use data to improve their business. But at the same time, they have to make sure they are protecting people’s privacy. It is very tricky to strike a balance between the two. Imagine you are trying to bake a cake where you need to use all the ingredients to make it taste great, but you also have to make sure no one can tell what’s in it. That’s kind of what companies are dealing with when it comes to data. Here, ‘Pseudonymisation’ emerges as a critical technical and legal mechanism that offers a middle ground between data anonymisation and unrestricted data processing.

Legal Framework and Regulatory Landscape

Pseudonymisation, as defined by the General Data Protection Regulation (GDPR) in Article 4(5), refers to “the processing of personal data in such a manner that the personal data can no longer be attributed to a specific data subject without the use of additional information, provided that such additional information is kept separately and is subject to technical and organisational measures to ensure that the personal data are not attributed to an identified or identifiable natural person”. This technique represents a paradigm shift in data protection strategy, enabling organisations to preserve data utility while significantly reducing privacy risks. The growing importance of this balance is evident in the proliferation of data protection laws worldwide, from GDPR in Europe to India’s Digital Personal Data Protection Act (DPDP) of 2023.

The legal treatment of pseudonymisation varies across jurisdictions, but a convergent approach is emerging that recognises its value as a data protection safeguard while maintaining that pseudonymised data remains personal data. Article 25(1) of GDPR recognises it as “an appropriate technical and organisational measure” and emphasises its role in reducing risks to data subjects. It protects personal data by reducing the risk of identifying individuals during data processing. The European Data Protection Board’s (EDPB) 2025 Guidelines on Pseudonymisation provide detailed guidance emphasising the importance of defining the “pseudonymisation domain”. This domain defines who is prevented from attributing data to specific individuals and ensures that technical and organisational measures are in place to block unauthorised linkage of pseudonymised data to the original data subjects. In India, while the DPDP Act does not explicitly define pseudonymisation, legal scholars argue that such data would still fall under the definition of personal data, as it remains potentially identifiable. The Act defines personal data in Section 2(t) broadly as “any data about an individual who is identifiable by or in relation to such data,” suggesting that pseudonymised information, being reversible, would continue to require compliance with data protection obligations.

Further, the DPDP Act, 2023 also includes principles of data minimisation and purpose limitation. Section 8(4) says that a “Data Fiduciary shall implement appropriate technical and organisational measures to ensure effective observance of the provisions of this Act and the Rules made under it.” Pseudonymisation fits here because it is a recognised technical safeguard, which means companies can use it as part of their compliance toolkit under Section 8(4) of the DPDP Act. However, its use should be assessed on a case-by-case basis, since ‘encryption’ is also considered one of the strongest methods for protecting personal data. The suitability of pseudonymisation depends on the nature of the processing activity, the type of data involved, and the level of risk that needs to be mitigated. In practice, organisations may use pseudonymisation in combination with other safeguards to strengthen overall compliance and security.
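To make the Article 4(5) idea of “additional information” that is “kept separately” more concrete, here is a minimal sketch of one common technique, a keyed hash (HMAC). It is an illustration under stated assumptions, not a method prescribed by GDPR, the EDPB or the DPDP Act: the function names are hypothetical, and real deployments would also need key storage, rotation and access controls.

```python
import hashlib
import hmac
import secrets


def generate_key() -> bytes:
    """Create a random secret key. This is the 'additional information' and
    should be stored separately from the pseudonymised dataset."""
    return secrets.token_bytes(32)


def pseudonymise(identifier: str, key: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256).

    The same identifier and key always give the same pseudonym, so records can
    still be linked, but attribution to a person requires access to the key.
    """
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


if __name__ == "__main__":
    key = generate_key()  # kept by the controller, not shared with recipients
    # Hypothetical records, for illustration only.
    records = [("asha@example.com", "order-1"), ("asha@example.com", "order-2")]
    for email, order in records:
        print(pseudonymise(email, key), order)
```

Because both orders map to the same pseudonym, analysis across records remains possible, while a recipient without the key cannot recompute pseudonyms from guessed identifiers.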

The European Court of Justice’s recent jurisprudence has introduced nuanced considerations about when pseudonymised data might not constitute personal data for certain entities. In cases where only the original controller possesses the means to re-identify individuals, third parties processing such data may not be subject to the full scope of data protection obligations, provided they cannot reasonably identify the data subjects. The “means reasonably likely” assessment represents a significant development in understanding the boundaries of data protection law.

Corporate Implementation Strategies

Companies find that pseudonymisation is not just about following rules, but it also brings real benefits. By using this technique, businesses can keep their data more secure and reduce the damage in the event of a breach. Customers feel more confident knowing that their information is protected, which builds trust. Additionally, companies can utilise this data for their research or other important purposes without compromising user privacy.

Key Benefits of Pseudonymisation:

- Enhanced Privacy Protection: It replaces personal details like names or IDs with artificial values or codes, making accidental privacy breaches less likely.

- Preserved Data Utility: Unlike completely anonymous data, pseudonymised data keeps its usefulness by maintaining important patterns and relationships within datasets.

- Facilitated Data Sharing: It’s easier to share pseudonymised data with partners or researchers because it protects privacy while still being useful.

However, using pseudonymisation is not easy, as companies have to deal with tricky technical issues like choosing the right methods, such as encryption or tokenisation, and managing security keys safely. They have to implement strong policies to stop anyone from figuring out who the data belongs to. This can get expensive and complicated, especially when dealing with a large amount of data, and it often requires expert help and regular upkeep.
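Tokenisation, one of the methods mentioned above, can be sketched as a lookup table that maps each original value to a random token. The class below is a hypothetical, in-memory illustration only; a production vault would need persistent, access-controlled storage kept apart from the tokenised data.

```python
import secrets


class TokenVault:
    """Maps original values to random tokens and back.

    The vault itself is the sensitive part: whoever holds it can re-identify
    the data, so it must be stored and governed separately.
    """

    def __init__(self):
        self._token_by_value = {}
        self._value_by_token = {}

    def tokenise(self, value: str) -> str:
        """Return the existing token for value, or issue a new random one."""
        if value in self._token_by_value:
            return self._token_by_value[value]
        token = "tok_" + secrets.token_hex(8)
        while token in self._value_by_token:  # extremely unlikely collision
            token = "tok_" + secrets.token_hex(8)
        self._token_by_value[value] = token
        self._value_by_token[token] = value
        return token

    def detokenise(self, token: str) -> str:
        """Recover the original value; raises KeyError for unknown tokens."""
        return self._value_by_token[token]


if __name__ == "__main__":
    vault = TokenVault()
    token = vault.tokenise("Asha Rao")  # hypothetical name
    print(token)
    print(vault.detokenise(token))
```

Unlike a keyed hash, the token has no mathematical relationship to the original value, so reversal depends entirely on access to the vault. That is why the paragraph above stresses key and policy management.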

Balancing Privacy Rights and Data Utility

The primary challenge in pseudonymisation is striking the right balance between protecting individuals' privacy and maintaining the utility of the data. To get this right, companies need to consider several factors, such as the purpose for which they are using the data, the likely skill level of a potential attacker, and the type of data being used.

Conclusion

Pseudonymisation offers a practical middle ground between full anonymisation and unrestricted data use, enabling organisations to harness the value of data while protecting individual privacy. Legally, it is recognised as a safeguard, but pseudonymised data is still treated as personal data, requiring compliance under frameworks like GDPR and India’s DPDP Act. For companies, it is not only a matter of regulatory adherence but also a way to build trust and enhance data security. However, its effectiveness depends on robust technical methods, governance, and vigilance. Striking the right balance between privacy and data utility is crucial for sustainable, ethical, and innovation-driven data practices.

References:

- https://gdpr-info.eu/art-4-gdpr/

- https://www.meity.gov.in/static/uploads/2024/06/2bf1f0e9f04e6fb4f8fef35e82c42aa5.pdf

- https://gdpr-info.eu/art-25-gdpr/

- https://www.edpb.europa.eu/system/files/2025-01/edpb_guidelines_202501_pseudonymisation_en.pdf

- https://curia.europa.eu/juris/document/document.jsf?text=&docid=303863&pageIndex=0&doclang=EN&mode=req&dir=&occ=first&part=1&cid=16466915
