#FactCheck - Viral Video Falsely Linked to India; Actually from Bangladesh

A video circulating widely on social media shows a child throwing stones at a moving train, while a few other children can also be seen climbing onto the engine. The video is being shared with a communal narrative, with claims that the incident took place in India.

Cyber Peace Foundation’s research found the viral claim to be misleading: the video is from Bangladesh, not India, and is being falsely linked to India on social media.

Claim:

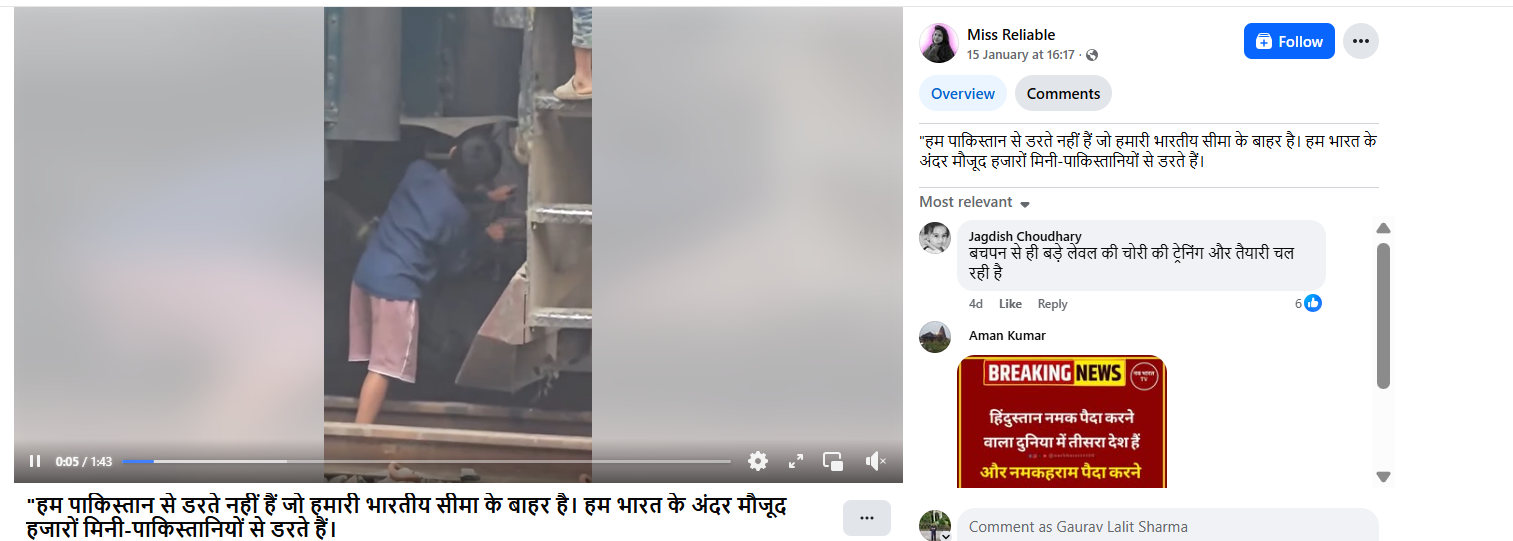

On January 15, 2026, a Facebook user shared the viral video claiming it depicted an incident from India. The post carried a provocative caption stating, “We are not afraid of Pakistan outside our borders. We are afraid of the thousands of mini-Pakistans within India.” The post has been widely circulated, amplifying communal sentiments.

Fact Check:

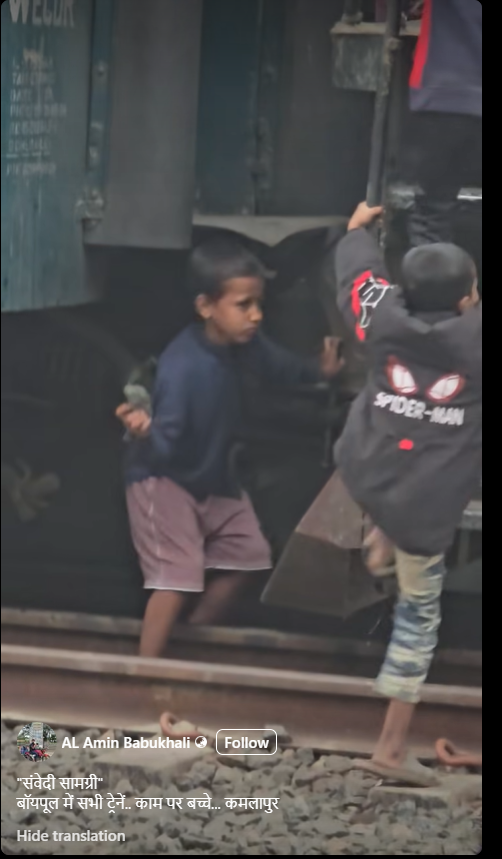

To verify the authenticity of the video, we extracted keyframes from the viral clip and ran a reverse image search on them using Google Lens. This led us to the same video, uploaded on December 28, 2025, by a Bangladeshi Facebook account named AL Amin Babukhali, more than two weeks before the viral post. The caption of the original post mentions Kamalapur, a well-known railway station in Dhaka, Bangladesh. This strongly indicates that the incident did not occur in India.
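For readers who want to repeat the frame-extraction step, the following is a minimal sketch and not the tooling used in this research, which the article does not specify. It assumes the `ffmpeg` command-line tool is installed and that the clip's duration is already known. The function names and the file name `viral_clip.mp4` are hypothetical. It picks evenly spaced frames rather than true encoder keyframes, which is usually enough for a reverse image search.

```python
from typing import List


def sample_timestamps(duration_s: float, count: int) -> List[float]:
    """Return `count` timestamps (in seconds) at the midpoints of equal slices.

    Midpoints avoid the first and last instants of the clip, which are
    often black frames or title cards.
    """
    if duration_s <= 0 or count <= 0:
        return []
    step = duration_s / count
    return [round(step * (i + 0.5), 3) for i in range(count)]


def ffmpeg_frame_command(video_path: str, timestamp_s: float, out_path: str) -> List[str]:
    """Build an ffmpeg argument list that saves a single frame as an image.

    -ss seeks to the timestamp, -i names the input, -frames:v 1 stops
    after one video frame has been written.
    """
    return [
        "ffmpeg",
        "-ss", f"{timestamp_s:.3f}",
        "-i", video_path,
        "-frames:v", "1",
        out_path,
    ]


def build_commands(video_path: str, duration_s: float, count: int) -> List[List[str]]:
    """Build one ffmpeg command per sampled timestamp (hypothetical file naming)."""
    return [
        ffmpeg_frame_command(video_path, ts, f"frame_{i:02d}.jpg")
        for i, ts in enumerate(sample_timestamps(duration_s, count), start=1)
    ]


if __name__ == "__main__":
    # Print the commands instead of running them, so the sketch has no side effects.
    for cmd in build_commands("viral_clip.mp4", duration_s=20.0, count=4):
        print(" ".join(cmd))
```

Each printed command can be run in a terminal, or passed to `subprocess.run`, to produce a still image that can then be uploaded to a reverse image search tool such as Google Lens.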

Further analysis of the video shows that the train engine carries the marking “BR”, along with text written in Bengali. “BR” stands for Bangladesh Railway, which points to the origin of the train. To corroborate this, we searched Google for images of Bangladesh Railway locomotives and found multiple images on Getty Images showing engines with the same design and markings as the one in the viral video. The visual match establishes that the train belongs to Bangladesh Railway.

Conclusion:

Our research confirms that the viral video is from Bangladesh, not India. It is being shared on social media with the false claim that it was filmed in India, in order to give it a communal angle.