#FactCheck - Viral Clip Not Harish Rana’s Farewell, Linked to Surat Woman’s Organ Donation

Executive Summary

Amid reports that AIIMS Delhi has initiated the process to implement the Supreme Court’s decision allowing passive euthanasia for Harish Rana, a video is being shared on social media claiming to show his emotional farewell. However, research by CyberPeace found the viral claim to be misleading: the video has no connection to the Harish Rana case. In reality, the footage is from Surat, Gujarat, where the family of a 45-year-old brain-dead woman donated her organs.

Claim:

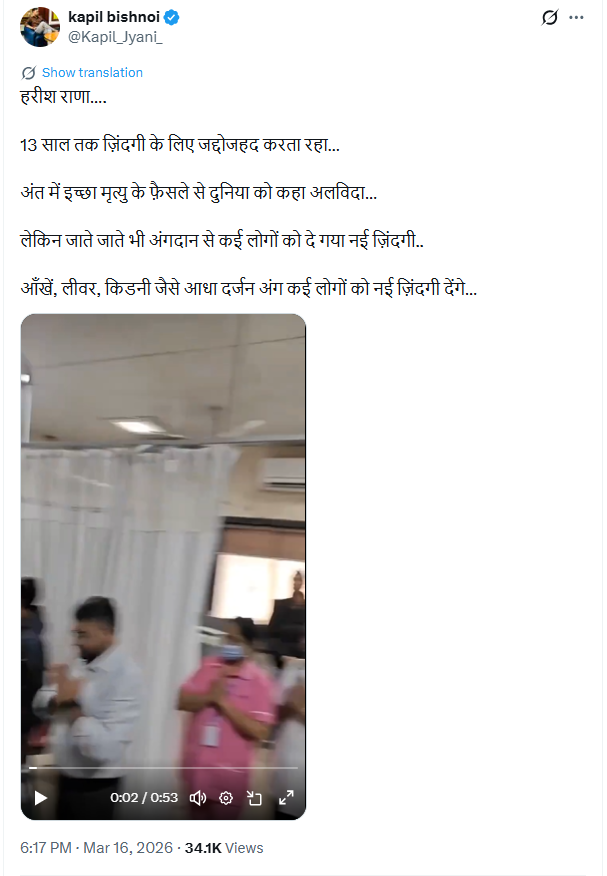

On the social media platform X (formerly Twitter), a user shared the viral video on March 16, 2026, with the caption:

“Harish Rana… struggled for life for 13 years… in the end said goodbye to the world through euthanasia… but even while leaving, gave new life to many through organ donation… eyes, liver, kidneys and several other organs will give a new life to many…”

Post link and archive link are given below:

Fact Check

We took screenshots from the viral video and ran a reverse image search. This led us to an Instagram account where the same video had been uploaded on January 27, 2026.

- https://www.instagram.com/reels/DUAt_42k2ME/

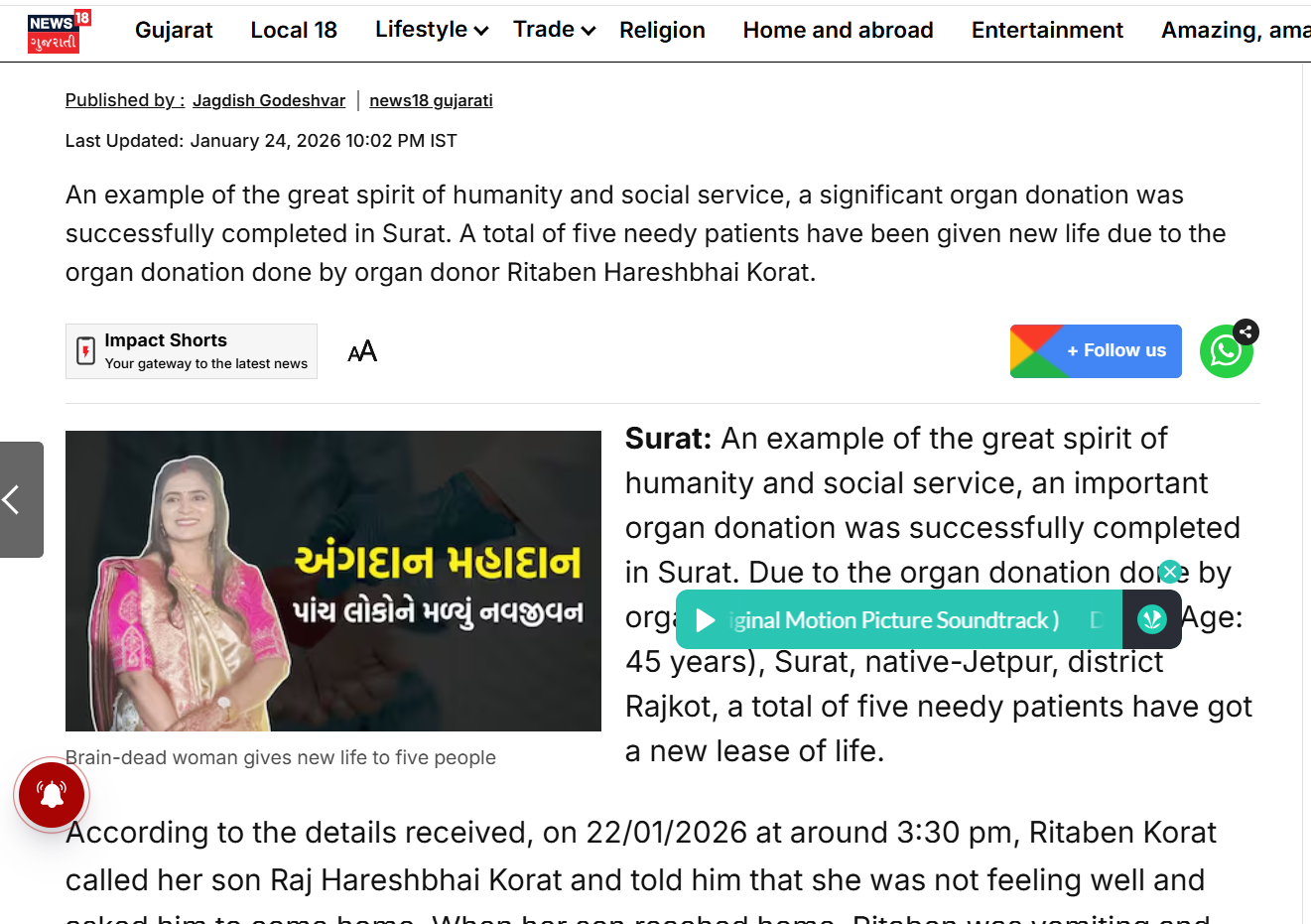

According to the caption on the Instagram post, the video shows a brain-dead woman in Surat whose liver, both kidneys, and eyes were donated. The post also included an image of the woman. Based on clues from the viral video, we conducted a keyword search on Google and found a report on the website of News18 Gujarati.

According to the report, the organ donation by Ritaben Hareshbhai Korat in Surat gave new life to five patients. The report also carried her photograph, which matches the visuals seen in the viral video.

Conclusion:

Our research found that the viral video has no connection to Harish Rana. It actually shows an incident from Surat, Gujarat, where the family of a 45-year-old brain-dead woman, Ritaben Korat, donated her organs.
