#FactCheck - AI-Generated Video Falsely Claims Death of Iran’s Supreme Leader

Executive Summary

Iran’s Supreme Leader Ayatollah Ali Khamenei was reportedly killed in a major attack carried out by Israel and the United States, with claims circulating that Iranian state media confirmed his death early Sunday morning. Amid these claims, a video is being widely shared on social media. The viral video shows a body trapped under debris. Users sharing the clip claim that the body seen in the footage is that of Ayatollah Ali Khamenei. However, research conducted by CyberPeace found the viral claim to be false. Our research revealed that the video is not authentic but AI-generated.

Claim:

On March 1, 2026, an Instagram user shared the viral video with the caption: “Shaheed Ayatollah Sayyid Ali Hosseini Khamenei — Neither fled nor hid in a bunker, embraced death like a brave man.” The link to the post and its archived version are provided below along with a screenshot.

Fact Check:

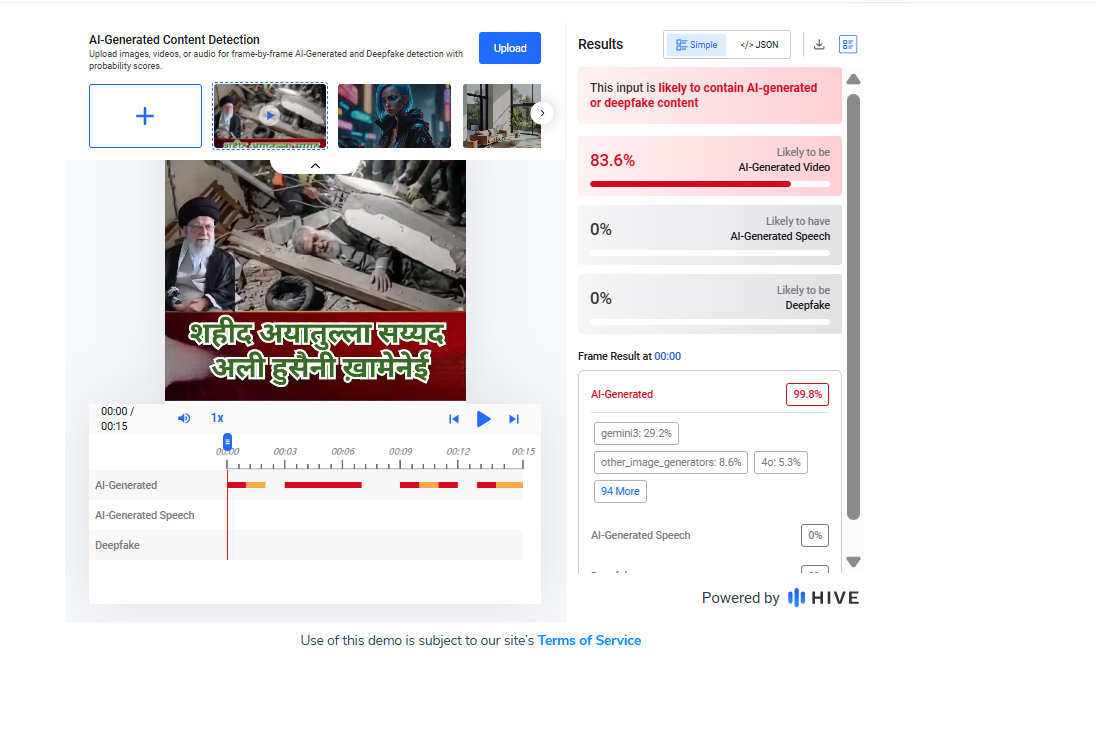

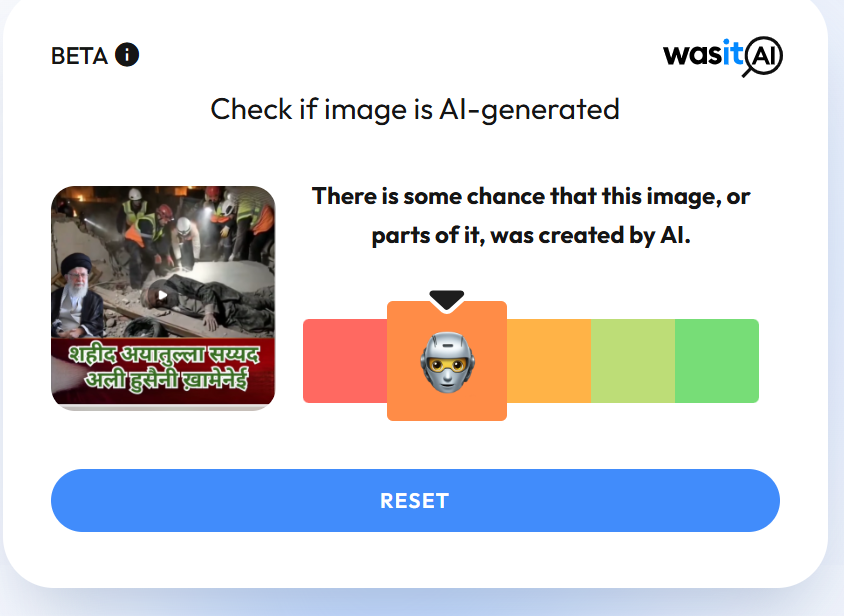

Upon closely examining the viral video, we noticed several visual irregularities and technical inconsistencies, which raised suspicion about its authenticity. We then scanned the video using the AI detection tool Hive Moderation. The results indicated that approximately 83 percent of the content showed signs of being AI-generated.

To further verify the claim, we also analyzed the video using another AI detection tool, WasItAI. The findings similarly suggested that the video was generated using artificial intelligence.

Conclusion:

Our research establishes that the viral video is not real. It has been artificially generated using AI and is being shared with misleading claims.

Related Blogs


Introduction

The AI Action Summit is a global forum that brings together world leaders, policymakers, technology experts, and industry representatives to discuss AI governance, ethics, and the role of AI in society. This year’s week-long Paris AI Action Summit concluded on 11 February 2025. The event was co-chaired by Indian Prime Minister Narendra Modi and French President Emmanuel Macron. Alongside the summit, the Indian delegation took part in the 2nd India-France AI Policy Roundtable, an official side event of the summit, and the 14th India-France CEOs Forum. These discussions covered diverse sectors, including defense, aerospace, and technology.

Prime Minister Modi’s Address

During the AI Action Summit in Paris, Prime Minister Narendra Modi drew attention to the transformative effect of AI on politics, the economy, security, and society. Stressing the need for international cooperation, he called for strong governance frameworks to address AI-related risks and thereby build public confidence in new technologies. He also acknowledged the need for action on cybersecurity issues such as deepfakes and disinformation.

Democratising AI and sharing its benefits, particularly with the Global South, not only aligns with the Sustainable Development Goals (SDGs) but also affirms India’s resolve to share expertise and best practices. India’s remarkable feat of creating a Digital Public Infrastructure that serves a population of 1.4 billion through open and accessible technology was highlighted as well.

Among the key announcements, India revealed its plans to create its own Large Language Model (LLM) that reflects the country's linguistic diversity, strengthening its AI aspirations. Further, India will host the next AI Action Summit, reaffirming its position in international AI leadership. The Prime Minister also welcomed France's initiatives, such as the launch of the "AI Foundation" and the "Council for Sustainable AI", initiated by President Emmanuel Macron. He emphasized the need to extend the Global Partnership for AI and to make it more representative and inclusive, so that Global South voices are genuinely incorporated into AI innovation and governance.

Other Perspectives

Although 58 countries, including India, France, and China, signed the international agreement on a more open, inclusive, sustainable, and ethical approach to AI development, the UK and the US declined to sign it at the AI Summit, citing concerns about global governance and national security. The UK pointed to a lack of sufficient detail on the establishment of global AI governance and on AI’s effect on national security, while the US expressed reservations about overly broad AI regulations that could hamper a transformative industry. Meanwhile, the US is also looking ahead to ‘Stargate’, its $500 billion AI infrastructure project with OpenAI, SoftBank, and Oracle.

CyberPeace Insights

The Summit has gained added significance against the backdrop of releases such as DeepSeek R1, a Chinese AI assistant similar to OpenAI’s ChatGPT. On its release, it became the top-rated free application on Apple’s App Store and sent technology stocks tumbling. Investors worldwide also appreciated that the model was built for roughly $5 million, while other AI companies spent more, even with the restrictions imposed by chip export controls affecting China. This breakthrough challenges the conventional notion that massive funding is a prerequisite for innovation, offering hope for India’s burgeoning AI ecosystem. With the IndiaAI mission and fewer geopolitical restrictions, India stands at a pivotal moment to drive responsible AI advancements.

References:

- https://www.mea.gov.in/press-releases.htm?dtl/39023/Prime_Minister_cochairs_AI_Action_Summit_in_Paris_February_11_2025

- https://indianexpress.com/article/explained/explained-sci-tech/what-is-stargate-trumps-500-billion-ai-project-9793165/

- https://pib.gov.in/PressReleasePage.aspx?PRID=2102056

- https://pib.gov.in/PressReleasePage.aspx?PRID=2101947

- https://pib.gov.in/PressReleasePage.aspx?PRID=2101896

- https://www.timesnownews.com/technology-science/uk-and-us-decline-to-sign-global-ai-agreement-at-paris-ai-action-summit-here-is-why-article-118164497

- https://www.thehindu.com/sci-tech/technology/india-57-others-sign-paris-joint-statement-on-inclusive-sustainable-ai/article69207937.ece

Executive Summary:

A viral video (archive link) claims General Upendra Dwivedi, Chief of Army Staff (COAS), admitted to losing six Air Force jets and 250 soldiers during clashes with Pakistan. Verification revealed the footage is from an IIT Madras speech, with no such statement made. AI detection confirmed parts of the audio were artificially generated.

Claim:

The claim in question is that General Upendra Dwivedi, Chief of Army Staff (COAS), admitted to losing six Indian Air Force jets and 250 soldiers during recent clashes with Pakistan.

Fact Check:

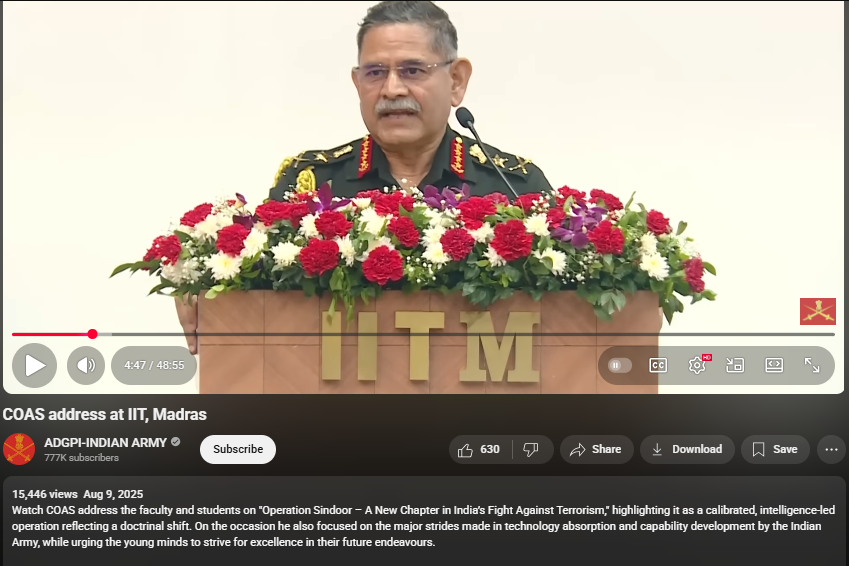

Upon conducting a reverse image search on key frames from the video, it was found that the original footage is from IIT Madras, where the Chief of Army Staff (COAS) was delivering a speech. The video is available on the official YouTube channel of ADGPI – Indian Army, published on 9 August 2025, with the description:

“Watch COAS address the faculty and students on ‘Operation Sindoor – A New Chapter in India’s Fight Against Terrorism,’ highlighting it as a calibrated, intelligence-led operation reflecting a doctrinal shift. On the occasion, he also focused on the major strides made in technology absorption and capability development by the Indian Army, while urging young minds to strive for excellence in their future endeavours.”

A review of the full speech revealed no reference to the destruction of six jets or the loss of 250 Army personnel. This indicates that the circulating claim is not supported by the original source and may contribute to the spread of misinformation.

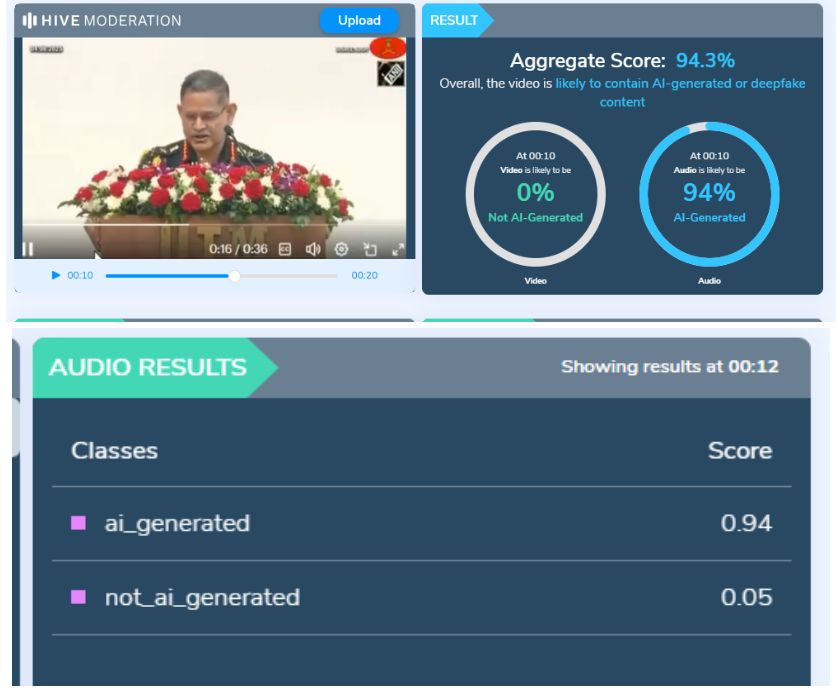

Further, using AI detection tools such as Hive Moderation, we found that portions of the audio in the clip are AI-generated.

Conclusion:

The claim is baseless. The video is a manipulated creation that combines genuine footage of General Dwivedi’s IIT Madras address with AI-generated audio to fabricate a false narrative. No credible source corroborates the alleged military losses.

- Claim: AI-Generated Audio Falsely Claims COAS Admitted to Loss of 6 Jets and 250 Soldiers

- Claimed On: Social Media

- Fact Check: False and Misleading

Executive Summary

A video purportedly showing former Chief of Defence Staff (CDS) General Anil Chauhan criticizing the government over “Operation Sindoor” is being widely shared on social media. In the viral clip, Chauhan is allegedly heard saying that the Indian military was unable to complete the operation due to political interference and that, unlike Pakistan’s military, India’s armed forces did not receive adequate political support. Users claim that he made these remarks while announcing his resignation.

Research by the CyberPeace Research Wing found the claim to be false. The viral video is a deepfake created by manipulating an original video of General Chauhan’s farewell ceremony at the end of his tenure. In the authentic footage, the former CDS expressed satisfaction with his tenure and thanked the three armed services for their support. The altered clip appears to have been shared with the intention of spreading misinformation. Similar AI-manipulated videos targeting senior Indian military officials have surfaced on social media in the past.

Claim

On June 1, 2026, Facebook user “Meenu Kundu Dhakal” shared the viral video with the caption: “The army is facing political interference. The government has turned the armed forces into a tool for gathering votes.”

https://www.facebook.com/100092961600658/posts/1669160500794363/

https://perma.cc/SE6J-5JC6

Fact Check

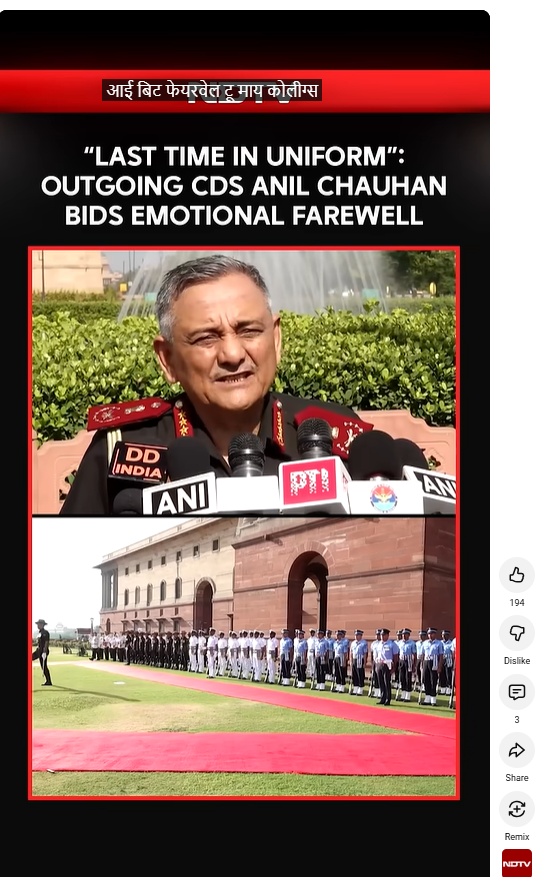

To verify the claim, we extracted keyframes from the viral video and conducted reverse-image searches. The original video was found on the official Instagram account of ANI, where it was posted on May 30, 2026. In the authentic footage, General Chauhan says: “I thank the three services and Headquarters IDS for it. With the conclusion of the guard of honour, I bid farewell to my colleagues in uniform, comrades in arms forever. I just laid the wreath at the War Memorial for the last time in uniform, as a humble tribute to those who laid down their lives in the line of duty. After the wreath-laying, I was welcomed by friends, relatives, and well-wishers. This is symbolic of my transition from uniform to civilian life. I had a very satisfying and excellent tenure. Thank you. Jai Hind.”

https://www.instagram.com/reels/DY8-5sqgF1B/

The same footage was also found on multiple news platforms covering General Chauhan’s farewell ceremony upon completion of his tenure. None of the reports mentioned any criticism of the government or comments regarding political interference in military affairs.

https://www.youtube.com/shorts/J-Fi8F32huo
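The keyframe step described above can be approximated with evenly spaced frame grabs. The sketch below is a minimal illustration, not the workflow CyberPeace used: it assumes the `ffmpeg` command-line tool is installed, the function names and the `frames` output directory are hypothetical, and it samples frames at regular intervals rather than detecting true keyframes. It only builds the commands; running them and submitting the resulting images to a reverse-image search engine is left to the analyst.

```python
from pathlib import Path


def sample_timestamps(duration_s: float, count: int) -> list[float]:
    """Return `count` timestamps spread evenly across the clip.

    The first and last instants are skipped, since they are often
    black frames or title cards that search poorly.
    """
    if duration_s <= 0 or count <= 0:
        return []
    step = duration_s / (count + 1)
    return [round(step * i, 2) for i in range(1, count + 1)]


def ffmpeg_frame_commands(
    video: str, duration_s: float, count: int, out_dir: str = "frames"
) -> list[list[str]]:
    """Build one ffmpeg command per sampled timestamp (hypothetical helper).

    Each command seeks to the timestamp (-ss), reads the input (-i),
    and writes a single video frame (-frames:v 1) as a PNG.
    """
    commands = []
    for index, ts in enumerate(sample_timestamps(duration_s, count), start=1):
        out_path = (Path(out_dir) / f"frame_{index:02d}.png").as_posix()
        commands.append(
            ["ffmpeg", "-ss", str(ts), "-i", video, "-frames:v", "1", out_path]
        )
    return commands


if __name__ == "__main__":
    # Illustrative values only: a 30-second clip sampled at 5 points.
    for cmd in ffmpeg_frame_commands("viral.mp4", 30.0, 5):
        print(" ".join(cmd))
```

Each printed command can be run in a shell once the `frames` directory exists. Sampling more densely around a speaker's close-ups tends to give reverse-image search more distinctive frames to match.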

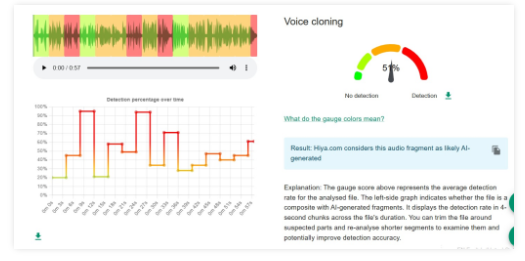

To assess whether the viral clip had been manipulated, we analyzed the audio using AI-detection tools. The tool “Hiya” indicated a 51 percent likelihood that the audio was AI-generated.

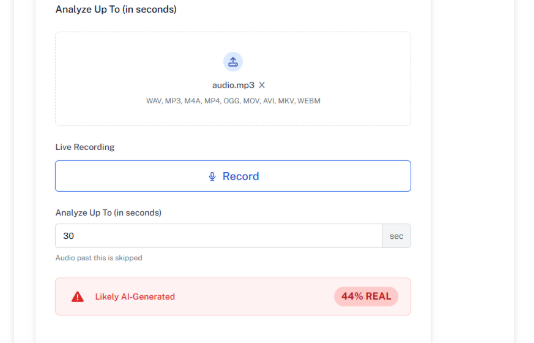

Another detection tool, “Undetectable,” found indicators suggesting a 44 percent probability of AI-generated audio content.

Conclusion

The viral video claiming to show former CDS General Anil Chauhan criticizing the government’s handling of “Operation Sindoor” is a deepfake. The original video was recorded during his farewell ceremony at the conclusion of his tenure. In the authentic footage, General Chauhan described his tenure as “very satisfying and excellent” and thanked the armed forces for their support. The viral clip has been digitally manipulated and is being shared to spread misinformation.