#FactCheck - AI-Generated Image Falsely Claims Mukesh and Nita Ambani Gifted Luxury Car to Suryakumar Yadav

Executive Summary

A picture circulating on social media allegedly shows Reliance Industries chairman Mukesh Ambani and Nita Ambani presenting a luxury car to India’s T20 team captain Suryakumar Yadav. The image is being widely shared with the claim that the Ambani family gifted the cricketer a luxury car in recognition of his outstanding performance. However, research conducted by CyberPeace found the viral claim to be false. The image being circulated online is not authentic; it was generated using artificial intelligence (AI).

Claim

On February 8, 2025, a Facebook user shared the viral image claiming that Mukesh Ambani and Nita Ambani gifted a luxury car to Suryakumar Yadav following his brilliant innings. The post has been widely circulated across social media platforms. In another instance, a user shared a collage in which one image shows Suryakumar Yadav receiving an award, while another depicts him with Nita Ambani, further amplifying the claim.

- https://www.facebook.com/61559815349585/posts/122207061746327178/?rdid=0MukeT6c7WK1uB8m#

- https://archive.ph/wip/UH9Xh

Fact Check

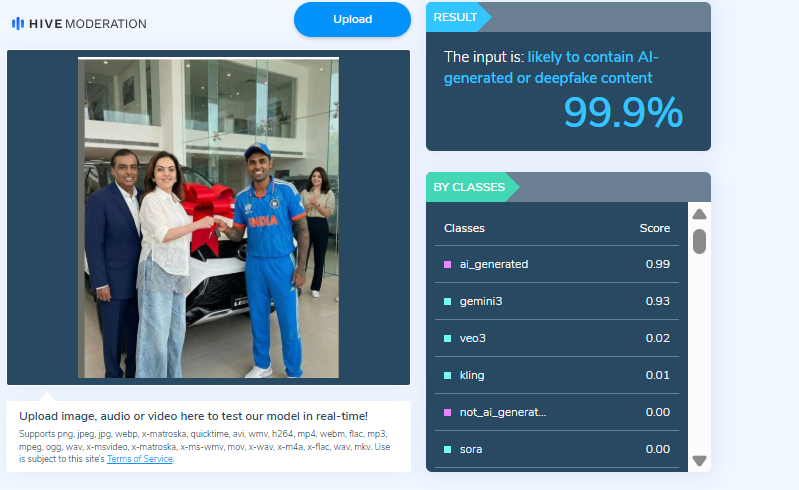

Upon closely examining the viral image, certain visual inconsistencies raised suspicion that it might be AI-generated. To verify its authenticity, the image was analysed using the AI detection tool Hive Moderation, which indicated a 99 percent probability that the image was AI-generated.

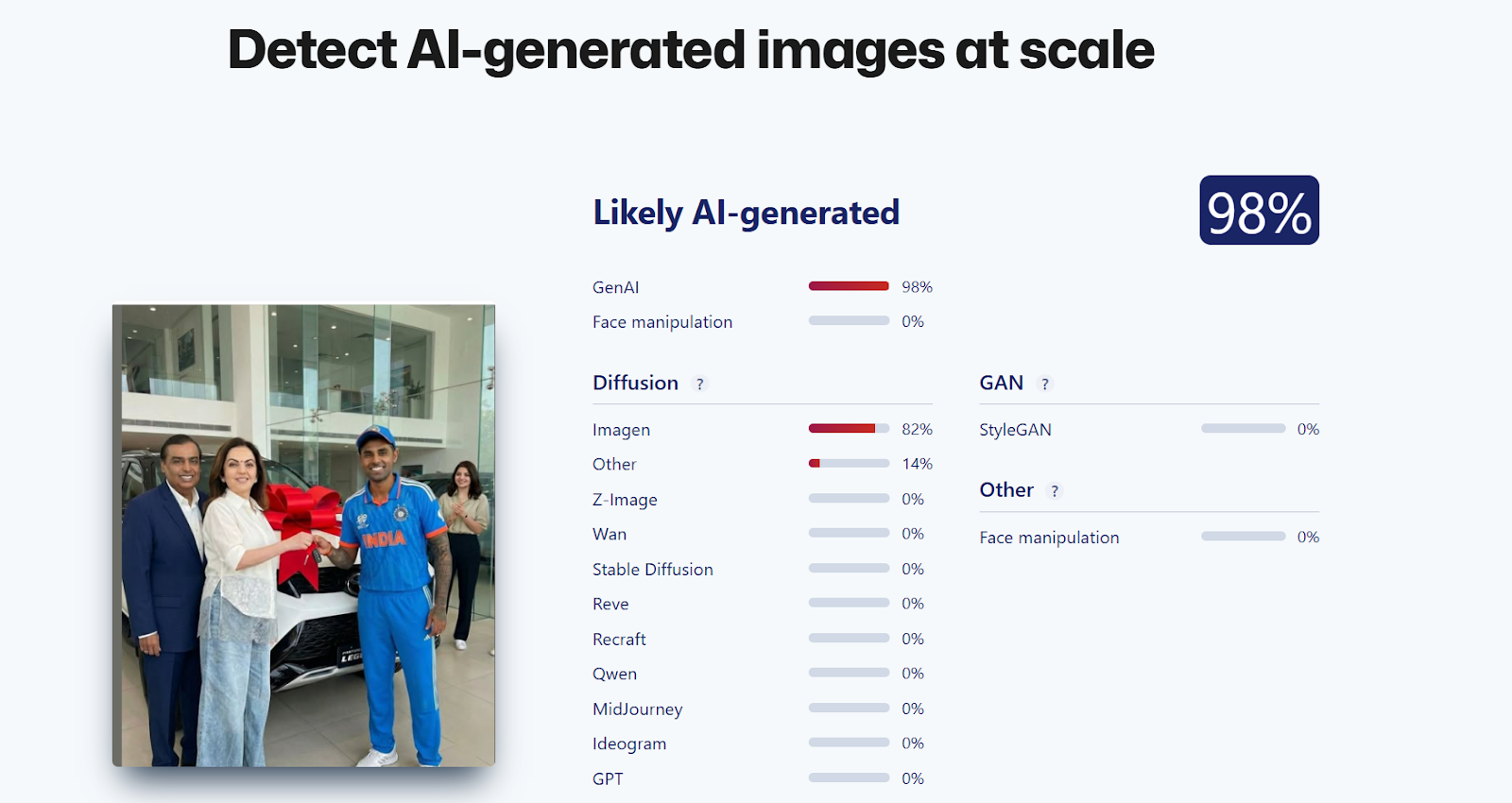

In the next step of the research, the image was also analysed using another AI detection tool, Sightengine, which found a 98 percent likelihood that the image was created using artificial intelligence.
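The cross-checking logic above, where two independent detectors are consulted before a conclusion is drawn, can be sketched in a few lines of Python. This is a minimal illustration and not the tooling used in the research: the `DetectorResult` type, the `assess` function, and the 0.9 threshold are all hypothetical, and the scores are entered by hand from the figures reported above rather than fetched from either service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectorResult:
    """One detector's reported score (hypothetical container type)."""
    tool: str
    ai_probability: float  # between 0.0 and 1.0


def assess(results, threshold=0.9):
    """Combine several detector scores into a cautious verdict.

    The threshold is an assumed value chosen for illustration only.
    """
    if not results:
        raise ValueError("at least one detector result is required")
    flagged = [r.tool for r in results if r.ai_probability >= threshold]
    if len(flagged) == len(results):
        return "likely AI-generated"  # every tool agrees
    if flagged:
        return "inconclusive"  # tools disagree, so more checks are needed
    return "no strong AI signal"


# Scores as reported in this fact check, typed in manually.
results = [
    DetectorResult("Hive Moderation", 0.99),
    DetectorResult("Sightengine", 0.98),
]
verdict = assess(results)
print(verdict)
```

Requiring agreement between tools, rather than trusting a single score, reflects the two-step approach described above; detector scores are probabilistic and are best read alongside the visual inconsistencies noted in the image.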

Conclusion

The research clearly establishes that the viral image claiming Mukesh Ambani and Nita Ambani gifted a luxury car to Suryakumar Yadav is misleading. The picture is not real and has been generated using AI.

Related Blogs

Introduction

There is a growing demand for artificial intelligence (AI) laws that limit threats to public safety and protect human rights while leaving room for flexibility and innovation. Most AI policies prioritize the use of AI for the public good. The most compelling reason to treat AI innovation as a valid goal of public policy is its promise to enhance people's lives by helping to resolve some of the world's most difficult problems and inefficiencies, and to emerge as a transformational technology similar to mobile computing. This blog explores the complex interplay between AI and internet governance from an Indian standpoint, examining the challenges, the opportunities, and the necessity of a well-balanced approach.

Understanding Internet Governance

Before delving into an examination of their connection, let's establish a comprehensive grasp of Internet Governance. This entails the regulations, guidelines, and criteria that influence the global operation and management of the Internet. With the internet being a shared resource, governance becomes crucial to ensure its accessibility, security, and equitable distribution of benefits.

The Indian Digital Revolution

India has witnessed an unprecedented digital revolution, with a massive surge in internet users and a burgeoning tech ecosystem. The government's Digital India initiative has played a crucial role in fostering a technology-driven environment, making technology accessible to even the remotest corners of the country. As AI applications become increasingly integrated into various sectors, the need for a comprehensive framework to govern these technologies becomes apparent.

AI and Internet Governance Nexus

The intersection of AI and Internet governance raises several critical questions. How should data, the lifeblood of AI, be governed? What role does privacy play in the era of AI-driven applications? How can India strike a balance between fostering innovation and safeguarding against potential risks associated with AI?

- AI's Role in Internet Governance:

Artificial Intelligence has emerged as a powerful force shaping the dynamics of the internet. From content moderation and cybersecurity to data analysis and personalized user experiences, AI plays a pivotal role in enhancing the efficiency and effectiveness of Internet governance mechanisms. Automated systems powered by AI algorithms are deployed to detect and respond to emerging threats, ensuring a safer online environment.

A comprehensive strategy for managing the interaction between AI and the internet is required to stimulate innovation while limiting hazards. Multistakeholder models including input from governments, industry, academia, and civil society are gaining appeal as viable tools for developing comprehensive and extensive governance frameworks.

The usefulness of multistakeholder governance stems from its adaptability and flexibility in requiring collaboration from players with a possible stake in an issue. Though imperfect, this approach allows its shortcomings to be remedied as participants build shared knowledge. As AI advances, this trait will become increasingly important in ensuring that all conceivable aspects are covered.

The Need for Adaptive Regulations

While AI's potential for good is essentially endless, so is its potential for damage - whether intentional or unintentional. The technology's highly disruptive nature demands a strong, human-led governance framework and rules that ensure it is used in a positive and responsible manner. The fast emergence of GenAI, in particular, emphasizes the critical need for strong frameworks. Concerns about the usage of GenAI may strengthen efforts to solve issues around digital governance and hasten the formation of risk management measures.

Several AI governance frameworks have been published around the world in recent years, with the goal of offering high-level guidelines for safe and trustworthy AI development. Multinational organizations have released their own principles, among them the OECD's "Principles on Artificial Intelligence" (OECD, 2019), the EU's "Ethics Guidelines for Trustworthy AI" (EU, 2019), and UNESCO's "Recommendation on the Ethics of Artificial Intelligence" (UNESCO, 2021). The advancement of GenAI has since prompted additional recommendations, such as the OECD's "G7 Hiroshima Process on Generative Artificial Intelligence" (OECD, 2023).

Several guidance documents and voluntary frameworks have emerged at the national level in recent years, including the "AI Risk Management Framework" from the United States National Institute of Standards and Technology (NIST), voluntary guidance published in January 2023, and the White House's "Blueprint for an AI Bill of Rights," a set of high-level principles published in October 2022 (The White House, 2022). These voluntary policies and frameworks are frequently used as guidelines by regulators and policymakers around the world. More than 60 nations in the Americas, Africa, Asia, and Europe had issued national AI strategies as of 2023 (Stanford University).

Conclusion

Monitoring AI will be one of the most daunting tasks confronting the international community in the next centuries. As vital as the need to govern AI is the need to regulate it appropriately. Current AI policy debates too often fall into a false dichotomy of progress versus doom (or geopolitical and economic benefits versus risk mitigation). Instead of thinking creatively, solutions all too often resemble paradigms for yesterday's problems. It is imperative that we foster a relationship that prioritizes innovation, ethical considerations, and inclusivity. Striking the right balance will empower us to harness the full potential of AI within the boundaries of responsible and transparent Internet Governance, ensuring a digital future that is secure, equitable, and beneficial for all.

References

- The Key Policy Frameworks Governing AI in India - Access Partnership

- AI in e-governance: A potential opportunity for India (indiaai.gov.in)

- India and the Artificial Intelligence Revolution - Carnegie India - Carnegie Endowment for International Peace

- Rise of AI in the Indian Economy (indiaai.gov.in)

- The OECD Artificial Intelligence Policy Observatory - OECD.AI

- Artificial Intelligence | UNESCO

- Artificial intelligence | NIST

Introduction

Since the inception of the Internet and social media platforms like Facebook, X (Twitter), and Instagram, governments and other stakeholders, both in India and in foreign jurisdictions, have looked to intermediaries to assume responsibility for the content circulated on these platforms, and various legal provisions reflect that responsibility. For the first time in many years, these intermediaries have come together to moderate content by setting a standard for those who create and propagate it. The influencer marketing industry in India is at a crucial juncture, with its market value projected to exceed Rs. 3,375 crore by 2026. But every industry has its complications. Here, a section of content creators fails to maintain standards of integrity and propagates content that raises concerns about authenticity and transparency, often violating intellectual property rights (IPR) and privacy.

As influencer marketing continues to shape digital consumption, the need for ethical and transparent content grows stronger. To address this, the India Influencer Governing Council (IIGC) has released its Code of Standards, aiming to bring accountability and structure to the fast-evolving online space.

Bringing Accountability to the Digital Fame Game

The India Influencer Governing Council (IIGC), established on 15th February, 2025, aims to empower creators, advocate for fair policies, and promote responsible content creation. Its Code of Standards arrives not a moment too soon: it is a necessary safeguard before social media devolves into a chaotic marketplace where anything and everything is up for grabs. Without effective regulation, digital platforms become a marketplace for misinformation and exploitation.

The IIGC leads the movement with clarity: the Code spans 20 sections governing key areas such as paid partnership disclosures, AI-generated personas, content safety, and financial compliance.

Highlights from the Code of Standards

- The Code exhibits a technical understanding of the content creation and influencer marketing industry. The preliminary sections advocate for accuracy, transparency, and maintaining credibility with the audience that engages with the content. The most fundamental development is the “Paid Partnership Disclosure” in Section 2 of the Code, which mandates disclosure of any material connection with a brand, such as a financial agreement or collaboration.

- Another timely development is the disclosure requirement for “AI Influencers”: the nature of the influencer has to be disclosed, and such influencers, whether fully virtual or partially AI-enhanced, must maintain the same standards as any human influencer.

- The code ranges across various other aspects of influencer marketing, such as expressing unpaid “Admiration” for the brand and public criticism of the brand, being free from personal bias, honouring financial agreements, non-discrimination, and various other standards that set the stage for a safe and fair digital sphere.

- The Code also necessitates that the platform users and the influencers handle sexual and sensitive content with sincere deliberation, and usage of such content shall be for educational and health-related contexts and must not be used against community standards. The Code includes various other standards that work towards making digital platforms safer for younger generations and impressionable minds.

A Code Without Claws? Challenges in Enforcement

The biggest obstacle to the effective implementation of the code is distinguishing between an honest promotion and a paid brand collaboration without any explicit mention of such an agreement. This makes influencer marketing susceptible to manipulation, and the manipulation cannot be tackled with a straitjacket formula, as it might be found in the form of exaggerated claims or omission of critical information.

Another hurdle is that compliance with advertising standards is voluntary. Influencer marketing operates in a borderless cyberspace, where influencers often disregard these standards to maximise their earnings.

The debate between self-regulation and government oversight is ongoing. Experience shows that overreliance on self-regulation has proven inadequate, and clear regulatory oversight is imperative given that social media platforms operate as a transnational commercial marketplace.

CyberPeace Recommendations

- Introduction of a licensing framework for influencers that fall into the “highly followed” category with high engagement, who are more likely to shape the audience’s views.

- Usage of technology to align ethical standards with influencer marketing practices, ensuring that misleading advertisements do not find a platform to deceive innocent individuals.

- Educating the audience or consumers on the internet about the ramifications of negligence and their rights in the digital marketplace. Ensuring a well-established grievance redressal mechanism via digital regulatory bodies.

- Continuous and consistent collaboration and cooperation between influencers, brands, regulators, and consumers to establish an understanding and foster transparency and a unified objective to curb deceptive advertising practices.

References

- https://iigc.org/code-of-standards/influencers/code-of-standards-v1-april.pdf

- https://legalonus.com/the-impact-of-influencer-marketing-on-consumer-rights-and-false-advertising/

- https://exhibit.social/news/india-influencer-governing-council-iigc-launched-to-shape-the-future-of-influencer-marketing/

Executive Summary

A video is being widely shared on social media claiming that members of the so-called ‘Cockroach Janta Party (CJP)’ caught a police officer in Chhattisgarh red-handed while accepting a bribe of ₹30,000. The viral posts also claim that the group has intensified its so-called campaign to “clean the rotten system.” However, research by the CyberPeace Research Wing found the claim to be misleading. The video has no connection with the ‘Cockroach Janta Party (CJP)’. The original footage dates back to February 2026 and shows Chhattisgarh Police sub-inspector Abdul Munaf being caught by the Anti-Corruption Bureau (ACB) in an alleged ₹25,000 bribery case.

Claim

A Facebook user shared the viral video on May 23, 2026, claiming that members of the “Cockroach Janta Party” caught a police officer red-handed taking a ₹30,000 bribe. The post further stated that the group had intensified its campaign against corruption. The link and archived post are provided.

Fact Check

To verify the claim, we extracted key frames from the viral video and conducted a reverse image search. During the research, we found a report published on the Navbharat Times website dated February 26, 2026, which contained visuals matching the viral video.

According to the report, the Anti-Corruption Bureau (ACB) in Korea district, Chhattisgarh, arrested a police station in-charge while he was accepting a bribe of ₹25,000. After being caught, the officer attempted to assert authority using his uniform and resisted the search procedure, but soon gave up. Further verification using keywords from the Navbharat Times report led to a similar story published by Dainik Bhaskar, which also contained the same visuals.
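The frame-extraction step used in this verification can be sketched as choosing evenly spaced timestamps at which still frames are pulled from a video before each still is submitted to a reverse image search. The function below only computes those timestamps. It is an illustrative assumption, not the research team's actual method: the name `sample_timestamps` and the two-second interval are hypothetical, and decoding the frames themselves would need a separate video library.

```python
import math


def sample_timestamps(duration_s: float, interval_s: float = 2.0) -> list:
    """Return evenly spaced timestamps (in seconds) that fall inside the clip.

    The final timestamp is always strictly less than the duration, so a
    frame exists at every returned position.
    """
    if duration_s <= 0 or interval_s <= 0:
        raise ValueError("duration and interval must be positive")
    count = math.ceil(duration_s / interval_s)
    return [round(i * interval_s, 3) for i in range(count)]


# A hypothetical 10-second clip sampled every 2 seconds.
timestamps = sample_timestamps(10.0, 2.0)
print(timestamps)
```

A shorter interval yields more stills and a better chance of matching the exact frame a news outlet published, at the cost of more searches to run.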

Additionally, context on the satirical “Cockroach Janta Party (CJP)” trend was found in a Times Now Hindi report, which explains that the term emerged as online satire following a controversial remark attributed to the Chief Justice of India regarding unemployed youth. The statement was later clarified.

Conclusion

The research confirms that the viral video has no connection with the ‘Cockroach Janta Party (CJP)’. The original footage is from a February 2026 incident in which Chhattisgarh Police sub-inspector Abdul Munaf was arrested by the ACB in a bribery case involving ₹25,000. The video has been falsely linked to a misleading narrative on social media.