#FactCheck: Old images of US sailors falsely linked to ongoing Iran tensions

Executive Summary

After Donald Trump said that US Navy ships would soon begin escorting tankers through the Strait of Hormuz, several old images resurfaced on social media with claims that they show American sailors recently captured by Iran amid the ongoing Middle East tensions. Research by CyberPeace found that the viral posts are misleading. The images being circulated are nearly a decade old and have no connection to the ongoing situation in the Middle East.

Claim:

Posts circulating on Facebook alleged that Iran had captured 10 US Navy personnel — nine men and one woman — and detained them at a military base on Farsi Island. The caption further claimed that the incident was reported by Iranian official Ali Larijani and denied by Donald Trump.

https://www.facebook.com/photo/?fbid=1381610870661566&set=pcb.1381611363994850

Fact Check

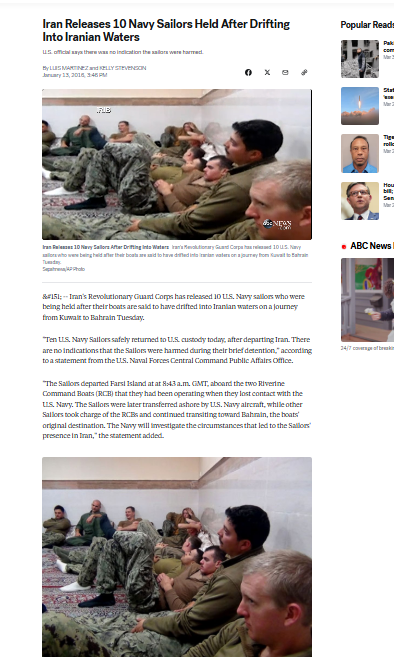

A reverse image search revealed that the viral images are not recent. They were published as early as January 13, 2016, by ABC News in a report titled “Iran Releases 10 Navy Sailors Held After Drifting Into Iranian Waters.”

Further checks showed that the same images were distributed by AFP, with credits to Sepah News, the media wing of Iran’s Revolutionary Guards.

Context

The images relate to a 2016 incident in which two US Navy patrol boats accidentally entered Iranian waters. The crew was detained and taken to Farsi Island. Iran later released the sailors after determining that the intrusion was unintentional and that there was no hostile intent.

Conclusion

The viral posts are misleading. The images being shared are nearly a decade old and unrelated to the ongoing situation in the Middle East.

Related Blogs


Introduction

According to Statista, the global artificial intelligence software market is forecast to grow by around 126 billion US dollars by 2025. This growth accompanies a 270% increase in enterprise adoption over the past four years. The top three verticals in the AI market are BFSI (Banking, Financial Services, and Insurance), Healthcare & Life Sciences, and Retail & e-commerce. These sectors benefit from vast data generation and the critical need for advanced analytics. AI is used for fraud detection, customer service, and risk management in BFSI; for diagnostics and personalised treatment plans in healthcare; and for marketing and inventory management in retail.

The Chairperson of the Competition Commission of India (CCI), Smt. Ravneet Kaur, raised a concern that Artificial Intelligence has the potential to aid cartelisation by automating collusive behaviour through predictive algorithms. She explained that the mere use of algorithms is not in itself anti-competitive, but that the manipulation of algorithms is a valid concern for competition in markets.

This blog focuses on how policymakers can balance fostering innovation and ensuring fair competition in an AI-driven economy.

What is the Risk Created by AI-driven Collusion?

Because AI relies on predictive algorithms, it could aid cartelisation by automating collusive behaviour. AI-driven collusion could occur through:

- The use of predictive analytics to coordinate pricing strategies among competitors.

- A lack of human oversight in algorithm-driven decision-making, which can lead to tacit collusion (competitors coordinate their actions without explicitly communicating or agreeing to do so).

AI has been raising antitrust concerns. The most recent example is the partnership between Microsoft and OpenAI, which has raised concerns among several national competition authorities regarding potential competition law issues. While the partnership is expected to accelerate innovation, it also raises concerns about anticompetitive effects such as market foreclosure or the creation of barriers to entry for competitors, and it has therefore been under consideration in Germany and the UK. The underlying problem is detecting and proving whether collusion is taking place.

The Role of Policy and Regulation

The uncertainty about how AI affects competition creates a need for algorithmic transparency and accountability to mitigate the risks of AI-driven collusion. This calls for regulatory frameworks that mandate the disclosure of algorithmic methodologies and establish clear guidelines for the development and deployment of AI. These frameworks or guidelines should encourage collaboration between competition watchdogs and AI experts.

The global best practices and emerging trends in AI regulation already include respect for human rights, sustainability, transparency and strong risk management. The EU AI Act could serve as a model for other jurisdictions, as it outlines measures to ensure accountability and mitigate risks. The key goal is to tailor AI regulations to address perceived risks while incorporating core values such as privacy, non-discrimination, transparency, and security.

Promoting Innovation Without Stifling Competition

Policymakers need to balance regulatory measures with room for innovation, so that neither priority hinders the other. They can do so in the following ways:

- Create adaptive and forward-thinking regulatory approaches that keep pace with technological advancements and allow for quick adjustments in response to new AI capabilities and market behaviours.

- Competition watchdogs need to recruit domain experts to assess competition amid rapid changes in the technology landscape. Create a multi-stakeholder approach that involves regulators, industry leaders, technologists and academia who can create inclusive and ethical AI policies.

- Businesses can be provided incentives such as recognition through certifications, grants or benefits in acknowledgement of adopting ethical AI practices.

- Launch studies, such as the CCI’s market study, to examine the impact of AI on competition. Such studies can help make technological advancement a driving force for sustainable growth.

Conclusion: AI and the Future of Competition

We must promote a multi-stakeholder approach that enhances regulatory oversight and incentivises ethical AI practices. This is needed to strike a delicate balance that safeguards competition and drives sustainable growth. As AI continues to redefine industries, embracing collaborative, inclusive, and forward-thinking policies will be critical to building an equitable and innovative digital future.

The lawmakers and policymakers engaged in drafting these frameworks need to ensure that the frameworks are adaptive to change and foster innovation. Fair competition and innovation are not mutually exclusive goals; they are complementary. Therefore, a regulatory framework that promotes transparency, accountability, and fairness in AI deployment must be established.

References

- https://www.thehindu.com/sci-tech/technology/ai-has-potential-to-aid-cartelisation-fair-competition-integral-for-sustainable-growth-cci-chief/article69041922.ece

- https://www.marketsandmarkets.com/Market-Reports/artificial-intelligence-market-74851580.html

- https://www.ey.com/en_in/insights/ai/how-to-navigate-global-trends-in-artificial-intelligence-regulation#:~:text=Six%20regulatory%20trends%20in%20Artificial%20Intelligence&text=These%20include%20respect%20for%20human,based%20approach%20to%20AI%20regulation.

- https://www.business-standard.com/industry/news/ai-has-potential-to-aid-fair-competition-for-sustainable-growth-cci-chief-124122900221_1.html

In the intricate mazes of the digital world, where the line between reality and illusion blurs, the quest for truth becomes a Sisyphean task. The recent firestorm of rumours surrounding global pop icon Dua Lipa's visit to Rajasthan, India, is a poignant example of this modern dilemma. A single image, plucked from the continuum of time and stripped of context, became the fulcrum upon which a narrative of sexual harassment was precariously balanced. This incident, a mere droplet in the ocean of digital discourse, encapsulates the broader phenomenon of misinformation, a spectre that haunts the virtual halls of our interconnected existence.

Misinformation Incident

Amidst the ceaseless hum of social media, a claim surfaced with the tenacity of a weed in fertile soil: Dua Lipa, the three-time Grammy Award winner, had allegedly been subjected to sexual harassment during her sojourn in the historic city of Jodhpur. The evidence? A viral picture, its origins murky, accompanied by a caption that seemed to confirm the worst fears of her ardent followers. The digital populace quickly reacted, with many sharing the image, asserting the claim's veracity without pause for verification.

Unraveling the Fabric of Fake News: Fact-Checking Dua Lipa's India Experience

The narrative gained momentum through platforms of dubious credibility, such as a Twitter handle that, upon closer scrutiny by the Digital Forensics Research and Analytics Center, was revealed to be a purveyor of fake news. The very fabric of the claim began to unravel as the original photo was traced back to the official Facebook page of RVCJ Media, untainted by the allegations that had been so hastily ascribed to it. Moreover, the silence of Dua Lipa on the matter, rather than serving as a testament to the truth, inadvertently fueled the fires of speculation, a stark reminder of the paradox in which the absence of denial is often misconstrued as an affirmation.

The pop star's words, shared on her Instagram account, painted a starkly different picture of her experience in India. She spoke not of fear and harassment, but of gratitude and joy, describing her trip as 'deeply meaningful' and expressing her luck to be 'within the magic' with her family. The juxtaposition of her heartfelt account with the sinister narrative constructed around her serves as a cautionary tale of the power of misinformation to distort and defile.

A Political Microcosm: Bye-Elections in Telangana

Another incident involves electoral misinformation. The political landscape of Telangana, India, bristled with anticipation as the Election Commission announced bye-elections for two Member of Legislative Council (MLC) seats. Here, too, the machinery of misinformation whirred into action, with political narratives being shaped and reshaped through partisan prisms. The electoral process, transparent in its intent, became susceptible to selective amplification, with certain facets magnified or distorted to fit entrenched political narratives. The bye-elections thus became a battleground not just for political supremacy but also for the integrity of information.

The Far-Reaching Claws of Misinformation: Fact Check

The misinformation about Dua Lipa's experience during her visit to India and the political microcosm of misinformation in Telangana are both manifestations of a global challenge. Misinformation adapts to the contours of its environment, whether it be the gritty arena of politics or the glitzy realm of stardom. Its tentacles reach far and wide, with geopolitical implications that can destabilise regions, sow discord, and undermine the very pillars of democracy. The erosion of trust that misinformation engenders is perhaps its most insidious effect, as it chips away at the bedrock of societal cohesion and collective well-being.

Paradox of Technology

The same technological developments that have allowed the spread of misinformation also hold the keys to its containment. Artificial intelligence-powered fact-checking tools, blockchain-enabled transparency counter-measures, and comprehensive digital literacy campaigns stand as bulwarks against falsehoods. These tools, however, are not panaceas; they require the active engagement and critical thinking skills of each digital citizen to be truly effective.

Conclusion

As we stand at the cusp of the digital age, the way forward demands vigilance, collaboration, and innovation. Cultivating a digitally literate citizenry, capable of discerning the nuances of digital content, is paramount. Governments, the tech industry, media companies, and civil society must join forces in a common front, leveraging their collective expertise in the battle against misinformation. Promoting algorithmic accountability and fostering diverse information ecosystems will also be crucial in mitigating the inadvertent amplification of falsehoods.

In the end, discerning truth in the digital age is a delicate process. It requires us to be attuned to the rhythm of reality, and wary of the seductive allure of unverified claims. As we navigate this digital realm, remember that the truth is not just a destination but a journey that demands our unwavering commitment to the pursuit of what is real and what is right.

References

- https://telanganatoday.com/eci-releases-schedule-for-bye-elections-to-two-mlc-seats-in-telangana

- https://www.oneindia.com/fact-check/was-pop-singer-dua-lipa-sexually-harassed-in-rajasthan-during-her-india-trip-heres-the-truth-3718833.html?story=3

- https://www.thequint.com/news/webqoof/edited-graphic-of-dua-lipa-being-sexually-harassed-in-jodhpur-falsely-shared-fact-check

Executive Summary:

A video of Pakistani Olympic gold medalist and javelin thrower Arshad Nadeem wishing the people of Pakistan a happy Independence Day is going viral, with claims that a snoring sound can be heard in the background. The CyberPeace Research Team found that the viral video was digitally edited to add the snoring sound. The original video published on Arshad's Instagram account has no snoring sound, so we are certain that the viral claim is false and misleading.

Claims:

A video shows Pakistani Olympic gold medalist Arshad Nadeem giving his Independence Day wishes with snoring audio in the background.

Fact Check:

Upon receiving the posts, we thoroughly checked the video. We then analyzed it in TrueMedia, an AI video detection tool, and found little evidence of manipulation in either the voice or the face.

We then checked Arshad Nadeem's social media accounts and found the video uploaded to his Instagram account on 14th August 2024. In that video, we couldn’t hear any snoring sound.

Hence, we are certain that the claims in the viral video are fake and misleading.

Conclusion:

The claim that a snoring sound can be heard in the background of Arshad Nadeem's video is false. The CyberPeace Research Team confirms the sound was digitally added, as the original video on his Instagram account has no snoring sound, making the viral claim misleading.

- Claim: A snoring sound can be heard in the background of Arshad Nadeem's video wishing Independence Day to the people of Pakistan.

- Claimed on: X,

- Fact Check: Fake & Misleading