#FactCheck - AI-Generated Image Falsely Linked to Kotdwar Shop Controversy

Executive Summary

A dispute recently emerged in Kotdwar, Uttarakhand, over the name of a shop. During the controversy, a local youth, Deepak Kumar, came forward in support of the shopkeeper. The incident subsequently became a subject of discussion on social media, with users expressing varied reactions. Meanwhile, a photo began circulating on social media showing a burqa-clad woman presenting a bouquet to Deepak Kumar. The image is being shared with the claim that All India Majlis-e-Ittehadul Muslimeen (AIMIM)’s women’s president, Rubina, welcomed “Mohammad Deepak Kumar” by presenting him with a bouquet. However, research conducted by CyberPeace found the viral claim to be false. The research revealed that users are sharing an AI-generated image with a misleading claim.

Claim:

On the social media platform Instagram, a user shared the viral image claiming that AIMIM’s women’s president Rubina welcomed “Mohammad Deepak Kumar” by presenting him with a bouquet. The link to the post, its archived version, and a screenshot are provided below.

Fact Check:

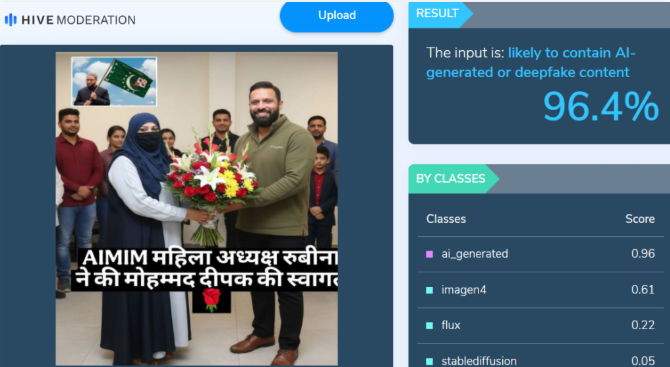

Upon closely examining the viral image, certain inconsistencies raised suspicion that it could be AI-generated. To verify its authenticity, the image was analysed using the AI detection tool Hive Moderation, which indicated a 96 percent probability that the image was AI-generated.

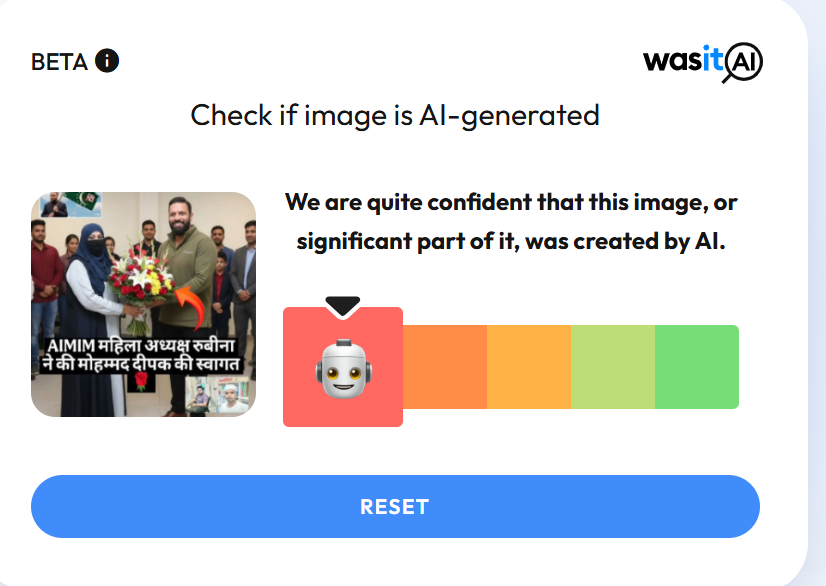

In the next stage of the research, the image was also analysed using another AI detection tool, Wasit AI, which likewise identified the image as AI-generated.

Conclusion

The research establishes that users are circulating an AI-generated image with a misleading claim linking it to the Kotdwar controversy.

Related Blogs

Executive Summary:

An image showing AIMIM President Asaduddin Owaisi holding a portrait of the Hindu deity Lord Rama recently went viral on several social media platforms. After conducting a reverse image search, the CyberPeace Research Team found that the picture is fake. A Facebook post made by Asaduddin Owaisi in 2018 shows him holding a picture of Ambedkar. The morphed photo, which shows him holding a picture of Lord Rama alongside a distorted message, carries a very different political connotation, particularly because Asaduddin Owaisi is a candidate from Hyderabad in the 2024 Lok Sabha elections. It is therefore important to verify that information is authentic before sharing it, in order to curb the spread of fake news.

Claims:

The viral image shows AIMIM leader Asaduddin Owaisi standing with a painting of the Hindu god Rama, with a caption suggesting his interest in the Hindu religion.

Fact Check:

In order to investigate the posts, we ran a reverse search of the image. We identified a photo that was shared on the official Facebook wall of the AIMIM President Asaduddin Owaisi on 7th April 2018.

Comparing the two photos, we found that in the original, Asaduddin Owaisi is holding a painting of B.R. Ambedkar, whereas the viral image shows him holding a painting of Lord Rama. The original photo was posted in 2018.

Hence, it was concluded that the viral image was digitally modified to spread false propaganda.

Conclusion:

The photograph of AIMIM President Asaduddin Owaisi holding up a painting of Lord Rama is fake, as it has been morphed. The photo that Asaduddin Owaisi uploaded to Facebook on 7 April 2018 shows him holding a picture of Bhimrao Ramji Ambedkar. This photograph was digitally altered and false captions were added to convey an altogether different message about Asaduddin Owaisi. The incident highlights the need to counter fake news that spreads widely on social media platforms, especially in the political sphere.

- Claim: AIMIM President Asaduddin Owaisi was holding a painting of the Hindu god Lord Rama in his hand.

- Claimed on: X (Formerly known as Twitter)

- Fact Check: Fake & Misleading

In December 2019, the United Nations passed a resolution that established an open-ended ad hoc committee. This committee was tasked with developing a ‘comprehensive international convention on countering the use of ICTs for criminal purposes’. The UN Convention on Cybercrime is an initiative of the UN member states to foster the principles of international cooperation and establish legal frameworks that provide mechanisms for combating cybercrime. Negotiations for the convention started in early 2022. Upon its adoption by the UN General Assembly, it became the first binding international criminal justice treaty to have been negotiated in over 20 years.

This convention addresses the limitations of the Budapest Convention on Cybercrime by encompassing a broader range of issues and perspectives from the member states. The UN Convention against Cybercrime will open for signature at a formal ceremony hosted in Hanoi, Viet Nam, in 2025. The convention will enter into force 90 days after being ratified by the 40th signatory.
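The entry-into-force rule is simple date arithmetic, which a short sketch can make concrete. The function name `entry_into_force` and the example date are hypothetical, and the sketch assumes the 90 days are counted as calendar days from the date of the 40th ratification.

```python
from datetime import date, timedelta

# Assumption: the 90-day period is counted in calendar days from the date
# on which the 40th ratification takes place.
ENTRY_INTO_FORCE_DELAY = timedelta(days=90)


def entry_into_force(fortieth_ratification: date) -> date:
    """Return the date falling 90 calendar days after the 40th ratification."""
    return fortieth_ratification + ENTRY_INTO_FORCE_DELAY


if __name__ == "__main__":
    # Hypothetical example date, not an actual or predicted ratification date.
    print(entry_into_force(date(2026, 1, 1)))
```

The calculation depends only on the date of the 40th ratification, so earlier ratifications do not affect the result.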

Objectives and Features of the Convention

- The UN Convention against Cybercrime addresses various aspects of cybercrime. These include prevention, investigation, prosecution and international cooperation.

- The convention aims to establish common standards for criminalising cyber offences. These include offences like hacking, identity theft, online fraud, distribution of illegal content, etc. It outlines procedural and technical measures for law enforcement agencies for effective investigation and prosecution while ensuring due process and privacy protection.

- Emphasising the importance of cross-border collaboration among member states, the convention provides mechanisms for mutual legal assistance, extradition and sharing of information and expertise. The convention aims to enhance the capacity of developing countries to combat cybercrime through technical assistance, training, and resources.

- It seeks to balance security measures with the protection of fundamental rights. The convention highlights the importance of safeguarding human rights and privacy in cybercrime investigations and enforcement.

- The Convention emphasises the importance of prevention through awareness campaigns, education, and the promotion of a culture of cybersecurity. It encourages collaboration through public-private partnerships to enhance cybersecurity measures and raise awareness, including on protecting vulnerable groups such as children from cyber threats and exploitation.

Key Provisions of the UN Cybercrime Convention

Some key provisions of the Convention are as follows:

- The convention differentiates cyber-dependent crimes like hacking from cyber-enabled crimes like online fraud. It defines digital evidence and establishes standards for its collection, preservation, and admissibility in legal proceedings.

- It defines offences against confidentiality, integrity, and availability of computer data and includes unauthorised access, interference with data, and system sabotage. Further, content-related offences include provisions against distributing illegal content, such as CSAM and hate speech. It criminalises offences like identity theft, online fraud and intellectual property violations.

- Law enforcement agencies (LEAs) are provided with tools for electronic surveillance, data interception, and access to stored data, subject to judicial oversight. The convention outlines the mechanisms for cross-border investigations, extradition, and mutual legal assistance.

- It provides for the establishment of a central body to coordinate international efforts, share intelligence, and provide technical assistance, with the involvement of experts from various fields who advise on emerging threats, legal developments, and best practices.

Comparisons with the Budapest Convention

The Budapest Convention was adopted by the Committee of Ministers of the Council of Europe at the 109th Session on 8 November 2001. This Convention was the first international treaty that addressed internet and computer crimes. A comparison between the two Conventions is as follows:

- Participation in the UNCC is open to all UN member states, whereas the Budapest Convention has primarily European signatories along with some non-European ones.

- The scope of the UNCC is broader and covers a wide range of cyber threats and cybercrimes, whereas the Budapest Convention is focused on specific offences like hacking and fraud.

- The UNCC focuses strongly on privacy and human rights protections, whereas the Budapest Convention had a limited focus on human rights.

- The UNCC has extensive provisions for assistance to developing countries, in contrast to the Budapest Convention, which did not focus much on capacity building.

Future Outlook

The development of the UNCC was a complex process. Member states held diverse views on key issues, and balancing different legal systems, cultural perspectives and policy priorities has been a challenge. The rapid evolution of technology requires the Convention to be adaptable so that it can effectively address emerging cyber threats. Striking a balance between security measures and the protection of fundamental rights remains a critical concern. The Convention aims to provide a blended approach to tackling cybercrime by addressing the needs of both developed and developing countries.

Conclusion

The resolution containing the UN Convention against Cybercrime is a step forward in global cooperation to combat cybercrime. It was adopted without a vote by the 193-member General Assembly and is expected to enter into force 90 days after ratification by the 40th signatory. Negotiations and consultations on the Convention have been finalised, and it is now open for adoption and ratification by member states. It seeks to provide a comprehensive legal framework that addresses the challenges posed by cyber threats while respecting human rights and promoting international collaboration.

References

- https://consultation.dpmc.govt.nz/un-cybercrime-convention/principlesandobjectives/supporting_documents/Background.pdf

- https://news.un.org/en/story/2024/12/1158521

- https://www.interpol.int/en/News-and-Events/News/2024/INTERPOL-welcomes-adoption-of-UN-convention-against-cybercrime#:~:text=The%20UN%20convention%20establishes%20a,and%20grooming%3B%20and%20money%20laundering

- https://www.cnbctv18.com/technology/united-nations-adopts-landmark-global-treaty-to-combat-cybercrime-19529854.htm

Introduction

In the dynamic intersection of pop culture and technology, an unexpected drama unfolded in the virtual world when searches for Taylor Swift were temporarily blocked on X. The incident sent a shockwave through the online community, sparking debates and speculation about the misuse of deepfake technology.

Searches for Taylor Swift on the social media platform X have been restored after a temporary block, which was imposed following outrage over explicit AI-generated images of her. The social media site, formerly known as Twitter, restricted searches for Taylor Swift as a temporary measure to address a flood of AI-generated deepfake images that went viral across X and other platforms.

X has stated that it is actively removing the images and taking appropriate action against the accounts responsible for spreading them. While Swift has not spoken publicly about the fake images, a report stated that her team is "considering legal action" against the site which published the AI-generated images.

The Social Media Frenzy

As news of the temporary block spread like wildfire across social media platforms, users engaged in a frenzy of reactions. The fake picture was re-shared 24,000 times, with tens of thousands of users liking the post. This engagement supercharged the deepfake image of Taylor Swift, and by the time the moderators reacted, it was too late. Hundreds of accounts began reposting it, which started an online trend. Taylor Swift's AI video reached an even larger audience. The source of the photograph was not even known to begin with. The revelations are causing outrage. American lawmakers from across party lines have spoken out. One of them said they were astounded, while another said they were shocked.

AI Deepfake Controversy

The deepfake controversy is not new. Several public figures, including Rashmika Mandanna, Sachin Tendulkar, and now Taylor Swift, have been victims of the misuse of deepfake technology. The world is increasingly concerned about the misuse of AI and deepfake technology. With no proactive measures in place, this threat will only worsen, affecting the privacy of individuals. This incident has opened a debate among users and industry experts on the ethical use of AI in the digital age and the privacy concerns it raises.

Why has the Incident raised privacy concerns?

The emergence of Taylor Swift's deepfake has raised privacy concerns for several reasons.

- Misuse of Personal Imagery: Deepfakes use AI algorithms to superimpose one person’s face onto another person’s body, and the algorithms are run repeatedly until the desired result is obtained. For celebrities and other high-profile people, it is very easy for crooks to obtain images and generate a deepfake. In Taylor Swift's case, her images were misused. Such misuse of images can have serious consequences for an individual's reputation and privacy.

- False Narrative and Manipulation: Deepfakes provoke public reaction and spread false narratives, harming a person's reputation and affecting their personal and professional life. Such false narratives can influence public opinion and are difficult for the affected person to control.

- Invasion of Privacy: Creating a deepfake involves gathering a significant amount of information about the target without their consent. The use of such personal information to create AI-generated content without permission raises serious privacy concerns.

- Difficulty in differentiation: Advanced Deepfake technology makes it difficult for people to differentiate between genuine and manipulated content.

- Potential for Exploitation: Deepfakes can be exploited by cyber crooks for financial gain or other malicious motives. Such videos harm reputations, damage brand names and partnerships, and can even undermine the integrity of the digital platform on which the content is posted. They also raise questions about the enforcement of the platform’s zero-tolerance policy on posting non-consensual nude images.

Is there any law that could safeguard Internet users?

Legislation concerning deepfakes differs by nation and ranges from demanding disclosure of deepfakes to forbidding harmful or destructive material. In the USA, 10 states, including California, Texas, and Illinois, have passed criminal legislation prohibiting deepfakes. Lawmakers are advocating for comparable federal statutes. A Democrat from New York has presented legislation requiring producers to digitally watermark deepfake content. At the federal level, the United States does not criminalise such deepfakes, but it does have state and federal laws addressing privacy, fraud, and harassment.

In 2019, China enacted legislation requiring the disclosure of deepfake usage in films and media. Sharing deepfake pornography was outlawed in the United Kingdom in 2023 as part of the Online Safety Act.

To avoid abuse, South Korea implemented legislation in 2020 criminalising the dissemination of deepfakes that endanger the public interest, carrying penalties of up to five years in jail or fines of up to 50 million won ($43,000).

In 2023, the Indian government issued an advisory to social media and internet companies to protect against deepfakes that violate India's information technology laws. India is on its way to introducing dedicated legislation to deal with this subject.

Looking at the present situation and considering the bigger picture, the world urgently needs strong legislation to combat the misuse of deepfake technology.

Lesson learned

The recent blockage of Taylor Swift's searches on Elon Musk's X has sparked debates on responsible technology use, privacy protection, and the symbiotic relationship between celebrities and the digital era. The incident highlights the importance of constant attention, ethical concerns, and the potential dangers of AI in the digital landscape. Despite challenges, the digital world offers opportunities for growth and learning.

Conclusion

Such deepfake incidents highlight privacy concerns and necessitate a combination of technological solutions, legal frameworks, and public awareness to safeguard privacy and dignity in the digital world as technology becomes more complex.

References:

- https://www.hindustantimes.com/world-news/us-news/taylor-swift-searches-restored-on-elon-musks-x-after-brief-blockage-over-ai-deepfakes-101706630104607.html

- https://readwrite.com/x-blocks-taylor-swift-searches-as-explicit-deepfakes-of-singer-go-viral/