#FactCheck : Iraq Religious Gathering Video Misused as Khamenei Funeral Footage

Executive Summary

A video showing a massive gathering of people dressed in black is circulating widely on social media. The clip is being shared with the claim that it shows crowds mourning at the funeral of Iran’s Supreme Leader Ayatollah Ali Khamenei following his alleged killing in February 2026. However, research by CyberPeace found that the claim is misleading and that the video is unrelated to Iran.

Claim:

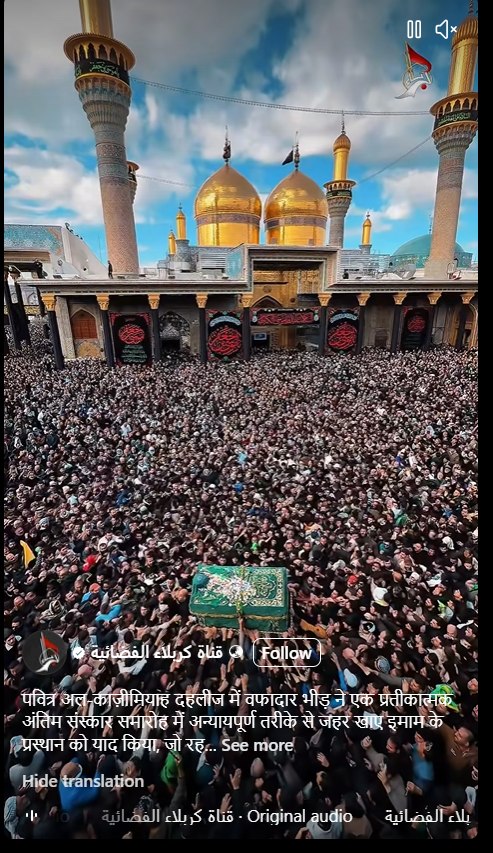

The viral video shows a large crowd gathered in a public square, with a mosque featuring a golden dome visible in the background. Social media posts claim that the footage captures mourners attending Ayatollah Khamenei’s funeral after his reported death in a joint US-Israel operation.

Fact Check:

To verify the claim, we extracted keyframes from the video and conducted a reverse image search. This led us to a similar clip uploaded on January 15 by an Iraqi broadcaster, Karbala TV, on Facebook. In the footage, a large crowd can be seen carrying a symbolic coffin near a shrine with a golden dome—matching the visuals seen in the viral video. According to the Arabic caption, the video shows a “symbolic funeral” procession held at the Kazimayn Shrine in Baghdad, Iraq. The event is part of an annual religious observance commemorating Imam Musa al-Kazim, the seventh Imam in Shia Islam, who is believed to have died after being poisoned in the 8th century.

Every year, large numbers of Shia devotees gather at the shrine in Baghdad to pay their respects during this commemoration. The visuals seen in the viral clip are consistent with this annual gathering.
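The frame-matching idea behind this kind of verification can be illustrated with a small sketch. It is not the tooling used in this fact check, and reverse image search engines use far more sophisticated methods. It assumes each keyframe has already been downscaled to a tiny grayscale grid and compares frames with a simple "average hash". The frame values and the names `average_hash` and `hamming_distance` are hypothetical.

```python
from typing import List

Grid = List[List[int]]  # small grayscale thumbnail, values 0-255


def average_hash(pixels: Grid) -> List[int]:
    """Return one bit per pixel: 1 if the pixel is brighter than the grid mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    return [1 if value > mean else 0 for value in flat]


def hamming_distance(a: List[int], b: List[int]) -> int:
    """Count the positions where two equal-length hashes differ."""
    if len(a) != len(b):
        raise ValueError("hashes must have the same length")
    return sum(x != y for x, y in zip(a, b))


# Hypothetical 2x2 thumbnails standing in for downscaled video keyframes.
viral_frame = [[10, 200], [220, 30]]
source_frame = [[12, 190], [210, 40]]      # same scene, slightly re-encoded
unrelated_frame = [[200, 10], [30, 220]]   # a different scene

print(hamming_distance(average_hash(viral_frame), average_hash(source_frame)))     # 0
print(hamming_distance(average_hash(viral_frame), average_hash(unrelated_frame)))  # 4
```

A small distance suggests two frames show the same scene. A human reviewer still has to confirm the match and check the upload date and caption of the earlier clip.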

Conclusion:

The claim that the video shows crowds at Ayatollah Khamenei’s funeral is false. The footage is unrelated and actually depicts a religious gathering in Baghdad, Iraq, held as part of an annual Shia ritual.

Related Blogs

Introduction

Over the past few months, cybercriminals have upped the ante with increasingly complex methods that target unsuspecting users. One new scam exploits WhatsApp users in India and around the world, using a seemingly harmless picture message as the entry point for stealing money and data. Downloading such an image via WhatsApp can unknowingly install malware on your smartphone. This malicious software can compromise your banking applications, steal passwords, and expose your personal identity. With malware-laced instant messages now making headlines, netizens are advised to exercise extreme caution when handling media received on messaging platforms.

How Does the WhatsApp Photo Scam Work?

Cybercriminals have begun embedding malicious code in images shared on WhatsApp. Here is how the attack typically works:

- The user receives a WhatsApp message from an unknown number with an image.

- The image may appear harmless—a greeting, meme, or holiday card—but it's packed with hidden malware.

- When the user taps to download the image, the malware is silently installed on the phone.

- Once installed, the malware can capture keystrokes, read messages, access banking applications, steal credentials, and even hijack device functionality.

- Advanced versions can allegedly bypass two-factor authentication (2FA) and make unauthorised transactions.
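The reports on this scam do not describe the exact embedding technique, so the following is only an illustrative defensive check, not a detector for the malware described here. It tests whether a file's first bytes match the well-known signature for its claimed image extension, which can catch an executable merely renamed to look like a picture. It will not catch payloads hidden inside a valid image. The function name `header_matches_extension` and the sample file names are hypothetical.

```python
import os

# Well-known leading byte signatures for common image formats.
IMAGE_SIGNATURES = {
    ".jpg": [b"\xff\xd8\xff"],
    ".jpeg": [b"\xff\xd8\xff"],
    ".png": [b"\x89PNG\r\n\x1a\n"],
    ".gif": [b"GIF87a", b"GIF89a"],
}


def header_matches_extension(filename: str, header: bytes) -> bool:
    """Return True if `header` starts with a signature expected for the file's extension."""
    extension = os.path.splitext(filename)[1].lower()
    signatures = IMAGE_SIGNATURES.get(extension)
    if signatures is None:
        # Not a known image extension: treat as unverified.
        return False
    return any(header.startswith(signature) for signature in signatures)


# A genuine JPEG header versus a Windows executable ("MZ") renamed to .jpg.
print(header_matches_extension("greeting.jpg", b"\xff\xd8\xff\xe0\x00\x10JFIF"))  # True
print(header_matches_extension("greeting.jpg", b"MZ\x90\x00\x03\x00"))            # False
```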

Who Is Being Targeted?

This scam targets both Android and iPhone users, with a focus on vulnerable groups like senior citizens, busy workers during peak seasons, and members of WhatsApp groups flooded with forwarded messages. Experts warn that a single careless click is enough to compromise an entire device.

What Can the Malware Do?

Upon installation, the malware grants attackers an alarming level of access, allowing them to:

- Track user activity via keylogging or screen capture.

- Pilfer banking credentials and initiate fund transfers automatically.

- Obtain SMS or app-based 2FA codes, evading security layers.

- Clone identity information, such as Aadhaar details, digital wallets, and email access.

- Control device operations, including the camera and microphone.

This level of intrusion can result in not just financial loss but long-term digital impersonation or blackmail.

Safety Measures for WhatsApp Users

- Never Download Media from Suspicious Numbers

Do not download any files or pictures unless you trust the source, even if the content looks familiar. Share this advice with family members, particularly older relatives.

- Turn off Auto-Download in WhatsApp Settings

Navigate to Settings > Storage and Data > Media Auto-Download. Switch off auto-download for mobile data, Wi-Fi, and roaming.

- Install and Update Mobile Security Apps

Ensure your phone has a reputable antivirus or mobile security app installed, and keep it up to date.

- Block and Report Potential Scammers

WhatsApp makes it straightforward to block and report senders. Reporting alerts the platform and helps protect other users as well.

- Educate Your Community

Share your knowledge of cyber hygiene with family, friends, and colleagues. Many people fall victim simply because they aren't aware of the risks. Staying informed and spreading the word can make a big difference.

Advisories and Response

The Indian Cybercrime Coordination Centre (I4C) and other state cyber cells have released several alerts on increasing fraud via messaging platforms. Law enforcement agencies are appealing to the public not only to be vigilant but also to report any incident at once through the National Cybercrime Reporting Portal (cybercrime.gov.in).

Conclusion

The WhatsApp photo scam is a stark reminder that not all dangers come with a warning. A picture can now be a Trojan horse, spreading silently from device to device and draining victims' money. Do not engage with unsolicited media, and review and update your privacy and security settings regularly. Cybercriminals thrive on neglect and ignorance, but through digital hygiene and vigilance, we can fight these emerging threats.

References

- https://www.opswat.com/blog/how-emerging-image-based-malware-attacks-threaten-enterprise-defenses

- https://www.indiatvnews.com/technology/news/whatsapp-photo-scam-alert-downloading-random-images-could-cost-you-big-2025-05-06-988855

- https://www.hindustantimes.com/india-news/what-is-the-whatsapp-image-scam-and-how-can-you-stay-safe-from-it-101744353412848.html

- https://faq.whatsapp.com/898107234497196/?helpref=uf_share

- https://www.welivesecurity.com/en/malware/malware-hiding-in-pictures-more-likely-than-you-think/

- https://faq.whatsapp.com/573786218075805

- https://www.reversinglabs.com/blog/malware-in-images

Executive Summary:

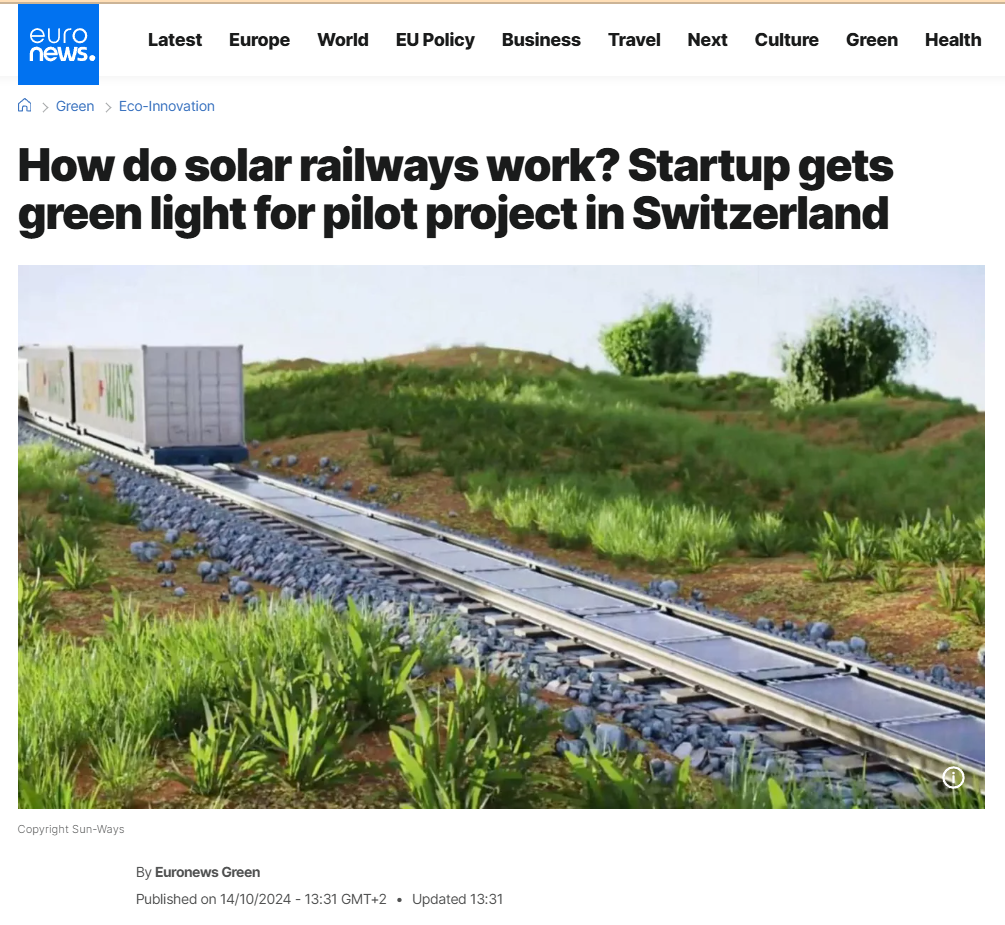

Social media has been overwhelmed by a viral post that claims Indian Railways is beginning to install solar panels directly on railway tracks all over the country for renewable energy purposes. The claim also purports that India will become the world's first country to undertake such a green effort in railway systems. Our research involved extensive reverse image searching, keyword analysis, government website searches, and global media verification. We found the claim to be completely false. The viral photos and information are all incorrectly credited to India. The images are actually from a pilot project by a Swiss start-up called Sun-Ways.

Claim:

According to a viral post on social media, Indian Railways has started an all-India initiative to install solar panels directly on railway tracks to generate renewable energy, limit power expenses, and make global history in environmentally sustainable rail operations.

Fact check:

We did a reverse image search of the viral image and were soon directed to international media and technology blogs referencing a project named Sun-Ways, based in Switzerland. The images circulated on Indian social media were the exact ones from the Sun-Ways pilot project, whereby a removable system of solar panels is being installed between railway tracks in Switzerland to evaluate the possibility of generating energy from rail infrastructure.

We also thoroughly searched the official Indian Railways websites, Ministry of Railways news releases, and credible Indian media. We found no mention of Indian Railways installing, or planning to install, solar panels on the railway tracks themselves.

Indian Railways has pursued green energy initiatives, including solar panel installations on rooftops, on railway land alongside tracks, and on train coach roofs. However, it has never installed solar panels on the railway tracks themselves. We did find a report of solar panels fitted on a train: the first solar-powered DEMU (diesel electric multiple unit) train, launched on 14th July 2025 from Safdarjung railway station in Delhi. The train will run from Sarai Rohilla in Delhi to Farukh Nagar in Haryana. A total of 16 solar panels, each producing 300 Wp, are fitted in six coaches.
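As a quick sanity check on the figures reported for the DEMU train, the combined peak capacity can be worked out directly. This sketch takes the numbers as stated (16 panels in total, 300 Wp each). If the report actually meant 16 panels per coach, the result would need to be scaled accordingly. The function name `total_peak_watts` is hypothetical.

```python
def total_peak_watts(panel_count: int, watts_peak_per_panel: int) -> int:
    """Combined peak output in watts-peak (Wp) for identical panels."""
    return panel_count * watts_peak_per_panel


peak_wp = total_peak_watts(16, 300)
print(peak_wp)          # 4800
print(peak_wp / 1000)   # 4.8 (kWp)
```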

Multiple media reports support our finding, including coverage by Euronews, the World Economic Forum, the Institution of Mechanical Engineers, and NDTV.

Conclusion:

After research conducted in several phases, including verification of the images and the technical details, we can conclude that the claim that Indian Railways has installed solar panels on railway tracks is false. The concept and images originate from Sun-Ways, a Swiss company testing the idea in Switzerland, not India.

Indian Railways continues to use renewable energy in a number of forms but has not installed any solar panels on railway tracks. This case highlights the importance of fact-checking viral and unverified content.

- Claim: India’s solar track project will help Indian Railways run entirely on renewable energy.

- Claimed On: Social Media

- Fact Check: False and Misleading

Introduction

The Pahalgam terror attack, which took place on April 22, 2025, was a tragic incident that shook the nation. The National Investigation Agency (NIA) formally took over the Pahalgam terrorist attack case on Sunday, April 27, 2025. Following India's strikes on Pakistan, tensions between the two countries have heightened, leading to concerns about potential escalation, including the risk of cyber attacks and the spread of misinformation that could further complicate the situation. It is crucial for corporations, critical sectors, and all netizens in India to stay proactive and vigilant against cyber attacks, while also being cautious of the risks of misinformation. This includes protecting themselves from being affected and avoiding the inadvertent or deliberate spread of false information.

Be Careful with the Information You Consume and Share

The Press Information Bureau (PIB) has alerted citizens to stay cautious of fake narratives being circulated by Pakistani handles. Through an official fact check, PIB debunked several misleading claims aimed at undermining India’s internal stability and security forces. Citizens are urged to verify any suspicious content via PIB Fact Check before sharing it further. As social media becomes a hub for viral content, netizens must be careful about the information they consume and share. Misleading posts, old videos, and false claims flood these platforms, and spreading unverified content can have severe consequences.

CyberPeace Recommends Following Crucial Cyber Safety Tips to Stay Vigilant Against Potential Digital Threats:

- Do not open/download any video file you receive in social media groups or from unknown sources.

- As per several media reports, a video file named "Dance of the Hilary" is being circulated, which may be intended for a cyber attack on India. Please refrain from clicking, downloading, or sharing any such file. Additionally, there are reports of suspicious files circulating on WhatsApp, including tasksche.exe, OperationSindoor.ppt, and OperationSindhu.pptx. Do not download or open any of these files, as they may pose a serious cyber threat.

- To receive accurate alerts, you can enable government notifications on your iPhone. Go to Settings > Notifications and scroll down to Government Alerts. Make sure all the toggles under Government Alerts are turned on. This will allow you to receive timely information and important alerts from government agencies, and your device will display critical notifications to keep you informed and safe.

- Turn off automatic media download in WhatsApp to reduce the risk of downloading potentially harmful files.

- To protect your privacy, disable location services on apps like WhatsApp, Instagram, Snapchat, and X unless absolutely necessary.

- Refrain from sharing sensitive information like government data, confidential details, or personal records on unsecured devices or networks.

- To avoid misinformation and manipulation during conflict, verifying and cross-checking the news before sharing it with anyone is crucial. Stay updated with official news updates, and be cautious while sharing information.
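For readers who manage shared devices or group chats, the file names reported above can be turned into a simple screening check. This is a minimal sketch, assuming only the three file names mentioned in this advisory. Name matching is trivially evaded by renaming, so it complements the tips above rather than replacing them. The names `REPORTED_FILE_NAMES` and `is_reported_file` are hypothetical.

```python
# File names reported as suspicious in this advisory, stored in lowercase.
REPORTED_FILE_NAMES = {
    "tasksche.exe",
    "operationsindoor.ppt",
    "operationsindhu.pptx",
}


def is_reported_file(path: str) -> bool:
    """Return True if the file name (ignoring folders and letter case) is on the list."""
    # Normalise Windows-style separators, then keep only the final path component.
    name = path.replace("\\", "/").rsplit("/", 1)[-1].strip().lower()
    return name in REPORTED_FILE_NAMES


print(is_reported_file("Downloads/OperationSindoor.ppt"))  # True
print(is_reported_file("TASKSCHE.EXE"))                    # True
print(is_reported_file("holiday_card.jpg"))                # False
```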

Conclusion

In times of heightened tensions, all of us need to stay vigilant, protect our digital spaces, and verify the information we encounter. Together, we can safeguard ourselves from cyber threats and misinformation, ensuring the safety, stability, and digital security of our nation. As proud citizens, let us unite to protect both our physical and digital well-being.

References

- https://www.thehindu.com/news/national/pakistan-has-unleashed-propaganda-machine-in-response-to-successful-operation-sindoor-ib-ministry/article69549084.ece

- https://sambadenglish.com/national-international-news/india/centre-asks-people-to-stay-alert-against-misinformation-in-social-media-9048169

- https://www.youtube.com/watch?v=gLHo_Vd1_H0&t=19s