#FactCheck- Viral ‘Prison Torture’ Video Not from Israel, Taken from Iraqi TV Show

Executive Summary

Israel’s parliament, the Knesset, recently passed a bill allowing military courts to impose the death penalty on Palestinians convicted of killing Israelis. Against this backdrop, a video showing men in black uniforms beating detainees inside a prison has gone viral on social media, with claims linking it to alleged torture by Israeli forces. However, research by CyberPeace found the claim to be false. The viral video is not related to Israel or to any real incident; it is a scene from an Iraqi television series titled “Beit Umm Layla.”

Claim

Sharing the video, a user on X (formerly Twitter) wrote: “Live footage: IDF soldiers always torture Palestinian hostages before executing them. Please don’t let us die in silence.”

Fact Check

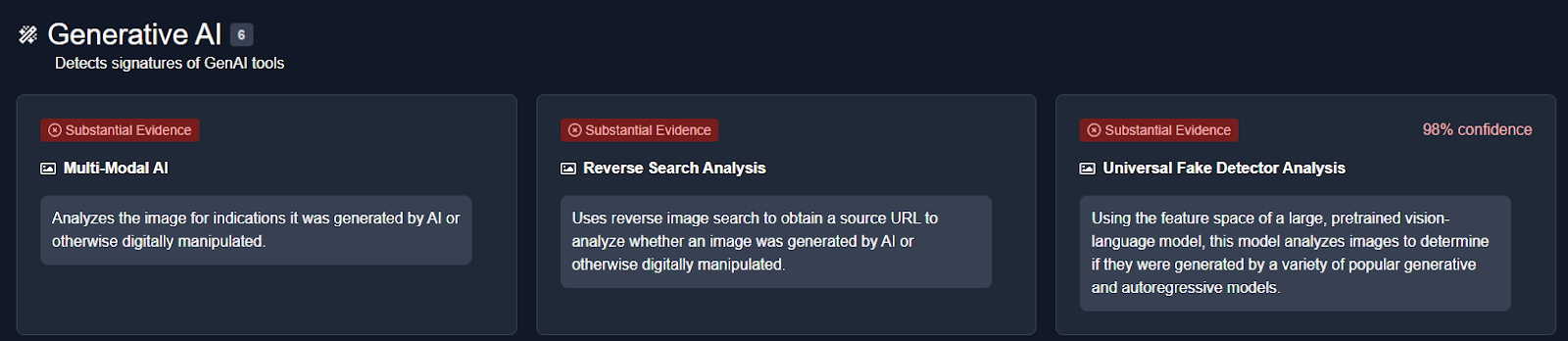

To verify the claim, we extracted keyframes from the viral video and ran a reverse image search on them. This led us to a longer version of the clip that the Iraqi channel Al-Iraqiya posted on its Facebook and Instagram pages on March 9.

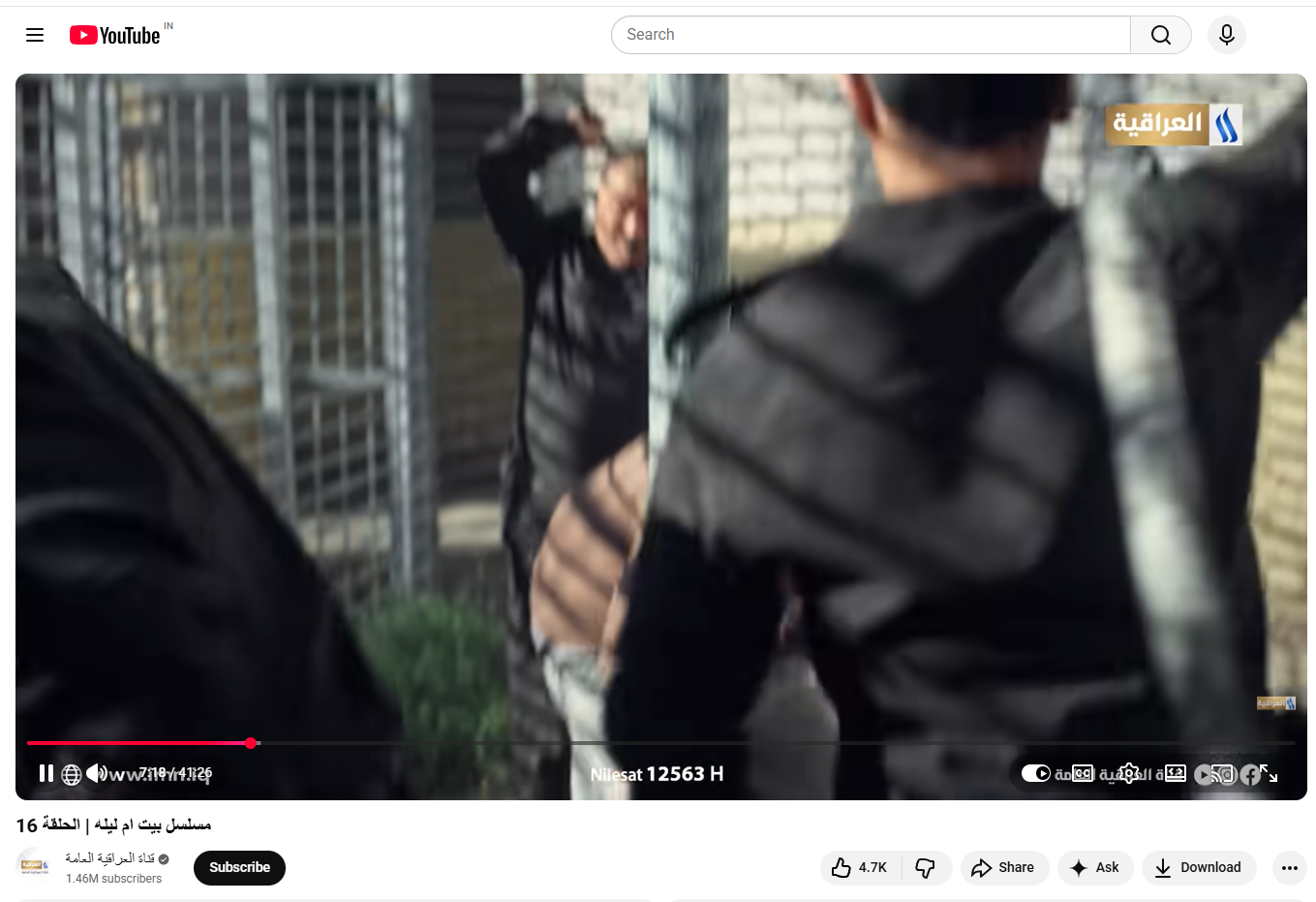

The posts clearly identified the footage as part of “Beit Umm Layla,” a popular Iraqi TV series. Further research showed that the full series is available on Al-Iraqiya’s official YouTube channel, where 25 episodes were uploaded between February 19 and March 20. The viral clip corresponds to Episode 16 of the show.

Additionally, information on the Arabic entertainment website elCinema indicates that the series, released on February 18, is a socio-political drama about prisoners and the psychological struggles they and their families face.

Conclusion

The viral claim is false and misleading. The video does not depict any real incident involving Israeli forces or Palestinian detainees; it is a fictional scene from an Iraqi television drama series. There is no credible evidence that the footage shows torture by Israeli soldiers. The clip has been taken out of context and shared with a misleading narrative to provoke emotional reactions.