#FactCheck - Viral Postcard Attributing Fake UGC Statement to Keshav Prasad Maurya Is False

Executive Summary

A postcard claiming that Uttar Pradesh Deputy Chief Minister Keshav Prasad Maurya commented on the Supreme Court’s stay on the new UGC regulations is being widely shared on social media. The viral postcard suggests that Maurya stated the Modi government would “fight till its last breath” to implement the UGC law and appealed to Dalit, backward and tribal communities to trust the government as their true well-wisher. However, research by CyberPeace has found that the viral postcard is fake. Keshav Prasad Maurya has not made any such statement.

Claim

A Facebook user shared the postcard with the caption: “Now read it yourself. Statement of Deputy CM Keshav Prasad Maurya: the Modi government will fight till its last breath to implement the UGC law. An appeal to Dalit, backward and tribal communities to trust the government, calling it their true well-wisher.”

(Archived version of the post available here.)

Fact Check

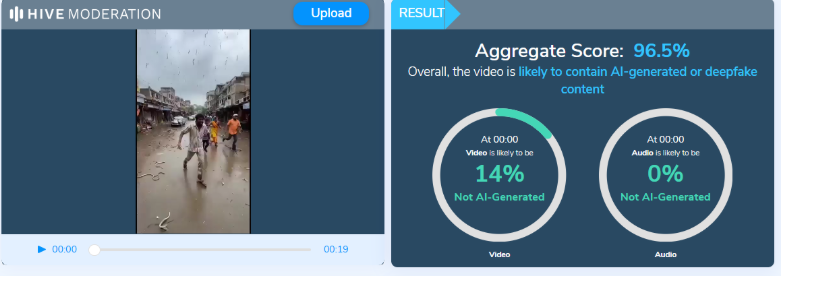

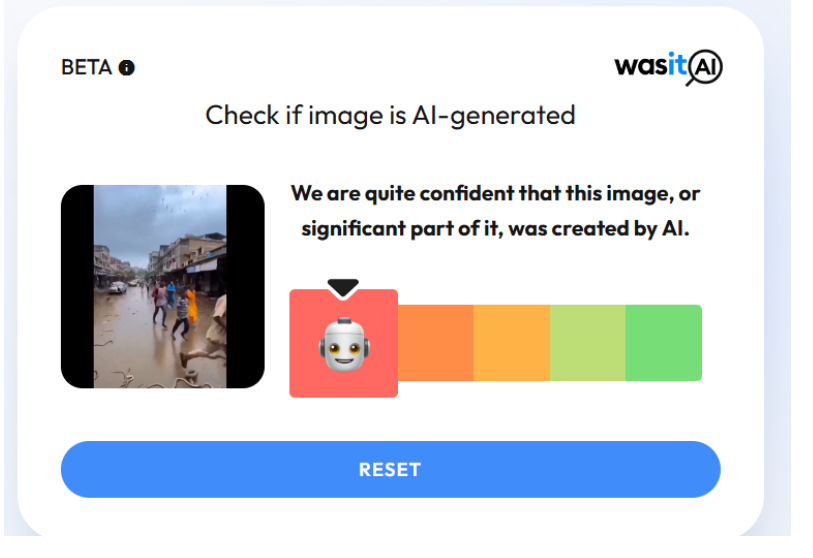

During our research, we did not find any credible news reports mentioning such a statement by Deputy Chief Minister Keshav Prasad Maurya regarding the UGC regulations or the Supreme Court’s order. A closer examination of the viral postcard revealed several inconsistencies. Notably, the text on the postcard lacks proper punctuation, such as commas and full stops, which is unusual for professionally designed news graphics. The postcard carries the logo of Navbharat Times (NBT). However, when compared with genuine NBT postcards, the font style used in the viral image does not match NBT’s official design.

We also traced the original NBT postcard that appears to have been edited to create the fake one. In the authentic postcard, shared by NBT on January 20, Keshav Prasad Maurya is quoted as saying: “Where the lotus has bloomed, it will continue to bloom, and where it has not, under the guidance of PM Modi and the leadership of Nitin Nabin, the lotus will bloom.”

The original statement was digitally altered, and a fabricated quote was inserted to create the viral postcard.

Conclusion

CyberPeace research clearly establishes that the viral postcard is fake. The original Navbharat Times postcard has been tampered with, and Keshav Prasad Maurya’s actual statement has been replaced with a fabricated quote, which is now being circulated with a misleading claim.