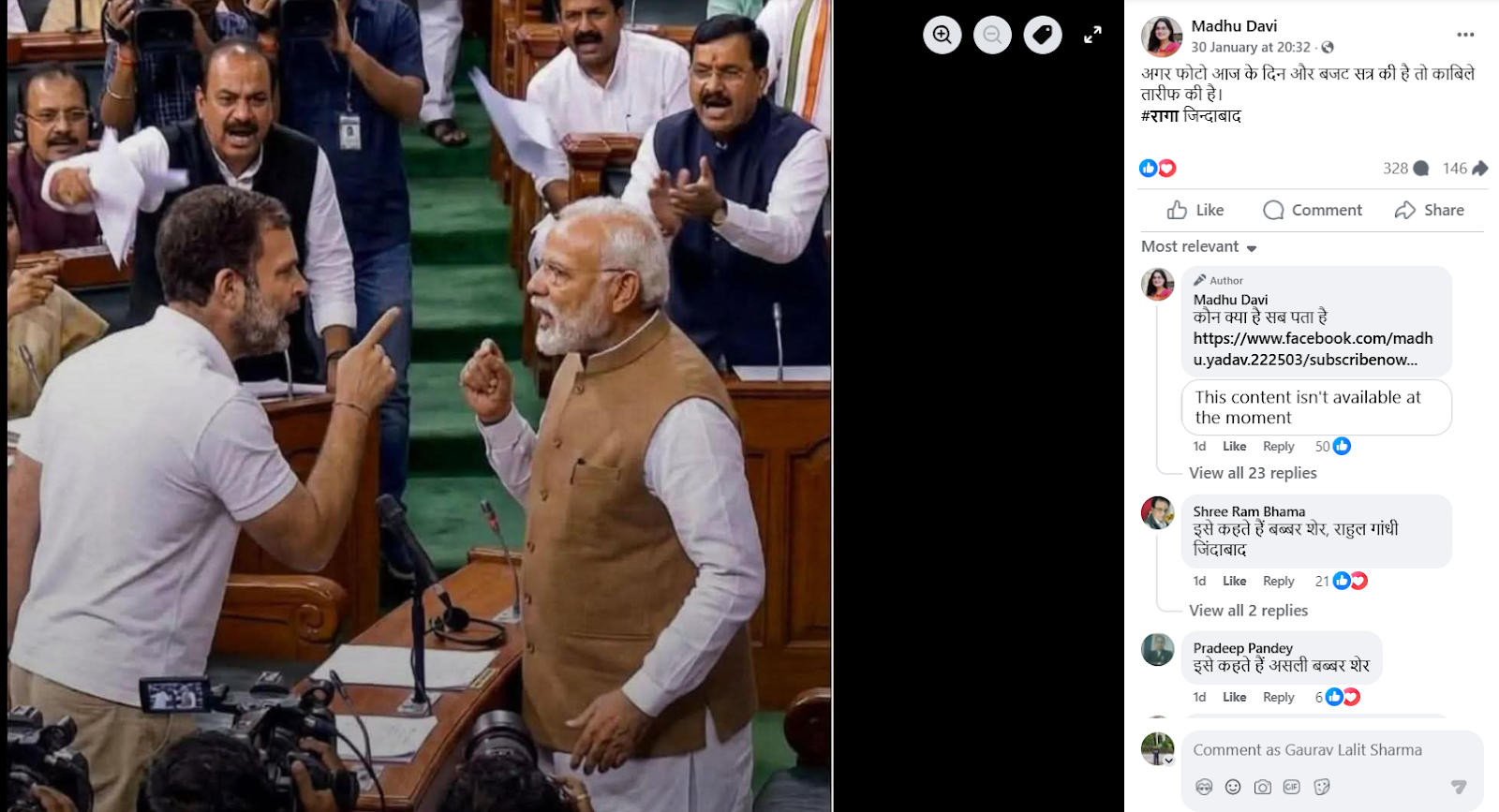

#FactCheck - Viral Photo of Modi and Rahul Gandhi in Parliament Found to Be AI-Generated

Executive Summary

An image showing Prime Minister Narendra Modi and Leader of Opposition in the Lok Sabha and Congress MP Rahul Gandhi standing face to face inside Parliament is going viral on social media. Several users are sharing the image claiming that the photograph was taken during the ongoing Budget Session, suggesting a direct face-off between the two leaders inside Parliament. However, research conducted by the CyberPeacehas found that the viral claim is false. The image in question is not real but has been generated using Artificial Intelligence (AI). The AI-generated image is now being shared on social media with a misleading claim.

Claim

A Facebook user named Madhu Davi shared the viral image on January 30, 2026, with the caption: “If this photo is from today and the Budget Session, it is commendable. RAGA Zindabad.”

(Archived version of the post available here.)

- https://www.facebook.com/photo/?fbid=759145877237871&set=a.110639115421887

- https://perma.cc/N2XD-TZ32?type=image

Fact Check:

To verify the viral claim, we first conducted a keyword search on Google to check whether any credible media outlet had reported such an incident during the Budget Session. However, no news reports supporting the claim were found. We then performed a reverse image search using Google Lens, but this too did not yield any reliable media reports or evidence confirming the authenticity of the image. This raised suspicion that the image might be AI-generated. To further verify, the image was analysed using the AI detection tool Hive Moderation. The tool indicated a probability of over 99 per cent that the image was generated using Artificial Intelligence.

Conclusion

CyberPeace research confirms that the image being circulated with the claim that Prime Minister Narendra Modi and Rahul Gandhi came face to face during the Budget Session is fake. The viral image has been created using AI and is being shared with a false and misleading narrative.

Related Blogs

Introduction

Cyber-attacks are another threat in this digital world, not exclusive to a single country, that could significantly disrupt global movements, commerce, and international relations all of which experienced first-hand when a cyber-attack occurred at Heathrow, the busiest airport in Europe, which threw their electronic check-in and baggage systems into a state of chaos. Not only were there chaos and delays at Heathrow, airports across Europe including Brussels, Berlin, and Dublin experienced delay and had to conduct manual check-ins for some flights further indicating just how interconnected the world of aviation is in today's world. Though Heathrow assured passengers that the "vast majority of flights" would operate, hundreds were delayed or postponed for hours as those passengers stood in a queue while nearly every European airport's flying schedule was also negatively impacted.

The Anatomy of the Attack

The attack specifically targeted Muse software by Collins Aerospace, a software built to allow various airlines to share check-in desks and boarding gates. The disruption initially perceived to be technical issues soon turned into a logistical nightmare, with airlines relying on Muse having to engage in horror-movie-worthy manual steps hand-tagging luggage, verifying boarding passes over the phone, and manually boarding passengers. While British Airways managed to revert to a backup system, most other carriers across Heathrow and partner airports elsewhere in Europe had to resort to improvised manual solutions.

The trauma was largely borne by the passengers. Stories emerged about travelers stranded on the tarmac, old folks left barely able to walk without assistance, and even families missing important connections. It served to remind everyone that the aviation world, with its schedules interlocked tightly across borders, can see even a localized system failure snowball into a continental-level crisis.

Cybersecurity Meets Aviation Infrastructure

In the last two decades, aviation has become one of the more digitally dependent industries in the world. From booking systems and baggage handling issues to navigation and air traffic control, digital systems are the invisible scaffold on which flight operations are supported. Though this digitalization has increased the scale of operations and enhanced efficiency, it must have also created many avenues for cyber threats. Cyber attackers increasingly realize that to target aviation is not just about money but about leverage. Just interfering with the check-in system of a major hub like Heathrow is more than just financial disruption; it causes panic and hits the headlines, making it much more attractive for criminal gangs and state-sponsored threat actors.

The Heathrow incident is like the worldwide IT crash in July 2024-thwarting activities of flights caused by a botched Crowdstrike update. Both prove the brittleness of digital dependencies in aviation, where one failure point triggering uncontrollable ripple effects spanning multiple countries. Unlike conventional cyber incidents contained within corporate networks, cyber-attacks in aviation spill on to the public sphere in real time, disturbing millions of lives.

Response and Coordination

Heathrow Airport first added extra employees to assist with manual check-in and told passengers to check flight statuses before traveling. The UK's National Cyber Security Centre (NCSC) collaborated with Collins Aerospace, the Department for Transport, and law enforcement agencies to investigate the extent and source of the breach. Meanwhile, the European Commission published a statement that they are "closely following the development" of the cyber incident while assuring passengers that no evidence of a "widespread or serious" breach has been observed.

According to passengers, the reality was quite different. Massive passenger queues, bewildering announcements, and departure time confirmations cultivated an atmosphere of chaos. The wrenching dissonance between the reassurances from official channel and Kirby needs to be resolved about what really happens in passenger experiences. During such incidents, technical restoration and communication flow are strategies for retaining public trust in incidents.

Attribution and the Shadow of Ransomware

As with many cyber-attacks, questions on its attribution arose quite promptly. Rumours of hackers allegedly working for the Kremlin escaped into the air quite possibly inside seconds of the realization, Cybersecurity experts justifiably advise against making conclusions hastily. Extortion ransomware gangs stand the last chance to hold the culprits, whereas state actors cannot be ruled out, especially considering Russian military activity under European airspace. Meanwhile, Collins Aerospace has refused to comment on the attack, its precise nature, or where it originated, emphasizing an inherent difficulty in cyberattribution.

What is clear is the way these attacks bestow criminal leverage and dollars. In previous ransomware attacks against critical infrastructure, cybercriminal gangs have extorted millions of dollars from their victims. In aviation terms, the stakes grow exponentially, not only in terms of money but national security and diplomatic relations as well as human safety.

Broader Implications for Aviation Cybersecurity

This incident brings to consideration several core resilience issues within aviation systems. Traditionally, the airports and airlines had placed premium on physical security, but today, the equally important concept of digital resilience has come into being. Systems such as Muse, which bind multiple airlines into shared infrastructure, offer efficiency but, at the same time, also concentrate that risk. A cyber disruption in one place will cascade across dozens of carriers and multiple airports, thereby amplifying the scale of that disruption.

The case also brings forth redundancy and contingency planning as an urgent concern. While BA systems were able to stand on backups, most other airlines could not claim that advantage. It is about time that digital redundancies, be it in the form of parallel systems or isolated backups or even AI-driven incident response frameworks, are built into aviation as standard practice and soon.

On the policy plane, this incident draws attention to the necessity for international collaboration. Aviation is therefore transnational, and cyber incidents standing on this domain cannot possibly be handled by national agencies only. Eurocontrol, the European Commission, and cross-border cybersecurity task forces must spearhead this initiative to ensure aviation-wide resilience.

Human Stories Amid a Digital Crisis

Beyond technical jargon and policy response, the human stories had perhaps the greatest impact coming from Heathrow. Passengers spoke of hours spent queuing, heading to funerals, and being hungry and exhausted as they waited for their flights. For many, the cyber-attack was no mere headline; instead, it was ¬ a living reality of disruption.

These stories reflect the fact that cybersecurity is no hunger strike; it touches people's lives. In critical sectors such as aviation, one hour of disruption means missed connections for passengers, lost revenue for airlines, and inculcates immense emotional stress. Crisis management must therefore entail technical recovery and passenger care, communication, and support on the ground.

Conclusion

The cybersecurity crisis of Heathrow and other European airports emphasizes the threat of cyber disruption on the modern legitimacy of aviation. The use of increased connectivity for airport processes means that any cyber disruption present, no matter how small, can affect scheduling issues regionally or on other continents, even threatening lives. The occurrences confirm a few things: a resilient solution should provide redundancy not efficiency; international networking and collaboration is paramount; and communicating with the traveling public is just as important (if not more) as the technical recovery process.

As governments, airlines, and technology providers analyse the disruption, the question is longer if aviation can withstand cyber threats, but to what extent it will be prepared to defend itself against those attacks. The Heathrow crisis is a reminder that the stake of cybersecurity is not just about a data breach or outright stealing of money but also about stealing the very systems that keep global mobility in motion. Now, the aviation industry is tested to make this disruption an opportunity to fortify the digital defences and start preparing for the next inevitable production.

References

- https://www.bbc.com/news/articles/c3drpgv33pxo

- https://www.theguardian.com/business/2025/sep/21/delays-continue-at-heathrow-brussels-and-berlin-airports-after-alleged-cyber-attack

- https://www.reuters.com/business/aerospace-defense/eu-agency-says-third-party-ransomware-behind-airport-disruptions-2025-09-22/

A video circulating on social media claims that British Prime Minister Keir Starmer was forcibly thrown out of a pub by its owner. The clip has been widely shared by users, many of whom are drawing political comparisons and questioning democratic norms. However, research conducted by Cyber Peace Foundation has found that the viral claim is misleading. Our research reveals that the video dates back to 2021, a time when Keir Starmer was not the Prime Minister of the United Kingdom, but the leader of the opposition Labour Party.

Claim

On January 12, 2026, a video was shared on social media platform X (formerly Twitter) with the claim that British Prime Minister Sir Keir Starmer was asked to leave a pub by its owner. The post suggests that the pub owner was unhappy with Starmer’s performance and contrasts the incident with how political dissent is allegedly handled in India. The viral video, approximately 32 seconds long, shows a man angrily confronting Keir Starmer in English, stating that he had supported the Labour Party all his life but was disappointed with Starmer’s leadership. The man is then heard asking Starmer to leave the pub.

Links to the viral post and its archived version were reviewed as part of the research.

Fact Check

To verify the claim, we extracted key frames from the viral video and conducted a Google reverse image search. During this process, we found the same video posted on an X account on April 19, 2021.The visuals in the 2021 post matched the viral video exactly, clearly indicating that the footage is not recent.The original post described the incident as an event involving Labour Party leader Keir Starmer during his visit to the Raven pub in Bath, and included a warning about strong language used by the pub owner, Rod Humphries. Here is the link to the original video, along with a screenshot:

Further keyword searches led us to a report published by NBC News on April 19, 2021. According to the report, Keir Starmer, then the leader of the UK’s opposition Labour Party, was confronted and asked to leave a pub in the city of Bath. The pub owner reportedly accused Starmer of failing to oppose COVID-19 lockdown measures strongly enough at a time when strict restrictions were in place across the UK.

- https://www.nbcnews.com/video/anti-lockdown-pub-landlord-screams-at-u-k-labour-party-leader-to-get-out-of-his-pub-110466117702

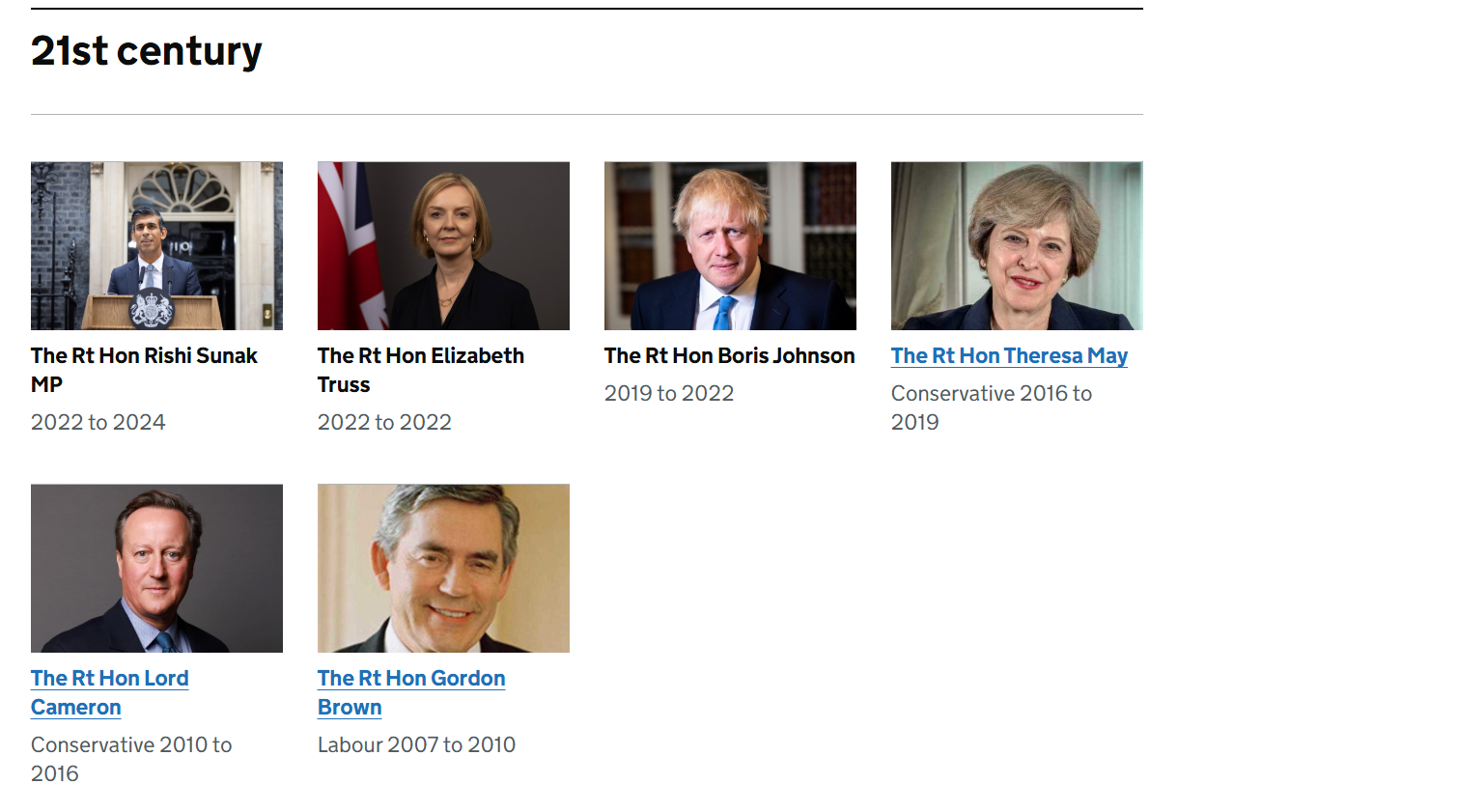

We also verified who held the office of British Prime Minister in 2021. Official UK government records confirm that Boris Johnson was the Prime Minister at that time, while Keir Starmer served as the Leader of the Opposition.

Conclusion

Our research confirms that the viral video is old and misleadingly presented. The footage is from 2021, when Keir Starmer was not the Prime Minister of the United Kingdom, but the opposition Labour Party leader. Sharing the video with the claim that it shows a current British Prime Minister being thrown out of a pub is factually incorrect.

Introduction

Since users are now constantly retrieving critical data on their mobile devices, fraudsters are now focusing on these devices. App-based, network-based, and device-based vulnerabilities are the three main ways of attacking that Mobile Endpoint Security names as mobile threats. Composed of the following features: program monitoring and risk, connection privacy and safety, psychological anomaly and reconfiguration recognition, and evaluation of vulnerabilities and management, this is how Gartner describes Mobile Threat Defense (MTD).

The widespread adoption and prevalence of cell phones among consumers worldwide have significantly increased in recent years. Users of these operating system-specific devices can install a wide range of software, or "apps," from online marketplaces like Google Play and the Apple App Store. The applications described above are the lifeblood of cell phones; they improve users' daily lives and augment the devices' performance. The app marketplaces let users quickly search for and install new programs, but certain malicious apps/links/websites can also be the origin of malware hidden among legitimate apps. These days, there are many different security issues and malevolent attacks that might affect mobile devices.

Unveiling Malware Landscape

The word "malware" refers to a comprehensive category of spyware intended to infiltrate networks, steal confidential data, cause disruptions, or grant illegal access. Malware can take many forms, such as Trojan horses, worms, ransomware, infections, spyware, and adware. Because each type has distinct goals and features, security specialists face a complex problem. Malware is a serious risk to both people and businesses. Security incidents, monetary losses, harm to one's credibility, and legal repercussions are possible outcomes. Understanding malware's inner workings is essential to defend against it effectively. Malware analysis is helpful in this situation. The practice of deconstructing and analysing dangerous software to comprehend its behaviour, operation, and consequences is known as malware analysis.Major threats targeting mobile phones

Viruses: Viruses are self-renewing programs that can steal data, launch denial of service assaults, or enact ransomware strikes. They spread by altering other software applications, adding malicious code, and running it on the target's device. Computer systems all over the world are still infected with viruses, which attack different operating systems like Mac and Microsoft Windows, even though there is a wealth of antiviral programs obtainable to mitigate their impacts.

Worms: Infections are independent apps that propagate quickly and carry out payloads—such as file deletion or the creation of botnets—to harm computers. Worms, in contrast to viruses, usually harm a computer system, even if it's just through bandwidth use. By taking advantage of holes in security or other vulnerabilities on the target computer, they spread throughout computer networks.

Ransomware: It causes serious commercial and organisational harm to people and businesses by encrypting data and demanding payment to unlock it. The daily operations of the victim organisation are somewhat disrupted, and they need to pay a ransom to get them back. It is not certain, though, that the financial transaction will be successful or that they will receive a working translation key.

Adware: It can be controlled via notification restrictions or ad-blockers, tracks user activities and delivers unsolicited advertisements. Adware poses concerns to users' privacy even though it's not always malevolent since the information it collects is frequently combined with information gathered from other places and used to build user profiles without their permission or knowledge.

Spyware: It can proliferate via malicious software or authentic software downloads, taking advantage of confidential data. This kind of spyware gathers data on users' actions without their authorisation or agreement, including:Internet activityBanking login credentialsPasswordsPersonally Identifiable Information (PII)

Navigating the Mobile Security Landscape

App-Centric Development: Regarding mobile security, app-centric protections are a crucial area of focus. Application authorisations should be regularly reviewed and adjusted to guarantee that applications only access the knowledge that is essential and to lower the probability of data misuse. Users can limit hazards and have greater oversight over their confidentiality by closely monitoring these settings. Installing trustworthy mobile security apps also adds another line of protection. With capabilities like app analysis, real-time protection, and antivirus scanning, these speciality apps strengthen your gadget's protection against malware and other harmful activity.

Network Security: Setting priorities for secure communication procedures is crucial for safeguarding confidential data and thwarting conceivable dangers in mobile security. Avoiding unprotected public Wi-Fi networks is essential since they may be vulnerable to cyberattacks. To lessen the chance of unwelcome entry and data surveillance, promote the usage of reliable, password-protected networks instead. Furthermore, by encrypting data transfer, Virtual Private Networks (VPNs) provide additional protection and make it more difficult for malevolent actors to corrupt information. To further improve security, avoid using public Wi-Fi for essential transactions and hold off until a secure network is available. Users can strengthen their handheld gadgets against possible privacy breaches by implementing these practices, which can dramatically lower the risk of data eavesdropping and illegal access.

Constant development: Maintaining a robust mobile security approach requires a dedication to constant development. Adopt a proactive stance by continuously improving and modifying your security protocols. By following up on recurring outreach and awareness campaigns, you can stay updated about new hazards. Because cybersecurity is a dynamic field, maintaining one step ahead and utilising emerging technologies is essential. Stay updated with security changes, implement the newest safeguards, and incorporate new industry standard procedures into your plan. This dedication to ongoing development creates a flexible barrier, strengthening your resistance to constantly evolving mobile security threats.

Threat emergency preparedness: To start, familiarise yourself with the ever-changing terrain associated with mobile dangers to security. Keep updated on new threats including malware, phishing, and illegal access.

Sturdy Device Management: Put in place a thorough approach to device management. This includes frequent upgrades, safe locking systems, and additional safeguarding capabilities like remote surveillance and erasing.

Customer Alertness: Emphasise proper online conduct and acquaint yourself and your team with potential hazards, such as phishing efforts.

Dynamic Measures for a Robust Wireless Safety Plan

In the dynamic field of mobile assurances, taking a proactive strategy is critical. To strengthen safeguards, thoroughly research common risks like malware, phishing, and illegal access. Establish a strong device management strategy that includes frequent upgrades, safe locking mechanisms, and remote monitoring and deletion capabilities for added security.

Promoting user awareness by educating people so they can identify and block any hazards, especially regarding phishing attempts. Reduce the dangers of data eavesdropping and illegal access by emphasising safe communication practices, using Virtual Private Networks (VPNs), and avoiding public Wi-Fi for essential transactions.

Pay close attention to app-centric integrity by periodically checking and modifying entitlements. Downloading trustworthy mobile security apps skilled at thwarting malware and other unwanted activity will enhance your smartphone's defenses. Lastly, create an atmosphere of continuous development by keeping up with new threats and utilising developing technology to make your handheld security plan more resilient overall.

Conclusion

Mobile privacy threats grow as portable electronics become increasingly integrated into daily activities. Effective defense requires knowledge of the various types of malware, such as worms, ransomware, adware, and spyware. Tools for Mobile Threat Defense, which prioritise vulnerability assessment, management, anomaly detection, connection privacy, and program monitoring, are essential. App-centric development, secure networking procedures, ongoing enhancement, threat readiness, strong device control, and user comprehension are all components of a complete mobile security strategy. People, as well as organisations, can strengthen their defenses against changing mobile security threats by implementing dynamic measures and maintaining vigilance, thereby guaranteeing safe and resilient mobile surrounding.

References

https://www.titanfile.com/blog/types-of-computer-malware/

https://www.simplilearn.com/what-is-a-trojan-malware-article

https://www.linkedin.com/pulse/latest-anti-analysis-tactics-guloader-malware-revealed-ukhxc/?trk=article-ssr-frontend-pulse_more-articles_related-content-card