#FactCheck - Viral Claim of Highway in J&K Proven Misleading

Executive Summary:

A viral social media post, shared with misleading captions, claims to show a National Highway being built with large bridges over a mountainside in Jammu and Kashmir. However, our investigation shows that the bridge is in China. The claim is therefore false and misleading.

Claim:

A video is circulating that purportedly shows National Highway 14 being built on a mountainside in Jammu and Kashmir.

Fact Check:

Upon receiving the image, we carried out a reverse image search. It showed that the image of an under-construction road, falsely linked to Jammu and Kashmir, is from a different location: the G6911 Ankang-Laifeng Expressway in China. This highlights the need to verify information before sharing.

Conclusion:

The viral claim about an under-construction highway in Jammu and Kashmir is false. The post actually shows a road in China, not J&K. Misinformation like this can mislead the public, so take a brief moment to verify the facts and rely on credible sources before sharing viral posts.

- Claim: Under-Construction Road Falsely Linked to Jammu and Kashmir

- Claimed On: Instagram and X (Formerly Known As Twitter)

- Fact Check: False and Misleading

Related Blogs

Executive Summary:

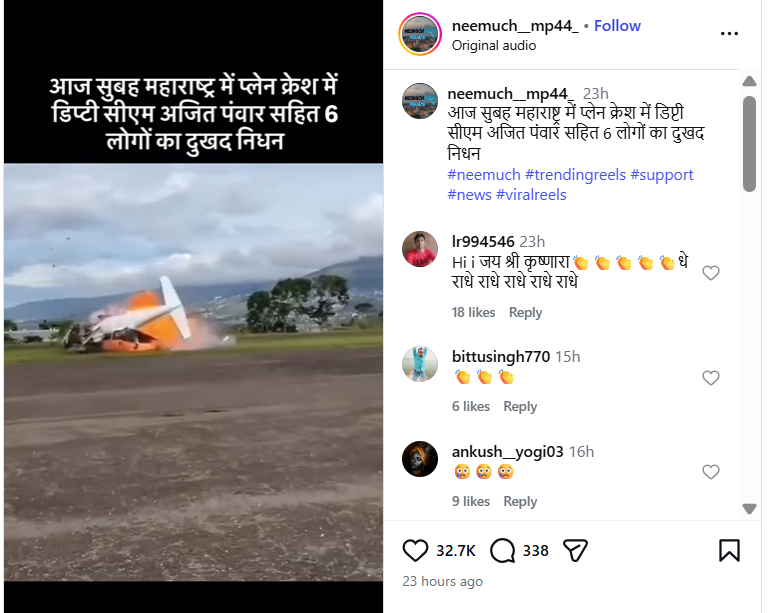

A video claiming to show the plane crash that allegedly killed Maharashtra Deputy Chief Minister Ajit Pawar has been widely circulated on social media. The circulation began soon after reports emerged of a tragic aircraft accident in Baramati, Maharashtra, on January 28, 2026, in which Ajit Pawar and five others were reported to have died. The viral video shows a plane crashing to the ground moments after take-off. Social media users have claimed that the footage captures the exact incident in which Ajit Pawar was on board. However, research by CyberPeace has found that this claim is false.

Claim:

An Instagram user shared the video on January 28, 2026, claiming that it showed the plane crash in Maharashtra in which Deputy Chief Minister Ajit Pawar and others allegedly lost their lives. The caption accompanying the video read: “This morning, Deputy CM Ajit Pawar and six others tragically died in a plane crash in Maharashtra.”

Links to the post and its archived version are provided below.

Fact Check:

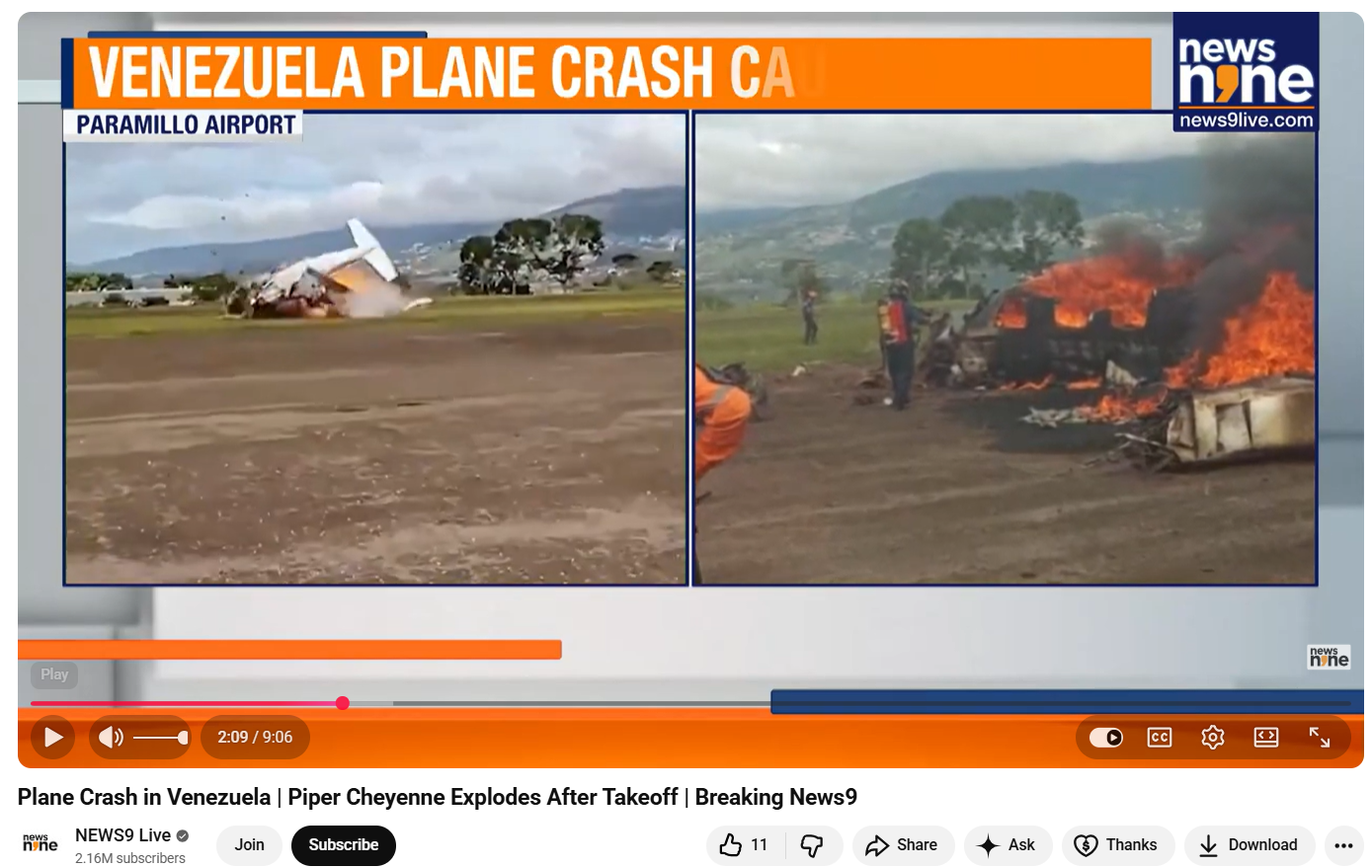

To verify the authenticity of the viral video, CyberPeace conducted a reverse image search of its keyframes. During this process, the same visuals were found in a video report uploaded on News9 Live’s official YouTube channel on October 23, 2025.

According to the report, the footage shows a plane crash in Venezuela, not India. The incident occurred shortly after a Piper Cheyenne aircraft took off from Paramillo Airport in Táchira, Venezuela. The aircraft crashed within seconds of take-off, killing both occupants on board. The deceased were identified as pilot José Bortone and co-pilot Juan Maldonado. Further confirmation came from a report published on October 22, 2025, by Latin American news outlet El Tiempo. The Spanish-language report also featured the same video visuals and stated that a small aircraft lost control and crashed on the runway at Paramillo Airport in Venezuela, resulting in the deaths of the pilot and co-pilot.

Conclusion

CyberPeace’s research clearly establishes that the viral video being shared as footage of Ajit Pawar’s alleged plane crash in Baramati is misleading. The video actually shows a plane crash that occurred in Venezuela in October 2025 and has been falsely linked to the reported incident in India.

Introduction

In the Spy Loan scandal, more than a dozen malicious loan apps were downloaded onto Android phones from the Google Play Store. However, the actual number is significantly higher because such apps are also available on third-party marketplaces and questionable websites.

Unmasking the Scam

When a user borrows money, these predatory lending applications capture large quantities of information from the user's smartphone, which is then used to blackmail and coerce them into repaying the total with hefty interest. While the loan amount is disbursed to users, the apps request access to the camera, contacts, messages, logs, images, Wi-Fi network details, calendar information, and other personal information. This data is then sent to the loan sharks' servers.

Researchers have disclosed details about the applications used by loan sharks to mislead consumers, as well as the numerous techniques used to circumvent some of the restrictions imposed by the Play Store. The malware is often built with appealing user interfaces and promotes simple, rapid access to cash with high-interest repayment conditions. The revelation of the Spy Loan scandal has triggered an immediate response from law enforcement agencies worldwide. With millions of users at risk of becoming victims of malicious loan apps, it has become extremely important for law enforcement to unmask the culprits and dismantle the cyber-criminal network.

Apps banned: here is the list of apps banned from the Google Play Store:

- AA Kredit: इंस्टेंट लोन ऐप (com.aa.kredit.android)

- Amor Cash: Préstamos Sin Buró (com.amorcash.credito.prestamo)

- Oro Préstamo – Efectivo rápido (com.app.lo.go)

- Cashwow (com.cashwow.cow.eg)

- CrediBus Préstamos de crédito (com.dinero.profin.prestamo.credito.credit.credibus.loan.efectivo.cash)

- ยืมด้วยความมั่นใจ – ยืมด่วน (com.flashloan.wsft)

- PréstamosCrédito – GuayabaCash (com.guayaba.cash.okredito.mx.tala)

- Préstamos De Crédito-YumiCash (com.loan.cash.credit.tala.prestmo.fast.branch.mextamo)

- Go Crédito – de confianza (com.mlo.xango)

- Instantáneo Préstamo (com.mmp.optima)

- Cartera grande (com.mxolp.postloan)

- Rápido Crédito (com.okey.prestamo)

- Finupp Lending (com.shuiyiwenhua.gl)

- 4S Cash (com.swefjjghs.weejteop)

- TrueNaira – Online Loan (com.truenaira.cashloan.moneycredit)

- EasyCash (king.credit.ng)

- สินเชื่อปลอดภัย – สะดวก (com.sc.safe.credit)
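As an illustration only, the package IDs in the list above can be compared against the apps installed on a device. The sketch below assumes the installed packages are available as text with one entry per line, optionally prefixed with `package:` (the format printed by `adb shell pm list packages`). The function names `parse_package_lines` and `find_banned` are hypothetical helpers written for this example, not part of any official tool.

```python
# Package IDs copied from the banned-app list above.
BANNED_PACKAGES = {
    "com.aa.kredit.android",
    "com.amorcash.credito.prestamo",
    "com.app.lo.go",
    "com.cashwow.cow.eg",
    "com.dinero.profin.prestamo.credito.credit.credibus.loan.efectivo.cash",
    "com.flashloan.wsft",
    "com.guayaba.cash.okredito.mx.tala",
    "com.loan.cash.credit.tala.prestmo.fast.branch.mextamo",
    "com.mlo.xango",
    "com.mmp.optima",
    "com.mxolp.postloan",
    "com.okey.prestamo",
    "com.shuiyiwenhua.gl",
    "com.swefjjghs.weejteop",
    "com.truenaira.cashloan.moneycredit",
    "king.credit.ng",
    "com.sc.safe.credit",
}


def parse_package_lines(text):
    """Turn lines such as 'package:com.example.app' into a set of package IDs."""
    packages = set()
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        # Assumption: an optional 'package:' prefix, as printed by `pm list packages`.
        if line.startswith("package:"):
            line = line[len("package:"):]
        packages.add(line)
    return packages


def find_banned(installed):
    """Return the installed package IDs that appear in the banned list, sorted."""
    return sorted(set(installed) & BANNED_PACKAGES)


if __name__ == "__main__":
    sample = "package:com.cashwow.cow.eg\npackage:com.example.notes\n"
    for package_id in find_banned(parse_package_lines(sample)):
        print("Banned loan app installed:", package_id)
```

A match only shows that a listed package ID is present. An empty result does not prove a device is clean, since such apps are also distributed outside the Play Store under other names.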

Risks with several dimensions

SpyLoan apps violate Google's Financial Services policy by unilaterally shortening the repayment period for personal loans to a few days or another arbitrary time frame. Additionally, the operators threaten users with public embarrassment and exposure if they do not comply with such unreasonable demands.

Furthermore, the privacy policies presented by SpyLoan apps are misleading. While ostensibly reasonable justifications are provided for obtaining certain permissions, the underlying practices are very intrusive. For instance, camera permission is ostensibly required for picture data uploads for Know Your Customer (KYC) purposes, and access to the user's calendar is ostensibly required to plan payment dates and reminders. However, both of these permissions are dangerous and can potentially infringe on users' privacy.
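To make the permission concern concrete, here is a minimal sketch that flags sensitive entries in a list of permissions an app requests. The permission strings are standard Android permission names, but mapping them to the data categories mentioned in this article (for example, treating "logs" as call logs) is an assumption made for illustration, and `flag_sensitive` is a hypothetical helper.

```python
# Assumed mapping from Android permission names to the data categories
# described in this article; the article does not name exact permissions.
SENSITIVE_PERMISSIONS = {
    "android.permission.CAMERA": "camera",
    "android.permission.READ_CONTACTS": "contacts",
    "android.permission.READ_SMS": "messages",
    "android.permission.READ_CALL_LOG": "call logs",
    "android.permission.READ_EXTERNAL_STORAGE": "images and files",
    "android.permission.ACCESS_WIFI_STATE": "Wi-Fi network details",
    "android.permission.READ_CALENDAR": "calendar",
}


def flag_sensitive(requested):
    """Return the requested permissions that expose sensitive data, with labels."""
    return {
        permission: SENSITIVE_PERMISSIONS[permission]
        for permission in requested
        if permission in SENSITIVE_PERMISSIONS
    }


if __name__ == "__main__":
    requested = [
        "android.permission.INTERNET",
        "android.permission.CAMERA",
        "android.permission.READ_CALENDAR",
    ]
    for permission, category in flag_sensitive(requested).items():
        print(f"{permission} grants access to: {category}")
```

A flagged permission is not proof of abuse, since legitimate lenders may also request camera access for KYC. It is a prompt to ask whether the stated justification matches what the app actually needs.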

Prosecution Strategies and Legal Framework

Law enforcement agencies and legal authorities have initiated prosecution strategies against the individuals involved in the Spy Loan scandal. This multifaceted approach involves international agreements and the exploration of innovative legal avenues. Agencies need to collaborate with international counterparts on specific cyber-crimes, leveraging legal frameworks against digital fraud. Furthermore, the cross-border nature of the Spy Loan operation requires a strong legal framework for exchanging information, handling extradition requests, and pursuing legal action across multiple jurisdictions.

Legal Protections for Victims: Seeking Compensation and Restitution

As the legal battle unfolds in the aftermath of the Spy Loan scam, the focus shifts towards the victims, who suffer financial loss from such fraudulent apps. Beyond prosecuting culprits, the pursuit of justice should involve legal safeguards for victims. Existing consumer protection laws serve as a crucial shield for Spy Loan victims. These laws are designed to safeguard the rights of individuals against unfair practices.

Challenges in legal representation

As the legal hunt for justice in the Spy Loan scam progresses, it encounters challenges that demand careful navigation and strategic solutions. One of the primary obstacles in the legal pursuit of the Spy Loan apps lies in jurisdictional complexity. Even within national borders, it is challenging to determine which jurisdiction holds authority and to maintain a unified approach to prosecuting offenders across regions through the efforts of various government agencies.

Concealing the digital identities

One of the major challenges is the anonymity afforded by the digital realm, which makes it hard to identify and catch the perpetrators of the scam. The scammers conceal their identities, making it difficult for law enforcement agencies to attribute actions to individuals. This challenge can be overcome through joint efforts by international agencies and the use of advanced digital forensics and cutting-edge technology to unmask these scammers.

Technological challenges

The nature of cyber threats and crime patterns changes day by day as technology advances, which has become a challenge for legal authorities. Scammers exploit vulnerabilities, making it essential for law enforcement agencies to stay a step ahead, which requires continuous training in cybercrime and cyber security.

Shaping the policies to prevent future fraud

As the scam unfolds, it has become important to empower users through more awareness campaigns. App developers also need to be transparent with users.

Conclusion

It is important to shape policies that prevent future cyber frauds through a multifaceted approach. Proposals for legislative amendments, international collaboration, accountability measures, technology protections, and public awareness programs all contribute to the creation of a legal framework that is proactive, flexible, and robust to cybercriminals' shifting techniques. The legal system is at the forefront of this effort, playing a critical role in developing regulations that will protect the digital landscape for years to come.

Safeguarding against spyware threats like SpyLoan requires vigilance and adherence to best practices. Users should exclusively download apps from official sources, meticulously verify the authenticity of offerings, scrutinize reviews, and carefully assess permissions before installation.


Executive Summary

A video purportedly showing Muslims offering prayers inside a crowded train in Japan is being widely shared on social media, amid ongoing discussions around the country’s alleged rise in anti-immigration sentiment. The clip is being presented as a recent and real incident. However, research reveals that the video is not authentic. Experts noted that the prayer postures shown in the clip do not align with standard Islamic practices, raising doubts about its credibility. Further analysis indicates that the video has been generated using artificial intelligence (AI).

Claim

A user shared the viral video on YouTube, showing a group of men—mostly dressed in long tunics and skullcaps—appearing to offer prayers inside a moving subway train. Passengers can be seen seated on both sides of the carriage. In the clip, two men are kneeling on the floor and bowing their heads onto a small mat placed in front of them, with their heads coming very close to the knees of seated passengers. Another man is seen bending forward at the waist while standing, and a fourth appears to be standing upright with his eyes closed.

- Link: https://www.youtube.com/shorts/cZHMCUgbDIA


Fact Check

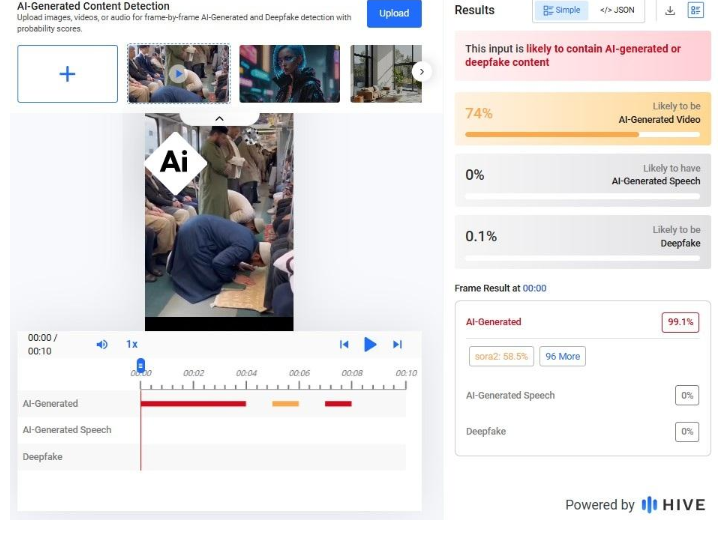

A closer examination of the video reveals several visual inconsistencies. One passenger appears to be fused with the seat rails, creating a distorted overlap. Others seem to be seated in areas where seats do not normally exist, such as directly in front of a door. Additionally, an advertisement visible in the background appears blurred and oddly shaped—another common indicator of AI-generated content. An analysis conducted using the Hive Moderation tool found that the video is “likely to contain AI-generated or deepfake content.”

Conclusion

The viral claim is misleading. The video does not depict a real incident in Japan. Instead, it is likely AI-generated content being circulated with a false narrative, misrepresenting both the context and religious practices shown in the clip.