#FactCheck - Old Wedding Fire Video Misleadingly Shared as Iranian Hypersonic Missile Strike in Tel Aviv

Executive Summary:

Amid the ongoing conflict involving the United States, Israel, and Iran, a video showing a building engulfed in flames is being widely circulated on social media. In the clip, a large fire can be seen inside a building while several people appear to be running in panic. The video is being shared with the claim that Iran fired a hypersonic missile targeting a ceremony in Tel Aviv, Israel, allegedly killing several Israeli military generals and other prominent figures.

However, research by CyberPeace found that the claim is false. The video being circulated as footage of an attack in Israel actually predates the current conflict and shows a fire that broke out during a wedding ceremony.

Claim

A Facebook user named “Syed Asif Raza Jafri” shared the video on March 13, 2026, claiming that an Iranian hypersonic missile had struck a grand ceremony in Tel Aviv, where several Israeli military officers, generals, soldiers, and other important personalities were present. According to the post, the attack resulted in multiple casualties.

Source:

- https://www.facebook.com/reel/902182825912364

- https://ghostarchive.org/archive/rZryr

Fact Check

To verify the claim, we began our research using the Google Lens reverse image search tool. Several key frames from the viral video were extracted and searched online.

During the search, we found the same video shared earlier on multiple foreign social media accounts. A Facebook user named “Es de Bombero” from Chile had posted the video on January 17, 2026, describing it in Spanish as footage of a fire that broke out during a wedding celebration.

Our research shows that the viral video had been circulating on social media since at least January 15, 2026, well before the escalation of the current conflict. According to a report published on March 1, 2026, by BBC, the large-scale attacks on Iran by the United States and Israel began on February 28, 2026, after which Iran’s Supreme Leader Ali Khamenei was reported dead.

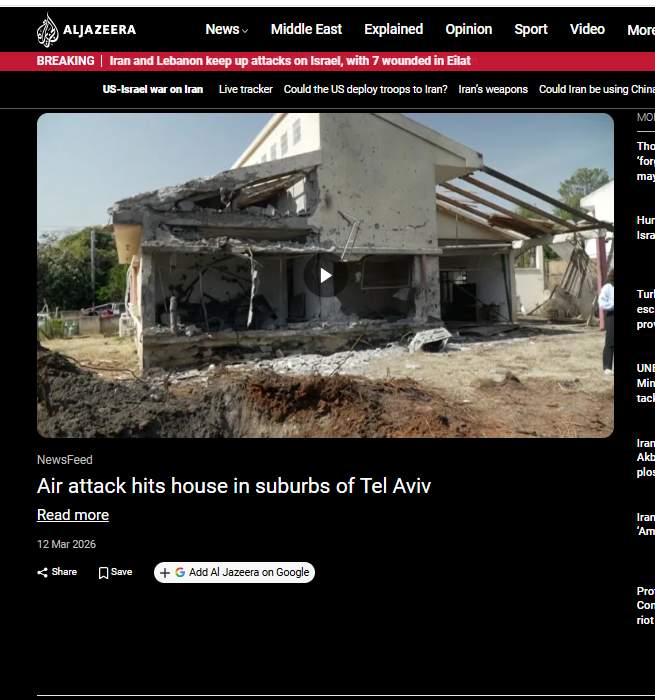

Additionally, a March 12, 2026 report by Al Jazeera stated that a house near Tel Aviv in central Israel was damaged by a rocket reportedly fired by Hezbollah, which has previously carried out joint attacks in coordination with Iran.
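The timeline reasoning above can be sketched as a simple date comparison. This is a minimal illustration, not the tooling used in the research: the function names are hypothetical, and the dates are the ones reported in this fact check.

```python
from datetime import date

# Dates as reported in this fact check.
EARLIEST_SIGHTING = date(2026, 1, 15)  # video already circulating online
CONFLICT_START = date(2026, 2, 28)     # start of large-scale attacks, per the BBC report
CLAIM_POSTED = date(2026, 3, 13)       # date of the viral Facebook post


def predates(sighting: date, event: date) -> bool:
    """Return True if the footage was online strictly before the event it is said to show."""
    return sighting < event


def days_between(earlier: date, later: date) -> int:
    """Number of days from the earlier date to the later date."""
    return (later - earlier).days


if __name__ == "__main__":
    print(predates(EARLIEST_SIGHTING, CONFLICT_START))
    print(days_between(EARLIEST_SIGHTING, CONFLICT_START))
```

If the earliest known copy of a video is older than the event it supposedly shows, the footage cannot depict that event, whatever its true origin.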

Conclusion

The viral video being shared as footage of an Iranian hypersonic missile strike in Tel Aviv is misleading. The clip is an older video of a fire that reportedly broke out during a wedding ceremony and was circulating online before the current conflict began.

While the exact location of the incident shown in the video cannot be independently verified, it is clear that the footage has no connection to the ongoing war between the United States, Israel, and Iran.

Related Blogs

Introduction

In a hyperconnected world, cyber incidents can no longer be treated as sporadic disruptions; they have become an everyday occurrence. Attacks today are both more consequential and far more frequent, ranging from ransomware that incapacitates a health system and phishing campaigns against financial institutions to state-sponsored attacks on critical infrastructure. Traditional methods alone are not enough to counter such threats, because they rely heavily on manual research and human intellect. Attackers operate with speed, scale, and stealth, while defenders are always several steps behind. To close this widening gap, incident response and crisis management need the support of automation and artificial intelligence (AI) for faster detection, context-driven decision-making, and collaborative response beyond human capabilities.

Incident Response and Crisis Management

Incident response is the structured way in which organisations detect, contain, and recover from security incidents. Crisis management takes this further, dealing not only with the technical fallout of a breach but also with its business, reputational, and regulatory implications. Organisations used to depend on manual teams sorting through logs, cross-correlating alarms, and generating responses, an approach that works for small volumes but quickly becomes inadequate in today's threat climate. Today's adversaries attack at machine speed, using automation to launch attacks. Under such circumstances, responding with slow, manual methods means delay and severe consequences. The introduction of AI and automation is a paradigm shift that allows organisations to respond to incidents with the same pace and precision with which attackers launch them.

How Automation Reinvents Response

Security automation frees analysts from tedious, repetitive tasks that consume their time. A human analyst has to pick out potential threats manually from a list of hundreds of alerts each day, whereas automated systems sift through the noise and focus only on genuine threats. Such systems can automatically disconnect malware-infected computers from the network to stop the infection spreading, or revoke the permissions of a suspicious account without human intervention. Security orchestration systems go further by introducing playbooks: predefined steps describing how incidents of a certain type (e.g., phishing attempts or malware infections) should be handled. This enables fast containment while ensuring consistency and minimising human error amid the urgency of dealing with thousands of alerts.
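The idea of alert triage plus playbooks can be sketched as follows. This is a minimal illustration under stated assumptions, not any particular product's API: the playbook contents, step names, severity scale, and function names are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical playbooks: incident type -> ordered response steps.
PLAYBOOKS = {
    "phishing": ["quarantine_email", "reset_credentials", "notify_user"],
    "malware": ["isolate_host", "revoke_account_permissions", "collect_forensics"],
}


@dataclass
class Alert:
    alert_id: str
    incident_type: str
    severity: int  # illustrative scale: 1 (low) to 10 (critical)


def triage(alerts, min_severity=5):
    """Drop low-severity noise and return the remaining alerts, most severe first."""
    kept = [a for a in alerts if a.severity >= min_severity]
    return sorted(kept, key=lambda a: a.severity, reverse=True)


def plan_response(alert):
    """Look up the playbook for an alert; unknown types are escalated to a human."""
    return PLAYBOOKS.get(alert.incident_type, ["escalate_to_analyst"])


if __name__ == "__main__":
    alerts = [
        Alert("a1", "phishing", 6),
        Alert("a2", "port_scan", 2),
        Alert("a3", "malware", 9),
    ]
    for alert in triage(alerts):
        print(alert.alert_id, plan_response(alert))
```

Keeping the steps in a lookup table rather than in ad hoc code is what gives the consistency described above: every incident of a given type is handled the same way, and anything without a playbook falls back to a human analyst.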

Automation takes care of threat detection, prioritisation, and containment, allowing human analysts to refocus on more complex decision-making. Instead of drowning in the sea of trivial alerts, security teams can now devote their efforts to more strategic areas: threat hunting and longer-term resilience. Automation is a strong tool of defence, cutting response times down from hours to minutes.

The Intelligence Layer: AI in Action

If automation provides speed, AI provides the intelligence and flexibility. Unlike older fixed-rule systems, AI-enabled solutions learn from experience, adapt to changing threats, and discover hidden patterns that human analysts might miss. For instance, machine learning algorithms learn what normal behaviour looks like on a corporate network and raise alerts on anomalies that could indicate an insider attack or an advanced persistent threat. Similarly, AI systems sift through global threat intelligence to predict likely attack vectors so that organisations can fix their vulnerabilities before they are exploited.

AI also boosts forensic analysis. Instead of searching endlessly for clues, analysts can let AI-driven systems trace an event back to its origin, identify the vulnerabilities exploited by attackers, and flag systems that are still under attack. During a crisis, AI acts as decision support, predicting the outcomes of different scenarios and recommending the best response. In response to a ransomware attack, for example, AI might advise, depending on context, isolating a single network segment, restoring from backup, or alerting law enforcement.
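The baseline-and-anomaly idea can be illustrated with a deliberately simple statistical stand-in. The sketch below uses a z-score over hourly login counts rather than a trained machine learning model; the data, threshold, and function name are assumptions made for illustration only.

```python
from statistics import mean, stdev


def find_anomalies(baseline, observed, threshold=3.0):
    """Return observed values lying more than `threshold` standard deviations
    from the mean of the baseline (a simple z-score test)."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is unusual.
        return [x for x in observed if x != mu]
    return [x for x in observed if abs(x - mu) / sigma > threshold]


if __name__ == "__main__":
    # Hypothetical logins per hour during normal operation.
    normal_logins = [10, 12, 11, 9, 10, 11, 12, 10]
    # New observations; the last one is a sudden burst.
    new_logins = [11, 10, 45]
    print(find_anomalies(normal_logins, new_logins))
```

Production systems model many signals at once and retrain as behaviour drifts, but the principle is the same: learn what "normal" looks like, then flag what falls far outside it.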

Real-World Applications and Case Studies

Automation and AI are already delivering these benefits in real-world applications. Consider, for example, IBM Watson for Cybersecurity, which has been applied to analysing unstructured threat intelligence and providing analysts with actionable results in minutes rather than days. Similarly, AI-driven systems in DARPA's Cyber Grand Challenge demonstrated the ability to identify a vulnerability and patch it automatically, revealing the potential of self-healing systems. AI-powered fraud detection systems stop suspicious transactions in the middle of their execution and work around the clock to prevent losses. What all these examples have in common is that automation and AI reduce human effort, increase accuracy, and, in the event of a cyberattack, buy precious time.

Challenges and Limitations

While promising, the technology is still not fully mature. The quality of an AI system depends heavily on its training data; poor training can generate false positives that overwhelm teams or, worse, false negatives that allow attackers to proceed unchecked. Attackers have also started targeting AI itself by poisoning datasets or designing malware that evades detection. Beyond these technical risks, the operational and financial costs of implementing advanced AI-based systems are a significant burden for any company. Organisations will have to spend not only on technology but also on training staff to make the best use of these tools. There are ethical and privacy issues to consider as well: because these systems may process sensitive personal data, they could come into conflict with data protection laws such as the GDPR or India's DPDP Act.

Creating a Human-AI Collaboration

The future is not going to be one of substitution by machines but of human-AI synergy. Automation can do the drudgery, AI can provide the intelligence, and human professionals can contribute judgment, imagination, and ethical decision-making. The goal should be AI-powered Security Operations Centres where technology and human experts work in tandem. AI models must be trained continuously to reduce false alarms and make them more resistant to adversarial attacks. Regular crisis drills that combine AI tools and human teams can ensure preparedness for real-time events. Likewise, it is worth integrating ethical AI guidelines into security frameworks to ensure a stronger defence while respecting privacy and regulatory compliance.

Conclusion

Cyberattacks are an inevitability in modern times, but their actual impact need not be so severe. By systematically integrating automation and AI into incident response and crisis management, organisations can shift their response to threats from reactive firefighting to proactive resilience. Automation gives speed and efficiency while AI gives intelligence and foresight, putting defenders on par with, and possibly ahead of, the speed and sophistication of attackers. But even the most advanced system would remain imperfect without human inquisitiveness, ethical reasoning, and strategic foresight. The best defence lies in a symbiotic human-machine relationship in which automation and AI handle the speed and volume of cyber threats, while human intellect ensures that every response is aligned with larger organisational goals. This synergy is where cybersecurity resilience will reside in the future: defenders will not just be reacting to emergencies but will be leading the way.

References

- https://www.sisainfosec.com/blogs/incident-response-automation/

- https://stratpilot.ai/role-of-ai-in-crisis-management-and-its-critical-importance/

- https://www.juvare.com/integrating-artificial-intelligence-into-crisis-management/

- https://www.motadata.com/blog/role-of-automation-in-incident-management/

Introduction

There is a rising demand for artificial intelligence (AI) laws that limit threats to public safety and protect human rights while allowing for a flexible and innovative environment. Most AI policies prioritize the use of AI for the public good. The most compelling reason for treating AI innovation as a valid goal of public policy is its promise to enhance people's lives by helping to resolve some of the world's most difficult problems and inefficiencies, and to emerge as a transformational technology similar to mobile computing. This blog explores the complex interplay between AI and internet governance from an Indian standpoint, examining the challenges, opportunities, and the necessity for a well-balanced approach.

Understanding Internet Governance

Before examining the connection between the two, let's establish a clear understanding of Internet Governance. It comprises the regulations, guidelines, and standards that shape the global operation and management of the Internet. With the internet being a shared resource, governance becomes crucial to ensure its accessibility, security, and equitable distribution of benefits.

The Indian Digital Revolution

India has witnessed an unprecedented digital revolution, with a massive surge in internet users and a burgeoning tech ecosystem. The government's Digital India initiative has played a crucial role in fostering a technology-driven environment, making technology accessible to even the remotest corners of the country. As AI applications become increasingly integrated into various sectors, the need for a comprehensive framework to govern these technologies becomes apparent.

AI and Internet Governance Nexus

The intersection of AI and Internet governance raises several critical questions. How should data, the lifeblood of AI, be governed? What role does privacy play in the era of AI-driven applications? How can India strike a balance between fostering innovation and safeguarding against potential risks associated with AI?

- AI's Role in Internet Governance:

Artificial Intelligence has emerged as a powerful force shaping the dynamics of the internet. From content moderation and cybersecurity to data analysis and personalized user experiences, AI plays a pivotal role in enhancing the efficiency and effectiveness of Internet governance mechanisms. Automated systems powered by AI algorithms are deployed to detect and respond to emerging threats, ensuring a safer online environment.

A comprehensive strategy for managing the interaction between AI and the internet is required to stimulate innovation while limiting hazards. Multistakeholder models that include input from governments, industry, academia, and civil society are gaining appeal as viable tools for developing comprehensive governance frameworks.

The usefulness of multistakeholder governance stems from its adaptability and flexibility in requiring collaboration from every player with a possible stake in an issue. Though imperfect, this approach allows its flaws to be remedied over time as knowledge builds up. As AI advances, this trait will become increasingly important in ensuring that all conceivable aspects are covered.

The Need for Adaptive Regulations

While AI's potential for good is essentially endless, so is its potential for damage, whether intentional or unintentional. The technology's highly disruptive nature calls for a strong, human-led governance framework and rules that ensure it is used in a positive and responsible manner. The fast emergence of GenAI, in particular, emphasizes the critical need for strong frameworks. Concerns about the usage of GenAI may strengthen efforts to solve issues around digital governance and hasten the formation of risk management measures.

Several AI governance frameworks have been published throughout the world in recent years, with the goal of offering high-level guidelines for safe and trustworthy AI development. Multinational organizations have released their own principles, including the OECD's "Principles on Artificial Intelligence" (OECD, 2019), the EU's "Ethics Guidelines for Trustworthy AI" (EU, 2019), and UNESCO's "Recommendation on the Ethics of Artificial Intelligence" (UNESCO, 2021). The advancement of GenAI has since resulted in additional recommendations, such as the OECD's "G7 Hiroshima Process on Generative Artificial Intelligence" (OECD, 2023).

Several guidance documents and voluntary frameworks have emerged at the national level in recent years, including the "AI Risk Management Framework" from the United States National Institute of Standards and Technology (NIST), a voluntary guidance published in January 2023, and the White House's "Blueprint for an AI Bill of Rights," a set of high-level principles published in October 2022 (The White House, 2022). These voluntary policies and frameworks are frequently used as guidelines by regulators and policymakers all around the world. More than 60 nations in the Americas, Africa, Asia, and Europe had issued national AI strategies as of 2023 (Stanford University).

Conclusion

Monitoring AI will be one of the most daunting tasks confronting the international community in the coming century. As vital as the need to govern AI is the need to regulate it appropriately. Current AI policy debates too often fall into a false dichotomy of progress versus doom (or geopolitical and economic benefits versus risk mitigation). Instead of reflecting creative thinking, proposed solutions all too often resemble paradigms for yesterday's problems. It is imperative that we foster a relationship between AI and Internet Governance that prioritizes innovation, ethical considerations, and inclusivity. Striking the right balance will empower us to harness the full potential of AI within the boundaries of responsible and transparent Internet Governance, ensuring a digital future that is secure, equitable, and beneficial for all.

References

- The Key Policy Frameworks Governing AI in India - Access Partnership

- AI in e-governance: A potential opportunity for India (indiaai.gov.in)

- India and the Artificial Intelligence Revolution - Carnegie India - Carnegie Endowment for International Peace

- Rise of AI in the Indian Economy (indiaai.gov.in)

- The OECD Artificial Intelligence Policy Observatory - OECD.AI

- Artificial Intelligence | UNESCO

- Artificial intelligence | NIST


Executive Summary:

A viral video of the Argentina football team dancing in the dressing room to a Bhojpuri song is being circulated on social media. After analyzing its authenticity, the CyberPeace Research Team found that the video was altered and the music was edited. The original footage was posted by former Argentine footballer Sergio Leonel Aguero on his official Instagram page on 19th December 2022. It shows Lionel Messi and his teammates celebrating their win at the 2022 FIFA World Cup. Contrary to the viral video, the song in the original footage is not in the Bhojpuri language. The viral video is cropped from a part of Aguero's upload, and the audio of the clip has been changed to incorporate the Bhojpuri song. Therefore, the claim that the Argentinian team danced to a Bhojpuri song is misleading.

Claims:

A video of the Argentina football team dancing to a Bhojpuri song after victory.

Fact Check:

On receiving these posts, we split the video into frames, performed a reverse image search on one of these frames, and found a video uploaded to the SKY SPORTS website on 19 December 2022.

We found that this is the same clip as in the viral video, but the celebration differs. Upon further analysis, we also found a live video uploaded by Argentinian footballer Sergio Leonel Aguero on his Instagram account on 19th December 2022. The viral video is a clip from his live video, and the song playing in it is not a Bhojpuri song.

This shows that the claim circulating on social media about the Argentina football team dancing to a Bhojpuri song is false and misleading. People should always check the authenticity of such content before sharing it.

Conclusion:

In conclusion, the video that appears to show Argentina's football team dancing to a Bhojpuri song is fake. It is a manipulated version of an original clip celebrating their 2022 FIFA World Cup victory, with the audio replaced by a Bhojpuri song. This confirms that the claim circulating on social media is false and misleading.

- Claim: A viral video of the Argentina football team dancing to a Bhojpuri song after victory.

- Claimed on: Instagram, YouTube

- Fact Check: Fake & Misleading