Introduction

The spread of high-speed broadband internet in the 1990s triggered a surge in online gaming, particularly in East Asian countries such as South Korea and China. This led to the proliferation of competitive video game genres, which had previously existed mostly as high-score and face-to-face competitions at arcades. The online competitive gaming market has grown steadily since, and professional competition now forms a domain of its own, known as esports. This industry is projected to reach US$5.9 billion by 2029, driven by advancements in gaming technology, increased viewership, multi-million dollar tournaments, professional leagues, sponsorships, and advertising revenues. However, the industry is still in its infancy and struggles with fairness and integrity issues. It can draw lessons in regulation from the traditional sports market to address these challenges and support uniform global growth.

The Growth of Esports

The appeal of online gaming lies in its design innovations, social connectivity, and accessibility. Its rising popularity has turned online gaming competitions into an industry, formally organised into leagues and tournaments with prize pools reaching millions of dollars. Professional teams now have coaches, analysts and psychologists supporting their players. For scale, the 2024 Esports World Cup (EWC) held in Saudi Arabia had the largest combined prize pool, at over US$60 million. Such tournaments can be viewed in arenas and streamed online, and by 2025, around 322.7 million people are forecast to be occasional viewers of esports events.

According to Statista, esports revenue is expected to grow at a compound annual growth rate (CAGR, 2024-2029) of 6.59%, resulting in a projected market volume of US$5.9 billion by 2029. Esports has even been recognised in traditional sporting events, debuting as a medal sport in the Asian Games 2022. In 2024, the International Olympic Committee (IOC) announced the Olympic Esports Games, with the inaugural event set to take place in 2025 in Saudi Arabia. Hosting esports events such as the EWC is expected to boost tourism and the host country’s local economy.
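The arithmetic behind the Statista projection can be checked with a short sketch. It assumes, as an illustration rather than something stated in the source, that 2024 is the base year and that growth compounds over five annual periods to 2029; the function names are hypothetical.

```python
def implied_base(final_value: float, cagr: float, years: int) -> float:
    """Starting value implied by a final value and a compound annual growth rate."""
    return final_value / (1 + cagr) ** years


def project(base: float, cagr: float, years: int) -> float:
    """Value reached after compounding `base` at `cagr` for `years` periods."""
    return base * (1 + cagr) ** years


# Figures from the text: US$5.9 billion by 2029 at a 6.59% CAGR.
# Assumption: five compounding periods (2024 -> 2029).
base_2024 = implied_base(5.9, 0.0659, 5)
print(f"Implied 2024 revenue: US${base_2024:.2f} billion")
print(f"Projected 2029 revenue: US${project(base_2024, 0.0659, 5):.2f} billion")
```

Under these assumptions, the 2029 figure corresponds to a 2024 starting point of roughly US$4.3 billion.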

The Challenges of Esports Regulation

While the esports ecosystem provides numerous opportunities for growth and partnerships, its under-regulation presents challenges. With no single governing body to lay down centralised rules, as the IOC does for the Olympics or FIFA for football, the industry faces issues such as:

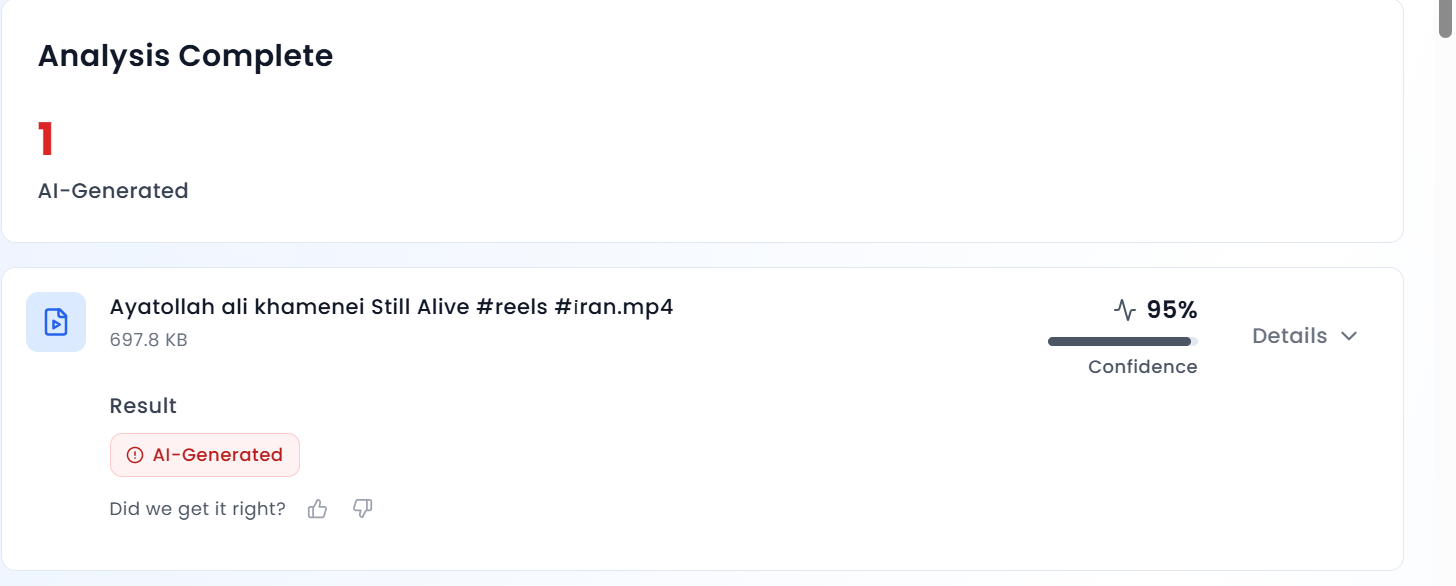

- Integrity Issues: Esports are not immune to cheating attempts. Match-fixing, advanced software hacks, doping (e.g., Adderall use), and the use of other illegal aids are common. DOTA, Counter-Strike, and Overwatch tournaments are particularly susceptible to cheating scandals.

- Players’ Rights: The teams that contract professional players pay their remuneration and exercise significant control over them. Players face overwork, stress, short careers, instability, and the absence of collective bargaining forums.

- Fragmented National Regulations: While multiple countries have recognised esports as a sport, policies on esports governance and allied regulation vary within and across borders. For example, age restrictions and laws on gambling, taxation, labour, and advertising differ by country. This can create confusion, risks and extra costs, impacting the growth of the ecosystem.

- Cybersecurity Concerns: The esports industry carries substantial prize pools and has growing viewer engagement, which makes it increasingly vulnerable to Distributed Denial of Service (DDoS) attacks, malware, ransomware, data breaches, phishing, and account hijacking. Tournament organisers must prioritise investments in secure network infrastructure, perform regular security audits, encrypt sensitive data, implement network monitoring, utilise API penetration testing tools, deploy intrusion detection systems, and establish comprehensive incident response and mitigation plans.

Proposals for Esports Regulation: Lessons from Traditional Sports

To address the most urgent challenges to the esports industry as outlined above, the following interventions, drawing on the governance and regulatory frameworks of traditional sports, can be made:

- Need for a Centralised Esports Governing Body: Unlike traditional sports, the esports landscape lacks a Global Sports Organisation (GSO) to oversee its governance. Instead, it is handled de facto by game publishers with industry interests different from those of traditional GSOs. Publishers’ primary source of revenue is not esports, which means they can adopt policies unsuitable for its growth but good for their core business. Appointing a centralised governing body with the power to balance the interests of multiple stakeholders and manage issues like unregulated gambling, athlete health, and integrity challenges is a logical next step for this industry.

- Gambling/Betting Regulations: While national laws on gambling and betting vary, GSOs establish uniform codes of conduct that bind participants contractually, ensuring consistent ethical standards across jurisdictions. In esports, similar rules are set by individual publishers and tournament organisers, leading to inconsistencies and legal grey areas. The esports ecosystem needs standardised regulation to preserve fair play codes and competitive integrity.

- Anti-Doping Policies: As the monetary stakes in esports rise, Adderall abuse is increasing among young players seeking to enhance their performance. The industry must establish a global framework similar to the World Anti-Doping Code, which, in conjunction with eight international standards, harmonises anti-doping policies across traditional sports and countries. The esports industry should either adopt this code or develop its own policy to curb stimulant abuse.

- Norms for Participant Health: Professional players start around age 16 or 17 and tend to retire around 24. They may be subjected to rigorous practice hours and stringent contracts by the teams that contract them. There is a need for international norm-setting by a federation that oversees the protection of underage players. Enforcement of these norms can be one of the responsibilities of a decentralised system of country and state-level bodies, which would also support fair play governance.

- Respect and Diversity: While esports is technologically accessible, it still has room for better representation of diverse gender identities, age groups, abilities, races, ethnicities, religions, and sexual orientations. Embracing greater diversity and inclusivity would benefit the industry's growth and enhance its potential to foster social connectivity through healthy competition.

Conclusion

The development of the world’s first esports island in Abu Dhabi gives impetus to the rapidly growing esports industry with millions of fans across the globe. To sustain this momentum, stakeholders must collaborate to build a strong governance framework that protects players, supports fans, and strengthens the ecosystem. By learning from traditional sports, esports can establish centralised governance, enforce standardised anti-doping measures, safeguard athlete rights, and promote inclusivity, especially for young and diverse communities. Embracing regulation and inclusivity will not only enhance esports' credibility but also position it as a powerful platform for unity, creativity, and social connection in the digital age.
