#FactCheck – Elephant Falls From Truck? No, This Elephant Fall Video Is AI-Manipulated

Executive Summary:

A video circulating on social media claims to show a live elephant falling from a moving truck due to improper transportation, followed by the animal quickly standing up and reacting on a public road. The content may raise concerns related to animal cruelty, public safety, and improper transport practices. A detailed examination using AI content detection tools and visual anomaly analysis indicates that the video is not authentic and is likely AI generated or digitally manipulated.

Claim:

The viral video (archive link) shows a disturbing scene where a large elephant is allegedly being transported in an open blue truck with barriers for support. As the truck moves along the road, the elephant shifts its weight and the weak side barrier breaks. This causes the elephant to fall onto the road, where it lands heavily on its side. Shortly after, the animal is seen getting back on its feet and reacting in distress, facing the vehicle that is recording the incident. The footage may raise serious concerns about safety, as elephants are normally transported in reinforced containers, and such an incident on a public road could endanger both the animal and people nearby.

Fact Check:

After receiving the video, we closely examined the visuals and noticed some inconsistencies that raised doubts about its authenticity. In particular, the elephant is seen recovering and standing up unnaturally quickly after a severe fall, which does not align with realistic animal behavior or physical response to such impact.

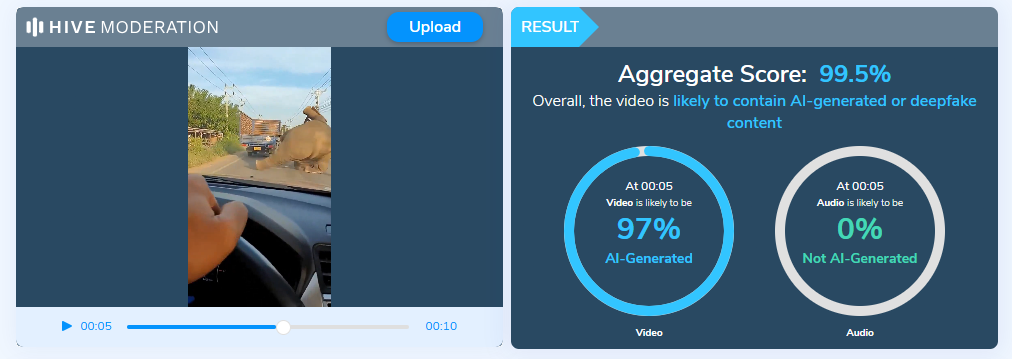

To further verify our observations, the video was analyzed using the Hive Moderation AI Detection tool, which indicated that the content is likely AI generated or digitally manipulated.

Additionally, no credible news reports or official sources were found to corroborate the incident, reinforcing the conclusion that the video is misleading.

Conclusion:

The claim that the video shows a real elephant transport accident is false and misleading. Based on AI detection results, observable visual anomalies, and the absence of credible reporting, the video is highly likely to be AI generated or digitally manipulated. Viewers are advised to exercise caution and verify such sensational content through trusted and authoritative sources before sharing.

- Claim: The viral video shows an elephant allegedly being transported, where a barrier breaks as it moves, causing the animal to fall onto the road before quickly getting back on its feet.

- Claimed On: X (formerly Twitter)

- Fact Check: False and Misleading

Related Blogs

Executive Summary

A viral graphic post on social media claims that India’s GDP (Gross Domestic Product) was “fake for 10 years.” The post also states that the real economic growth was around 4%, while official figures reported it at 6%. It further cites a former Chief Economic Adviser (Ex-CEA) and presents the claim as a “revelation.”

Research by the CyberPeace Research Wing found this claim to be misleading. No official government document states that India’s GDP was “fake,” and neither India’s Ministry of Statistics and Programme Implementation (MoSPI), the Reserve Bank of India (RBI), nor any recognised international institution has said so.

Claim

On the social media platform Instagram, a user shared a post claiming that the Chief Economic Adviser said India’s GDP (Gross Domestic Product) was “fake for 10 years.” The link to the post and its archive link are given below, along with a screenshot.

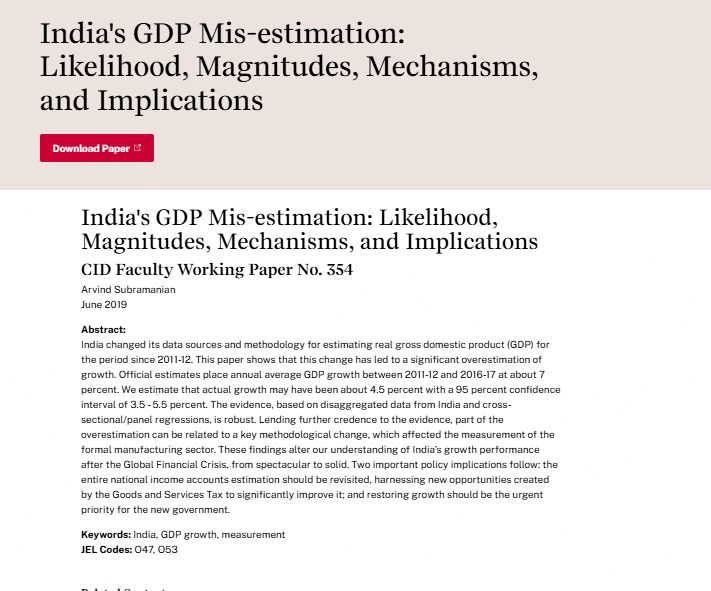

The viral post refers to a 2019 research paper linked to former Chief Economic Adviser (Ex-CEA) Arvind Subramanian. In this study, he raised questions about India’s GDP growth estimation and suggested that between 2011–12 and 2016–17, the actual growth could have been around 4.5%, while the official estimate was close to 7%.

However, the study does not conclude anywhere that India’s GDP was “fake” or entirely incorrect. It only presents an alternative estimation based on different assumptions and methods, which has also been challenged by other economists and government agencies.

- https://www.hks.harvard.edu/centers/cid/publications/faculty-working-papers/india-gdp-overestimate?utm_source

Conclusion:

The claim circulating on social media is misleading. The former Chief Economic Adviser provided an academic view on GDP estimation, but there is no evidence or official confirmation that India’s GDP was “fake for 10 years.” The figures circulated on social media are not supported by the data released by the Government of India.

Introduction

As the sun rises on the Indian subcontinent, a nation teeters on the precipice of a democratic exercise of colossal magnitude. The Lok Sabha elections, held every five years and mobilising the will of nearly a billion voters, are not just a testament to the robustness of India's democratic fabric but also a crucible where the veracity of information is put to the sternest of tests. In this context, the World Economic Forum's 'Global Risks Report 2024' emerges as a harbinger of a disconcerting trend: the spectre of misinformation and disinformation that threatens to distort the electoral landscape.

The report, a carefully crafted document that shares the insights of 1,490 experts from across academia, government, business and civil society, paints a tableau of the global risks that loom large over the next decade. These risks, spawned by the churning cauldron of rapid technological change, economic uncertainty, a warming planet, and simmering conflict, are not just abstract threats but tangible realities that could shape the future of nations.

India’s Electoral Malice

India, as it strides towards the general elections scheduled in the spring of 2024, finds itself in the vortex of this hailstorm. The WEF survey positions India at the zenith of vulnerability to disinformation and misinformation, a dubious distinction that underscores the challenges facing the world's largest democracy. The report depicts misinformation and disinformation as the chimaeras of false information—whether inadvertent or deliberate—that are dispersed through the arteries of media networks, skewing public opinion towards a pervasive distrust in facts and authority. This encompasses a panoply of deceptive content: fabricated, false, manipulated and imposter.

The United States, the European Union, and the United Kingdom are also ensnared, to varying degrees, in this web of misinformation. South Africa, another nation on the cusp of its own electoral journey, is ranked 22nd, a reflection of the global reach of this phenomenon. The findings, derived from a survey conducted over the autumnal weeks of September to October 2023, reveal a world grappling with the shadowy forces of untruth.

Global Scenario

The report prognosticates that as close to three billion individuals across diverse economies—Bangladesh, India, Indonesia, Mexico, Pakistan, the United Kingdom, and the United States—prepare to exercise their electoral rights, the rampant use of misinformation and disinformation, and the tools that propagate them, could erode the legitimacy of the governments they elect. The repercussions could be dire, ranging from violent protests and hate crimes to civil confrontation and terrorism.

Beyond the electoral arena, the fabric of reality itself is at risk of becoming increasingly polarised, seeping into the public discourse on issues as varied as public health and social justice. As the bedrock of truth is undermined, the spectre of domestic propaganda and censorship looms large, potentially empowering governments to wield control over information based on their own interpretation of 'truth.'

The report further warns that disinformation will become increasingly personalised and targeted, homing in on specific groups such as minority communities and spreading through more opaque messaging platforms like WhatsApp or WeChat. This tailored approach to deception signifies a new frontier in the battle against misinformation.

In a world where societal polarisation and economic downturn are seen as central risks in an interconnected 'risks network,' misinformation and disinformation have ascended rapidly to the top of the threat hierarchy. The report's respondents—two-thirds of them—cite extreme weather, AI-generated misinformation and disinformation, and societal and/or political polarisation as the most pressing global risks, followed closely by the 'cost-of-living crisis,' 'cyberattacks,' and 'economic downturn.'

Current Situation

In this unprecedented year for elections, the spectre of false information looms as one of the major threats to the global populace, according to the experts surveyed for the WEF's Global Risks Report 2024. The report offers a nuanced analysis of the degrees to which misinformation and disinformation are perceived as problems for a selection of countries over the next two years, based on a ranking of 34 economic, environmental, geopolitical, societal, and technological risks.

India, the land of ancient wisdom and modern innovation, stands at the crossroads where the risk of disinformation and misinformation is ranked highest. Out of all the risks, these twin scourges were most frequently selected as the number one risk for the country by the experts, eclipsing infectious diseases, illicit economic activity, inequality, and labor shortages. The South Asian nation's next general election, set to unfurl between April and May 2024, will be a litmus test for its 1.4 billion people.

The spectre of fake news is not a novel adversary for India. The 2019 election was rife with misinformation, with reports of political parties weaponising platforms like WhatsApp and Facebook to spread incendiary messages, stoking fears that online vitriol could spill over into real-world violence. The COVID-19 pandemic further exacerbated the issue, with misinformation once again proliferating through WhatsApp.

Other countries facing a high risk of the impacts of misinformation and disinformation include El Salvador, Saudi Arabia, Pakistan, Romania, Ireland, Czechia, the United States, Sierra Leone, France, and Finland, all of which consider the threat to be one of the top six most dangerous risks out of 34 in the coming two years. In the United Kingdom, misinformation/disinformation is ranked 11th among perceived threats.

The WEF analysts conclude that the presence of misinformation and disinformation in these electoral processes could seriously destabilise the real and perceived legitimacy of newly elected governments, risking political unrest, violence, and terrorism, and a longer-term erosion of democratic processes.

The World Economic Forum's 'Global Risks Report 2024' ranks India first in the world for the risk of misinformation and disinformation, at a time when the country faces general elections this year. The report, the 19th edition of the series, was released in early January and draws on the Global Risks Perception Survey. It sets out the varying degrees to which misinformation and disinformation are rated as problems for a selection of analysed countries over the next two years, based on a ranking of 34 economic, environmental, geopolitical, societal, and technological risks.

Some governments and platforms aiming to protect free speech and civil liberties may fail to act effectively to curb falsified information and harmful content, making the definition of 'truth' increasingly contentious across societies. State and non-state actors alike may leverage false information to widen fractures in societal views, erode public confidence in political institutions, and threaten national cohesion and coherence.

Trust in specific leaders will confer trust in information, and the authority of these actors—from conspiracy theorists, including politicians, and extremist groups to influencers and business leaders—could be amplified as they become arbiters of truth.

False information could not only be used as a source of societal disruption but also of control by domestic actors in pursuit of political agendas. The erosion of political checks and balances and the growth in tools that spread and control information could amplify the efficacy of domestic disinformation over the next two years.

Global internet freedom is already in decline, and access to more comprehensive sets of information has dropped in numerous countries. The implication: Falls in press freedoms in recent years and a related lack of strong investigative media are significant vulnerabilities set to grow.

Advisory

Here are specific best practices for citizens to help prevent the spread of misinformation during electoral processes:

- Verify Information: Double-check the accuracy of information before sharing it. Use reliable sources and fact-checking websites to verify claims.

- Cross-Check Multiple Sources: Consult multiple reputable news sources to ensure that the information is consistent across different platforms.

- Be Wary of Social Media: Social media platforms are susceptible to misinformation. Be cautious about sharing or believing information solely based on social media posts.

- Check Dates and Context: Ensure that information is current and consider the context in which it is presented. Misinformation often thrives when details are taken out of context.

- Promote Media Literacy: Educate yourself and others on media literacy to discern reliable sources from unreliable ones. Be skeptical of sensational headlines and clickbait.

- Report False Information: Report instances of misinformation to the platform hosting the content and encourage others to do the same. Utilise fact-checking organisations or tools to report and debunk false information.

- Critical Thinking: Foster critical thinking skills among your community members. Encourage them to question information and think critically before accepting or sharing it.

- Share Official Information: Share official statements and information from reputable sources, such as government election commissions, to ensure accuracy.

- Avoid Echo Chambers: Engage with diverse sources of information to avoid being in an 'echo chamber' where misinformation can thrive.

- Be Responsible in Sharing: Before sharing information, consider the potential impact it may have. Refrain from sharing unverified or sensational content that can contribute to misinformation.

- Promote Open Dialogue: Encourage open discussions within your community about the significance of factual information and the dangers of misinformation.

- Stay Calm and Informed: During critical periods, such as election days, stay calm and rely on official sources for updates. Avoid spreading unverified information that can contribute to panic or confusion.

- Support Media Literacy Programs: Support media literacy programs in schools, which give individuals the essential skills to navigate the flood of information.

Conclusion

Preventing misinformation requires a collective effort from individuals, communities, and platforms. By adopting these best practices, citizens can play a vital role in reducing the impact of misinformation during electoral processes.

References:

- https://thewire.in/media/survey-finds-false-information-risk-highest-in-india

- https://thesouthfirst.com/pti/india-faces-highest-risk-of-disinformation-in-general-elections-world-economic-forum/

India is the world's largest democracy, and conducting free and fair elections is a mammoth task shouldered by the Election Commission of India. But technology is transforming every aspect of the electoral process in the digital age, with Artificial Intelligence (AI) being integrated into campaigns, voter engagement, and election monitoring. In the upcoming Bihar elections of 2025, all eyes are on how the use of AI will influence the state polls and the precedent it will set for future elections.

Opportunities: Harnessing AI for Better Elections

Breaking Language Barriers with AI

AI is reshaping political outreach by making speeches accessible in multiple languages. At the Kashi Tamil Sangamam in 2024, the PM’s Hindi address was AI-dubbed in Tamil in real time. Since then, several speeches have been rolled out in eight languages, ensuring inclusivity and connecting with voters beyond Hindi-speaking regions more effectively.

Monitoring and Transparency

During Bihar’s Panchayat polls, the State Election Commission used Staqu’s JARVIS, an AI-powered system that connects with CCTV cameras to monitor EVM screens in real time. By reducing human error, JARVIS brought greater accuracy, speed, and trust to the counting process.

AI for Information Access on Public Service Delivery

NaMo AI is a multilingual chatbot that citizens can use to inquire about the details of public services. The feature aims to make government schemes transparent and easy to understand, and to help voters connect directly with the policies of the government.

Personalised Campaigning

AI is transforming how campaigns connect with voters. By analysing demographics and social media activity, AI builds detailed voter profiles. This helps craft messages that feel personal, whether on WhatsApp, a robocall, or a social media post, ensuring each group hears what matters most to them. This aims to make political outreach sharper and more effective.

Challenges: The Dark Side of AI in Elections

Deepfakes and Disinformation

AI-powered deepfakes create hyper-realistic videos and audio that are nearly impossible to distinguish from real recordings. In elections, they can distort public perception, damage reputations, or fuel disharmony on social media. There is a need for mandatory disclaimers stating when content is AI-generated, to ensure transparency and protect voters from manipulative misinformation.

Data Privacy and Behavioural Manipulation

Cambridge Analytica’s consulting services, which relied on data harvested from millions of Facebook users without their consent, revealed how personal data can be weaponised in politics. This data was allegedly used to “microtarget” users through ads, which could influence their political opinions. Data mining of this nature can be supercharged through AI models, jeopardising user privacy, trust, and safety, and casting a shadow on democratic processes worldwide.

Algorithmic Bias

AI systems are trained on datasets. If the datasets contain biases, AI-driven tools could unintentionally reinforce stereotypes or favor certain groups, leading to unfair outcomes in campaigning or voter engagement.

The Road Ahead: Striking a Balance

The adoption of AI in elections opens a Pandora's box of uncertainties. On the one hand, it offers solutions for breaking language barriers and promoting inclusivity. On the other hand, it opens the door to manipulation and privacy violations.

To counter risks from deepfakes and synthetic content, political parties are now advised to clearly label AI-generated materials and add disclaimers in their campaign messaging. In Delhi, a nodal officer has even been appointed to monitor social media misuse, including the circulation of deepfake videos during elections. The Election Commission of India constantly has to keep up with trends and tactics used by political parties to ensure that elections remain free and fair.

Conclusion

With Bihar’s pioneering use of JARVIS in Panchayat elections to make vote counting faster and more accurate, India is witnessing both sides of this technological revolution. Deepfakes, algorithmic bias, and data misuse remind us of the risks when technology oversteps. The real challenge is to strike the right balance: embracing AI in elections to enhance inclusivity and transparency, while safeguarding trust, privacy, and the integrity of democratic processes, so that AI strengthens democracy rather than undermining it.

References

- https://timesofindia.indiatimes.com/india/how-ai-is-rewriting-the-rules-of-election-campaign-in-india/articleshow/120848499.cms#

- https://m.economictimes.com/news/elections/lok-sabha/india/2024-polls-stand-out-for-use-of-ai-to-bridge-language-barriers/articleshow/108737700.cms

- https://www.ndtv.com/india-news/namo-ai-on-namo-app-a-unique-chatbot-that-will-answer-everything-on-pm-modi-govt-schemes-achievements-5426028

- https://timesofindia.indiatimes.com/gadgets-news/staqu-deploys-jarvis-to-facilitate-automated-vote-counting-for-bihar-panchayat-polls/articleshow/87307475.cms

- https://www.drishtiias.com/daily-updates/daily-news-editorials/deepfakes-in-elections-challenges-and-mitigation

- https://internetpolicy.mit.edu/blog-2018-fb-cambridgeanalytica/

- https://www.deccanherald.com/elections/delhi/delhi-assembly-elections-2025-use-ai-transparently-eci-issues-guidelines-for-political-parties-3357978#