#FactCheck - Digitally Manipulated Video Misrepresents Surinder Choudhary’s Remarks on PM Modi

Executive Summary

A video circulating on social media claims that Jammu and Kashmir Deputy Chief Minister Surinder Choudhary described Prime Minister Narendra Modi as an agent of Pakistan’s Inter-Services Intelligence (ISI). In the viral clip, Choudhary is allegedly heard accusing the Prime Minister of pushing Kashmir towards Pakistan and claiming that even pro-India Kashmiris are disillusioned with Modi’s policies.

However, the CyberPeace research wing found that the video is digitally manipulated. While the visuals are genuine and taken from a real media interaction, the audio has been fabricated and overlaid to falsely attribute inflammatory remarks to the Deputy Chief Minister.

Claim

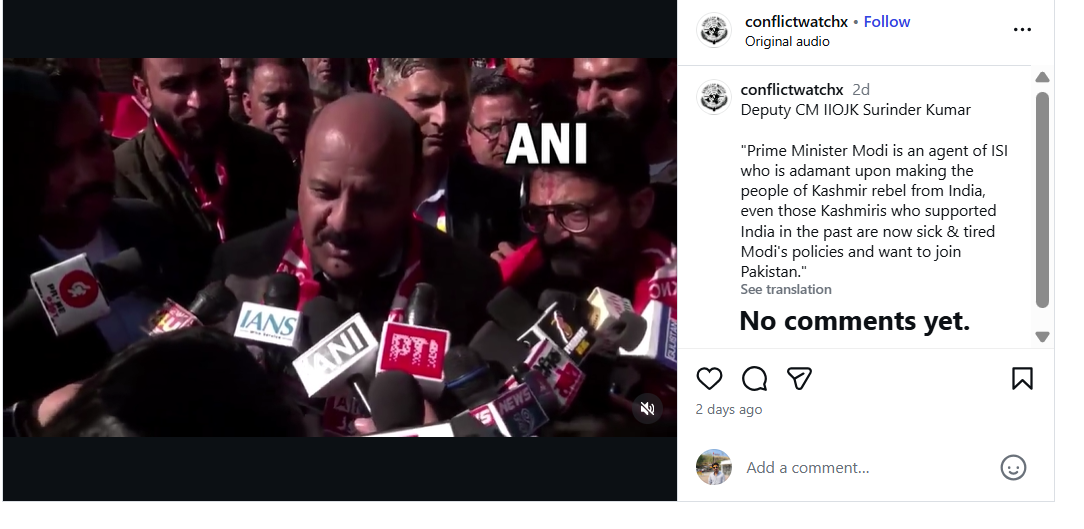

An Instagram account named Conflict Watch shared the video on January 20, claiming that J&K Deputy Chief Minister Surinder Choudhary had called Prime Minister Modi an ISI agent. The video purportedly quoted Choudhary as saying that Modi was elected with Pakistan’s support and that Kashmir would soon become part of Pakistan due to his policies.

Here is the link and archive link to the post, along with a screenshot.

Fact Check

To verify the claim, the Desk conducted a Google Lens search, which led to a video uploaded on January 20, 2026, on the official YouTube channel of Jammu and Kashmir–based news outlet JKUpdate. The footage was an extended version of the viral clip and featured identical visuals. The original video showed Surinder Choudhary addressing the media on the sidelines of the inaugural two-day JKNC Convention of Block Presidents and Secretaries in the Jammu province. A review of the full media interaction revealed that Choudhary did not make any statements calling Prime Minister Modi an ISI agent or suggesting that Kashmir should join Pakistan.

Instead, in the original footage, Choudhary was seen criticising former Jammu and Kashmir Chief Minister and PDP leader Mehbooba Mufti for supporting the BJP during the bifurcation of the erstwhile state into two Union Territories, Jammu and Kashmir and Ladakh. He also spoke about the challenges faced by the region after the abrogation of Article 370 and demanded the restoration of full statehood for Jammu and Kashmir. During the interaction, Choudhary said that anyone attempting to divide Jammu and Kashmir at the state or regional level was effectively following Pakistan’s agenda and Jinnah’s two-nation theory. He added that such individuals could not be considered patriots.

Here is the link to the video, along with a screenshot.

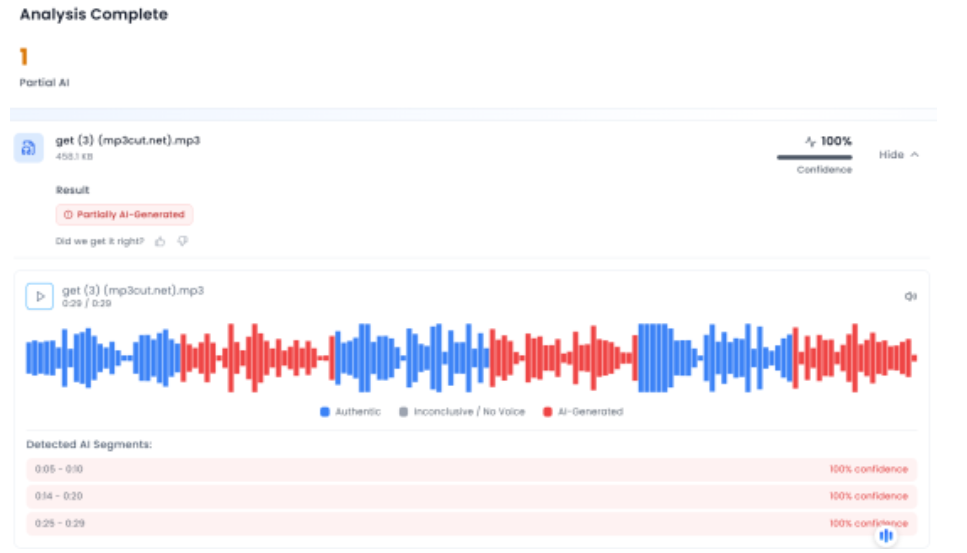

In the next phase of the research, the Desk extracted the audio from the viral clip and analysed it using the AI-based audio detection tool Aurigin. The analysis indicated that the voice in the viral video was partially AI-generated, further confirming that the clip had been tampered with.

Below is a screenshot of the result.

Conclusion

Multiple social media users shared a video claiming it showed Jammu and Kashmir Deputy Chief Minister Surinder Choudhary calling Prime Minister Narendra Modi an agent of the ISI. However, CyberPeace found that the viral video was digitally manipulated. While the visuals were taken from a genuine media interaction with the leader, a fabricated audio track was overlaid to falsely attribute the statements to him.