#FactCheck - AI-Generated Video of Peacock ‘Rescue’ Falsely Shared as Real

Executive Summary:

A video showing a peacock allegedly trapped in ice has been going viral on social media. In the clip, the peacock appears to be frozen in a snow-covered area. Moments later, a man is seen approaching with a hammer and breaking the ice to rescue the bird. Social media users are sharing the video as a real-life incident, praising the peacock’s resilience and describing the scene as inspiring. However, CyberPeace research found the viral claim to be misleading. Our research revealed that the video was created using Artificial Intelligence (AI) and is being falsely circulated as a real incident.

Claim:

Facebook user ‘Ras Bihari Pathak’ shared the viral video on January 25, 2026, with the caption: “This peacock is not standing on ice, but on courage. It reminds us that no matter how harsh the circumstances are, hope always returns in colours.” The archived version of the post can be accessed here.

Fact Check:

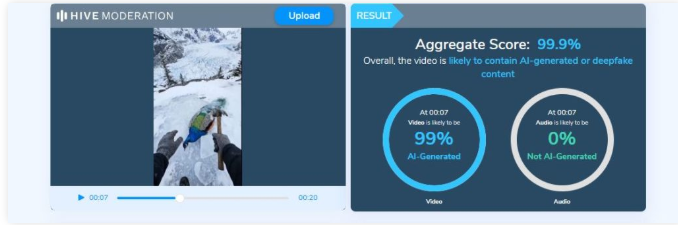

To verify the claim, we first conducted a keyword search on Google to check whether any such real incident involving a peacock trapped in ice had been reported. However, no credible or verified media reports were found. Next, we closely examined the viral video. Upon observation, the peacock’s movements and reactions appeared unnatural and artificial. The motion lacked realistic physical behaviour, raising suspicion that the video might have been digitally generated. To confirm this, we analysed the clip using the AI video detection tool Hive Moderation, which indicated a 99 per cent or higher likelihood that the video was AI-generated.

Conclusion:

CyberPeace research confirms that the viral video showing a peacock allegedly trapped in ice is not real. The clip has been created using Artificial Intelligence and is being shared on social media with a false and misleading claim.

Related Blogs

Introduction

In today’s data-driven world, the more data a company holds, the more influence it has over the market, which is why companies are looking for ways to use data to improve their business. At the same time, they must protect people’s privacy, and striking a balance between the two is tricky. Imagine baking a cake where you need every ingredient to make it taste great, but no one should be able to tell what went into it. That is roughly what companies face when it comes to data. Here, ‘pseudonymisation’ emerges as a critical technical and legal mechanism that offers a middle ground between data anonymisation and unrestricted data processing.

Legal Framework and Regulatory Landscape

Pseudonymisation, as defined by the General Data Protection Regulation (GDPR) in Article 4(5), refers to “the processing of personal data in such a manner that the personal data can no longer be attributed to a specific data subject without the use of additional information, provided that such additional information is kept separately and is subject to technical and organisational measures to ensure that the personal data are not attributed to an identified or identifiable natural person”. This technique represents a paradigm shift in data protection strategy, enabling organisations to preserve data utility while significantly reducing privacy risks. The growing importance of this balance is evident in the proliferation of data protection laws worldwide, from GDPR in Europe to India’s Digital Personal Data Protection Act (DPDP) of 2023.
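The separation that Article 4(5) describes can be illustrated with a minimal sketch. It assumes a simple in-memory token store; the `TokenVault` class, its method names, and the sample records are hypothetical and are not taken from the GDPR or from any specific product. The point is only that the working dataset carries random tokens, while the mapping back to identities (the "additional information") lives in a separate object that would need its own access controls.

```python
import secrets


class TokenVault:
    """Hypothetical store for the 'additional information' (token <-> identity)."""

    def __init__(self):
        self._token_by_identity = {}
        self._identity_by_token = {}

    def pseudonymise(self, identity: str) -> str:
        # Reuse an existing token so one person always maps to one pseudonym.
        if identity not in self._token_by_identity:
            token = secrets.token_hex(8)  # random, so it reveals nothing by itself
            self._token_by_identity[identity] = token
            self._identity_by_token[token] = identity
        return self._token_by_identity[identity]

    def reidentify(self, token: str) -> str:
        # Reversal is only possible for whoever holds the vault.
        return self._identity_by_token[token]


# Illustrative records; the names and cities are made up.
vault = TokenVault()
records = [
    {"name": "Asha Rao", "city": "Pune"},
    {"name": "Asha Rao", "city": "Delhi"},
]

# The working dataset keeps the useful attributes but drops the direct identifier.
pseudonymised = [
    {"subject": vault.pseudonymise(r["name"]), "city": r["city"]} for r in records
]
```

In a real deployment the vault would sit in a separate system with restricted access, which is what the "kept separately" condition is about. Because the mapping still exists, the data can be re-identified and so stays within the definition of personal data.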

Its legal treatment varies across jurisdictions, but a convergent approach is emerging that recognises its value as a data protection safeguard while maintaining that pseudonymised data remains personal data. Article 25(1) of GDPR recognises it as “an appropriate technical and organisational measure” and emphasises its role in reducing risks to data subjects. It protects personal data by reducing the risk of identifying individuals during data processing. The European Data Protection Board’s (EDPB) 2025 Guidelines on Pseudonymisation provide detailed guidance emphasising the importance of defining the “pseudonymisation domain”. This domain defines who is prevented from attributing data to specific individuals, and ensures that technical and organisational measures are in place to block unauthorised linkage of pseudonymised data to the original data subjects. In India, while the DPDP Act does not explicitly define pseudonymisation, legal scholars argue that such data would still fall under the definition of personal data, as it remains potentially identifiable. Section 2(t) of the Act defines personal data broadly as “any data about an individual who is identifiable by or in relation to such data,” suggesting that pseudonymised information, being reversible, would continue to require compliance with data protection obligations.

Further, the DPDP Act, 2023 also includes principles of data minimisation and purpose limitation. Section 8(4) says that a “Data Fiduciary shall implement appropriate technical and organisational measures to ensure effective observance of the provisions of this Act and the Rules made under it.” Pseudonymisation fits here because it is a recognised technical safeguard, which means companies can use it as part of their compliance toolkit under Section 8(4) of the DPDP Act. However, its use should be assessed on a case-by-case basis, since ‘encryption’ is also considered one of the strongest methods for protecting personal data. The suitability of pseudonymisation depends on the nature of the processing activity, the type of data involved, and the level of risk that needs to be mitigated. In practice, organisations may use pseudonymisation in combination with other safeguards to strengthen overall compliance and security.

The European Court of Justice’s recent jurisprudence has introduced nuanced considerations about when pseudonymised data might not constitute personal data for certain entities. In cases where only the original controller possesses the means to re-identify individuals, third parties processing such data may not be subject to the full scope of data protection obligations, provided they cannot reasonably identify the data subjects. The “means reasonably likely” assessment represents a significant development in understanding the boundaries of data protection law.

Corporate Implementation Strategies

Companies find that pseudonymisation is not just about following rules, but it also brings real benefits. By using this technique, businesses can keep their data more secure and reduce the damage in the event of a breach. Customers feel more confident knowing that their information is protected, which builds trust. Additionally, companies can utilise this data for their research or other important purposes without compromising user privacy.

Key Benefits of Pseudonymisation:

- Enhanced Privacy Protection: It replaces personal details such as names or IDs with artificial values or codes, making accidental privacy breaches less likely.

- Preserved Data Utility: Unlike completely anonymous data, pseudonymised data keeps its usefulness by maintaining important patterns and relationships within datasets.

- Facilitated Data Sharing: It is easier to share pseudonymised data with partners or researchers because it protects privacy while still being useful.

However, using pseudonymisation is not easy, as companies have to deal with tricky technical issues such as choosing the right method (for example, encryption or tokenisation) and managing security keys safely. They also have to implement strong policies to stop anyone from figuring out who the data belongs to. This can get expensive and complicated, especially when dealing with large amounts of data, and it often requires expert help and regular upkeep.
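One common way to approach the method and key-management questions above is keyed hashing. The sketch below is an assumption-laden illustration, not a recommended production design: the `SECRET_KEY` value, the `pseudonym` helper, and the sample purchases are all hypothetical, and a real key would be generated randomly and held in a separate key-management system rather than in source code.

```python
import hashlib
import hmac
from collections import Counter

# Illustrative key only; in practice it would be stored apart from the data.
SECRET_KEY = b"demo-key-kept-apart-from-the-data"


def pseudonym(identifier: str, key: bytes = SECRET_KEY) -> str:
    """Derive a stable pseudonym from an identifier using HMAC-SHA256."""
    digest = hmac.new(key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]  # truncated for readability


# Made-up purchase records keyed by email address.
purchases = [
    ("asha@example.com", 250),
    ("ravi@example.com", 100),
    ("asha@example.com", 75),
]

# Replace the email with its pseudonym before analysis or sharing.
pseudonymised = [(pseudonym(email), amount) for email, amount in purchases]

# The same customer always gets the same pseudonym, so per-customer
# patterns survive even though the email itself is gone.
orders_per_customer = Counter(p for p, _ in pseudonymised)
```

The same identifier always yields the same pseudonym under a given key, which is what preserves relationships within the dataset. Anyone who obtains the key can recompute pseudonyms for known identifiers, so protecting and rotating the key is where much of the operational cost mentioned above comes from.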

Balancing Privacy Rights and Data Utility

The primary challenge in pseudonymisation is striking the right balance between protecting individuals' privacy and maintaining the utility of the data. To get this right, companies need to consider several factors, such as why they are using the data, the skill level of a potential attacker, and the type of data being used.

Conclusion

Pseudonymisation offers a practical middle ground between full anonymisation and unrestricted data use, enabling organisations to harness the value of data while protecting individual privacy. Legally, it is recognised as a safeguard, but pseudonymised data is still treated as personal data, requiring compliance under frameworks like GDPR and India’s DPDP Act. For companies, it is not only a matter of regulatory adherence but also a way to build trust and enhance data security. However, its effectiveness depends on robust technical methods, governance, and vigilance. Striking the right balance between privacy and data utility is crucial for sustainable, ethical, and innovation-driven data practices.

References:

- https://gdpr-info.eu/art-4-gdpr/

- https://www.meity.gov.in/static/uploads/2024/06/2bf1f0e9f04e6fb4f8fef35e82c42aa5.pdf

- https://gdpr-info.eu/art-25-gdpr/

- https://www.edpb.europa.eu/system/files/2025-01/edpb_guidelines_202501_pseudonymisation_en.pdf

- https://curia.europa.eu/juris/document/document.jsf?text=&docid=303863&pageIndex=0&doclang=EN&mode=req&dir=&occ=first&part=1&cid=16466915


Executive Summary:

A viral image circulating on social media claims to show a Hindu Sadhvi marrying a Muslim man; however, this claim is false. A thorough investigation by the CyberPeace Research team found that the image has been digitally manipulated. In the original photo, which Balmukund Acharya, a BJP MLA from Jaipur, posted on his official Facebook account in December 2023, he is posing with a Muslim man in his election office. The man wearing the Muslim skullcap is featured in several other photos on Acharya's Instagram account, where he expressed gratitude for the support from the Muslim community. Thus, the claimed image of a marriage between a Hindu Sadhvi and a Muslim man is digitally altered.

Claims:

An image circulating on social media claims to show a Hindu Sadhvi marrying a Muslim man.

Fact Check:

Upon receiving the posts, we ran a reverse image search to find any credible sources. We found a photo posted by Balmukund Acharya Hathoj Dham on his Facebook page on 6 December 2023.

This photo was digitally altered and then posted on social media to mislead. We also found several other photos featuring the man in the skullcap.

We also checked the viral image for AI fabrication using a detection tool named “content@scale” AI Image detection. This tool found the image to be 95% AI manipulated.

For further validation, we checked with another detection tool, the “isitai” image detection tool. It rated the image as 38.50% AI content. Together, these results indicate that the image is manipulated and does not support the claim made. Hence, the viral image is fake and misleading.

Conclusion:

The lack of a credible source and the detection of AI manipulation in the image indicate that the viral image claiming to show a Hindu Sadhvi marrying a Muslim man is false. It has been digitally altered. The original image features BJP MLA Balmukund Acharya posing with a Muslim man, and there is no evidence of the claimed marriage.

- Claim: An image circulating on social media claims to show a Hindu Sadhvi marrying a Muslim man.

- Claimed on: X (Formerly known as Twitter)

- Fact Check: Fake & Misleading

Introduction

In the rapidly evolving landscape of cyber threats, a novel menace has surfaced: the concept of Digital Arrest. Impostors posing as law enforcement officers deceive victims into believing that their bank account, SIM card, Aadhaar card, or bank card has been used unlawfully, and then coerce them into paying money. Digital Arrest involves the virtual restraint of individuals. These restraints can range from restricted access to accounts and digital platforms, to measures that prevent further digital activity, to being confined to or monitored through a video call. In an era of digitisation where technology is growing at an exponential pace, wrongdoers are exploiting existing loopholes, which has given rise to this sinister trend known as “digital arrest fraud”. In this scam, the defrauder, impersonating a law enforcement official, manipulates victims and traps them in a web of deception involving threats of imminent digital restraint and coerced financial transactions.

Recognizing the Danger of Digital Arrest

A recent case involving an interactive voice response (IVR) call that targeted a victim sheds light on the complexities of the "digital arrest" cybercrime. The scammers, who were pretending to be law enforcement officers, told the victim that a SIM card in her name had apparently been used in a criminal incident in Mumbai. The call then moved to a video conversation with a purported FBI agent, who falsely accused her of being involved in money laundering. Believing she was implicated in a criminal case, the victim was drawn into a web of deception, which underscores the psychological manipulation the scammers were using.

Recent incidents of digital arrest fraud

- Recently, a complaint was registered at the Noida Cyber Crime Police Station by a 50-year-old victim who was defrauded of over Rs 11 lakh and subjected to "digital arrest". Using the identities of an IPS officer in the CBI and the founder of a grounded airline, the attackers, masquerading as law enforcement officers, falsely accused the victim of involvement in a money-laundering case. She was told that another SIM card in her name had been used for fraudulent activities in Mumbai. According to the complaint, the victim’s call was transferred to a person who identified himself as a Mumbai Police officer and who conducted the initial interrogation over the call and then on a Skype video call, where she stayed from 9:30 AM to around 7 in the evening. The woman ended up transferring around ₹11.11 lakh. The scammers then ended contact with her, after which she realised she had been scammed.

- Another recent case of digital arrest fraud came from Faridabad, where a 23-year-old woman got a call from a fraudster posing as a Lucknow customs officer. The caller said that a package being shipped to Cambodia contained cards and passports associated with the victim's Aadhaar number. The victim was led to believe that she was part of illegal activity, including human trafficking. Posing as police officials, the fraudsters made up allegations before extorting money from her. She was then told by a man posing as a CBI official that she needed to pay five per cent of the total, which was Rs 15 lakh. She said the cybercriminals instructed her not to log off Skype. In the meantime, she ended up transferring Rs 2.5 lakh to a bank account shared by the cybercriminals.

Measures to protect oneself from digital arrest

Maintaining a practical and observant approach to cybersecurity is the key to lowering the risk of being targeted by digital arrest fraud. The following are some best practices:

- Cyber Hygiene: Regularly update passwords and software, and enable two-factor authentication to reduce the chances of unauthorized access.

- Phishing Attempts: These can be evaded by refraining from clicking on dubious links or downloading attachments from unknown sources, and by verifying the legitimacy of emails and messages before sharing any personal information.

- Secured devices: Install reputable antivirus and anti-malware solutions, and keep operating systems and applications up to date with the latest security patches.

- Virtual Private Networks (VPNs): VPNs can be employed to encrypt internet connections, thus enhancing privacy and security. However, one must be cautious of free VPN services and opt only for trustworthy providers.

- Monitor online services: A regular review of online accounts for any unauthorized or unlawful activities and setting up alerts for any changes to account settings or login attempts may help in the early detection of cybercrime and coping with it.

- Secure communication channels: Use secure communication techniques such as encryption to protect sensitive information. Passwords and other sensitive information must be shared with caution, especially in public forums.

- Awareness: The increasing prevalence of the cybercrime known as "digital arrest" underscores the need for preventive measures and increased public awareness. Educational initiatives that draw attention to prevalent cyber threats, especially those that involve law enforcement impersonation, can enable people to identify and fend off scams of this kind. Collaboration between law enforcement agencies and telecommunication companies can effectively limit the access points used by fraudsters by identifying and blocking suspicious calls.

Conclusion

The rise of Digital Arrest presents a noteworthy and novel threat to cybersecurity, one that takes advantage of people's weaknesses through deceitful impersonation and coercive measures. The case in Noida is a prime example of the boldness and skill of cybercriminals, who use fear and false information to trick victims into thinking they are in danger of suffering harsh legal repercussions and then extract large amounts of money from them. To combat this growing cybercrime, people need to take a proactive and watchful stance on cybersecurity. Cyber hygiene techniques, such as two-factor authentication and frequent password changes, are essential for lowering the possibility of unauthorized access. Important precautions include being aware of phishing attempts, protecting devices with reliable antivirus software, and using Virtual Private Networks (VPNs) to increase privacy. Cybercriminals and fraudsters often use fear as a powerful tool to manipulate people and exploit their vulnerabilities for illicit gain. To protect themselves against the threat of Digital Arrest, netizens must navigate the constantly changing cyber threat landscape with collective knowledge, informed practices, and strong cybersecurity measures.

References:

- https://www.business-standard.com/india-news/new-cyber-crime-trend-unravelled-in-up-woman-held-under-digital-arrest-123120200485_1.html

- https://www.businessinsider.in/india/news/noida-woman-scammed-11-lakh-in-digital-arrest-scam-everything-you-need-to-know/articleshow/105727970.cms

- https://m.timesofindia.com/life-style/parenting/moments/23-year-old-faridabad-girl-on-digital-arrest-for-17-days-how-to-protect-your-children-from-cyber-crime/photostory/105442556.cms