# Fact Check: AI Video Falsely Shows Afghanistan Downing Pakistani Fighter Jet

Executive Summary:

Amid escalating tensions between Afghanistan and Pakistan, a video is being widely shared on social media claiming that Afghanistan has shot down a Pakistani fighter jet. The posts further allege that the incident marks the formal beginning of a war between the two countries. However, research conducted by CyberPeace found the viral claim to be false: the circulating video is not authentic but AI-generated.

Claim

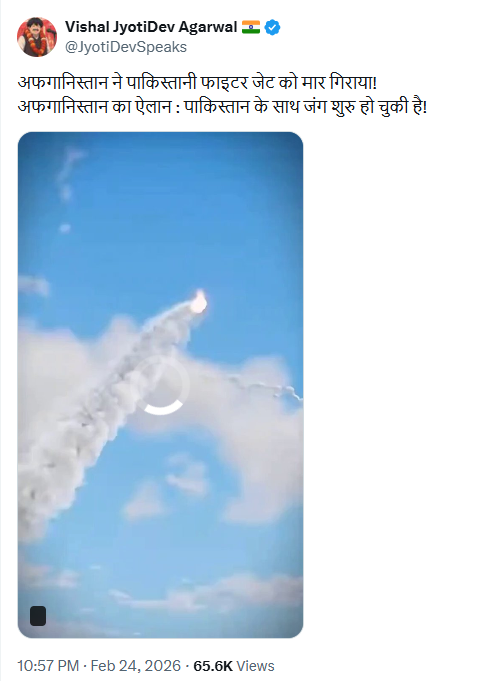

On February 24, 2026, a user on X (formerly Twitter) shared the viral video with the caption: “Afghanistan has shot down a Pakistani fighter jet! Afghanistan announces that war with Pakistan has begun.”

- Original post link: https://x.com/JyotiDevSpeaks/status/2026348257186545914

- Archived link: https://ghostarchive.org/archive/7l00Y

Fact Check:

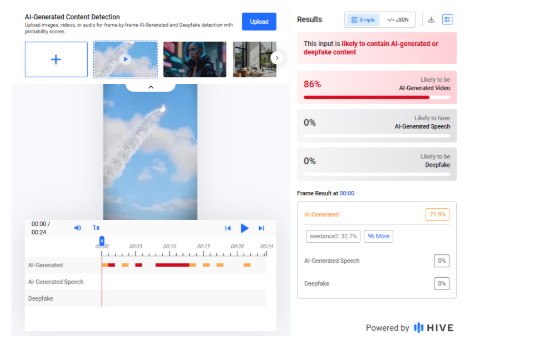

A careful review of the viral video revealed unusual visual patterns and artificial-looking effects, raising suspicions that it may have been created using artificial intelligence. We analyzed the video using the AI detection tool Hive Moderation, which indicated an 86 percent probability that the video was AI-generated.

To further verify the findings, we scanned the footage using another AI detection platform, Sightengine. The results showed a 99 percent likelihood that the video was AI-generated.

To understand the broader context of the ongoing tensions, we conducted a keyword search and found a report published on February 22, 2026, by BBC Hindi. According to the report, Pakistan claimed it had targeted “seven terrorist hideouts and camps” along the Pakistan–Afghanistan border based on intelligence inputs. Meanwhile, a spokesperson for the Taliban government in Afghanistan stated that Pakistani airstrikes in Nangarhar and Paktika provinces resulted in the deaths of dozens of people, including women and children.

- https://www.bbc.com/hindi/articles/clyz8141397o

Conclusion

Our research confirms that the viral video claiming Afghanistan shot down a Pakistani fighter jet and formally declared war on Pakistan is fake. The footage is AI-generated and is being circulated with a false and misleading narrative.

Related Blogs

In the 21st century, wars are no longer confined to land, sea, and air. They are increasingly playing out across the digital domain, where dominance over networks, data, and communications determines who holds the upper hand. Within this domain, 5G networks are becoming a defining factor on modern battlefields. Their ultra-low latency, massive bandwidth, and ability to connect many devices at once are transforming the scale of military operations, intelligence, and logistics. This unprecedented connectivity also brings a host of cybersecurity vulnerabilities that governments and militaries have to address.

As India faces a challenging security environment, the emergence of 5G presents both an opportunity and a dilemma. On one hand, it can enhance command, control, surveillance and battlefield coordination. On the other hand, it exposes the military and security establishments to risks of espionage and supply chain vulnerabilities. Striking a balance between innovation and security will be essential to turning 5G into a strength rather than a liability.

How can 5G networks be a military asset?

Compared with its predecessors, 5G is not just about faster downloads. It is a complete overhaul of network architecture, designed to support modern services and technologies. In military applications, 5G networks can provide a series of game-changing capabilities, such as:

- Enhanced command and control through real-time data sharing between troops, UAVs, radar systems and command cells, enabling faster and better-coordinated decision-making.

- Tactical situational awareness, with 5G-enabled devices giving soldiers instant updates on terrain, friendly troop positions and enemy movements.

- Advanced Intelligence, Surveillance and Reconnaissance (ISR), with high-resolution sensors, radars and UAVs operating at their full potential by transmitting vast data streams with minimal latency.

5G networks can also become a key component of the communication backbone of military command establishments, allowing machines, sensors and human operators to function as a single, integrated force.

5G networks as the double-edged sword of connectivity

The potential of 5G is undeniable, but its vulnerabilities cannot be ignored, because 5G networks are software-driven and reliant on dense networks of small cells. For the military, this means adversaries could exploit these weaknesses to disrupt communication, jam signals, and intercept sensitive data. Key risks include:

- Cybersecurity threats: software-based architectures make 5G networks prone to malware and data breaches.

- Supply chain risks: reliance on foreign hardware and software components raises fears of embedded backdoors or compromised systems.

- Signal jamming and interference: in the millimetre-wave spectrum, 5G signals are vulnerable to disruption in contested environments.

- Insider threats and physical sabotage: compromised personnel or unsecured installations could undermine network integrity.

Securing the Backbone: Cyber Defence Imperatives

To safeguard 5G networks as the backbone of future warfare, defence establishments need to adopt a layered, proactive cybersecurity strategy. Several measures can be considered, such as:

- Ensuring robust encryption and authentication to protect sensitive data, which requires deploying mechanisms such as the Subscription Concealed Identifier (SUCI) and zero-trust frameworks that eliminate implicit trust.

- Investing in domestic R&D for 5G components to reduce dependency on foreign suppliers. India’s adoption of the 5Gi standard is a step in this direction, but upgrading it to military grade remains vital.

- Collaborating across sectors, with the defence forces working alongside civilian agencies and private telecom providers to create unified standards and best practices.

Thus, by embedding cybersecurity into every layer of the 5G architecture, India can reduce risks and maintain its operational resilience.

The geopolitical dimension of 5G networks as a tool of warfare

The introduction of 5G networks is a clear technological advance for the communication sector, but it also carries a geopolitical dimension. The strategic competition between the US and China to dominate 5G infrastructure has global security implications. For India, aligning closely with either bloc would pose a risk to its strategic autonomy, while pursuing non-alignment can give India some leverage to develop its capability on its own.

In this context, partnership within the Quad, alongside the US, Japan and Australia, can open avenues for cooperation on shared standards, cost sharing, and interoperability in 5G-enabled military systems. India can also learn from countries like the US and Israel, which are developing their defence communication and network infrastructure to secure 5G networks, and existing frameworks with the US such as COMCASA and BECA can serve as platforms to explore joint protocols for 5G networks.

Conclusion: Opportunities and the way forward

The 5G network is becoming part of the central nervous system of future battlefields. It offers immense opportunities for India to modernise its defence capabilities and enhance situational awareness by integrating AI-driven systems. The way forward lies in a balanced strategy: developing indigenous capabilities, forging trusted partnerships, embedding cybersecurity into every layer of the network architecture, and preparing a skilled workforce to analyse and counter evolving threats. With such foresighted preparedness, India can turn the double-edged sword of 5G into a decisive advantage, not only adapting to the digital battlefield but also leading it.


Pretext

The Army Welfare Education Society has informed parents and students that a scam is targeting students of Army schools. The scammer approaches students using faked female and male voices and asks for their personal information and photos, claiming the details are being collected for an event organised by the Army Welfare Education Society to celebrate Independence Day. The Society has warned parents to beware of these calls.

Students of Army schools in Jammu & Kashmir and Noida are receiving calls from the scammer and being asked to share sensitive information. Students across the country are receiving calls and WhatsApp messages from two numbers ending in 1715 and 2167. The scammers pose as teachers and ask for students’ names on the pretext of adding them to WhatsApp groups. They then send form links to those groups and ask students to fill out the forms in order to extract more sensitive information.

Do’s

- Do make sure to verify the caller.

- Do block the caller if the call seems suspicious.

- Do be careful when sharing personal information.

- Do inform the school authorities if you receive calls or messages from people posing as teachers.

- Do check the legitimacy of any agency or organisation before sharing details.

- Do record calls that ask for personal information.

- Do inform parents about scam calls.

- Do cross-check the caller by asking for crucial information.

- Do make others aware of the scam.

Don’ts

- Don’t answer anonymous or unknown calls.

- Don’t share personal information with anyone.

- Don’t share OTPs with anyone.

- Don’t open suspicious links.

- Don’t fill in any forms asking for personal information.

- Don’t confirm your identity until you know who the caller is.

- Don’t reply to messages asking for financial information.

- Don’t visit a website just because a caller prompts you to; it may be fake.

- Don’t share bank details or passwords.

- Don’t make payments at the prompting of a suspicious caller.


Introduction

The recent events in Mira Road, a bustling suburb on the outskirts of Mumbai, India, unfold like a modern-day parable, cautioning us against the perils of unverified digital content. The Mira Road incident, a communal clash that erupted into the physical realm, has been mirrored and magnified through the prism of social media. The Maharashtra Police, in a concerted effort to quell the spread of discord, issued stern warnings against the dissemination of rumours and fake messages. These digital phantoms, they stressed, have the potential to ignite law and order conflagrations, threatening the delicate tapestry of peace.

The police's clarion call came in the wake of a video, mischievously edited, that falsely claimed anti-social elements had set the Mira Road railway station ablaze. This digital doppelgänger of reality swiftly went viral, its tendrils reaching into the ubiquitous realm of WhatsApp, ensnaring the unsuspecting in its web of deceit.

In this age of information overload, where the line between fact and fabrication blurs, the police urged citizens to exercise discernment. The note they issued was not merely an advisory but a plea for vigilance, a reminder that the act of sharing unauthenticated messages is not a passive one; it is an act that can disturb the peace and unravel the fabric of society.

The Massacre

The police's response to this crisis was multifaceted. Administrators and members of social media groups found to be the harbingers of such falsehoods would face legal repercussions. The Thane District, a mosaic of cultural and religious significance, has been marred by a series of violent incidents, casting a shadow over its storied history. The police, in their role as guardians of order, have detained individuals, scoured social media for inauthentic posts, and maintained a vigilant presence in the region.

The Maharashtra cyber cell, a digital sentinel, has unearthed approximately 15 posts laden with videos and messages designed to sow discord among the masses. These findings were shared with the Mira-Bhayandar, Vasai-Virar (MBVV) police, who stand ready to take appropriate action. Inspector General Yashasvi Yadav of the Maharashtra cyber cell issued an appeal to the public, urging them to refrain from circulating such unverified messages, reinforcing the notion that the propagation of inauthentic information is, in itself, a crime.

The MBVV police, in their zero-tolerance stance, have formed a team dedicated to scrutinizing social media posts. The message is clear: fake news will be met with strict action. The right to free speech on social media comes with the responsibility not to share information that could incite mischief. The Indian Penal Code and Information Technology Act serve as the bulwarks against such transgressions.

The Aftermath

In the aftermath of the clashes, the police have worked tirelessly to restore calm. A young man, whose video replete with harsh and obscene language went viral, was apprehended and has since apologised for his actions. The MBVV police have also taken to social media to reassure the public that the situation is under control, urging them to avoid circulating messages that could exacerbate tensions.

The Thane district has witnessed acts of vandalism targeting shops, further escalating tensions. In response, the police have apprehended individuals linked to these acts, hoping that such measures will expedite the return of peace. Advisories have been issued, warning against the dissemination of provocative messages and rumours.

In total, 19 individuals have been taken into custody in relation to numerous incidents of violence. The Mira-Bhayandar and Vasai-Virar police have underscored their commitment to legal action against those who spread rumours through fake messages. The authorities have also highlighted the importance of brotherhood and unity, reminding citizens that above all, they are Indians first.

Conclusion

In a world where old videos, stripped of context, can fuel tensions, the police have issued a note referring to the aforementioned fake video message. They urge citizens to exercise caution, to neither believe nor circulate such messages. Police authorities have assured that no one involved in the violence will be spared, and peace committees are being convened to restore harmony. The Mira Road incident serves as a reminder of the power of information and the responsibility that comes with it. In the digital age, where the ephemeral and the eternal collide, we must navigate the waters of truth with care. Ultimately, it is not just the image of a locality that is at stake, but the essence of our collective humanity.
