#FactCheck: Viral video claims BSF personnel thrashing a person selling Bangladesh National Flag in West Bengal

Executive Summary:

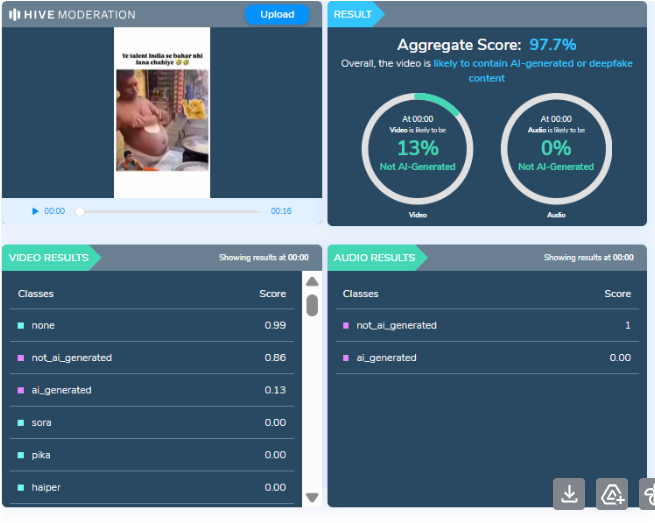

A video circulating online claims to show a man being assaulted by BSF personnel in India for selling Bangladesh flags at a football stadium. The footage has stirred strong reactions and cross-border concerns. However, our research confirms that the video is neither recent nor related to any incident in India. The footage has been shared with a false framing that misrepresents the actual incident.

Claim:

A viral post on social media claims that a Border Security Force (BSF) soldier physically attacked a man in West Bengal, India, for allegedly selling the national flag of Bangladesh. The viral video further implies that the incident reflects political hostility towards Bangladesh within Indian territory.

Fact Check:

After conducting thorough research, including visual verification, reverse image searching, and confirming elements in the video background, we determined that the video was filmed outside Bangabandhu National Stadium in Dhaka, Bangladesh, during the crowd buildup prior to an AFC Asian Cup match between Bangladesh and Singapore.

Further research confirmed that the man seen being assaulted is a local flag-seller named Hannan. Eyewitness accounts and local news sources indicate that Bangladeshi Army officials were present to manage the crowd on the day in question. During the crowd-control effort, a soldier used excessive force against the vendor. The incident sparked outrage, and the Army responded by identifying the officer responsible and taking disciplinary measures. The victim was reported to have been offered reparations for the misconduct.

Conclusion:

Our research confirms that the viral video does not depict any incident in India. The claim that BSF personnel assaulted a man for selling Bangladesh flags is completely false and misleading. The real incident occurred in Bangladesh and involved a local army official during crowd control at a football event. This case highlights the importance of verifying viral content before sharing, as misinformation can lead to unnecessary panic, tension, and international misunderstanding.

- Claim: Viral video claims BSF personnel thrashing a person selling Bangladesh National Flag in West Bengal

- Claimed On: Social Media

- Fact Check: False and Misleading