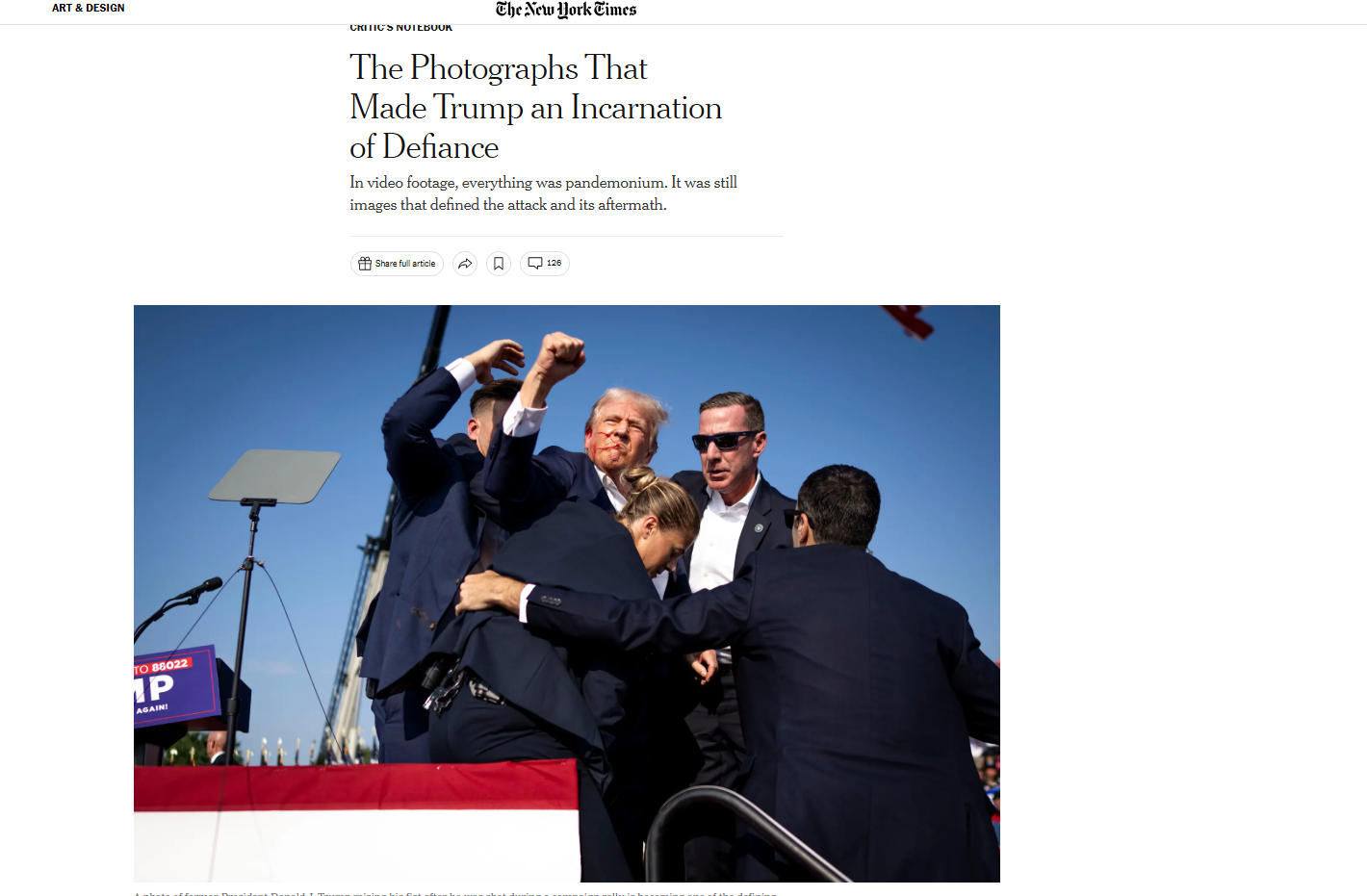

#FactCheck-AI-Generated Image Falsely Shows Donald Trump Raising Fist During Washington Incident

Executive Summary

A photo of Donald Trump is going viral on social media, showing him raising his fist. Users claim the image was taken during a press event in Washington, when security personnel were escorting him out amid reports of gunfire. Research by CyberPeace Research Wing found that the viral image is AI-generated and is being shared with misleading claims.

Claim

On April 26, 2026, an X user shared the image with the caption: “Thank You, Lord our God, for protecting our President.” The post suggests that Trump made the gesture during a chaotic evacuation at a Washington event.

Fact Check

Reports confirm that Trump and senior officials were hurried away from the White House Correspondents’ Association dinner on April 25 after gunshots were reportedly heard from a floor above the ballroom. However, no authentic visuals show Trump raising his fist during the evacuation.

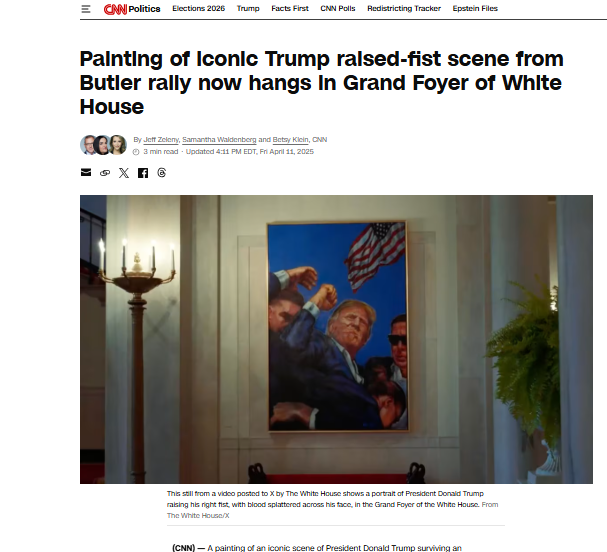

- https://www.nytimes.com/2024/07/14/arts/design/trump-photo-raised-fist.html

- https://edition.cnn.com/2025/04/11/politics/trump-obama-portrait-white-house

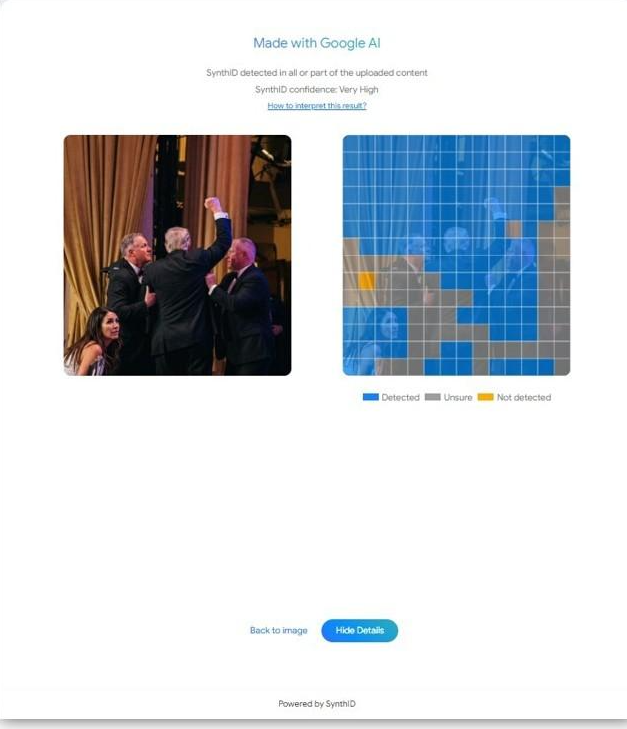

Further analysis of the viral image indicates signs of digital manipulation. Google’s SynthID detection tool flagged the file as containing SynthID—an invisible watermark embedded in content generated using Google’s AI tools.

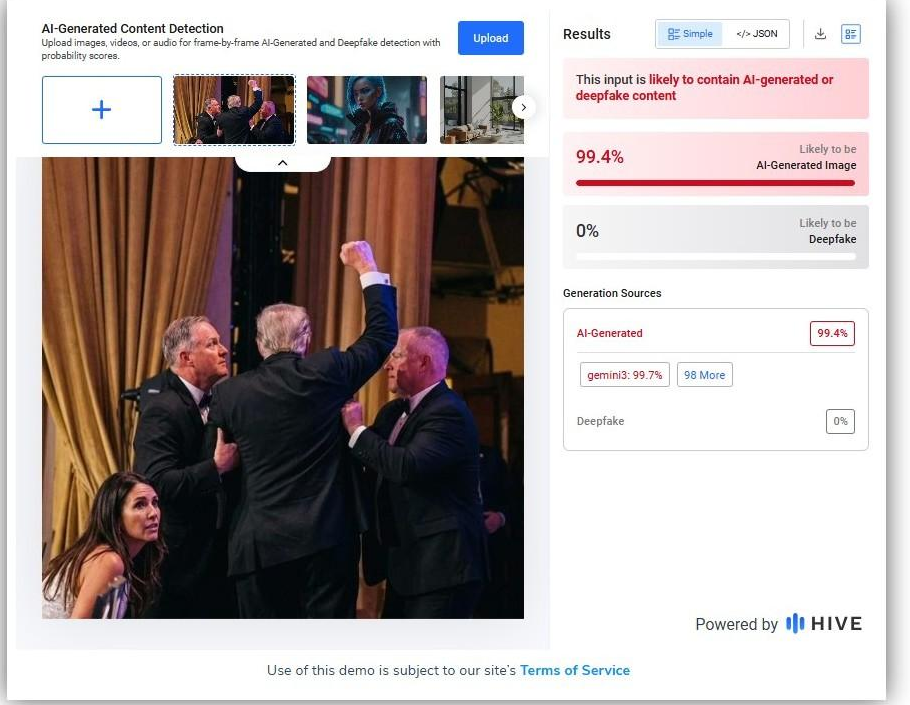

Additionally, AI detection platform Hive Moderation assessed that the image is likely AI-generated or a deepfake.

Conclusion

The research confirms that the viral image of Donald Trump raising his fist during a Washington incident is not real. It was created using AI and is being circulated with a misleading narrative.

Related Blogs

Introduction

As esports flourish in India, mobile gaming platforms and apps have contributed massively to the boom. The wave of online mobile gaming has brought esports new recognition. With the Sports Ministry being very proactive about esports and e-athletes, it is pertinent to ensure that we do not compromise our cyber security for the sake of these games. When we talk about online mobile gaming, the most common names that come to mind are PUBG and BGMI. In news for all Indian gamers, BGMI is set to be relaunched in India after approval from the Ministry of Electronics and Information Technology.

Why was BGMI banned?

The Govt banned Battlegrounds Mobile India (BGMI) on the grounds that it was a Chinese application and that all of its data was hosted in China. Because the data was stored outside India, this caused a cascade of compliance and user safety issues. Since 2020, the Indian Govt has been proactive in banning Chinese applications that might have an adverse effect on national security and Indian citizens. More than 200 applications have been banned by the Govt, most of them because their data hubs were in China. Cross-border data flow has been a key issue in geopolitics: whoever hosts the data virtually owns it as well, and because of this potential threat, all apps hosting their data in China were banned.

Why is BGMI coming back?

BGMI was banned for not hosting data in India, and since the ban, the Krafton Inc.-owned game has been working in India to set up data banks and servers so that Indian players have a separate gaming server. These moves should lead to a safer gaming ecosystem and better adherence to the laws and policies of the land. The developers have not declared a relaunch date yet, but the game is expected to be available for download for iOS and Android users in the coming few days. The game will be back on app stores because the Ministry of Electronics and Information Technology has issued a letter stating that the game be allowed and made available for download on the respective app stores.

Grounds for BGMI

BGMI has to ensure that it complies with all the laws, policies and guidelines in India, and has to demonstrate this to the Ministry to get an extension of the approval. The game has been permitted for only 90 days (3 months). Hon’ble MoS MeitY Rajeev Chandrasekhar stated in a tweet: “This is a 3-month trial approval of #BGMI after it has complied with issues of server locations and data security etc. We will keep a close watch on other issues of User harm, Addiction etc., in the next 3 months before a final decision is taken”. This clearly shows the magnitude of the bans on Chinese apps. The Ministry and the Govt will not go soft now; it is all about compliance and safeguarding users’ data.

Way Forward

This move will play a significant role in the future, not only for gaming companies but also for other online industries, in ensuring compliance. It will act as a precedent on the issue of cross-border data flow and the advantages of data localisation, and will go a long way in advocacy for the betterment of the Indian cyber ecosystem. MeitY alone cannot safeguard the space completely; it is a shared responsibility of the Govt, industry and netizens.

Conclusion

The advent of online mobile gaming has taken the nation by storm, and thus, being safe and secure in this ecosystem is paramount. The provisional permission for BGMI shows the stance of the Govt and how it is following a no-tolerance policy for noncompliance with laws. The latest policies and bills, like the Digital India Act, Digital Personal Data Protection Act, etc., will go a long way in securing the interests and rights of the Indian netizen and will create a blanket of safety against future issues and threats.

Introduction

In the labyrinthine expanse of the digital age, where the ethereal threads of our connections weave a tapestry of virtual existence, there lies a sinister phenomenon that preys upon the vulnerabilities of human emotion and trust. This phenomenon, known as cyber kidnapping, recently ensnared a 17-year-old Chinese exchange student, Kai Zhuang, in its deceptive grip, leading to an $80,000 extortion from his distraught parents. The chilling narrative of Zhuang, found cold and scared in a tent in the Utah wilderness, serves as a stark reminder of the pernicious reach of cybercrime.

The Cyber Kidnapping

The term 'cyber kidnapping' typically denotes a form of cybercrime where malefactors gain unauthorised access to computer systems or data, holding it hostage for ransom. Yet, in the context of Zhuang's ordeal, it took on a more harrowing dimension—a psychological manipulation through online communication that convinced his family of his peril, despite his physical safety before the scam.

The Incident

The incident unfolded like a modern-day thriller, with Zhuang's parents in China alerting officials at his host high school in Riverdale, Utah, of his disappearance on 28 December 2023. A meticulous investigation ensued, tracing bank records, purchases, and phone data, leading authorities to Zhuang's isolated encampment, 25 miles north of Brigham City. In the frigid embrace of Utah's winter, Zhuang awaited rescue, armed only with a heat blanket, a sleeping bag, limited provisions, and the very phones used to orchestrate his cyber kidnapping.

Upon his rescue, Zhuang's first requests were poignantly human—a warm cheeseburger and a conversation with his family, who had been manipulated into paying the hefty ransom during the cyber-kidnapping scam. This incident not only highlights the emotional toll of such crimes but also the urgent need for awareness and preventative measures.

The Aftermath

To navigate the treacherous waters of cyber threats, one must adopt the scepticism of a seasoned detective when confronted with unsolicited messages that reek of urgency or threat. The verification of identities becomes a crucial shield, a bulwark against deception. Sharing sensitive information online is akin to casting pearls before swine, where once relinquished, control is lost forever. Privacy settings on social media are the ramparts that must be fortified, and the education of family and friends becomes a communal armour against the onslaught of cyber threats.

The Chinese embassy in Washington has sounded the alarm, warning its citizens in the U.S. about the risks of 'virtual kidnapping' and other online frauds. This scam is one fragment of a larger criminal mosaic that threatens to ensnare parents worldwide.

Kai Zhuang's story, while unique in its details, is not an isolated event. Experts warn that technological advancements have made it easier for criminals to pursue cyber kidnapping schemes. The impersonation of loved ones' voices using artificial intelligence, the mining of social media for personal data, and the spoofing of phone numbers are all tools in the cyber kidnapper's arsenal.

The Way Forward

The crimes have evolved, targeting not just the vulnerable but also those who might seem beyond reach, demanding larger ransoms and leaving a trail of psychological devastation in their wake. Cybercrime, as one expert chillingly notes, may well be the most lucrative of crimes, transcending borders, languages, and identities.

In the face of such threats, awareness is the first line of defense. Reporting suspicious activity to the FBI's Internet Crime Complaint Center, verifying the whereabouts of loved ones, and establishing emergency protocols are all steps that can fortify one's digital fortress. Telecommunications companies and law enforcement agencies also have a role to play in authenticating and tracing the source of calls, adding another layer of protection.

Conclusion

The surreal experience of reading about cyber kidnapping belies the very real danger it poses. It is a crime that thrives in the shadows of our interconnected world, a reminder that our digital lives are as vulnerable as our physical ones. As we navigate this complex web, let us arm ourselves with knowledge, vigilance, and the resolve to protect not just our data, but the very essence of our human connections.

References

- https://www.bbc.com/news/world-us-canada-67869517

- https://www.ndtv.com/feature/what-is-cyber-kidnapping-and-how-it-can-be-avoided-4792135

In what experts are calling one of the largest data breaches of all time, approximately 16 billion passwords were exposed online last week. According to various news reports, the leak contains credentials spanning a broad array of online services, including Facebook, Instagram, and Gmail, causing serious alarm across the globe. Cybersecurity specialists have noted that this leak poses immense risks of account takeovers and identity theft, and could enable phishing scams. The leaked data is being described as a “collection-of-collections,” with multiple previously breached databases compiled into one easy-to-access repository for cybercriminals.

Infostealer Malware and Why It’s a Serious Threat

This incident brought to light a type of malware that experts refer to as an infostealer. As the name suggests, it is a malware program made expressly to take personal information from compromised computers and devices, including cookies, session tokens, browser data, login credentials, and more. Unlike ransomware, which encrypts files for ransom, or spyware, which passively watches users, an infostealer targets high-value credentials. Once installed, it silently gathers passwords, screenshots, and other information while hiding inside unassuming software, such as a game, utility, or browser plugin. Hackers then combine the stolen credentials into databases, which are offered for sale on dark web forums or even made public, as was the case in this breach. This is particularly risky because, if session tokens or other browser data are also taken, attackers can use them to get around even two-factor authentication. As a result, the leak could also fuel other crimes such as phishing.

Guidelines for protection

In response to this breach, the Indian Computer Emergency Response Team (CERT-In) issued an advisory urging all internet users to take immediate action to protect their accounts. Although the advisory responds to this specific data leak, the key measures it recommends are worth following at all times to maintain a general standard of cyber hygiene.

- Reset your passwords: After an incident like this one, users are advised to change the passwords of their accounts immediately. Prioritise accounts that have been compromised and high-value accounts such as email, online banking, and social media.

- Use strong, unique passwords and password manager features: Avoid password reuse across platforms. Using a password manager on a trusted platform can aid in storing and recalling them for different accounts.

- Monitor account activity: Check activity logs, especially for signs of unrecognised login attempts or password-reset notifications.

- Enable Multi-Factor Authentication (MFA): Enable two-step verification (via an app like Google Authenticator or a hardware key) to add an extra layer of security.

- Beware of phishing attacks: Cybercriminals will likely attempt to use leaked credentials to impersonate legitimate companies and send phishing emails. Read messages carefully before clicking on any links or opening any attachments.

- Scan devices for malware: Run updated antivirus or anti-malware scans to catch and remove infostealers or other malicious software lurking on your device.
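As an illustration of the "strong, unique passwords" advice above, the following is a minimal Python sketch, not part of the CERT-In advisory. It uses the standard library's `secrets` module to generate a random password and a small helper to spot reuse across accounts. The function names, the 20-character default, and the 12-character minimum are assumptions made for this example; a reputable password manager does the same job with less effort.

```python
import secrets
import string
from collections import defaultdict

# Characters to draw from: letters, digits and punctuation.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Return a random password with at least one lowercase letter,
    uppercase letter, digit and symbol.

    The 12-character minimum is an assumption for this sketch,
    not an official requirement.
    """
    if length < 12:
        raise ValueError("use at least 12 characters")
    while True:
        # secrets.choice uses a cryptographically secure random source.
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in string.punctuation for c in candidate)):
            return candidate


def find_reused(passwords_by_account: dict[str, str]) -> list[list[str]]:
    """Group account names that share the same password."""
    groups = defaultdict(list)
    for account, password in passwords_by_account.items():
        groups[password].append(account)
    return [sorted(accounts) for accounts in groups.values() if len(accounts) > 1]
```

Calling `generate_password()` once per account gives each account its own password, and `find_reused` can be run over an exported list of accounts to find the ones that should be reset first.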

Why This Data Breach is a Wake-Up Call

With 16 billion credentials exposed, this breach highlights the critical need for robust personal cybersecurity hygiene. It also reveals the persistent role of infostealer malware in feeding a global cybercrime economy, one where credentials are the most valuable assets. As Infosecurity Europe and other analysts highlight, infostealers are lightweight, often distributed via phishing or malicious downloads, and highly effective at lifting data in the background without alerting the user. Even up-to-date antivirus software can struggle to catch new variants, making proactive security practices all the more essential. At a time when data is everything, access to credentials confers both power and safety, so that access must be kept in check.

Conclusion

This breach is a reminder that cybersecurity is a shared responsibility. Even with the protective systems that industry and official authorities have in place, every internet user must do their part in protecting themselves through cyber hygiene practices such as resetting passwords, using multi-factor authentication, staying vigilant against phishing scams, and ensuring devices are regularly scanned for malware. While breaches like this can seem overwhelming and might create a surge of panic, practical measures go a long way in mitigating exposure. Staying informed and proactive is the best defence one can adopt in a rapidly evolving threat landscape.

References

- https://economictimes.indiatimes.com/news/international/us/16-billion-passwords-exposed-in-unprecedented-cyber-leak-of-2025-experts-raise-global-alarm/articleshow/121961165.cms?from=mdr

- https://timesofindia.indiatimes.com/technology/tech-news/16-billion-passwords-leaked-on-internet-what-you-need-to-know-to-protect-your-facebook-instagram-gmail-and-other-accounts/articleshow/121967191.cms

- https://indianexpress.com/article/technology/tech-news-technology/16-billion-passwords-leaked-online-what-we-know-10077546/

- https://www.hindustantimes.com/business/certin-issues-advisory-after-data-breach-of-16-billion-credentials-asks-people-to-change-passwords-101750779940872.html

- https://www.cert-in.org.in/s2cMainServlet?pageid=PUBVLNOTES02&VLCODE=CIAD-2025-0024

- https://www.infosecurityeurope.com/en-gb/blog/threat-vectors/guide-infostealer-malware.html