#FactCheck - Viral Video Misleadingly Tied to Recent Taiwan Earthquake

Executive Summary:

Following the recent earthquake in Taiwan, a video has gone viral on social media with the claim that it was filmed during that earthquake. Fact-checking reveals that the video is old: it dates from September 2022, when Taiwan experienced another earthquake, of magnitude 7.2. A reverse image search and comparison with older videos established that the viral video is from the 2022 earthquake and not the recent 2024 event. Several news outlets covered the 2022 incident, providing additional confirmation of the video's origin.

Claims:

Posts about a recent earthquake in Taiwan and Japan are circulating on social media. One post on X states:

“BREAKING NEWS :

Horrific #earthquake of 7.4 magnitude hit #Taiwan and #Japan. There is an alert that #Tsunami might hit them soon”.

Fact Check:

We began our investigation by watching the video closely and splitting it into frames. We then ran a reverse image search on those frames, which led us to an X (formerly Twitter) post in which a user had shared the same viral video on September 18, 2022. Notably, the post carries the caption:

“#Tsunami warnings issued after Taiwan quake. #Taiwan #Earthquake #TaiwanEarthquake”

The same video was also published by several news outlets in September 2022.

The NDTV news channel also shared the video on September 18, 2022.
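The dating argument in this section can be sketched in a few lines of Python. This is only an illustration of the reasoning, not the tooling used in the investigation. The function name `predates_event` is hypothetical, and because the text gives only the year of the recent earthquake, the sketch assumes the most conservative bound, January 1, 2024.

```python
from datetime import date


def predates_event(earliest_upload: date, claimed_event: date) -> bool:
    """Return True if the footage was already online before the claimed event."""
    return earliest_upload < claimed_event


# Date of the X post found through the reverse image search (from the text).
earliest_upload = date(2022, 9, 18)

# Assumption: the text only says "2024", so use the earliest possible day
# of that year as a conservative lower bound for the claimed event.
claimed_event_earliest = date(2024, 1, 1)

is_old_footage = predates_event(earliest_upload, claimed_event_earliest)
gap_days = (claimed_event_earliest - earliest_upload).days

print(f"Footage predates the claimed event: {is_old_footage}")
print(f"Minimum gap in days: {gap_days}")
```

Any footage with a verified upload earlier than the claimed event cannot show that event, which is why finding a single dated earlier copy is enough to refute the claim.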

Conclusion:

To conclude, the viral video claimed to depict the 2024 Taiwan earthquake is actually from September 2022. Comparing the earlier posts with the viral clip makes clear that the video does not show the recent earthquake. Hence, the viral video is misleading. It is important to verify information before sharing it on social media to prevent the spread of misinformation.

Claim: Video circulating on social media captures the recent 2024 earthquake in Taiwan.

Claimed on: X, Facebook, YouTube

Fact Check: Fake & Misleading, the video actually refers to an incident from 2022.

Related Blogs

Executive Summary

Claims are circulating that Iran’s Supreme Leader Ayatollah Ali Khamenei was killed in a major attack allegedly carried out by Israel and the United States. Amid these claims, a video is being widely shared on social media in which Khamenei can be heard saying, “Beware of fake news, I am alive.” Research conducted by CyberPeace has found the viral claim to be false. Our research revealed that the video being shared is old and that Khamenei’s voice has been altered using artificial intelligence to support a misleading narrative.

Claim

On March 1, 2026, an Instagram user shared the viral video in which Ayatollah Ali Khamenei is heard saying, “Beware of fake news, I am alive.” The link to the post and its archived version are provided above along with a screenshot.

Fact Check:

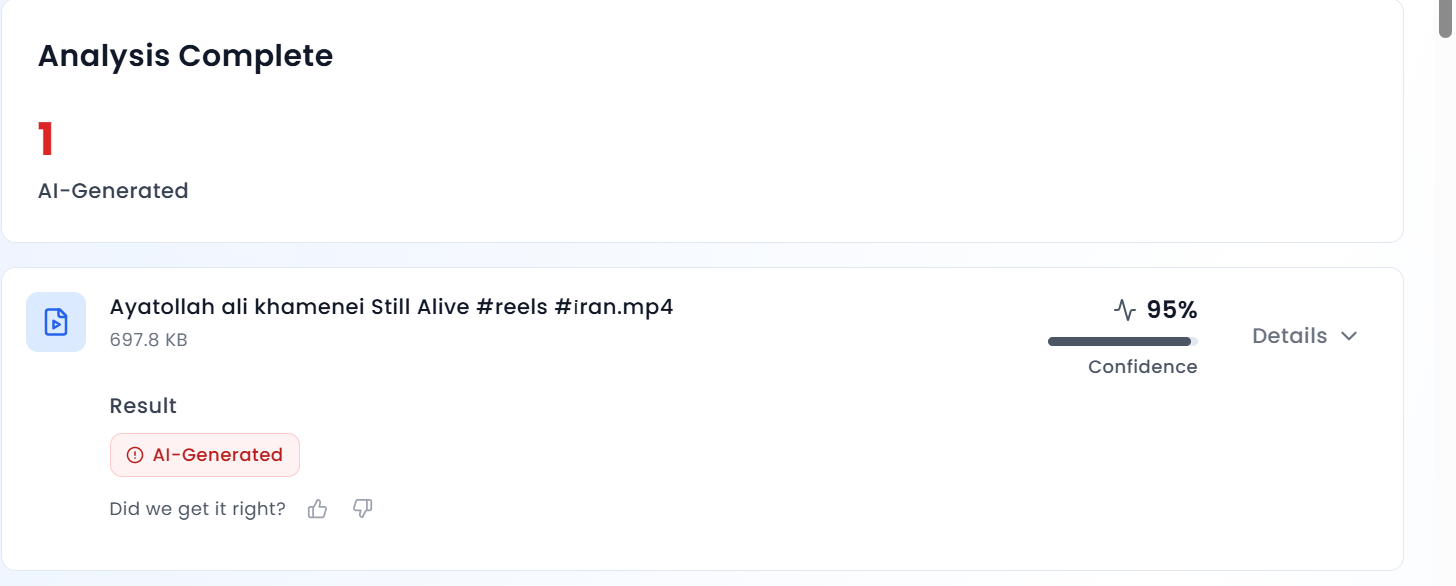

To verify the authenticity of the claim, we extracted key frames from the viral video and conducted a reverse image search using Google Lens. During the research, we found the same video on the YouTube channel of Sky News Australia, published on June 19, 2025. In the approximately 43-minute-long video, the portion used in the viral clip appears around the 10-minute mark.
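The text does not say how the key frames were chosen. As a minimal sketch, assuming frames are simply sampled at a fixed interval, the timestamps to capture could be computed as below. The function name `sample_timestamps` and the 60-second interval are illustrative assumptions; only the 43-minute length and the 10-minute mark come from the text.

```python
def sample_timestamps(duration_s: int, interval_s: int) -> list[int]:
    """Return evenly spaced timestamps (in seconds) from 0 up to the duration."""
    if duration_s < 0 or interval_s <= 0:
        raise ValueError("duration must be >= 0 and interval must be > 0")
    count = duration_s // interval_s + 1
    return [i * interval_s for i in range(count)]


# Length of the Sky News Australia video reported in the text: about 43 minutes.
video_length_s = 43 * 60

# Assumption: one frame per minute; the real sampling rate is not stated.
timestamps = sample_timestamps(video_length_s, 60)

# The portion reused in the viral clip appears around the 10-minute mark.
covers_clip = 10 * 60 in timestamps
print(len(timestamps), covers_clip)
```

Each timestamp would then be exported as a still image and submitted to a reverse image search; a coarser interval means fewer searches but a higher chance of missing a short reused segment.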

According to Sky News Australia’s report, Iran’s Supreme Leader Ayatollah Ali Khamenei had rejected US President Donald Trump’s demand for unconditional surrender. The Ayatollah regime also warned that any American military intervention would be accompanied by “irreparable damage.” Upon closely listening to the viral clip, we noticed that Khamenei’s voice sounded robotic, raising suspicion that it may have been AI-generated. We then analyzed the video using the AI detection tool AURGIN AI. The results indicated that the viral clip had been generated using artificial intelligence.

Conclusion

Our research establishes that the viral video is old and has been digitally manipulated. Ayatollah Ali Khamenei’s voice has been altered using artificial intelligence and the clip is being shared with a misleading claim.

Introduction

Law grows by confronting its absences; it heals through its own gaps. In an era of expanding digital boundaries and growing cyber damages, states often find themselves navigating a shared frontier without a mutual guide or lines of law. The United Nations General Assembly adopted the United Nations Convention against Cybercrime on December 24, 2024, and more than sixty governments attended the signing ceremony on 24 and 25 October this year, marking a moment of institutional regeneration and global commitment.

A New Lexicon for Global Order

The old liberal order is being strained by growing nationalism, economic fracturing, populism, and great-power competition, as often emphasised in the works of scholars like G. John Ikenberry and John Mearsheimer. Multilateral arrangements become more brittle in such circumstances. The new cybercrime convention therefore represents not only a legal tool but also a resurgence of international promise, a significant win for collective governance in an uncertain time. It serves as a reminder that institutions can be rebuilt even after they have been damaged.

In Discussion: The Fabric of the Digital Polis

The digital sphere has become a contentious area. On the one hand, the US and its allies support stakeholder governance, robust individual rights, and open data flows. On the other hand, nations like China and Russia describe a “post-liberal cyber order” based on state mediation, heavily regulated flows, and sovereignty. Instead of focusing on ideological dichotomies, India, which is positioned as both a rising power and a voice of the Global South, has offered a viewpoint based on supply-chain security, data localisation, and capacity creation. Thus, rather than being merely a regulation, the treaty arises from a framework of strategic recalibration.

What Changed & Why it Matters

Until now, cybercrime accords have been regional, such as the Budapest Convention. The goal of this new international convention, which is open to all UN members, is to standardise definitions, evidence sharing, and investigation instruments. Seventy-two states signed at the Hanoi signing ceremony in October 2025, demonstrating an unparalleled level of scope and determination. In addition to establishing structures for cooperative investigations, extradition, and the sharing of electronic evidence, the convention requires signatories to criminalise acts such as fraud, unlawful access to systems, data interference, and online child exploitation.

For the first time, a legally binding global architecture aims to harmonise cross-border evidence flows, mutual legal assistance, and national procedural laws. The convention offers genuine promise for community defence at a time when cybercrime is no longer incidental but existential: attacks on hospitals, schools, and infrastructure are now common, according to the Global Observatory.

Holding the Line: India’s Deliberate Path in the Age of Cyber Multilateralism

India takes a contemplative rather than a reluctant stance towards the UN Cybercrime Treaty. Though it played an active role during the drafting sessions and lent its voice to the shaping of global cyber norms, New Delhi is yet to sign the convention. Subtle but intentional, the hesitation suggests a more comprehensive reflection: an evaluation of how international obligations correspond with domestic constitutional protections, especially the right to privacy upheld by the Supreme Court in Puttaswamy v. UOI (2017).

Prudence is the reason for this pause. Officials have not provided explicit justifications for India's decision not to sign so far, but policy circles speculate that the government is still assessing the treaty's consequences for national data protection, surveillance regimes, and territorial sovereignty. India's position has frequently been characterised by a careful balance between digital sovereignty and participation in cooperative international regimes. In earlier negotiations, India had even proposed including clauses to penalise "offensive messages" on social media, echoing the erstwhile Section 66A of the IT Act, 2000, but the suggestion found little international traction.

Advocates for digital rights such as Raman Jit Singh Chima of Access Now have warned that India's eventual endorsement may depend on voluntary pledges that the treaty's implementation will uphold constitutional privacy principles. He contends that, in the absence of such pledges, the treaty's wording might not entirely meet India's legal requirements.

UN Secretary-General António Guterres praised the agreement as "a powerful, legally binding instrument to strengthen our collective defences against cybercrime" during its signing in Hanoi. The issue for India is not to reject that vision but to make sure that multilateral collaboration develops in accordance with constitutional values. The path forward is therefore one of assertion rather than absence: a careful march towards a cyber future that protects freedom and sovereignty.

Introduction

In the boundless world of the internet—a digital frontier rife with both the promise of connectivity and the peril of deception—a new spectre stealthily traverses the electronic pathways, casting a shadow of fear and uncertainty. This insidious entity, cloaked in the mantle of supposed authority, preys upon the unsuspecting populace navigating the virtual expanse. And in the heart of India's vibrant tapestry of diverse cultures and ceaseless activity, Mumbai stands out—a sprawling metropolis of dreams and dynamism, yet also the stage for a chilling saga, a cyber charade of foul play and fraud.

The city's relentless buzz and hum were punctuated by a harrowing tale that unfolded within the unassuming confines of a Kharghar residence, where a 46-year-old man had his own brush with this digital demon. His typical day veered into the remarkable as his laptop screen lit up with an ominous pop-up, infusing his routine with shock and dread. This deceptive pop-up, masquerading as an official communication from the National Crime Records Bureau (NCRB), demanded an exorbitant fine of Rs 33,850 for ostensibly browsing adult content, an offence he had not committed.

The Cyber Deception

This tale of deceit and psychological warfare is not unique, nor is it the first of its kind. It finds echoes in the tragic narrative that unfurled in September 2023, far south in the verdant land of Kerala, where a young life was cut short. A 17-year-old boy from Kozhikode, caught in the snare of a similar fraudulent NCRB notice, was driven to take his own life after the pop-up falsely alleged that he had visited an unauthorised website and demanded that he pay Rs 30,000.

Sewn with a seam of dread and finesse, the pop-up in the recent Navi Mumbai case highlights the virtual tapestry of psychological manipulation, woven with threatening threads designed to entrap and frighten. The Kharghar resident was left in shock when the pop-up on his laptop screen warned him to pay a fine of Rs 33,850 for surfing a porn website. The message came from a fake NCRB website created to dupe people. Declaring that the user has engaged in prohibited browsing activity, it delivers an ultimatum: pay the fine within six hours, or face a criminal case. The panacea it offers is simple: settle the demanded amount and the shackles on the browser shall be lifted.

It was amidst this web of lies that the man from Kharghar found himself entangled. The story, as retold by his brother, an IT professional, reveals the close brush with disaster that was narrowly averted. His brother's panicked call, and the rush of relief upon realising the scam, underscores the ruthless efficiency of these cyber predators. They leverage sophisticated deceptive tactics, even specifying convenient online payment methods to ensnare their prey into swift compliance.

A glimmer of reason pierced through the narrative as Maharashtra State cyber cell special inspector general Yashasvi Yadav illuminated the fraudulent nature of such claims. With authoritative clarity, he revealed that no legitimate government agency would solicit fines in such an underhanded fashion. Rather, official procedures involve FIRs or court trials—a structured route distant from the scaremongering of these online hoaxes.

Expert Take

Cyber experts concur with this perspective. By tapping into primal fears and conjuring up grave consequences, the fraudsters follow a time-worn strategy, cloaking their ill intentions in the guise of governmental or legal authority, a phantasm of legitimacy that prompts hasty financial decisions.

To pierce the veil of this deception, D. Sivanandhan, the former Mumbai police commissioner, categorically denounced the absurdity of the hoax. With a voice tinged by experience and authority, he made it abundantly clear that the NCRB's role did not encompass the imposition of fines without due process of law—a cornerstone of justice grossly misrepresented by the scam's premise.

New Lesson

This scam, a devilish masquerade given weight by deceit, might surge with the pretence of novelty, but its underpinnings are far from new. The manufactured pop-ups that propagate across corners of the internet issue fabricated pronouncements, feigned lockdowns of browsers, and the spectre of being implicated in taboo behaviours. The elaborate ruse doesn't halt at mere declarations; it painstakingly fabricates a semblance of procedural legitimacy by preemptively setting penalties and detailing methods for immediate financial redress.

Yet another dimension of the scam further bolsters the illusion—the ominous ticking clock set for payment, endowing the fraud with an urgency that can disorient and push victims towards rash action. With a spurious 'Payment Details' section, complete with options to pay through widely accepted credit networks like Visa or MasterCard, the sham dangles the false promise of restored access, should the victim acquiesce to their demands.

Conclusion

In an era where the demarcation between illusion and reality is nebulous, the impetus for individual vigilance and scepticism is ever-critical. The collective consciousness, the shared responsibility we hold as inhabitants of the digital domain, becomes paramount to withstand the temptation of fear-inducing claims and to dispel the shadows cast by digital deception. It is only through informed caution, critical scrutiny, and a steadfast refusal to capitulate to intimidation that we may successfully unmask these virtual masquerades and safeguard the integrity of our digital existence.

References:

- https://www.onmanorama.com/news/kerala/2023/09/29/kozhikode-boy-dies-by-suicide-after-online-fraud-threatens-him-for-visiting-unauthorised-website.html

- https://timesofindia.indiatimes.com/pay-rs-33-8k-fine-for-surfing-porn-warns-fake-ncrb-pop-up-on-screen/articleshow/106610006.cms

- https://www.indiatoday.in/technology/news/story/people-who-watch-porn-receiving-a-warning-pop-up-do-not-pay-it-is-a-scam-1903829-2022-01-24