#FactCheck - Viral Video Claiming to Show Kashmir Avalanche Is AI-Generated

Executive Summary

A video is being shared on social media claiming to show an avalanche in Kashmir. The caption of the post alleges that the incident occurred on February 6. Several users sharing the video are also urging people to avoid unnecessary travel to hilly regions. CyberPeace’s research found that the video being shared as footage of a Kashmir avalanche is not real. The research revealed that the viral video is AI-generated.

Claim

The video is circulating widely on social media platforms, particularly Instagram, with users claiming it shows an avalanche in Kashmir on February 6. Similar posts were also found online. Links and archived versions of the posts are provided.

Fact Check

To verify the claim, we searched relevant keywords on Google. During this process, we found a video posted on the official Instagram account of the BBC. The BBC post reported that an avalanche occurred near a resort in Sonamarg, Kashmir, on January 27. However, the BBC post does not contain the viral video that is being shared on social media, indicating that the circulating clip is unrelated to the real incident.

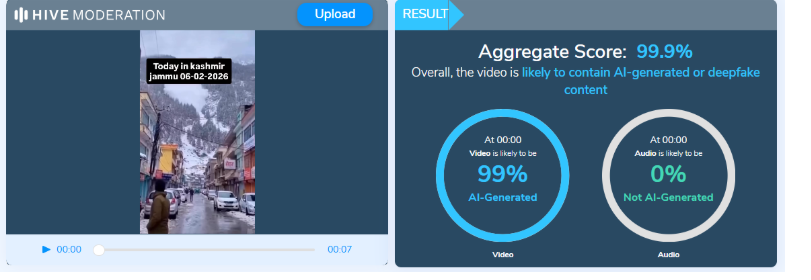

A close examination of the viral video revealed several inconsistencies. For instance, during the alleged avalanche, people present at the site are not seen panicking, running for cover, or moving toward safer locations. Additionally, the movement and flow of the falling snow appear unnatural. Such visual anomalies are commonly observed in videos generated using artificial intelligence. As part of the research, the video was analyzed using the AI detection tool Hive Moderation. The tool indicated a 99.9% probability that the video was AI-generated.

Conclusion

Based on the evidence gathered during our research, it is clear that the video being shared as footage of a Kashmir avalanche is not genuine. The clip is AI-generated and misleading. The viral claim is therefore false.

Related Blogs

Executive Summary

A video is being widely shared on social media claiming that members of the so-called ‘Cockroach Janta Party (CJP)’ caught a police officer in Chhattisgarh red-handed while accepting a bribe of ₹30,000. The viral posts also claim that the group has intensified its so-called campaign to “clean the rotten system.” However, research by the CyberPeace Research Wing found the claim to be misleading. The research revealed that the video has no connection with the ‘Cockroach Janta Party (CJP)’. The original footage dates back to February 2026 and shows Chhattisgarh Police sub-inspector Abdul Munaf being caught by the Anti-Corruption Bureau (ACB) in an alleged ₹25,000 bribery case.

Claim

A Facebook user shared the viral video on May 23, 2026, claiming that members of the “Cockroach Janta Party” caught a police officer red-handed taking a ₹30,000 bribe. The post further stated that the group had intensified its campaign against corruption. The link and archived post are provided.

Fact Check

To verify the claim, we extracted key frames from the viral video and conducted a reverse image search. During the research, we found a report published on the Navbharat Times website dated February 26, 2026, which contained visuals matching the viral video.

According to the report, the Anti-Corruption Bureau (ACB) in Korea district, Chhattisgarh, arrested a police station in-charge while he was accepting a bribe of ₹25,000. After being caught, the officer attempted to assert authority using his uniform and resisted the search procedure, but soon gave up. Further verification using keywords from the Navbharat Times report led to a similar story published by Dainik Bhaskar, which also contained the same visuals.

Additionally, context on the satirical “Cockroach Janta Party (CJP)” trend was found in a Times Now Hindi report, which explains that the term emerged as online satire following a controversial remark attributed to the Chief Justice of India regarding unemployed youth. The statement was later clarified.

Conclusion

The research confirms that the viral video has no connection with the ‘Cockroach Janta Party (CJP)’. The original footage is from a February 2026 incident in which Chhattisgarh Police sub-inspector Abdul Munaf was arrested by the ACB in a bribery case involving ₹25,000. The video has been falsely linked to a misleading narrative on social media.

Introduction

Cybercrime is crossing borders and growing at a fast pace. Kaspersky describes cybercrime as criminal activity that either targets or operates through a computer, a computer network or a networked device. In the “era of globalisation” and a “digitally coalesced world”, international cybercrime has increased. Cybercrime may serve personal or political objectives: some attacks aim to sabotage networks for motives other than financial gain, and they may be carried out by either organisations or individuals. Some cybercriminals operate without regard to national boundaries and are considered a global threat. They are also highly technically skilled and use advanced techniques.

The Global Risks Report 2023 points to worsening geopolitical tensions that have increased advanced persistent threats (APTs), which are now widespread and continue to evolve globally. In 2020, Christine Lagarde, the president of the European Central Bank and former head of the International Monetary Fund (IMF), cautioned that a cyber attack could trigger a severe economic crisis. Modern technologies and malicious actors have both advanced at an exceptional pace over the last few decades. According to the World Economic Forum (WEF), cybercrime has also risen on the agenda of nation-states, institutions and global organisations.

The Role of the United Nations Ad Hoc Committee

The United Nations (UN) has launched a major initiative to develop a new and more inclusive approach to addressing cybercrime and is presently negotiating a new convention on cybercrime. The convention seeks to enhance global collaboration in the fight against cybercrime. In December 2019, the UN passed resolution 74/247, which established an open-ended ad hoc committee (AHC) entrusted with drafting a comprehensive global convention on countering the use of Information and Communication Technologies (ICTs) for criminal purposes.

The cybercrime treaty, if adopted by the UN General Assembly (UNGA), would be the first binding UN instrument on a cyber issue. The treaty could further become a crucial international legal framework for global collaboration on preventing and investigating cybercrime and prosecuting cybercriminals. There have also been numerous other national and international measures to counter the criminal use of ICTs. However, the UN treaty is intended to tackle cybercrime while enhancing partnership and coordination between states. The Ad Hoc Committee’s negotiations with member states are scheduled to conclude by early 2024, so that the treaty can be adopted during the UNGA session in September 2024.

However, the proposed treaty is said to be complex. Some countries endorse a treaty that criminalises cyber-dependent offences as well as a comprehensive spectrum of cyber-enabled crimes. The proposals of Russia, Belarus, China, Nicaragua and Cuba have included highly controversial recommendations. India has backed criminalising offences associated with ‘cyber terrorism’, and its suggestions to the UN Ad Hoc Committee are in line with its domestic regulatory strategy. Meanwhile, the US, Japan, the UK, European Union (EU) member states and Australia want the treaty to cover core cyber-dependent crimes.

Though a new treaty could become a practical instrument in the international effort against cybercrime, it must be consistent with the existing global bodies and networks working in similar areas. This convention will further supplement the Budapest Convention on Cybercrime, which took shape in the 1990s and was signed in Budapest in 2001.

Conclusion

According to Cybersecurity Ventures, global cybercrime costs are expected to grow by 15 per cent per year over the next five years, reaching USD 10.5 trillion annually by 2025, up from USD 3 trillion in 2015. The UN cybercrime convention aims to be more global in scope. That being the case, next-generation tools should use state-of-the-art technology to deal with new cybercrimes and cyber warfare. The harm that cybercrime causes to nation-states is beyond calculation. It could cause a severe shock to the global economy and threaten the political interests of countries. It is therefore crucial for governments and international organisations to strengthen collaboration between institutions (public and private) and law enforcement mechanisms. An “appropriately designed policy” is the need of the hour.

References

- https://www.kaspersky.co.in/resource-center/threats/what-is-cybercrime

- https://www.cyberpeace.org/

- https://www.interpol.int/en/Crimes/Cybercrime

- https://www.bizzbuzz.news/bizz-talk/ransomware-attacks-on-startups-msmes-on-the-rise-in-india-cyberpeace-foundation-1261320

- https://www.financialexpress.com/business/digital-transformation-cyberpeace-foundation-receives-4-million-google-org-grant-3282515/

- https://www.chathamhouse.org/2023/08/what-un-cybercrime-treaty-and-why-does-it-matter

- https://www.weforum.org/agenda/2023/01/global-rules-crack-down-cybercrime/

- https://www.weforum.org/publications/global-risks-report-2023/

- https://www.imf.org/external/pubs/ft/fandd/2021/03/global-cyber-threat-to-financial-systems-maurer.htm

- https://www.eff.org/issues/un-cybercrime-treaty#:~:text=The%20United%20Nations%20is%20currently,of%20billions%20of%20people%20worldwide.

- https://cybersecurityventures.com/hackerpocalypse-cybercrime-report-2016/

- https://www.coe.int/en/web/cybercrime/the-budapest-convention

- https://economictimes.indiatimes.com/tech/technology/counter-use-of-technology-for-cybercrime-india-tells-un-ad-hoc-group/articleshow/92237908.cms?utm_source=contentofinterest&utm_medium=text&utm_campaign=cppst

- https://consultation.dpmc.govt.nz/un-cybercrime-convention/principlesandobjectives/supporting_documents/Background.pdf

- https://unric.org/en/a-un-treaty-on-cybercrime-en-route/

Introduction

India reaches a turning point in its technological development as the AI Impact Summit 2026 is held in New Delhi. Artificial Intelligence (AI) is transforming economies, labour markets, governance structures and even the grammar of public discourse. It is no longer a frontier of speculation. The challenge facing the Summit is not whether AI will change our societies, since it has already done so, but whether inclusiveness and human dignity will serve as the foundation for this change.

India’s AI journey is defined by scale. The nation has one of the biggest user bases for cutting-edge AI systems worldwide. According to projections, AI may create millions of new technology-driven occupations by 2030 and change the nature of millions more. This is a structural reconfiguration rather than an incremental alteration. The stakes are high for a country with a large youth population and wide socioeconomic diversity.

India’s Tryst with Artificial Intelligence

India’s tryst with AI is a developmental imperative occurring at a civilisational scale, not a show put on to win Western favour. AI is still portrayed in many international storylines as a competition between China’s state-backed speed, Europe’s sophisticated regulations and Silicon Valley’s capital. India is far too frequently cast as a huge consumer market rather than a significant force behind the AI era. Such evaluations undervalue a nation that has already proven its capacity to implement technology at a democratic scale through its digital public infrastructure. AI in India is about more than just improving algorithms; it is about giving millions more people access to social safety, healthcare, agriculture and education.

The scepticism overlooks a deeper truth: India innovates not from abundance but from urgency. India remains certain that technical advancement must be in line with social justice and inclusive growth. History suggests that India’s greatest technological strides have often followed underestimation.

A Conclave of Contagious Ideas

India has long been the favourite underestimation of certain Western observers: a nation of 1.4 billion people, the world’s fifth largest economy and a noisy democracy with inconvenient geopolitical realities, often assessed by counterparts governing populations smaller than many of its states. Advice follows in spades, sometimes from cities that mastered the art of strategic improvisation long before they preached restraint, and sometimes with lectures on innovation, governance and order.

However, there are times when hierarchies need to be rearranged. It was hard to overlook the symbolism when Ranvir Sachdeva, the youngest keynote speaker at the AI Impact Summit, 2026, took the stage. “I’m here as the youngest keynote speaker at the Indian AI Impact Summit,” he said, discussing how he is connecting ancient Indian beliefs to contemporary technology and the various strategies that other countries are pursuing to develop AI. In that simple articulation lay a quiet rebuttal: a civilization that once debated metaphysics under banyan trees is now debating ethics in plenary halls. History constantly demonstrates that India’s permanent address has never been underestimation.

From New Delhi to Geneva: The Global Arc of AI Governance

Now that the AI Impact Summit, 2026 is coming to an end, what is left is not just the memory of its size but also the shape of a new international dialogue. The New Delhi Declaration, a remarkable highlight of the Summit, was signed by eighty-eight nations and international organisations to support the democratic spread of AI.

The increasing complexity of the AI order was also made clear by the Summit. Pledges for investments totalled hundreds of billions. India joined the U.S.-led Pax Silica effort. Sovereign LLMs were introduced in the country. At the same time, spectators were reminded, through logistical challenges, protest disruptions and business rivalries, that the politics of AI are inextricably linked to its promise. Although nations are not bound by the New Delhi Declaration, it does represent a growing consensus that acceleration must be accompanied by governance.

The announcement that the 2027 AI Impact Summit will be held in Geneva represents a significant shift in this regard. Guy Parmelin, the president of Switzerland, described the upcoming chapter as one primarily concerned with international law and good governance, in an attempt to guarantee that the future of AI is not entirely in the hands of powerful nations. From scale and ambition in New Delhi to normative consolidation in Europe, Geneva, a longtime hub of multilateral diplomacy, provides symbolic continuity.

Concluding Confluence

It is tempting to view the Global CyberPeace Summit (GCS), a Pre-Summit Event of the AI Impact Summit held at Bharat Mandapam on 10th February, 2026, and the Summit itself, held in close succession, as separate events. Together, however, they formed a strong intellectual arc. At GCS, inclusion was not ornamental. A deeper message was conveyed by India Signing Hands’ involvement and the purposeful emphasis on accessibility: digital systems must be created with, not just for, those on the margins. Resilience must start at the economic level, according to the AI-enabled cybersecurity engagement for MSMEs. Participants were reminded during the talks on Technology Facilitated Gender-Based Violence (TFGBV), CSAM prevention and child safety that technological arguments only gain significance when they are connected to real-world outcomes.

When Geneva takes over in 2027, the issue will not just be how AI should be regulated, but also what ethical foundation that governance is built upon. New Delhi’s belief that wisdom and power must coexist may be its contribution to this developing narrative. That belief carries more content than spectacle, and possibly the faint outline of a technological conscience.