#FactCheck - Viral Video Claiming Iran’s Attack on US Airbase Debunked as 9/11 Footage

Executive Summary

A video showing thick smoke rising from a building and people running in panic is being shared on social media. The video is being circulated with the claim that it shows Iran launching a missile attack on the United States. CyberPeace’s research found the claim to be misleading. Our probe revealed that the video is not related to any recent incident. The viral clip is actually from the September 11, 2001 terrorist attacks on the World Trade Center in the United States and is being falsely shared as footage of an alleged Iranian missile strike on the US.

Claim:

An Instagram user shared the video claiming, “Iran has attacked a US airbase in Qatar. Iran has fired six ballistic missiles at the Al Udeid Airbase in Qatar. Al Udeid Airbase is the largest US military base in West Asia.”

Links to the post and its archived version are provided below.

Fact Check:

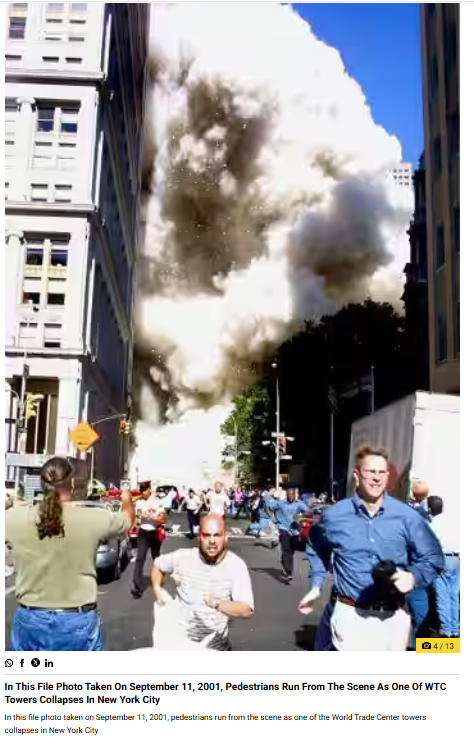

To verify the claim, we extracted key frames from the viral video and ran a reverse image search using Google Lens. During the search, we found visuals matching the viral clip in a report published by Wion on September 11, 2021. The report, titled “In pics | A look back at the scenes from the 9/11 attacks,” included an image that closely resembled the visuals seen in the viral video. The caption of the image stated that it was a file photo from September 11, 2001, showing pedestrians running as one of the World Trade Center towers collapsed in New York City.
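
The key-frame step described above can be illustrated with a minimal sketch. It assumes the video has already been decoded into grayscale frames, represented here as nested lists of pixel values. The function names and the threshold of 30 are hypothetical choices for illustration, not the tooling used in this investigation. The idea is to keep only frames that differ noticeably from the last kept frame, so that a handful of distinct stills can be submitted to a reverse image search.

```python
from typing import List

Frame = List[List[int]]  # grayscale frame: rows of pixel values 0-255


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two same-sized frames."""
    total = 0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count


def select_key_frames(frames: List[Frame], threshold: float = 30.0) -> List[int]:
    """Return indices of frames that differ enough from the last kept frame."""
    if not frames:
        return []
    kept = [0]  # always keep the first frame
    for i in range(1, len(frames)):
        # Compare against the most recently kept frame, not the previous one,
        # so slow gradual changes still produce a new key frame eventually.
        if mean_abs_diff(frames[kept[-1]], frames[i]) >= threshold:
            kept.append(i)
    return kept


# Synthetic 2x2 "video" (assumed data): two near-identical dark frames,
# followed by two identical bright frames from a different scene.
dark = [[10, 10], [10, 10]]
dark_noisy = [[12, 9], [10, 11]]
bright = [[200, 210], [190, 205]]
key_frame_indices = select_key_frames([dark, dark_noisy, bright, bright])
```

In this toy input only the first dark frame and the first bright frame are kept, which mirrors how a few representative stills are usually enough for a reverse image search.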

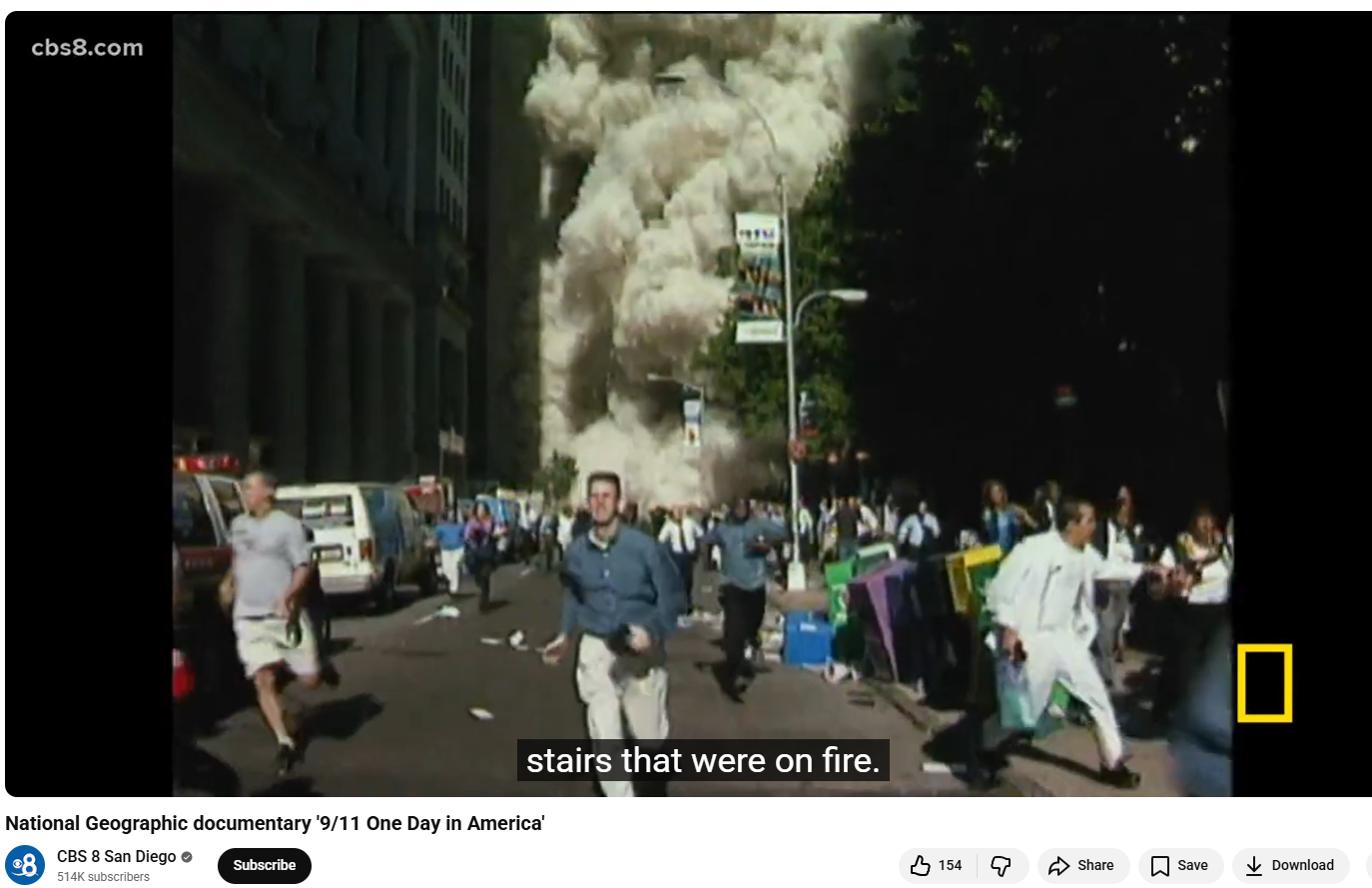

Further research led us to the same footage on the YouTube channel CBS 8 San Diego. At the 01:11 timestamp of the video, visuals matching the viral clip can be clearly seen.

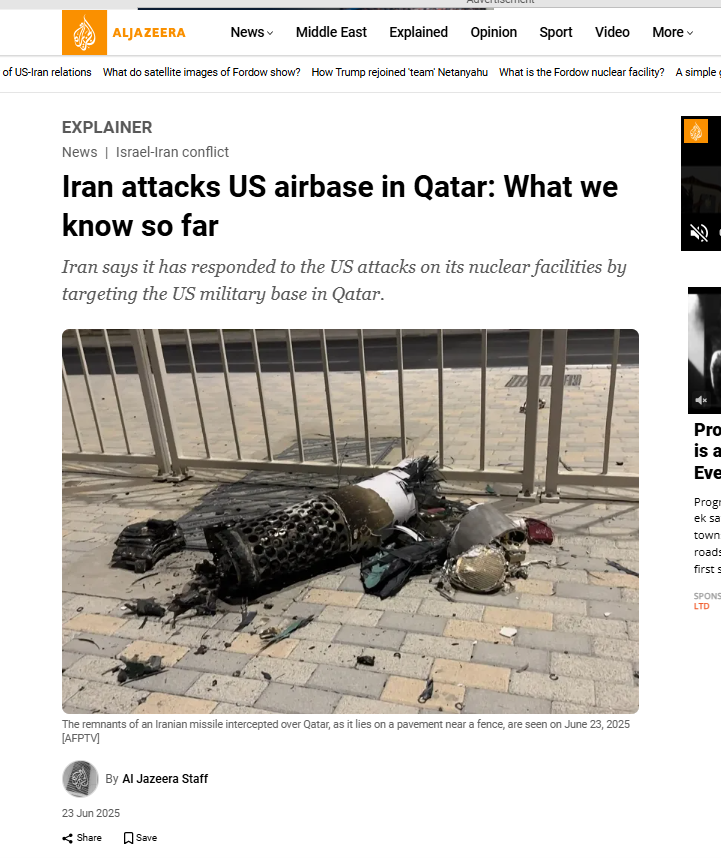

We also found an Al Jazeera report dated June 23, 2025, which confirmed that Iran had attacked US forces stationed at the Al Udeid airbase in Qatar in retaliation for US strikes on Iran’s nuclear facilities. However, the visuals used in the viral video do not correspond to this incident.

Conclusion

The viral video does not show a recent Iranian attack on a US airbase in Qatar. The clip actually dates back to the September 11, 2001 terrorist attacks on the World Trade Center in the United States. Old 9/11 footage has been falsely shared with a misleading claim linking it to Iran’s alleged missile strike on the US.

Related Blogs

Introduction

Recent advances in space exploration and technology have increased the need for space laws to control the actions of governments and corporate organisations. India has been attempting to create a robust legal framework to oversee its space activities because it is a prominent player in the international space business. In this article, we’ll examine India’s current space regulations and compare them to the situation elsewhere in the world.

Space Laws in India

India began its space journey with Aryabhata, its first satellite, and Rakesh Sharma, the first Indian astronaut. It now has a prominent presence in space, and many international satellites are launched by India. NASA and ISRO work closely on various projects.

India currently lacks dedicated, comprehensive space legislation. Only a few laws and regulations, such as the Indian Space Research Organisation (ISRO) Act of 1969 and the National Remote Sensing Centre (NRSC) Guidelines of 2011, regulate space-related operations. However, these rules and regulations alone are not enough to govern India’s expanding space sector. India is starting to gain traction as a prospective player in the global commercial space sector. Authorisation, contracts, dispute resolution, licensing, data processing and distribution related to earth observation services, certification of space technology, insurance, legal difficulties related to launch services, and stamp duty are just a few of the topics that need to be addressed. The relevant statutes and laws need to be updated to incorporate space law-related matters into domestic law.

India’s Space Presence

Space research activities were initiated in India during the early 1960s when satellite applications were in experimental stages, even in the United States. With the live transmission of the Tokyo Olympic Games across the Pacific by the American Satellite ‘Syncom-3’ demonstrating the power of communication satellites, Dr Vikram Sarabhai, the founding father of the Indian space programme, quickly recognised the benefits of space technologies for India.

As a first step, the Department of Atomic Energy formed the INCOSPAR (Indian National Committee for Space Research) under the leadership of Dr Sarabhai and Dr Ramanathan in 1962. The Indian Space Research Organisation (ISRO) was formed on August 15, 1969. The prime objective of ISRO is to develop space technology and its application to various national needs. It is one of the six largest space agencies in the world. The Department of Space (DOS) and the Space Commission were set up in 1972, and ISRO was brought under DOS on June 1, 1972.

Since its inception, the Indian space programme has been orchestrated well. It has three distinct elements: satellites for communication and remote sensing, the space transportation system and application programmes. Two major operational systems have been established – the Indian National Satellite (INSAT) for telecommunication, television broadcasting, and meteorological services and the Indian Remote Sensing Satellite (IRS) for monitoring and managing natural resources and Disaster Management Support.

Global Scenario

The global space race has been under way ever since the moon landing in 1969, and it has now become a new cold war among developed and developing nations. A nation’s interests and assets in space need to be safeguarded through effective policies and internationally ratified laws. Not all nations with a presence in space believe in a good-for-all policy, so preventive measures need to be incorporated into the legal system. A thorough legal framework for space activities is being developed by the United Nations Office for Outer Space Affairs (UNOOSA). Five international agreements on space law, beginning with the Outer Space Treaty, establish the foundation of international space law. The agreements address topics such as the peaceful use of space, preventing space from becoming militarised, and liability for damage caused by space objects. Both the United States and the United Kingdom have well-established space laws. In the US, the National Aeronautics and Space Act, passed in 1958, established the National Aeronautics and Space Administration (NASA) to oversee national space programmes. In the UK, the Outer Space Act of 1986 governs how citizens and businesses can engage in space activity.

Conclusion

India must create a thorough legal system to govern its space endeavours. The space sector needs a legal framework to avoid ambiguity and confusion, which may have detrimental effects. The peaceful use of space for the benefit of humanity should be covered by domestic space legislation in India. The overall scenario demonstrates the requirement for a clearly defined legal framework for the international acknowledgement of a nation’s space activities. India is fifth in the world for space technology, which is an impressive accomplishment, and a strong legal system will help India maintain its place in the space business.

Introduction

In an era where digital connectivity drives employment, investment, and communication, the most potent weapon of cybercriminals is ‘gaining trust’ with their sophisticated tactics. Prayagraj has been a recent battleground in India's cybercrime landscape. Within a one-year crackdown, over 10,400 SIM cards, 612 mobile device IMEIs, and 59 bank accounts were blocked, exposing a sprawling international fraud network. These activities primarily targeted unsuspecting individuals through Telegram job postings, fake investment tips, and mobile app scams, highlighting the darker side of convenience in cyberspace. With India now experiencing a wave of scams enabled by technology, this crackdown establishes a precedent for concerted cyber policing and awareness among citizens.

Digital Deceit: How the Scams Operated

SIM cards issued through fake or stolen identities are increasingly being used by cybercriminals in Prayagraj and elsewhere. These SIMs were the initial weapon in a highly organised fraud system, allowing criminals to operate anonymously while abusing messaging services like WhatsApp and Telegram. The gangs involved in these scams, some of which have been linked by reports to places like Nepal, Pakistan, China, Dubai, and Myanmar, enticed their victims with high-yield stock market advice, remote employment offers, and promises of weekend work. Once a target was engaged, the victim was slowly manipulated into sending money in the name of application fees, verification fees, or investment contributions.

API Abuse and OTP Interception

What's more alarming about these scams is their technical sophistication. According to Prayagraj's cybercrime squad, several syndicates are reported to have employed API-based mobile applications to intercept OTPs (One-Time Passwords) sent to Indian numbers. Such apps, cleverly disguised as genuine services or work-from-home software, collected personal details like bank account credentials and payment card data, allowing wrongdoers to carry out unauthorised transactions in a matter of minutes. The stolen funds were then quickly transferred through several mule accounts, rendering the money trail almost untraceable.

The Human Impact: How Citizens Were Trapped

Victims tended to come from job-hunting groups, students, or housewives seeking to earn additional income. Often, the scammers persuaded users to join Telegram channels providing free investment advice or job-referral-based schemes, creating an illusion of authenticity. Once on board, victims were sometimes even paid small commissions initially, creating a false sense of success. This tactic, known as “advance-fee confidence building,” made victims more likely to invest larger sums later, ultimately leading to complete financial loss.

Digital Arrest Threats and Bitcoin Ransom Scams

Aside from investment and job scam complaints, the cybercrime cell also saw several "digital arrest" scams, where victims were forced to send money under the threat of being implicated in criminal activities. Bitcoin extortion schemes were also used in some cases, with perpetrators threatening to expose victims' personal information or internet browsing history unless they were paid in cryptocurrency.

Law Enforcement’s Cyber Shield: Local Action, Global Impact

Recognising the extent of the threat, Prayagraj authorities implemented strategic measures to strengthen local policing. Cyber Units have been formed in each of the 43 police stations in the district, each made up of a sub-inspector, head constable, constable, lady constable, and computer operator. This decentralised model enables real-time response, improved victim support, and quicker forensic analysis of hacked devices. The nodal officer for cyber operations said that this multi-level action is not punitive but preventive, meant to break syndicates before more harm is caused.

CyberPeace Recommendations: Prevention is Power

As cybercrime grows more advanced, citizens must keep pace with it. Prayagraj's experience highlights the importance of public awareness, digital literacy, and instant response processes. To help prevent people from falling victim to such scams, CyberPeace advises the following:

- Don't click on dubious APK links sent on WhatsApp or Telegram.

- Do not share OTPs or confidential details, even if the source appears to be familiar.

- Never download unfamiliar apps that demand access to SMS or financial information.

- Block your SIM card, payment cards, and bank accounts at once if your phone is stolen.

- Report all cyber frauds to cybercrime.gov.in or your local Cyber Cell.

- Never join investment or job groups on social sites without verification.

- Refuse video calls from unknown numbers; some scammers use this method to record or blackmail victims.
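
The advice about apps that demand SMS access can be made concrete with a small defensive sketch. It assumes you already have the list of permissions an app requests, for example from its store listing or install prompt. The function name and the sample list are hypothetical. The two permission strings are standard Android permission names for reading and receiving SMS, which is the access an OTP-intercepting app would need.

```python
# Standard Android permission names that grant access to SMS content.
SMS_PERMISSIONS = {
    "android.permission.READ_SMS",
    "android.permission.RECEIVE_SMS",
}


def flag_sms_permissions(requested):
    """Return the SMS-related permissions found in an app's requested list, sorted."""
    return sorted(set(requested) & SMS_PERMISSIONS)


# Hypothetical permission list for an app posing as a work-from-home tool.
sample_app = [
    "android.permission.INTERNET",
    "android.permission.RECEIVE_SMS",
    "android.permission.READ_SMS",
]
flagged = flag_sms_permissions(sample_app)
```

A non-empty result is not proof of fraud, since legitimate messaging apps also request these permissions. It is a prompt to ask why an unfamiliar job or investment app would need to read your messages at all.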

Conclusion

The Prayagraj crackdown reveals both the magnitude and the versatility of present-day cybercrime. From trans-border cartels to Telegram job scams, the cyber front is as intricate as ever. But this incident also illustrates what can be achieved when technology, law enforcement, and public awareness come together. To stay safe from cyber threats, a cyber-conscious citizenry is as important for India as an effective cyber cell. At CyberPeace, we know that defending cyberspace begins with cyber resilience, and the story of Prayagraj should encourage communities everywhere to take active digital precautions.


Introduction

The Ministry of Electronics and Information Technology recently released the IT Intermediary Guidelines 2023 Amendment for social media and online gaming. The notification comes at a crucial time, while the Digital India Bill is being drafted. There is no denying that this bill, part of a series of bills focused on amendments and adding new provisions, will significantly improve the dynamics of cyberspace in India in terms of reporting, grievance redressal, accountability and protection of digital rights and duties.

What is the Amendment?

The amendment is significant for cyberspace because it introduces fact-checking, a crucial aspect of relaying information across online platforms. Misinformation and disinformation rose significantly during the Covid-19 pandemic, making fact-checking more important than ever. Policymakers have taken this into consideration and incorporated it into the Intermediary Guidelines. The key features of the guidelines are as follows:

- The phrase “online game,” which is now defined as “a game that is offered on the Internet and is accessible by a user through a computer resource or an intermediary,” has been added.

- A clause has been added that emphasises that if an online game poses a risk of harm to the user, intermediaries and complaint-handling systems must advise the user not to host, display, upload, modify, publish, transmit, store, update, or share any data related to that risky online game.

- A proviso to Rule 3(1)(f) has been added, which states that if an online gaming intermediary has provided users access to any legal online real money game, it must promptly notify its users of the change, within 24 hours.

- Sub-rules have been added to Rule 4 that focus on any legal online real money game and require large social media intermediaries to exercise further due diligence. In certain situations, online gaming intermediaries:

  - Are required to display a demonstrable and obvious mark of verification of such online game by an online gaming self-regulatory organisation on such permitted online real money game.

  - Will not offer to finance themselves or allow financing to be provided by a third party.

- Verification of real money online gaming has been added to Rule 4-A.

- The Ministry may name as many self-regulatory organisations for online gaming as it deems necessary for confirming an online real-money game.

- Each online gaming self-regulatory body will prominently publish on its website/mobile application the procedure for filing complaints and the appropriate contact information.

- After reviewing an application, the self-regulatory authority may declare a real money online game to be a legal game if it is satisfied that:

  - There is no wagering on the outcome of the game.

  - The game complies with the regulations governing the legal age at which a person can enter into a contract.

- The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 have a new rule 4-B (Applicability of certain obligations after an initial period) that states that the obligations of the rule under rules 3 and 4 will only apply to online games after a three-month period has passed.

- According to Rule 4-C (Obligations in Relation to Online Games Other Than Online Real Money Games), the Central Government may direct the intermediary to make necessary modifications without affecting the main idea if it deems it necessary in the interest of India’s sovereignty and integrity, the security of the State, or friendship with foreign States.

- Intermediaries, such as social media companies or internet service providers, will have to take action against content identified by the government's fact-checking unit or risk losing their “safe harbour” protections under Section 79 of the IT Act, which let intermediaries escape liability for what third parties post on their websites. This is problematic and unacceptable. Additionally, these notified revisions can circumvent the takedown order process described in Section 69A of the IT Act, 2000. They also conflict with the ruling in Shreya Singhal v. Union of India (2015), which established precise rules for content blocking.

- The government cannot decide whether any material is “fake” or “false” without a right of appeal or the possibility of judicial oversight, since the power to do so could be abused to thwart scrutiny or investigation by media groups. Government takedown orders have been issued for critical remarks or opinions posted on social media sites; most platforms have to abide by them, and just a few, like Twitter, have challenged them in court.

Conclusion

The new rules briefly cover fact-checking, content takedown by the government, and the relevance and scope of Sections 69A and 79 of the Information Technology Act, 2000. Hence, it is pertinent that intermediaries maintain compliance with the rules to ensure that the regulations are sustainable and efficient for the future. Despite these rules, the responsibility of netizens cannot be neglected, and hence active civic participation coupled with such efficient regulations will go a long way in safeguarding the Indian cyber ecosystem.