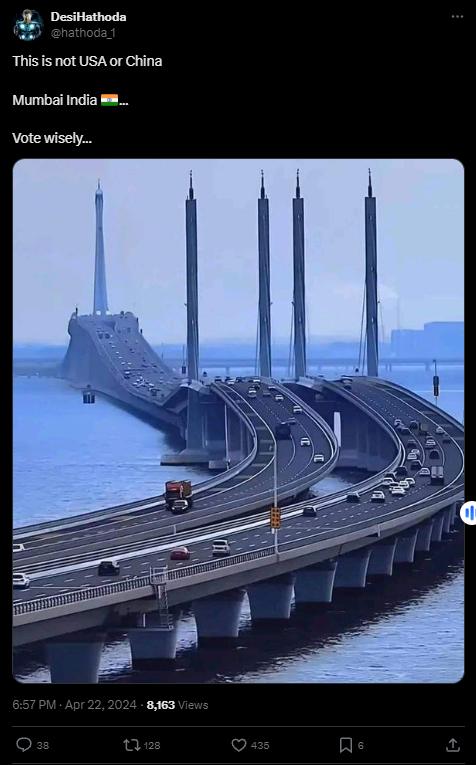

#FactCheck - Viral Image of Bridge Claimed to Be in Mumbai Is Actually of a Bridge in Qingdao, China

Executive Summary:

A photograph circulating on social media was claimed to show a bridge in Mumbai, India. The claim is false. Reverse image searches, examination of similar videos, and comparison with reputable news sources and Google Images show that the bridge in the viral photo is the Qingdao Jiaozhou Bay Bridge in Qingdao, China. Multiple pieces of evidence, including matching architectural features and corroborating videos, indicate that the bridge is not in Mumbai. No credible reports or sources were found to prove the existence of a similar bridge in Mumbai.

Claims:

Social media users claim that a viral image shows a bridge in Mumbai.

Fact Check:

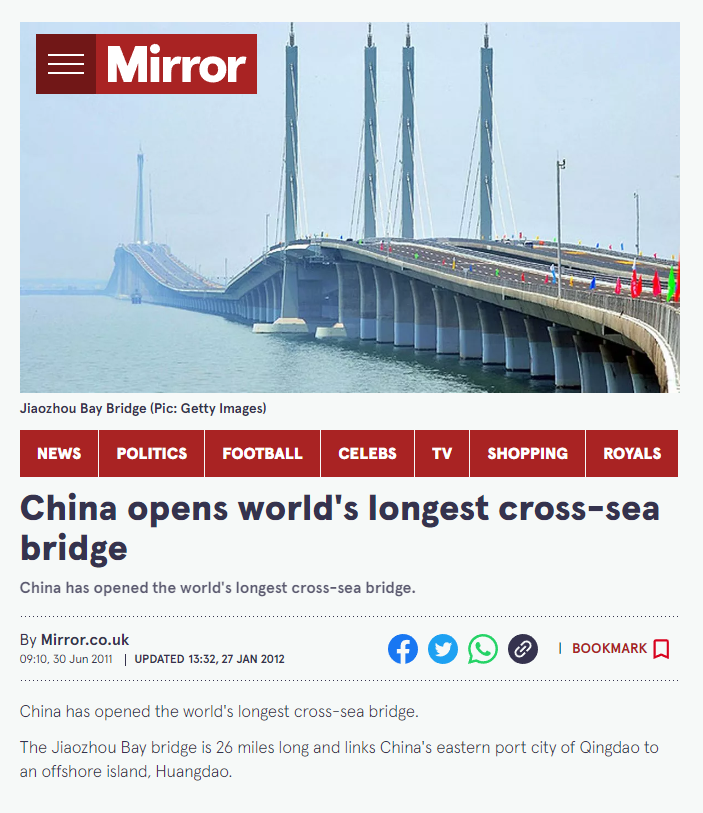

After receiving the image, we ran a reverse image search to look for leads or related information. We found an image published by the media outlet Mirror News. Although this alone was not conclusive, it shows the same upper pillars and the same white foundation pillars as the viral image.

The bridge is the Jiaozhou Bay Bridge in China, which connects the eastern port city of Qingdao to an offshore island named Huangdao.

Taking a cue from this, we searched for the bridge to find other related images or videos. We found a YouTube video uploaded by a channel named xuxiaopang that shows similar structures, such as the pillars and the road design.

The reverse image search also surfaced another news article about the same bridge in China, which closely resembles the bridge in the viral image.

Given the lack of evidence and credible sources for the opening of a similar bridge in Mumbai, and after a thorough investigation, we concluded that the claim made with the viral image is false and misleading. The bridge is located in China, not in Mumbai.

Conclusion:

In conclusion, fact-checking found that the claim that the viral image shows a bridge in Mumbai, India is false. The bridge in the picture is the Qingdao Jiaozhou Bay Bridge, located in Qingdao, China. Several sources, including reverse image searches, videos, and reliable news outlets, confirm this. No evidence suggests that such a bridge exists in Mumbai. The claim is therefore false: the actual bridge is in China, not in Mumbai.

- Claim: The bridge seen in the popular social media posts is in Mumbai.

- Claimed on: X (formerly known as Twitter), Facebook

- Fact Check: Fake & Misleading

Related Blogs

Introduction

You have probably heard of several cybercrime techniques by now, many of which we could never have anticipated. Reports are coming in from different parts of the country of video calls being used to cheat people. Through video calls, cybercriminals are making individuals victims of fraud. In these incidents, fraudsters use a screen recorder to film pornographic recordings of the victims, then blackmail them by emailing these videos and demanding money. Cybercriminals also keep improving their strategies to defraud more people. In this blog post, we will explore the tactics involved in this case, the psychological impact, and ways to combat it. Before we look at the case, let’s have a look at deepfakes, AI, and sextortion, and at how fraudsters use technology to commit crimes.

Understanding Deepfake

Deepfake technology is the manipulation or fabrication of multimedia content such as videos, photos, or audio recordings using artificial intelligence (AI) algorithms and deep learning models. These algorithms process massive quantities of data to learn and imitate human-like behaviour, allowing for the creation of very realistic synthetic media.

Individuals with malicious intent may change facial expressions, bodily movements, and even voices in recordings using deepfake technology, in effect replacing a person’s appearance with someone else’s. The resulting footage can be practically indistinguishable from authentic footage, making it difficult for viewers to tell the two apart.

Sextortion and technology

Sextortion is a form of internet blackmail in which offenders use graphic or compromising content to coerce others into offering money, sexual favours, or other concessions. This content is usually obtained by hacking, social engineering, or tricking people into providing sensitive information.

Deepfake technology combined with sextortion techniques has increased the impact on victims. Perpetrators can now use deepfakes to make and distribute pornographic or compromising videos or photographs that seem genuine but are completely fake. As the threat of exposure becomes more credible and harder to rebut, the stakes for victims rise.

How cyber crooks deceive

In the present case, cyber criminals first make video calls to people and capture the footage. They then manipulate the footage and merge it with a distorted naked video. As a result, the victim feels obliged to conceal the matter. Following that, they demand money as a ransom to stop releasing the doctored video to the victim’s contacts and on social media platforms. In this case, a video has also emerged in which a woman who was supposedly featured in the first video is depicted as having taken her own life because of the shame caused by the video’s release. These extra threats are intended solely to put psychological pressure and coercion on the victims.

Sextortionists have reached a new low by profiting from the misfortunes of others, notably by exploiting people who have died. By generating deepfake videos depicting these persons, the offenders aim to maximise emotional pain and pressure the victim into compliance. They use the compassion and emotion associated with tragedy to extract bigger ransoms from their victims.

This distressing exploitation not only adds urgency to the extortion demands but also preys on the victim’s sensitivity and emotional instability. The offenders even pressure victims through impersonation, threatening that the victims may end up in jail if the demands are not met.

Tactics used

The morphed death videos are carefully constructed to heighten emotional distress and instil terror in the targeted individual. By editing photographs or videos of the deceased, the offenders create unsettling scenarios that heighten the victim’s emotional response.

The psychological manipulation seeks to instil guilt, regret, and a sense of responsibility in the victim. The notion that they are somehow linked to the tragedy increases their emotional vulnerability, making them more susceptible to the demands of sextortionists. The offenders exploit these emotions, coercing victims into cooperation out of fear of being implicated in the apparent tragedy.

The impact on the victim’s mental well-being cannot be overstated. They may experience intense psychological trauma, including anxiety, depression, and post-traumatic stress disorder (PTSD). The guilt and shame associated with the false belief of being linked to someone’s death can have long-lasting effects on their emotional health and overall quality of life. Others may develop trust issues.

Law enforcement agencies advised

Law enforcement organisations are concerned about the growing menace of these illegal acts. The use of deepfake methods or other AI technologies to make convincing morphed videos demonstrates the scammers’ growing sophistication. These tools are fully capable of altering digital content so that it differs radically from the genuine footage, making it difficult for victims to detect the fake nature of the video.

Defence strategies to fight back: To combat sextortion, a proactive approach that empowers individuals and makes use of available resources is required. This section covers crucial anti-sextortion techniques such as reporting incidents, preserving evidence, raising awareness, and implementing digital security measures.

- Report the Incident: Sextortion victims should immediately notify law enforcement. Contact your local police or cybercrime department and give them all relevant information, including specifics of the extortion attempt, communication logs, and any other evidence that can assist the investigation. Reporting the incident is critical for holding criminals accountable and preventing further harm to others.

- Preserve Evidence: Preserving evidence is critical to building a solid case against sextortionists. Save and document all communication connected to the extortion, including text messages, emails, and social media conversations. Take screenshots, record phone calls (if legal), and save any other digital material or documents that might be used as evidence. This evidence can be useful in investigations and legal proceedings.
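As one possible way to document saved material, the sketch below records a SHA-256 hash, size, and timestamp for every file in a folder, so that it can later be shown that the files were not altered after they were saved. This is an illustrative assumption, not a procedure prescribed by any law enforcement agency; the function names `sha256_of_file` and `build_manifest` and the manifest layout are hypothetical.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def sha256_of_file(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(evidence_dir: str) -> list[dict]:
    """List every file under evidence_dir with its size, hash, and record time.

    The folder layout and field names are assumptions made for illustration.
    """
    root = Path(evidence_dir)
    entries = []
    for path in sorted(root.rglob("*")):
        if path.is_file():
            entries.append({
                "file": str(path.relative_to(root)),
                "size_bytes": path.stat().st_size,
                "sha256": sha256_of_file(path),
                "recorded_at_utc": datetime.now(timezone.utc).isoformat(),
            })
    return entries
```

Calling `build_manifest` on the folder that holds the screenshots and chat exports, and storing the result alongside them (for example as JSON), gives a simple record that can be handed over together with the files. Keep the original files untouched and work only on copies.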

Digital security: Implementing comprehensive digital security measures can considerably lower the vulnerability to sextortion attacks. Some important measures include:

- Use unique, complex passwords for all online accounts, and avoid reusing passwords across platforms. Consider using a password manager to create and securely store strong passwords.

- Enable two-factor authentication (2FA) whenever possible, which adds an extra layer of protection by requiring a second verification step, such as a code delivered to your phone or email, in addition to the password.

- Keep your operating system, antivirus software, and applications up to date. Software updates frequently include security patches that defend against known vulnerabilities.

- Adjust your privacy settings on social networking platforms and other online accounts to limit the availability of personal information and restrict access to your content.

- Be cautious when clicking on links or downloading files from unfamiliar or suspect sources. When exchanging personal information online, only use trusted websites.
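To make the first measure concrete, here is a minimal sketch of how a strong random password could be generated with Python's standard `secrets` module. The function name `generate_password`, the default length of 16, and the minimum length of 8 are assumptions chosen for illustration. A reputable password manager does the same job and also stores the result for you.

```python
import secrets
import string


def generate_password(length: int = 16) -> str:
    """Return a random password with lowercase, uppercase, digit, and symbol characters."""
    if length < 8:
        raise ValueError("length must be at least 8")
    alphabet = string.ascii_letters + string.digits + string.punctuation
    while True:
        # secrets.choice draws from a cryptographically secure random source.
        candidate = "".join(secrets.choice(alphabet) for _ in range(length))
        # Retry until every character class is present at least once.
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in string.punctuation for c in candidate)):
            return candidate


if __name__ == "__main__":
    print(generate_password())
```

Generate a separate password for each account rather than reusing one, in line with the advice above.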

Conclusion:

Combating sextortion demands a collaborative effort that combines proactive tactics and resources to confront this damaging practice. Individuals can actively fight back against sextortion by reporting incidents, preserving evidence, raising awareness, and implementing digital security measures. It is critical to empower victims, support their recovery, and work together to build a safer online environment where sextortionists are held accountable and everyone can navigate the digital world with confidence.

Executive Summary:

A video of a child covered in ash is circulating with the misleading claim that it is evidence of attacks against Hindu minorities in Bangladesh. However, the investigation revealed that the video is actually from Gaza, Palestine, and was filmed following an Israeli airstrike in July 2024. The claim linking the video to Bangladesh is false and misleading.

Claims:

A viral video claims to show a child in Bangladesh covered in ash as evidence of attacks on Hindu minorities.

Fact Check:

Upon receiving the viral posts, we ran a Google Lens search on keyframes of the video, which led us to an X post by Quds News Network. The post identified the video as footage from Gaza, Palestine, specifically capturing the aftermath of an Israeli airstrike on the Nuseirat refugee camp in July 2024.

The caption of the post reads, “Journalist Hani Mahmoud reports on the deadly Israeli attack yesterday which targeted a UN school in Nuseirat, killing at least 17 people who were sheltering inside and injuring many more.”

To verify further, we examined the video footage, in which the watermark of Al Jazeera News could be seen. We found the same video posted on the Instagram account on 14 July 2024, which confirmed that the child in the video had survived a massacre caused by the Israeli airstrike on a school shelter in Gaza.

Additionally, we found the same video uploaded to CBS News' YouTube channel, where it was clearly captioned as "Video captures aftermath of Israeli airstrike in Gaza", further confirming its true origin.

We found no credible reports or evidence linking this video to any incident in Bangladesh. This clearly indicates that the viral video was falsely attributed to Bangladesh.

Conclusion:

The claim that the video circulating on social media, which shows a child covered in ash, is evidence of attacks against Hindu minorities is false and misleading. The investigation shows that the video originated in Gaza, Palestine, and documents the aftermath of an Israeli airstrike in July 2024.

- Claims: A video shows a child in Bangladesh covered in ash as evidence of attacks on Hindu minorities.

- Claimed on: Facebook

- Fact Check: False & Misleading

Introduction

The rapid growth of high-capability AI systems has raised concerns worldwide about safety, accountability, and governance. California has responded by passing the Transparency in Frontier Artificial Intelligence Act (TFAIA), the first state statute focused on "frontier" (highly capable) AI models. Unlike the majority of state statutes, which target harms caused by AI models through consumer protection, this statute addresses the catastrophic and systemic risks to society associated with large-scale AI systems. Because California is a global technology leader, the TFAIA is positioned to have a significant impact on both domestic regulation and the evolution of international legal frameworks for AI technology, and it has the potential to influence corporate compliance practices and the establishment of global norms for the use of AI.

Understanding the Transparency in Frontier Artificial Intelligence Act

The Transparency in Frontier Artificial Intelligence Act provides a specific regulatory process for companies that create sophisticated AI systems with societal, economic, or national security implications. Covered developers are required to publish an extensive safety and transparency policy that details how they manage risk throughout the AI lifecycle. The act also requires developers to notify the government in a timely manner of any significant incidents or failures involving their deployed frontier models.

A significant aspect of the TFAIA is that it establishes the concept of "process transparency". It does not explicitly control how AI developers create their models; rather, it holds them accountable for their internal safety governance by mandating documented safety frameworks that outline risk assessment, mitigation, and monitoring processes. The act also allows developers to protect their trade secrets, patents, and national defense concerns through limited opportunities for exemption and redaction of their documents, so that data openness is balanced against the safeguarding of sensitive information.

Extraterritorial Impact on Global AI Developers

While the Act is a state law, its implementation has far-reaching effects. Many of the largest AI companies have facilities, research labs, or customers in California, so complying with the TFAIA is a commercial necessity for them. Adopting a unified compliance model across regions also lets companies avoid developing duplicate compliance models.

The same pattern has occurred in other regulatory areas, such as data protection, where one region's regulations effectively became the global compliance benchmark. The TFAIA could similarly serve as a global standard for transparency in frontier AI and shape how companies build their governance structures worldwide, even in regions that have no explicit regulations of their own.

Influence on International AI Regulatory Models

The TFAIA offers a unique perspective on global discussions about regulating AI. In contrast to other legislation, which defines different levels of risk depending on the type of AI, the TFAIA specifically targets high-impact or emerging technologies. Other nations may see value in this model of tiered regulation based on capability and apply it to their own regulation of AI, placing the strictest obligations on the systems with the greatest potential for harm.

The TFAIA may serve as a guide for international public policy makers by showing how they can reference existing standards and best practices in developing regulations, thus improving interoperability and potentially lessening regulatory barriers to cross-border AI innovations.

Corporate Governance, Compliance Costs, and Competition

From an industry perspective, the Act significantly changes the way companies govern themselves. Developers are now required to carry out thorough risk assessments and red-teaming exercises, maintain incident response protocols, and provide board oversight of AI safety and regulation. Involving more people in this process increases accountability, but it also adds to the cost of compliance.

The burden of compliance will be easier for large tech companies to bear than for smaller companies or start-ups, so large tech companies may solidify their dominance over the development of frontier AI. Smaller and newer developers may be blocked from entering the market unless some form of proportional or scaled compliance mechanism emerges. These developments raise issues of innovation policy and competition law at a global scale that regulators will need to address alongside AI safety concerns.

Transparency, Public Trust, and Accountability

The TFAIA strengthens the ability of citizens, researchers, and journalists to oversee the development and use of artificial intelligence (AI) by requiring public disclosure of the safety frameworks for AI systems. These disclosures will allow them to critically evaluate corporate claims of responsible AI development. Over time, this scrutiny could increase trust in publicly regulated AI systems and expose businesses with poor risk management processes.

However, how useful this transparency is depends on the quality and comparability of the information disclosed. Many current disclosures are either too vague or too complex, which limits meaningful oversight. There should be a push for clearer guidance and standardised disclosure forms to support public accountability and uniformity between countries.

Conclusion

The Transparency in Frontier Artificial Intelligence Act is a significant development in the regulation of artificial intelligence, addressing the distinct risk profile of a new generation of advanced, highly capable AI systems. This California law is likely to have global impact because it will change how technology companies operate, how regulatory frameworks are created, and how standards are developed to govern and oversee frontier AI. The Act relies on transparency as a means of governing these systems rather than solely on technical requirements. As other regions face challenges similar to those confronting the United States with this new generation of AI, California's approach will likely be used as an example for how AI laws are written in the future and may help develop a more unified and responsible international AI regulatory framework.

References

- https://www.whitecase.com/insight-alert/california-enacts-landmark-ai-transparency-law-transparency-frontier-artificial

- https://www.gov.ca.gov/2025/09/29/governor-newsom-signs-sb-53-advancing-californias-world-leading-artificial-intelligence-industry/

- https://www.mofo.com/resources/insights/251001-california-enacts-ai-safety-transparency-regulation-tfaia-sb-53

- https://www.dlapiper.com/en/insights/publications/2025/10/california-law-mandates-increased-developer-transparency-for-large-ai-models