#FactCheck - Suryakumar Yadav–Salman Ali Agha Handshake Row: Viral Image Found AI-Generated

Executive Summary

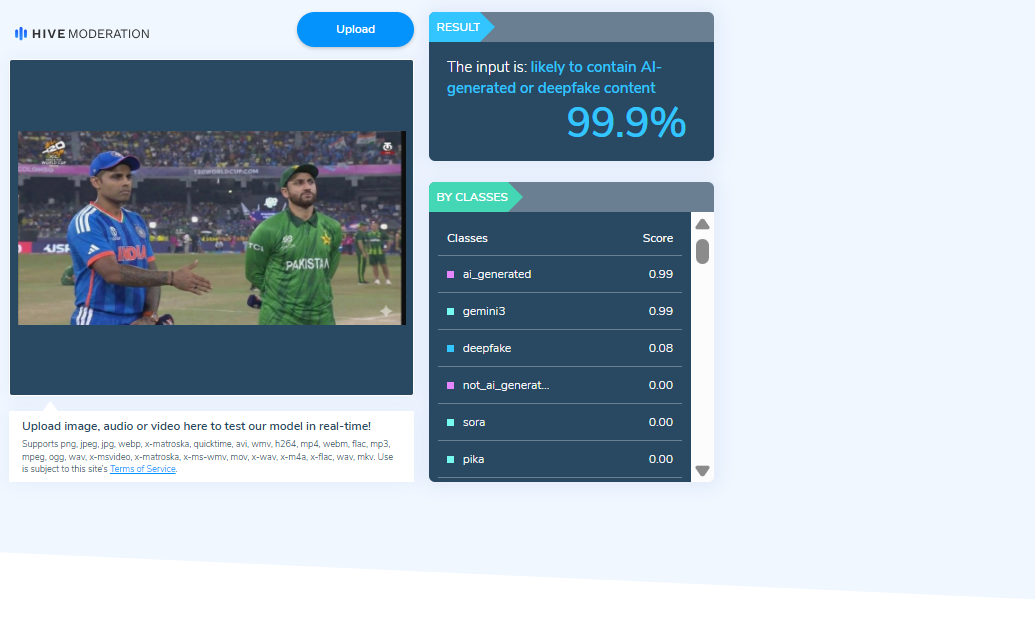

An image circulating on social media claims to show Suryakumar Yadav, captain of the Indian cricket team, extending his hand to greet Pakistan’s skipper Salman Ali Agha, who allegedly refused the gesture during the India–Pakistan T20 World Cup match held on February 15. Users shared the image as evidence of a real incident from the high-profile clash. However, research by CyberPeace found that the image is AI-generated and was falsely circulated to mislead viewers.

Claim

On February 15, an X account named “@iffiViews,” reportedly operated from Pakistan, shared the image claiming it was taken during the India–Pakistan T20 World Cup match at the R. Premadasa Stadium in Colombo. The viral image appeared to show Yadav attempting to shake hands with Agha, who seemed to decline the gesture. The post quickly gained traction online, attracting around one million views at the time of reporting. Here are the link and the archive link to the post, along with a screenshot.

- https://x.com/iffiViews/status/2023024665770484206?s=20

- https://archive.ph/xvtBs

Fact Check:

To verify the authenticity of the image, researchers closely examined the visual and identified a watermark associated with an AI image-generation tool. This strongly indicated that the image had been digitally created and did not depict an actual event.

The image was further analysed using an AI detection tool, which indicated a 99.9 percent probability that the content was artificially generated or manipulated.

Researchers also conducted keyword searches to check whether the two captains had shaken hands during the match. The searches surfaced media reports confirming that the traditional handshake between Indian and Pakistani players has been discontinued since the Asia Cup 2025, in both men’s and women’s cricket. A report published by The Times of India on February 15 confirmed that no such customary exchange took place during the match between the two teams in Colombo.

Conclusion

The viral image claiming to show Suryakumar Yadav attempting to shake hands with Salman Ali Agha is not authentic. The visual is AI-generated and has been shared online with misleading claims.

Related Blogs

India’s cities are rapidly embracing digital technologies, transforming the way essential urban services operate. From traffic management and water supply to online grievance redressal, connected systems are making city life more efficient. As the Prime Minister has emphasised, smart cities are not just a fancy concept; they aim to ensure basic services, including housing and infrastructure for the urban poor, are delivered comprehensively and equitably.

But with growing reliance on digital systems in daily life, improved cybersecurity has become essential. A single breach in digital public systems could jeopardise citizen data and interrupt vital services. In light of this, MoHUA organised the National Conference on Making Cities Cyber Secure in collaboration with MHA and MeitY, in keeping with Digital India's goal of creating a safer online environment for all. More than 300 representatives from Central Ministries, National Cybersecurity Agencies, State Governments, State IT and Urban Development Secretaries, Additional Director Generals, Municipal Commissioners, CEOs of Smart Cities, and organisations such as CERT-In, NCIIPC, I4C, and STQC attended the conference.

Key Initiatives Presented

MoHUA showcased a series of city-level cybersecurity initiatives designed to create a common framework for all smart cities. These include:

- Mandatory appointment of city-level Chief Information Security Officers (CISOs) to maintain and oversee the security of digital infrastructure in smart cities.

- Regular cybersecurity audits to identify and address vulnerabilities in their systems.

- Consistent Risk Management Across Services: A structured approach to risk management will be used so that critical areas like traffic systems, utilities and public services all follow the same high standards of protection.

CISOs and Cybersecurity Frameworks

At the conference, the Union Home Secretary underscored a clear message: every city needs its own Chief Information Security Officer (CISO) backed by a capable technical team. This isn’t just a box-ticking exercise. A dedicated CISO brings focus to meeting national security norms, coordinating quick responses to cyber incidents, and lifting the overall level of cyber hygiene in the city.

Naming a single officer also creates accountability and gradually builds local expertise instead of constant dependence on outside consultants. Over time, this leadership position can help cities develop their own in-house capacity to manage the increasingly complex digital systems that keep public services running.

The SPV Dimension: Beyond Implementation

An important theme of the conference was the future of Special Purpose Vehicles (SPVs), the government-backed companies set up under the Companies Act, 2013, with joint shareholding between State/UT administrations and Urban Local Bodies, which have served as the implementing arms of the Smart Cities Mission. Drawing from Advisory No. 27 (June 2025), stakeholders discussed repositioning SPVs as dynamic, innovation-driven bodies capable of supporting long-term urban development beyond the initial project phase.

Key points included:

- Expanding SPVs’ role in consultancy, investment facilitation, technology integration, and policy research.

- Ensuring SPVs act as hubs of expertise and innovation, rather than just project managers.

- Aligning SPV functions with the evolving cybersecurity and technology needs of urban local bodies.

This expanded mandate could allow SPVs to become sustainable institutions that continuously support cities in managing digital risks and adopting new technologies responsibly.

Building a Culture of Cyber Preparedness

One clear takeaway from the conference was that cybersecurity can’t simply be added on later: it needs to be part of every step of digital planning, from purchasing technology and designing systems to daily operations. Experts from the Intelligence Bureau (IB) pointed out that as more government services go online, the potential risks grow, and cities must always be ready to respond. They highlighted emerging cyber risks linked to the rapid digitisation of governance.

Practical steps highlighted included regular security audits, penetration testing, staff training, and campaigns to raise awareness among citizens. Equally important are having a CISO to lead cybersecurity efforts and building strong communication channels between city teams, state agencies, and national cybersecurity bodies, so that information is shared promptly and responses can be coordinated effectively.

Conclusion

The Ministry of Home Affairs’ directive on strengthening cybersecurity in smart cities represents a major milestone in safeguarding India’s urban digital infrastructure and reflects the government's proactive approach to cybersecurity. By mandating the appointment of Chief Information Security Officers (CISOs), enforcing regular audits, and promoting structured risk management, the MHA has set clear expectations for city administrations. The conference also highlighted the evolving role of Special Purpose Vehicles (SPVs) in supporting long-term technological resilience. Embedding cybersecurity at every stage of planning, from system design to daily operations, signals a shift toward a culture of proactive defence. As highlighted by the Intelligence Bureau, emerging cyber risks linked to the rapid digitisation of governance make robust cybersecurity measures the need of the hour for India’s smart cities.

References

- https://www.pib.gov.in/PressReleasePage.aspx?PRID=2146180

- https://www.pib.gov.in/PressReleasePage.aspx?PRID=2135474

- https://m.economictimes.com/news/economy/infrastructure/pm-narendra-modi-launches-smart-city-projects/articleshow/52916581.cms

- https://the420.in/mha-orders-stronger-cybersecurity-in-smart-cities/

- https://www.newindianexpress.com/nation/2025/Sep/20/tighten-cyber-security-measures-in-smart-cities-mha-to-housing-ministry


At the recent Semicon India 2025, the Prime Minister declared, “when the chips are down, you can bet on India”. The event showcased the country’s first indigenous microprocessor, Vikram, developed by ISRO’s Semiconductor Lab, and it was announced that commercial chip production will begin by the end of 2025. India aims to become a global player in semiconductor production and to build self-reliance in a world where global supply chains are shifting rapidly.

Why Semiconductors Matter

Semiconductors power almost everything around us, from laptops and air conditioners to cars and even the tiniest gadgets we hardly notice. They’ve rightly been called the “oil of the digital age” because our entire digital world depends on them. But the global supply chain for chips is heavily concentrated. Taiwan alone makes over 60% of the world’s semiconductors and nearly 90% of the most advanced ones. Rising tensions between China and Taiwan have only underscored how fragile and risky this dependence is for the rest of the world. For India, building its own semiconductor base is not just about technology; it is about economic security and reduced dependence on imports.

India’s Push: The Numbers and Projects

The government has committed nearly US$18 billion across 10 projects, one of the country’s largest industrial bets in decades. Under the Production Linked Incentive (PLI) scheme, ₹76,000 crore (about US$9.1 billion) was set aside, most of which has already been allocated.

Key developments include:

- Vikram processor – developed at ISRO’s Semiconductor Lab, fabricated on 180nm technology.

- CG Power facility in Sanand, Gujarat – launched in 2024, scaling chip assembly and testing.

- Micron’s investment – ₹22,500+ crore in Gujarat for packaging and testing.

- Tata Electronics–PSMC partnership – ₹91,000 crore tie-up with Taiwan’s Powerchip Semiconductor Manufacturing Corporation (PSMC) to build India’s first semiconductor fab.

The domestic market, valued at US$38 billion in 2023, is expected to touch US$100–110 billion by 2030 if growth sustains.
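As a rough check on what "if growth sustains" implies, the projection can be converted into a compound annual growth rate. This is an illustrative calculation, assuming steady compounding over the seven years from 2023 to 2030; the rate itself is not a figure from the cited sources.

```latex
\text{CAGR} = \left(\frac{V_{2030}}{V_{2023}}\right)^{1/7} - 1

\text{Lower bound: } \left(\frac{100}{38}\right)^{1/7} - 1 \approx 2.632^{1/7} - 1 \approx 0.148

\text{Upper bound: } \left(\frac{110}{38}\right)^{1/7} - 1 \approx 2.895^{1/7} - 1 \approx 0.164
```

In other words, reaching US$100–110 billion by 2030 would require the domestic market to grow by roughly 15 to 16 percent every year.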

The Technology Gap

While the Vikram chip, a 32-bit microprocessor, is a proud milestone, it also highlights the technology gap India faces. The chip was fabricated using a 180nm CMOS process, which was cutting-edge around 2000. Today, companies like TSMC and Samsung are already producing 3nm chips for smartphones and AI servers, and companies such as Nvidia and Apple design chips with 64-bit processing capabilities.

This means that India's main focus, self-reliance at the mature end of the spectrum that serves space, defence, automotive, and electronics applications, is far from the global cutting edge. Bridging this gap will require both time and deep technical expertise.
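One concrete way to see what the 32-bit versus 64-bit distinction means is the largest memory space each word size can address directly. This is a simplified illustration, assuming a flat, byte-addressed memory; real processors may address less (or, with extensions, more), and word width affects more than addressing.

```latex
2^{32}\ \text{bytes} = 4{,}294{,}967{,}296\ \text{bytes} = 4\ \text{GiB}

2^{64}\ \text{bytes} = 18{,}446{,}744{,}073{,}709{,}551{,}616\ \text{bytes} = 16\ \text{EiB}

\frac{2^{64}}{2^{32}} = 2^{32} \approx 4.3 \times 10^{9}
```

Under this simplification, a 64-bit address space is about 4.3 billion times larger than a 32-bit one, which is one reason modern phones, servers, and AI hardware use 64-bit designs.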

Talent and Design Strengths

On the positive side, India already contributes around 20% of global semiconductor design talent. Two advanced design centers, one in Noida and another in Bengaluru, are working on 3nm designs. The government’s Design Linked Incentive scheme has cleared more than 20 projects to nurture chip-design startups.

Over 60,000 engineers have been trained under various programs, but scaling this to the hundreds of thousands needed for fabs remains a challenge. Unlike software development, semiconductor fabrication demands highly specialised skills in process engineering, yield optimization, and supply chain logistics.

Lessons from Global Players

Countries like Taiwan, South Korea, and the US didn’t build their chip industries overnight. Taiwan’s TSMC spent decades and billions of dollars mastering yield rates and building trust with clients. The US recently passed the CHIPS and Science Act to revive domestic production, while the EU has its own Chips Act. Japan, too, has pledged billions, including ¥10 trillion of investment in India over ten years.

These examples show that success depends not just on funding but also on coordination between government and private players, consistent execution, ecosystem building, and global partnerships.

The Challenges Ahead

India’s ambitions face several hurdles:

- Capital intensity – A single leading-edge fab costs US$10–20 billion and requires constant upgrades.

- Supply chain complexity – Hundreds of chemicals, gases, and precision tools are needed, many of which India doesn’t yet produce domestically.

- Technology transfer – Advanced lithography machines (from ASML in the Netherlands, for example) are tightly controlled and not easily available.

- Execution risks – Moving from announcements to commercially viable fabs with competitive yields is where many countries have stumbled.

The Way Forward

India has big ambitions in semiconductor design and manufacturing, with the goal of becoming a major global exporter rather than an importer. The country appears to be taking a step-by-step approach, starting with assembly, testing, and mature-node fabs while simultaneously investing in design, research, and talent. Successful global players in this industry have generally mastered older nodes before advancing to cutting-edge ones.

At the same time, international collaborations with partners such as Micron, Tata-PSMC, and Japan will be critical for technology transfer and capacity building. If India can combine its engineering talent, rising domestic demand, and government backing through the PLI scheme while driving global collaborations, the outlook could be promising.

Conclusion

India’s semiconductor story is just beginning, but the direction is clear. The Vikram processor and the investment announcements at Semicon India 2025 show the government's intent. The hard part lies ahead: moving from prototypes to large-scale production and globally competitive fabs in an industry that demands substantial investment, flawless execution, and years of patience.

Yet the stakes couldn’t be higher. Semiconductors will shape the future of economies and national security. If India plays its cards right by nurturing talent, investing in research and innovation, and driving global partnerships, the dream of becoming a global semiconductor hub may well move from ambition to reality.

References

- https://www.ndtv.com/india-news/when-chips-are-down-bet-on-india-pm-narendra-modis-big-semiconductor-push-6539317

- https://www.indiatoday.in/science/story/what-is-vikram-32-bit-chip-presented-to-pm-modi-at-semicon-india-2025-2780582-2025-09-02#

- https://www.visionofhumanity.org/the-worlds-dependency-on-taiwans-semiconductor-industry-is-increasing/

- https://m.economictimes.com/tech/artificial-intelligence/tata-electronics-and-powerchip-semiconductor-manufacturing-corporation-to-build-indias-first-semiconductor-fab/articleshow/113694273.cms

- https://www.business-standard.com/economy/news/10-trillion-yen-in-10-years-japan-pledges-big-investment-in-india-125082901564_1.html

- https://www.oecd.org/content/dam/oecd/en/publications/reports/2023/06/vulnerabilities-in-the-semiconductor-supply-chain_f4de7491/6bed616f-en.pdf

- https://techwireasia.com/2025/09/semiconductor-india-commercial-production-2025/

Artificial Intelligence (AI) offers a wide range of services and continues to attract curiosity and experimentation. It has altered how we create and consume content: specific prompts can now be used to generate desired images, enhancing storytelling and even education. However, because such content can influence public perception, its potential to spread misinformation must also be noted. These images can be realistic enough that an untrained eye may not recognise them as artificially generated. And because AI models produce output from patterns in the data they were trained on, gaps in contextual knowledge, along with human biases in how prompts are framed, also come into play. The stakes are higher with subjects such as history, where there is a fine line between content created purely for entertainment and misinformation spread through unchecked biases and inaccuracies. For instance, an AI-generated image of London during the Black Death might include inaccurate details, misleading viewers about the past.

The Rise of AI-Generated Historical Images as Entertainment

Recently, AI-generated images and videos depicting historical events from the point of view of people who were present have been circulating widely online. Examples include the streets of London during the Black Death in the 1300s and the eruption of Mount Vesuvius at Pompeii. Hogne and Dan, two creators who run the TikTok accounts POV Lab and Time Traveller POV, say they make such videos because seeing the past from a first-person perspective is an interesting way to bring history back to life, highlight its most striking parts, and help the audience learn something new. Because such content is largely sensationalised for visual impact and storytelling, historians have called it out for inaccuracies in period-specific details. The creators themselves acknowledge these inaccuracies, describing their work as artistic interpretation rather than fact-checked documentary.

It is important to note that AI models may inaccurately depict objects (issues with lateral inversion), people (anatomical implausibilities), or scenes due to a "present-ist" bias. As noted by Lauren Tilton, an associate professor of digital humanities at the University of Richmond, many AI models rely primarily on data from the last 15 years, which makes them prone to modern-day distortions, especially when analysing or creating historical content. The stated aim of such content is to spark interest rather than to replace genuine historical facts, and engagement with these images and videos is thought to stem partly from fascination with new AI tools. Chatbots such as Hello History and Character.ai, which simulate conversations with historical figures, have also piqued curiosity.

Although this makes for an interesting perspective, our inherent biases shape how we perceive the information presented. Dangerous consequences include feeding conspiracy theories and erasing facts, since such content is geared primarily toward attracting attention and entertaining. Exposing an impressionable audience with shorter attention spans to such content makes the matter more serious. In such cases, disclosing the sources used to create the content becomes important.

Acknowledging the risks posed by AI-generated images and their potential to create misinformation, the Government of Spain has moved to regulate AI-generated content. It has approved a bill that mandates the labelling of AI-generated images, with failure to comply attracting massive fines (up to $38 million or 7% of a company's global annual turnover). The aim is to ensure that creators label their content, which would help people distinguish artificially generated images from authentic ones.

The Way Forward: Navigating AI and Misinformation

While AI-generated images open up exciting possibilities for storytelling and sparking curiosity, their potential to spread misinformation should not be overlooked. To address these challenges, the following measures should be encouraged:

- Media Literacy and Awareness – Today, critical thinking and media literacy among content consumers are imperative. Awareness and understanding of, and access to, tools that help detect AI-generated content can also prove helpful.

- AI Transparency and Labeling – Regulations similar to Spain’s labelling bill could guide people who have not yet learned to tell AI-generated content apart from authentic content.

- Ethical AI Development – AI developers must prioritize ethical considerations, training models on diverse, historically accurate datasets and sources to minimise biases.

As AI continues to evolve, balancing innovation with responsibility is essential. By taking proactive measures early, we can harness AI's potential while safeguarding the integrity of, and trust in, AI-generated images and the sources behind them.

References:

- https://www.npr.org/2023/06/07/1180768459/how-to-identify-ai-generated-deepfake-images

- https://www.nbcnews.com/tech/tech-news/ai-image-misinformation-surged-google-research-finds-rcna154333

- https://www.bbc.com/news/articles/cy87076pdw3o

- https://newskarnataka.com/technology/government-releases-guide-to-help-citizens-identify-ai-generated-images/21052024/

- https://www.technologyreview.com/2023/04/11/1071104/ai-helping-historians-analyze-past/

- https://www.psypost.org/ai-models-struggle-with-expert-level-global-history-knowledge/

- https://www.youtube.com/watch?v=M65IYIWlqes&t=2597s

- https://www.vice.com/en/article/people-are-creating-records-of-fake-historical-events-using-ai/?utm_source=chatgpt.com

- https://www.reuters.com/technology/artificial-intelligence/spain-impose-massive-fines-not-labelling-ai-generated-content-2025-03-11/?utm_source=chatgpt.com

- https://www.theguardian.com/film/2024/sep/13/documentary-ai-guidelines?utm_source=chatgpt.com