#FactCheck - Misleading Video of Dubai Airport Attack Circulates Online, Found AI-Generated

Executive Summary

Amid rising tensions in the Middle East following attacks on Iran by the United States and Israel, a video is being shared on social media with the claim that it shows a recent attack at Dubai International Airport. Research by CyberPeace found the viral claim to be false: the video is not real but was created using artificial intelligence technology.

Claim:

An Instagram user shared the viral video on March 1, 2026, claiming it shows an attack at Dubai Airport. The link to the post, the archive link, and a screenshot are provided below.

Fact Check:

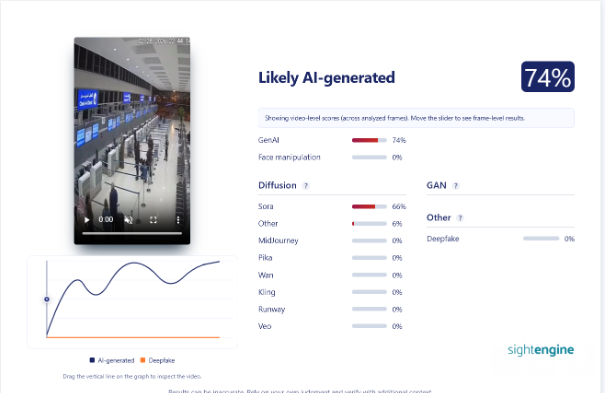

To verify the viral claim, we searched Google using relevant keywords but found no credible media report confirming it. On closely examining the viral video, we noticed several unusual visuals and technical inconsistencies, raising suspicion that it might be AI-generated. To check this, we scanned the video using the AI detection tool Sightengine, which indicated an approximately 74 percent likelihood that the video was AI-generated.

Conclusion:

Our research found that the viral video is not real but has been created using artificial intelligence technology.

Related Blogs

Introduction

Google India has announced sachet loans on the Google Pay application to help small businesses in the country. According to the company, merchants in India often need smaller loans, which prompted it to launch sachet loans on GPay. The company will offer loans to small businesses that can be repaid in easy instalments. To provide the loan services, Google Pay has partnered with DMI Finance. The announcement was made at Google for India 2023, the company's flagship event for unveiling its India-specific initiatives.

What is a Sachet Loan?

Lending is a primary backbone of the global banking system. As transactions and banking operations have shifted massively to digital modes, many online platforms have emerged. With the advent of QR codes, Indians have widely adopted the Unified Payments Interface (UPI) for small, everyday payments. Sachet loans emerged in response to this trend. They are small-ticket loans ranging from Rs 10,000 to Rs 1 lakh, with repayment tenures between 7 days and 12 months. This form of nano-credit addresses immediate financial needs and is designed for swift approval and disbursement. Sachet loans are one of the most sought-after forms of loans in the Western world. Their accessibility and easy repayment options have made them a successful form of lending, which in turn has sparked the interest of the tech giant Google in offering similar services in India.

Google Pay

Given that UPI is the most preferred form of online payment in India, Google entered the market with Tez in 2017 (rebranded as Google Pay in 2018), and the app now has 67 million active users in India. Google Pay has a 36.10% mobile application market share in India, and 26% of UPI payments have been made through Google Pay. Google Pay adoption for in-store payments in India was higher in 2023 than in early 2019, signalling growing use among consumers. These figures reflect the share of survey respondents who said they had used Google Pay in the previous 12 months, either for point-of-sale (POS) transactions with a mobile device in stores and restaurants or for online shopping. Eight out of 10 respondents from India said they had used Google Pay in a POS setting between April 2022 and March 2023, and seven out of 10 said they had used it for online payments over the same period.

In the wider Indian UPI landscape, the following points are worth noting:

- PhonePe, Google Pay and Paytm accounted for nearly 96% of all UPI transactions by value in March 2023

- PhonePe remained the top UPI app, processing 407.63 Cr transactions worth INR 7.07 Lakh Cr

- While Google Pay and Paytm retained second and third positions, respectively, Amazon Pay pushed CRED to the fifth spot in terms of the number of transactions

- Walmart-owned PhonePe, Google Pay and Paytm continued their dominance in India’s UPI payments space, together processing 94% of UPI transactions by volume in March 2023.

- According to data from the National Payments Corporation of India (NPCI), the top three apps accounted for nearly 96% of all UPI transactions by value. This translates to about 841.91 Cr transactions worth INR 13.44 Lakh Cr across the three apps.

Conclusion

Google has been instrumental in creating and providing best-in-class services that are easily accessible to internet users. Sachet loans are the latest service introduced on its platform, and the wide reach of GPay will go a long way in extending financial services to underserved sections of the Indian population. A similar shift can be seen on other payment platforms such as PayPal and Paytm, which highlights India's massive potential to lead the world in online banking and UPI transactions. By some estimates, about 40% of the world's digital payment transactions take place in India. These factors, together with the Digital India and Make in India initiatives, make India a leading global destination for investment in the current era.

References

- https://www.livemint.com/companies/news/google-enters-retail-loan-business-in-india-11697697999246.html

- https://www.statista.com/statistics/1389649/google-pay-adoption-in-india/#:~:text=Eight%20out%20of%2010%20respondents,same%20time%20for%20online%20payments

- https://playtoday.co/blog/stats/google-pay-statistics/#:~:text=67%20million%20active%20users%20of%20Google%20Pay%20are%20in%20India.&text=Google%20Pay%20users%20in%20India,in%2Dstore%20and%20online%20purchases.

- https://inc42.com/buzz/phonepe-google-pay-paytm-process-94-of-upi-transactions-march-2023/

Executive Summary

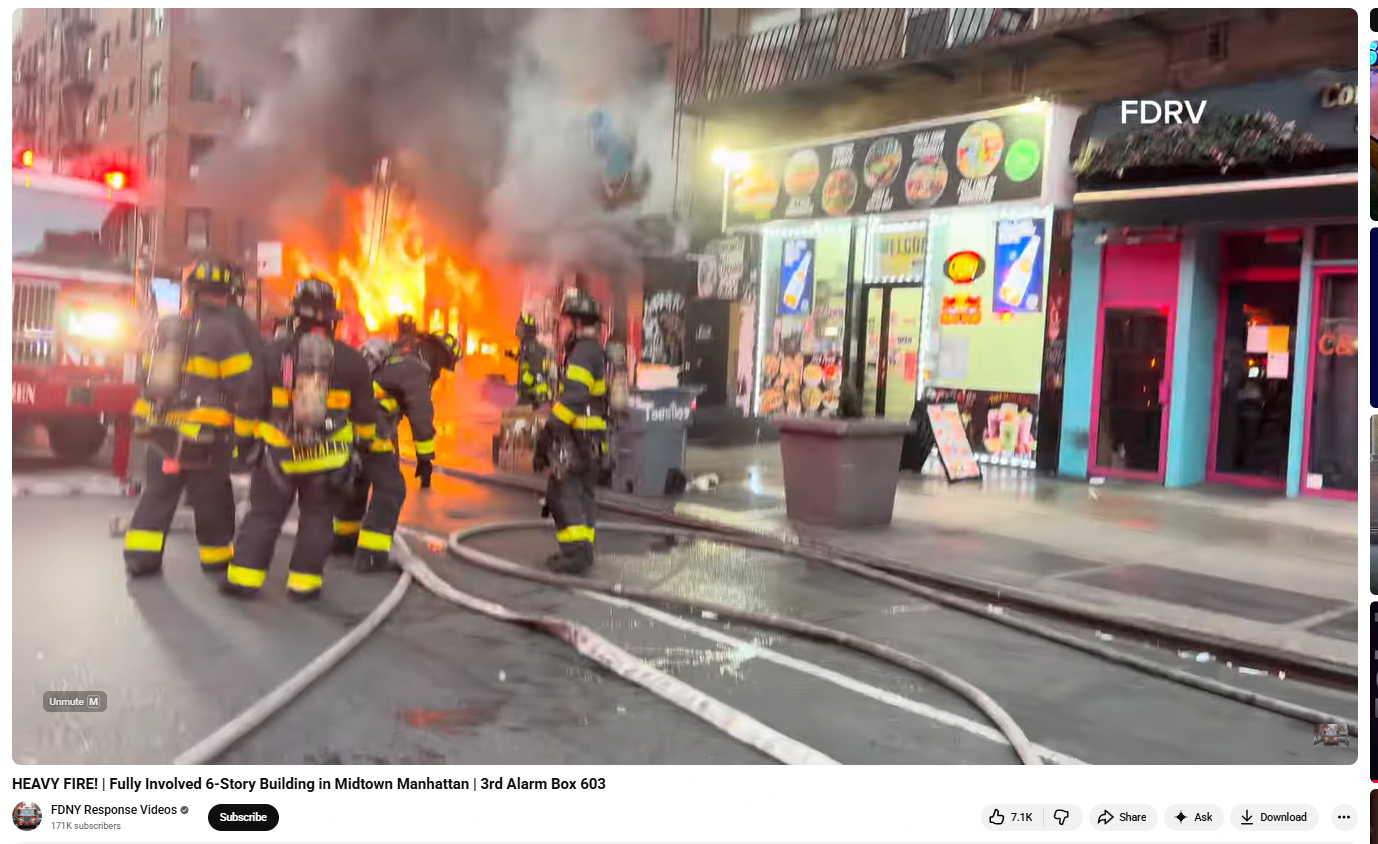

A video showing a building engulfed in flames is going viral on social media, with users claiming that it depicts a Hezbollah attack on Israel’s military headquarters. The clip is being shared with assertions that several Israeli soldiers were killed and many remain trapped inside the burning structure. However, research by the CyberPeace Research Wing found the claim to be false. The video is not from Israel; it shows a fire at a residential building in Manhattan, New York City.

Claim

A Facebook user, ‘Nazim Khan Tirwadiya’, shared the video on April 15, 2026, claiming that Hezbollah had targeted an Israeli military headquarters, resulting in heavy casualties and ongoing fire.

Fact Check

To verify the claim, we extracted keyframes from the viral video and conducted a reverse image search. This led us to a longer version of the same clip uploaded on the YouTube channel “FDNY Response Videos” on April 12, 2026. The video description identified the location as Manhattan, New York City.
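The article does not say which tool was used to pull the keyframes. As a minimal sketch under stated assumptions, one common approach is frame differencing: keep a frame whenever it differs sharply from the last kept frame, then submit those frames to a reverse image search. The example assumes the frames have already been decoded into small grayscale pixel grids (in practice a decoder such as FFmpeg or OpenCV would produce them); the function names `select_keyframes` and `mean_abs_diff` and the threshold of 30 are hypothetical choices for illustration.

```python
from typing import List, Sequence

# Assumption: a frame is a grayscale grid of 0-255 pixel values.
Frame = Sequence[Sequence[int]]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two same-sized frames."""
    total = 0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count if count else 0.0


def select_keyframes(frames: List[Frame], threshold: float = 30.0) -> List[int]:
    """Return indices of frames that differ strongly from the last keyframe.

    The first frame is always kept; a later frame becomes a keyframe when its
    mean absolute difference from the previous keyframe exceeds `threshold`.
    """
    if not frames:
        return []
    keyframes = [0]
    for i in range(1, len(frames)):
        if mean_abs_diff(frames[keyframes[-1]], frames[i]) > threshold:
            keyframes.append(i)
    return keyframes


# Usage: three dark frames, a sudden bright scene (e.g. flames), then no change.
dark = [[10, 10], [10, 10]]
bright = [[200, 210], [220, 205]]
print(select_keyframes([dark, dark, dark, bright, bright]))  # [0, 3]
```

Each selected index marks a scene change, so only a handful of distinct frames need to be checked rather than every frame of the clip.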

Further keyword searches led us to a report published by ABC7NY on April 12, 2026. According to the report, a massive fire broke out in a six-storey apartment building in Manhattan’s Midtown area around 6 a.m. Firefighters worked extensively to control the blaze, and two firefighters sustained minor injuries. No fatalities were reported.

Conclusion

The viral claim is false. The video does not show an attack on Israel by Hezbollah. Instead, it captures a fire incident in a residential building in Manhattan, New York City. The clip has been shared with a misleading narrative unrelated to the actual event.

In an exciting milestone, CyberPeace, an ICANN APRALO At-Large organization, has successfully deployed and made operational an L-root server instance in Ranchi, Jharkhand, in collaboration with the Internet Corporation for Assigned Names and Numbers (ICANN). This initiative marks a significant step toward enhancing the resilience, speed, and security of internet connectivity in eastern India.

Understanding the DNS hierarchy – Starting from Root

Internet users reach online information through domain names, but computers and web browsers locate servers using IP (Internet Protocol) addresses. The Domain Name System (DNS) bridges the two, functioning as the internet's equivalent of the Yellow Pages, or the phonebook of cyberspace. When a person uses a domain name such as www.cyberpeace.org to access a website, DNS translates that name into the corresponding IP address so that the browser can load the web page and its resources from the Internet.
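From a program's point of view, this name-to-address translation is usually a single call to the operating system's resolver. The Python sketch below is an illustration rather than anything CyberPeace describes: it uses the standard library's `socket.getaddrinfo`, the helper name `resolve` is hypothetical, and the addresses returned depend on the resolver in use.

```python
import socket
from typing import List


def resolve(hostname: str) -> List[str]:
    """Return the distinct IP addresses the system resolver gives for `hostname`.

    socket.getaddrinfo delegates to the operating system's stub resolver, which
    typically consults local configuration (such as a hosts file) and then the
    configured DNS resolver.
    """
    addresses: List[str] = []
    for _family, _type, _proto, _canonname, sockaddr in socket.getaddrinfo(
        hostname, None, proto=socket.IPPROTO_TCP
    ):
        ip = sockaddr[0]  # IPv4 and IPv6 sockaddr tuples both start with the address
        if ip not in addresses:
            addresses.append(ip)
    return addresses


# Example (needs network access; the answer depends on the resolver and may change):
# print(resolve("www.cyberpeace.org"))
```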

When a user types a domain name into a browser, a DNS query works behind the scenes to find the website’s IP address. First, the device asks a DNS resolver, often provided by the ISP or a third-party service, for the address. The resolver checks its cache for a match; if none is found, it queries a root server, which points it to the top-level domain (TLD) server (such as .com or .org). The resolver then asks the TLD server for the authoritative nameserver responsible for the domain and queries that nameserver, which returns the specific IP address. Finally, the resolver sends this address back to the device, enabling it to connect to the website’s server and load the page. The entire process typically takes milliseconds, ensuring seamless browsing.
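The lookup chain described above can be modelled as a toy iterative resolver. This is a simplified sketch, not a real DNS implementation: the in-memory `SERVERS` table, its server labels and the address `192.0.2.10` (from a range reserved for documentation) are hypothetical, and real resolvers exchange DNS messages over UDP or TCP and handle many more record types. It does show the cache check, the root referral, the TLD referral and the final authoritative answer.

```python
from typing import Dict, List, Tuple

# Toy model of the DNS hierarchy (hypothetical data). Each server maps a zone
# or name to a referral ("NS", next server) or a final answer ("A", address).
SERVERS: Dict[str, Dict[str, Tuple[str, str]]] = {
    "root": {"org.": ("NS", "org-tld-server")},
    "org-tld-server": {"example.org.": ("NS", "example-authoritative")},
    "example-authoritative": {"www.example.org.": ("A", "192.0.2.10")},
}


def in_zone(name: str, zone: str) -> bool:
    """True if `name` equals `zone` or sits below it at a label boundary."""
    return name == zone or name.endswith("." + zone)


def iterative_resolve(name: str, cache: Dict[str, str]) -> Tuple[str, List[str]]:
    """Resolve `name` like a recursive resolver; return (address, servers asked)."""
    if not name.endswith("."):
        name += "."  # work with fully qualified names
    if name in cache:  # answer straight from the resolver's cache if possible
        return cache[name], ["cache"]
    server = "root"  # otherwise start at the root
    path: List[str] = []
    while True:
        path.append(server)
        zones = [z for z in SERVERS[server] if in_zone(name, z)]
        if not zones:
            raise LookupError(f"{server} has no data for {name}")
        rtype, value = SERVERS[server][max(zones, key=len)]  # most specific match
        if rtype == "A":  # authoritative answer: cache it and return
            cache[name] = value
            return value, path
        server = value  # follow the referral: root -> TLD -> authoritative


cache: Dict[str, str] = {}
print(iterative_resolve("www.example.org", cache))
# ('192.0.2.10', ['root', 'org-tld-server', 'example-authoritative'])
print(iterative_resolve("www.example.org", cache))
# ('192.0.2.10', ['cache'])
```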

Special focus on Root Server:

A root server is a name server that directly answers queries for records in the root zone and redirects requests for more specific domains to the appropriate top-level domain (TLD) servers. Root servers are an integral part of this system, acting as the first step in resolving a domain name into its corresponding IP address. They provide the initial direction needed to locate the authoritative servers for any domain.

The DNS root zone is served by 13 named root server identities (a.root-servers.net through m.root-servers.net), each reachable at its own IPv4 and IPv6 address and backed by many redundant server instances distributed worldwide and connected through anycast routing to manage requests efficiently. As of January 8, 2025, the global root server system consisted of 1,921 instances operated by 12 independent root server operators. These servers keep the internet running smoothly by handling the first step of every uncached DNS resolution.
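The relationship between the 13 root server identities and the 12 operators can be expressed as a small lookup table. The operator names below follow the list published on root-servers.org at the time of writing and may change; Verisign operates both the A and J identities, which is why 13 identities map to 12 operators. Instance counts are deliberately not encoded because they change frequently.

```python
from typing import Dict, List

# Root server identities (a-m) and their operators, as listed on
# root-servers.org at the time of writing.
ROOT_SERVERS: Dict[str, str] = {
    "a": "Verisign, Inc.",
    "b": "University of Southern California, Information Sciences Institute",
    "c": "Cogent Communications",
    "d": "University of Maryland",
    "e": "NASA (Ames Research Center)",
    "f": "Internet Systems Consortium, Inc.",
    "g": "US Department of Defense (NIC)",
    "h": "US Army (Research Lab)",
    "i": "Netnod",
    "j": "Verisign, Inc.",
    "k": "RIPE NCC",
    "l": "ICANN",
    "m": "WIDE Project",
}


def root_hostnames() -> List[str]:
    """Hostnames of the 13 identities, a.root-servers.net to m.root-servers.net."""
    return [f"{letter}.root-servers.net" for letter in ROOT_SERVERS]


operators = set(ROOT_SERVERS.values())
print(len(ROOT_SERVERS), "identities,", len(operators), "operators")  # 13 identities, 12 operators
print(root_hostnames()[11], "->", ROOT_SERVERS["l"])  # l.root-servers.net -> ICANN
```

The L-root deployed in Ranchi belongs to the `l` identity, operated by ICANN.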

Type of Root Server Instances:

There are two types of root server instances: global instances and local instances.

Global root server instances are distributed strategically around the world and can serve queries from anywhere. Local instances, on the other hand, serve the same root zone data but are deployed in specific regions to handle local DNS traffic more efficiently. In each operator's list of sites, some instances are marked as global (globe icon) and some as local (flag icon). The difference lies in how widely available an instance is, which depends on how routing to that instance is configured. The routes to each instance are announced using BGP (Border Gateway Protocol), the Internet's inter-domain routing protocol.

For global instances, the route advertisement is permitted to spread throughout the Internet, i.e., any router on the Internet could know the path to that instance. Of course, for a particular source, the route to that instance may not be the optimal route, so some other instance could be chosen as the destination.

With a local instance, however, the route advertisement is limited to only nearby networks. For example, the instance may be visible to just one ISP, or to ISPs that connect at a particular exchange point. Sources from farther away will not be able to see and query that local instance.
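The difference between global and local scope can be illustrated with a small simulation. This sketch is a deliberate simplification: real BGP best-path selection weighs several attributes (local preference, AS-path length, MED and more), whereas here each network simply picks the visible instance with the shortest AS path. The instance names, network names and path lengths are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional


@dataclass(frozen=True)
class Instance:
    name: str
    scope: str  # "global": announced Internet-wide; "local": nearby networks only
    peers: FrozenSet[str] = frozenset()  # networks that hear a local announcement


def visible(instance: Instance, network: str) -> bool:
    """A global route can reach any network; a local route only its peers."""
    return instance.scope == "global" or network in instance.peers


def pick_instance(network: str, instances: List[Instance],
                  path_len: Dict[str, Dict[str, int]]) -> Optional[str]:
    """Return the visible instance with the shortest AS path from `network`."""
    candidates = [i for i in instances if visible(i, network)]
    if not candidates:
        return None  # no route to any instance of this root server
    return min(candidates, key=lambda i: path_len[network][i.name]).name


instances = [
    Instance("global-1", "global"),
    Instance("global-2", "global"),
    Instance("local-1", "local", frozenset({"nearby-isp"})),
]
# Hypothetical AS-path lengths from each network to each instance.
path_len = {
    "nearby-isp": {"global-1": 4, "global-2": 3, "local-1": 1},
    "distant-isp": {"global-1": 2, "global-2": 5, "local-1": 1},
}
print(pick_instance("nearby-isp", instances, path_len))   # local-1
print(pick_instance("distant-isp", instances, path_len))  # global-1
```

Even though `local-1` would be the shortest path for `distant-isp`, that network never receives the local announcement, so its queries go to a global instance instead.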

Deployment in Ranchi - The Journey & Significance:

CyberPeace, in collaboration with ICANN, has successfully deployed an L-root server instance in Ranchi, marking a significant milestone in enhancing regional Internet infrastructure. As part of the global network of root server instances, this deployment enables faster and more reliable DNS query resolution for the region, reducing latency and strengthening cybersecurity.

Deploying the L-root instance in collaboration with ICANN involved the following steps:

- Signing the Agreement: Finalized the L-SINGLE Hosting Agreement with ICANN to formalize the partnership.

- Procuring the Hardware: Acquired the required hardware appliance to meet technical standards for hosting the L-root server.

- Setup and Installation: Configured and installed the appliance to prepare it for seamless operation.

- Joining the Anycast Network: Integrated the server into ICANN's global Anycast network using BGP (Border Gateway Protocol) for efficient DNS traffic management.
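Once an instance has joined the anycast network, a common way to see which instance is answering a given client is a server-identification query: a TXT query in the CHAOS class for `id.server` (RFC 4892), or the older `hostname.bind`. With the `dig` tool this is `dig @l.root-servers.net id.server CH TXT`. The article does not describe how the Ranchi deployment was verified, so the following Python sketch is only an illustration: it builds such a query by hand following the RFC 1035 message format and extracts the TXT string from a reply. The helper names are hypothetical, and the identifier a particular instance returns is not something the source specifies.

```python
import struct

QTYPE_TXT = 16  # TXT record type (RFC 1035)
QCLASS_CH = 3   # CHAOS class, used for server identification (RFC 4892)


def encode_name(name: str) -> bytes:
    """Encode a domain name as length-prefixed labels ending in a zero byte."""
    out = b""
    for label in name.rstrip(".").split("."):
        raw = label.encode("ascii")
        if not 0 < len(raw) < 64:
            raise ValueError(f"invalid label: {label!r}")
        out += bytes([len(raw)]) + raw
    return out + b"\x00"


def build_id_query(query_id: int, name: str = "id.server") -> bytes:
    """Build a DNS query asking the answering server to identify itself."""
    # Header: ID, flags = 0 (standard query, recursion not requested),
    # QDCOUNT = 1, ANCOUNT = NSCOUNT = ARCOUNT = 0.
    header = struct.pack("!HHHHHH", query_id, 0, 1, 0, 0, 0)
    question = encode_name(name) + struct.pack("!HH", QTYPE_TXT, QCLASS_CH)
    return header + question


def skip_name(msg: bytes, pos: int) -> int:
    """Return the offset just past an encoded name (handles compression pointers)."""
    while True:
        length = msg[pos]
        if length & 0xC0 == 0xC0:  # a 2-byte compression pointer ends the name
            return pos + 2
        if length == 0:            # the root label ends an uncompressed name
            return pos + 1
        pos += 1 + length


def first_txt_answer(reply: bytes) -> str:
    """Extract the first character-string of the first (TXT) answer in a reply."""
    _id, _flags, qdcount, ancount, _ns, _ar = struct.unpack("!HHHHHH", reply[:12])
    if ancount == 0:
        raise ValueError("reply contains no answer records")
    pos = 12
    for _ in range(qdcount):
        pos = skip_name(reply, pos) + 4  # skip QTYPE and QCLASS
    pos = skip_name(reply, pos)          # owner name of the first answer
    rtype, _rclass, _ttl, _rdlength = struct.unpack("!HHIH", reply[pos:pos + 10])
    if rtype != QTYPE_TXT:
        raise ValueError(f"first answer is type {rtype}, not TXT")
    pos += 10
    strlen = reply[pos]                  # TXT RDATA starts with a length byte
    return reply[pos + 1:pos + 1 + strlen].decode("ascii", errors="replace")


# Sending the query needs network access, so it is shown only as comments.
# 199.7.83.42 is the published IPv4 address of l.root-servers.net.
# import socket
# with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as s:
#     s.settimeout(3)
#     s.sendto(build_id_query(0x1234), ("199.7.83.42", 53))
#     reply, _ = s.recvfrom(512)
#     print(first_txt_answer(reply))
```

Because anycast routes each client to its nearest visible instance, the same query sent from different networks can return different identifiers.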

The deployment of the L-root server in Ranchi marks a significant boost to the region’s digital ecosystem. It accelerates DNS query resolution, reducing latency and enhancing internet speed and reliability for users.

This instance strengthens cyber defenses by helping mitigate Distributed Denial of Service (DDoS) risks and handling local traffic efficiently. It also showcases eastern India’s growing digital infrastructure, aligning with initiatives like Digital India to meet evolving digital demands.

By handling local queries, the L-root server eases the load on global servers, contributing to a more stable and resilient global internet.

CyberPeace’s Commitment to a Secure and Resilient Cyberspace

As an organization dedicated to promoting peace, security and resilience in cyberspace, CyberPeace views this collaboration with ICANN as a significant achievement in its mission. By strengthening the internet’s backbone in eastern India, this deployment underscores our commitment to enabling a secure, accessible, and resilient digital ecosystem.

Way forward and Roadmap for Strengthening India’s DNS Infrastructure:

The successful deployment of the L-root instance in Ranchi is a stepping stone toward bolstering India's digital ecosystem. CyberPeace aims to promote awareness about DNS infrastructure through workshops and seminars, emphasizing its critical role in a resilient digital future.

With plans to deploy more such root server instances across India, the focus is on expanding local DNS infrastructure to enhance efficiency and security. Collaborative efforts with government agencies, ISPs, and tech organizations will drive this vision forward. A robust monitoring framework will ensure optimal performance and long-term sustainability of these initiatives.

Conclusion

The deployment of the L-root server instance in Eastern India represents a monumental step toward strengthening the region’s digital foundation. As Ranchi joins the network of cities hosting root server instances, the benefits will extend not only to the local community but also to the global internet ecosystem. With this milestone, CyberPeace reaffirms its commitment to driving innovation and resilience in cyberspace, paving the way for a more connected and secure future.