#FactCheck - False Claim of Hindu Sadhvi Marrying Muslim Man Debunked

Executive Summary:

A viral image circulating on social media claims to show a Hindu Sadhvi marrying a Muslim man; however, this claim is false. A thorough investigation by the CyberPeace Research team found that the image has been digitally manipulated. The original photo was posted by Balmukund Acharya, a BJP MLA from Jaipur, on his official Facebook account in December 2023 and shows him posing with a Muslim man in his election office. The man wearing the Muslim skullcap is featured in several other photos on Acharya's Instagram account, where he expressed gratitude for the support from the Muslim community. Thus, the claimed image of a marriage between a Hindu Sadhvi and a Muslim man is digitally altered.

Claims:

An image circulating on social media claims to show a Hindu Sadhvi marrying a Muslim man.

Fact Check:

Upon receiving the posts, we ran a reverse image search to find any credible sources. We found a photo posted by Balmukund Acharya Hathoj Dham on his Facebook page on 6 December 2023.

This original photo was digitally altered, and the altered version was posted on social media to mislead. We also found several other photos featuring the man in the skullcap.

We also checked the viral image for AI fabrication. We first used a detection tool named “content@scale” AI Image detection, which found the image to be 95% AI manipulated.

For further validation, we checked with another detection tool, the “isitai” image detection tool. It rated the image as 38.50% AI content. Taken together, these results indicate that the image is manipulated and does not support the claim made. Hence, the viral image is fake and misleading.
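Because the two tools return quite different percentages, it can help to keep their readings side by side rather than quoting either one alone. The following is a minimal sketch in Python, not the tooling used in this fact check: the function name `summarise_detections` and the 0.5 flagging threshold are assumptions made for illustration, and the scores are simply the two percentages reported above, entered by hand.

```python
def summarise_detections(scores, threshold=0.5):
    """Summarise AI-likelihood scores (0.0 to 1.0) from several detectors.

    The threshold is a hypothetical cut-off chosen for illustration only.
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    values = list(scores.values())
    return {
        # Detectors whose score meets or exceeds the cut-off.
        "flagged_by": sorted(name for name, s in scores.items() if s >= threshold),
        # Plain average of all detector scores.
        "mean": sum(values) / len(values),
        # Gap between the most and least confident detector.
        "spread": max(values) - min(values),
    }


# Scores reported in this fact check, entered manually.
report = summarise_detections({"content@scale": 0.95, "isitai": 0.385})
print(report["flagged_by"])
```

A large spread between tools is a signal to lean on corroborating evidence, such as the original photo located through the reverse image search, rather than on any single detector score.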

Conclusion:

The lack of a credible source and the detection of AI manipulation show that the viral image claiming to show a Hindu Sadhvi marrying a Muslim man is false. It has been digitally altered. The original image features BJP MLA Balmukund Acharya posing with a Muslim man, and there is no evidence of the claimed marriage.

- Claim: An image circulating on social media claims to show a Hindu Sadhvi marrying a Muslim man.

- Claimed on: X (formerly known as Twitter)

- Fact Check: Fake & Misleading

Related Blogs

Introduction

YouTube is testing a new feature called ‘Notes,’ which allows users to add community-sourced context to videos. The feature allows users to clarify if a video is a parody or if it is misrepresenting information. The feature builds on existing features to provide helpful content alongside videos. Currently under testing, the feature will be available to a limited number of eligible contributors who will be invited to write notes on videos. These notes will appear publicly under a video if they are found to be broadly helpful. Viewers will be able to rate notes into three categories: ‘Helpful,’ ‘Somewhat helpful,’ or ‘Unhelpful’. Based on the ratings, YouTube will determine which notes are published. The feature will first be rolled out on mobile devices in the U.S. in English. The Google-owned platform will look at ways to improve the feature over time, including whether it makes sense to expand it to other markets.

YouTube To Roll Out The New ‘Notes’ Feature

YouTube is testing an experimental feature that allows users to add notes to provide relevant, timely, and easy-to-understand context for videos. This initiative builds on previous products that display helpful information alongside videos, such as information panels and disclosure requirements when content is altered or synthetic. YouTube in its blog clarified that the pilot will be available on mobiles in the U.S. and in the English language, to start with. During this test phase, viewers, participants, and creators are invited to give feedback on the quality of the notes.

YouTube further stated in its blog that a limited number of eligible contributors will be invited via email or Creator Studio notifications to write notes so that they can test the feature and add value to the system before the organisation decides on next steps and whether or not to expand the feature. Eligibility criteria include having an active YouTube channel in good standing with YouTube’s Community Guidelines.

Viewers in the U.S. will start seeing notes on videos in the coming weeks and months. In this initial pilot, third-party evaluators will rate the helpfulness of notes, which will help train the platform’s systems. As the pilot moves forward, contributors themselves will rate notes as well.

Notes will appear publicly under a video if they are found to be broadly helpful. People will be asked whether they think a note is helpful, somewhat helpful, or unhelpful and the reasons for the same. For example, if a note is marked as ‘Helpful,’ the evaluator will have the opportunity to specify if it is so because it cites high-quality sources or is written clearly and neutrally. A bridging-based algorithm will be used to consider these ratings and determine what notes are published. YouTube is excited to explore new ways to make context-setting even more relevant, dynamic, and unique to the videos we are watching, at scale, across the huge variety of content on YouTube.
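YouTube has not published the details of its bridging-based algorithm, so the following Python sketch only illustrates the general idea: a note scores well when raters who usually disagree both find it helpful. The viewpoint group labels, the numeric values assigned to the three rating options, and the 0.7 publication threshold are all assumptions made for this example, not YouTube's actual method.

```python
from collections import defaultdict

# Hypothetical numeric values for the three rating options.
RATING_VALUES = {"helpful": 1.0, "somewhat helpful": 0.5, "unhelpful": 0.0}


def bridging_score(ratings):
    """Return the lowest per-group average rating for a note.

    ratings: list of (viewpoint_group, rating_label) pairs.
    A note scores high only if every group rates it well on average.
    """
    by_group = defaultdict(list)
    for group, label in ratings:
        by_group[group].append(RATING_VALUES[label])
    if len(by_group) < 2:
        # No cross-group agreement can be shown with fewer than two groups.
        return 0.0
    return min(sum(vals) / len(vals) for vals in by_group.values())


def should_publish(ratings, threshold=0.7):
    """Publish only when agreement bridges groups (threshold is illustrative)."""
    return bridging_score(ratings) >= threshold


broad = [("A", "helpful"), ("A", "helpful"),
         ("B", "helpful"), ("B", "somewhat helpful")]
one_sided = [("A", "helpful"), ("A", "helpful"), ("A", "helpful"),
             ("B", "unhelpful"), ("B", "unhelpful")]

print(should_publish(broad), should_publish(one_sided))
```

In this sketch, a note praised by only one group gets a bridging score of 0.0 even though its plain average rating would be higher, which is the behaviour a bridging approach is meant to produce.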

CyberPeace Analysis: How Can Notes Help Counter Misinformation

The potential effectiveness of countering misinformation on YouTube using the proposed ‘Notes’ feature is significant. Enabling contributors to include notes on videos can offer relevant and accurate context to clarify any misleading or false information in the video. These notes can aid in enhancing viewers' comprehension of the content and detecting misinformation. The participation from users to rate the added notes as helpful, somewhat helpful, and unhelpful adds a heightened layer of transparency and public participation in identifying the accuracy of the content.

As YouTube intends to gather feedback from its various stakeholders to improve the feature over time, one can look forward to improved policy and practice: the feedback mechanism will allow for continuous refinement of the feature, ensuring it effectively addresses misinformation. The platform employs algorithms to identify helpful notes that cater to a broad audience across different perspectives. This helps showcase accurate information and combat misinformation.

Furthermore, along with the Notes feature, YouTube should explore and implement prebunking and debunking strategies on the platform by promoting educational content and empowering users to discern between fact and any misleading information.

Conclusion

The new feature, currently in the testing phase, aims to counter misinformation by providing context, enabling user feedback, leveraging algorithms, promoting transparency, and continuously improving information quality. Considering the diverse audience on the platform and high volumes of daily content consumption, it is important for both the platform operators and users to engage with factual, verifiable information. The fallout of misinformation on such a popular platform can be immense, and so, any mechanism or feature that can help counter the same must be developed to its full potential. Apart from this new Notes feature, YouTube has also implemented certain measures in the past to counter misinformation, such as providing authenticated sources to counter any election misinformation during the recent 2024 elections in India. These efforts are a welcome contribution to our shared responsibility as netizens to create a trustworthy, factual and truly-informational digital ecosystem.

References:

- https://blog.youtube/news-and-events/new-ways-to-offer-viewers-more-context/

- https://www.thehindu.com/sci-tech/technology/internet/youtube-tests-feature-that-will-let-users-add-context-to-videos/article68302933.ece

The digital ecosystem of India has experienced rapid growth, which has created numerous opportunities for economic development, better governance and increased social connections. However, the increasing use of digital technology has also resulted in a higher incidence of cyber-enabled crimes, which include online fraud, cyber harassment, child exploitation and the spread of misinformation. The Government of India has launched multiple initiatives to build a comprehensive and unified framework for combatting cybercrime more effectively. The latest updates presented to Parliament demonstrate how institutional frameworks, legal provisions, capacity-building efforts, and public awareness programs work together to handle new cyber threats.

A Coordinated Institutional Framework

The Indian system for investigating and prosecuting cybercrimes assigns responsibility to States and Union Territories, which operate their own Law Enforcement Agencies. The central government established the Indian Cyber Crime Coordination Centre (I4C) to support these operations through its Ministry of Home Affairs.

The I4C functions as a central hub that allows various stakeholders in cybercrime prevention and investigation to share intelligence and build their operational capacities. The initiative aims to strengthen the cybersecurity system through better collaboration between central and state agencies.

The National Cyber Crime Reporting Portal (NCCRP) serves as a primary project of this framework by providing online cyber incident reporting for citizens. The portal enables users to register complaints more efficiently while enhancing access to crime reporting, which particularly benefits victims of crimes against women and children. The system offers special channels which allow users to report Child Sexual Exploitative and Abuse Material (CSEAM) and rape-related materials while providing options for anonymous reporting and case tracking. After a complaint is lodged, the appropriate state authorities initiate the process to investigate the matter and proceed with legal procedures.

Capacity Building and Cyber Forensics

The response to cybercrime requires both expert investigators and advanced forensic technology systems. The Cyber Crime Prevention against Women and Children (CCPWC) Scheme, which provides financial backing and technical training to states and union territories, was instituted by the Ministry of Home Affairs to address this requirement.

The scheme has authorised the release of ₹132.93 crore for developing cyber forensic facilities and investigative technologies. The funding has supported the establishment of cyber forensic-cum-training laboratories across multiple states and union territories. The total number of operational laboratories has reached 33 at this time.

Training has been prioritised alongside infrastructure development. More than 24,600 law enforcement personnel, prosecutors, and judicial officers have received training on cybercrime investigation, digital evidence handling, and forensic analysis. The capacity-building initiatives were designed to provide investigators and judicial authorities with the essential skills needed to handle advanced cyber incidents.

International Cooperation for Child Protection

International cooperation is essential for addressing online child exploitation because these crimes utilise digital networks that connect multiple countries. The National Crime Records Bureau (NCRB) established a partnership with the National Center for Missing & Exploited Children (NCMEC) of the United States in 2019 through a Memorandum of Understanding, which aims to enhance collaboration in this field.

The partnership enables the sharing of tipline reports about online child exploitation, which Indian authorities use for their investigative work. Under the provisions of the Information Technology Act, NCRB has also been empowered to issue notices to intermediaries for the removal of child sexual abuse material and other dangerous content.

Promoting Online Safety Awareness

Cybercrime prevention requires two essential elements: public knowledge and digital expertise. The National Commission for Protection of Child Rights (NCPCR) has developed several resources to educate children, parents, teachers, and school administrators about online safety. These include the Being Safe Online guidelines, school safety manuals that address cyberbullying, and the 2024 updates, which provide new recommendations for cyberbullying prevention. The commission has also organised conferences and training sessions across various states to educate educators and school administrators about child protection regulations, school security measures, and cyber protection standards.

The digital responsibility programs educate communities about proper online conduct and teach them how to recognise and handle cybersecurity threats.

Legal Framework for Digital Safety

The Information Technology Act, 2000, together with the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 (updated as of 2026), serves as the core legal foundation through which India combats cybercrime. These laws establish penalties for online distribution of obscene and sexually explicit material while requiring digital intermediaries to block access to illegal content.

The Bharatiya Nyaya Sanhita, 2023 contains additional legal provisions that deal with two types of offences: disseminating obscene material and spreading dangerous misinformation.

The regulatory framework requires intermediaries to eliminate illegal content within specified timeframes, while they must prevent their platforms from being used to conduct dangerous or unlawful activities.

Conclusion

India builds its cybercrime response strategy on a multi-layered approach that combines institutional mechanisms, technological capabilities, legal provisions, and public education programs. As cyber threats evolve with technological progress, effective cybersecurity depends on the authorities' ability to investigate, on reliable systems for reporting incidents, and on a shared commitment to proper online conduct.

India needs continuous cooperation among government bodies, police forces, technology companies, and community organisations to maintain secure and strong digital networks that provide equal access to all citizens.

References

- https://www.pib.gov.in/PressReleasePage.aspx?PRID=2238260&reg=3&lang=2

- https://www.policyedge.in/p/rajya-sabha-strengthening-indias-coordinated-response-to-cyber-crimes

Executive Summary

The U.S. Department of Justice recently released nearly three million pages of documents, along with thousands of videos and photographs, related to its investigation into convicted offender Jeffrey Epstein. Meanwhile, a video showing a massive crowd protesting on a street is going viral on social media. The video, which had earlier circulated with false claims linking it to anti-government protests in Iran, is now being shared by several users who claim that the protest took place in the United States after the release of the Epstein files. Research by CyberPeace found the viral claim to be false. The video being linked to protests in the United States following the release of the Epstein files is not real and was generated using artificial intelligence (AI).

Claim:

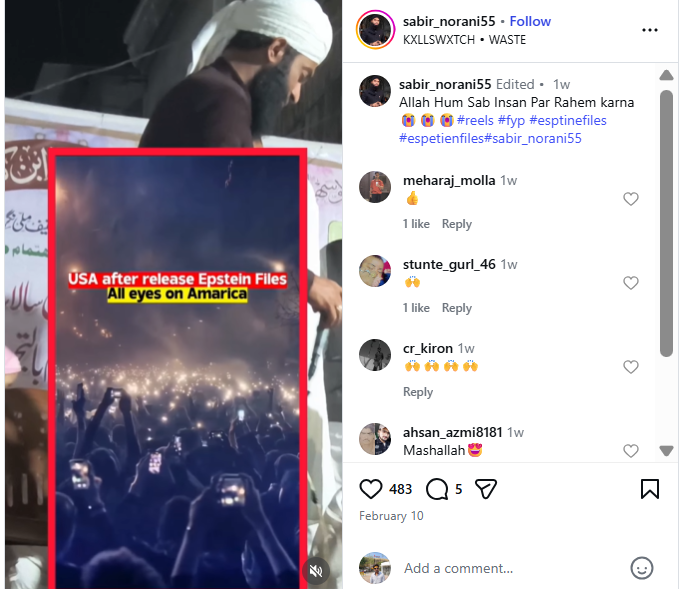

An Instagram user uploaded the viral video on February 9, 2026, with the caption: “After Epstein files released in America. All eyes on America.”

- https://www.instagram.com/reel/DUjLe-XE5lA

- https://ghostarchive.org/archive/tkP6W

Fact Check:

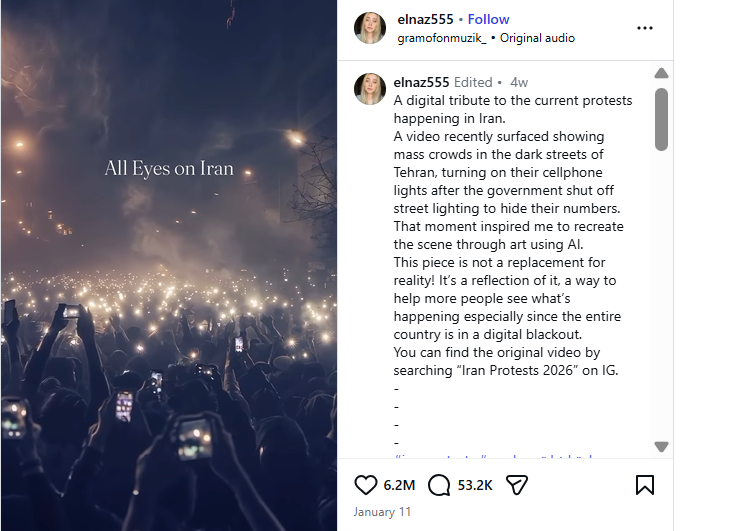

To verify the claim, we first conducted a reverse search of the viral video using Google Lens. The same video was found posted on January 10, 2026, by an Instagram account named “elnaz555,” where it was shared in the context of recent protests in Iran. The post also mentioned that the video was created using AI.

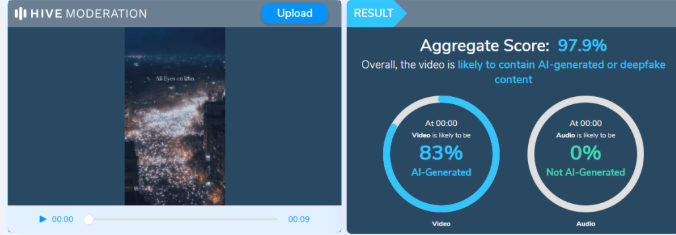

Based on this lead, we further analyzed a higher-quality version of the viral video using Hive Moderation, a tool used to detect AI-generated images and videos. The analysis indicated a 97.9% probability that the video was generated using artificial intelligence. The research clearly shows that the video is not authentic and has been falsely linked to protests in the United States after the release of the Epstein files.

Conclusion:

The claim circulating on social media is false. The viral video allegedly showing protests in the United States following the release of the Epstein files is AI-generated and not related to any real event.