#FactCheck - Digitally Altered Image Falsely Shows World Bank President Ajay Banga Holding Khalistani Flag

Executive Summary

A digitally manipulated image of World Bank President Ajay Banga has been circulating on social media, falsely portraying him as holding a Khalistani flag. The image was shared by a Pakistan-based X (formerly Twitter) user, who also incorrectly identified Banga as the President of the International Monetary Fund (IMF), thereby fuelling misleading speculation that he supports the Khalistani movement against India.

The Claim

On February 5, an X user with the handle @syedAnas0101010 posted an image allegedly showing Ajay Banga holding a Khalistani flag. The user misidentified him as the IMF President and captioned the post, “IMF president sending signals to INDIA.” The post quickly gained traction, amplifying false narratives and political speculation. Here is the link and archive link to the post, along with a screenshot:

Fact Check:

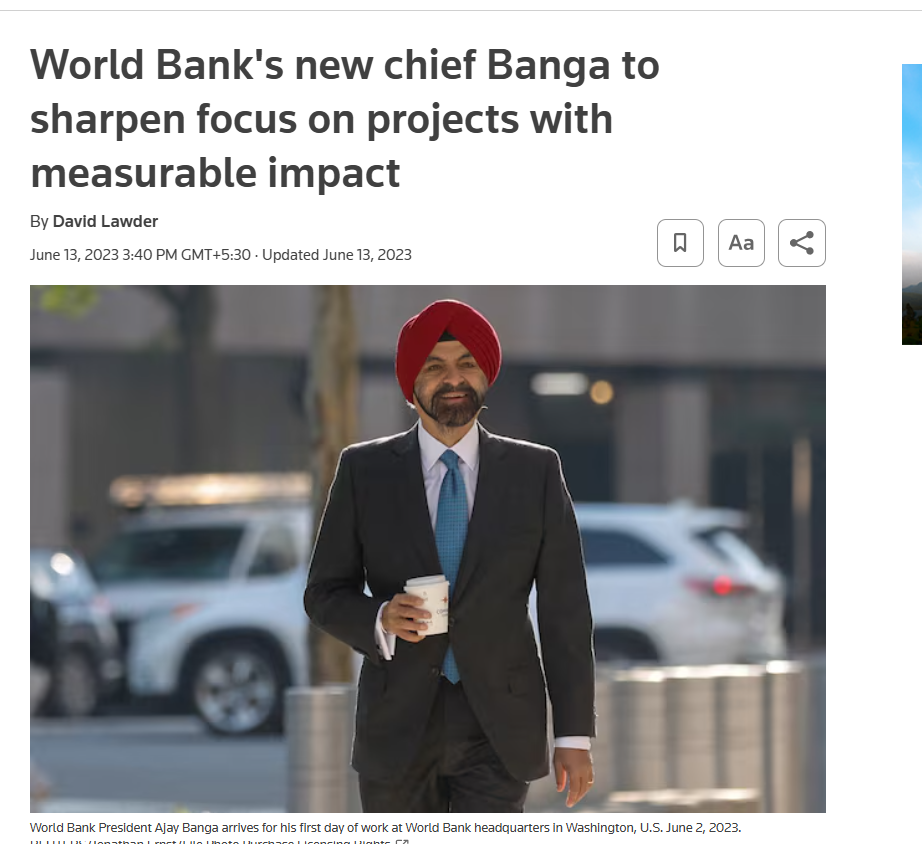

To verify the authenticity of the image, the CyberPeace Fact Check Desk conducted detailed research. The image was first subjected to a reverse image search using Google Lens, which led to a Reuters news report published on June 13, 2023. The original photograph, captured by Reuters photojournalist Jonathan Ernst, showed Ajay Banga arriving at the World Bank headquarters in Washington, D.C., on June 2, 2023, marking his first day in office. In the authentic image, Banga is seen holding a coffee cup, not a flag.

Further analysis confirmed that the viral image had been digitally altered to replace the coffee cup with a Khalistani flag, thereby misrepresenting the context and intent of the original photograph. Here is the link to the report, along with a screenshot.
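When an original photograph is available, one simple way to see where an image was edited is to compare the two versions pixel by pixel and locate the region that differs. The sketch below is purely illustrative and is not the method used by the Fact Check Desk: it assumes both images have already been decoded into equally sized grids of grayscale values (the toy grids, the `changed_region` function and the `threshold` value are hypothetical), and it ignores small differences of the kind that recompression introduces.

```python
def changed_region(original, altered, threshold=30):
    """Return the bounding box (top, left, bottom, right) of pixels whose
    brightness differs by more than `threshold`, or None if nothing differs."""
    if len(original) != len(altered):
        raise ValueError("images must have the same height")
    box = None
    for y, (row_o, row_a) in enumerate(zip(original, altered)):
        if len(row_o) != len(row_a):
            raise ValueError("images must have the same width")
        for x, (o, a) in enumerate(zip(row_o, row_a)):
            if abs(o - a) > threshold:
                if box is None:
                    box = [y, x, y, x]
                else:
                    box[0] = min(box[0], y)
                    box[1] = min(box[1], x)
                    box[2] = max(box[2], y)
                    box[3] = max(box[3], x)
    return tuple(box) if box else None


# Toy 5x5 "images" (hypothetical data): uniform grey, slight noise, one edited patch.
original = [[100] * 5 for _ in range(5)]
altered = [[105] * 5 for _ in range(5)]   # +5 everywhere, similar to compression noise
for y in (1, 2):
    for x in (2, 3):
        altered[y][x] = 250               # the locally edited patch

print(changed_region(original, altered))  # (1, 2, 2, 3)
```

A localised box like this is consistent with a single object having been swapped, whereas differences spread evenly across the frame would point to recompression or resizing instead.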

To strengthen the findings, the altered image was also analysed using the Hive Moderation AI detection tool. The tool’s assessment indicated a high likelihood that the image contained AI-generated or manipulated elements, reinforcing the conclusion that the image was not genuine. Below is a screenshot of the result.

Conclusion

The viral image claiming to show World Bank President Ajay Banga holding a Khalistani flag is fake. The photograph was digitally manipulated to spread misinformation and provoke political speculation. In reality, the original Reuters image from June 2023 shows Banga holding a coffee cup during his arrival at the World Bank headquarters. The claim that he supports the Khalistani movement is false and misleading.

Related Blogs


Introduction

Misinformation has the potential to impact people, communities and institutions alike, and the ramifications can be far-ranging. From influencing voter behaviours and consumer choices to shaping personal beliefs and community dynamics, the information we consume in our daily lives affects every aspect of our existence. And so, when this very information is flawed or incomplete, whether accidentally or deliberately so, it has the potential to confuse and mislead people.

‘Debunking’ is the process of exposing false information or countering inaccuracies and manipulation by presenting actual facts. The goal is to minimise the harmful effects of misinformation by informing and educating people. Debunking initiatives work hard to expose false information and cut down conspiracies, catalogue evidence of false information, clearly identify sources of misinformation vs. accurate information, and assert the truth. Debunking looks at building capacity and educating people both as a strategy and goal.

Debunking is most effective when it comes from trusted sources, provides detailed explanations, and offers guidance and verifiable advice. It is reactive in nature: it focuses on specific instances of misinformation, is closely tied to fact-checking, and aims to mitigate the impact of misinformation that has already spread. As such, the approach is to contain and correct, post-occurrence. The most common method of debunking is collaboration between fact-checking groups and social media companies. When journalists or other fact-checkers identify false or misleading content, social media sites flag or label it as such, so that audiences are alerted. Debunking is an essential method for reducing the impact and incidence of misinformation by providing real facts and increasing the overall accuracy of content in the digital information ecosystem.

Role of Debunking Misinformation

Debunking fights false or misleading information by countering false claims and myths with evidence-based rebuttals. It combats untruths and the spread of misinformation by disseminating corrective evidence to the public. By presenting evidence that contradicts misleading claims, debunking encourages individuals to develop fact-checking habits and proactively check for authenticated sources. Debunking plays a vital role in boosting trust in credible sources by offering evidence-based corrections and enhancing the credibility of online information. By exposing falsehoods and endorsing qualities like information completeness and evidence-backed data and logic, debunking efforts help create a culture of well-informed and constructive public conversations and analytical exchanges. Effectively dispelling myths and misinformation can help create communities and societies that are more educated, resilient, and goal-oriented.

Debunking as a Tailored Strategy to Counter Misinformation

Understanding the information environment and source trustworthiness is critical for developing effective debunking techniques. Successful debunking efforts use clear messages, appealing formats, and targeted distribution to reach a wide range of netizens. Effective debunking includes analysing successful efforts, using fact-checking, relying on reputable sources for corrections, and using scientific communication. Fact-checking plays a critical role in ensuring information accuracy and holding people accountable for making misleading claims. Collaborative efforts and transparent techniques can boost the credibility and efficacy of fact-checking activities, as well as the legitimacy and effectiveness of debunking initiatives at a larger scale. Scientific communication is also critical for debunking myths about different topics and concerns by giving evidence-based information. Clear and understandable framing of scientific knowledge is critical for engaging broad audiences and effectively refuting misinformation.

CyberPeace Policy Recommendations

- Debunking initiatives should highlight core facts, emphasising what is true over what is wrong and establishing a clear contrast between the two. This is crucial as people are more likely to believe familiar information even if they learn later that it is incorrect. Debunking must provide a comprehensive explanation, filling the ‘information gap’ created by the myth. This should be done by explaining things as clearly as possible, as people may stop paying attention if they are faced with an overload of competing information. The use of visuals to illustrate core facts is an effective way to help people understand the issue and clearly tell the difference between information and misinformation.

- Individuals can play a role in debunking misinformation on social media by highlighting inconsistencies, recommending related articles with corrections or sharing trusted sources and debunking reports in their communities.

- Governments and regulatory agencies can improve information openness by requiring platforms to implement explicit source labelling and supporting technical measures. This can increase confidence in information sources and equip people to practise discernment when they consume content online. Governments should also support and encourage independent fact-checking organisations that are working to disprove misinformation. Digital literacy programmes can teach the public how to critically assess information online and spot misinformation.

- Tech businesses may enhance algorithms for detecting and flagging misinformation, thereby reducing the propagation of misleading information. Offering options for people to report suspicious or doubtful information can empower them to play an active role in identifying and rectifying inaccurate information online, and can foster a more responsible information environment on the platforms.

Conclusion

Debunking is an effective strategy to counter widespread misinformation through a combination of fact-checking, scientific evidence, factual explanations and corrections. Debunking can play an important role in fostering a culture where people look for authenticity while consuming information and place a high value on trusted and verified information. A collaborative strategy can increase the legitimacy and reach of debunking efforts, making them more effective in reaching larger audiences and easier to understand for a wide range of demographics. In a complex and ever-evolving digital ecosystem, it is important to build information resilience both at the macro level for the ecosystem as a whole and at the micro level, with the individual consumer. Only then can we ensure a culture of mindful, responsible content creation and consumption.


Introduction

In the intricate maze of our interconnected world, an unseen adversary conducts its operations with a stealth almost poetic in its sinister intent. This adversary — malware — has extended its tendrils into the digital sanctuaries of Mac users, long perceived as immune to such invasive threats. Our narrative today does not deal with the physical and tangible frontlines we are accustomed to; this is a modern tale of espionage, nestled in the zeros and ones of cyberspace.

The Mac platform, cradled within the fortifications of Apple's walled garden ecosystem, has stood as a beacon of resilience amidst the relentless onslaught of cyber threats. However, this sense of imperviousness has been shaken at its core, heralding a paradigm shift. A new threat lies in wait, bridging the gap between perceived security and uncomfortable vulnerability.

The seemingly invincible Mac OS X, long heralded for its robust security features and impervious resilience to virus attacks, faces an undercurrent of siege tactics from hackers driven by a relentless pursuit for control. This narrative is not about the front-and-centre warfare we see so often reported in media headlines. Instead, it veils itself within the actions of users as benign as the download of pirated software from the murky depths of warez websites.

The Incident

The casual act, born out of innocence or economic necessity, to sidestep the financial requisites of licensed software, has become the unwitting point of compromised security. Users find themselves on the battlefield, one that overshadows the significance of its physical counterpart with its capacity for surreptitious harm. The Mac's seeming invulnerability is its Achilles' heel, as the wariness against potential threats has been eroded by the myth of its impregnability.

The architecture of this silent assault is not one of brute force but of guile. Cyber marauders finesse their way through the defenses with a diversified arsenal; pirated content is but a smokescreen behind which trojans lie in ambush. The very appeal of free access to premium applications is turned against the user, opening a rift that permits these malevolent forces to ingress.

The trojans that permeate the defenses of the Mac ecosystem are architects of chaos. They surreptitiously enrol devices into armies of sorts – botnets which, unbeknownst to their hosts, become conduits for wider assaults on privacy and security. These machines, now soldiers in an unconsented war, are puppeteered to distribute further malware, carry out phishing tactics, and breach the sanctity of secure data.

The Trojan of Mac

A recent exposé by the renowned cybersecurity firm Kaspersky has shone a spotlight on this burgeoning threat. The meticulous investigation conducted in April of this year unveiled a nefarious campaign, engineered to exploit the complacency among Mac users. This operation facilitates the sale of proxy access, linking previously unassailable devices to the infrastructure of cybercriminal networks.

This revelation cannot be overstated in its importance. It illustrates with disturbing clarity the evolution and sophistication of modern malware campaigns. The threat landscape is not stagnant but ever-shifting, adapting with both cunning and opportunity.

Kaspersky's diligence in dissecting this threat uncovered nearly three dozen popular applications and tools relied upon by individuals and businesses alike for a multitude of tasks. These apps, now weaponised, span a gamut of functionalities: image editing and enhancement, video compression, data recovery, and network scanning among them. Each one, once a benign asset to productivity, is twisted into a lurking danger, imbued with the power to betray its user.

The duplicity of the trojan is shrouded in mimicry; it disguises its malicious intent under the guise of 'WindowServer,' a legitimate system process intrinsic to the macOS. Its camouflage is reinforced by an innocuously named file, 'GoogleHelperUpdater.plist' — a moniker engineered to evade suspicion and blend seamlessly with benign processes affiliated with familiar applications.
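The file name mentioned above gives users something concrete to look for. The following is a minimal sketch, not a detection tool: it assumes the file would sit in one of the standard macOS launch directories (the article does not say where it is placed, so `DEFAULT_DIRS` is an assumption), and the function name `find_suspicious_plists` is hypothetical. A match is only a prompt for closer inspection, and the absence of a match does not prove a machine is clean.

```python
from pathlib import Path

# File name reported in the article.
SUSPICIOUS_NAME = "GoogleHelperUpdater.plist"

# Assumption: standard macOS launch directories. The article does not
# state where the file is placed.
DEFAULT_DIRS = [
    Path.home() / "Library" / "LaunchAgents",
    Path("/Library/LaunchAgents"),
    Path("/Library/LaunchDaemons"),
]


def find_suspicious_plists(directories=DEFAULT_DIRS, name=SUSPICIOUS_NAME):
    """Return paths in `directories` whose file name matches `name`,
    ignoring case. Missing or unreadable directories are skipped."""
    hits = []
    for directory in directories:
        directory = Path(directory)
        if not directory.is_dir():
            continue
        try:
            entries = list(directory.iterdir())
        except PermissionError:
            continue
        for entry in entries:
            if entry.name.lower() == name.lower():
                hits.append(entry)
    return hits


if __name__ == "__main__":
    matches = find_suspicious_plists()
    if matches:
        for match in matches:
            print(f"Found file worth inspecting: {match}")
    else:
        print("No file with that name in the checked directories.")
```

Matching on the file name alone is deliberately simple; a renamed file would slip past it, so a check like this complements rather than replaces reputable security software.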

Mode of Operation

Its mode of operation, insidious in its stealth, utilises the Transmission Control Protocol (TCP) and User Datagram Protocol (UDP) networking protocols. This modus operandi allows it to masquerade as a benign proxy. The full scope of its potential commands, however, eludes our grasp, a testament to the shadowy domain from which these threats emerge.

The reach of this trojan does not cease at the periphery of Mac's operating system; it harbours ambitions that transcend platforms. Windows and Android ecosystems, too, find themselves under the scrutiny of this burgeoning threat.

This chapter in the ongoing saga of cybersecurity is more than a cautionary tale; it is a clarion call for vigilance. The war being waged within the circuits and code of our devices underscores an inescapable truth: complacency is the ally of the cybercriminal.

Safety measures and best practices

It is imperative to safeguard Mac systems from harmful intruders, which are constantly evolving. A few measures can play a crucial role in protecting the data on your Mac.

- Refrain from Unlicensed Software: Avoid accessing and downloading pirated software. Much of it serves as a decoy for malware, which remains dormant until the downloaded files are executed.

- Use Trusted Sources: Downloading files from legitimate and trusted sources can significantly reduce the threat of any unsolicited files or malware making their way into your Mac system.

- Regular System Updates: Installing the updates released by the vendor ensures that the system has the latest patches, which are critical to combating and neutralising emerging threats.

- General Awareness: Keeping abreast of the latest developments in cyberspace plays a crucial role in avoiding new and emerging threats. It is crucial to keep pace with trends and be well-informed about new threats and ways to combat them.

Conclusion

In conclusion, this silent conflict, though waged in whispers, echoes with repercussions that reverberate through every stratum of digital life. The cyber threats that dance in the shadows cast by our screens are not figments of paranoia, but very real specters hunting for vulnerabilities to exploit. Mac users, once confident in their platforms' defenses, must awaken to the new dawn of cybersecurity awareness.

The battlefield, while devoid of the visceral carnage of physical warfare, is replete with casualties of privacy and breaches of trust. The soldiers in this conflict are disguised as serviceable code, enacting their insidious agendas beneath a façade of normalcy. The victims eschew physical wounds for scars on their digital identities, enduring theft of information, and erosion of security.

As we course through the daunting terrain of digital life, it becomes imperative to heed the lessons of this unseen warfare. Shadows may lie unseen, but it is within their obscurity that the gravest dangers often lurk, a reminder to remain ever vigilant in the face of the invisible adversary.

Transforming Misguided Knowledge into Social Strength

यत्र योगेश्वरः कृष्णो यत्र पार्थो धनुर्धरः । तत्र श्रीर्विजयो भूतिर्ध्रुवा नीतिर्मतिर्मम ॥ (Bhagavad Gita) translates as “Where there is divine guidance and righteous effort, there will always be prosperity, victory, and morality.” In the context of the idea of rehabilitation, this verse teaches us that if offenders receive proper guidance, their skills can be redirected. Instead of causing harm, the same abilities can be transformed into tools for protection and social good. Cyber offenders who misuse their skills can, through structured guidance, be redirected toward constructive purposes like cyber defence, digital literacy, and security innovation. This interpretation emphasises not discarding the “spoiled” but reforming and reintegrating them into society.

Introduction

Words and places often carry positive or negative associations based on their history, their stories, and the activities that take place there. For example, the word “hacker” has a negative connotation, as does the place “Jamtara”, which is identified with its shady history as a cybercrime hotspot. People often forget, however, that many individuals use their hacking skills to serve and protect their nation; they are known as “white hat hackers”, or ethical hackers. Places like Jamtara, likewise, have a substantial number of talented individuals who have lost their way and are often victims of their circumstances. This presents the authorities with the fundamental challenge of destigmatising cybercriminals and acting on their rehabilitation. The idea is to shift from punitive responses to rehabilitative and preventive approaches, especially in regions like Jamtara.

The Deeper Problem: Systemic Gaps and Social Context

Jamtara is not an isolated case; many regions, such as Mewat, Bharatpur, Deoghar and Mathura, are facing a crisis, and many lives are uprooted because youth are entrapped in cybercrime rings, often to escape unemployment and poverty, or simply in the hope of a better life. In one such heart-wrenching story, 24-year-old Shakil, from Nuh, Haryana, was arrested for committing various cybercrimes, including sextortion and financial scams, and while his culpability is not in question here, his background reflects a deeper issue. He committed these crimes to pay for his diabetic father’s mounting bills and to see his sister, Shabana, married. This is the story of almost every other individual in the rural areas who is forced into committing these crimes, if not by a person, then by their circumstances. According to a 2024 news report, various Meo leaders and social organisations in the Mewat region launched an intervention aimed at weaning the youth away from cybercrimes.

Not only poverty, but lack of education, social awareness, and digital literacy have acted as active agents for pushing the youth of India away from mainstream growth and towards the dark trenches of the cybercrime world. The local authorities have made active efforts to solve this problem; for instance, to dispel Jamtara’s unfavourable reputation for cybercrime and set the city firmly on the path to change, community libraries have been established in all 118 panchayats spread across six blocks of the district by IAS officer and DM Faiz Aq Ahmed Mumtaz.

The menace of cybercrimes is not limited to rural areas. Various reports surfaced during and after COVID of young children from urban areas becoming victims of cybercrimes such as cyberbullying and stalking, and often the perpetrators were from the same age group, adding to the dilemma. The issue has been noticed by various agencies, and there is a need to deal with both victims and the accused in a sensitised manner. Recently, ex-CJI DY Chandrachud called for international collaboration to combat juvenile cybercrimes, as there are many who are ensnared and coerced into these criminal gangs, and swift resolution is the key to ensuring justice and rehabilitation.

CyberPeace Policy Outlook

Cybercrime is often a product of skill without purpose. The youth who are pushed into these crimes either have an incomplete idea of the gravity of their actions or have no other resort. The legal system and the agencies will have to look beyond the nature of the crimes and adopt a reformative approach so that these people can make their way back into society and harness their skills ethically. A good alternative would be to organise Cyber Bootcamps for Reform, i.e., structured training with placement support, and explain to them how ethical hacking and cybersecurity careers can be attractive alternatives. One way to make the process effective is to share real-world stories of reformed hackers. There are many from small villages and districts who have written success stories of reform after participating in digital training programmes. The crime they commit doesn’t have to be the last thing they are able to do in life; it doesn’t have to be the ending. The digital programmes should be organised in a way and in a vernacular that the youth are well-versed in, so there are no language barriers. The programme may offer training in coding, cyber hygiene, legal literacy and ethical hacking, alongside psychological counselling and financial literacy workshops.

It has become a matter of reclaiming misdirected talent, as rehabilitation is not just humane; it is strategic in the fight against cybercrime. On 1st April 2025, IIT Madras Pravartak Technologies Foundation finished training its first batch of law enforcement officers in cybersecurity techniques. The initiative is commendable, and a similar initiative may prove effective for youth accused of cybercrimes; preferably, they can be involved in such rehabilitation and empowerment programmes during the early stages of criminal proceedings. This will help prevent recidivism and convert digital deviance into digital responsibility. To incorporate this into law enforcement, the police can identify first-time, non-habitual offenders involved in low-impact cybercrimes as candidates for such programmes. Courts, in turn, can require participation in an approved cyber-reform programme as a condition of bail.

Along with this, under the Juvenile Justice (Care and Protection of Children) Act, 2015, children in conflict with the law can be sent to observation homes where modules for digital literacy and skill development can be implemented. Other methods that may prove effective may include Restorative Justice Programmes, Court-monitored rehabilitation, etc.

Conclusion

A rehabilitative approach does not simply punish offenders; it transforms their knowledge into a force for good, ensuring that cybercrime is not just curtailed but converted into cyber defence and progress.

References

- Ismat Ara, How an impoverished district in Haryana became a breeding ground for cybercriminals, FRONTLINE (Jul. 27, 2023, 11:00 IST), https://frontline.thehindu.com/the-nation/spotlight-how-nuh-district-in-haryana-became-a-breeding-ground-for-cybercriminals/article67098193.ece

- Mohammed Iqbal, Counselling, skilling aim to wean Mewat youth away from cybercrimes, THE HINDU (Jul. 28, 2024, 01:39 AM), https://www.thehindu.com/news/cities/Delhi/counselling-skilling-aim-to-wean-mewat-youth-away-from-cybercrimes/article68454985.ece

- Prawin Kumar Tiwary, Jamtara’s journey from cybercrime to community libraries, 101 REPORTERS (Feb. 16, 2022), https://101reporters.com/article/development/Jamtaras_journey_from_cybercrime_to_community_libraries

- IIT Madras Pravartak completes Training First Batch of Cyber Commandos, PRESS INFORMATION BUREAU (Apr. 1, 2025, 03:36 PM), https://www.pib.gov.in/PressReleasePage.aspx?PRID=2117256