#FactCheck - AI-Generated Image Falsely Shared as ‘Border 2’ Shooting Photo Goes Viral

Executive Summary

Border 2 is set to hit theatres today, January 23. Meanwhile, a photograph is going viral on social media showing actors Sunny Deol, Suniel Shetty, Akshaye Khanna and Jackie Shroff sitting together and having a meal, while a woman is seen serving food to them. Social media users are sharing this image claiming that it was taken during the shooting of Border 2. It is being alleged that the photograph shows a moment from the film’s set, where the actors were having food during a break in shooting. However, Cyber Peace research has found the viral claim to be false. Our investigation revealed that users are sharing an AI-generated image with a misleading claim.

Claim

On Instagram, a user shared the viral image on January 9, 2026, with the caption: “During the shooting of Border 2.” The link to the post, its archive link and screenshots can be seen below.

Fact Check:

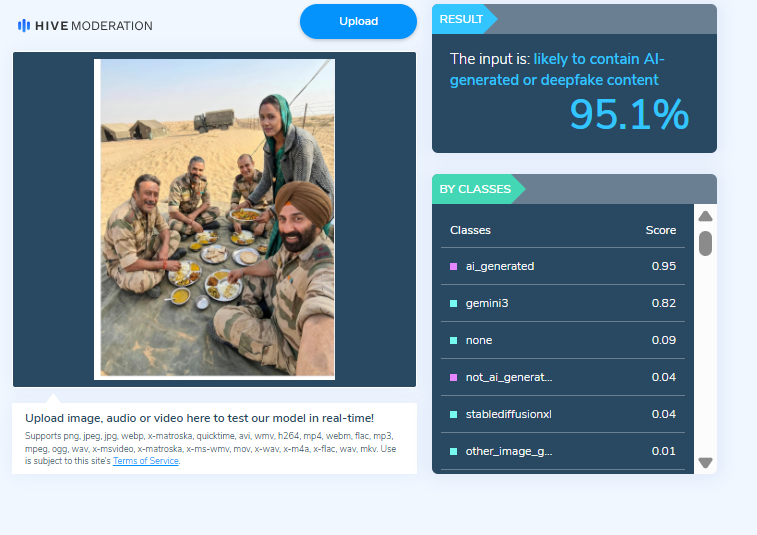

To verify the claim, we first checked Google for the official star cast of the film Border 2. Our search showed that the names of the actors seen in the viral image are not part of the film’s officially announced cast. Next, upon closely examining the image, we noticed that the facial structure and expressions of the actors appeared unnatural and distorted. The facial features did not look realistic, raising suspicion that the image might have been created using Artificial Intelligence (AI). We then scanned the viral image using the AI-generated content detection tool HIVE Moderation. The results indicated that the image is 95 per cent AI-generated.

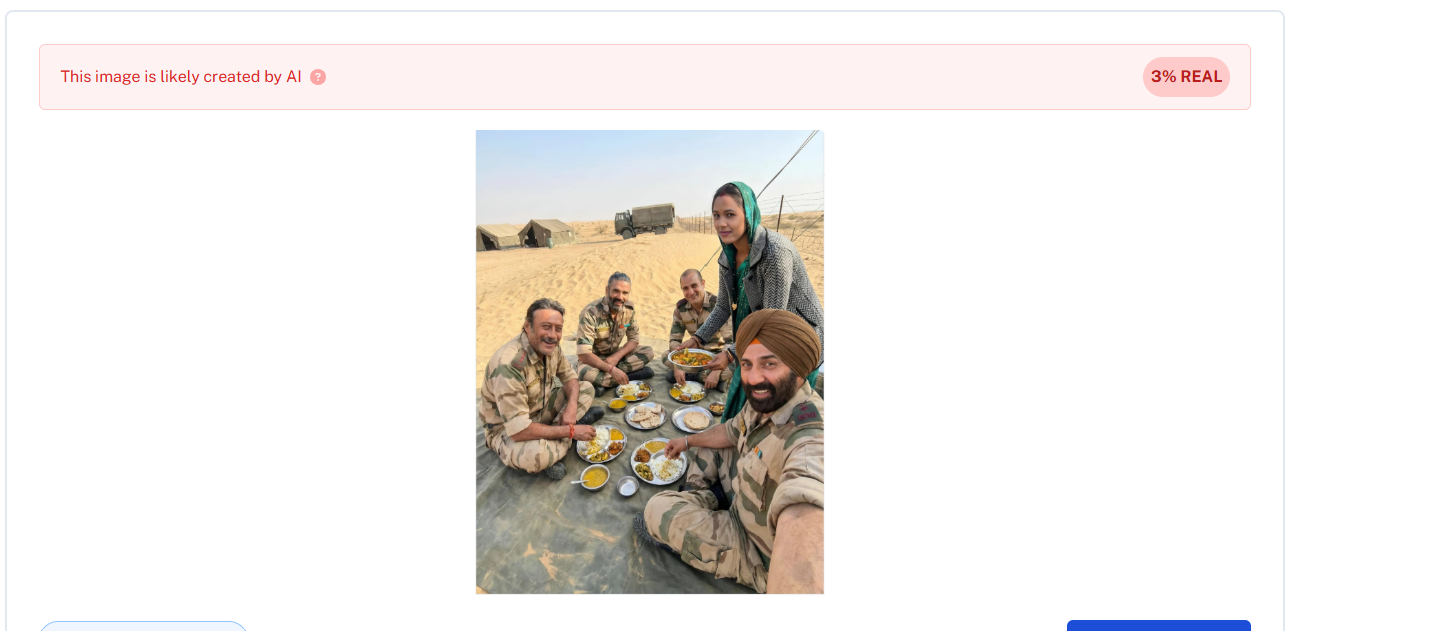

In the final step of our investigation, we analysed the image using another AI-detection tool, Undetectable AI. According to the results, the viral image was confirmed to be AI-generated.

Conclusion:

Our research confirms that social media users are sharing an AI-generated image while falsely claiming that it is from the shooting of Border 2. The viral claim is misleading and false.


Related Blogs

Introduction

In today's era of digital communities and connections, social media has become an integral part of our lives. A large number of teenagers are active on social media and use their accounts to connect with friends and family. Social media makes it easy to connect and communicate with larger communities and even to showcase your creativity. On the other hand, it also poses challenges such as inappropriate content, online harassment, online stalking, misuse of personal information, and abusive or disheartening content. Such threats, or overuse of social media, can have unintended consequences for teenagers' mental health. Data shows that some teens spend hours a day on social media, so it has a large impact on them whether we notice it or not. Social media addiction and its negative repercussions, together with online threats and vulnerabilities, are a growing concern that needs to be taken seriously through platform safeguards, regulatory policy and user responsibility. Recently, Colorado and California led a joint lawsuit filed by 33 states in the U.S. District Court for the Northern District of California against Meta over concerns about child safety.

Meta and the concern over child users' safety

Recently Meta, the company that owns Facebook, Instagram, WhatsApp, and Messenger, has been sued by more than three dozen states for allegedly using features to hook children to its platforms. The lawsuit claims that Meta violated consumer protection laws and deceived users about the safety of its platforms. The states accuse Meta of designing manipulative features to induce young users' compulsive and extended use, pushing them into harmful content. However, Meta has responded by stating that it is working to provide a safer environment for teenagers and expressing disappointment in the lawsuit.

According to the complaint filed by the states, Meta "designed psychologically manipulative product features to induce young users' compulsive and extended use" of platforms like Instagram. The states allege that Meta's algorithms were designed to push children and teenagers into rabbit holes of toxic and harmful content, with features like "infinite scroll" and persistent alerts used to hook young users. Meta responded that it was disappointed that the states had chosen a lawsuit instead of working productively with companies across the industry to create clear, age-appropriate standards for the many apps teens use.

Unplug for some time

Overuse of social media is associated with mental health repercussions as well as online threats and risks. Social media's effect on teenagers is driven by factors such as inadequate sleep, exposure to cyberbullying and online threats, and lack of physical activity. Admittedly, social media can help teens feel more connected to their friends and support system and lets them showcase their creativity to the online world. However, social media overuse by teens is often linked with underlying issues that require attention. To help teenagers, encourage them to use social media responsibly and to unplug from it for some time, to get outside in nature, to do physical activities, and to express themselves creatively.

Understanding the threats & risks

- Psychological effects

- Addiction: Excessive use of social media can lead to procrastination and to physical and psychological addiction, because it triggers the brain's reward system.

- Associated mental health conditions: Excessive use of social media can harm mental well-being and may lead to depression, anxiety, self-consciousness and social anxiety disorder.

- Eye strain and carpal tunnel syndrome: Spending excessive time on screens can put real strain on your eyes. Eye problems caused by computer or phone screen use fall under computer vision syndrome (CVS). Carpal tunnel syndrome is caused by pressure on the median nerve in the wrist, and prolonged device use may contribute to it.

- Cyberbullying: Cyberbullying is the use of the internet or other digital communication technology to bully, harass, or intimidate others, and it has become one of the major forms of online harassment on popular social media platforms. It may include spreading rumours or posting hurtful comments, and it has a socio-psychological impact on its victims.

- Online grooming: Online grooming is defined as the tactics abusers deploy through the internet to sexually exploit children. The average time for a bad actor to lure children into his trap is 3 minutes, which is a very alarming number.

- Ransomware/Malware/Spyware: Cybercrooks spread ransomware, malware and spyware by posting malicious links on social media. These threats can cause financial losses, data loss, and reputational damage. Ransomware is a type of malware designed to deny a user or organisation access to the files on their computer. It is therefore important to be cautious before clicking on any suspicious link.

- Sextortion: Sextortion is a crime in which the perpetrator threatens to expose or reveal the victim's sexual activity and demands a ransom or sexual favours in return. It is a kind of sexual blackmail that may take place on social media, and youngsters are the most frequent targets. Cybercrooks also misuse deepfake technology, which uses AI to create realistic images or videos that are in fact machine-generated. Because deepfake technology is easily accessible, fraudsters misuse it to commit various crimes, including sextortion and scams built on fake images or videos that look realistic.

- Child sexual abuse material (CSAM): CSAM is illicit content that is prohibited by law and regulatory guidelines. Children using the internet may also encounter age-restricted or inappropriate content that can be harmful to them. Under regulatory guidelines, internet service providers must not host CSAM on their websites and must block such content.

- In-app purchases: Teen users also make in-app purchases on social media or in online games, where they might fall for financial fraud or easy-money scams. Fraudsters lure targets with exciting offers such as part-time jobs, work-from-home jobs, small investments, or payment for liking content on social media. This is prevalent on social media: fraudsters target innocent people, ask for their personal and financial information, and commit financial fraud on the pretext of these offers.

Safety tips:

To stay safe while using social media, teens and other users are encouraged to stay aware of online threats and follow best practices such as:

- Safe web browsing.

- Utilising privacy settings of your social media accounts.

- Using strong passwords and enabling two-factor authentication.

- Being careful about what you post or share.

- Becoming familiar with the privacy policy of the social media platforms.

- Being selective about adding unknown users to your social media network.

- Reporting any suspicious activity to the platform or relevant forum.

Conclusion:

Child safety is a major concern on social media platforms. Offences such as cyberstalking, hacking, online harassment and threats, sextortion, and financial fraud are among the most common cybercrimes on social media. The tech giants must ensure the safety of teen users by implementing and adopting the best safety mechanisms on their platforms. CyberPeace Foundation is advocating for a Child-friendly SIM to protect children from the illicit influence of the internet and social media.

References:

- https://www.scientificamerican.com/article/heres-why-states-are-suing-meta-for-hurting-teens-with-facebook-and-instagram/

- https://www.nytimes.com/2023/10/24/technology/states-lawsuit-children-instagram-facebook.html

Today, let us talk about one of the key features of our digital lives: security. The safer your children's online habits are, the safer their data and devices will be. Branded security software will make their devices and Internet connections secure, but carelessness or ignorance can still make them targets for cybercrime. They can also unwittingly get involved in dubious activities online themselves. With children being very smart about passwords and clearing their browsing history, parents are often left in the dark about their digital lives.

Fret not, parental controls are at your service. These are digital tools, often included with your OS or security software package, that help you remotely monitor and control your child's online activities.

Where can I find them?

Many devices come with pre-installed parental control tools that you have to set up and run. Go to Settings -> Parental controls or Screen Time and proceed from there. As mentioned above, they are also offered as part of a comprehensive security software package.

Why and How to Use Parental Controls

Parental controls help monitor and limit your children's smartphone usage, ensuring they access only age-appropriate content. If your child is a minor, use of this tool is recommended, with the full knowledge of your child/ren. Let them know that just as you supervise them in public places for their safety, and guide them on rights and wrongs, you will use the tool to monitor and mentor them online, for their safety. Emphasize that you love them and trust them but are concerned about the various dubious and fake characters online as well as unsafe websites and only intend to supervise them. As they grow older and display greater responsibility and maturity levels, you may slowly reduce the levels of monitoring. This will help build a relationship of mutual trust and respect.

Step 1: Enable Parental Controls

- iOS: If your child has an iPhone, to set up the controls, go to Settings, select Screen Time, then select Content & Privacy Restrictions.

- Android: If the child has an Android phone, you can use the Google Family Link to manage apps, set screen time limits, and track device usage.

- Third-party apps: Consider security tools like McAfee, Kaspersky, Bark, Qustodio, or Norton Family for advanced features.

Check out what some of the security software apps have on offer:

If you prefer Norton, here are the details:

McAfee Parental Controls suite offers the following features:

McAfee also outlines why Parental Controls matter:

Lastly, let us take a look at what Quick Heal has on offer:

Step 2: Set up Admin Login

Needless to say, a parent should be the admin login, and it is a wise idea to set up a strong and unique password. You do not want your kids to outsmart you and change their accessibility settings, do you? Remember to create a password you will remember, for children are clever and will soon discover where you have jotted it down.

Step 3: Create individual accounts for all users of the device

Let us say two minor kids, a grandparent and you will be using the device. You will have to create a separate account for each user. You can allow the children to choose their own passwords, as it will give them a sense of privacy. You or the children may need to help any seniors set up their accounts.

Done? Good. Now let us proceed to the next step.

Step 4: Set up access permissions by age

Let us first get grandparents and other seniors out of the way by giving them full access. When you enter their ages, your device will identify them as adults and guide you accordingly.

Now, for each child, follow the instructions to set up filters and blocks. These will again vary with age: use more filters for the younger ones, and remove controls gradually as the children grow older and become more mature and responsible. Set up screen time (weekdays and weekends), game filtering and playtime, and content filtering and blocking by words (e.g. block websites that contain violence/sex/abuse). Ask for activity reports on your device so that you can monitor them remotely. This will help you receive alerts if children connect with strangers or get involved in abusive actions.

Save the settings and you are done! Simple, wasn't it?

Additional Security

For further security, you may want to set up parental controls on the Home Wi-Fi Router, Gaming devices, and online streaming services you subscribe to.

Follow the same steps. Select settings, Admin sign-in, and find out what controls or screen time protection they offer. Choose the ones you wish to activate, especially for the time when adults are not at home.

Conclusion

Congratulations. You have successfully secured your child’s digital space and sanitized it. Discuss unsafe practices as a family, and make any digital rule breaches and irresponsible actions, or concerns, learning points for them. Let their takeaway be that parents will monitor and mentor them, but they too have to take ownership of their actions.

Introduction

The digital landscape of the nation has reached a critical point in its evolution. The rapid adoption of technologies such as cloud computing, mobile payment systems, artificial intelligence, and smart infrastructure has led to a high degree of integration between digital systems and governance, commercial activity, and everyday life. As dependence on these systems continues to grow, a wide range of cyber threats has emerged that are complex, multi-layered, and closely interconnected. By 2026, cyber security threats directed at India are expected to include an increasing number of targeted, well-organised, and strategic cyber attacks. These attacks are likely to focus on exploiting the trust placed in technology, institutions, automation, and the fast pace of technological change.

1. Social Engineering 2.0: Hyper-Personalised AI Phishing & Mobile Banking Malware

Cybercriminals have moved from generalised methods to hyper-targeted attacks that rely on AI-based psychological manipulation. In 2026, attackers are expected to use AI to analyse social media profiles, breached data, and digital footprints in order to craft hyper-targeted phishing emails at greater scale.

These phishing emails can impersonate banks, employers, and even family members, matching the regional and cultural tone, language, and context that the genuine sender would use.

With malicious applications disguised as legitimate service apps, cybercriminals have the ability to intercept and capture One-Time Passwords (OTPs), hijack user sessions, and steal money from user accounts in a matter of minutes.

These attacks succeed not only because of their technical sophistication but also because they exploit human trust at scale, giving attackers an almost limitless reach into people's finances through their computers and mobile devices.

2. Cloud and Supply Chain Vulnerabilities

As Indian organisations increasingly migrate to cloud infrastructure, cloud misconfigurations are emerging as a major cybersecurity risk. Weak identity controls, exposed storage, and improper access management can allow attackers to bypass traditional network defences. Alongside this, supply chain attacks are expected to intensify in 2026.

In supply chain attacks, cybercriminals compromise a trusted software vendor or service provider to infiltrate multiple downstream organisations. Even entities with strong internal security can be affected through third-party dependencies. For India’s startup ecosystem, government digital platforms, and IT service providers, this presents a systemic risk. Strengthening vendor risk management and visibility across digital supply chains will be essential.
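The three misconfiguration classes named above (exposed storage, weak identity controls, improper access management) can be checked mechanically once an inventory exists. The following is a minimal sketch only: the `StorageBucket` and `UserAccount` records and their fields are hypothetical stand-ins for whatever a real cloud provider's inventory export contains, not the types of any actual SDK.

```python
from dataclasses import dataclass


@dataclass
class StorageBucket:
    """Hypothetical inventory record for a storage bucket."""
    name: str
    public_read: bool


@dataclass
class UserAccount:
    """Hypothetical inventory record for a user account."""
    name: str
    mfa_enabled: bool
    is_admin: bool
    role_requires_admin: bool


def audit(buckets, accounts):
    """Return human-readable findings for common misconfigurations."""
    findings = []
    for bucket in buckets:
        # Exposed storage: anyone on the internet can read the bucket.
        if bucket.public_read:
            findings.append(f"bucket '{bucket.name}' is publicly readable")
    for account in accounts:
        # Weak identity control: the account relies on a password alone.
        if not account.mfa_enabled:
            findings.append(f"account '{account.name}' has no MFA")
        # Improper access management: admin rights the role does not need.
        if account.is_admin and not account.role_requires_admin:
            findings.append(f"account '{account.name}' is over-privileged")
    return findings


if __name__ == "__main__":
    buckets = [StorageBucket("invoices", public_read=True)]
    accounts = [UserAccount("intern", mfa_enabled=False,
                            is_admin=True, role_requires_admin=False)]
    for finding in audit(buckets, accounts):
        print(finding)
```

In practice the inventory would come from the provider's own tooling, and the same idea extends to third-party vendors by asking them for equivalent evidence.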

3. Threats to IoT and Critical Infrastructure

Through its smart city, digital utility, and connected public service initiatives, India has greatly expanded its use of IoT and exposed more of its operational technology (OT) to the network. However, many IoT devices still lack adequate security in the form of strong authentication, encryption, and update mechanisms. By 2026, attackers are expected to exploit these vulnerabilities far more than they already do.

Cyberattacks on critical infrastructure such as energy, transportation, healthcare, and telecom systems have far-reaching consequences that extend well beyond data loss: they directly affect the provision of essential services, can endanger public safety, and raise national security concerns. Securing critical infrastructure effectively requires dedicated security solutions built for its specific needs, rather than conventional IT security alone.

4. Hidden File Vectors and Stealth Payload Delivery

SVG File Abuse in Stealth Attacks

Cybercriminals are continually searching for ways to bypass security filters, and hidden file vectors are emerging as a preferred tactic. One such method involves the abuse of SVG (Scalable Vector Graphics) files. Although commonly perceived as harmless image files, SVGs can contain embedded scripts capable of executing malicious actions.

By 2026, SVG-based attacks are expected to be used in phishing emails, cloud file sharing, and messaging platforms. Because these files often bypass traditional antivirus and email security systems, they provide an effective stealth delivery mechanism. Indian organisations will need to rethink assumptions about “safe” file formats and strengthen deep content inspection capabilities.
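To make the idea of deep content inspection concrete, here is a minimal sketch that parses an SVG and reports constructs capable of running script. The function name `find_active_content` and the list of checks are illustrative assumptions, not a complete detection rule set, and the standard-library parser used here is not hardened against malicious XML, so a production scanner would need a hardened parser.

```python
import xml.etree.ElementTree as ET


def find_active_content(svg_text):
    """Return findings for script-capable constructs in an SVG document."""
    findings = []
    root = ET.fromstring(svg_text)
    for element in root.iter():
        # Tags arrive as "{namespace}name"; keep only the local name.
        tag = element.tag.rsplit("}", 1)[-1].lower()
        if tag in ("script", "foreignobject"):
            findings.append(f"<{tag}> element")
        for name, value in element.attrib.items():
            attr = name.rsplit("}", 1)[-1].lower()
            if attr.startswith("on"):
                # Event handlers such as onload or onclick run script.
                findings.append(f"event handler '{attr}' on <{tag}>")
            elif attr == "href" and value.strip().lower().startswith("javascript:"):
                findings.append(f"javascript: link on <{tag}>")
    return findings


if __name__ == "__main__":
    sample = (
        '<svg xmlns="http://www.w3.org/2000/svg" onload="alert(1)">'
        "<script>alert(2)</script>"
        '<circle r="10"/>'
        "</svg>"
    )
    for finding in find_active_content(sample):
        print(finding)
```

A file that yields any finding can be quarantined or rewritten without the active parts, instead of being trusted because of its image extension.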

5. Quantum-Era Cyber Risks and “Harvest Now, Decrypt Later” Attacks

Although practical quantum computers are still emerging, quantum-era cyber risks are already a present-day concern. Adversaries are believed to be intercepting and storing encrypted data now with the intention of decrypting it in the future once quantum capabilities mature—a strategy known as “harvest now, decrypt later.” This poses serious long-term confidentiality risks.

Recognising this threat, the United States took early action during the Biden administration through National Security Memorandum 10, which directed federal agencies to prepare for the transition to quantum-resistant cryptography. For India, similar foresight is essential, as sensitive government communications, financial data, health records, and intellectual property could otherwise be exposed retrospectively. Preparing for quantum-safe cryptography will therefore become a strategic priority in the coming years.
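One common way to reason about "harvest now, decrypt later" exposure is the rule of thumb often called Mosca's inequality: data is at risk if the time it must stay confidential plus the time needed to migrate to quantum-safe cryptography exceeds the time until a capable quantum computer exists. The sketch below encodes that comparison. The function name and all year values are hypothetical placeholders, not estimates drawn from this article.

```python
def exposed_to_harvest_now_decrypt_later(shelf_life_years,
                                         migration_years,
                                         years_until_quantum):
    """Apply the rule of thumb: at risk if shelf life + migration > time left."""
    return shelf_life_years + migration_years > years_until_quantum


if __name__ == "__main__":
    # Hypothetical inputs: records that must stay secret for 10 years,
    # a 5-year migration programme, and a 12-year quantum horizon.
    at_risk = exposed_to_harvest_now_decrypt_later(10, 5, 12)
    print("at risk" if at_risk else "not at risk")
```

The value of the comparison is that it only needs the organisation's own two numbers (shelf life and migration time) to show whether migration planning should already be under way.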

6. AI Trust Manipulation and Model Exploitation

Poisoning the Well – Direct Attacks on AI Models

As artificial intelligence systems are increasingly used for decision-making—ranging from fraud detection and credit scoring to surveillance and cybersecurity—attackers are shifting focus from systems to models themselves. “Poisoning the well” refers to attacks that manipulate training data, feedback mechanisms, or input environments to distort AI outputs.

In the context of India's rapidly growing digital ecosystem, compromised AI models can result in biased decisions, false security alerts, or the denial of legitimate services. The main problem with these attacks is that they may occur without triggering conventional security measures. Transparency, integrity and continuous monitoring of AI systems will be key to creating and maintaining stakeholder confidence in automated decision-making.
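A toy example shows how training-data poisoning can distort a model without touching the system around it. The sketch below uses a deliberately simple, hypothetical fraud detector that flags any transaction above the midpoint between the average legitimate amount and the average fraudulent amount. All amounts are invented for illustration, and real fraud models are far more complex.

```python
from statistics import mean


def train_threshold(legit_amounts, fraud_amounts):
    """Learn a cut-off halfway between the two class averages."""
    return (mean(legit_amounts) + mean(fraud_amounts)) / 2


def is_flagged(amount, threshold):
    """Flag a transaction as suspicious if it exceeds the cut-off."""
    return amount > threshold


# Invented training data: small legitimate payments, large fraudulent ones.
clean_legit = [10, 20, 30, 40]
fraud = [900, 1000, 1100]

# The attacker slips large transactions into the data labelled "legitimate".
poison = [950, 1000, 1050, 1000, 1000, 1000]

clean_threshold = train_threshold(clean_legit, fraud)
poisoned_threshold = train_threshold(clean_legit + poison, fraud)

if __name__ == "__main__":
    suspicious_amount = 800
    print("clean model flags it:", is_flagged(suspicious_amount, clean_threshold))
    print("poisoned model flags it:", is_flagged(suspicious_amount, poisoned_threshold))
```

Nothing in the detector's code changed between the two runs; only the training data did, which is why such attacks can slip past conventional security measures and why monitoring of data and model behaviour matters.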

Recommendations

Despite the increasing sophistication of malicious cyber actors, India is entering this phase with a growing level of preparedness and institutional capacity. The country has strengthened its cyber security posture through dedicated mechanisms and relevant agencies such as the Indian Cyber Crime Coordination Centre, which play a central role in coordination, threat response, and capacity building. At the same time, sustained collaboration among government bodies, non-governmental organisations, technology companies, and academic institutions has expanded cyber security awareness, skill development, and research. These collective efforts have improved detection capabilities, response readiness, and public resilience, placing India in a stronger position to manage emerging cyber threats and adapt to the evolving digital environment.

Conclusion

By 2026, complexity, intelligence, and strategic intent will increasingly define cyber threats to the digital ecosystem. Cyber criminals are expected to use advanced methods of attack, including artificial intelligence assisted social engineering and the exploitation of cloud supply chain risks. As these threats evolve, adversaries may also experiment with quantum computing techniques and the manipulation of AI models to create new ways of influencing and disrupting digital systems. In response, the focus of cybersecurity is shifting from merely preventing breaches to actively protecting and restoring digital trust. While technical controls remain essential, they must be complemented by strong cybersecurity governance, adherence to regulatory standards, and sustained user education. As India continues its digital transformation, this period presents a valuable opportunity to invest proactively in cybersecurity resilience, enabling the country to safeguard citizens, institutions, and national interests with confidence in an increasingly complex and dynamic digital future.

References

- https://www.seqrite.com/india-cyber-threat-report-2026/

- https://www.uscsinstitute.org/cybersecurity-insights/blog/ai-powered-phishing-detection-and-prevention-strategies-for-2026

- https://www.expresscomputer.in/guest-blogs/cloud-security-risks-that-should-guide-leadership-in-2026/130849/

- https://www.hakunamatatatech.com/our-resources/blog/top-iot-challenges

- https://csrc.nist.gov/csrc/media/Presentations/2024/u-s-government-s-transition-to-pqc/images-media/presman-govt-transition-pqc2024.pdf

- https://www.cyber.nj.gov/Home/Components/News/News/1721/214