#FactCheck - AI-Generated Image Falsely Shared as Bees Attacking Shankaracharya Swami Avimukteshwaranand Saraswati

Executive Summary

During the Gau Raksha Yatra of Shankaracharya Swami Avimukteshwaranand Saraswati, bees reportedly attacked a discourse event in the Rohania area of Varanasi, Uttar Pradesh. Following the incident, a picture went viral on social media showing bees attacking Swami Avimukteshwaranand Saraswati. Several users are sharing the image as genuine while targeting the Shankaracharya online. The CyberPeace Research Wing investigated the viral image and found it to be fake: our research revealed that the picture was created using Artificial Intelligence (AI). While a bee attack did occur during Swami Avimukteshwaranand Saraswati’s discourse program, the viral image itself is fabricated.

Claim

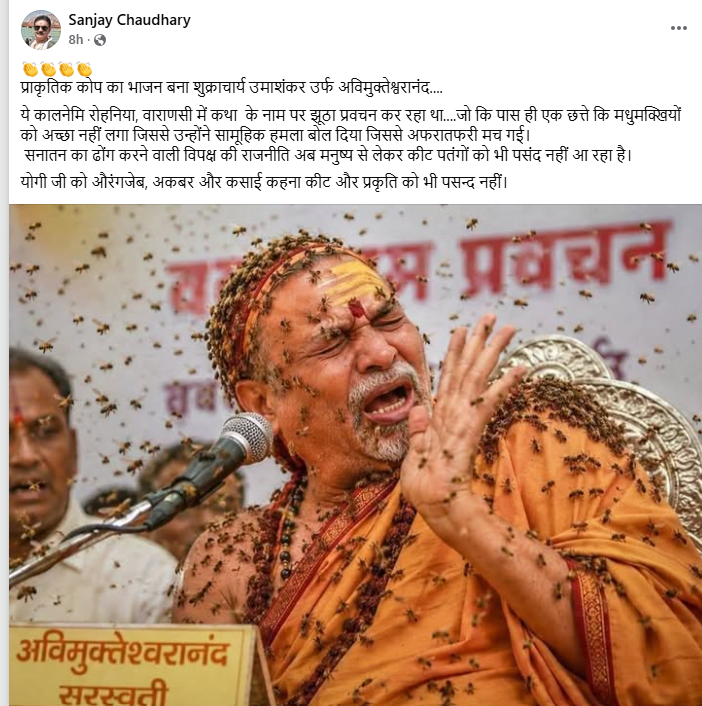

A Facebook user named “Sanjay Chaudhary” shared the viral image on May 15, 2026, with the caption: “Prakritik kop ka bhajan bana Shukracharya Umashankar alias Avimukteshwaranand… This Kaalnemi was delivering false sermons in Rohania, Varanasi in the name of religion… The bees from a nearby hive did not like it and collectively attacked, creating chaos. Even insects and nature no longer like the opposition’s politics disguised as Sanatan Dharma. Calling Yogi Ji Aurangzeb, Akbar and butcher is not acceptable even to nature and insects.”

Post link and archive link are given below:

- https://www.facebook.com/sanjaychaudhary073/posts/pfbid02kgts8igKDwgctz3MamECMGoGfQR5aWPTdsDgLeux3pD9jwP7ADfgNpoPfHvMb9Zul

- https://perma.cc/E6SE-BAXZ

Fact-Check

To verify the viral claim, we used Google Open Search tools and found reports related to the incident on the YouTube channel of News18 UP Uttarakhand. A report published on May 13, 2026 stated that bees attacked the discourse event during Swami Avimukteshwaranand’s Gau Raksha Yatra in Rohania, Varanasi. The incident created panic at the venue, forcing the Swami to end his discourse midway. The channel also uploaded a YouTube Shorts video related to the incident.

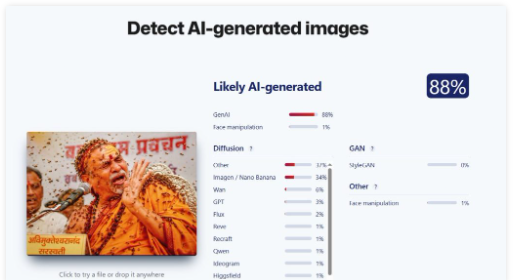

As part of the research, we further analyzed the viral image using AI detection tools. First, we used the tool “Sight Engine,” which indicated an 88 percent probability that the image was AI-generated.

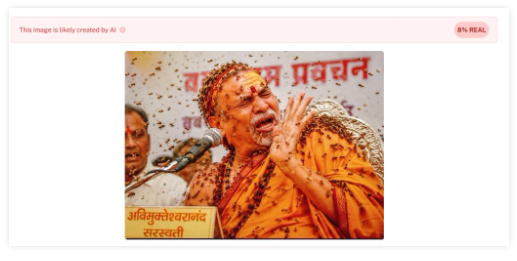

We then examined the image using another AI detection tool called “Undetectable,” which also suggested that the photo was likely created using AI.

Conclusion

Our research found that the viral image was generated using artificial intelligence tools. While bees did attack during Swami Avimukteshwaranand Saraswati’s Gau Raksha Yatra on May 13, 2026, the viral image circulating on social media is fabricated.

Related Blogs

Introduction

The simplest way to purchase cryptocurrencies is through one of the many large digital marketplaces designed specifically for this purpose. These marketplaces charge an exchange fee, which generally depends on the quantity of cryptocurrency purchased and the money paid. Once obtained, the digital currency is stored in a digital wallet and can be used in the metaverse or, like real money, to purchase goods and services in the real world. Blockchain ensures the security and decentralisation of each exchange.

The value and use of cryptocurrencies are comparable to those of gold: when a large number of investors choose the precious metal, its value increases, and vice versa. The same applies to cryptocurrencies, which explains why they have become so popular in recent years. The metaverse is an online space where users can communicate with one another via avatars, among other features. And wherever people communicate, money and commerce inevitably come up.

Web3 is embracing the metaverse, and in an environment where conventional currency isn't functional, its technologies make it possible to use cryptocurrencies. Non-Fungible Tokens (NFTs) can be used to track ownership and intellectual property rights in the metaverse, while cryptocurrencies are used to pay for content and incentivise users. This write-up explains what metaverse crypto is and delves into the advantages, disadvantages, and applications of crypto in this context.

Convergence of Metaverse and Cryptocurrency

As the main form of digital money in the Metaverse, cryptocurrencies can be used for business and exchange in the digital realm. The term "metaverse" describes a simulated environment where users can interact in real time with other users and with computer-generated surroundings. Cryptocurrency makes it possible to acquire and exchange virtual goods, virtual property, and digital art within the Metaverse.

Many digital currencies are built on blockchain technology, which offers a transparent and secure way to confirm payments and manage digital currencies in the Metaverse. By rewarding users with tokens or other digital currencies for their accomplishments or contributions, cryptocurrency can encourage engagement and participation in the Metaverse.

In the Metaverse, cryptocurrency can also facilitate interoperability, enabling users to move assets and their value between various virtual environments and platforms.

The idea of decentralisation in the Metaverse, where participants have more ownership and control over their virtual worlds, is consistent with the decentralised nature of cryptocurrencies.

Advantages of Metaverse Cryptocurrency

There are countless opportunities for creativity and discovery in the metaverse. Because the blockchain is open to everyone, immutable, and cryptographically secured, metaverse-centric cryptocurrencies offer greater safety and adaptability than cash. Crypto will be crucial to the evolution of the metaverse as it keeps growing and more individuals show interest in using it. Here are a few of the factors driving the growth of this new virtual environment.

Safety

Your crypto wallet is closely linked to your personal information, progress, and metaverse possessions. If the wallet is compromised, especially when your account credentials are weak, public, or connected to your real-world identity, cybercriminals may try to steal your money or personal data.

Adaptability

Because cryptocurrencies transcend national borders, digital assets can be accessed and exchanged worldwide. By using a native cryptocurrency, many metaverse platforms streamline transactions and eliminate the need for frequent conversions between various digital or fiat currencies. Smart contracts are another advantage of metaverse cryptocurrencies: when users transact within the network, these self-executing programs do away with the need for administrative middlemen.

Objectivity

By recording interactions in a publicly accessible distributed ledger, blockchain improves transparency and accountability. Because blockchain transactions are public, it is more difficult for dishonest actors to artificially inflate the price of digital goods and land. Metaverse cryptocurrencies are also frequently used to govern changes to a project, and the outcomes of these governance votes are made public through smart contracts.
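The tamper-evidence property described above can be illustrated with a minimal hash chain. This is only a sketch under stated assumptions: the record strings, field names, and the helper functions `block_hash`, `build_chain`, and `is_valid` are hypothetical, and real blockchains add consensus, signatures, and networking that are not modelled here.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index: int, data: str, prev_hash: str) -> str:
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "data": data, "prev_hash": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(records: list[str]) -> list[dict]:
    """Link each record to its predecessor through the stored hash."""
    chain = []
    prev_hash = GENESIS_HASH
    for index, data in enumerate(records):
        current = block_hash(index, data, prev_hash)
        chain.append(
            {"index": index, "data": data, "prev_hash": prev_hash, "hash": current}
        )
        prev_hash = current
    return chain


def is_valid(chain: list[dict]) -> bool:
    """Recompute every hash; any edited record breaks the chain."""
    prev_hash = GENESIS_HASH
    for block in chain:
        if block["prev_hash"] != prev_hash:
            return False
        if block["hash"] != block_hash(block["index"], block["data"], prev_hash):
            return False
        prev_hash = block["hash"]
    return True


# Hypothetical metaverse transactions, for illustration only.
ledger = build_chain(
    [
        "alice pays bob 5 tokens for a virtual plot",
        "bob pays carol 2 tokens for a wearable",
    ]
)
print(is_valid(ledger))
```

Because each block's hash covers the previous block's hash, changing any earlier record invalidates every check that follows, which is why publicly recorded prices are hard to rewrite quietly.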

NFT, Virtual worlds, and Digital currencies

NFTs are another way of using cryptocurrency in metaverse transactions. They are unique digital tokens that can carry significant value.

A creator who wants to display a digital work of art in the metaverse must first convert it into a virtual object or virtual world. Artists produce one-of-a-kind, serialised pieces, each assigned an NFT that can be bought with cryptocurrency.

Applications of Metaverse Cryptocurrency

Web 2 metaverse initiatives use fiat money or standalone virtual currencies like Robux to pay for goods, real estate, and services. Although fiat can be used to pay for goods and finance the creation of projects, it lacks the adaptability of cryptocurrencies with smart contract capabilities. Users can stake these in-network cryptocurrencies to govern decentralised metaverses, and the currencies otherwise serve all the same functions as fiat currency.

Banking operations

It is possible to lend digital currency for the purchase of metaverse land. Banks that have already made inroads into the metaverse include HSBC and JPMorgan, both of which possess virtual real estate. "We are making our foray into the metaverse, allowing us to create innovative brand experiences for both new and existing customers," said Suresh Balaji, chief marketing officer for HSBC in Asia-Pacific.

Purchasing

An increasingly important aspect of the metaverse is online commerce. Users can interact with real-world brands, tour simulated malls, and try on virtual apparel for their avatars. Adidas, for instance, debuted an NFT line in 2021 that included virtual wearables for The Sandbox. Buyers of the NFTs could also cross from the virtual world to the physical one by redeeming the tangible goods associated with their NFTs.

Governance

Metaverse initiatives are frequently governed through cryptocurrency. Decentraland, a well-known Ethereum-based metaverse featuring virtual reality components, permits users to submit and vote on proposals provided they own specific tokens.
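Token-based voting of this kind can be sketched as a weighted tally. The example below is hypothetical: the voter names, balances, and the `tally_votes` helper are assumptions made for illustration, and it does not reproduce the actual rules of Decentraland or any other platform.

```python
from collections import defaultdict


def tally_votes(votes: dict[str, str], balances: dict[str, int]) -> dict[str, int]:
    """Sum each choice's support, weighting every vote by the voter's token balance.

    Voters holding no tokens are ignored, mirroring the idea that only
    token holders may take part in governance.
    """
    totals: dict[str, int] = defaultdict(int)
    for voter, choice in votes.items():
        weight = balances.get(voter, 0)
        if weight > 0:
            totals[choice] += weight
    return dict(totals)


# Hypothetical token balances and votes on a single proposal.
balances = {"alice": 1200, "bob": 300, "carol": 0}
votes = {"alice": "yes", "bob": "no", "carol": "no"}

result = tally_votes(votes, balances)
winner = max(result, key=result.get)
print(result, winner)
```

Weighting by balance means large holders carry more influence, which is a design trade-off that token-governed projects have to weigh against one-person-one-vote schemes.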

Conclusion

The combination of the virtual world and cryptocurrencies creates novel opportunities for trade, innovation, and communication. The benefits of using blockchain are increased transparency, safety, and flexibility. By enabling exclusive ownership of digital assets, NFTs enhance metaverse immersion even further. In the metaverse, cryptocurrencies are used in banking, shopping, and governance, forming a user-driven, autonomous digital world. The combination of cryptocurrencies and the metaverse will change how we interact online, creating a dynamic environment that presents both opportunities and difficulties.

References

- https://www.telefonica.com/en/communication-room/blog/metaverse-and-cryptocurrencies-what-is-their-relationship/

- https://hedera.com/learning/metaverse/metaverse-crypto

- https://www.linkedin.com/pulse/unleashing-power-connection-between-cryptocurrency-ai-amit-chandra/

Disclaimer:

This report is based on extensive research conducted by CyberPeace Research using publicly available information, and advanced analytical techniques. The findings, interpretations, and conclusions presented are based on the data available at the time of study and aim to provide insights into global ransomware trends.

The statistics mentioned in this report are specific to the scope of this research and may vary based on the scope and resources of other third-party studies. Additionally, all data referenced is based on claims made by threat actors and does not imply confirmation of the breach by CyberPeace. CyberPeace includes this detail solely to provide factual transparency and does not condone any unlawful activities. This information is shared only for research purposes and to spread awareness. CyberPeace encourages individuals and organizations to adopt proactive cybersecurity measures to protect against potential threats.

CyberPeace Research does not claim to have identified or attributed specific cyber incidents to any individual, organization, or nation-state beyond the scope of publicly observable activities and available information. All analyses and references are intended for informational and awareness purposes only, without any intention to defame, accuse, or harm any entity.

While every effort has been made to ensure accuracy, CyberPeace Research is not liable for any errors, omissions, subsequent interpretations, or any unlawful use of the findings by third parties. The report is intended to inform and support cybersecurity efforts globally and should be used as a guide to foster proactive measures against cyber threats.

Executive Summary:

The 2024 ransomware landscape reveals alarming global trends, with 166 Threat Actor Groups leveraging 658 servers/underground resources and mirrors to execute 5,233 claims across 153 countries. Monthly fluctuations in activity indicate strategic, cyclical targeting, with peak periods aligned with vulnerabilities in specific sectors and regions. The United States was the most targeted nation, followed by Canada, the UK, Germany, and other developed countries, with the northwestern hemisphere experiencing the highest concentration of attacks. Business Services and Healthcare bore the brunt of these operations due to their high-value data, alongside targeted industries such as Pharmaceuticals, Mechanical, Metal, Electronics, and Government-related professional firms. Retail, Financial, Technology, and Energy sectors were also significantly impacted.

This research was conducted by CyberPeace Research using a systematic modus operandi, which included advanced OSINT (Open-Source Intelligence) techniques, continuous monitoring of Ransomware Group activities, and data collection from 658 servers and mirrors globally. The team utilized data scraping, pattern analysis, and incident mapping to track trends and identify hotspots of ransomware activity. By integrating real-time data and geographic claims, the research provided a comprehensive view of sectoral and regional impacts, forming the basis for actionable insights.

The findings emphasize the urgent need for proactive Cybersecurity strategies, robust defenses, and global collaboration to counteract the evolving and persistent threats posed by ransomware.

Overview:

This report provides insights into ransomware activities monitored throughout 2024. Data was collected by observing 166 Threat Actor Groups using ransomware technologies across 658 servers/underground resources and mirrors, resulting in 5,233 claims worldwide. The analysis offers a detailed examination of global trends, targeted sectors, and geographical impact.

Top 10 Threat Actor Groups:

The ransomware group ‘ransomhub’ has emerged as the leading threat actor, responsible for 527 incidents worldwide. Following closely are ‘lockbit3’ with 522 incidents and ‘play’ with 351. The other groups in the top 10 are ‘akira’, ‘hunters’, ‘medusa’, ‘blackbasta’, ‘qilin’, ‘bianlian’, and ‘incransom’. These groups usually employ advanced tactics to target critical sectors, highlighting the urgent need for robust cybersecurity measures to mitigate their impact and protect organizations from such threats.
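To put these counts in context, each group's share of the 5,233 total claims can be worked out directly. The snippet below is a small sketch that uses only the figures quoted in this report; the `share_of_total` helper is a hypothetical name introduced for illustration.

```python
# Figures quoted in the report text.
TOTAL_CLAIMS = 5233
top_groups = {"ransomhub": 527, "lockbit3": 522, "play": 351}


def share_of_total(count: int, total: int = TOTAL_CLAIMS) -> float:
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


shares = {name: share_of_total(count) for name, count in top_groups.items()}
combined = share_of_total(sum(top_groups.values()))

for name, pct in shares.items():
    print(f"{name}: {pct}% of all claims")
print(f"top three combined: {combined}%")
```

On these numbers the three most active groups together account for roughly a quarter of all claims observed in 2024.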

Monthly Ransomware Incidents:

In January 2024, the monthly count began at 284 incidents, the lowest point on the chart. The count rose steadily in the subsequent months, reaching its first peak of 557 in May 2024, then dropped sharply to 339 in June. A gradual recovery followed, with the count increasing to 446 by August. September saw another decline to 389, followed by a sharp rise that culminated in the year’s highest point of 645 in November. The year concluded with a decline to 498 in December 2024 (data up to 28 December).

Top 10 Targeted Countries:

- The United States consistently topped the list as the primary target, probably due to its advanced economic and technological infrastructure.

- Other heavily targeted nations include Canada, the UK, Germany, Italy, France, Brazil, Spain, and India.

- A total of 153 countries reported ransomware attacks, reflecting the global scale of these cyber threats.

Top Affected Sectors:

- Business Services and Healthcare faced the brunt of ransomware threats due to the sensitive nature of their operations.

- Specific industries under threat:

  - Pharmaceutical, Mechanical, Metal, and Electronics industries.

  - Professional firms within the Government sector.

- Other sectors:

  - Retail, Financial, Technology, and Energy sectors were also significant targets.

Geographical Impact:

The continuous and precise OSINT (Open Source Intelligence) work on the platform, performed as a follow-up to data scraping, gives a complete view of the geography of cyber attacks based on their claims. The northwestern region of the world appears to be the most severely affected by Threat Actor groups. The figure below illustrates this geographic distribution on the map.

Ransomware Threat Trends in India:

In 2024, the research identified 98 ransomware incidents impacting various sectors in India, marking a 55% increase compared to the 63 incidents reported in 2023. This surge highlights a concerning trend, as ransomware groups continue to target India's critical sectors due to its growing digital infrastructure and economic prominence.
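The year-over-year growth can be checked with a short calculation. This is a minimal sketch using the two counts stated above; `percent_change` is a hypothetical helper name. The unrounded result is about 55.6%, which the report states as 55%.

```python
def percent_change(previous: int, current: int) -> float:
    """Return the percentage change from previous to current."""
    if previous == 0:
        raise ValueError("previous value must be non-zero")
    return 100 * (current - previous) / previous


incidents_2023 = 63  # incidents reported in 2023, per the report
incidents_2024 = 98  # incidents identified in 2024, per the report

change = percent_change(incidents_2023, incidents_2024)
print(f"Year-over-year change: {change:.1f}%")
```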

Top Threat Actor Groups Targeting India:

Among the threat actors targeting India, ‘killsec’ is the most frequent. ‘lockbit3’ follows as the second most prominent, with significant but lower activity than ‘killsec’. Other groups, such as ‘ransomhub’, ‘darkvault’, and ‘clop’, show moderate activity levels. Entities like ‘bianlian’, ‘apt73/bashe’, and ‘raworld’ have low frequencies, indicating limited activity. Groups such as ‘aps’ and ‘akira’ have the lowest representation, indicating minimal activity. The chart highlights a clear disparity in activity levels among these threat actors, emphasizing the need for targeted cybersecurity strategies.

Top Impacted Sectors in India:

The pie chart illustrates the distribution of incidents across various sectors, highlighting that the industrial sector is the most frequently targeted, accounting for 75% of the total incidents. This is followed by the healthcare sector, which represents 12% of the incidents, making it the second most affected. The finance sector accounts for 10% of the incidents, reflecting a moderate level of targeting. In contrast, the government sector experiences the least impact, with only 3% of the incidents, indicating minimal targeting compared to the other sectors. This distribution underscores the critical need for enhanced cybersecurity measures, particularly in the industrial sector, while also addressing vulnerabilities in healthcare, finance, and government domains.

Month Wise Incident Trends in India:

The chart indicates a fluctuating trend with notable peaks in May and October, suggesting potential periods of heightened activity or incidents during these months. The data starts at 5 in January and drops to its lowest point, 2, in February. It then gradually increases to 6 in March and April, followed by a sharp rise to 14 in May. After peaking in May, the metric significantly declines to 4 in June but starts to rise again, reaching 7 in July and 8 in August. September sees a slight dip to 5 before the metric spikes dramatically to its highest value, 24, in October. Following this peak, the count decreases to 10 in November and then drops further to 7 in December.
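The monthly figures described above can be cross-checked against the annual total of 98 incidents reported for India. The sketch below uses only the counts stated in this section; the variable names are illustrative. It also lists every local peak, meaning a month higher than both of its neighbours.

```python
# Monthly incident counts for India in 2024, as described in the text.
monthly_incidents = {
    "Jan": 5, "Feb": 2, "Mar": 6, "Apr": 6, "May": 14, "Jun": 4,
    "Jul": 7, "Aug": 8, "Sep": 5, "Oct": 24, "Nov": 10, "Dec": 7,
}

total = sum(monthly_incidents.values())
peak_month = max(monthly_incidents, key=monthly_incidents.get)
low_month = min(monthly_incidents, key=monthly_incidents.get)

# A local peak is a month whose count exceeds both neighbouring months.
months = list(monthly_incidents)
counts = list(monthly_incidents.values())
local_peaks = [
    months[i]
    for i in range(1, len(months) - 1)
    if counts[i] > counts[i - 1] and counts[i] > counts[i + 1]
]

print(f"total={total}, peak={peak_month}, low={low_month}, local peaks={local_peaks}")
```

The monthly counts add up to 98, matching the annual figure, and the two largest local peaks fall in May and October, with a smaller one in August.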

CyberPeace Advisory:

- Implement Data Backup and Recovery Plans: Backups are your safety net. Regularly saving copies of your important data ensures you can bounce back quickly if ransomware strikes. Make sure these backups are stored securely—either offline or in a trusted cloud service—to avoid losing valuable information or facing extended downtime.

- Enhance Employee Awareness and Training: People often unintentionally open the door to ransomware. By training your team to spot phishing emails, social engineering tricks, and other scams, you empower them to be your first line of defense against attacks.

- Adopt Multi-Factor Authentication (MFA): Think of MFA as locking your door and adding a deadbolt. Even if attackers get hold of your password, they’ll still need that second layer of verification to break in. It’s an easy and powerful way to block unauthorized access.

- Utilize Advanced Threat Detection Tools: Smart tools can make a world of difference. AI-powered systems and behavior-based monitoring can catch ransomware activity early, giving you a chance to stop it in its tracks before it causes real damage.

- Conduct Regular Vulnerability Assessments: You can’t fix what you don’t know is broken. Regularly checking for vulnerabilities in your systems helps you identify weak spots. By addressing these issues proactively, you can stay one step ahead of attackers.
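As an illustration of the second verification layer mentioned in the MFA recommendation, the sketch below generates and checks a time-based one-time password (TOTP) in the style of RFC 6238, using only the Python standard library. It is a simplified example, not a production implementation: the shared secret is a placeholder, and the function names `hotp`, `totp`, and `verify` are chosen here for illustration. Real deployments typically also accept adjacent time steps and rate-limit attempts.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password with dynamic truncation (RFC 4226 style)."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # low nibble of the last byte picks the window
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Derive the one-time code for the time step containing at_time."""
    if at_time is None:
        at_time = time.time()
    return hotp(secret, int(at_time // step), digits)


def verify(secret: bytes, submitted: str, at_time: Optional[float] = None) -> bool:
    """Compare the submitted code with the expected one in constant time."""
    return hmac.compare_digest(totp(secret, at_time), submitted)


# Placeholder secret for illustration; a real secret is random and kept private.
shared_secret = b"12345678901234567890"
current_code = totp(shared_secret)
print(verify(shared_secret, current_code))
```

Because the code changes every 30 seconds and depends on a secret the attacker does not hold, a stolen password alone is not enough to log in.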

Conclusion:

The 2024 ransomware landscape reveals the critical need for proactive cybersecurity strategies. High-value sectors and technologically advanced regions remain the primary targets, emphasizing the importance of robust defenses. As we move into 2025, it is crucial to anticipate the evolution of ransomware tactics and adopt forward-looking measures to address emerging threats.

Global collaboration, continuous innovation in cybersecurity technologies, and adaptive strategies will be imperative to counteract the persistent and evolving threats posed by ransomware activities. Organizations and governments must prioritize preparedness and resilience, ensuring that lessons learned in 2024 are applied to strengthen defenses and minimize vulnerabilities in the year ahead.

Introduction

Airline hoax threats are fabricated alarms targeting airlines and airports, intended to disrupt day-to-day operations and create panic among the public. Because the security of public spaces is of utmost importance, such spaces are attractive targets for hoaxes. The consequences include financial losses for the parties concerned, heightened security protocols both immediately and in anticipation of further threats, flight delays and diversions, emergency landings, and passenger inconvenience and emotional distress. The motivation behind such threats is malicious intent of varying degrees, which undermines national security, integrity and safety. The government, airlines and social media platforms already have certain measures in place to tackle such threats, but the public has an equal role in preventing the spread of misinformation and panic through responsible consumption and verified sharing.

Hoax Airline Threats

The recent spate of hoax bomb threats to Indian airlines has involved false reports about threats to over 500 flights since 14 October 2024, the majority traced to social media posts from anonymous or unverified handles. Recent incidents include a hoax threat against Air India flights from Delhi to Mumbai via Indore, posted on X on 30 October 2024, and a threat against a flight from Kathmandu, Nepal, to Delhi on 2 November 2024.

As per reports by the Indian Express, steps are being taken to address such incidents by tweaking the assessment criteria for bomb threats, and authorities such as the Bomb Threat Assessment Committees (BTAC) are being more selective in categorising threats as specific or non-specific. Other factors considered include whether a VIP is on board and whether the threat was posted from an anonymous account with a similar history.

CyberPeace Recommendations

- For the Public

  - Question sensational information: The public should scrutinise the information they consume, not only to keep themselves safe but also to be responsible to other citizens. Exercise caution before sharing alarming messages, posts and pieces of information.

  - Recognise credible sources: Rely only on trustworthy, verified sources when sharing information, especially when it comes to topics as serious as airline safety.

  - Avoid reactionary sharing: Sharing in a state of panic adds to the chaos created by unverified news, so refrain from reactionary sharing.

- For the Authorities & Agencies

  - After a series of hoax bomb threats, the Government of India issued an advisory to social media platforms calling on them to make efforts to remove such malicious content. Obligations such as the prompt removal of harmful content or disabling access to such unlawful information are specified under the IT Rules, 2021. Platforms are also obligated under the Bharatiya Nagarik Suraksha Sanhita, 2023 to report certain offences on their platform. The Ministry of Civil Aviation’s action plan includes proposals to make hoax bomb threats a cognisable offence and to place offenders on a no-fly list as a penalty, among other things.

  These plans also include steps such as:

  - Introduction of other corrective measures to be taken against bad actors (similar to a no-fly list).

  - Introduction of a reporting mechanism specific to such threats.

  - Focus on promoting awareness, digital literacy, critical thinking and fact-checking resources, as well as encouraging the public to report such hoaxes.

Conclusion

Preventing the spread of airline threat hoaxes is a collective responsibility that involves public engagement and ownership to strengthen safety measures and build trust in the overall safety ecosystem (here, airline agencies, government authorities and the public). As the government and agencies take measures to prevent such instances, the public should continue to share information only from and on verified and trusted portals. The public is encouraged to remain vigilant and responsible while consuming and sharing information.

References

- https://indianexpress.com/article/business/flight-bomb-threats-assessment-criteria-serious-9646397/

- https://www.wionews.com/world/indian-airline-flight-bound-for-new-delhi-from-nepal-receives-hoax-bomb-threat-amid-rise-in-similar-incidents-772795

- https://www.newindianexpress.com/nation/2024/Oct/26/centre-cautions-social-media-platforms-to-tackle-misinformation-after-hoax-bomb-threat-to-multiple-airlines

- https://economictimes.indiatimes.com/industry/transportation/airlines-/-aviation/amid-rising-hoax-bomb-threats-to-indian-airlines-centre-issues-advisory-to-social-media-companies/articleshow/114624187.cms