#FactCheck - Viral Video Falsely Claims RSS Chief Mohan Bhagwat Called for ‘Saffronisation’ of Indian Army

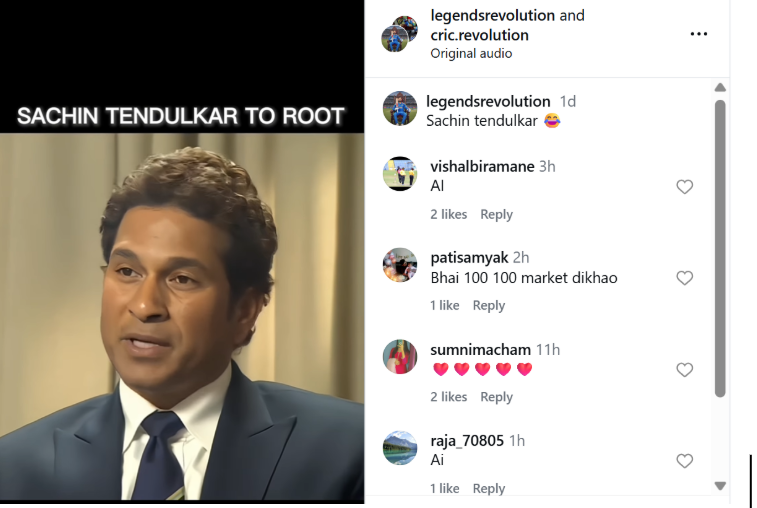

A video purportedly showing Rashtriya Swayamsevak Sangh (RSS) chief Mohan Bhagwat making remarks about the “saffronisation” of the Indian Army has been widely circulated on social media. The clip claims that Bhagwat called for the removal of non-Hindus from the armed forces and linked the issue to future political leadership changes in the country.

However, a verification by the Cyber Peace Foundation has established that the video is misleading and has been digitally manipulated.

Claim

In the video, Bhagwat is allegedly heard saying that unless more than 50 percent of non-Hindus are removed from the Indian Army by 2028, Prime Minister Narendra Modi would be replaced by Uttar Pradesh Chief Minister Yogi Adityanath. The clip further attributes another statement to him, suggesting that he would resign if the Prime Minister were to demand Nitish Kumar’s resignation.

By the time of publication, the video had been viewed over 7,000 times. (Link, Archive Link, Screenshot)

Fact Check

A reverse image search directed the Desk to a video uploaded on CNN-News18’s official YouTube channel on December 21, 2025. The footage was found to be a longer version of the viral clip and was recorded at the RSS centenary event held in Kolkata on the same date. A comparison of both videos confirmed that the background visuals, stage setup and camera angles were identical.

However, a careful review of the original CNN-News18 video revealed that Mohan Bhagwat did not make any of the statements attributed to him in the viral clip.

In his original address, Bhagwat spoke about unity and referred to concerns over increasing atrocities against Hindus in Bangladesh. He made no reference to the Indian Army, nor did he comment on its composition or alleged saffronisation. Here is the link to the original video, along with a screenshot: https://www.youtube.com/watch?v=KnsAUGfBQBk&t=1s

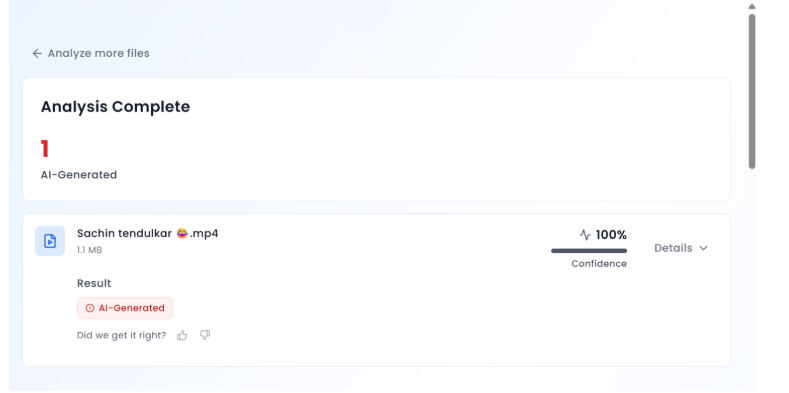

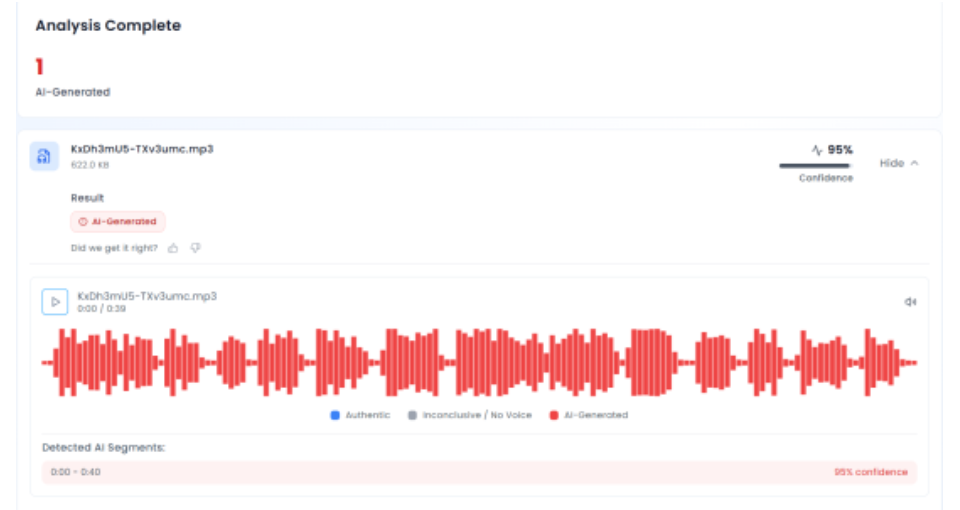

In the next phase of the investigation, the audio track from the viral video was extracted and analysed using the AI audio detection tool Aurigin. The tool’s assessment indicated that the voice heard in the clip was artificially generated, confirming that the audio did not originate from the original speech.

Conclusion

The claim that RSS chief Mohan Bhagwat called for the saffronisation of the Indian Army is false. PTI Fact Check found that the viral video was digitally manipulated, using genuine footage from an RSS centenary event but pairing it with an AI-generated audio track. The altered video was shared online to mislead viewers by falsely attributing to Bhagwat statements he never made.
