#FactCheck: Fake Claim on Delhi Authority Culling Dogs After Supreme Court Stray Dog Ban Directive 11 Aug 2025

Executive Summary:

A viral claim alleges that, following the Supreme Court of India’s August 11, 2025 order on relocating stray dogs, authorities in Delhi NCR have begun mass culling. Verification shows the claim to be false and misleading. A reverse image search of the viral video traced it to older posts from outside India, probably linked to Haiti or Vietnam, as indicated by captions in Haitian Creole and Vietnamese respectively. While the exact location cannot be independently verified, it is confirmed that the video is not from Delhi NCR and has no connection to the Supreme Court’s directive. Therefore, the claim lacks authenticity and is misleading.

Claim:

Several claims have circulated since the Supreme Court of India ordered the relocation of stray dogs to shelters on 11th August 2025. The primary claim suggests that authorities, following the order, have begun mass killing or culling of stray dogs, particularly in Delhi and the National Capital Region. This narrative intensified after several videos purporting to show dead or mistreated dogs, allegedly linked to the Supreme Court’s directive, began circulating online.

Fact Check:

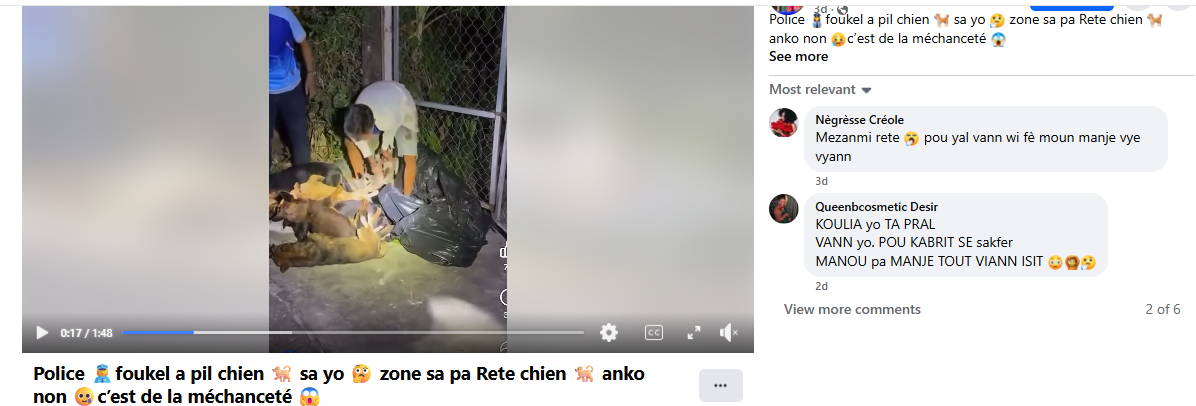

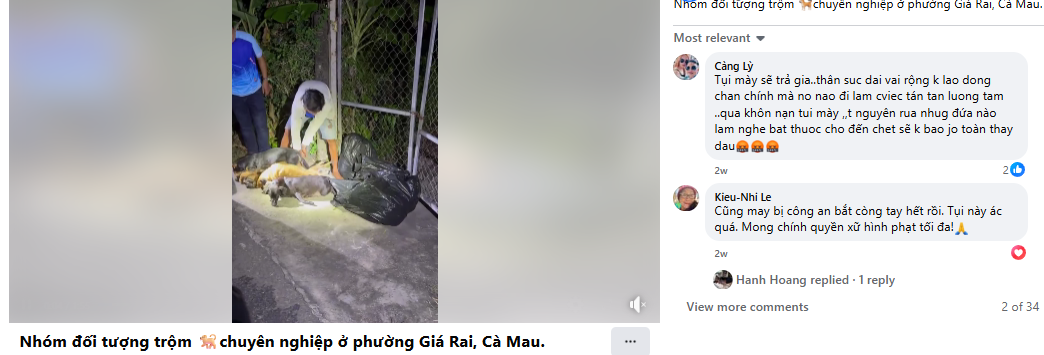

After conducting a reverse image search using a keyframe from the viral video, we found similar videos circulating on Facebook. Upon analyzing the language used in one of the posts, it appears to be Haitian Creole (Kreyòl Ayisyen), which is primarily spoken in Haiti. Another similar video was also found on Facebook, where the language used is Vietnamese, suggesting that the post associates the incident with Vietnam.

However, it is important to note that while these posts point towards different locations, the exact origin of the video cannot be independently verified. What can be established with certainty is that the video is not from Delhi NCR, India, as is being claimed. Therefore, the viral claim is misleading and lacks authenticity.

Conclusion:

The viral claim linking the Supreme Court’s August 11, 2025 order on stray dogs to mass culling in Delhi NCR is false and misleading. Reverse image search confirms the video originated outside India, with evidence of Haitian Creole and Vietnamese captions. While the exact source remains unverified, it is clear the video is not from Delhi NCR and has no relation to the Court’s directive. Hence, the claim lacks credibility and authenticity.

Claim: Viral fake claim of Delhi Authority culling dogs after the Supreme Court directive on the ban of stray dogs as on 11th August 2025

Claimed On: Social Media

Fact Check: False and Misleading

Related Blogs

A video purportedly showing Rashtriya Swayamsevak Sangh (RSS) chief Mohan Bhagwat making remarks about the “saffronisation” of the Indian Army has been widely circulated on social media. The clip claims that Bhagwat called for the removal of non-Hindus from the armed forces and linked the issue to future political leadership changes in the country.

Claim

However, a verification by the Cyber Peace Foundation has established that the video is misleading and has been digitally manipulated.

In the video, Bhagwat is allegedly heard saying that unless more than 50 percent of non-Hindus are removed from the Indian Army by 2028, Prime Minister Narendra Modi would be replaced by Uttar Pradesh Chief Minister Yogi Adityanath. The clip further attributes another statement to him, suggesting that he would resign if the Prime Minister were to demand Nitish Kumar’s resignation.

By the time of publication, the video had been viewed over 7,000 times. (Link, Archive Link, Screenshot)

Fact Check:

The reverse image search also directed the Desk to a video uploaded on CNN-News18’s official YouTube channel on December 21, 2025. The footage was found to be a longer version of the viral clip and was recorded at the RSS centenary event held in Kolkata on the same date. A comparison of both videos confirmed that the background visuals, stage setup and camera angles were identical.

However, a careful review of the original CNN-News18 video revealed that Mohan Bhagwat did not make any of the statements attributed to him in the viral clip.

In his original address, Bhagwat spoke about unity and referred to concerns over increasing atrocities against Hindus in Bangladesh. He made no reference to the Indian Army, nor did he comment on its composition or alleged saffronisation. Here is the link to the original video, along with a screenshot: https://www.youtube.com/watch?v=KnsAUGfBQBk&t=1s

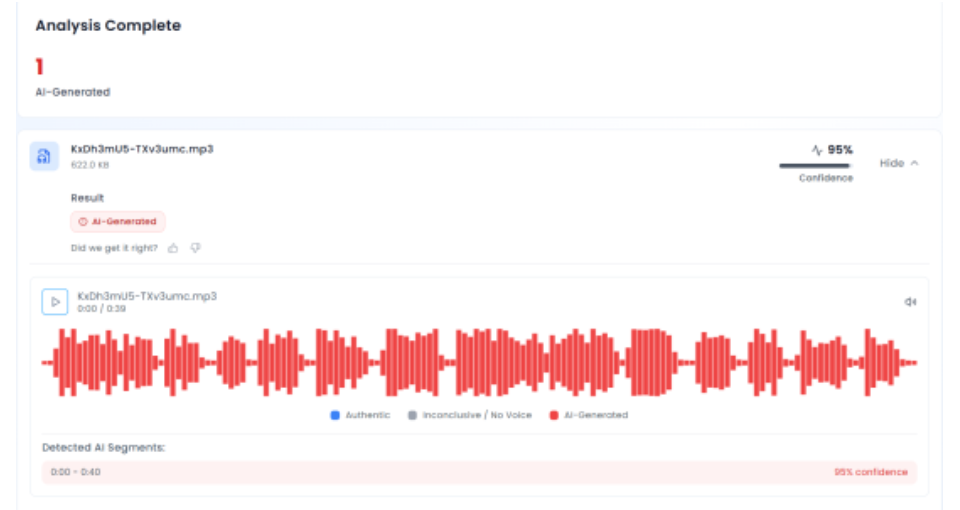

In the next phase of the investigation, the audio track from the viral video was extracted and analysed using the AI audio detection tool Aurigin. The tool’s assessment indicated that the voice heard in the clip was artificially generated, confirming that the audio did not originate from the original speech.

Conclusion

The claim that RSS chief Mohan Bhagwat called for the saffronisation of the Indian Army is false. PTI Fact Check found that the viral video was digitally manipulated, using genuine footage from an RSS centenary event but pairing it with an AI-generated audio track. The altered video was shared online to mislead viewers by falsely attributing statements Bhagwat never made.

Introduction

We inhabit an era in which digital connectivity, while empowering, has also unleashed a relentless tide of cyber vulnerabilities. Personal privacy is constantly threatened, and crimes like sextortion exemplify the sinister side of our hyperconnected world. Social media platforms, instant messaging apps, and digital content-sharing tools have all grown rapidly, changing how people communicate with one another and making it harder to distinguish between the private and public domains. The price of this unparalleled convenience is the rise of sophisticated cybercrimes that use the very tools meant to connect us. Sextortion, a portmanteau of “sex” and “extortion”, stands out among them as a particularly pernicious kind of internet exploitation. Under the threat of having their private information, photos, or videos disclosed, people are forced to engage in sexual acts or provide intimate content. Sextortion’s psychological component is what makes it particularly harmful: it feeds on social stigma, shame, and fear, which discourage victims from reporting the crime and perpetuate the cycle of victimisation and silence. This cybercrime targets vulnerable people from all socioeconomic backgrounds and is not limited by age, gender, or location.

The Economy of Shame: Sextortion as a Cybercrime Industry

A news report from June 03, 2025, describes a sextortion racket busted in Delhi, where different teams of the Crime Branch identified a money trail of over Rs. 5 crore. The investigation uncovered a sophisticated cybercrime chain spanning synthetic financial identities, sextortion and other cyber frauds. To believe this is an aberration is to overlook the reality that it is symptomatic of a much wider and largely uncharted criminal framework. According to the FBI’s 2024 IC3 report, “extortion (including sextortion)” has skyrocketed to 86,415 complaints, with losses of $143 million reported in the United States (US) alone. This indicates that coercive image-based threats are no longer an isolated cybercrime but an everyday occurrence; sextortion has metamorphosed into a systematic, industrialised criminal enterprise. Another news report, dated 19th July, 2025, states that Delhi Police detained four people suspected of participating in a sextortion scheme that targeted a resident of the Bhagwanpur Khera neighbourhood of Shahdara. The suspects were allegedly arrested on a complaint from a victim who had been manipulated through a dating site.

The threat is amplified by the use of deepfake technology, which allows offenders to create obscene content that looks believable. The approach relies on the stigma attached to sexual imagery in conservative societies like India: victims frequently give in to demands out of fear of damage to their reputations. The combination of cybercrime and cutting-edge technology highlights the lopsided power that criminals possess, leaving victims defenceless and law enforcement unable to keep up.

Legal Remedies and the Evolving Battle Against Sextortion

Given the complexity of these crimes, India has recognised sextortion and similar cyber-enabled financial crimes under a number of legal frameworks. The introduction of specific provisions such as Section 111 of the Bharatiya Nyaya Sanhita (BNS), 2023, signals a shift towards recognising cyber-enabled sexual exploitation as an organised criminal business: the section treats organised cybercrime, including extortion and fraud falling within its expansive interpretation, as a serious offence. Similarly, Section 318(2) criminalises cheating with a maximum sentence of three years in prison or a fine, whereas Section 336(2) makes digital forgery a crime with a maximum sentence of two years in prison or a fine. In addition to these provisions, cheating by personation using computer resources is punishable under Section 66D of the Information Technology Act, 2000, which carries a maximum sentence of three years in prison and a maximum fine of Rs. 1 lakh. Even with these statutory provisions, enforcement remains inconsistent owing to problems of attribution, cross-border jurisdiction, and the elusive nature of digital evidence.

The government and its agencies recognise that laws achieve real impact only when backed by awareness initiatives and accessible, localised mechanisms for redressal. Several Indian states and the Department of Telecommunications have launched numerous campaigns to educate the public about identity theft, financial fraud, and cyber scams, and to help people safeguard their mobile communication assets against them. Initiatives such as the Cyber Saathi Initiative and Cyber Dost by the MHA aim to improve forensic and victim reporting skills.

Conclusion

At CyberPeace, we understand that the best defence against online abuse is prevention. Our goal is to provide people with the information and resources to identify, avoid and report sextortion attempts, including the CyberPeace Helpline, and to organise awareness campaigns on safe digital habits. To keep pace with this constantly looming danger, our research and policy advocacy also focus on developing more robust legal and technological safeguards.

To every reader: think before you share, secure your accounts, and never let shame silence you. If you or someone you know becomes a victim, report it immediately. Help is available, and justice is possible. Together we can reclaim the internet as a space of trust, not terror.

References

- https://www.hindustantimes.com/india-news/delhi-police-busts-sextortion-cyberfraud-rackets-6-held-101748959601825.html

- https://timesofindia.indiatimes.com/city/delhi/delhi-police-arrests-four-for-sextortion-and-blackmail-in-shahdara/articleshow/122767656.cms

- https://cdn.ncw.gov.in/wp-content/uploads/2025/05/CyberSaheli.pdf

I. Introduction: Reasons Why These Amendments Have Been Suggested

The proposed changes to the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, are a much-needed regulatory response to the rapid emergence of synthetic information and deepfakes. These reforms stem from a pressing need to govern risks within the digital ecosystem rather than from routine revision.

The Emergence of the Digital Menace

In recent years, generative AI tools have made it easy to produce highly realistic images, videos, audio, and text. Such artificial media have been abused to portray people in situations they were never in or making statements they never made. The generative AI market is expected to grow at a compound annual growth rate (CAGR) of 37.57% from 2025 to 2031, resulting in a market volume of US$400.00 bn by 2031. Therefore, tight regulatory controls are necessary to curb widespread harm in the Indian digital world.
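To make the growth figure concrete, the short sketch below shows how a CAGR relates a starting value to a final value. It assumes six annual compounding periods between 2025 and 2031; the function names are illustrative, and the implied 2025 value is derived from the two quoted numbers rather than taken from the cited source.

```python
def implied_base(final_value: float, cagr: float, years: int) -> float:
    """Back out the starting value implied by a final value and a CAGR."""
    return final_value / (1.0 + cagr) ** years


def project(base: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return base * (1.0 + cagr) ** years


# Figures quoted in the text: 37.57% CAGR, US$400.00 bn in 2031.
# Assumption: six compounding periods (2025 -> 2031).
CAGR = 0.3757
YEARS = 2031 - 2025

base_2025 = implied_base(400.0, CAGR, YEARS)
print(f"Implied 2025 market volume: about US${base_2025:.0f} bn")
```

Under these assumptions the quoted figures imply a 2025 market volume in the region of US$59 bn, which means the market would grow almost sevenfold over the period.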

The Gap in Law and Institution

Nothing in the IT Rules, 2021, clearly addressed synthetic content. Although the Information Technology Act, 2000, dealt with identity theft, impersonation and violation of privacy, intermediaries had no explicit obligations regarding artificial media. This left a loophole in enforcement, particularly since AI-generated content could slip past older moderation systems. The amendments bring India closer to international standards, including the EU AI Act, which requires transparency and labelling of AI-generated content. India adopts such requirements while adapting them to local constitutional and digital ecosystem needs.

II. Explanation of the Amendments

The 2025 amendments introduce five distinct changes to the current IT Rules framework, which address various areas of synthetic media regulation.

A. Definitional Clarification: Introduction of “Synthetically Generated Information”

Rule 2(1)(wa) Amendment:

The amendments provide a comprehensive definition of “synthetically generated information”: information that is created, generated, modified or altered using a computer resource in a way that it can reasonably be perceived to be genuine. This definition is intentionally broad. It is not limited to deepfakes in the strict sense but extends to any artificial media that has undergone algorithmic manipulation to give it a semblance of authenticity.

Expansion of Legal Scope:

Rule 2(1A) also makes it clear that any reference to information in the context of unlawful acts, including the categories listed in Rule 3(1)(b), Rule 3(1)(d), Rule 4(2), and Rule 4(4), should be understood to include synthetically generated information. This is a pivotal interpretative safeguard: it prevents intermediaries from claiming that synthetic versions of illegal material fall outside the regulation because they are algorithmic creations rather than depictions of what actually occurred.

B. Safe Harbour Protection and Content Removal Requirements

Rule 3(1)(b) Amendment: Safe Harbour Clarification:

The amendments add a proviso to Rule 3(1)(b) clarifying that the removal or disabling of access to synthetically generated information (or any information falling within the specified categories), carried out by an intermediary in good faith as part of reasonable efforts or on receipt of a complaint, shall not be considered a breach of Section 79(2)(a) or (b) of the Information Technology Act, 2000. This protection matters because it shields intermediaries from liability when they remove synthetic content before a court order or government notification.

C. Mandatory Labelling and Metadata Requirements for Intermediaries That Enable the Creation of Synthetic Content

The amendments establish a new due diligence framework in Rule 3(3) for intermediaries that offer tools to create, generate, modify, or alter synthetically generated information. Two fundamental requirements are laid down.

- (a) The generated information must be prominently labelled or embedded with a permanent, unique metadata or identifier. The label or metadata must:

  - Be visibly displayed or made audible in a prominent manner on or within that synthetically generated information.

  - Cover at least 10% of the surface area of the visual display or, in the case of audio content, be present during the initial 10% of its duration.

  - Allow immediate identification that such information is synthetically generated information which has been created, generated, modified, or altered using the computer resource of the intermediary.

- (b) The intermediary in clause (a) shall not enable modification, suppression or removal of such label, permanent unique metadata or identifier, by whatever name called.
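The 10% thresholds above can be illustrated with a small calculation. The sketch below is only an illustration of the arithmetic, not an official compliance test: the function names are hypothetical, and it assumes that "surface" means pixel area of the frame and that a rectangular label is used.

```python
def min_label_area_px(width_px: int, height_px: int, percent: int = 10) -> int:
    """Smallest label area (in pixels) covering `percent` of the frame.

    Integer ceiling division avoids floating-point rounding surprises.
    """
    return (width_px * height_px * percent + 99) // 100


def label_covers_enough(label_w: int, label_h: int,
                        width_px: int, height_px: int,
                        percent: int = 10) -> bool:
    """Check whether a rectangular label meets the area threshold."""
    return label_w * label_h * 100 >= percent * width_px * height_px


def audio_label_window_s(duration_s: float, percent: int = 10) -> tuple:
    """Time window (start, end) in seconds for the initial `percent` of a clip."""
    return (0.0, duration_s * percent / 100)


# Example: a 1920x1080 frame and a 60-second audio clip.
print(min_label_area_px(1920, 1080))              # 207360
print(label_covers_enough(1920, 108, 1920, 1080))  # True: full-width banner, 108 px tall
print(label_covers_enough(1920, 100, 1920, 1080))  # False: banner is too thin
print(audio_label_window_s(60))                    # (0.0, 6.0)
```

On a 1920x1080 frame, for instance, a full-width banner would need to be at least 108 pixels tall, and a 60-second audio clip would carry its disclosure within the first 6 seconds.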

D. Significant Social Media Intermediaries: Pre-Publication Verification Responsibilities

Under Rule 4(1A), the amendments introduce a three-step verification mechanism for Significant Social Media Intermediaries (SSMIs). An SSMI that enables information to be displayed, uploaded or published on its computer resource must complete three steps before such display, uploading, or publication.

Step 1- User Declaration: The SSMI must require users to declare whether the material they are posting is synthetically generated. This places the initial burden on users.

Step 2- Technical Verification: To check that the user's declaration is accurate, SSMIs need to deploy reasonable technical measures, such as automated tools or other appropriate mechanisms. This duty is contextual and depends on the nature, format and source of the content. It recognises that not every type of content can be verified to the same standard, without allowing intermediaries to escape the obligation altogether.

Step 3- Prominent Labelling: Where the synthetic origin is confirmed by user declaration or technical verification, SSMIs must ensure that a notice or label is prominently displayed to users, and this must be in place before publication.
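The three steps can be sketched as a simple pre-publication check. This is a minimal illustration under stated assumptions, not a reference implementation of the rule: the `Upload` and `Decision` types, the label text, and the `detector` callable (standing in for whatever technical measure a platform actually uses) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Upload:
    content: bytes
    user_declared_synthetic: bool  # Step 1: declaration collected from the user


@dataclass
class Decision:
    synthetic: bool
    label: Optional[str]  # Label to display prominently, if any
    basis: str            # Which step established the synthetic origin


def review_before_publication(upload: Upload,
                              detector: Callable[[bytes], bool]) -> Decision:
    """Run the three-step check before content is published."""
    # Step 1: user declaration.
    declared = upload.user_declared_synthetic
    # Step 2: technical verification (hypothetical detector supplied by caller).
    detected = detector(upload.content)
    # Step 3: label if either step indicates synthetic origin.
    if declared or detected:
        basis = "user declaration" if declared else "technical verification"
        return Decision(True, "Synthetically generated", basis)
    return Decision(False, None, "no indication of synthetic origin")


# Hypothetical stand-in detector: flags content carrying a made-up marker.
def toy_detector(content: bytes) -> bool:
    return content.startswith(b"SYN")


print(review_before_publication(Upload(b"SYN-clip", False), toy_detector))
print(review_before_publication(Upload(b"holiday", True), toy_detector))
print(review_before_publication(Upload(b"holiday", False), toy_detector))
```

Running the detector even when the user has already declared the content synthetic is a design choice in this sketch; a platform could equally skip the second step in that case, since the declaration alone triggers labelling.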

The amendments strengthen accountability by providing that an intermediary will be deemed to have failed in its due diligence where it is established that it knowingly permitted, promoted or otherwise failed to act on synthetically generated information in contravention of these requirements. This introduces a knowledge element, and intermediaries cannot excuse non-compliance as accidental error.

An explanation clause makes it clear that SSMIs must also take reasonable and proportionate technical measures to verify user declarations and to ensure that no synthetic content is published without adequate declaration or labelling. This removes any confusion about the role of intermediaries with respect to user declarations.

III. Attributes of the Amendment Framework

- Precision in Balancing Innovation and Accountability.

The amendments commendably steer between two extreme regulatory postures, neither prohibiting synthetic media nor allowing it to run unchecked. They recognise the legitimate use of synthetic media in entertainment, education, research and artistic expression by adopting a transparency and traceability mandate that preserves innovation while ensuring accountability.

- Overt Acceptance of the Intermediary Liability and Reverse Onus of Knowledge

Rule 4(1A) contains a highly significant deeming provision: where an intermediary permits synthetic content, or refrains from acting on it, knowing that the rules are being violated, it will be considered to have failed to comply with the due diligence provisions. This closes the loophole of wilful blindness, under which intermediaries could argue that they were unaware of violations. The scienter standard encourages meaningful investment in detection tools and moderation mechanisms, while protecting platforms that maintain sound systems even though their tools may occasionally fail to catch violations.

- Clarity Through Definition and Interpretive Guidance

The careful definition of the term “synthetically generated information” and the guidance provided in Rule 2(1A) are an admirable attempt to resolve confusion in the previous regulatory framework. Instead of requiring parties to work through conflicting case law or regulatory direction, the amendments set specific definitional limits. The purposefully broad formulation (artificially or algorithmically created, generated, modified or altered) ensures that the framework cannot be evaded through semantic games over what counts as genuinely synthetic content versus a slight algorithmic alteration.

- Protection from Liability That Encourages Proactive Moderation

The safe harbour clarification in the Rule 3(1)(b) amendment clearly safeguards intermediaries who voluntarily remove synthetic content without a court order or government notification. It is an important incentive that prompts platforms to implement sound self-regulation measures. In the absence of such protection, platforms might rationally choose to remain passively compliant, deleting content only under pressure from an external authority, which would make them less effective at keeping users safe from dangerous synthetic media.

IV. Conclusion

The proposed 2025 amendments to the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules offer a structured, transparent, and accountable approach to curbing the growing problems of synthetic media and deepfakes. The amendments address long-standing regulatory and interpretative gaps concerning what counts as synthetically generated information, the liabilities of intermediaries, and mandatory labelling and metadata requirements. Safe-harbour protection will encourage proactive moderation, and a scienter-based liability rule will prevent intermediaries from escaping liability when they are aware of non-compliance but tolerate it. The introduction of pre-publication verification for Significant Social Media Intermediaries places responsibility on users and due diligence obligations on platforms. Overall, the amendments strike a reasonable balance between innovation and regulation, bring clarity through precise definitions, promote responsible conduct by platforms, and position India to set new standards in synthetic media regulation. Together, they strengthen the authenticity, user protection, and transparency of India's digital ecosystem.

V. References

2. https://www.statista.com/outlook/tmo/artificial-intelligence/generative-ai/worldwide