#FactCheck - MS Dhoni Sculpture Falsely Portrayed as Chanakya 3D Recreation

Executive Summary:

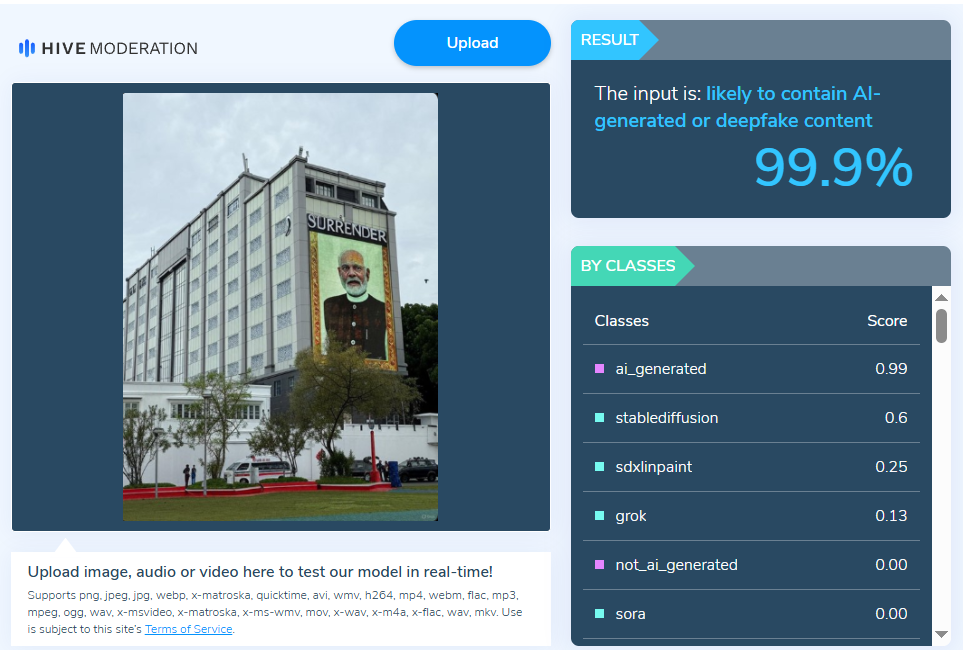

A widely shared social media post claims that a 3D model of Chanakya, supposedly made by Magadha DS University, resembles MS Dhoni. However, our fact check reveals that it is a 3D model of MS Dhoni, not Chanakya. The model was created by artist Ankur Khatri, and we found no evidence that a Magadha DS University exists. Khatri uploaded the model to ArtStation, calling it an “MS Dhoni likeness study”.

Claims:

The image is being shared with the claim that it is a 3D rendering of the ancient philosopher Chanakya created by Magadha DS University. Users have pointed out its striking resemblance to Indian cricketer MS Dhoni.

Fact Check:

After receiving the post, we ran a reverse image search on the image. This led us to the portfolio of a freelance character artist named Ankur Khatri. The viral image appears there under the title “MS Dhoni likeness study”, alongside several of his other character models.

Next, we searched for the university named in the claim, Magadha DS University, but found no institution by that name. The closest match is Magadh University, located in Bodh Gaya, Bihar. We searched the internet for any such model made by Magadh University and found nothing. We then examined the artist's online profiles and found that he has a dedicated Instagram account, where he posted a detailed video of the creative process behind the MS Dhoni character model.

We concluded that the viral image is not a reconstruction of the Indian philosopher Chanakya but a likeness of cricketer MS Dhoni, created by artist Ankur Khatri and not by any university named Magadha DS.

Conclusion:

The viral claim that the 3D model is a recreation of the ancient philosopher Chanakya by a university called Magadha DS University is False and Misleading. In reality, the model is a digital artwork of former Indian cricket captain MS Dhoni, created by artist Ankur Khatri. There is no evidence that a Magadha DS University exists. A university named Magadh University does exist in Bodh Gaya, Bihar, but despite the similar name, we found no evidence of its involvement in the model's creation. Therefore, the claim is debunked, and the image is confirmed to be a depiction of MS Dhoni, not Chanakya.