#FactCheck: Old Video of Benjamin Netanyahu Running in Knesset Falsely Linked to Iran-Israel Tensions

Executive Summary

Amid the ongoing tensions between the United States, Israel, and Iran, a video circulating on social media claims to show Israeli Prime Minister Benjamin Netanyahu running after Iran launched an attack on Israel. However, research by CyberPeace found the viral claim to be misleading. The video has no connection with the current tensions between the United States, Israel, and Iran. In reality, the clip dates back to 2021, when Netanyahu, having arrived late, rushed through Israel's parliament to cast his vote.

Claim:

On the social media platform X (formerly Twitter), a user shared the video on March 5, 2026, claiming that Netanyahu had fled and gone into hiding due to fear of Iran. The post included inflammatory remarks suggesting that Iran had demonstrated its power and that Netanyahu had abandoned his country out of fear.

Fact Check

To verify the authenticity of the video, we extracted several keyframes and ran a reverse image search on Google. This led us to the same video on Benjamin Netanyahu's official X account, posted on December 14, 2021. In the post, Netanyahu wrote in Hebrew, which translates to: “I am always proud to run for you. Photographed half an hour ago in the Knesset.”

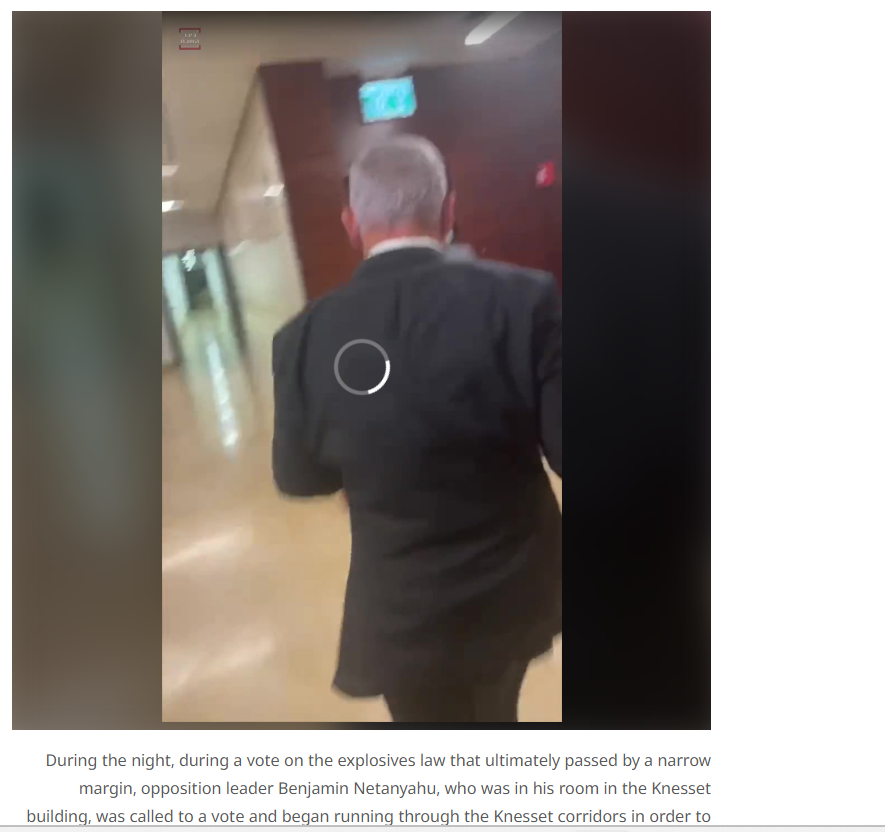
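The keyframe comparison step can be illustrated with a perceptual hash. Below is a minimal average-hash (aHash) sketch in plain Python, assuming each keyframe has already been extracted, converted to grayscale, and downscaled to an 8x8 grid (an image library would normally handle that). The function names, the 5-bit threshold, and the synthetic frames are illustrative assumptions; this is not how Google's reverse image search works, nor the exact procedure used in this fact-check.

```python
from typing import List

# Assumption: a keyframe is a grayscale grid (0-255), already downscaled to 8x8
Grid = List[List[int]]


def average_hash(gray: Grid) -> int:
    """Average hash: one bit per pixel, set when the pixel is brighter than the frame's mean."""
    pixels = [p for row in gray for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def likely_same_frame(a: Grid, b: Grid, threshold: int = 5) -> bool:
    """Hypothetical rule: treat frames as matching if at most `threshold` bits differ."""
    return hamming_distance(average_hash(a), average_hash(b)) <= threshold


# Synthetic example: a "viral" keyframe, a slightly brightened re-upload, and an unrelated frame
viral = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
reupload = [[min(255, p + 3) for p in row] for row in viral]
unrelated = [[252 - p for p in row] for row in viral]

print(hamming_distance(average_hash(viral), average_hash(reupload)))   # 0
print(hamming_distance(average_hash(viral), average_hash(unrelated)))  # 64
```

Because each pixel is compared with the frame's own mean, a uniform brightness shift from re-encoding leaves the hash unchanged, while a genuinely different scene flips many bits.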

Further research also led us to a Hebrew news website that had published the same video.

According to the report, voting in the Knesset (Israel’s parliament) continued throughout the night, and an explosives-related bill was passed by a very narrow margin. At the time, opposition leader Benjamin Netanyahu was in his room inside the Knesset building. When he was called for the vote, he hurried through the parliament corridors to reach the chamber in time to cast his vote.

Conclusion:

Our research found that the viral video is unrelated to the ongoing tensions involving the United States, Israel, and Iran. The footage is from 2021 and shows Benjamin Netanyahu rushing inside the Knesset to participate in a parliamentary vote after being called in at the last moment.

Related Blogs

Introduction

Artificial Intelligence (AI) is rapidly reshaping our digital future, transforming healthcare, finance, education, and cybersecurity. But alongside its benefits, bad actors are weaponising the technology. State-sponsored cyber actors are increasingly misusing AI tools such as ChatGPT and other generative models to automate disinformation, enable cyberattacks, and accelerate social engineering operations. This write-up explores how and why AI, in the form of large language models (LLMs), is being exploited in cyber operations linked to adversarial states, and why international vigilance, regulation, and AI safety guidelines are needed.

The Shift: AI as a Cyber Weapon

State-sponsored threat actors are misusing tools such as ChatGPT to turbocharge their cyber arsenal.

- Phishing Campaigns Using AI: Generative AI makes it easy to produce convincing, grammatically correct phishing emails. Unlike the poorly written scams of the past, these AI-generated messages can be tailored to the victim's location, language, and professional background, which can considerably increase the attack success rate. Example: OpenAI and Microsoft have reported that Russian and North Korean APTs used LLMs to create customised phishing lures and notes on malware obfuscation.

- Malware Obfuscation and Script Generation: Cyber attackers may use Large Language Models (LLMs) such as ChatGPT to help write, debug, and camouflage malicious scripts. While most AI tools include safety mechanisms to guard against abuse, threat actors often resort to "jailbreaking" to evade these protections. Once such constraints are bypassed, a model could be used to help develop polymorphic malware, which changes its code structure to avoid detection, or to obfuscate PowerShell or Python scripts so that conventional antivirus software struggles to identify them. LLMs have also been used to suggest techniques for installing backdoors, further facilitating stealthy access to compromised systems.

- Disinformation and Narrative Manipulation: State-sponsored cyber actors increasingly use AI to scale up and automate disinformation operations, especially around elections, protests, and geopolitical disputes. With the help of LLMs, these actors can produce large volumes of fake news stories, deepfake interview transcripts, imitation social media posts, and bogus public comments on online forums and petitions. Localisation makes this strategy especially dangerous: messages are written with cultural and linguistic specificity, which makes them credible and harder to detect. The ultimate aim is to sow social unrest, manipulate public sentiment, and erode trust in democratic institutions.

Disrupting Malicious Uses of AI – OpenAI Report (June 2025)

OpenAI's threat intelligence report "Disrupting Malicious Uses of AI" (June 2025), together with earlier reporting such as Microsoft's "Staying ahead of threat actors in the age of AI" (published in collaboration with OpenAI in February 2024), outlined how state-affiliated actors had been testing and misusing language models for malicious purposes. The reporting named several advanced persistent threat (APT) groups, each attributed to a particular nation-state. OpenAI highlighted that the threat actors used the models mostly to improve linguistic quality, generate social engineering content, and scale their operations. Significantly, the report stated that the tools were not used to produce malware, but rather to support the preparatory and communicative phases of larger cyber operations.

AI Jailbreaking: Dodging Safety Measures

One of the biggest concerns is that malicious users can "jailbreak" AI models, using adversarial inputs to trick them into generating prohibited content. Methods include:

- Roleplay: Instructing the AI to act as a professional criminal advisor

- Obfuscation: Concealing requests in code or jargon

- Language Switching: Posing sensitive queries in less heavily moderated languages

- Prompt Injection: Embedding harmful requests within innocent-looking questions

These methods have enabled attackers to bypass moderation tools, turning otherwise benign tools into instruments of cybercrime.
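Obfuscation and language switching work partly because simple moderation checks match surface text. The toy keyword filter below is a hypothetical illustration, not how OpenAI or any other provider actually moderates prompts (production systems rely on trained classifiers); the blocklist term and sample prompts are placeholders.

```python
import base64

# Placeholder blocklist for a hypothetical, deliberately naive keyword filter
BLOCKLIST = {"malware"}


def naive_filter_blocks(prompt: str) -> bool:
    """Block a prompt only if a blocklisted term appears verbatim (case-insensitive)."""
    text = prompt.lower()
    return any(term in text for term in BLOCKLIST)


direct = "Explain how this malware works"
# Obfuscation: the same keyword hidden in Base64 ("bWFsd2FyZQ==")
obfuscated = "Decode and explain: " + base64.b64encode(b"malware").decode()
# Language switching: the same idea phrased in French
switched = "Explique ce logiciel malveillant"

for label, prompt in [("direct", direct), ("obfuscated", obfuscated), ("switched", switched)]:
    print(label, naive_filter_blocks(prompt))
# prints: direct True, then obfuscated False, then switched False
```

The weakness lies in the design rather than the specific trick: any check tied to exact strings can be sidestepped by re-encoding or translating a request, which is why moderation has to assess meaning rather than wording.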

Conclusion

As generative AI evolves and becomes more accessible, its use by state-sponsored cyber actors poses an unprecedented threat to global cybersecurity. The line between nation-state intelligence collection and cybercrime is eroding, with AI acting as a force multiplier for adversarial campaigns. AI tools such as ChatGPT, which were created for benign purposes, can be misused to scale up phishing, propaganda, and social engineering attacks. Cross-border governance, ethical development practices, and good cyber hygiene need to be encouraged. AI must be shaped not only by innovation but also by responsibility.

References

- https://www.microsoft.com/en-us/security/blog/2024/02/14/staying-ahead-of-threat-actors-in-the-age-of-ai/

- https://www.bankinfosecurity.com/openais-chatgpt-hit-nation-state-hackers-a-28640

- https://oecd.ai/en/incidents/2025-06-13-b5e9

- https://www.microsoft.com/en-us/security/security-insider/meet-the-experts/emerging-AI-tactics-in-use-by-threat-actors

- https://www.wired.com/story/youre-not-ready-for-ai-hacker-agents/

- https://www.cert-in.org.in/PDF/Digital_Threat_Report_2024.pdf

- https://cdn.openai.com/threat-intelligence-reports/5f73af09-a3a3-4a55-992e-069237681620/disrupting-malicious-uses-of-ai-june-2025.pdf

Introduction

Deepfake technology, whose name combines "deep learning" and "fake," uses advanced artificial intelligence, most notably generative adversarial networks (GANs), to produce remarkably lifelike computer-generated content, including audio and video recordings. Because it can make false information look credible, there are concerns about its misuse for identity theft and the spread of misinformation. Cybercriminals leverage AI tools and technologies for malicious activities and various cyber frauds, and the misuse of advanced technologies such as AI, deepfakes, and voice cloning has given rise to new cyber threats.

India: A Top Target for Deepfake Attacks

According to the 2023 Identity Fraud Report by Sumsub, a well-known digital identity verification company headquartered in the UK, India, Bangladesh, and Pakistan have become important players in the Asia-Pacific identity fraud landscape, with India's fraud rate growing by 2.99% from 2022 to 2023. These countries are among the ten nations most affected by deepfake technology. The report also found that deepfakes are being used in a significant number of cybercrimes, a trend expected to continue into the coming year. This highlights the need for greater cybersecurity awareness and stronger safeguards as identity fraud becomes a growing concern in the region.

How Deepfakes Work

Deepfakes are a fascinating and worrying phenomenon of the modern digital landscape. These realistic-looking but wholly artificial videos have become increasingly common in recent months and are now woven into the fabric of our digital lives. Their appeal is hard to resist, and their consequences are enormous.

Deep Learning Algorithms

Deepfake systems use deep learning techniques, especially Generative Adversarial Networks (GANs), to analyse large datasets, often images or videos of a target person. By learning from the person's gestures, speech patterns, and facial expressions, these algorithms extract the features needed to mimic them, and generative models then create material that blends seamlessly into the target context. The misuse of this technology, including the spread of false information, is a growing concern, and as deepfake capabilities improve, sophisticated detection techniques are increasingly needed to separate real content from manipulated content.

Generative Adversarial Networks

Many deepfake systems are built on GANs, which use a dual-network design: a generator and a discriminator locked in a continuous cycle of competition. The generator tries to create fake material, such as realistic voice patterns or facial expressions, while the discriminator assesses how authentic that material is. As the discriminator becomes more perceptive, the generator adapts to produce increasingly convincing content, so the quality of the deepfakes improves steadily over time.
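The generator-discriminator competition described above is usually formalised as a two-player minimax game. In the original GAN formulation by Goodfellow et al. (2014), where D(x) is the discriminator's estimated probability that a sample x is real and G(z) is the sample the generator produces from random noise z, the objective is:

```latex
\min_G \max_D V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
```

The discriminator maximises V by scoring real samples close to 1 and generated ones close to 0, while the generator minimises V by producing samples that the discriminator scores as real. Specific deepfake tools may use variants of this objective or different architectures altogether.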

Effect on Society

The widespread use of deepfake technology has serious ramifications for several industries. As the technology develops, immediate action is required to manage its effects and promote its ethical use, including strict laws and technological safeguards. In politics, deepfakes can fabricate statements or videos of prominent politicians, a serious issue because it can spread instability and make it difficult for the public to understand what is really happening. In the entertainment industry, deepfake technology can generate entirely new characters or bring deceased stars back for posthumous roles. And as it becomes harder to tell fake content from authentic content, it becomes easier for hackers to deceive people and businesses.

Ongoing Deepfake Attacks in India

Deepfake videos continue to target popular celebrities, and Priyanka Chopra is the most recent victim of this unsettling trend. Her deepfake takes a different approach from earlier cases involving actresses such as Rashmika Mandanna, Katrina Kaif, Kajol, and Alia Bhatt. Rather than placing her face in compromising situations, the misleading video keeps her appearance unchanged but alters her voice, replacing genuine interview quotes with fabricated promotional lines. In the deceptive video, Priyanka appears to promote a product and discuss her annual salary, highlighting how deepfake technology is evolving and its potential impact on public figures.

Actions Considered by Authorities

A public interest litigation (PIL) was filed in the Delhi High Court seeking to block access to websites that produce deepfakes. The petitioner's counsel argued that the government should at least establish guidelines to hold individuals accountable for misusing deepfake and AI technology. He also proposed that websites be required to label AI-generated content as such and be prevented from generating illegal content. A division bench highlighted the complexity of the problem and asked the Centre to arrive at a balanced solution that does not infringe the right to freedom of speech and expression online.

Information Technology Minister Ashwini Vaishnaw stated that the government would introduce new laws and guidelines to curb the spread of deepfake content. He chaired a meeting with social media companies to discuss the problem of deepfakes. "We will begin drafting regulation immediately, and soon, we are going to have a fresh set of regulations for deepfakes. This might come in the way of amending the current framework or ushering in new rules, or a new law," he stated.

Prevention and Detection Techniques

To combat the growing threat posed by the misuse of deepfake technology, individuals and institutions should prioritise developing critical thinking skills, carefully examining visual and audio cues for inconsistencies, using tools such as reverse image search, keeping up with the latest deepfake trends, and rigorously fact-checking content against reputable media sources. Other important steps to strengthen resilience against deepfake threats include putting strong security policies in place, deploying cutting-edge deepfake detection technologies, supporting the development of ethical AI, and encouraging open communication and cooperation. By combining these tactics and adapting to the constantly changing landscape, we can work together to manage the problems posed by deepfake technology effectively and mindfully.

Conclusion

Advanced AI-powered deepfake technology produces extraordinarily lifelike computer-generated content, raising both creative and ethical questions. The misuse of deepfakes presents major challenges such as identity theft and the spread of misleading information, as shown by cases in India such as the recent deepfake video involving Priyanka Chopra. Countering this danger requires developing critical thinking skills, using detection strategies such as analysing audio quality and facial expressions, and keeping up with current trends. A comprehensive strategy that combines fact-checking, preventive measures, and awareness-raising is needed to protect against the harmful effects of deepfake technology and to create a truly cyber-safe environment for netizens.

References:

- https://yourstory.com/2023/11/unveiling-deepfake-technology-impact

- https://www.indiatoday.in/movies/celebrities/story/deepfake-alert-priyanka-chopra-falls-prey-after-rashmika-mandanna-katrina-kaif-and-alia-bhatt-2472293-2023-12-05

- https://www.csoonline.com/article/1251094/deepfakes-emerge-as-a-top-security-threat-ahead-of-the-2024-us-election.html

- https://timesofindia.indiatimes.com/city/delhi/hc-unwilling-to-step-in-to-curb-deepfakes-delhi-high-court/articleshow/105739942.cms

- https://www.indiatoday.in/india/story/india-among-top-targets-of-deepfake-identity-fraud-2472241-2023-12-05

- https://sumsub.com/fraud-report-2023/


Introduction

On the precipice of a new domain of existence, the metaverse emerges as a digital cosmos, an expanse where the horizon is not sky, but a limitless scope for innovation and imagination. It is a sophisticated fabric woven from the threads of social interaction, leisure, and an accelerated pace of technological progression. This new reality, a virtual landscape stretching beyond the mundane encumbrances of terrestrial life, heralds an evolutionary leap where the laws of physics yield to the boundless potential inherent in our creativity. Yet, the dawn of such a frontier does not escape the spectre of an age-old adversary—financial crime—the shadow that grows in tandem with newfound opportunity, seeping into the metaverse, where crypto-assets are no longer just an alternative but the currency du jour, dazzling beacons for both legitimate pioneers and shades of illicit intent.

The metaverse, by virtue of its design, is a canvas for the digital repaint of society—a three-dimensional realm where the lines between immersive experiences and entertainment blur, intertwining with surreal intimacy within this virtual microcosm. Donning headsets like armor against the banal, individuals become avatars; digital proxies that acquire the ability to move, speak, and perform an array of actions with an ease unattainable in the physical world. Within this alternative reality, users navigate digital topographies, with experiences ranging from shopping in pixelated arcades to collaborating in virtual offices; from witnessing concerts that defy sensory limitations to constructing abodes and palaces from mere codes and clicks—an act of creation no longer beholden to physicality but to the breadth of one's ingenuity.

The Crypto Assets

The lifeblood of this virtual economy pulsates through crypto-assets. These digital tokens represent value or rights recorded on distributed ledgers, technologies such as blockchain that serve as both a vault and a transparent tapestry, chronicling the path of each digital asset. Hopping onto the carousel of this economy requires a digital wallet: a storeroom and a gateway for acquiring and trading these virtual valuables. Cryptocurrencies and NFTs (non-fungible tokens) have rapidly evolved from obscure digital curios into prized artifacts. According to blockchain analytics firm Elliptic, more than US$100 million worth of NFTs was stolen between July 2021 and July 2022. This rampant theft underlines the allure of these virtual certificates, which, to their holders, do not merely capture art, music, and gaming but embody their very soul.
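To make the idea of a ledger that "chronicles the path" of an asset concrete, here is a toy hash-chained transfer log in Python. It is a deliberately simplified sketch assuming a single append-only log with no consensus, networking, or digital signatures, so it is not how any real blockchain is implemented; the class, method, and field names are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class Block:
    index: int
    sender: str
    recipient: str
    asset_id: str
    prev_hash: str  # digest of the previous block, which links the chain

    def digest(self) -> str:
        # SHA-256 over a canonical (sorted-key) JSON encoding of the block
        payload = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(payload).hexdigest()


class ToyLedger:
    """Hypothetical append-only transfer log; no consensus, signatures, or networking."""

    def __init__(self) -> None:
        self.blocks: List[Block] = []

    def record_transfer(self, sender: str, recipient: str, asset_id: str) -> Block:
        prev_hash = self.blocks[-1].digest() if self.blocks else "0" * 64
        block = Block(len(self.blocks), sender, recipient, asset_id, prev_hash)
        self.blocks.append(block)
        return block

    def is_valid(self) -> bool:
        # Editing any block that has a successor changes its digest and breaks the next link
        return all(
            cur.prev_hash == prev.digest()
            for prev, cur in zip(self.blocks, self.blocks[1:])
        )

    def ownership_path(self, asset_id: str) -> List[str]:
        """Trace every holder of one asset, in order."""
        path: List[str] = []
        for block in self.blocks:
            if block.asset_id == asset_id:
                if not path:
                    path.append(block.sender)
                path.append(block.recipient)
        return path


ledger = ToyLedger()
ledger.record_transfer("minter", "alice", "nft-42")
ledger.record_transfer("alice", "bob", "nft-42")
ledger.record_transfer("bob", "carol", "nft-42")
print(ledger.ownership_path("nft-42"))  # ['minter', 'alice', 'bob', 'carol']
print(ledger.is_valid())                # True

ledger.blocks[0].recipient = "mallory"  # tamper with history
print(ledger.is_valid())                # False
```

In this sketch only blocks with a successor are protected by the check; real blockchains add consensus among many independent nodes so that no single party can quietly rewrite the log.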

Yet, as the metaverse burgeons, so do the complexity and diversity of financial transgressions. From phishing to sophisticated fraud schemes, criminals craft insidious simulacra of legitimate havens, aiming to drain the crypto-assets of the unwary. In the preceding year alone, a daunting US$14 billion worth of crypto-assets vanished into the abyss of deception and duplicity. Social engineering, in particular, emerges from the shadows: a form of digital chicanery that preys not on weaknesses of the system but on the psychological vulnerabilities of its users, with scammers adopting the guise of authenticity to extract trust and assets with Machiavellian precision.

The New Wave of Fincrimes

Extending their tentacles further, cybercriminals exploit code vulnerabilities, engage in wash trading, obscure the trails of money laundering, evade sanctions, and even dare to fund activities that send ripples of terror across the physical and virtual divide. The intricacies of smart contracts and the decentralized nature of these worlds, designed to be bastions of innovation, morph into paths paved for misuse and exploitation. The openness of blockchain transactions, a transparency that should act as a deterrent, becomes a paradox: a double-edged sword for the law enforcement agencies tasked with mapping the networks of faceless adversaries.
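That same transparency is what lets investigators look for patterns such as wash trading in the first place. As a rough, hypothetical illustration, the Python sketch below flags one simple wash-trading signal: an asset that returns to a wallet that held it only a few transfers earlier. The transfer format, function name, and hop limit are assumptions; real analytics firms combine many more signals, and a round trip alone is not proof of wrongdoing.

```python
from typing import Dict, List, Tuple

# (asset_id, seller_wallet, buyer_wallet), assumed to be in chronological order
Transfer = Tuple[str, str, str]


def flag_round_trips(transfers: List[Transfer], max_hops: int = 3) -> Dict[str, List[str]]:
    """Flag assets that return to a wallet that held them within `max_hops` transfers.

    Hypothetical heuristic: it looks only at wallet addresses (no clustering of
    wallets controlled by one person) and ignores prices, timing, and funding links.
    """
    paths: Dict[str, List[str]] = {}
    flagged: Dict[str, List[str]] = {}
    for asset, seller, buyer in transfers:
        path = paths.setdefault(asset, [seller])
        path.append(buyer)
        # Holders from 1 up to max_hops transfers before this buyer
        recent_holders = path[-(max_hops + 1):-1]
        if buyer in recent_holders and asset not in flagged:
            flagged[asset] = list(path)
    return flagged


transfers = [
    ("nft-1", "wallet_a", "wallet_b"),
    ("nft-2", "wallet_x", "wallet_y"),
    ("nft-1", "wallet_b", "wallet_a"),  # nft-1 comes straight back to wallet_a
    ("nft-2", "wallet_y", "wallet_z"),
]
print(flag_round_trips(transfers))
# {'nft-1': ['wallet_a', 'wallet_b', 'wallet_a']}
```

Raising `max_hops` catches longer loops through intermediary wallets at the cost of more false positives from ordinary resales.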

Addressing financial crime in the metaverse is a Herculean labour, requiring an orchestra of harmonious, synchronised efforts, from individual users to mammoth corporations and from astute policymakers to vigilant law enforcement bodies. Users must equip themselves with critical awareness, fortifying their minds against the siren calls that beckon impetuous decisions spurred by the anxiety of falling behind. Enterprises, as the architects and custodians of this digital realm, must collaborate with security specialists to probe their constructs for weak seams and reinforce their bulwarks against the sieges of cyber onslaughts. Policymakers walk a tightrope, balancing the impetus for innovation against the gravitas of robust safeguards, a conundrum playing out on the global stage, as epitomised by the European Union's strides to forge cohesive frameworks that safeguard this new vessel of human endeavour.

The Austrian Example

Consider the case of Austria, where the tapestry of laws entwining crypto-assets spans a gamut of criminal offences, from data breaches to the complex webs of money laundering and the financing of dark enterprises. Users and corporations alike must become cartographers of local legislation, charting their ventures and vigilances within the volatile seas of the metaverse.

Upon the sands of this virtual frontier, we must not forget that the metaverse is more than a hive of bits and bandwidth. It crystallises our collective dreams, echoes our unspoken fears, and reflects the range of our ambitions and failings. It stands as a citadel where the ever-evolving quest for progress should never stray from the compass of ethical pursuit. The cross-pollination of best practices and the solidarity of international collaboration are not simply tactics; they are imperatives engraved with the moral codes of stewardship, guiding us to preserve the unblemished spirit of the metaverse.

Conclusion

The clarion call of the metaverse invites us to venture into its boundless expanse, to savour its gifts of connection and innovation. Yet, on this odyssey through the pixelated constellations, we harness vigilance as our star chart, mindful of the mirage of morality that can obfuscate and lead astray. In our collective pursuit to curtail financial crime, we deploy our most formidable resource—our unity—conjuring a bastion for human ingenuity and integrity. In this, we ensure that the metaverse remains a beacon of awe, safeguarded against the shadows of transgression, and celebrated as a testament to our shared aspiration to venture beyond the realm of the possible, into the extraordinary.

References

- https://www.wolftheiss.com/insights/financial-crime-in-the-metaverse-is-real/

- https://gnet-research.org/2023/08/16/meta-terror-the-threats-and-challenges-of-the-metaverse/

- https://shuftipro.com/blog/the-rising-concern-of-financial-crimes-in-the-metaverse-aml-screening-as-a-solution/