#FactCheck- Delhi Metro Rail Corporation Price Hike

Executive Summary:

Recently, a viral social media post alleged that the Delhi Metro Rail Corporation Ltd. (DMRC) had increased ticket prices following the BJP’s victory in the Delhi Legislative Assembly elections. After thorough research and verification, we found this claim to be misleading and baseless. DMRC has clarified that no fare hike has been announced.

Claim:

Viral social media posts have claimed that the Delhi Metro Rail Corporation Ltd. (DMRC) increased metro fares following the BJP's victory in the Delhi Legislative Assembly elections.

Fact Check:

After thorough research, we conclude that the claims of a fare hike by the Delhi Metro Rail Corporation Ltd. (DMRC) following the BJP’s victory in the Delhi Legislative Assembly elections are misleading. Our review of DMRC’s official website and social media handles found no mention of any fare increase. Furthermore, DMRC’s official X (formerly Twitter) handle has clarified that no such fare hike has been announced. We urge the public to rely on verified sources for accurate information and to refrain from spreading misinformation.

Conclusion:

On examining the alleged fare hike, we found that the increase pertains to the Bengaluru metro, not the Delhi Metro. To verify this, we reviewed the official website of Bangalore Metro Rail Corporation Limited (BMRCL) and cross-checked the information against the available evidence, including relevant images. Our findings confirm that no fare hike has been announced by the Delhi Metro Rail Corporation Ltd. (DMRC).

- Claim: Delhi Metro fare hike after the BJP’s victory in the Delhi Assembly election

- Claimed On: X (formerly known as Twitter)

- Fact Check: False and Misleading


Introduction

The world has been witnessing rapid advancements in cyberspace, and one of the major changes is the speed with which we gain and share information. Cyberspace has been described as the fifth dimension of warfare, so technology will play a major role in safeguarding ourselves and our nation. Information is central to this scenario, and because information is so easy to access, misinformation and disinformation have become rampant across the globe. The recent Russia-Ukraine crisis clearly showed how misinformation can cause major loss and harm to a nation and its people. Nations and global leaders are deliberating on this problem and on how to share information efficiently among friendly nations and inter-governmental organisations.

What is IW?

Information Warfare (IW) is a critical aspect of defending our cyberspace. In its broadest sense, information warfare is a struggle over the information and communications process, a struggle that began with the advent of human communication and conflict. Over the past few decades, the rapid rise of information and communication technologies and their increasing prevalence in society have revolutionised the communications process and, with it, the significance and implications of information warfare. In a narrower sense, information warfare is the large-scale application of destructive force against information assets and systems, that is, against the computers and networks that support the four critical infrastructures: the power grid, communications, finance, and transportation. However, protecting against computer intrusion, even on a smaller scale, is in the country’s national security interest and is important to the current discussion about information warfare.

IW in India

The effects of misinformation have recently been seen in India in the violence in Manipur and Nuh, which resulted in massive loss of property and even of human lives. Miscreants and anti-national elements often seed misinformation into our daily news feeds, and it is then magnified by social media platforms such as Instagram and X (formerly known as Twitter) and by OTT messaging applications such as WhatsApp and Telegram. During the COVID-19 pandemic, new ways to treat COVID-19 were shared on social media nearly every week, and these were false and inaccurate, especially with regard to the vaccination drive. Many posts and messages claimed that the vaccine was not safe, but much of this was part of a misinformation campaign. Such misinformation usually spreads rapidly through social media platforms and OTT messaging applications.

IW and Indian Army

Former Meta employees have recently alleged that the Chinar Corps of the Indian Army approached the social media giant to suppress certain pages and channels that propagated content that may be objectionable. It is alleged that the formation made this request in support of its counterintelligence operations against Pakistan. The Chinar Corps is one of the most prestigious formations of the Indian Army, and its area of operations is the Kashmir Valley. Online grooming and brainwashing by anti-national elements from Pakistan have been common, and a section of the youth has been drawn, directly or indirectly, into terrorist activities. Bad actors use various messaging and social media apps to lure innocent young people on fake and fabricated pretexts of religion or other social issues. The Indian Army launched an anti-misinformation campaign in Kashmir to protect Kashmiris from fake news and propaganda, which has often led to radicalisation, riots, or attacks on the defence forces. Bad actors often misuse net neutrality in areas that are socially sensitive, critical, or unstable. The Indian Army has created special IW offices at all levels of formation, and these are also used to counter fake news and false propaganda against the Indian Army.

Conclusion

Information has been a source of power since at least the days of the Roman Empire. The control, dissemination, moderation and mode of sharing of information play a vital role for any nation, both in terms of safety from external threats and in maintaining national security. Information warfare is part of the fifth dimension of warfare, i.e., cyberwar, and is a growing concern for developed and developing nations alike. Information warfare needs to be incorporated into the basic training of defence personnel and law enforcement agencies. After the abrogation of Article 370, the anti-misinformation operation in Kashmir was primarily focused on eradicating bad elements from cyberspace and ensuring harmony, peace, stability and prosperity in the region.

References

- https://irp.fas.org/eprint/snyder/infowarfare.htm

- https://www.thehindu.com/news/national/metas-india-team-delayed-action-against-army-led-misinfo-op-in-kashmir-us-news-report/article67352470.ece

- https://www.indiatoday.in/india/story/facebook-instagram-block-handles-of-chinar-corps-no-response-from-company-over-a-week-says-officials-1910445-2022-02-08

Introduction

A disturbing trend of courier-related cyber scams has emerged, targeting unsuspecting individuals across India. In these scams, fraudsters pose as officials from reputable organisations, such as courier companies or government departments like the narcotics bureau. Using sophisticated social engineering tactics, they deceive victims into divulging personal information and transferring money under false pretences. Recently, a woman IT professional from Mumbai fell victim to such a scam, losing Rs 1.97 lakh.

Instances of courier-related cyber scams

Recently, two significant cases of courier-related cyber scams have surfaced, illustrating the alarming prevalence of such fraudulent activities.

- Case in Delhi: A doctor in Delhi fell victim to an online scam and lost approximately Rs 4.47 crore. The fraudsters posed as representatives of a courier company, told the doctor that a package had been seized, and demanded large sums of money for verification purposes. The doctor trusted the callers and paid, losing a substantial amount.

- Case in Mumbai: In a strikingly similar incident, an IT professional from Mumbai, Maharashtra, lost Rs 1.97 lakh to cyber fraudsters pretending to be officials from the narcotics department. The fraudsters contacted the victim and claimed that her Aadhaar number was linked to bank accounts used by criminals. Using deceptive tactics and false evidence, they coerced her into transferring money for verification, resulting in a significant financial loss.

These recent cases highlight the growing threat of courier-related cyber scams and the devastating impact they can have on unsuspecting individuals. They underline the urgent need for greater awareness, vigilance, and preventive measures to avoid falling victim to such fraudulent schemes.

Nature of the Attack

The scam typically begins with a fraudulent call from someone claiming to be associated with a courier company. The caller tells the victim that their package is stuck or has been seized, then escalates the situation by involving law enforcement agencies such as the narcotics department. The fraudsters manipulate victims by creating a sense of urgency and fear, and convince them to download communication apps like Skype to make the interaction appear credible. Using fabricated evidence and false claims, they trick victims into sharing personal information, including Aadhaar numbers, and coerce them into making financial transactions for supposed verification purposes.

Best Practices to Stay Safe

To protect oneself from courier-related cyber scams and similar frauds, individuals should follow these best practices:

- Verify Calls and Identity: Be cautious when receiving calls from unknown numbers. Verify the caller’s identity by cross-checking with relevant authorities or organisations before sharing personal information.

- Exercise Caution with Personal Information: Avoid sharing sensitive personal information, such as Aadhaar numbers, bank account details, or passwords, over the phone or through messaging apps unless it is necessary and the recipient is a verified, trusted source.

- Beware of Urgency and Threats: Scammers often create a sense of urgency or threaten legal consequences to manipulate victims. Remain vigilant and question any unexpected demands for money or personal information.

- Double-Check Suspicious Claims: If contacted by someone claiming to be from a government department or law enforcement agency, independently verify their credentials by contacting the official helpline or visiting the department’s official website.

- Educate and Spread Awareness: Share information about these scams with friends, family, and colleagues to raise awareness and collectively prevent others from falling victim to such frauds.

Legal Remedies

Victims of a courier-related cyber scam can take the following legal actions:

- File a First Information Report (FIR): Victims of a courier-related cyber scam or similar online fraud have legal options to seek justice and potentially recover their losses. One of the primary actions is to file an FIR with the local police. The following sections of Indian law may apply in such cases:

- Section 419 of the Indian Penal Code (IPC): This section deals with the offence of cheating by impersonation. It states that whoever cheats by impersonating another person shall be punished with imprisonment of either description for a term which may extend to three years, or with a fine, or both.

- Section 420 of the IPC: This section covers the offence of cheating and dishonestly inducing delivery of property. It states that whoever cheats and thereby dishonestly induces the person deceived to deliver any property shall be punished with imprisonment of either description for a term which may extend to seven years and shall also be liable to pay a fine.

- Section 66C of the Information Technology (IT) Act, 2000: This section deals with the offence of identity theft. It states that whoever fraudulently or dishonestly makes use of the electronic signature, password, or any other unique identification feature of any other person shall be punished with imprisonment of either description for a term which may extend to three years and shall also be liable to pay a fine.

- Section 66D of the IT Act, 2000: This section deals with the offence of cheating by personation using a computer resource. It states that whoever, by means of any communication device or computer resource, cheats by personating shall be punished with imprisonment of either description for a term which may extend to three years and shall also be liable to pay a fine.

- National Cyber Crime Reporting Portal: Victims can also report the crime on the National Cyber Crime Reporting Portal, which is supported by the 24×7 helpline number 1930. The portal serves as a centralised platform for reporting cybercrimes, including financial fraud.

Conclusion:

The rise of courier-related cyber scams demands increased vigilance from individuals to protect themselves against fraud. Heightened awareness, caution, and scepticism when dealing with unknown callers or suspicious requests are crucial. By following best practices, such as verifying identities, avoiding sharing sensitive information, and staying updated on emerging scams, individuals can minimise the risk of falling victim to these fraudulent schemes. Furthermore, spreading awareness about such scams and promoting cybersecurity education will play a vital role in creating a safer digital environment for everyone.

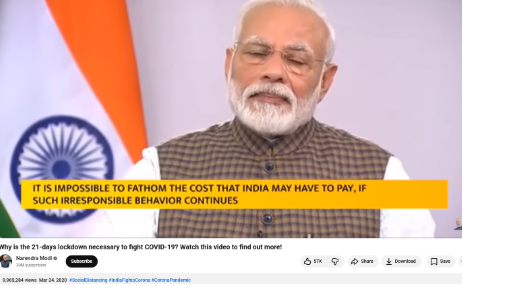

Executive Summary

A video is being shared on social media in which Prime Minister Narendra Modi can be heard saying that “a complete lockdown will be imposed from midnight to save the country.” Research by CyberPeace found the viral claim to be misleading. Our probe revealed that the video is from March 2020, when PM Modi announced a nationwide lockdown to curb the spread of COVID-19.

Claim:

An Instagram user shared the viral video on March 25, 2026. The link and archive link of the post are given below.

Fact Check:

To verify the claim, we first conducted a keyword search on Google but did not find any credible media reports of a recent lockdown announcement. We then extracted keyframes from the viral video and ran a reverse image search using Google Lens. This led us to the same video on a YouTube channel, where it had been uploaded on March 24, 2020.

The viral portion of the clip appears around the 40-second mark in the original video.

Conclusion:

Our research found that the viral video is not recent. It dates back to March 24, 2020, when PM Modi announced a nationwide lockdown during the COVID-19 pandemic. The clip is being shared with a misleading claim.