#FactCheck - Old Japanese Earthquake Footage Falsely Linked to Tibet

Executive Summary:

A viral post on X (formerly Twitter) gained wide attention and created a false narrative about recent earthquake damage in Tibet. Our findings confirm that the clip was not filmed in Tibet; it comes from an earlier earthquake in Japan. This report traces the origin of the claim and presents the analysis and verified evidence that clarify the misinformation around the video.

Claim:

The viral video shows collapsed infrastructure and significant destruction, and its captions present it as evidence of a recent earthquake in Tibet. Similar claims can be found here and here.

Fact Check:

The widely circulated clip, initially claimed to depict the aftermath of the most recent earthquake in Tibet, has been analyzed and found to be misattributed. A reverse image search on keyframes from the video revealed that the footage originated from an earlier, devastating earthquake in Japan. According to an article published by a Japanese news website, the incident occurred in February 2024. News agencies authenticated the video, which matches the scenes of destruction reported during that event.

Moreover, the same video had already been uploaded to a YouTube channel, which shows that it is not recent. The architecture, the signboards written in Japanese script, and the vehicles appearing in the video also indicate that the footage is from Japan, not Tibet. The video therefore shows a past event in Japan and was shared in a different context to spread false information.

The video was uploaded on February 2nd, 2024.

Snap from viral video

Snap from YouTube video

Conclusion:

The claim that the viral video shows the recent earthquake in Tibet is therefore false: the footage is old and comes from an earlier earthquake in Japan. This underlines the need to verify information before sharing it, so that accurate information spreads and false information does not.

- Claim: A viral video claims to show recent earthquake destruction in Tibet.

- Claimed On: X (formerly known as Twitter)

- Fact Check: False and Misleading

Related Blogs

Executive Summary

Despite a truce announced in mid-April, sporadic violence has continued between Israel and the Iran-backed Hezbollah in Lebanon. Meanwhile, a video circulating widely on social media shows a multi-storey building engulfed in flames, with users falsely linking it to the ongoing conflict. Posts sharing the clip claim it depicts a Hezbollah strike on an Israeli military headquarters, alleging that several soldiers were killed and that Israel is censoring visuals from the incident. However, research by the CyberPeace Research Wing found the claim to be misleading. The video is unrelated to the Israel-Hezbollah conflict. Verification shows that the footage actually captures a fire at an apartment building in New York City. Firefighters can be seen at the scene attempting to control the blaze.

Claim

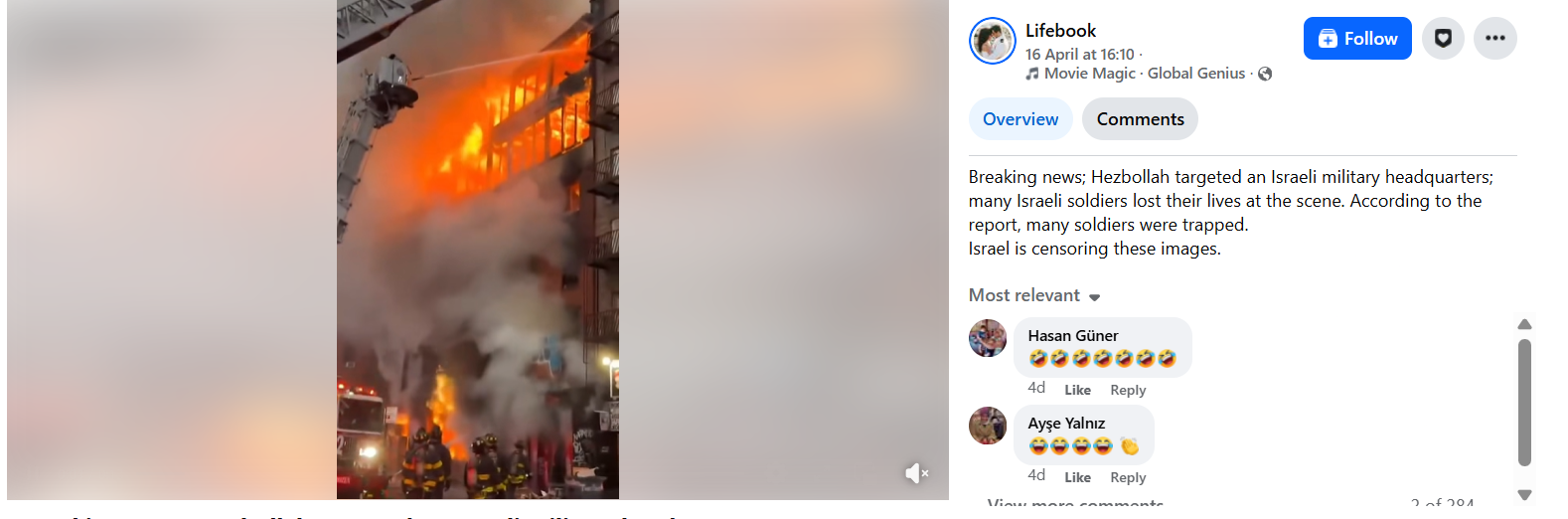

A Facebook post shared on April 16, 2026, read: “Breaking news; Hezbollah targeted an Israeli military headquarters; many Israeli soldiers lost their lives at the scene… Israel is censoring these images.” The video has garnered more than 240,000 views.

- https://perma.cc/BQ6X-4LAT

- https://www.facebook.com/watch/?v=1283830349750737

Fact Check

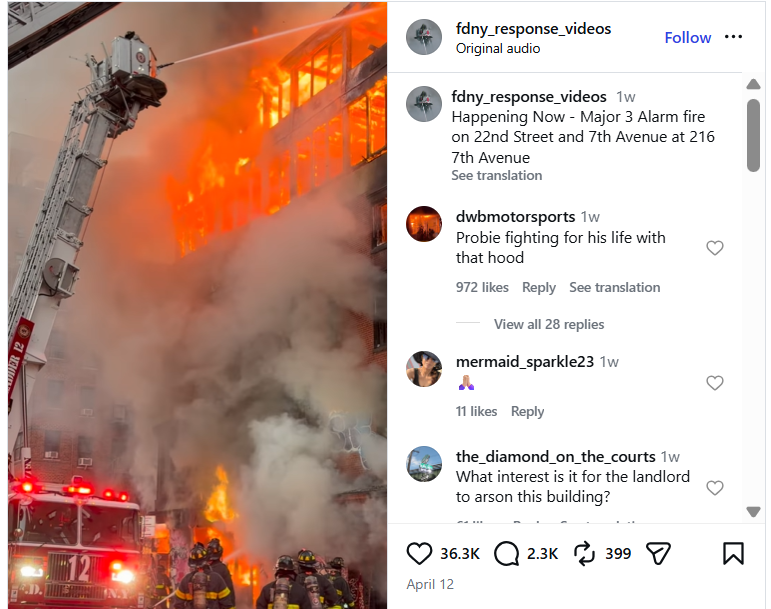

A reverse image search using keyframes from the viral clip led to a higher-quality version posted on April 12, 2026, by an Instagram account titled “FDNY response video.” The caption stated: “Happening now — Major 3 alarm fire on 22nd Street and 7th Avenue at 216 7th Avenue.”

- https://www.instagram.com/p/DXB0ePqjgGD/

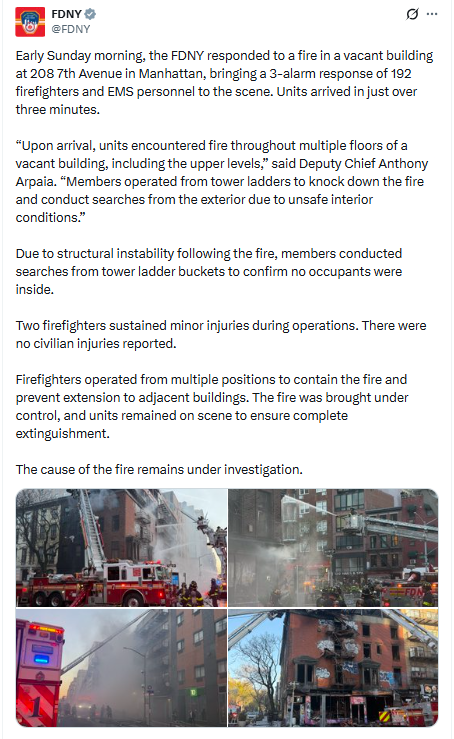

Further verification found that images of the same incident were shared on April 13, 2026, by the official X account of the New York City Fire Department. According to the post, no civilians were injured in the fire, although two firefighters sustained minor injuries while battling the blaze.

Using the location details mentioned in the posts, visible structures in the video were matched with Google Maps street imagery, confirming that the footage was indeed filmed in New York City.

Conclusion

The research establishes that the viral video is being shared with a false claim. It does not show any attack on an Israeli military facility but rather a residential building fire in New York City.

In an exciting milestone, CyberPeace, an ICANN APRALO At-Large organization, in collaboration with the Internet Corporation for Assigned Names and Numbers (ICANN), has successfully deployed and made operational an L-root server instance in Ranchi, Jharkhand. This initiative marks a significant step toward enhancing the resilience, speed, and security of internet connectivity in eastern India.

Understanding the DNS hierarchy – Starting from Root

Internet users reach online information through domain names, while browsers and servers actually communicate using IP (Internet Protocol) addresses. The Domain Name System (DNS) functions as the internet's equivalent of the Yellow Pages, or the phonebook of cyberspace. When a person uses a domain name like www.cyberpeace.org to access a website, DNS converts the domain name to the corresponding IP address so that the browser can load the requested resources from the internet.

When you type a domain name into your browser, a DNS query works behind the scenes to find the website's IP address. First, your device asks a DNS resolver (often provided by your ISP or a third-party service) for the address. The resolver checks its cache for a match, and if none is found, it queries a root server to locate the top-level domain (TLD) server (like .com or .org). The resolver then asks the TLD server for the authoritative nameserver responsible for the particular domain, and that nameserver provides the specific IP address. Finally, the resolver sends this address back to your device, enabling it to connect to the website's server and load the page. The entire process typically happens in milliseconds, ensuring seamless browsing.
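The lookup chain described above can be sketched with a toy, in-memory model. This is only an illustration under stated assumptions: the zone tables, the server labels (`tld-org`, `ns-cyberpeace`), the `Resolver` class, and the address `192.0.2.10` (taken from a documentation-only range) are all hypothetical and do not reflect real DNS data or any real resolver's API.

```python
from typing import Dict, List, Optional

# Hypothetical stand-ins for the three server tiers. Every name and
# address here is illustrative; 192.0.2.0/24 is reserved for documentation.
ROOT_ZONE: Dict[str, str] = {"org": "tld-org", "com": "tld-com"}
TLD_ZONES: Dict[str, Dict[str, str]] = {
    "tld-org": {"cyberpeace.org": "ns-cyberpeace"},
    "tld-com": {},
}
AUTHORITATIVE: Dict[str, Dict[str, str]] = {
    "ns-cyberpeace": {"www.cyberpeace.org": "192.0.2.10"},
}


class Resolver:
    """Toy recursive resolver: cache first, then root, TLD, authoritative."""

    def __init__(self) -> None:
        self.cache: Dict[str, str] = {}
        self.trace: List[str] = []  # which tiers the last lookup touched

    def resolve(self, name: str) -> Optional[str]:
        self.trace = []
        labels = name.lower().rstrip(".").split(".")
        fqdn = ".".join(labels)

        # Step 1: answer from cache when possible.
        if fqdn in self.cache:
            self.trace.append("cache")
            return self.cache[fqdn]

        # Step 2: the root tier only knows which server handles the TLD.
        self.trace.append("root")
        tld_server = ROOT_ZONE.get(labels[-1])
        if tld_server is None:
            return None

        # Step 3: the TLD tier points to the domain's authoritative server.
        self.trace.append("tld")
        auth_server = TLD_ZONES[tld_server].get(".".join(labels[-2:]))
        if auth_server is None:
            return None

        # Step 4: the authoritative server holds the actual address record.
        self.trace.append("authoritative")
        address = AUTHORITATIVE[auth_server].get(fqdn)
        if address is not None:
            self.cache[fqdn] = address
        return address


resolver = Resolver()
first = resolver.resolve("www.cyberpeace.org")
first_trace = list(resolver.trace)
second = resolver.resolve("www.cyberpeace.org")
second_trace = list(resolver.trace)
```

In this sketch the first lookup walks all three tiers, while the repeat lookup is answered from the cache and never reaches the root tier, which is why caching keeps most everyday queries away from root servers.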

Special focus on Root Server:

A root server is a name server that directly answers queries for records in the root zone and redirects requests for more specific domains to the appropriate top-level domain (TLD) servers. Root servers are an integral part of this system, acting as the first step in resolving a domain name into its corresponding IP address. They provide the initial direction needed to locate the authoritative servers for any domain.

The DNS root zone is served from 13 unique IP addresses, backed by hundreds of redundant root server instances that are distributed worldwide and reached through anycast routing to manage requests efficiently. As of January 8, 2025, the global root server system consists of 1,921 instances operated by 12 independent root server operators. These servers ensure the smooth functioning of the internet by managing the backbone of DNS queries.
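As a small illustration of the figures above, the 13 root identities are conventionally named with the letters a through m under `root-servers.net`, and the L-root discussed in this article is one of them. The snippet below lists those names and computes a rough average of instances per identity from the quoted total; the variable names are illustrative, and the average is only arithmetic on the figure cited above, not a statement about how instances are actually distributed.

```python
import string

# The 13 root identities use the letters "a" through "m".
ROOT_LETTERS = string.ascii_lowercase[:13]
ROOT_SERVER_NAMES = [f"{letter}.root-servers.net" for letter in ROOT_LETTERS]

# Total instance count quoted in the text (as of January 8, 2025).
TOTAL_INSTANCES = 1921

# Rough average only; real operators run very different numbers of instances.
average_per_identity = TOTAL_INSTANCES / len(ROOT_SERVER_NAMES)

l_root_name = ROOT_SERVER_NAMES[ROOT_LETTERS.index("l")]
```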

Type of Root Server Instances:

There are two types of root server instances: global instances and local instances.

Global root server instances are the primary root servers distributed strategically around the world. Local instances, on the other hand, are replicas of these global servers deployed in specific regions to handle local DNS traffic more efficiently. In each operator's list of sites, some instances are marked as global (globe icon) and some are marked as local (flag icon). The difference is in how widely available that instance will be, because of how routing for that instance is done. Recall that the routes for an instance are announced by BGP, the inter-domain routing protocol.

For global instances, the route advertisement is permitted to spread throughout the Internet, i.e., any router on the Internet could know the path to that instance. Of course, for a particular source, the route to that instance may not be the optimal route, so some other instance could be chosen as the destination.

With a local instance, however, the route advertisement is limited to only nearby networks. For example, the instance may be visible to just one ISP, or to ISPs that connect at a particular exchange point. Sources from farther away will not be able to see and query that local instance.
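The difference in visibility between global and local instances can be sketched with a simplified model. This is a minimal sketch, not how BGP actually selects paths: the `Instance` class, the site and network names, and the single numeric `path_cost` are all hypothetical simplifications introduced for illustration.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional, Tuple


@dataclass(frozen=True)
class Instance:
    site: str
    scope: str  # "global" or "local"
    # Networks that receive the route of a local instance (ignored for global).
    heard_by: FrozenSet[str] = frozenset()


def visible_instances(instances: List[Instance], source: str) -> List[Instance]:
    """Global routes reach every network; local routes reach only nearby ones."""
    return [
        inst for inst in instances
        if inst.scope == "global" or source in inst.heard_by
    ]


def pick_instance(
    instances: List[Instance],
    source: str,
    path_cost: Dict[Tuple[str, str], int],
) -> Optional[Instance]:
    """Pick the cheapest instance among those the source network can see."""
    candidates = visible_instances(instances, source)
    if not candidates:
        return None
    return min(candidates, key=lambda inst: path_cost[(source, inst.site)])


# Hypothetical topology: one global site and one local site.
INSTANCES = [
    Instance("site-global", "global"),
    Instance("site-local", "local", frozenset({"isp-nearby"})),
]
PATH_COST = {
    ("isp-nearby", "site-global"): 5,
    ("isp-nearby", "site-local"): 1,
    ("isp-far", "site-global"): 3,
    ("isp-far", "site-local"): 2,  # cheaper on paper, but the route is not heard
}

nearby_choice = pick_instance(INSTANCES, "isp-nearby", PATH_COST)
far_choice = pick_instance(INSTANCES, "isp-far", PATH_COST)
```

In this toy topology the nearby ISP is served by the local instance, while the distant ISP falls back to the global one because the local route advertisement never reaches it.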

Deployment in Ranchi - The Journey & Significance:

CyberPeace, in collaboration with ICANN, has successfully deployed an L-root server instance in Ranchi, marking a significant milestone in enhancing regional internet infrastructure. This deployment, part of a global network of root servers, ensures faster and more reliable DNS query resolution for the region, reducing latency and enhancing cybersecurity.

The journey of deploying the L-root instance in collaboration with ICANN followed these steps:

- Signing the Agreement: Finalized the L-SINGLE Hosting Agreement with ICANN to formalize the partnership.

- Procuring the Hardware: Acquired the required hardware appliance to meet technical standards for hosting the L-root server.

- Setup and Installation: Configured and installed the appliance to prepare it for seamless operation.

- Joining the Anycast Network: Integrated the server into ICANN's global Anycast network using BGP (Border Gateway Protocol) for efficient DNS traffic management.

The deployment of the L-root server in Ranchi marks a significant boost to the region’s digital ecosystem. It accelerates DNS query resolution, reducing latency and enhancing internet speed and reliability for users.

This instance strengthens cyber defenses by mitigating Distributed Denial of Service (DDoS) risks and managing local traffic efficiently. It also underscores Eastern India’s advanced digital infrastructure, aligning with initiatives like Digital India to meet evolving digital demands.

By handling local queries, the L-root server eases the load on global servers, contributing to a more stable and resilient global internet.

CyberPeace’s Commitment to a Secure and Resilient Cyberspace

As an organization dedicated to promoting peace, security and resilience in cyberspace, CyberPeace views this collaboration with ICANN as a significant achievement in its mission. By strengthening the internet’s backbone in eastern India, this deployment underscores our commitment to enabling a secure, accessible, and resilient digital ecosystem.

Way forward and Roadmap for Strengthening India’s DNS Infrastructure:

The successful deployment of the L-root instance in Ranchi is a stepping stone toward bolstering India's digital ecosystem. CyberPeace aims to promote awareness about DNS infrastructure through workshops and seminars, emphasizing its critical role in a resilient digital future.

With plans to deploy more such root server instances across India, the focus is on expanding local DNS infrastructure to enhance efficiency and security. Collaborative efforts with government agencies, ISPs, and tech organizations will drive this vision forward. A robust monitoring framework will ensure optimal performance and long-term sustainability of these initiatives.

Conclusion

The deployment of the L-root server instance in Eastern India represents a monumental step toward strengthening the region’s digital foundation. As Ranchi joins the network of cities hosting root server instances, the benefits will extend not only to the local community but also to the global internet ecosystem. With this milestone, CyberPeace reaffirms its commitment to driving innovation and resilience in cyberspace, paving the way for a more connected and secure future.

Introduction

Entrusted with the responsibility of leading the Global Education 2030 Agenda through the Sustainable Development Goal 4, UNESCO’s Institute for Lifelong Learning in collaboration with the Media and Information Literacy and Digital Competencies Unit has recently launched a Media and Information Literacy Course for Adult Educators. The course aligns with The Pact for The Future adopted at The United Nations Summit of the Future, September 2024 - asking for increased efforts towards media and information literacy from its member countries. The course is free for Adult Educators to access and is available until 31st May 2025.

The Course

According to a report by Statista, 67.5% of the global population uses the internet. Regardless of the age and background of users, there is a general lack of understanding of how to spot misinformation and targeted hate, and of how to navigate online environments securely and efficiently. Since misinformation (largely spread online) is enabled by this lack of awareness, digital literacy becomes increasingly important. The course is designed keeping in mind that many active adult educators have not yet had an opportunity to hone their media and information skills through formal education. The course is self-paced and takes a total of 10 hours. It covers basics such as the concepts of misinformation and disinformation, artificial intelligence, and combating hate speech, and offers a certificate on completion.

CyberPeace Recommendations

As this course is free of cost, can be taken remotely, and covers the basics of digital literacy, all eligible educators are encouraged to take it up to familiarise themselves with these topics. However, more can be done to raise awareness of the course and of who is eligible for it, so that a larger number of people can benefit.

CyberPeace Recommendations To Enhance Positive Impact

- Further Collaboration: As this course is open to adult educators, one can consider widening its scope through active engagement with independent organisations and even individual internet users who are willing to learn.

- Engagement with Educational Institutions: After launching a course, an interactive outreach programme and connections with relevant stakeholders can prove beneficial. Since each adult educator must sign up individually to take the course, partnering with universities, institutes, and similar bodies is encouraged. In the Indian context, active involvement with training institutes such as DIET (District Institute of Education and Training), SCERT (State Council of Educational Research and Training), NCERT (National Council of Educational Research and Training), and Open Universities could be initiated, facilitating greater awareness and more participation.

- Engagement through NGOs: NGOs focused on digital literacy that have a tie-up with UNESCO can aid in implementation and in raising awareness. Offering the course in local languages could also be considered to make it more inclusive.

Conclusion

Though a long process, tackling misinformation through education is a method that deals with the issue at the source. A strong foundation in awareness and media literacy is imperative in the age of fake news, misinformation, and sensitive data being peddled online. UNESCO’s course launch garners attention as it comes from an international platform, is free of cost, truly understands the gravity of the situation, and calls for action in the field of education, encouraging others to do the same.

References

- https://www.uil.unesco.org/en/articles/media-and-information-literacy-course-adult-educators-launched

- https://www.unesco.org/en/articles/celebrating-global-media-and-information-literacy-week-2024

- https://www.unesco.org/en/node/559#:~:text=UNESCO%20believes%20that%20education%20is,must%20be%20matched%20by%20quality.