#FactCheck - AI-Generated Image Falsely Linked to Mira–Bhayandar Bridge

Executive Summary

Mumbai’s Mira–Bhayandar bridge has recently been in the news due to its unusual design. In this context, a photograph showing a bus seemingly stuck on the bridge is going viral on social media. Some users are also sharing the image while claiming that it is from Sonpur subdivision in Bihar. However, research by CyberPeace has found that the viral image is not real. The bridge shown in the image is indeed the Mira–Bhayandar bridge, which is under discussion because its design causes it to suddenly narrow from four lanes to two lanes. That said, the bridge is not yet operational, and the viral image showing a bus stuck on it has been created using Artificial Intelligence (AI).

Claim

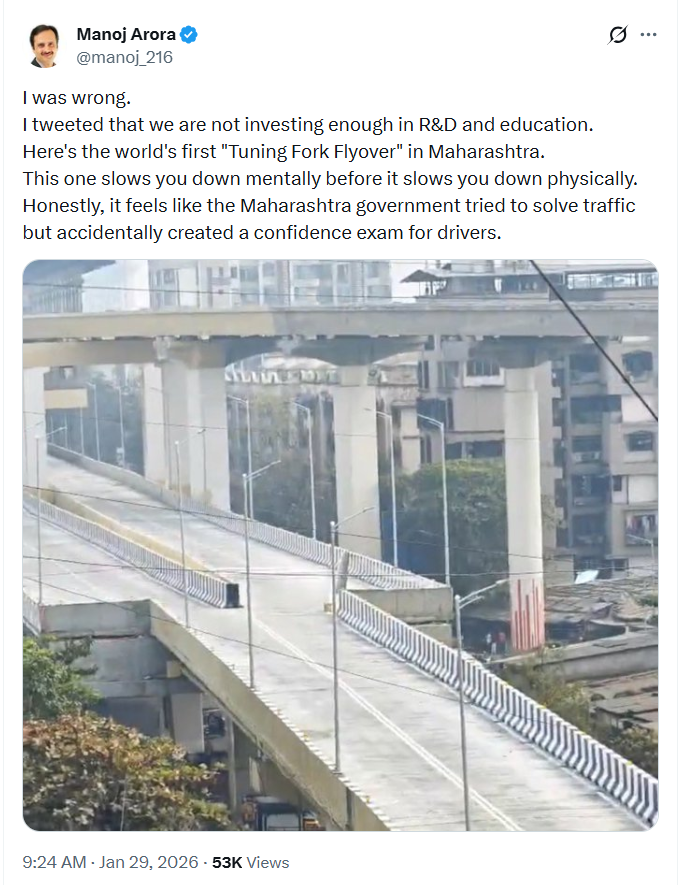

An Instagram user shared the viral image on January 29, 2026, with the caption: “Are Indian taxpayers happy to see that this is funded by their money?” The link, archive link, and screenshot of the post can be seen below.

Fact Check:

To verify the claim, we first conducted a Google Lens reverse image search. This led us to a post shared by X (formerly Twitter) user Manoj Arora on January 29. While the bridge structure in that image matches the viral photo, no bus is visible in the original post. This raised suspicion that the viral image had been digitally manipulated.

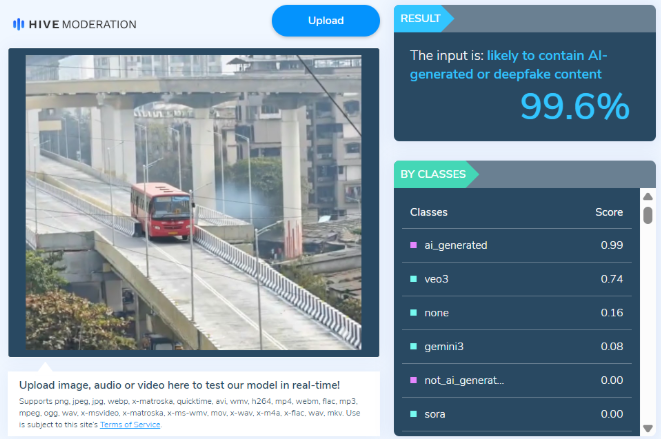

We then ran the viral image through the AI detection tool Hive Moderation, which flagged it as over 99% likely to be AI-generated.

Conclusion

The CyberPeace research confirms that while the Mira–Bhayandar bridge is real and has been in the news due to its design, the viral image showing a bus stuck on the bridge has been created using AI tools. Therefore, the image circulating on social media is misleading.

Related Blogs

Executive Summary:

A viral online video claims to show a Syrian prisoner experiencing sunlight for the first time in 13 years. However, the CyberPeace Research Team has confirmed that the video is a deepfake, created using AI technology to manipulate the prisoner’s facial expressions and surroundings. The original footage is unrelated to the claim that the prisoner has been held in solitary confinement for 13 years. The assertion that this video depicts a Syrian prisoner seeing sunlight for the first time is false and misleading.

Claim:

A viral video falsely claims that a Syrian prisoner is seeing sunlight for the first time in 13 years.

Factcheck:

Upon receiving the viral posts, we conducted a Google Lens search on keyframes from the video. The search led us to various legitimate sources featuring real reports about Syrian prisoners, but none of them included any mention of such an incident. The viral video exhibited several signs of digital manipulation, prompting further investigation.

We used AI detection tools, such as TrueMedia, to analyze the video. The analysis confirmed with 97.0% confidence that the video was a deepfake. The tools identified “substantial evidence of manipulation,” particularly in the prisoner’s facial movements and the lighting conditions, both of which appeared artificially generated.

Additionally, a thorough review of news sources and official reports related to Syrian prisoners revealed no evidence of a prisoner being released from solitary confinement after 13 years, or experiencing sunlight for the first time in such a manner. No credible reports supported the viral video’s claim, further confirming its inauthenticity.

Conclusion:

The viral video claiming that a Syrian prisoner is seeing sunlight for the first time in 13 years is a deepfake. Investigations using tools like Hive AI detection confirm that the video was digitally manipulated using AI technology. Furthermore, no reliable source supports the claim. The CyberPeace Research Team confirms that the video was fabricated, and the claim is false and misleading.

- Claim: Syrian prisoner sees sunlight for the first time in 13 years, viral on social media.

- Claimed on: Facebook and X (formerly Twitter)

- Fact Check: False & Misleading

Introduction

Digitalisation presents both opportunities and challenges for micro, small, and medium enterprises (MSMEs) in emerging markets. Digital tools can increase business efficiency and reach but also increase exposure to misinformation, fraud, and cyber attacks. Such cyber threats can lead to financial losses, reputational damage, loss of customer trust, and other challenges hindering MSMEs' ability and desire to participate in the digital economy.

Today’s flood of online information is a major driver of misinformation. Misinformation spreads or emerges from online sources, causing controversy and confusion in various fields including politics, science, medicine, and business. One obvious adverse effect of misinformation is that MSMEs might lose trust in the digital market. Misinformation can even result in the devaluation of a product, sow mistrust among customers, and negatively impact a company’s revenue. The reach and speed with which misinformation can spread and ruin companies’ brands, as well as the overall difficulty businesses face in seeking recourse, may discourage MSMEs from fully embracing the digital ecosystem.

MSMEs are essential for innovation, job creation, and economic growth. They contribute considerably to the GDP and account for a sizable share of enterprises. They serve as engines of economic resilience in many nations, including India. Hence, a developing economy’s prosperity and sustainability depend on the growth of MSMEs, and such digital threats might hinder that growth.

There are widespread incidents of misinformation on social media, and these affect brand and product promotion. MSMEs also rely on online platforms for business activities, and threats such as misinformation and other digital risks can result in reputational damage and financial losses. A company's reputation being tarnished by inaccurate information, or a product or service being incorrectly represented, are just some examples, and these incidents can cause MSMEs to lose clients and revenue.

In the digital era, MSMEs need to be vigilant against false information in order to preserve their brand name, clientele, and financial standing. In the interconnected world of today, these organisations must develop digital literacy and resistance against misinformation in order to succeed in the long run. Information resilience is crucial for protecting and preserving their reputation in the online market.

The Impact of Misinformation on MSMEs

Misinformation can have serious financial repercussions, such as lost sales, higher expenses, legal fees, harm to the company's reputation, diminished consumer trust, bad press, and a long-lasting unfavourable impact on image. A company's products may lose value as a result of rumours, which might affect both sales and client loyalty.

Inaccurate information can also result in operational mistakes, which can interrupt regular corporate operations and cost the enterprise a lot of money. When inaccurate information on a product's safety causes demand to decline and stockpiling problems to rise, supply chain disruptions may occur. Misinformation can also lead to operational and reputational issues, which can cause psychological stress and anxiety at work. The peace of the workplace and general productivity may suffer as a result. For MSMEs, false information has serious repercussions that impact their capacity to operate profitably, retain employees, and maintain a sustainable business. Companies need to make investments in cybersecurity defence, legal costs, and restoring consumer confidence and brand image in order to lessen the effects of false information and ensure smooth operations.

When we refer to the financial implications of misinformation spread in the market, be it about the product or the enterprise, the cost is two-fold in all scenarios: there is a loss of revenue, and then the organisation has to contend with the costs of countering the impact of the misinformation. Stock price volatility is one financial consequence for publicly-traded MSMEs, as misinformation can cause stock price fluctuations. Potential investors might also be discouraged by false negative information.

Further, the reputational damage that misinformation inflicts on MSMEs is also a serious concern, as a loss of reputation can do long-term harm to a carefully cultivated brand image.

There are also operational disruptions caused by misinformation: for instance, false product recalls can take place, and supplier mistrust or false claims about supplier reliability can disrupt procurement, interrupting the operations of MSMEs.

Misinformation can negatively impact employee morale and productivity due to its psychological effects, leading to stress and workplace tensions. Staff confidence is also affected by misinformation about the brand. Internal operational stability is a core component of any organisation’s success.

Misinformation: Key Risk Areas for MSMEs

- Product and Service Misinformation

For MSMEs, misinformation about products and services poses a serious danger since it undermines their credibility and the confidence clients place in the enterprise and its products or services. Because this misleading material might mix in with everyday activities and newsfeeds, viewers may find it challenging to identify fraudulent content. For example, falsehoods and rumours about a company or its goods may travel quickly through social media, impacting the confidence and attitude of customers. Algorithms that favour sensational material have the potential to magnify disinformation, resulting in the broad distribution of erroneous information that can harm a company's brand.

- False Customer Reviews and Testimonials

False testimonials and reviews pose a serious risk to MSMEs. These might be abused to damage a company's brand or lead to unfair competition. False testimonials, for instance, might mislead prospective customers about the calibre or quality of a company’s offerings, while phony reviews can cause consumers to mistrust a company's goods or services. These actions frequently form a part of larger plans by rival companies or bad actors to weaken a company's position in the market.

- Misleading Information about Business Practices

False statements or distortions regarding a company's operations constitute misleading information about business practices. This might involve dishonest marketing, fabrications regarding the efficacy or legitimacy of goods, and inaccurate claims about a company's compliance with laws or moral principles. Such incorrect information can result in a decline in consumer confidence, harm to the company's reputation, and even legal issues if consumers or rival businesses act upon it. For example, allegations of wrongdoing or criminal activity pertaining to a business can inflict a great deal of harm before the truth is established, even if they are later disproven.

- Fake News Related to Industry and Market Conditions

By skewing consumer views and company actions, fake news about market and industry circumstances can have a significant effect on MSMEs. For instance, false information about market trends, regulations, or economic situations might make consumers lose faith in particular industries or force corporations to make poor strategic decisions. The rapid dissemination of misinformation on online platforms intensifies its effects on enterprises that significantly depend on digital engagement for their operations.

Factors Contributing to the Vulnerability of MSMEs

- Limited Resources for Verification

MSMEs have a small resource pool, and information verification is typically not a top priority for most of them. Given their limited resources, they tend to deploy what they have towards other, seemingly more critical functions. They are more susceptible to misleading information because they lack the capacity to do thorough fact-checking or validate the authenticity of digital content. Technology tools, human capital, and financial resources are all in short supply, yet they are essential requirements for effective verification processes.

- Inadequate Digital Literacy

Digital literacy is required for effective day-to-day operations. Fake reviews, rumours, or fake images commonly used by malicious actors can result in increased scrutiny or backlash against the targeted business. The lack of awareness combined with limited resources usually results in a weak redressal plan on the part of the affected MSME. Due to their low digital literacy in this domain, a large number of MSMEs are more susceptible to false information and other online threats. Inadequate knowledge and skills for using digital platforms securely and effectively can lead to bad decisions and greater vulnerability to fraud, deception, and online scams.

- Lack of Crisis Management Plans

MSMEs frequently function without clear-cut procedures for handling crises. They lack the strategic preparation necessary to deal with the fallout from disinformation and cyberattacks. Proactive crisis management plans usually incorporate procedures for detecting, addressing, and lessening the impact of digital harms, which are frequently absent from MSMEs.

- High Dependence on Social Media and Online Platforms

The marketing strategy for most MSMEs is heavily reliant on social media and online platforms. While the digital-first nature of operations reduces the need for large capital outlays on stores or outlets, it also increases the need to stay relevant to the trends of the online community and make products attractive to the customer base. MSMEs are depending more and more on social media and other online channels for marketing, customer interaction, and company operations. These platforms are highly beneficial, but they also put organisations at a higher risk of false information and online fraud. Heavy reliance on these platforms coupled with the absence of proper security measures and awareness can result in serious interruptions to operations and monetary losses.

CyberPeace Policy Recommendations to Enhance Information Resilience for MSMEs

CyberPeace advocates for establishing stronger legal frameworks to protect MSMEs from misinformation. Governments should establish regulations to build trust in online business activities and mitigate fraud and misinformation risks. Mandatory training programs should be implemented to cover online safety and misinformation awareness for MSME businesses. Enhanced reporting mechanisms should be developed to address digital harm incidents promptly. Governments should establish strict penalties for those who deliberately spread misinformation, similar to those for copyright or intellectual property violations. Community-based approaches should be encouraged to help MSMEs navigate digital challenges effectively. Donor communities and development agencies should invest in digital literacy and cybersecurity training for MSMEs, focusing on misinformation mitigation and safe online practices. Platform accountability should be increased, with social media and online platforms playing a more active role in removing content from known scam networks and responding to fraudulent activity reports. There should be investment in comprehensive digital literacy solutions for MSMEs that incorporate cyber hygiene and discernment skills to combat misinformation.

Conclusion

Misinformation poses a serious risk to MSMEs’ digital resilience, operational effectiveness, and financial stability. MSMEs are susceptible to false information because of limited technical resources, lack of crisis management strategies, and insufficient digital literacy. They are also more vulnerable to false information and online fraud because of their heavy reliance on social media and other online platforms. To address these challenges, it is important to strengthen their cyber hygiene and information resilience. Robust policy and regulatory frameworks, mandatory online safety training programmes, and improved reporting procedures are required to enhance the overall information landscape.

References:

- https://www.dai.com/uploads/digital-downsides.pdf

- https://www.indiacode.nic.in/bitstream/123456789/2013/3/A2006-27.pdf

- https://pib.gov.in/PressReleaseIframePage.aspx?PRID=1946375

- https://dai-global-digital.com/digital-downsides-the-economic-impact-of-misinformation-and-other-digital-harms-on-msmes-in-kenya-india-and-cambodia.html

Executive Summary:

A viral claim alleges that following the Supreme Court of India’s August 11, 2025 order on relocating stray dogs, authorities in Delhi NCR have begun mass culling. However, verification reveals the claim to be false and misleading. A reverse image search of the viral video traced it to older posts from outside India, probably linked to Haiti or Vietnam, as indicated by the use of Haitian Creole and Vietnamese respectively. While the exact location cannot be independently verified, it is confirmed that the video is not from Delhi NCR and has no connection to the Supreme Court’s directive. Therefore, the claim lacks authenticity and is misleading.

Claim:

Several claims have been circulating since the Supreme Court of India on 11th August 2025 ordered the relocation of stray dogs to shelters. The primary claim suggests that authorities, following the order, have begun mass killing or culling of stray dogs, particularly in areas like Delhi and the National Capital Region. This narrative intensified after several videos purporting to show dead or mistreated dogs, allegedly linked to the Supreme Court’s directive, began circulating online.

Fact Check:

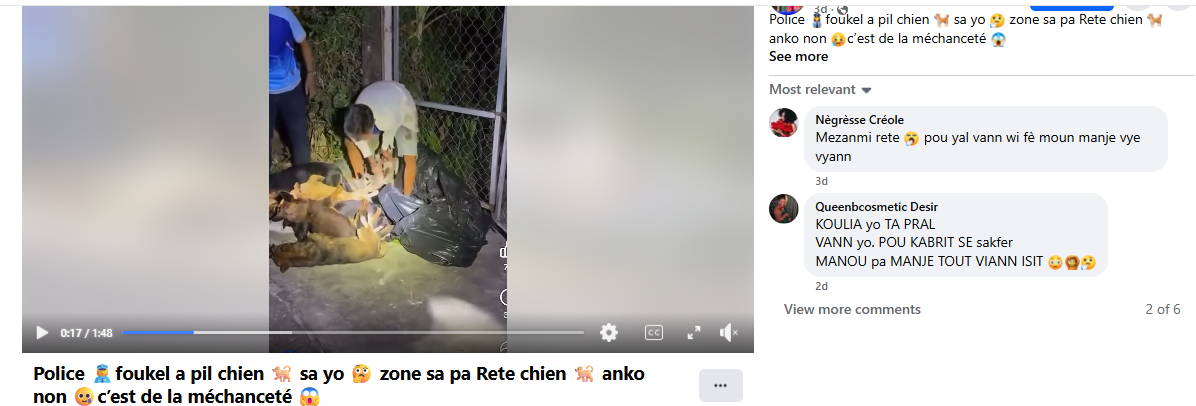

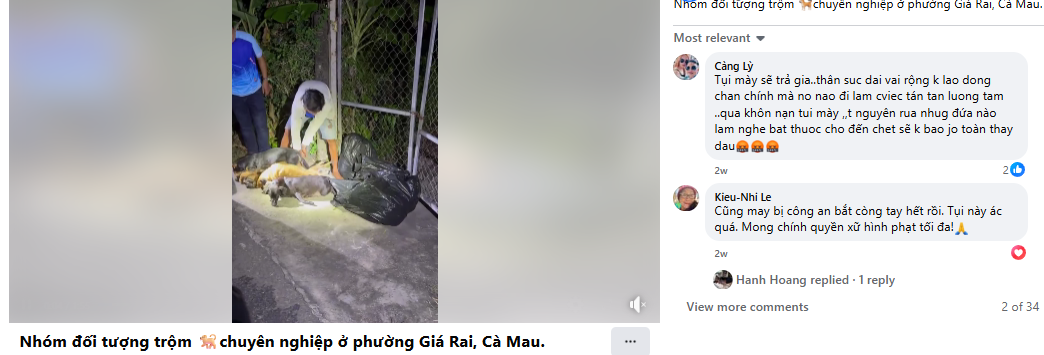

After conducting a reverse image search using a keyframe from the viral video, we found similar videos circulating on Facebook. Upon analyzing the language used in one of the posts, it appears to be Haitian Creole (Kreyòl Ayisyen), which is primarily spoken in Haiti. Another similar video was also found on Facebook, where the language used is Vietnamese, suggesting that the post associates the incident with Vietnam.

However, it is important to note that while these posts point towards different locations, the exact origin of the video cannot be independently verified. What can be established with certainty is that the video is not from Delhi NCR, India, as is being claimed. Therefore, the viral claim is misleading and lacks authenticity.

Conclusion:

The viral claim linking the Supreme Court’s August 11, 2025 order on stray dogs to mass culling in Delhi NCR is false and misleading. Reverse image search confirms the video originated outside India, with evidence of Haitian Creole and Vietnamese captions. While the exact source remains unverified, it is clear the video is not from Delhi NCR and has no relation to the Court’s directive. Hence, the claim lacks credibility and authenticity.

Claim: Delhi authorities are culling stray dogs following the Supreme Court’s 11th August 2025 directive on relocating stray dogs

Claimed On: Social Media

Fact Check: False and Misleading