#FactCheck-AI-Generated Viral Image of US President Joe Biden Wearing a Military Uniform

Executive Summary:

An image circulating online that purportedly shows US President Joe Biden wearing a military uniform during a meeting with military officials has been found to be AI-generated. The viral image is being shared with the false claim that it shows President Biden authorizing US military action in the Middle East. The CyberPeace Research Team has identified that the photo was produced with generative AI and is not real. Multiple visual discrepancies in the picture mark it as a product of AI.

Claims:

A viral image purporting to show US President Joe Biden wearing a military outfit during a meeting with military officials has been created using artificial intelligence. The picture is being shared on social media with the false claim that it shows President Biden convening a meeting to authorize the use of the US military in the Middle East.

Fact Check:

The CyberPeace Research Team found that the photo of US President Joe Biden in a military uniform at a meeting with military officials was made using generative AI and is not authentic. Several obvious visual discrepancies plainly suggest that the image is AI-generated.

First, President Biden's eyes are completely black. Second, the military officials' faces are blended. Third, the phone is standing upright without any support.

We then ran the image through an AI image detection tool.

The tool rated the image 4% human and 96% AI, which indicates that it is deepfake content.

We then checked it with another tool, Hive Detector.

Hive Detector rated the image as 100% AI-generated, which suggests that it is likely deepfake content.
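
The two detector readings above can be combined into a single summary figure. The following is only a minimal sketch under stated assumptions, not the CyberPeace team's actual method: the function name `aggregate_ai_scores`, the simple averaging rule, and the 0.9 threshold are all hypothetical, and the scores are transcribed by hand from the results reported above rather than fetched from any tool's API.

```python
from statistics import mean


def aggregate_ai_scores(scores, threshold=0.9):
    """Average per-tool AI-likelihood scores and compare against a threshold.

    `scores` maps a tool name to the AI probability it reported (0.0 to 1.0).
    The averaging rule and the default threshold are illustrative
    assumptions, not a standard used by any detection tool.
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    for tool, value in scores.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"score for {tool!r} must be between 0 and 1")
    average = mean(scores.values())
    verdict = "likely AI-generated" if average >= threshold else "inconclusive"
    return average, verdict


# Scores transcribed by hand from the two tool results reported above.
reported = {
    "AI image detection tool": 0.96,
    "Hive Detector": 1.00,
}

average, verdict = aggregate_ai_scores(reported)
print(f"Mean AI likelihood: {average:.2f} ({verdict})")
```

Detector scores are probabilistic, so a summary like this supports the manual check for visual discrepancies described earlier but does not replace it.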

Conclusion:

The growth of AI-produced content makes it harder to tell fact from fiction, particularly on social media. The fake photo supposedly showing President Joe Biden underlines the need for critical thinking and verification of information online. With technology constantly evolving, it is important that people stay watchful and rely on verified sources to fight the spread of disinformation. Furthermore, initiatives to raise awareness of the existence and impact of AI-produced content should be undertaken to promote a more aware and digitally literate society.

- Claim: A viral picture purportedly shows United States President Joe Biden wearing a military uniform during a meeting with military officials

- Claimed on: X

- Fact Check: Fake

Related Blogs

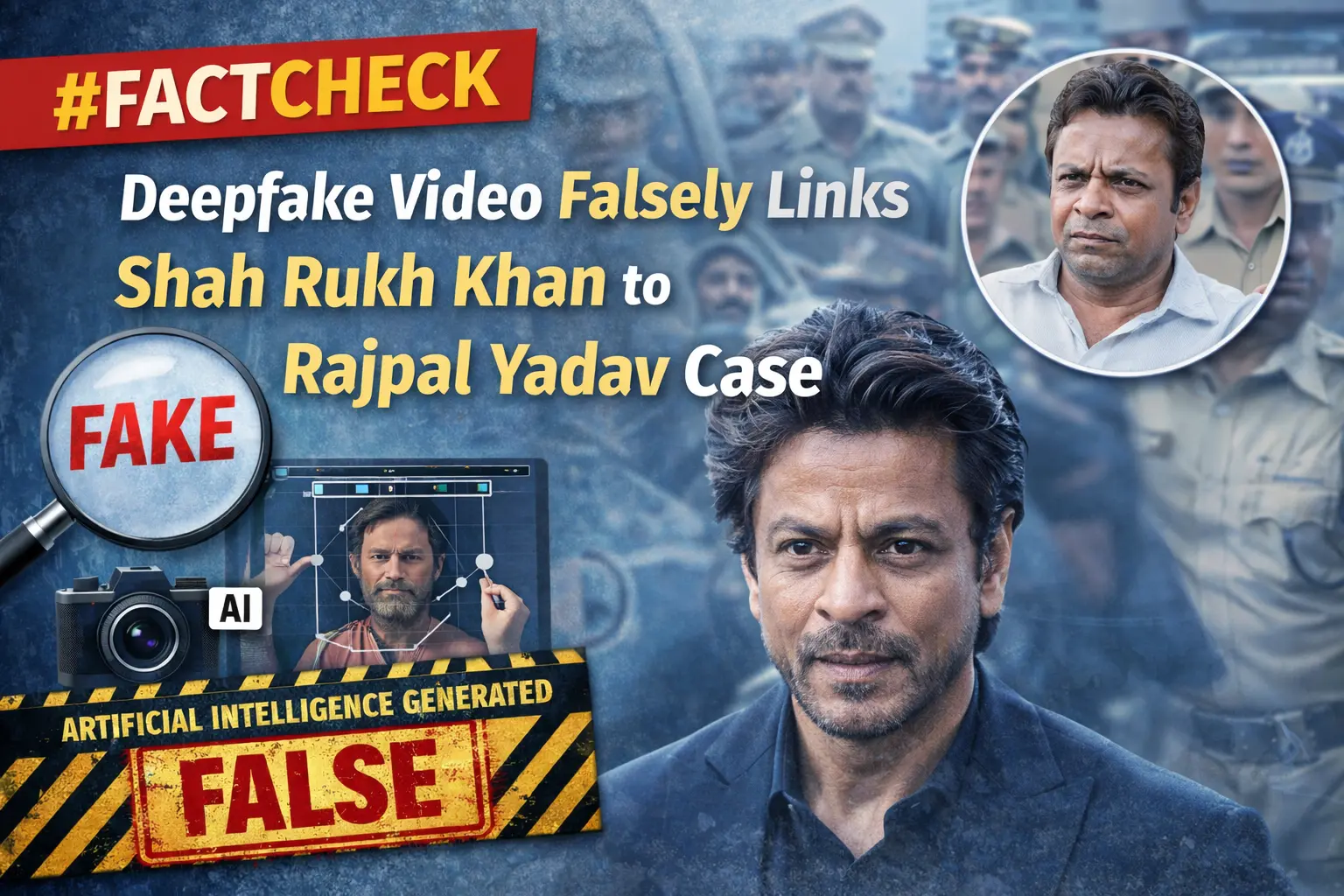

Executive Summary

A video featuring popular comedian Rajpal Yadav has recently gone viral on social media, claiming that he is currently lodged in Tihar Jail in connection with a loan default and cheque bounce case. In connection with this, another video showing Bollywood superstar Shah Rukh Khan is being widely shared online. In the viral clip, Khan is purportedly seen saying that he would help Rajpal Yadav get out of jail and also offer him a role in his upcoming film. However, research by CyberPeace found the viral video to be fake. The clip is a deepfake in which the audio has been manipulated using artificial intelligence. In the original video, Shah Rukh Khan is speaking about his life and personal experiences. Although several prominent Bollywood personalities have expressed support for Rajpal Yadav, the claims made in the viral video are misleading.

Claim

An Instagram user named “ayubeditz” shared the viral video on February 11, 2026, with the caption: “Rajpal Yadav bhai, stay strong, we are all with you — Shah Rukh Khan.” The link to the post and its archived version are provided below.

Fact Check

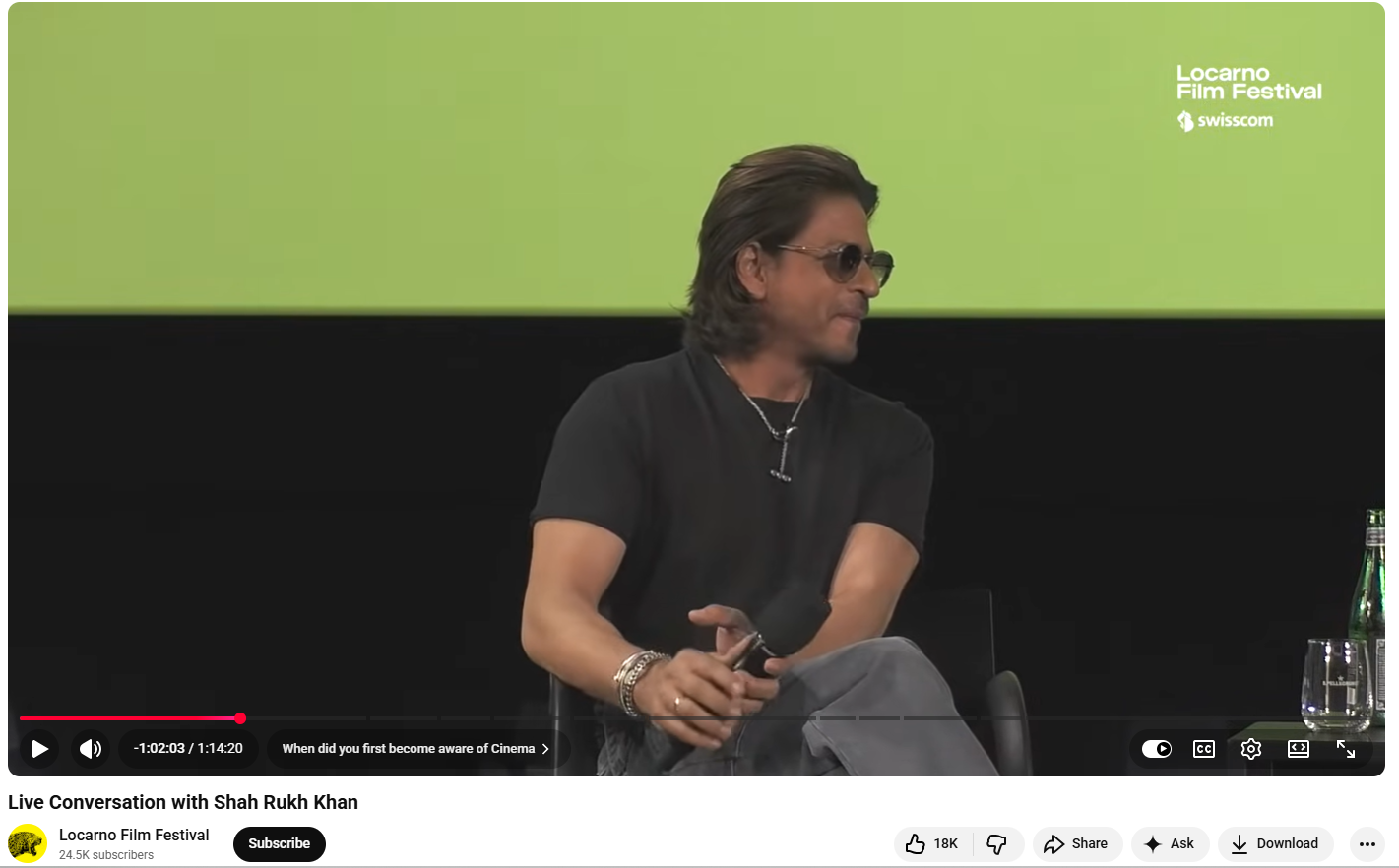

To verify the claim, we extracted key frames from the viral video and conducted a Google reverse image search. This led us to the original video uploaded on a YouTube channel titled “Locarno Film Festival” on August 11, 2024. According to the available information, Shah Rukh Khan was sharing insights about his life and career during a conversation with the festival’s Artistic Director, Giona A. Nazzaro. This raised strong suspicion that the viral video had been edited using AI.

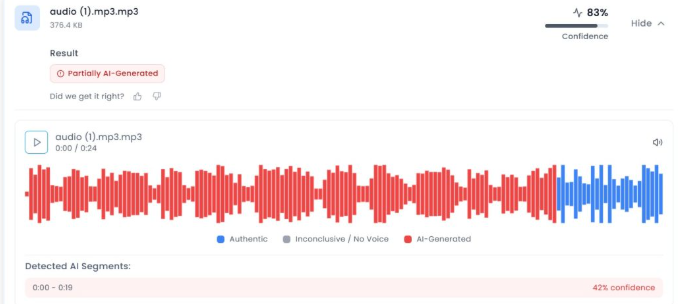

To further examine the authenticity of the audio, we analysed it using AI detection tools. The audio was first checked using Aurigin.ai, which indicated an 83 percent probability that the voice in the viral clip was AI-generated.

Conclusion

CyberPeace’s research confirmed that the claim associated with Shah Rukh Khan’s viral video is false. The video is a deepfake in which the audio has been altered using artificial intelligence. In the original footage, Khan was discussing his life and experiences, and he did not make any statement about helping Rajpal Yadav.

Introduction

In a world where social media shapes public perception and AI-generated content blurs the line between fact and fiction, mis/disinformation has become a national cybersecurity threat. Today, disinformation campaigns are engineered for effect, driving political manipulation, interference in public health, financial fraud, and even community violence. India, with its 900+ million internet users, is especially susceptible to this online distortion. The advent of deepfakes, AI-generated text, and hyper-personalised propaganda has made disinformation more plausible and more difficult to identify than ever.

What is Misinformation?

Misinformation is false or inaccurate information provided without intent to deceive. Disinformation, on the other hand, is content intentionally designed to mislead, created and disseminated to harm or manipulate. Both contribute to what experts have termed an "infodemic": a deluge of false information that overwhelms people and hinders their ability to make decisions.

Examples of impactful mis/disinformation are:

- COVID-19 vaccine conspiracy theories (e.g., infertility or microchips)

- Election-related false news (e.g., alleged EVM hacking)

- Social disinformation (e.g., manipulated videos of riots)

- Financial scams (e.g., bogus UPI cashbacks or RBI refund plans)

How Misinformation Spreads

Misinformation goes viral because of both technology design and human psychology. Social media sites such as Facebook, X (formerly Twitter), Instagram, and WhatsApp are designed to amplify messages that elicit strong emotional reactions, and these are usually polarising, sensationalistic, or fear-mongering posts. As a result, falsehoods get far more attention and engagement than authentic facts, and virality is prioritised over truth.

Another major consideration is the misuse of generative AI and deep fakes. Applications like ChatGPT, Midjourney, and ElevenLabs can be used to generate highly convincing fake news stories, audio recordings, or videos imitating public figures. These synthetic media assets are increasingly being misused by bad actors for political impersonation, propagating fabricated news reports, and even carrying out voice-based scams.

Adding to this danger are coordinated disinformation efforts, commonly operated by foreign or domestic players with specific political or ideological objectives. These efforts employ bot networks on social media, deceptive hashtags, and fabricated images to sway public opinion, especially during politically sensitive events such as elections, protests, or foreign wars. Such efforts are usually automated with the help of bots and meme-driven propaganda, which makes them scalable and hard to trace.

Why Misinformation is Dangerous

Mis/disinformation is a significant threat to democratic stability, public health, and personal security. Perhaps one of the most pernicious threats is that it undermines public trust. If it goes unchecked, then it destroys trust in core institutions like the media, judiciary, and electoral system. This erosion of public trust has the potential to destabilise democracies and heighten political polarisation.

In India, false information has had terrible real-world consequences, especially in inciting violence. Misleading WhatsApp messages about child kidnappers have resulted in mob lynchings in rural areas. Likewise, manipulated religious videos have sparked communal riots, and false terrorist warnings have created public panic.

The COVID-19 pandemic also showed how lethal misinformation can be. Misinformation regarding vaccine safety, miracle cures, and the origin of the virus resulted in mass vaccine hesitancy, the use of dangerous treatments, and even avoidable deaths.

Aside from health and safety, mis/disinformation has also been used in financial scams. Cybercriminals take advantage of the fear and curiosity of the people by promoting false investment opportunities, phishing URLs, and impersonation cons. Victims get tricked into sharing confidential information or remitting money using seemingly official government or bank websites, leading to losses in crypto Ponzi schemes, UPI scams, and others.

India’s Response to Misinformation

- PIB Fact Check Unit

The Press Information Bureau (PIB) operates a fact-checking service to debunk viral false information, particularly on government policies. In 3 years, the unit identified more than 1,500 misinformation posts across media.

- Indian Cybercrime Coordination Centre (I4C)

Working under the MHA, I4C has collaborated with social media platforms to identify sources of viral misinformation. Through the Cyber Tipline, citizens can report misleading content by calling 1930 or at cybercrime.gov.in.

- IT Rules: The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 [updated as on 6.4.2023]

The Information Technology (Intermediary Guidelines) Rules were updated to enable the government to address the following aspects:

- Removal of unlawful content

- Platform accountability

- Detection Tools

Certain detection tools act as shields, assisting fact-checkers and enforcement bodies to:

- Identify synthetic voice and video scams through technical measures.

- Track misinformation networks.

- Label manipulated media in real-time.

CyberPeace View: Solutions for a Misinformation-Resilient Bharat

- Scale Digital Literacy

Run "Think Before You Share" programs in rural schools to teach students to check sources, identify clickbait, and avoid resharing fake news.

- Platform Accountability

Technology platforms need to:

- Flag manipulated media.

- Offer algorithmic transparency.

- Mark AI-created media.

- Provide localised fact-checking across diverse Indian languages.

- Community-Led Verification

Establish WhatsApp and Telegram "Fact Check Hubs" headed by expert organisations, industry experts, journalists, and digital volunteers who can report fake content at the grassroots level.

- Legal Framework for Deepfakes

Formulate targeted legislation under the Bharatiya Nyaya Sanhita (BNS) and other relevant laws to make malicious use of deepfakes and synthetic media a criminal offence for:

- Electoral manipulation.

- Defamation.

- Financial scams.

- AI Counter-Misinformation Infrastructure

Invest in public sector AI models trained specifically to identify:

- Coordinated disinformation patterns.

- Botnet-driven hashtag campaigns.

- Real-time viral fake news bursts.

Conclusion

Mis/disinformation is more than just a content issue; it is a public health, cybersecurity, and democratic stability challenge. As India enters the digitally empowered world, building a secure, informed, and resilient information ecosystem is no longer a choice; it is imperative. Fighting misinformation demands a whole-of-society effort combining AI innovation, public education, regulatory overhaul, and tech responsibility. The danger is there, but so is the opportunity to guide the world toward a fact-first, trust-based digital age. It's time to act.

References

- https://www.pib.gov.in/factcheck.aspx

- https://www.meity.gov.in/static/uploads/2024/02/Information-Technology-Intermediary-Guidelines-and-Digital-Media-Ethics-Code-Rules-2021-updated-06.04.2023-.pdf

- https://www.cyberpeace.org

- https://www.bbc.com/news/topics/cezwr3d2085t

- https://www.logically.ai

- https://www.altnews.in

Introduction

Conversations surrounding the scourge of misinformation online typically focus on the risks to social order, political stability, economic safety and personal security. An oft-overlooked aspect of this phenomenon is the fact that it also takes a very real emotional and mental toll on people. Even as we grapple with the big picture questions about financial fraud or political rumors or inaccurate medical information online, we must also appreciate the fact that being exposed to misinformation and becoming aware of one’s own vulnerability are both significant sources of mental stress in today’s digital ecosystem.

Inaccurate information causes confusion and worry, which has negative consequences for mental health. Misinformation may also impair people's sense of well-being by undermining their trust in institutions, authority figures, and their own judgment. The constant bombardment of misinformation can lead to information overload, wherein people are unable to discriminate between legitimate sources and misleading content, resulting in mental exhaustion and a sense of being overwhelmed by the sheer volume of information available. Vulnerable groups such as children, the elderly, and those with pre-existing health conditions are more sensitive or susceptible to the negative effects of misinformation.

How Does Misinformation Endanger Mental Health?

Misinformation on social media platforms is a matter of public health because it has the potential to confuse people, lead to poor decision-making and result in cognitive dissonance, anxiety and unwanted behavioural changes.

Unchecked misinformation can also lead to social disorder and spread negative emotions among large numbers of people, ultimately having a huge impact on society. Therefore, understanding how misinformation spreads and diffuses on Internet platforms is crucial.

The spread of misinformation can elicit different emotions in the public, and those emotions change as the misinformation spreads. Factors such as user engagement, number of comments, and duration of discussion all influence how these emotions shift. Active users tend to make more comments, engage longer in discussions, and display more dominant negative emotions when triggered by misinformation. Understanding how emotions triggered by misinformation evolve is also important because social media often magnifies the impact of emotions and makes them spread rapidly through social networks. For example, the emotional response to misinformation intensifies around sensitive topics such as political elections, viral trending topics, health-related information, communal and local information, information about natural disasters and more. Active misinformation on the Internet not only affects the public's psychology, mental health and behavior, but also has an impact on the stability of social order and the maintenance of social security.

Prebunking and Debunking To Build Mental Guards Against Misinformation

As the spread of misinformation and disinformation rises, so do the techniques aimed at tackling it. Prebunking, or attitudinal inoculation, is a technique for training individuals to recognise and resist deceptive communications before they can take root. It is a psychological method for mitigating the effects of misinformation, strengthening resilience and creating cognitive defenses against future misinformation. Debunking provides individuals with accurate information to counter false claims and myths, correcting misconceptions and preventing the spread of misinformation. By presenting evidence-based refutations, debunking helps individuals distinguish fact from fiction.

What do health experts say about online misinformation?

“In the 21st century, mental health is crucial due to the overwhelming amount of information available online. The COVID-19 pandemic-related misinformation was a prime example of this, with misinformation spreading online, leading to increased anxiety, panic buying, fear of leaving home, and mistrust in health measures. To protect our mental health, it is essential to cultivate a discerning mindset, question sources, and verify information before consumption. Fostering a supportive community that encourages open dialogue and fact-checking can help navigate the digital information landscape with confidence and emotional support. Prioritising self-care routines, mindfulness practices, and seeking professional guidance are also crucial for safeguarding mental health in the digital information era.”

~ Aishwarya Menon, Dubai-based psychologist (BA in Psychology and Criminology from the University of Western Ontario, London, and MA in Mental Health and Addictions (Humber College, University of Guelph), Toronto), in conversation with CyberPeace.

CyberPeace Policy Recommendations:

1) Countering misinformation is everyone's shared responsibility. To mitigate the negative effects of infodemics online, we must look at developing strong legal policies, creating and promoting awareness campaigns, relying on authenticated content on mass media, and increasing people's digital literacy.

2) Expert organisations actively verifying the information through various strategies including prebunking and debunking efforts are among those best placed to refute misinformation and direct users to evidence-based information sources. It is recommended that countermeasures for users on platforms be increased with evidence-based data or accurate information.

3) The role of social media platforms is crucial in the misinformation crisis, hence it is recommended that social media platforms actively counter the production of misinformation on their platforms. Local, national, and international efforts and additional research are required to implement the robust misinformation counterstrategies.

4) Netizens are encouraged to follow official sources to check the reliability of any news or information. They should learn to recognise red flags such as questionable facts, poorly written texts, surprising or upsetting news, fake social media accounts and fake websites designed to look like legitimate ones. Netizens are also encouraged to develop the cognitive skills to distinguish fact from falsehood and to approach information with a healthy dose of skepticism and curiosity.

Final Words:

With misinformation incidents rising across various subjects, it is crucial to protect mental health. Safeguarding our minds requires cognitive skills, media literacy, verification of information from trusted sources, and prioritising mental health through self-care practices and staying connected with supportive, authenticated networks. Promoting prebunking and debunking initiatives is necessary. Netizens can protect themselves against the negative effects of misinformation and cultivate a resilient mindset in the digital information age.

References:

- https://www.hindawi.com/journals/scn/2021/7999760/

- https://www.ncbi.nlm.nih.gov/pmc/articles/PMC8502082/