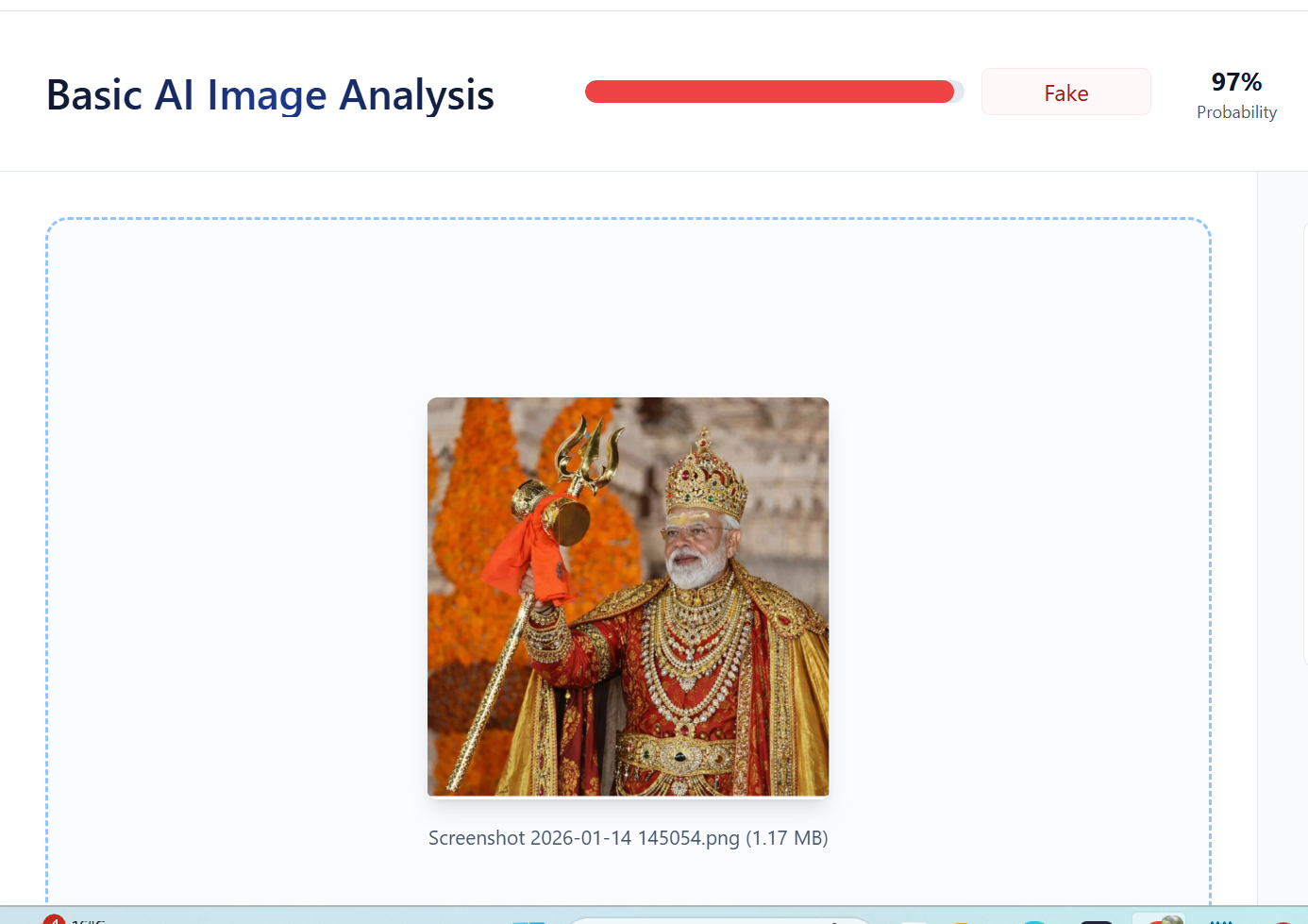

#FactCheck: No, PM Modi Did Not Appear in Royal Attire, Image Is AI-Generated

A photograph showing Prime Minister Narendra Modi holding a trident and dressed in royal attire is being widely shared on social media. Users circulating the image are claiming that it shows PM Modi in a regal outfit.

However, a verification by the Cyber Peace Foundation’s Research Desk has found that the claim is false. The investigation established that the viral image is not authentic and has been generated using Artificial Intelligence (AI).

Claim:

On January 11, 2026, several Instagram users shared the image with captions describing it as a photograph of Prime Minister Modi in royal attire.

Links and archived versions of the posts, along with screenshots, are provided below.

Fact Check:

To verify the claim, relevant keywords such as “PM Modi holding trishul” were searched on Google. This led to a report published by Navbharat Times on January 10, 2025. The report features photographs of Prime Minister Modi holding a trident during his visit to the Somnath Temple. In those original images, however, he is wearing ordinary attire, not the royal clothing shown in the viral image.

In the next step of the investigation, the original photograph was traced to the official Instagram account of BJP Gujarat, where it was posted on January 11, 2026. The post clearly identifies the image as being from Somnath Temple. Link and screenshot: https://www.instagram.com/p/DTVlb-9Da1V

A close examination of the viral image raised suspicion about digital manipulation. The image was then analysed using the AI detection tool TruthScan. The tool’s assessment indicated a 97 percent likelihood that the image was AI-generated.

Further comparison between the viral image and the original photograph revealed that all visual elements match except the clothing, confirming that the attire was digitally altered using AI tools.
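The comparison step described above can be illustrated with a small sketch. It is not the tool or method used by the research desk; it only shows, under simplifying assumptions, how two same-sized images can be compared pixel by pixel to locate the region that was changed. The function name `diff_bounding_box`, the tolerance value, and the tiny 6x6 grayscale grids are all hypothetical stand-ins for real photographs.

```python
from typing import List, Optional, Tuple

Image = List[List[int]]  # grayscale pixel grid, one int (0-255) per pixel


def diff_bounding_box(a: Image, b: Image, tol: int = 10) -> Optional[Tuple[int, int, int, int]]:
    """Return (top, left, bottom, right) of the area where a and b differ
    by more than `tol`, or None if the images match everywhere."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("images must have identical dimensions")
    # Collect the coordinates of every pixel whose brightness changed noticeably.
    changed = [
        (y, x)
        for y, (ra, rb) in enumerate(zip(a, b))
        for x, (p, q) in enumerate(zip(ra, rb))
        if abs(p - q) > tol
    ]
    if not changed:
        return None
    ys = [y for y, _ in changed]
    xs = [x for _, x in changed]
    return (min(ys), min(xs), max(ys), max(xs))


# Made-up 6x6 "photos": identical except for a 3x3 patch standing in for the clothing.
original = [[100] * 6 for _ in range(6)]
altered = [row[:] for row in original]
for y in range(2, 5):
    for x in range(1, 4):
        altered[y][x] = 200

print(diff_bounding_box(original, altered))  # (2, 1, 4, 3)
```

When the changed pixels cluster in one region (here, the patch standing in for the clothing) and everything else matches, that pattern is consistent with a localized edit of an existing photograph rather than a different photograph altogether.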

Conclusion

The claim that Prime Minister Narendra Modi appeared in royal attire is false. The Cyber Peace Foundation’s research confirms that the viral image was created using AI by altering the clothing in an original photograph taken during PM Modi’s visit to Somnath Temple. The manipulated image was shared online to mislead users.

Related Blogs

Executive Summary:

A viral video circulating on social media purportedly shows lawbreakers surrendering to the Indian Army. However, verification shows that the video depicts a group surrendering to the Bangladesh Army and is unrelated to India. The claim linking it to the Indian Army is false and misleading.

Claims:

A viral video falsely claims that a group of lawbreakers is surrendering to the Indian Army, linking the footage to recent events in India.

Fact Check:

Upon receiving the viral posts, we analysed the keyframes of the video through Google Lens search. The search directed us to credible news sources in Bangladesh, which confirmed that the video was filmed during a surrender event involving criminals in Bangladesh, not India.

We further verified the video by cross-referencing it with official military and news reports from India. None of the sources supported the claim that the video involved the Indian Army. Instead, the video was traced to other Bangladeshi media outlets covering the same event.

We found no coverage of the video in any credible Indian news outlet. The viral video was clearly taken out of context and misrepresented to mislead viewers.

Conclusion:

The viral video claiming to show lawbreakers surrendering to the Indian Army is footage from Bangladesh. The CyberPeace Research Team confirms that the video is falsely attributed to India, making the claim misleading.

- Claim: The video shows miscreants surrendering to the Indian Army.

- Claimed on: Facebook, X, YouTube

- Fact Check: False & Misleading

Overview:

The National Payments Corporation of India (NPCI) officially disclosed on July 31, 2024 that its client C-Edge Technologies had suffered a ransomware attack. As a result, C-Edge was isolated from retail payment systems to prevent further threats to the national payment systems. More than 200 cooperative and regional rural banks were affected, leading to disruptions in normal services, including ATM withdrawals and UPI transactions.

About C-Edge Technologies:

C-Edge Technologies was founded in 2010 to meet the specific requirements of the Indian banking and allied sectors, with a focus on cooperative and regional rural banks. The company offers a range of services: Core Banking Solutions, which act as the center of a bank where customers’ records are managed and transactions are accounted for; Payment Solutions, delivered through payment gateways and mobile banking facilities; cybersecurity, through threat detection and incident response to protect banking organizations; and data analytics and AI, which analyse large volumes of banking data to reduce risk and detect fraud.

Details of Ransomware attack:

According to reports, the ransomware attack has been attributed to the RansomEXX group, which primarily targeted Brontoo Technology Solutions, a key collaborator of C-Edge, through a misconfigured Jenkins server that allowed unauthorized access to its systems.

The RansomEXX group, also known as Defray777 or Ransom X, used a sophisticated variant known as RansomEXX v2.0 to execute the attack. This group often targets large organizations and demands substantial ransoms. RansomEXX uses various malware tools such as IcedID, Vatet Loader, and PyXie RAT. It typically infiltrates systems through phishing emails and by exploiting vulnerabilities in applications and services, including Remote Desktop Protocol (RDP). The ransomware encrypts files using the Advanced Encryption Standard (AES), with the encryption key further secured using RSA encryption. This dual-layer encryption complicates recovery efforts for victims. RansomEXX operates on a ransomware-as-a-service model, allowing affiliates to conduct attacks using its infrastructure. In 2021, it attacked the servers of StarHub and Gigabyte for ransom.
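To see why the dual-layer scheme described above makes recovery so difficult, it helps to look at the general pattern, usually called hybrid or envelope encryption: data is encrypted with a random symmetric key, and that key is then locked with a public key. The sketch below is a toy illustration only, not RansomEXX code and not real AES or production RSA. It assumes a SHA-256 keystream as a stand-in for AES and textbook RSA built from two small, publicly known Mersenne primes; the names `seal`, `open_sealed`, and `keystream_xor` are hypothetical.

```python
import hashlib
import secrets

# Toy RSA parameters (assumption: textbook RSA, no padding, far too small to be secure).
P, Q = 2**61 - 1, 2**89 - 1        # two known Mersenne primes
N = P * Q                          # public modulus
E = 65537                          # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent (Python 3.8+ modular inverse)


def keystream_xor(key: bytes, data: bytes) -> bytes:
    """Stand-in for AES: XOR data with a SHA-256 based keystream (not real AES)."""
    out = bytearray()
    for i in range(0, len(data), 32):
        block = hashlib.sha256(key + i.to_bytes(8, "big")).digest()
        out.extend(b ^ k for b, k in zip(data[i:i + 32], block))
    return bytes(out)


def seal(plaintext: bytes) -> tuple:
    """Layer 1: encrypt data with a random 16-byte session key.
    Layer 2: wrap that session key with the RSA public key (E, N)."""
    session_key = secrets.token_bytes(16)
    ciphertext = keystream_xor(session_key, plaintext)
    wrapped_key = pow(int.from_bytes(session_key, "big"), E, N)
    return ciphertext, wrapped_key


def open_sealed(ciphertext: bytes, wrapped_key: int, private_exponent: int) -> bytes:
    """Only the holder of the private exponent can unwrap the session key."""
    session_key = pow(wrapped_key, private_exponent, N).to_bytes(16, "big")
    return keystream_xor(session_key, ciphertext)


message = b"core banking ledger backup"
ciphertext, wrapped_key = seal(message)
recovered = open_sealed(ciphertext, wrapped_key, D)
print(recovered == message)
```

The point for defenders is that the session key never needs to be stored in readable form on the victim's machine: only the wrapped copy remains, and unwrapping it requires a private key that stays with the attacker. This is why offline, tested backups matter more than any hope of decrypting the files after the fact.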

Impact due to the attack:

The immediate consequences of the ransomware attack include:

- Service Disruption: The attack has affected consumers, especially citizens who rely on these banks for day-to-day banking activities such as withdrawals and online transactions. Some complaints relate to cases where the sender’s account was debited without a corresponding credit to the receiver’s account.

- Isolation Measures: NPCI disconnected C-Edge from its networks to contain the spread of the ransomware. This decision was taken as a precautionary measure to safeguard the wider financial system.
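The complaint pattern mentioned above, a debit with no corresponding credit, is the kind of inconsistency a reconciliation check is meant to surface once systems come back online. The following is a minimal sketch under stated assumptions, not a description of how NPCI or any bank actually reconciles transactions. The `Entry` record, its field names, and the sample ledger are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Entry:
    txn_id: str   # identifier shared by both legs of a transfer (assumption)
    kind: str     # "debit" or "credit"
    amount: int   # amount in paise, kept as an integer to avoid float rounding


def unmatched_debits(entries: Iterable[Entry]) -> List[Entry]:
    """Return debit entries that have no credit with the same txn_id and amount."""
    entries = list(entries)
    credits = {(e.txn_id, e.amount) for e in entries if e.kind == "credit"}
    return [
        e for e in entries
        if e.kind == "debit" and (e.txn_id, e.amount) not in credits
    ]


# Made-up ledger: T1 completed normally, T2 was debited but never credited.
ledger = [
    Entry("T1", "debit", 50_000),
    Entry("T1", "credit", 50_000),
    Entry("T2", "debit", 120_000),
]

for entry in unmatched_debits(ledger):
    print(entry.txn_id, entry.amount)  # T2 120000
```

Entries flagged this way would then be candidates for reversal or manual follow-up, depending on the bank's own dispute process.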

Operations resumed:

The National Payments Corporation of India (NPCI) said it has restored connectivity with C-Edge Technologies Ltd after the latter’s network connection was severed by NPCI over security concerns that were evaluated by an external forensic auditing firm. The audit affirmed that all affected systems had been contained, preventing the ransomware from spreading further. The affected systems were confined to C-Edge’s data center, and no impact was found on the infrastructure of the cooperative banks or regional rural banks involved. Both NPCI and C-Edge Technologies have resumed normal operations so that the banking and financial services offered by these banks remain safe and secure.

Major Implications for Banking Sector:

The attack on C-Edge Technologies raises several critical concerns for the Indian banking sector:

- Cybersecurity Vulnerabilities: The attack exposes weak links in the technology ecosystem that supports smaller banks. Even though C-Edge itself offers cybersecurity solutions, the attack shows that security needs to improve across all types of banks and banking applications.

- Financial Inclusion Risks: Cooperative and regional rural banks play an important role in financial inclusion, especially in rural and semi-urban areas. Interruptions to their services risk slowing the year-on-year progress made in financial literacy among excluded groups.

- Regulatory Scrutiny: After this event, agencies such as the Reserve Bank of India (RBI) may intensify their examination of the banking sector’s cybersecurity mechanisms. New directives may require institutions to meet stricter compliance requirements for defending against cyber threats.

Way Forward: Mitigation

- Strengthening Cybersecurity: Cybersecurity must be strengthened to prevent attacks of this kind in the future. This may include better threat detection systems, penetration testing to find vulnerabilities, system hardening, and regular network monitoring.

- Transition to Cloud-Based Solutions: Adopting cloud solutions can improve operational efficiency and make better use of resources. The security features of the cloud should be implemented to protect SMEs in the banking sector against cyber threats.

- Leveraging AI and Data Analytics: Developing AI-based solutions for fraud and risk control gives banks the opportunity to address threats and regain clients’ trust.

Conclusion:

The ransomware attack on C-Edge Technologies serves as a warning for all infrastructure in the banking sector. The initial cleanup and quarantine measures proved effective. Continuous monitoring of cybersecurity controls and awareness among employees help prevent attacks of this kind. Strengthening cybersecurity will also safeguard institutions against other cyber risks in the future and reinforce the confidence in and reliability of the financial system, especially the regional rural banks.

Reference:

- https://www.businesstoday.in/technology/news/story/c-edge-technologies-a-deep-dive-into-the-indian-fintech-powerhouse-hit-by-major-cyberattack-439657-2024-08-01

- https://www.thehindu.com/sci-tech/technology/customers-at-several-small-sized-banks-affected-as-tech-provider-c-edge-suffers-ransomware-attack/article68470198.ece

- https://www.cnbctv18.com/technology/ransomware-attack-disrupts-over-200-co-operative-banks-regional-rural-banks-19452521.htm

- https://timesofindia.indiatimes.com/city/ahmedabad/ransomware-breach-at-c-edge-impacts-transactions-for-cooperative-banks/articleshow/112180914.cms

- https://www.emsisoft.com/en/blog/41027/ransomware-profile-ransomexx/

Introduction

Beginning with the premise that the advent of the internet has woven a rich but daunting digital web, intertwining the very fabric of technology with the variegated hues of human interaction, the EU has stepped in as the custodian of this ever-evolving tableau. It is within this sprawling network—a veritable digital Minotaur's labyrinth—that the European Union has launched a vigilant quest, seeking not merely to chart its enigmatic corridors but to instil a sense of order in its inherent chaos.

The Digital Services Act (DSA) is the EU's latest testament to this determined pilgrimage, a voyage to assert dominion over the nebulous realms of cyberspace. In its latest sagacious move, the EU has levelled its regulatory lance at the behemoths of digital indulgence—Pornhub, XVideos, and Stripchat—monarchs in the realm of adult entertainment, each commanding millions of devoted followers.

Applicability of DSA

Graced with the moniker of Very Large Online Platforms (VLOPs), these titans of titillation are now facing the complex weave of duties delineated by the DSA, a legislative leviathan whose coils envelop the shadowy expanses of the internet with an aim to safeguard its citizens from the snares and pitfalls ensconced within. Like a vigilant Minotaur, the European Commission, the EU's executive arm, stands steadfast, enforcing compliance with an unwavering gaze.

The DSA is more than a mere compilation of edicts; it encapsulates a deeper, more profound ethos—a clarion call announcing that the wild frontiers of the digital domain shall be tamed, transforming into enclaves where the sanctity of individual dignity and rights is zealously championed. The three corporations, singled out as the pioneers to be ensnared by the DSA's intricate net, are now beckoned to embark on an odyssey of transformation, realigning their operations with the EU's noble envisioning of a safeguarded internet ecosystem.

The Paradigm Shift

In a resolute succession, following its first decree addressing 19 Very Large Online Platforms and Search Engines, the Commission has now ensconced the trinity of adult content purveyors within the DSA's embrace. The act demands that these platforms establish intuitive user mechanisms for reporting illicit content, prioritize communications from entities bestowed with the 'trusted flaggers' title, and elucidate to users the rationale behind actions taken to restrict or remove content. Paramount to the DSA's ethos, they are also tasked with constructing internal mechanisms to address complaints, forthwith apprising law enforcement of content hinting at criminal infractions, and revising their operational underpinnings to ensure the privacy, safety, and security of minors.

But the aspirations of the DSA stretch farther, encompassing a realm where platforms are agents against deception and manipulation of users, categorically eschewing targeted advertisement that exploits sensitive profiling data or is aimed at impressionable minors. The platforms must operate with an air of diligence and equitable objectivity, deftly applying their terms of use, and are compelled to reveal their content moderation practices through annual declarations of transparency.

The DSA bestows upon the designated VLOPs an even more intensive catalogue of obligations. Within a scant four months of their designation, Pornhub, XVideos, and Stripchat are mandated to implement measures that both empower and shield their users—especially the most vulnerable, minors—from harms that traverse their digital portals. Augmented content moderation measures are requisite, with critical risk analyses and mitigation strategies directed at halting the spread of unlawful content, such as child exploitation material or the non-consensual circulation of intimate imagery, as well as curbing the proliferation and repercussions of deepfake-generated pornography.

The New Rules

The DSA enshrines the preeminence of protecting minors, with a staunch requirement for VLOPs to contrive their services so as to anticipate and enfeeble any potential threats to the welfare of young internet navigators. They must enact operational measures to deter access to pornographic content by minors, including the utilization of robust age verification systems. The themes of transparency and accountability are amplified under the DSA's auspices, with VLOPs subject to external audits of their risk assessments and adherence to stipulations, the obligation to maintain accessible advertising repositories, and the provision of data access to rigorously vetted researchers.

Coordinated by the Commission in concert with the Member States' Digital Services Coordinators, vigilant supervision will be maintained to ensure the scrupulous compliance of Pornhub, Stripchat, and XVideos with the DSA's stringent directives. The Commission's services are poised to engage with the newly designated platforms diligently, affirming that initiatives aimed at shielding minors from pernicious content, as well as curbing the distribution of illegal content, are effectively addressed.

The EU's monumental crusade, distilled into the DSA, symbolises a pledge—a testament to its steadfast resolve to shepherd cyberspace, ensuring the Minotaur of regulation keeps the bedlam at a manageable compass and the sacrosanctity of the digital realm inviolate for all who meander through its infinite expanses. As we cast our gazes toward February 17, 2024—the cusp of the DSA's comprehensive application—it is palpable that this legislative milestone is not simply a set of guidelines; it stands as a bold, unflinching manifesto. It beckons the advent of a novel digital age, where every online platform, barring small and micro-enterprises, will be enshrined in the lofty ideals imparted by the DSA.

Conclusion

As we teeter on the edge of this nascent digital horizon, it becomes unequivocally clear: the European Union's Digital Services Act is more than a mundane policy—it is a pledge, a resolute statement of purpose, asserting that amid the vast, interwoven tapestry of the internet, each user's safety, dignity, and freedoms are enshrined and hold the intrinsic significance meriting the force of the EU's legislative guard. Although the labyrinth of the digital domain may be convoluted with complexity, guided by the DSA's insightful thread, the march toward a more secure, conscientious online sphere forges on—resolute, unerring, one deliberate stride at a time.

References

https://ec.europa.eu/commission/presscorner/detail/en/ip_23_6763

https://www.breakingnews.ie/world/three-of-the-biggest-porn-sites-must-verify-ages-under-eus-new-digital-law-1566874.html